Qinghao Ye*, Haiyang Xu*, Guohai Xu*, Jiabo Ye, Ming Yan†, Yiyang Zhou, Junyang Wang, Anwen Hu, Pengcheng Shi, Yaya Shi, Chaoya Jiang, Chenliang Li, Yuanhong Xu, Hehong Chen, Junfeng Tian, Qian Qi, Ji Zhang, Fei Huang

DAMO Academy, Alibaba Group

*Equal Contribution; † Corresponding Author


- We provide an online demo on ModelScope for the public to try.

- We released the code of mPLUG-Owl🦉 along with its pre-trained and instruction-tuned checkpoints.

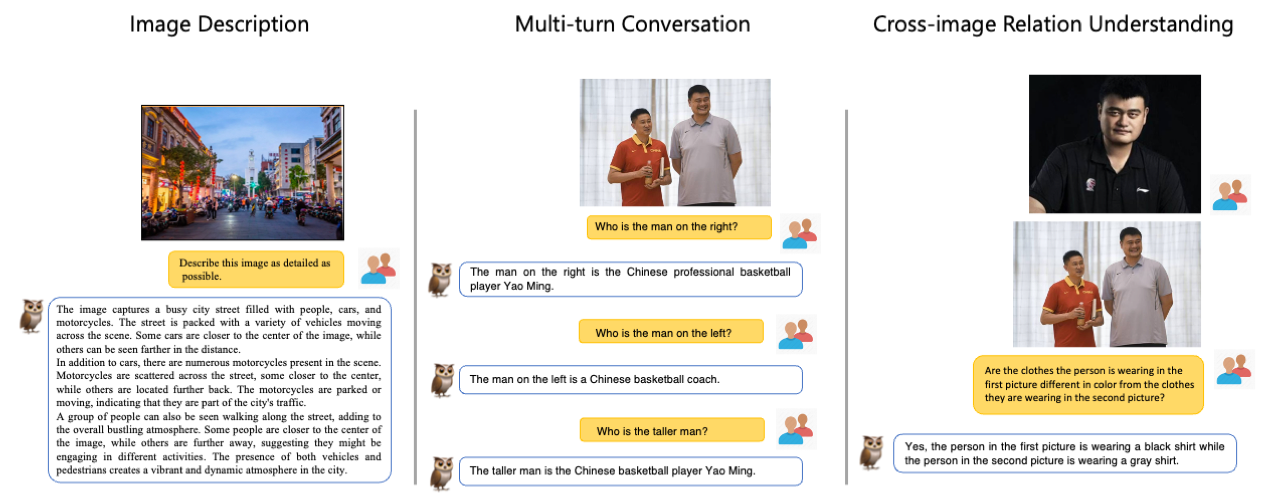

- A new training paradigm with a modularized design for large multi-modal language models.

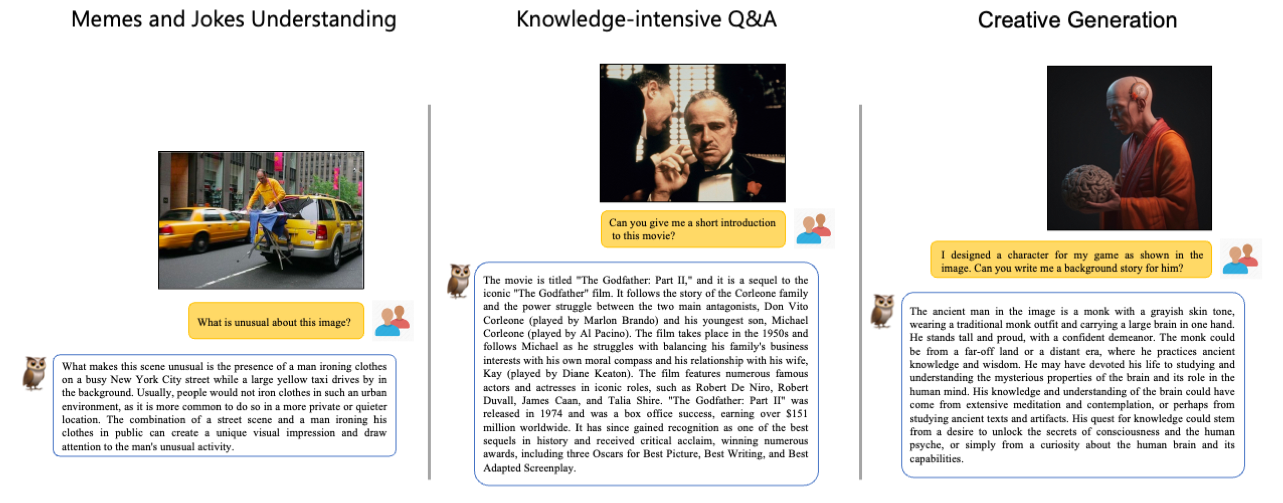

- Learns visual knowledge while supporting multi-turn conversations that involve different modalities.

- Exhibits abilities such as multi-image correlation, scene text understanding, and vision-based document comprehension.

- Releases OwlEval, a visually-related instruction evaluation set.

- Our outstanding works on modularization:

- Coming soon:

  - Huggingface space demo.

  - Instruction tuning code and pre-training code.

  - Multilingual support (e.g., Chinese, Japanese, German, French).

  - A visually-related evaluation set, OwlEval, to comprehensively evaluate various models.

  - Instruction tuning on interleaved data (multiple images and videos).

Demo of mPLUG-Owl on Modelscope

| Model | Phase | Download link |
|---|---|---|
| mPLUG-Owl 7B | Pre-training | Download link |
| mPLUG-Owl 7B | Instruction tuning | Download link |
| Tokenizer model | N/A | Download link |

Core library dependencies:

- PyTorch=1.12.1

- transformers=4.28.1

- Apex

- einops

- icecream

- flask

- ruamel.yaml

- uvicorn

- fastapi

- markdown2

- gradio

You can also use the exported Conda environment configuration file env.yaml to prepare your environment.
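As a quick sanity check after setting up the environment, the two pinned versions from the list above can be compared against what is installed. This is a minimal sketch, not part of the repository: `check_versions` is a hypothetical helper, and it assumes PyTorch is installed under the distribution name `torch`.

```python
from importlib import metadata

# Pins taken from the dependency list above; the other packages are unpinned.
REQUIRED = {"torch": "1.12.1", "transformers": "4.28.1"}


def check_versions(required: dict) -> dict:
    """Return {package: (installed_version_or_None, matches_pin)}."""
    report = {}
    for name, pinned in required.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            installed = None
        # A local build tag such as "1.12.1+cu113" is treated as matching "1.12.1".
        ok = installed is not None and installed.split("+")[0] == pinned
        report[name] = (installed, ok)
    return report


if __name__ == "__main__":
    for name, (installed, ok) in check_versions(REQUIRED).items():
        print(f"{name}: installed={installed} pinned={REQUIRED[name]} ok={ok}")
```

A package that is not installed is reported as `(None, False)` rather than raising, so the check can run before the environment is complete.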

Apex must be compiled manually from source, because mPLUG-Owl relies on its C++ extension (MixedFusedLayerNorm).
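One way to confirm that the Apex build succeeded is to look for the relevant modules on the import path without importing them. The sketch below is an illustration only: `has_module` is a hypothetical helper, and `fused_layer_norm_cuda` is the name we assume Apex gives to its compiled fused layer norm extension, which may differ between Apex versions.

```python
import importlib.util


def has_module(name: str) -> bool:
    """Return True if `name` can be located on the import path (no import is run)."""
    try:
        return importlib.util.find_spec(name) is not None
    except (ImportError, ValueError):
        return False


# Assumption: "fused_layer_norm_cuda" is the compiled extension built by Apex;
# check the name against the Apex version you compiled.
for module in ("apex", "fused_layer_norm_cuda"):
    print(f"{module}: {'found' if has_module(module) else 'missing'}")
```

If `apex` is found but the extension is missing, Apex was most likely installed without its compiled extensions.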

We provide a script to deploy a simple demo on your local machine.

    python -m server_mplug.owl_demo --debug --port 6363 --checkpoint_path 'your checkpoint path' --tokenizer_path 'your tokenizer path'

Build the model, tokenizer, and processor.

    from interface import get_model

    model, tokenizer, img_processor = get_model(
        checkpoint_path='checkpoint path', tokenizer_path='tokenizer path')

Prepare model inputs.

    # We use a human/AI template to organize the context as a multi-turn conversation.
    # <image> denotes an image placeholder.
    prompts = [
    '''The following is a conversation between a curious human and AI assistant. The assistant gives helpful, detailed, and polite answers to the user's questions.
    Human: <image>
    Human: Explain why this meme is funny.
    AI: ''']

    # The image paths should be placed in image_list and kept in the same order as in the prompts.
    # We support URLs, local file paths, and base64 strings. You can customize image pre-processing
    # by modifying mplug_owl.modeling_mplug_owl.ImageProcessor.
    image_list = ['https://xxx.com/image.jpg',]

For multi-image inputs, which are an emergent ability of the model, we do not know which format works best. Below is one format we tried in our experiments. Exploring formats that help the model better understand multiple images is worth further investigation.
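As a side note before that example, the input conventions described so far (one `<image>` placeholder per entry in `image_list`, in the same order, with images given as URLs, local paths, or base64 strings) can be sketched as two small helpers. Both `build_prompt` and `encode_image_bytes` are hypothetical names, not part of the repository, and whether the image processor expects a bare base64 string exactly as produced here is an assumption.

```python
import base64

# Preamble copied from the prompt template used in this README.
SYSTEM_PREAMBLE = (
    "The following is a conversation between a curious human and AI assistant. "
    "The assistant gives helpful, detailed, and polite answers to the user's questions."
)


def build_prompt(question: str, num_images: int = 1) -> str:
    """Hypothetical helper: one 'Human: <image>' line per image, then the question."""
    lines = [SYSTEM_PREAMBLE]
    lines += ["Human: <image>"] * num_images
    lines.append(f"Human: {question}")
    lines.append("AI: ")
    return "\n".join(lines)


def encode_image_bytes(data: bytes) -> str:
    """Hypothetical helper: base64-encode raw image bytes as an ASCII string."""
    return base64.b64encode(data).decode("ascii")


# One prompt with a single image placeholder, matching a one-item image_list.
prompts = [build_prompt("Explain why this meme is funny.", num_images=1)]
```

Calling `build_prompt` with `num_images=2` yields the same layout as the hand-written two-image prompt that follows, so the number of placeholders always matches the length of `image_list`.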

    prompts = [
    '''The following is a conversation between a curious human and AI assistant. The assistant gives helpful, detailed, and polite answers to the user's questions.
    Human: <image>
    Human: <image>
    Human: Do the shirts worn by the individuals in the first and second pictures vary in color? If so, what is the specific color of each shirt?
    AI: ''']

    image_list = ['https://xxx.com/image_1.jpg', 'https://xxx.com/image_2.jpg']

Get response.

# generate kwargs (the same in transformers) can be passed in the do_generate()

from interface import do_generate

sentence = do_generate(prompts, image_list, model, tokenizer,

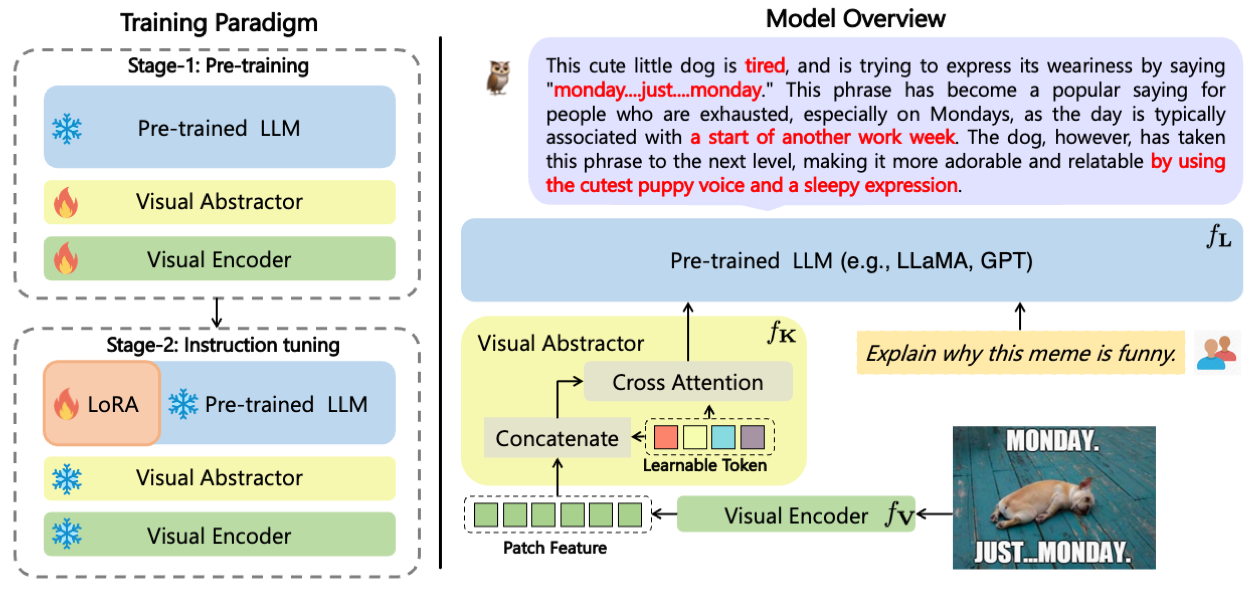

img_processor, max_length=512, top_k=5, do_sample=True)The comparison results of 50 single-turn responses (left) and 52 multi-turn responses (right) between mPLUG-Owl and baselines with manual evaluation metrics. A/B/C/D denote the rate of each response.

- LLaMA. An open-source collection of state-of-the-art large pre-trained language models.

- Baize. An open-source chat model trained with LoRA on 100k dialogs generated by letting ChatGPT chat with itself.

- Alpaca. A fine-tuned model trained from a 7B LLaMA model on 52K instruction-following data.

- LoRA. A plug-and-play module that can greatly reduce the number of trainable parameters for downstream tasks.

- MiniGPT-4. A multi-modal language model that aligns a frozen visual encoder with a frozen LLM using just one projection layer.

- LLaVA. A visual instruction-tuned vision-language model that achieves GPT-4-level capabilities.

- mPLUG. A vision-language foundation model for both cross-modal understanding and generation.

- mPLUG-2. A multimodal model with a modular design, which inspired our project.

If you find this work useful, consider giving this repository a star and citing our paper as follows:

    @misc{ye2023mplugowl,
          title={mPLUG-Owl: Modularization Empowers Large Language Models with Multimodality},
          author={Qinghao Ye and Haiyang Xu and Guohai Xu and Jiabo Ye and Ming Yan and Yiyang Zhou and Junyang Wang and Anwen Hu and Pengcheng Shi and Yaya Shi and Chaoya Jiang and Chenliang Li and Yuanhong Xu and Hehong Chen and Junfeng Tian and Qian Qi and Ji Zhang and Fei Huang},
          year={2023},
          eprint={2304.14178},
          archivePrefix={arXiv},
          primaryClass={cs.CL}
    }