This project is an experimental sandbox for testing ideas related to running local Large Language Models (LLMs) with Ollama to perform Retrieval-Augmented Generation (RAG) for answering questions based on sample PDFs. It also uses Ollama to create embeddings with the nomic-embed-text model, which are then stored in Chroma. Please note that the embeddings are reloaded each time the application runs, which is inefficient and is done here only for testing purposes.

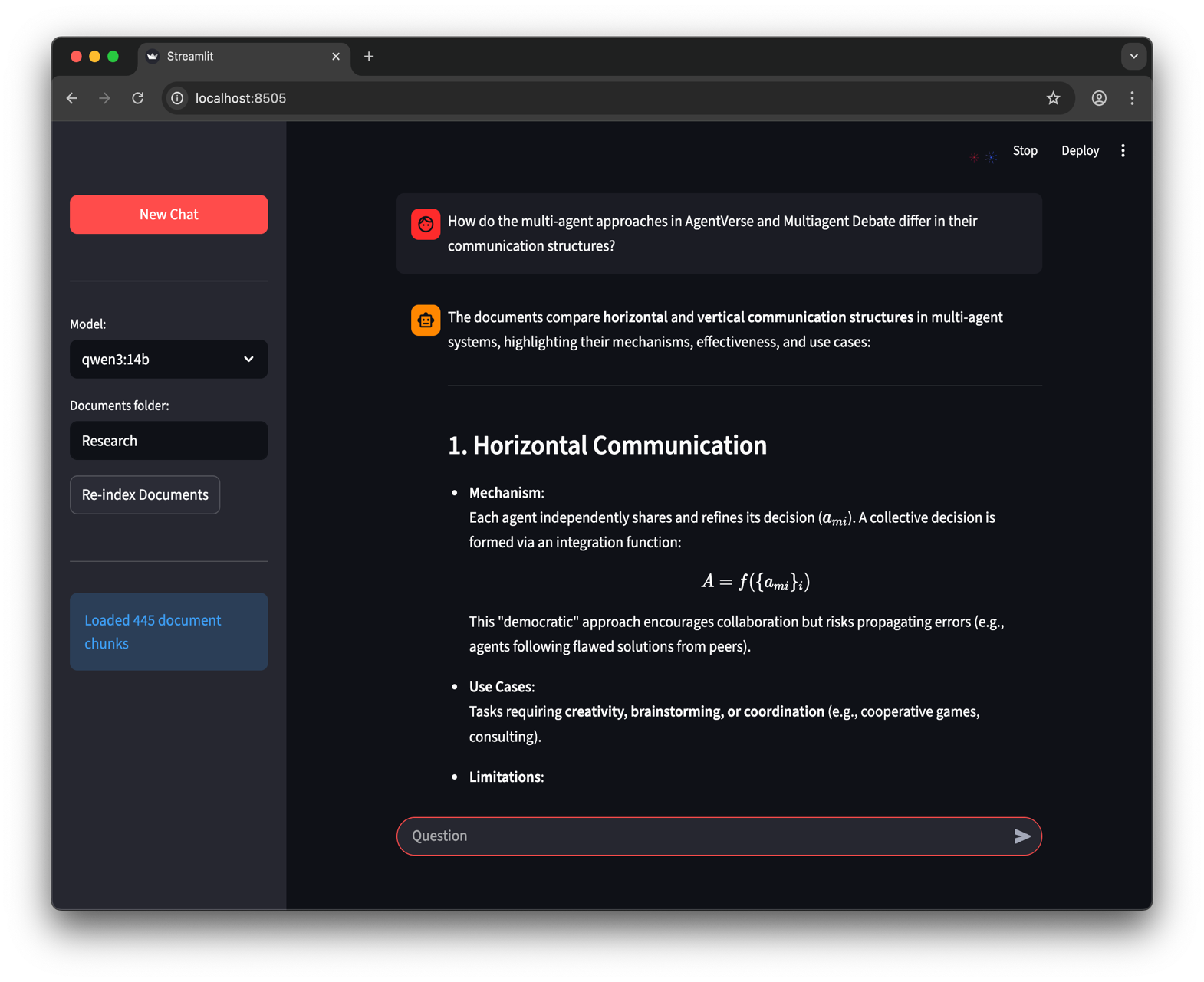

There is also a web UI created using Streamlit to provide a different way to interact with Ollama.

- Ollama version 0.1.26 or higher.

- Clone this repository to your local machine.

- Create a Python virtual environment by running `python3 -m venv .venv`.

- Activate the virtual environment by running `source .venv/bin/activate` on Unix or MacOS, or `.\.venv\Scripts\activate` on Windows.

- Install the required Python packages by running `pip install -r requirements.txt`.

Note: The first time you run the project, it will download the necessary models from Ollama for the LLM and embeddings. This is a one-time setup process and may take some time depending on your internet connection.

- Ensure your virtual environment is activated.

- Run the main script with `python app.py -m <model_name> -p <path_to_documents>` to specify a model and the path to documents. If no model is specified, it defaults to mistral. If no path is specified, it defaults to `Research`, located in the repository for example purposes.

- Optionally, you can specify the embedding model to use with `-e <embedding_model_name>`. If not specified, it defaults to nomic-embed-text.

This will load the PDFs and Markdown files, generate embeddings, query the collection, and answer the question defined in app.py.
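The command-line options described above could be parsed along the following lines. This is only a sketch, not the actual contents of `app.py`: the short flags and default values come from this README, while the long option names (`--model`, `--path`, `--embedding-model`) and the `build_parser` helper are assumptions made for illustration.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    """Build a parser mirroring the options described in this README."""
    parser = argparse.ArgumentParser(
        description="Answer questions about local documents with Ollama and Chroma."
    )
    # Short flags and defaults follow the README; long names are assumed.
    parser.add_argument("-m", "--model", default="mistral",
                        help="Ollama model used to generate answers")
    parser.add_argument("-p", "--path", default="Research",
                        help="Directory containing PDF and Markdown files")
    parser.add_argument("-e", "--embedding-model", default="nomic-embed-text",
                        help="Ollama model used to create embeddings")
    return parser


# Parse an explicit argument list here; a real script would call parse_args()
# with no arguments so that sys.argv is used instead.
args = build_parser().parse_args(["-p", "Research"])
print(args.model, args.path, args.embedding_model)
```

Omitting a flag falls back to its default, so the example above resolves to the mistral model, the `Research` folder, and the nomic-embed-text embedding model.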

- Ensure your virtual environment is activated.

- Navigate to the directory containing the `ui.py` script.

- Run the Streamlit application by executing `streamlit run ui.py` in your terminal.

This will start a local web server and open a new tab in your default web browser where you can interact with the application. The Streamlit UI lets you select models and a document folder, providing an easier and more intuitive way to interact with the RAG chatbot than the command-line interface. The application handles loading documents, generating embeddings, querying the collection, and displaying the results interactively.
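To illustrate the embed, query, and answer flow in general terms, here is a small self-contained sketch of the retrieval step. It does not reflect the project's actual code: a toy bag-of-words vector stands in for nomic-embed-text, an in-memory list stands in for the Chroma collection, and the function names (`embed`, `retrieve`, `build_prompt`) are hypothetical.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(question: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the question."""
    query_vec = embed(question)
    ranked = sorted(
        chunks,
        key=lambda chunk: cosine_similarity(query_vec, embed(chunk)),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question: str, context: list[str]) -> str:
    """Combine retrieved chunks and the question into a prompt for the LLM."""
    joined = "\n".join(context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {question}"


# In-memory list standing in for document chunks stored in a vector database.
chunks = [
    "Chroma stores embeddings for document chunks.",
    "Streamlit renders the web interface.",
    "Ollama runs language models locally.",
]
question = "Where are embeddings stored?"
top = retrieve(question, chunks, k=1)
print(build_prompt(question, top))
```

In a real pipeline the prompt built in the last step would be sent to the selected Ollama model, and the similarity search would be delegated to the vector database rather than computed by hand.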

- LangChain: A Python library for working with Large Language Models.

- Ollama: A platform for running Large Language Models locally.

- Chroma: A vector database for storing and retrieving embeddings.

- PyPDF: A Python library for reading and manipulating PDF files.

- Streamlit: A web framework for creating interactive applications for machine learning and data science projects.