- Simplified GPT2 training scripts (based on Grover, with TPU support)

- Ported BERT tokenizer, compatible with multilingual corpora

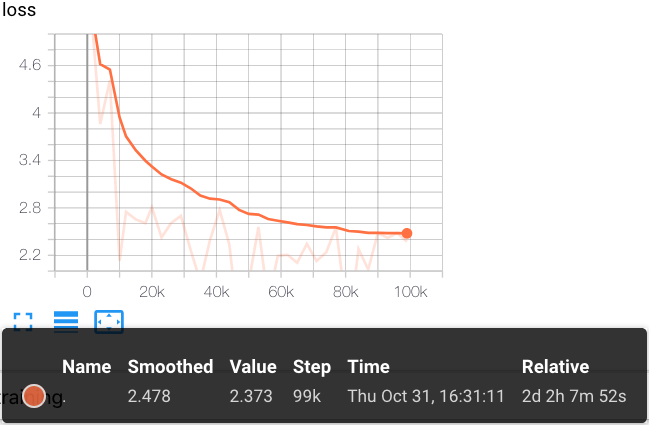

- 1.5B GPT2 pretrained Chinese model (~15 GB corpus, 100k steps)

- Batteries-included Colab demo

- 1.5B GPT2 pretrained Chinese model (~50 GB corpus, 1M steps)

1.5B GPT2 pretrained Chinese model [Google Drive]

SHA256: 4a6e5124df8db7ac2bdd902e6191b807a6983a7f5d09fb10ce011f9a073b183e
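The checksum above can be used to confirm that a download finished intact. The following is a minimal sketch, not part of this repository: the helper names `sha256_of_file` and `verify_checkpoint` are hypothetical, and the path in the commented usage line is a placeholder, since the name of the downloaded file is not stated here.

```python
import hashlib

# Checksum published in this README for the pretrained model download.
EXPECTED_SHA256 = "4a6e5124df8db7ac2bdd902e6191b807a6983a7f5d09fb10ce011f9a073b183e"


def sha256_of_file(path, chunk_size=1 << 20):
    """Return the hex SHA256 digest of the file at `path`.

    The file is read in 1 MiB chunks so that a multi-gigabyte
    checkpoint never has to fit in memory at once.
    """
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_checkpoint(path, expected=EXPECTED_SHA256):
    """Return True if the file's digest matches the expected checksum."""
    return sha256_of_file(path) == expected.lower()


# Usage (placeholder path, replace with the actual downloaded file):
# print(verify_checkpoint("path/to/downloaded/model_file"))
```

If the function returns `False`, the file is most likely truncated or corrupted and should be downloaded again.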

Corpus from THUCNews and nlp_chinese_corpus

Trained for 100k steps on a Cloud TPU Pod v3-256

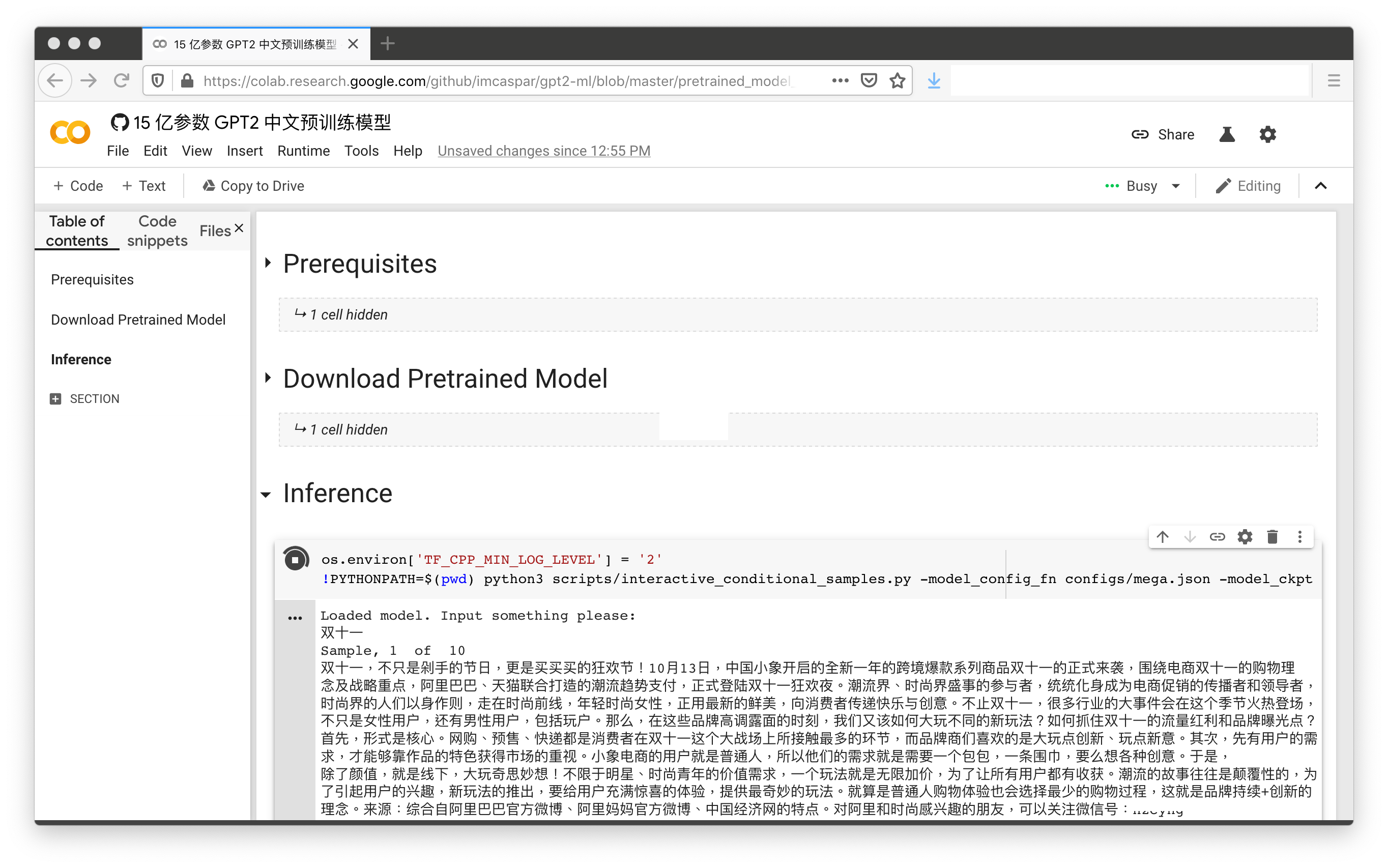

With just 2 clicks (not including the Colab auth process), the 1.5B pretrained Chinese model demo is ready to go.

The contents of this repository are for academic research purposes only, and we do not provide any conclusive remarks.

    @misc{GPT2-ML,
      author = {Zhibo Zhang},
      title = {GPT2-ML: GPT-2 for Multiple Languages},
      year = {2019},
      publisher = {GitHub},
      journal = {GitHub repository},
      howpublished = {\url{https://github.com/imcaspar/gpt2-ml}},
    }

https://github.com/google-research/bert

https://github.com/rowanz/grover

Research supported with Cloud TPUs from Google's TensorFlow Research Cloud (TFRC)