deep emoji generative adversarial network

(trying to) generate new emojis with DCGAN 🤗🏭

usage

The emojis are taken from a git submodule. To initialize it after cloning this repo, run:

git submodule init

git submodule update

The code itself is currently hosted in a Jupyter notebook, so you can run `jupyter notebook` to access the latest version of the GAN and run all the cells to train the network.

development

keeping track of different network designs and hyperparameters

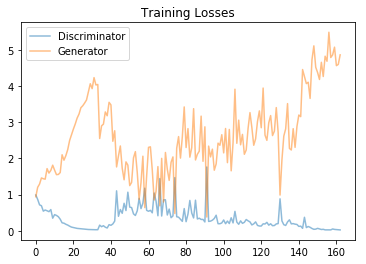

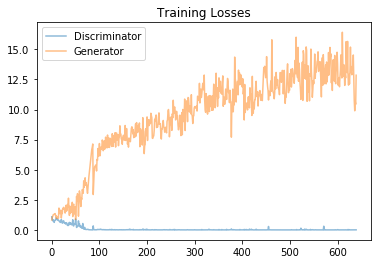

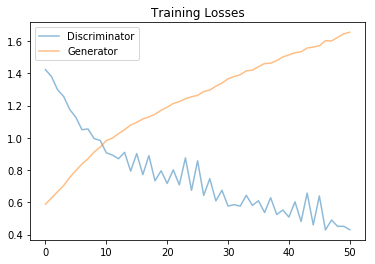

15817e6

generator design

convolutions: 4

features: 512 > 256 > 128 > 64 > 4

kernel size: 5
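A quick way to sanity-check a design like this is to walk the tensor shapes through the generator. The sketch below assumes stride 2, padding 2 and output padding 1 with PyTorch's `ConvTranspose2d` size formula, a 4×4 starting grid, and that the final 4 features are RGBA channels; none of these are stated in the notes, and the helper names are hypothetical. Under those assumptions the first number in the features list is the projected noise and each of the four convolutions doubles the spatial size.

```python
def conv_transpose_out(size, kernel, stride=2, padding=2, output_padding=1):
    """Output width of a transposed convolution (dilation 1), PyTorch's ConvTranspose2d formula."""
    return (size - 1) * stride - 2 * padding + kernel + output_padding


def generator_shapes(features, kernel=5, start=4):
    """List (channels, height, width) after the projection and after each transposed conv.

    `features` follows the notes' notation, e.g. [512, 256, 128, 64, 4]:
    the first entry is the projected noise, each later entry is one conv layer.
    The 4x4 starting grid is an assumption.
    """
    size = start
    shapes = [(features[0], size, size)]
    for channels in features[1:]:
        size = conv_transpose_out(size, kernel)
        shapes.append((channels, size, size))
    return shapes


for shape in generator_shapes([512, 256, 128, 64, 4]):
    print(shape)
```

Under these assumptions the generator ends at a 64×64 image with 4 channels; a different starting grid or stride changes the final size accordingly.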

discriminator design

convolutions: 3

features: 64 > 128 > 256

kernel size: 5
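The discriminator runs the same arithmetic in the other direction. Assuming a 64×64 RGBA input, stride 2 and padding 2 (all hypothetical, the notes only give features and kernel size), each kernel-size-5 convolution halves the image, and a final layer, assumed here to be a single dense unit, maps the remaining activations to one real/fake score.

```python
def conv_out(size, kernel, stride=2, padding=2):
    """Output width of a strided convolution (dilation 1), floor division as in PyTorch's Conv2d."""
    return (size + 2 * padding - kernel) // stride + 1


def discriminator_shapes(features, kernel=5, image_size=64, image_channels=4):
    """List (channels, height, width) from the input image through each conv layer.

    The 64x64 RGBA input size is an assumption, not taken from the notes.
    """
    size = image_size
    shapes = [(image_channels, size, size)]
    for channels in features:
        size = conv_out(size, kernel)
        shapes.append((channels, size, size))
    return shapes


shapes = discriminator_shapes([64, 128, 256])
c, h, w = shapes[-1]
print(shapes)
print("inputs to the final real/fake unit:", c * h * w)
```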

hyper params

training set: 225 (people, no skin tones)

epochs: 768

learning rate: 0.0002

batch size: 64

opt.beta: 0.4
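Epoch counts are easier to compare across runs with different training set and batch sizes when converted into optimizer updates. A small hypothetical helper, assuming one update of each network per mini-batch and that a final partial batch is kept (whether the notebook drops it is not stated):

```python
import math


def updates_per_run(training_set, batch_size, epochs, drop_last=False):
    """Return (batches per epoch, total updates) for one training run."""
    if drop_last:
        batches = training_set // batch_size
    else:
        batches = math.ceil(training_set / batch_size)
    return batches, batches * epochs


print(updates_per_run(225, 64, 768))                  # (4, 3072)
print(updates_per_run(225, 64, 768, drop_last=True))  # (3, 2304)
```

When the batch size exceeds the training set, as with batch size 768 on 273 images further down, each epoch is a single update over the whole set (and would be zero updates if partial batches were dropped).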

result

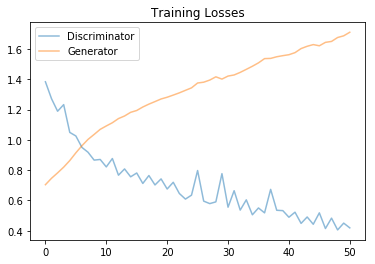

fa696d4

generator design

convolutions: 4

features: 256 > 128 > 32 > 4

kernel size: 5

discriminator design

convolutions: 3

features: 32 > 128 > 256

kernel size: 5

hyper params

training set: 714 (people & activity)

epochs: 768

learning rate: 0.0003

batch size: 256

opt.beta: 0.5

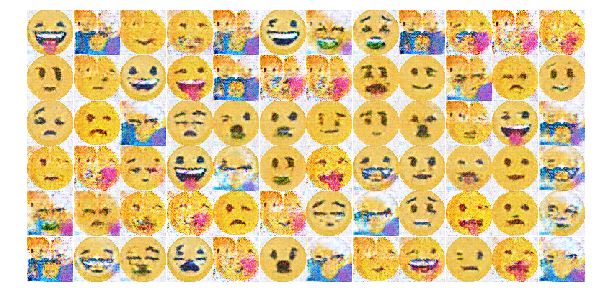

result

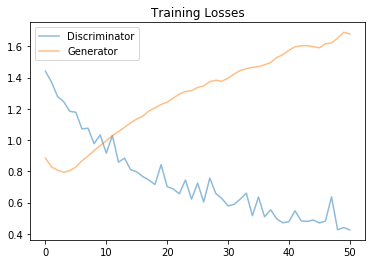

e8285ca

generator design

convolutions: 4

features: 256 > 128 > 32 > 4

kernel size: 5

discriminator design

convolutions: 3

features: 32 > 128 > 256

kernel size: 5

hyper params

training set: 714 (people & activity)

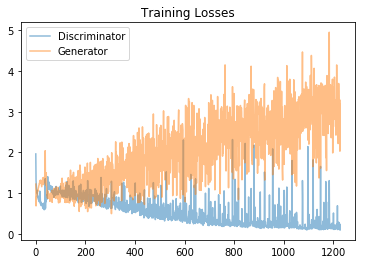

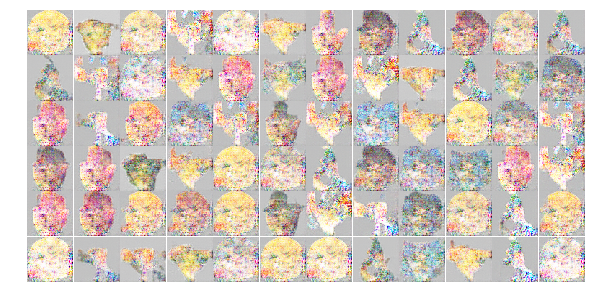

epochs: 4096 (only 1200 run?)

learning rate: 0.0003

batch size: 256

opt.beta: 0.5

result

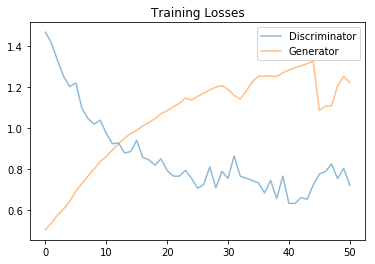

151284d

generator design

convolutions: 3

features: 64 > 32 > 16

kernel size: 4 > 6 > 8
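With kernel sizes changing per layer (4 > 6 > 8), a stride-2 transposed convolution only doubles the spatial size if the padding is adjusted for each kernel. Setting PyTorch's size formula `(n - 1) * s - 2p + k + op` equal to `2n` for `s = 2` gives `2p = k - 2 + op`. The sketch below solves for padding and output padding per kernel; stride 2 and the 4×4 starting grid are assumptions, since the notes list only the kernel sizes, and the helper names are hypothetical.

```python
def doubling_padding(kernel, stride=2):
    """Return (padding, output_padding) so a transposed conv maps n -> 2 * n.

    Uses out = (n - 1) * stride - 2 * padding + kernel + output_padding.
    Only stride 2 (the usual DCGAN choice, assumed here) is handled.
    """
    if stride != 2:
        raise ValueError("this sketch only covers stride 2")
    # Need -2 - 2p + k + op = 0 with op in {0, 1} (op must stay below the stride).
    output_padding = kernel % 2  # odd kernels need op = 1, even kernels op = 0
    padding = (kernel - 2 + output_padding) // 2
    return padding, output_padding


def conv_transpose_out(n, kernel, stride, padding, output_padding):
    """Output width of a transposed convolution (dilation 1)."""
    return (n - 1) * stride - 2 * padding + kernel + output_padding


size = 4  # assumed starting grid
for kernel in (4, 6, 8):
    padding, output_padding = doubling_padding(kernel)
    size = conv_transpose_out(size, kernel, 2, padding, output_padding)
    print(f"kernel {kernel}: padding={padding}, output_padding={output_padding}, size={size}")
```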

discriminator design

convolutions: 3

features: 8 > 16 > 32

kernel size: 8 > 6 > 4

hyper params

training set: 714 (people & activity)

epochs: 4096 (only 1200 run?)

learning rate: 0.0003

batch size: 256

opt.beta: 0.5

result

9637353

generator design

convolutions: 4

features: 1024 > 512 > 128 > 64

kernel size: 3 > 5 > 5 > 7

discriminator design

convolutions: 3

features: 16 > 46 > 256

kernel size: 5 > 4 > 3

hyper params

training set: 714 (people & activity)

epochs: 1024

learning rate: 0.0002

batch size: 1289

opt.beta: 0.5

result

21b7da3

generator design

convolutions: 4

features: 256 > 128 > 32 > 4

kernel size: 5

discriminator design

convolutions: 3

features: 32 > 128 > 256

kernel size: 5

hyper params

training set: 1262 (no regional, no symbols)

epochs: 1000

learning rate: 0.0003

batch size: 256

opt.beta: 0.5

result

0344c27

generator design

convolutions: 4

features: 256 > 128 > 32 > 4

kernel size: 5

discriminator design

convolutions: 3

features: 32 > 128 > 256

kernel size: 5

hyper params

training set: 5063 (multi-set, no regional, no symbols)

epochs: 1600

learning rate: 0.0003

batch size: 256

opt.beta1: 0.4

opt.beta2: 0.7
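`opt.beta1` and `opt.beta2` read like the two decay rates of the Adam optimizer, which DCGAN-style training commonly uses; that reading is an assumption, as the notes do not name the optimizer. A scalar Adam step shows where each value enters: beta1 averages the gradients, beta2 averages their squares. The defaults below are this run's values; `adam_step` is a hypothetical helper.

```python
import math


def adam_step(param, grad, state, lr=0.0003, beta1=0.4, beta2=0.7, eps=1e-8):
    """One Adam update for a single scalar parameter.

    `state` holds the step count and the running moments between calls.
    """
    state["t"] += 1
    state["m"] = beta1 * state["m"] + (1 - beta1) * grad       # first moment (mean of gradients)
    state["v"] = beta2 * state["v"] + (1 - beta2) * grad ** 2  # second moment (mean of squared gradients)
    m_hat = state["m"] / (1 - beta1 ** state["t"])             # bias-corrected moments
    v_hat = state["v"] / (1 - beta2 ** state["t"])
    return param - lr * m_hat / (math.sqrt(v_hat) + eps)


state = {"t": 0, "m": 0.0, "v": 0.0}
param = 1.0
for grad in (0.5, 0.5, -2.0):
    param = adam_step(param, grad, state)
    print(round(param, 6))
```

Because of the bias correction, the very first step has a size of almost exactly `lr` whatever the gradient's magnitude, and a low beta2 such as 0.7 makes the denominator follow recent gradient spikes quickly instead of averaging them over a long window.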

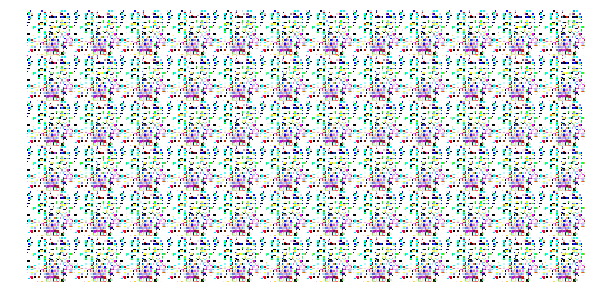

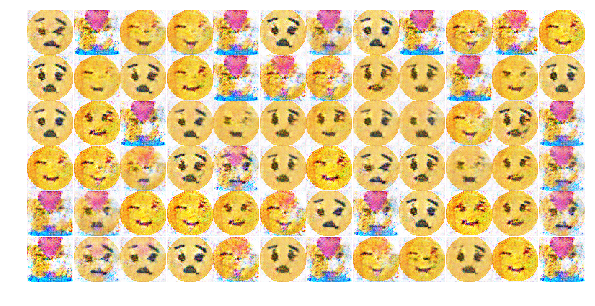

result

Best sample

Tuning Hyperparameters

training set: 1565 (multi-set, people)

generator design

convolutions: 4

features: 256 > 128 > 32 > 4

kernel size: 5

discriminator design

convolutions: 3

features: 32 > 128 > 256

kernel size: 5

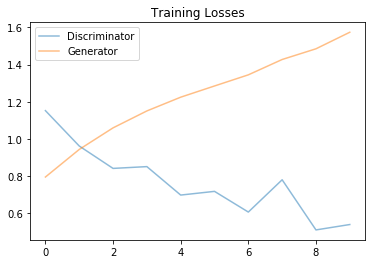

6dfe858

generator design

convolutions: 3

features: 128 > 64 > 4

kernel size: 5

discriminator design

convolutions: 2

features: 64 > 128

kernel size: 5

hyper params

training set: 1 (one round shocked face)

epochs: 1000

learning rate: 0.003

batch size: 32

opt.beta: 0.5

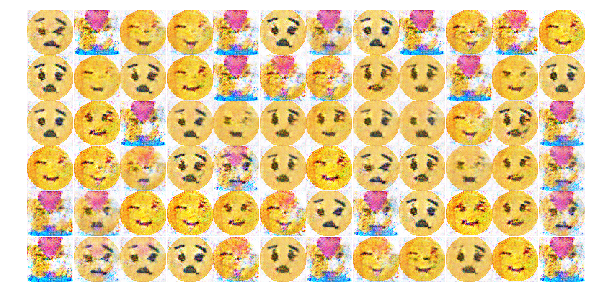

result

63abc3b

generator design

convolutions: 2

features: 1024 > 256 > 4

kernel size: 5

discriminator design

convolutions: 2

features: 64 > 256

kernel size: 5

hyper params

training set: 1 (one round shocked face)

epochs: 5000

learning rate: learning_rate_d=0.0003, learning_rate_g=0.001

batch size: 32

opt.beta: 0.5

result

8c948e8

generator design

convolutions: 3

features: 512 > 128 > 64

kernel size: 5

discriminator design

convolutions: 3

features: 64 > 128 > 512

kernel size: 5

hyper params

training set: 1 (one round shocked face)

epochs: 200

learning rate: learning_rate_d=0.0002, learning_rate_g=0.0002

batch size: 768

opt.beta: 0.5

result

Note: The goal of this run was to prove that a DCGAN is able to train on a single image and will end up replicating it. This was a way of testing the overall chain, and it exposed a bug in the data preparation methods.
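In the same spirit as this single-image run, a cheap test of the data preparation is to check that an image survives the round trip into the generator's value range and back. The sketch assumes 8-bit pixels scaled to [-1, 1] to match a tanh output layer, the usual DCGAN convention; the notes do not say which scaling the notebook uses, and `to_model_range`/`to_pixels` are hypothetical names.

```python
def to_model_range(pixels):
    """Map 8-bit values 0..255 to floats in [-1, 1] (tanh range)."""
    return [p / 127.5 - 1.0 for p in pixels]


def to_pixels(values):
    """Map floats in [-1, 1] back to 8-bit values, clamping out-of-range outputs."""
    return [min(255, max(0, round((v + 1.0) * 127.5))) for v in values]


image = [0, 1, 64, 127, 128, 200, 255]  # a few RGBA channel values from one image
scaled = to_model_range(image)
assert min(scaled) >= -1.0 and max(scaled) <= 1.0
assert to_pixels(scaled) == image, "data preparation does not round-trip"
print("round trip ok")
```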

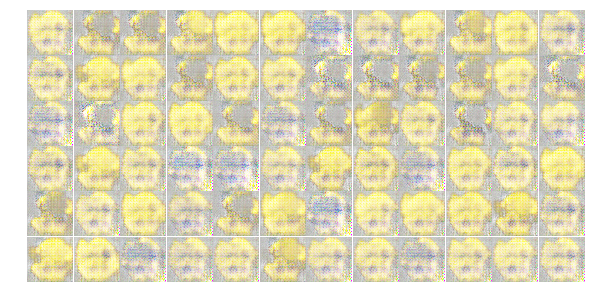

86359f9

generator design

convolutions: 3

features: 512 > 128 > 64

kernel size: 5

discriminator design

convolutions: 3

features: 64 > 128 > 512

kernel size: 5

hyper params

training set: 273 (the 1565-image set filtered for yellow emojis)

epochs: 800

learning rate: learning_rate_d=0.0003, learning_rate_g=0.0003

batch size: 768

opt.beta: 0.5
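The notes do not say how the 1565 images were filtered for being yellow. One plausible heuristic, sketched here purely as an assumption: convert the opaque pixels to HSV and keep an image when enough of them fall in a saturated yellow hue band. The function name, hue band, saturation and coverage thresholds are made-up illustrative values, not the project's.

```python
import colorsys


def is_yellow(pixels, hue_range=(40 / 360, 65 / 360), min_saturation=0.5, min_share=0.4):
    """Return True if enough opaque pixels are saturated yellow.

    `pixels` is an iterable of (r, g, b, a) tuples with 0..255 channels.
    All thresholds are illustrative guesses, not values from the project.
    """
    opaque = [(r, g, b) for r, g, b, a in pixels if a > 127]
    if not opaque:
        return False
    yellow = 0
    for r, g, b in opaque:
        h, s, v = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
        if hue_range[0] <= h <= hue_range[1] and s >= min_saturation:
            yellow += 1
    return yellow / len(opaque) >= min_share


# Synthetic stand-ins: a yellow face with a brown mouth and transparent corners, and a blue heart.
face = [(255, 204, 77, 255)] * 80 + [(102, 51, 0, 255)] * 20 + [(0, 0, 0, 0)] * 50
blue_heart = [(30, 90, 230, 255)] * 100
print(is_yellow(face), is_yellow(blue_heart))
```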

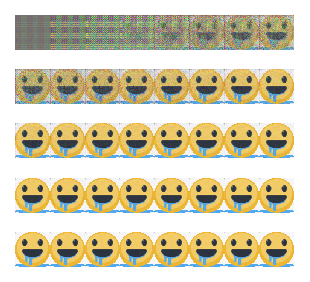

result

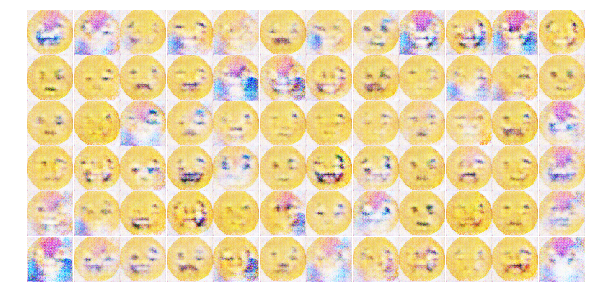

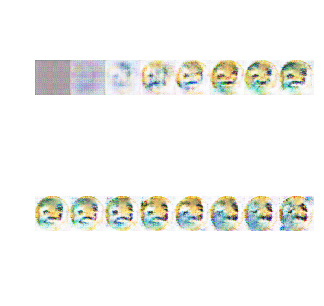

Degradation of diversity

Interestingly, the network managed to learn diverse features and combine them, but at around epoch 600 the output became scrambled and it forgot some of the features, such as a stuck-out tongue.

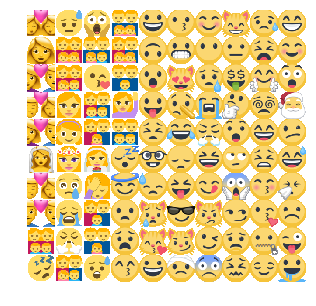

The network was trained with several emojis of this type:

Emoji shape forming at epoch 50

First details emerging at epoch 150

Diversity in the generated images at epoch 600

Something introducing a lot of noise at epoch 650

Final result at epoch 800 with fewer features than at epoch 600
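The loss of diversity between epoch 600 and epoch 800 was judged by eye. One way to put a number on it, offered as an assumption rather than something the notebook does, is the mean pairwise distance between the samples in a generated batch: if it falls over the epochs, the generator is producing more similar images, a symptom of partial mode collapse. Noise like that seen at epoch 650 also raises the value, so it is best read alongside the samples themselves.

```python
import itertools
import math


def mean_pairwise_distance(samples):
    """Average Euclidean distance over all pairs of flattened samples."""
    pairs = list(itertools.combinations(samples, 2))
    if not pairs:
        return 0.0
    total = sum(math.dist(a, b) for a, b in pairs)
    return total / len(pairs)


# Toy "flattened images": three distinct samples versus three near-identical ones.
varied = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
collapsed = [[0.5, 0.5], [0.5, 0.5], [0.5, 0.51]]
print(mean_pairwise_distance(varied), mean_pairwise_distance(collapsed))
```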

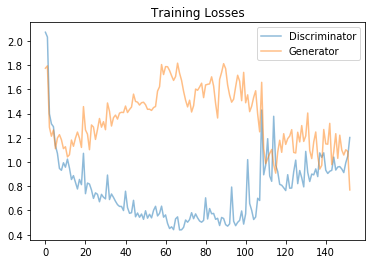

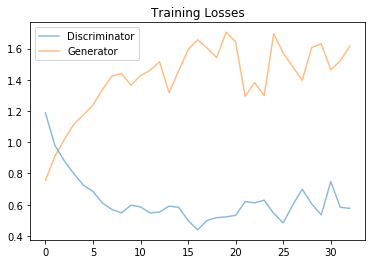

7e1480d

generator design

convolutions: 3

features: 256 > 128 > 64

kernel size: 5

discriminator design

convolutions: 3

features: 64 > 128 > 256

kernel size: 5

hyper params

training set: 141 (only "face")

epochs: 800

learning rate: learning_rate_d=0.0001, learning_rate_g=0.0001

batch size: 768

opt.beta: 0.5

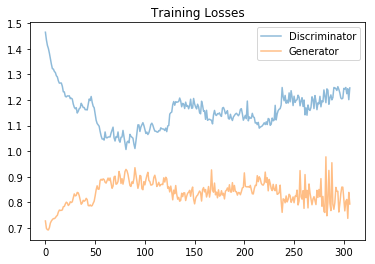

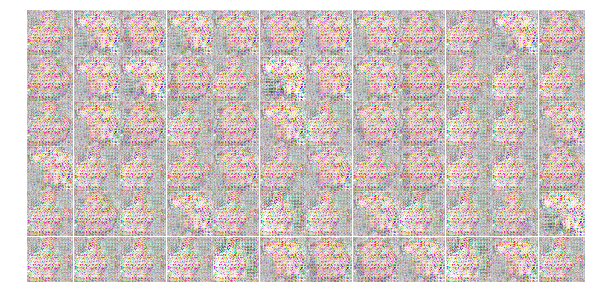

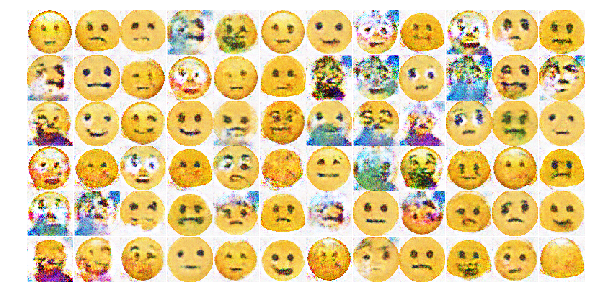

result

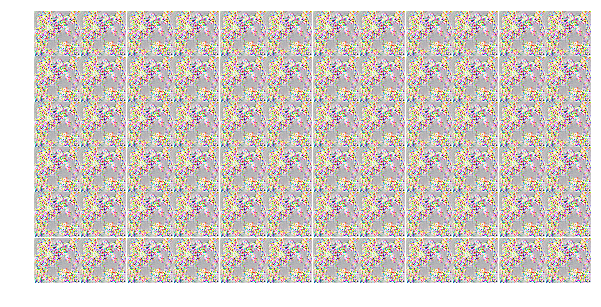

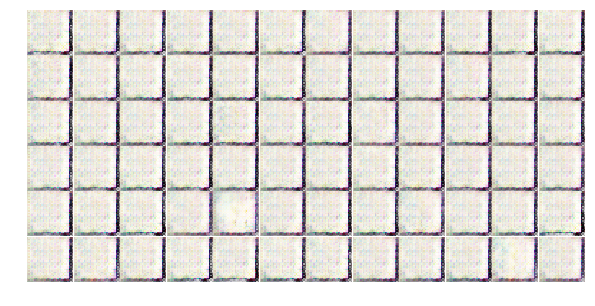

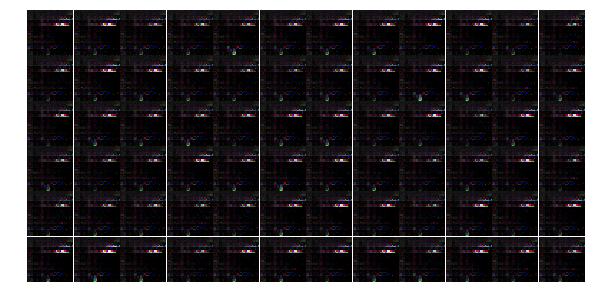

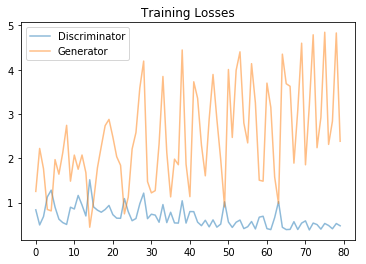

Evolution over epochs

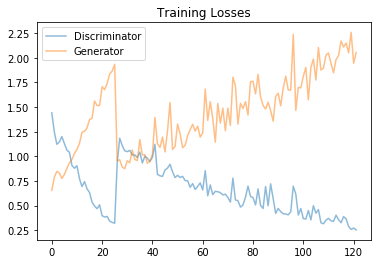

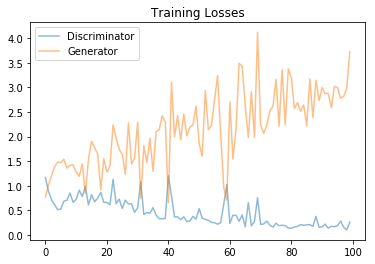

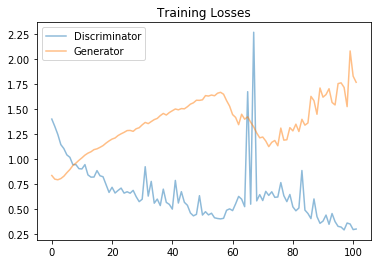

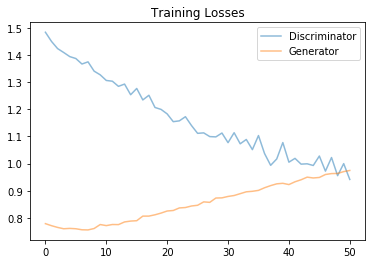

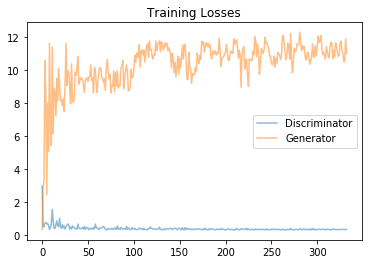

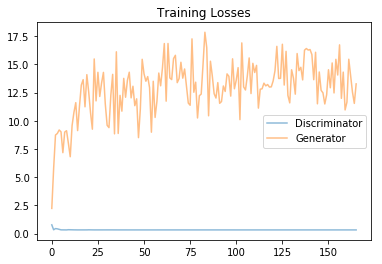

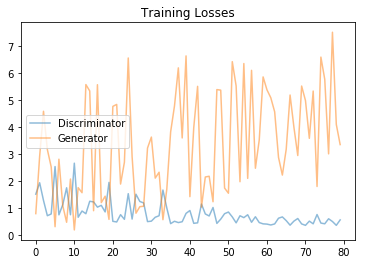

Losses

Epoch 200

Epoch 450

Final sample