This code is modified from the Gradient Norm OOD repository.

This work combines the Energy Score with the Learned Temperature from GODIN and ablates one term.
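As a rough illustration of how such a score could be computed, the sketch below contrasts a temperature-scaled energy score with a variant that drops the leading temperature multiplier. Which term is ablated is an assumption made here for illustration, since this README does not spell it out, and the function names `energy_score` and `ablated_energy_score` are hypothetical rather than taken from this repository.

```python
import math


def _logsumexp(values):
    """Numerically stable log(sum(exp(v))) over a list of floats."""
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values))


def energy_score(logits, temperature):
    """Temperature-scaled energy: -T * log(sum_i exp(logit_i / T))."""
    return -temperature * _logsumexp([z / temperature for z in logits])


def ablated_energy_score(logits, temperature):
    """Assumed ablation: drop the leading T multiplier, keep T inside the exponent."""
    return -_logsumexp([z / temperature for z in logits])
```

For example, `ablated_energy_score([2.0, 0.5, -1.0], temperature=1.5)` returns a single float for one input. In a learned-temperature model the temperature would be predicted per input rather than passed as a constant.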

Please download ImageNet-1k and place it in

./data/ImageNet/ILSVRC.

All remaining ID datasets for CIFAR10/100 evaluations are downloaded automatically by our script to ./data.

Following MOS, we evaluate ImageNet-1k against four OOD datasets: iNaturalist, SUN, Places, and Textures.

For iNaturalist, SUN, and Places, we sampled 10,000 images from the selected concepts for each dataset. They can be downloaded from the following links:

wget http://pages.cs.wisc.edu/~huangrui/imagenet_ood_dataset/iNaturalist.tar.gz

wget http://pages.cs.wisc.edu/~huangrui/imagenet_ood_dataset/SUN.tar.gz

wget http://pages.cs.wisc.edu/~huangrui/imagenet_ood_dataset/Places.tar.gz

For Textures, we use the entire dataset, which can be downloaded from their official website.

Please put all downloaded OOD datasets into ./data.

For more details about these OOD datasets, please check out the MOS paper.

All remaining OOD datasets for CIFAR10/100 evaluations are downloaded automatically by our script to ./data.

The following will create and activate a conda environment.

conda env create -f environment.yml

conda activate abet

To train a model, please run:

python main.py --save-model-path <path you would like to save model weights to> --architecture <"resnet20" for CIFAR10/100 and "resnet101" for ImageNet-1k> --out-dataset <OOD dataset> --in-dataset <ID dataset>

For reproducibility purposes, we host our pre-trained models in a common Google Drive folder.

All AbeT results use the models with the _learned_temperature.pth suffix, since our method requires a learned temperature.

To evaluate a model using AbeT, please run:

python main.py --load-model-path <path to model weights you would like to load> --architecture <"resnet20" for CIFAR10/100 and "resnet101" for ImageNet-1k> --out-dataset <OOD dataset> --in-dataset <ID dataset> --no-train --inference-mode ablated_energy
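To evaluate one model against several OOD datasets, the command above can be assembled programmatically. The sketch below only builds and prints the commands, using the flags shown above. The dataset flag values, the `"ImageNet"` ID dataset name, the weights path, and the helper name `build_eval_command` are assumptions for illustration; check `main.py` for the spellings it accepts.

```python
import shlex

# Assumed spellings of the --out-dataset values; main.py may expect different names.
IMAGENET_OOD_DATASETS = ["iNaturalist", "SUN", "Places", "Textures"]


def build_eval_command(model_path, architecture, in_dataset, out_dataset):
    """Return the AbeT evaluation command as an argument list."""
    return [
        "python", "main.py",
        "--load-model-path", model_path,
        "--architecture", architecture,
        "--out-dataset", out_dataset,
        "--in-dataset", in_dataset,
        "--no-train",
        "--inference-mode", "ablated_energy",
    ]


if __name__ == "__main__":
    for ood in IMAGENET_OOD_DATASETS:
        # Hypothetical weights path and ID dataset name, used only as placeholders.
        cmd = build_eval_command(
            "weights/model_learned_temperature.pth", "resnet101", "ImageNet", ood
        )
        print(shlex.join(cmd))
```

To actually launch a run, each argument list can be passed to `subprocess.run(cmd, check=True)` from the repository root.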

To reproduce Figure 1, add the --hist flag to the evaluation command; the result is saved to the pngs directory as <OOD dataset name>_<ID dataset name>_histogram.png.

To reproduce Figure 2, add the --tsne flag to the evaluation command; the result is saved to the pngs directory as <OOD dataset name>_<ID dataset name>_tsne.png.
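The naming scheme for the saved figures can be captured in a small helper, which is handy when collecting plots across many dataset pairs. This is a sketch only: `expected_plot_path` is a hypothetical function, not part of the repository, and it assumes the pngs directory is relative to the working directory.

```python
from pathlib import Path


def expected_plot_path(ood_dataset, id_dataset, kind, png_dir="pngs"):
    """Build the expected output path; kind is "histogram" (--hist) or "tsne" (--tsne)."""
    if kind not in ("histogram", "tsne"):
        raise ValueError(f"unknown plot kind: {kind!r}")
    return Path(png_dir) / f"{ood_dataset}_{id_dataset}_{kind}.png"
```

Calling `expected_plot_path(ood, id_, "tsne").exists()` after a run is a quick way to confirm the figure was written.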

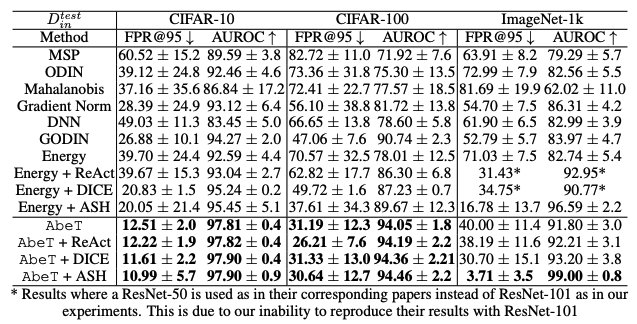

AbeT achieves state-of-the-art performance averaged over all standard OOD datasets in classification. Bold entries mark changes from our ICML submission on OpenReview, not the best results.

For more information about our Segmentation and Object Detection results, please see the segmentation and object_detection