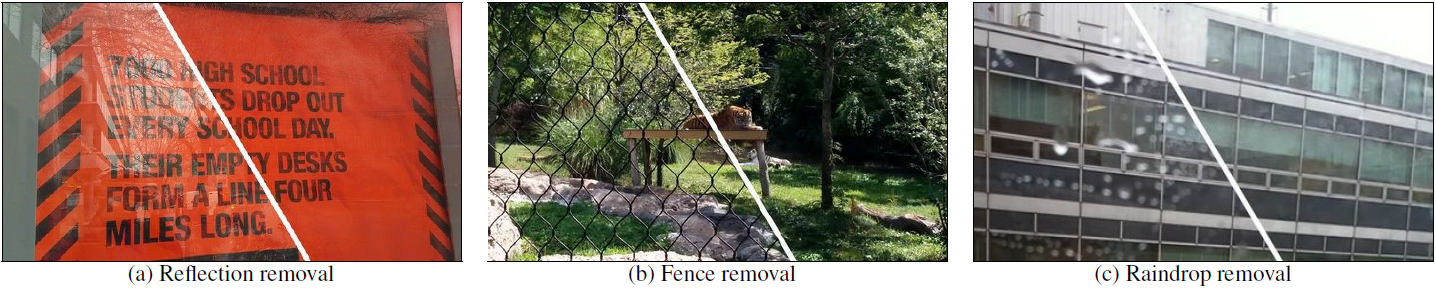

We present a learning-based approach for removing unwanted obstructions, such as window reflections, fence occlusions, or raindrops, from a short sequence of images captured by a moving camera. Our method leverages the motion differences between the background and the obstructing elements to recover both layers. Specifically, we alternate between estimating dense optical flow fields of the two layers and reconstructing each layer from the flow-warped images via a deep convolutional neural network. The learning-based layer reconstruction allows us to accommodate potential errors in the flow estimation and brittle assumptions such as brightness consistency. We show that training on synthetically generated data transfers well to real images. Our results on numerous challenging scenarios of reflection and fence removal demonstrate the effectiveness of the proposed method.
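The alternation described above can be illustrated with a small NumPy sketch. This is not the code in this repository: the function `alternate_layers` and its four callables are hypothetical names, and the stand-ins used here (zero flow, identity warp, per-pixel minimum and residual) are simple heuristics that replace the learned flow and reconstruction networks purely so the loop can run end to end.

```python
import numpy as np


def alternate_layers(frames, estimate_flow, warp,
                     reconstruct_background, reconstruct_obstruction,
                     num_iters=3):
    """Alternate between per-layer flow estimation and layer reconstruction.

    All four callables are placeholders (assumptions); in the paper these
    roles are played by learned networks.
    """
    keyframe = frames[len(frames) // 2]   # assumption: middle frame is the reference
    background = keyframe.copy()
    obstruction = np.zeros_like(keyframe)
    for _ in range(num_iters):
        # Step 1: estimate each layer's motion toward every input frame.
        bg_flows = [estimate_flow(background, f) for f in frames]
        ob_flows = [estimate_flow(obstruction, f) for f in frames]
        # Step 2: align the frames with each layer's motion, then
        # reconstruct that layer from the aligned stack.
        bg_aligned = [warp(f, fl) for f, fl in zip(frames, bg_flows)]
        ob_aligned = [warp(f, fl) for f, fl in zip(frames, ob_flows)]
        background = reconstruct_background(bg_aligned)
        obstruction = reconstruct_obstruction(ob_aligned)
    return background, obstruction


# Trivial stand-ins so the sketch executes; none of these is learned.
def zero_flow(src, dst):
    return np.zeros(src.shape[:2] + (2,), dtype=np.float32)


def identity_warp(frame, flow):
    return frame


def min_composite(aligned):
    return np.min(np.stack(aligned), axis=0)


def residual_composite(aligned):
    stack = np.stack(aligned)
    return np.mean(stack, axis=0) - np.min(stack, axis=0)


rng = np.random.default_rng(0)
frames = [0.2 + 0.1 * rng.random((4, 4, 3)) for _ in range(5)]
background, obstruction = alternate_layers(
    frames, zero_flow, identity_warp, min_composite, residual_composite)
```

Passing the flow estimator and the two reconstructors as arguments keeps the loop structure visible on its own; swapping in real models would only change the callables, not the alternation.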

Paper

This is the authors' reference implementation, in TensorFlow, of the multi-image reflection removal method described in "Learning to See Through Obstructions" by Yu-Lun Liu, Wei-Sheng Lai, Ming-Hsuan Yang, Yung-Yu Chuang, and Jia-Bin Huang (National Taiwan University & Google & Virginia Tech & University of California at Merced & MediaTek Inc.), CVPR 2020. If you find this code useful for your research, please consider citing the paper [1].

For further information, please contact Yu-Lun Liu.

- Tested using TensorFlow 1.10.0.
- Please overwrite `tfoptflow/model_pwcnet.py` and `tfoptflow/model_base.py` using the ones in this repository.
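The overwrite step above could be scripted roughly as follows. This is a minimal sketch: the helper name `overwrite_tfoptflow_models` and both directory arguments are assumptions, since the locations of this repository's copies and of your `tfoptflow` checkout depend on your setup.

```python
import shutil
from pathlib import Path

# The two files named in the setup instructions.
MODEL_FILES = ("model_pwcnet.py", "model_base.py")


def overwrite_tfoptflow_models(patched_dir, tfoptflow_dir):
    """Copy the patched model files over the ones inside tfoptflow.

    patched_dir:   directory holding this repository's versions (assumed path).
    tfoptflow_dir: the `tfoptflow/` directory to be patched (assumed path).
    """
    patched_dir = Path(patched_dir)
    tfoptflow_dir = Path(tfoptflow_dir)
    copied = []
    for name in MODEL_FILES:
        src = patched_dir / name
        dst = tfoptflow_dir / name
        if not src.is_file():
            raise FileNotFoundError(f"patched file not found: {src}")
        if not dst.is_file():
            # Refuse to create new files; we only expect to replace existing ones.
            raise FileNotFoundError(f"target file not found: {dst}")
        shutil.copy2(src, dst)
        copied.append(dst)
    return copied
```

Failing when a target file is missing, rather than silently creating it, guards against pointing the helper at the wrong directory.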

To download the pre-trained models:

- Run your own sequence (reflection removal):

`CUDA_VISIBLE_DEVICES=0 python3 run_reflection.py`

- Run your own sequence (fence removal):

`CUDA_VISIBLE_DEVICES=0 python3 test_fence.py`

[1] Yu-Lun Liu, Wei-Sheng Lai, Ming-Hsuan Yang, Yung-Yu Chuang, and Jia-Bin Huang. Learning to See Through Obstructions. Proceedings of IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2020.