NOTE: THIS REPO IS NO LONGER MAINTAINED OR UPDATED. IT IS HERE FOR HISTORICAL REFERENCE❗ Red Hat does not provide commercial support for the content of this repo

##############################################################################

DISCLAIMER: THE CONTENT OF THIS REPO IS PROVIDED "AS-IS"

THE CONTENT IS PROVIDED AS REFERENCE WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT.

##############################################################################

This is reference documentation for POCs of an OpenShift 4.6 UPI bare-metal deployment using PXE Boot.

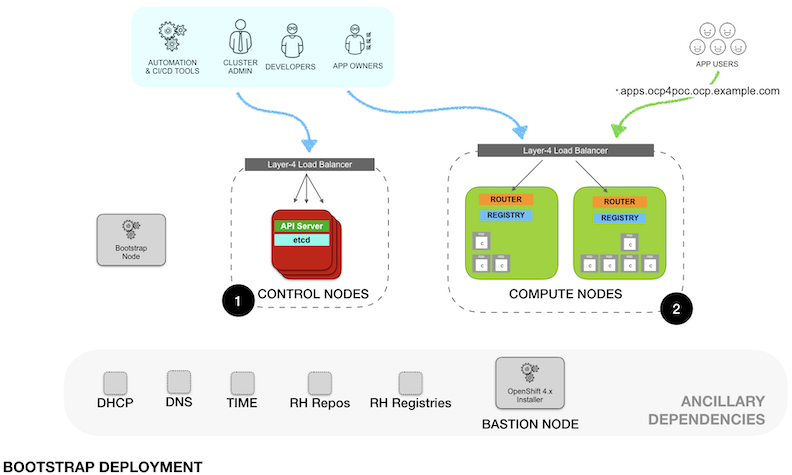

The initial deployment is as per the following diagram

NOTE: Even though the diagram shows two different load balancers, the access for operations and administration tasks and the application traffic are both documented to go over the same load balancer. For a production-grade deployment, the control and operation ports (K8s API, Machine Config Server, etc.) should not be exposed outside the organization.

- Clone this repo to the Bastion Node

- Edit the `install-config.yaml` to match your environment

- Execute the `poc.sh` script:

./poc.sh clean

./poc.sh ignition

./poc.sh custom

./poc.sh prep_ign

- Power up the Bootstrap Node and PXE install the RHCOS

- Power up the Master Nodes and PXE install the RHCOS

# Monitor bootstrap progress:

./poc.sh debug_bootstrap

- Once the bootstrap is complete, shut down the Bootstrap Node

- Power up the Worker Nodes and PXE install

# Monitor OCP install

./poc.sh debug_install

- Monitor CSR requests from the Worker Nodes and accept the certificates

NOTE: There are two CSRs per Node that need to be accepted.

$ export KUBECONFIG=./ocp4poc/auth/kubeconfig

$ ./oc get csr

$ ./oc adm certificate approve <crt-name>
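Since each Node produces two CSRs, it can help to list only the pending ones before approving them. The following is a minimal sketch, not part of `poc.sh`: the helper name `pending_csr_names` is hypothetical, and it assumes the condition is the last column of the `oc get csr --no-headers` output.

```shell
#!/bin/sh
# Hypothetical helper: print the names of CSRs whose last column is "Pending".
# Reads `oc get csr --no-headers` style output on stdin.
# Assumption: the CONDITION column is the last one on each line.
pending_csr_names() {
    awk '$NF == "Pending" { print $1 }'
}

# Usage sketch (needs a reachable cluster, so it is left commented out):
#   export KUBECONFIG=./ocp4poc/auth/kubeconfig
#   ./oc get csr --no-headers | pending_csr_names | xargs -r -n 1 ./oc adm certificate approve
```

Review the printed names before piping them into the approve command, especially outside a POC environment.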

- Base Domain: example.com

- Cluster Name: ocp4poc

| NODE | IP ADDRESS |
|---|---|
| bootstrap | 192.168.1.10 |
| master-0 | 192.168.1.11 |
| master-1 | 192.168.1.12 |
| master-2 | 192.168.1.13 |
| worker-0 | 192.168.1.15 |
| worker-1 | 192.168.1.16 |
| worker-2 | 192.168.1.17 |

NOTE: For easy customization, clone this repo to your Bastion Node.

Modify the following environment variables of ./poc.sh script to match your environment.

OCP_RELEASE_PATH=ocp # valid options are "ocp" or "ocp-dev-preview"

OCP_SUBRELEASE=4.6.0-rc.3

RHCOS_RELEASE=pre-release # "4.5" for latest stable, "pre-release" for nightly

WEBROOT=/opt/nginx/html

TFTPROOT=/opt/dnsmasq # Using dnsmasq container as tftpserver, otherwise /tftpboot or /var/lib/tftpboot

POCDIR=ocp4

NOTE: The following instructions assume these variables have been customized.
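A quick sanity check of the edited values can catch a typo before any download starts. This is a sketch only: the function name `valid_release_path` is hypothetical and is not defined in `poc.sh`; the two accepted values come from the comment next to `OCP_RELEASE_PATH` above.

```shell
#!/bin/sh
# Hypothetical helper: succeed only for the two release paths listed in the
# comment next to OCP_RELEASE_PATH ("ocp" or "ocp-dev-preview").
valid_release_path() {
    case "$1" in
        ocp|ocp-dev-preview) return 0 ;;
        *) return 1 ;;
    esac
}

# Default to "ocp" when the variable is not set in the current shell.
OCP_RELEASE_PATH=${OCP_RELEASE_PATH:-ocp}
if valid_release_path "$OCP_RELEASE_PATH"; then
    echo "OCP_RELEASE_PATH=$OCP_RELEASE_PATH looks valid"
else
    echo "OCP_RELEASE_PATH must be 'ocp' or 'ocp-dev-preview'" >&2
fi
```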

- Example of forward DNS records included in the `utils` folder

- Example of reverse records included in the `utils` folder

- DNS must have entries for:

  - DNS forward records for all Nodes

  - DNS reverse records for all the Nodes

  - DNS entries for special records used by OCP: `etcd`, `etcd srv`, `api`, `api-int`, and `*.apps` wildcard subdomain

Reference official documentation for details on special entries required in DNS.
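To illustrate what a matching forward and reverse pair looks like, the sketch below prints zone-file style A and PTR records for one Node. It is not the content of the `utils` examples. The helper names are hypothetical, and the FQDN layout `<node>.<cluster name>.<base domain>` is an assumption based on the cluster name and base domain listed earlier.

```shell
#!/bin/sh
# Hypothetical helper: print the in-addr.arpa name for an IPv4 address.
reverse_name() {
    echo "$1" | awk -F. '{ printf "%s.%s.%s.%s.in-addr.arpa.\n", $4, $3, $2, $1 }'
}

# Hypothetical helper: print one forward (A) and one reverse (PTR) record.
# Arguments: node name, IP address, cluster name, base domain.
# Assumption: node FQDNs follow <node>.<cluster name>.<base domain>.
node_records() {
    fqdn="$1.$3.$4."
    printf '%s IN A %s\n' "$fqdn" "$2"
    printf '%s IN PTR %s\n' "$(reverse_name "$2")" "$fqdn"
}

node_records master-0 192.168.1.11 ocp4poc example.com
```

The special records (`api`, `api-int`, `*.apps`, and the etcd entries) are not covered by this sketch; follow the official documentation for those.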

NOTE: If the master and worker nodes cannot reach the external NTP and DNS servers directly, you can set up a proxy DNS. In that setup, DNS/NTP queries flow as follows:

- master node > bastion node > external DNS/NTP server

- worker node > bastion node > external DNS/NTP server

In this case there is no need to use the bastion as a recursive DNS; set port=0 in the /etc/dnsmasq.conf file.

NOTE: When setting up the external DNS server, make sure the priorities of the A records for master and etcd are set properly. The master A records should have a higher priority than the etcd A records. Otherwise, the reverse lookup performed while installing the master nodes might return the etcd FQDN instead of the master FQDN.

NOTE: If using a Microsoft external DNS server, you can use CNAME records for the etcd FQDNs.

- Set up the load balancer configuration in pass-through mode for the Kubernetes API (`tcp/6443`), Machine Config Server (`tcp/22623`), and OpenShift Routers HTTP and HTTPS (`tcp/80`, `tcp/443`)

Reference Load Balancer configurations available in the utils folder (use one of the two):

- Load balancer using HAProxy at system level (installed from RPM)

- Load balancer using HAProxy as System Container managed by systemd

NOTE: If seeing port bind errors starting the load balancer check SELinux settings:

# List the permitted ports

semanage port -l | grep http_port_t

# Add ports if needed

semanage port -a -t http_port_t -p tcp 6443

semanage port -a -t http_port_t -p tcp 22623

semanage port -m -t http_port_t -p tcp 8000
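To avoid adding a port that is already labeled, the listing can be checked first. The sketch below is illustrative only: `http_port_listed` is a hypothetical helper, it matches exact port numbers in the `http_port_t` / `tcp` line of `semanage port -l`, and it does not expand port ranges.

```shell
#!/bin/sh
# Hypothetical helper: succeed when the given port is listed for http_port_t/tcp.
# Reads `semanage port -l` output on stdin.
# Limitation: only exact port numbers match; ranges like 8000-8010 are not expanded.
http_port_listed() {
    awk -v port="$1" '
        $1 == "http_port_t" && $2 == "tcp" {
            for (i = 3; i <= NF; i++) {
                p = $i
                sub(/,$/, "", p)      # strip the trailing comma between ports
                if (p == port) found = 1
            }
        }
        END { exit(found ? 0 : 1) }
    '
}

# Usage sketch (needs root and the semanage tool, so it is left commented out):
#   semanage port -l | http_port_listed 6443 || semanage port -a -t http_port_t -p tcp 6443
```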

- Install PXE Boot pre-requisites

yum -y install https://dl.fedoraproject.org/pub/epel/epel-release-latest-7.noarch.rpm

yum -y install tftp-server dnsmasq syslinux-tftpboot tree python36 jq oniguruma

subscription-manager repos --enable rhel-7-server-extras-rpms

yum -y install podman skopeo

- (optional) Setup HAProxy as load balancer

  - Update `./utils/haproxy.cfg` to match your environment

mkdir -pv /opt/haproxy

cp ./utils/haproxy.cfg /opt/haproxy

cp ./utils/ocp-lb.service /etc/systemd/system/ocp-lb.service

podman pull haproxy

systemctl daemon-reload

systemctl start ocp-lb

systemctl status ocp-lb

systemctl enable ocp-lb

Note: To accept asymmetrically routed packets set rp_filter = 2 (Credits: Thanks to Jay Cromer)

echo "net.ipv4.conf.default.rp_filter = 2" >> /etc/sysctl.conf

echo "net.ipv4.conf.all.rp_filter = 2" >> /etc/sysctl.conf

echo 2 > /proc/sys/net/ipv4/conf/default/rp_filter

echo 2 > /proc/sys/net/ipv4/conf/all/rp_filter

- Setup web server for Ignition and PXE files

mkdir -pv /opt/nginx/html/metal

cp ./utils/ocp-http.service /etc/systemd/system/ocp-http.service

podman pull nginx

systemctl daemon-reload

systemctl start ocp-http

systemctl status ocp-http

systemctl enable ocp-http

- (if already running Bind server in Bastion) Disable DNS in DNSmasq by setting `port=0`

vi /etc/dnsmasq.conf

...

port=0

...

- Configure DHCP and DHCP PXE Options following the reference dnsmasq-pxe.conf

cp ./utils/dnsmasq-pxe.conf /etc/dnsmasq.d/dnsmasq-pxe.conf

NOTE: Update /etc/dnsmasq.d/dnsmasq-pxe.conf to match environment

- Create PXE Boot menu to be used by the environment /var/lib/tftpboot/pxelinux.cfg/default

mkdir -pv /var/lib/tftpboot/pxelinux.cfg/

cp ./utils/pxelinux.cfg-default-bios /var/lib/tftpboot/pxelinux.cfg/default

NOTE: Update /var/lib/tftpboot/pxelinux.cfg/default to match environment.
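When adapting the menu, it may help to see how one entry is typically assembled for the RHCOS live PXE images. The sketch below is not the content of `pxelinux.cfg-default-bios`. The function name, the web server URL, and the install device are hypothetical placeholders, and it assumes the kernel and initramfs sit in the TFTP root under the file names shown in the image listing of this document.

```shell
#!/bin/sh
# Hypothetical helper: print one pxelinux LABEL entry for a node role.
# Arguments: role (bootstrap, master or worker), base URL of the web server
# that serves the rootfs image and Ignition files, target install device.
# Assumption: kernel and initramfs are served from the TFTP root.
pxe_entry() {
    role=$1
    base_url=$2
    device=$3
    label=$(echo "$role" | tr '[:lower:]' '[:upper:]')
    cat <<EOF
LABEL $label
    KERNEL rhcos-live-kernel-x86_64
    APPEND initrd=rhcos-live-initramfs.x86_64.img coreos.live.rootfs_url=$base_url/rhcos-live-rootfs.x86_64.img coreos.inst.install_dev=$device coreos.inst.ignition_url=$base_url/$role.ign
EOF
}

# Placeholder URL and device; replace with the values of your environment.
pxe_entry bootstrap http://192.168.1.1:8000/metal /dev/sda
```

The `coreos.inst.*` and `coreos.live.rootfs_url` arguments are the ones used by the RHCOS live installer; compare the result with the reference file in `utils` before using it.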

- Download RHCOS images.

  - Running `./poc.sh get_images` downloads all the images to `./images` in your current directory. It should be similar to this list (versions may be different):

images/
├── openshift-client-linux-4.6.0-rc.3.tar.gz
├── openshift-install-linux-4.6.0-rc.3.tar.gz
├── rhcos-live-initramfs.x86_64.img
├── rhcos-live-kernel-x86_64
├── rhcos-live-rootfs.x86_64.img
└── rhcos-live.x86_64.iso

  - Extract the `openshift-client-linux-<release>.tar.gz` and the `openshift-install-linux-<release>.tar.gz` into your current directory. This will provide the `openshift-install`, `oc` and `kubectl` binaries.

- Copy RHCOS PXE images and RHCOS images into the corresponding folders

./poc.sh prep_images

- Uncompress installer and client binaries into current directory

./poc.sh prep_installer
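For reference, a manual equivalent of the extraction step could look like the sketch below. It is not necessarily what `prep_installer` does internally. The function name `extract_tools` is hypothetical, and the archive names follow the pattern shown in the image listing of this document.

```shell
#!/bin/sh
# Hypothetical helper: extract the client and installer archives for one release.
# Arguments: directory holding the archives, release string, destination directory.
extract_tools() {
    images_dir=$1
    release=$2
    dest=$3
    for name in openshift-client-linux openshift-install-linux; do
        archive="$images_dir/$name-$release.tar.gz"
        if [ ! -f "$archive" ]; then
            echo "missing archive: $archive" >&2
            return 1
        fi
        # Unpack into the destination directory; stop on the first failure.
        tar -xzf "$archive" -C "$dest" || return 1
    done
}

# Example (left commented out because it needs the downloaded archives):
#   extract_tools ./images 4.6.0-rc.3 .
```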

firewall-cmd --zone=public --change-interface=eth0

firewall-cmd --zone=trusted --change-interface=eth1

firewall-cmd --get-active-zones

firewall-cmd --zone=public --permanent --add-port=6443/tcp

firewall-cmd --zone=public --permanent --add-port=22623/tcp

firewall-cmd --zone=public --permanent --add-service=http

firewall-cmd --zone=public --permanent --add-service=https

firewall-cmd --zone=public --permanent --add-service=dns

firewall-cmd --reload

firewall-cmd --zone=public --list-services

firewall-cmd --zone=public --list-ports

-

Create or edit

install-config.yamlto include the pull secret obtained from https://try.openshift.com -

Add the SSH Key to be used to access the Bootstrap Node to the

install-config.yaml -

Generate the Ignition files:

./poc.sh ignition -

Apply custom patch for NetworkManager:

./poc.sh custom -

Copy Ignition files into web server path

./poc.sh prep_ign -

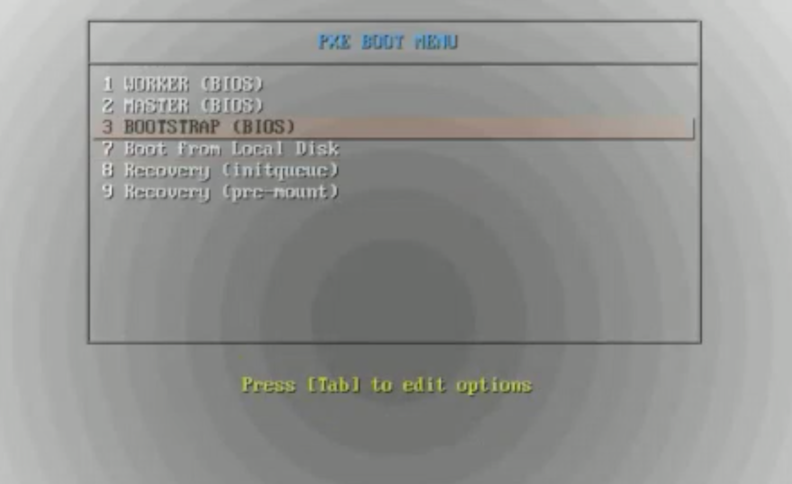

Boot the Bootstrap Node and at the PXE MENU select the

BOOTSTRAPoption and pressEnter -

Wait until the bootstrap Node is up and showing the login prompt

(missing image here)

-

Monitor bootstrap progress using the script or the equivalent

openshift-install wait-for ...command./poc.sh bootstrap -

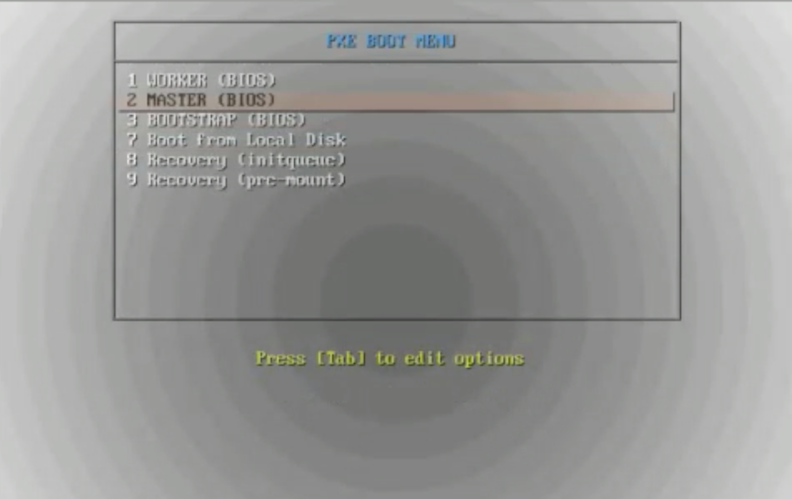

Start the Master Nodes and select the

MASTERoption from the PXE menu -

The boot process of the Masters will start the RHCOS installation and use the Ignition configuration during the first install.

- After RHCOS is installed in the Node, it will be up for a few minutes showing the login prompt and eventually will reboot. This is a normal part of the automatic upgrade process of RHCOS.

- The Master node receives the actual configuration as a rendered Machine Config from the Bootstrap Nodes.

- During the process, the Cluster Version Operator instruct the Master Nodes to start deploying the components corresponding to the Master Node.

- One of the actions is the upgrade of RHCOS so the Node will download latest RHCOS version and will apply it.

- After upgrading the OS, the Nodes reboot into the latest version of RHCOS

- During the process, the Cluster Version Operator instruct the Master Nodes to start deploying the components corresponding to the Master Node.

- After RHCOS is installed in the Node, it will be up for a few minutes showing the login prompt and eventually will reboot. This is a normal part of the automatic upgrade process of RHCOS.

- Once the Bootstrap Node reports the bootstrap process as completed and indicates it is safe to shut down the Bootstrap Node, proceed to shut down the Node.

  - At this point etcd has achieved quorum and the Master Nodes are fully operational

(missing image here)
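The monitoring steps in this walkthrough mention an equivalent `openshift-install wait-for ...` command. A minimal sketch of such a call is shown below. The wrapper name `wait_for_stage` and the `INSTALLER` variable are hypothetical, and the asset directory `ocp4poc` is assumed from the kubeconfig path used earlier in this document.

```shell
#!/bin/sh
# Command used to run the installer. Override INSTALLER to point at a local
# copy such as ./openshift-install (this variable is an assumption of the sketch).
INSTALLER=${INSTALLER:-openshift-install}

# Hypothetical wrapper: wait for an installer stage.
# Arguments: asset directory, stage (bootstrap-complete or install-complete).
wait_for_stage() {
    case "$2" in
        bootstrap-complete|install-complete) ;;
        *) echo "unknown stage: $2" >&2; return 1 ;;
    esac
    "$INSTALLER" wait-for "$2" --dir="$1" --log-level=info
}

# Examples (left commented out because they need a running installation):
#   wait_for_stage ocp4poc bootstrap-complete
#   wait_for_stage ocp4poc install-complete
```

The `bootstrap-complete` stage corresponds to the point where the Bootstrap Node can be shut down; `install-complete` corresponds to the end of the cluster installation.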

-

Proceed to monitor the Installation process using the script or the equivalent

openshift-install wait-for ...command./poc install -

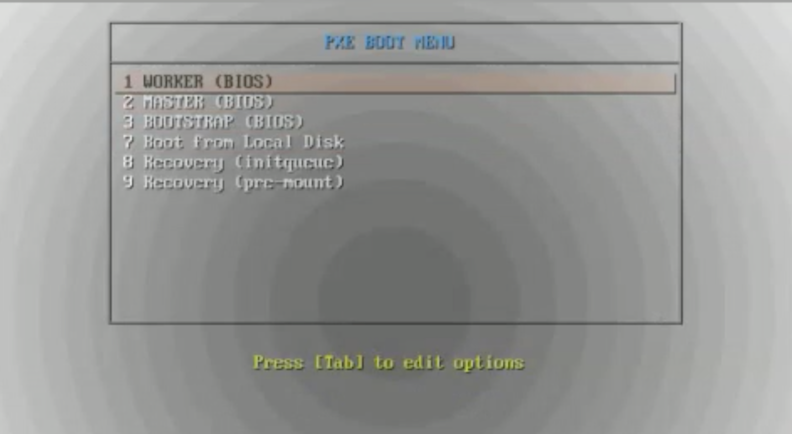

Proceed to boot the Worker Nodes and select the

WORKERoption fromt he PXE menu -

After RHCOS is installed in the Worker node, it will go over a similar process as with the Master Nodes but this time, there will be a Certificate Signing Request (CSR) that need to be accepted before it proceeds.

- The script provides

./poc approveto automattically approve any pending certificate. NOTE: In a production environment, the certificates should be approved ONLY after confirming the CSR is from a valid and authorized Worker Node.

- The script provides

(missing image here)

- A successful installation will show all the cluster operators available. The

image-registrywill not become active until a cluster administrator configure storage for the registry.

# export KUBECONFIG=`pwd`/ocp4poc/auth/kubeconfig

# oc get co

NAME VERSION AVAILABLE PROGRESSING DEGRADED SINCE

authentication 4.6.0-rc.3 True False False 8m42s

cloud-credential 4.6.0-rc.3 True False False 69m

cluster-autoscaler 4.6.0-rc.3 True False False 63m

config-operator 4.6.0-rc.3 True False False 65m

console 4.6.0-rc.3 True False False 38m

csi-snapshot-controller 4.6.0-rc.3 True False False 64m

dns 4.6.0-rc.3 True False False 63m

etcd 4.6.0-rc.3 True False False 63m

image-registry 4.6.0-rc.3 True False False 45m

ingress 4.6.0-rc.3 True False False 42m

insights 4.6.0-rc.3 True False False 65m

kube-apiserver 4.6.0-rc.3 True False False 62m

kube-controller-manager 4.6.0-rc.3 True False False 62m

kube-scheduler 4.6.0-rc.3 True False False 62m

kube-storage-version-migrator 4.6.0-rc.3 True False False 16m

machine-api 4.6.0-rc.3 True False False 63m

machine-approver 4.6.0-rc.3 True False False 63m

machine-config 4.6.0-rc.3 True False False 63m

marketplace 4.6.0-rc.3 True False False 63m

monitoring 4.6.0-rc.3 True False False 41m

network 4.6.0-rc.3 True False False 65m

node-tuning 4.6.0-rc.3 True False False 65m

openshift-apiserver 4.6.0-rc.3 True False False 44m

openshift-controller-manager 4.6.0-rc.3 True False False 63m

openshift-samples 4.6.0-rc.3 True False False 44m

operator-lifecycle-manager 4.6.0-rc.3 True False False 64m

operator-lifecycle-manager-catalog 4.6.0-rc.3 True False False 64m

operator-lifecycle-manager-packageserver 4.6.0-rc.3 True False False 42m

service-ca 4.6.0-rc.3 True False False 64m

storage 4.6.0-rc.3 True False False 65m
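With this many operators, scanning the listing by eye is error-prone. The sketch below filters the output down to operators that are not yet healthy. The helper name `unhealthy_operators` is hypothetical, and it assumes the column order shown above (NAME, VERSION, AVAILABLE, PROGRESSING, DEGRADED, SINCE).

```shell
#!/bin/sh
# Hypothetical helper: print cluster operators that are not Available=True,
# Progressing=False and Degraded=False.
# Reads `oc get co` output on stdin; the header line and short lines are skipped.
unhealthy_operators() {
    awk 'NR > 1 && NF >= 5 && ($3 != "True" || $4 != "False" || $5 != "False") { print $1 }'
}

# Usage sketch (needs a reachable cluster, so it is left commented out):
#   oc get co | unhealthy_operators
```

An empty result suggests every operator reports the healthy combination; any printed name is worth inspecting with `oc describe co <name>`.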

- Adding ephemeral storage to the image registry can be done with the following command:

- NOTE: ONLY USE THIS TYPE OF STORAGE FOR TESTING

oc patch configs.imageregistry.operator.openshift.io cluster --type merge --patch '{"spec":{"storage":{"emptyDir":{}}}}'

- Set the PVC name for the image registry

oc patch configs.imageregistry.operator.openshift.io cluster --type merge --patch '{"spec":{"storage":{"pvc":{"claim":"{changeme}"}}}}'

- For dynamic PVC creation by the operator, edit the configuration and delete the name of the claim

oc edit configs.imageregistry.operator.openshift.io

# Remove the 'changeme' (including the '') and leave 'claim:' only.

...
storage:
  pvc:
    claim:
...

- Create PV for the image registry.

Example: `pv-image-registry.yaml`

apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-image-registry
spec:
  capacity:
    storage: 100Gi
  accessModes:
  - ReadWriteMany
  nfs:
    path: /path/to/nfs/image-registry
    server: 192.168.88.7
  persistentVolumeReclaimPolicy: Retain