Udacity Bertelsmann Technical Scholarship Cloud Track Challenge Project - Deploy An AI Sentiment Prediction App to AWS Cloud

Audrey, Adrik and Christopher from the Cloud Track Challenge are the co-creators of this project

Project Website: 🌟 AI Sentiment Prediction App on AWS 🌟

To save costs, only 1 instance of the AI prediction engine is running for the demo, though the app can scale up to 3 instances

Presentation: view this page, Google Drive (PowerPoint) or GitHub (PDF)

The project has 3 code and artifact repositories:


ai-frontend: contains the project website static files index.html and app.js

The files reside in the static folder. Any change pushed to the master branch triggers the repo's GitHub CI/CD Action, which copies the static files to the S3 bucket hosting the project website on AWS

ai-automation: contains the Serverless Framework configuration file serverless.yml and the Lambda function code used to deploy the Lambda functions, their triggering events and the required infrastructure resources (DynamoDB, API Gateway and S3) to AWS

Any change pushed to the master branch triggers the repo's GitHub CI/CD Action, which runs a Serverless deployment of the changes to AWS

ai-backend: contains the code for building a Flask Docker image, pushing it to a container registry (originally ECR, now DockerHub) and deploying a new task definition to ECS

Any change pushed to the master branch triggers the repo's GitHub CI/CD Action, which applies and deploys the changes to AWS
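A minimal Python sketch of the "deploy a new task definition" step, assuming the workflow follows the common render-then-register pattern: take the current task definition, swap in the newly pushed image, and strip the read-only fields that `DescribeTaskDefinition` returns but `RegisterTaskDefinition` does not accept. The family, container name and image tag in the tests are hypothetical; the actual ai-backend workflow may do this differently.

```python
import copy
from typing import Any, Dict

# Fields present in a DescribeTaskDefinition response that must be removed
# before the definition can be re-registered as a new revision.
READ_ONLY_FIELDS = (
    "taskDefinitionArn", "revision", "status", "requiresAttributes",
    "compatibilities", "registeredAt", "registeredBy", "deregisteredAt",
)


def render_task_definition(task_def: Dict[str, Any], container_name: str,
                           image: str) -> Dict[str, Any]:
    """Return a copy of task_def with container_name pointing at the new image."""
    rendered = copy.deepcopy(task_def)  # never mutate the caller's definition
    for field in READ_ONLY_FIELDS:
        rendered.pop(field, None)
    for container in rendered.get("containerDefinitions", []):
        if container.get("name") == container_name:
            container["image"] = image  # e.g. the tag just pushed to the registry
            return rendered
    raise ValueError(f"container {container_name!r} not found in task definition")
```

With boto3, the rendered dict could be passed to `ecs.register_task_definition(**rendered)`, and the returned task definition ARN to `ecs.update_service(cluster=..., service=..., taskDefinition=...)`; the cluster and service names are not given in this README.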

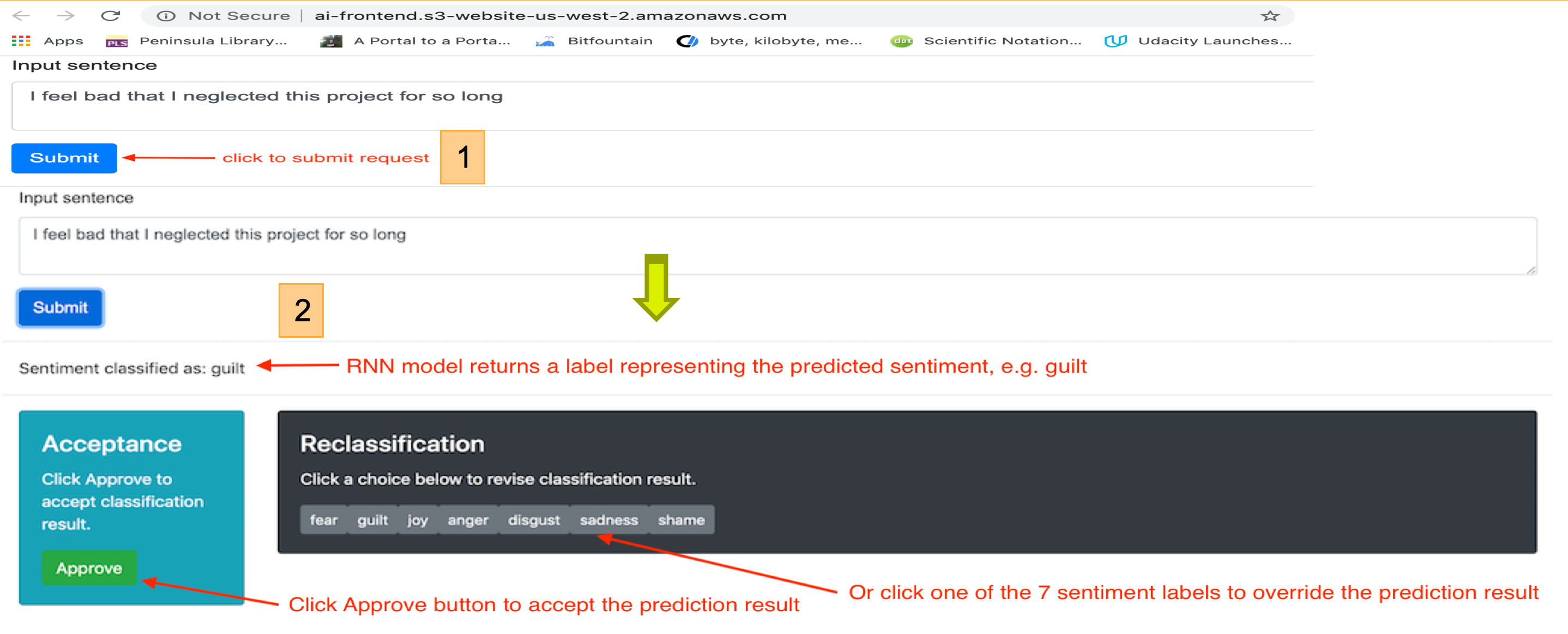

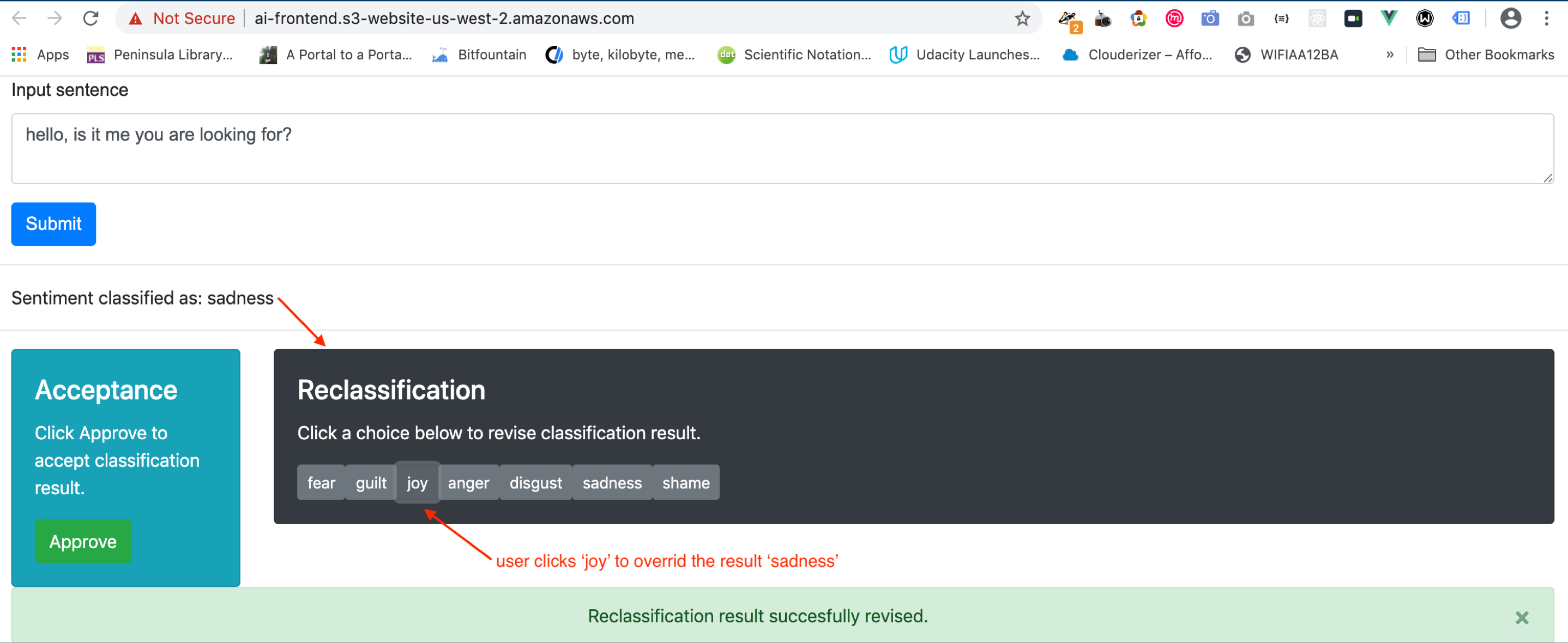

The user enters text in the web UI and clicks the Submit button to get a sentiment prediction

The RNN model returns a label (e.g. guilt) representing the predicted sentiment, which the user can approve or revise

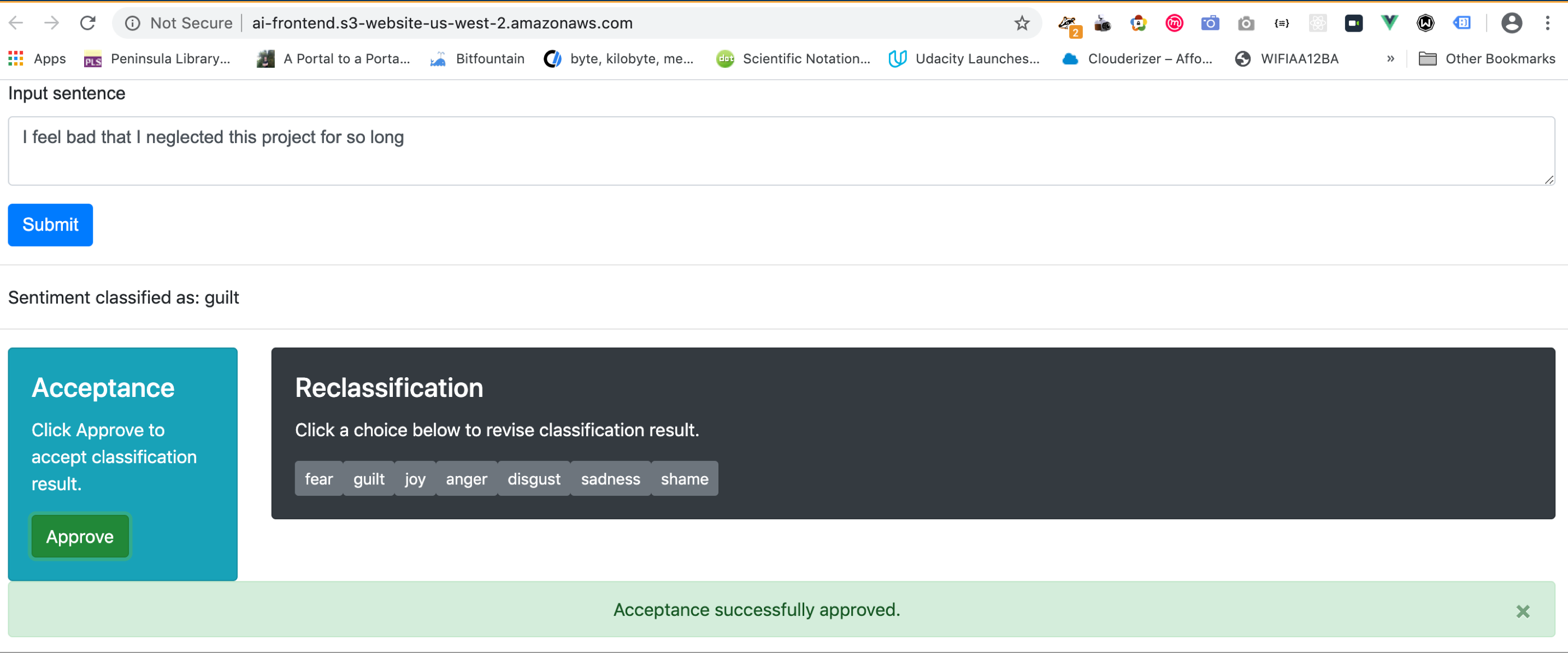

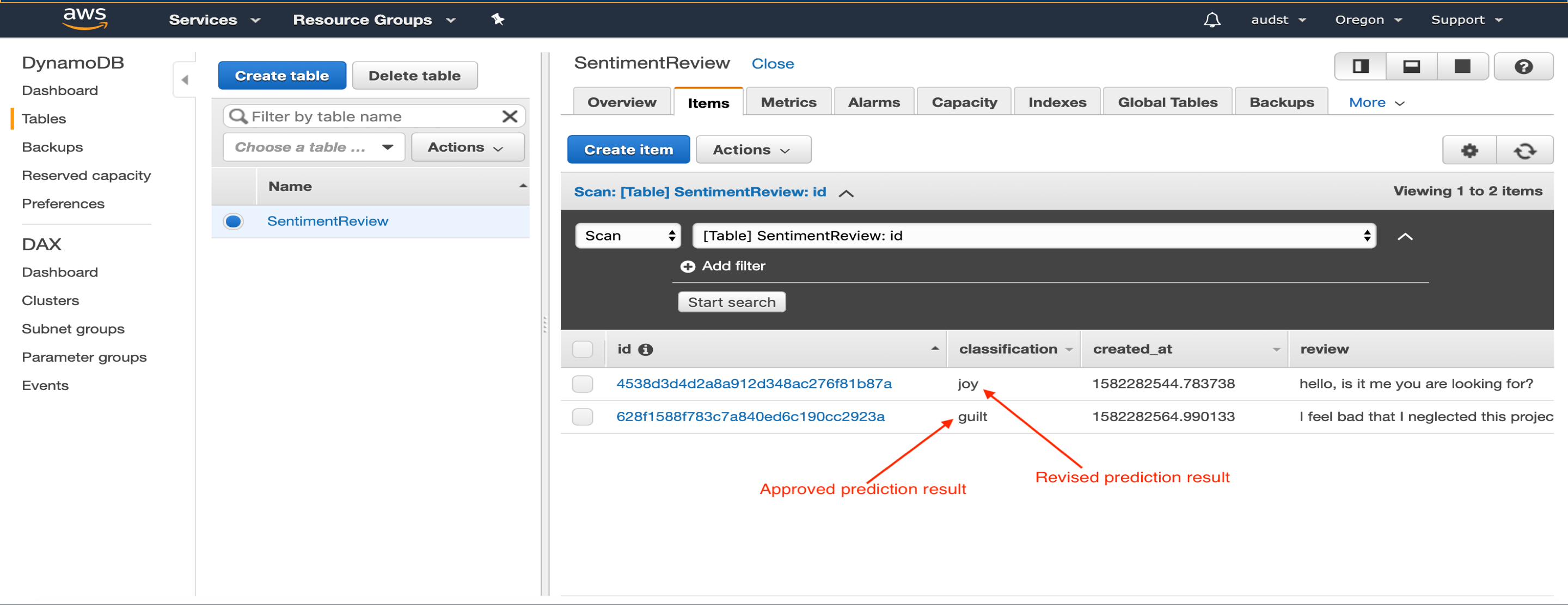
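The README does not show the model's output layer. A common pattern for an RNN classifier, assumed here, is one score (logit) per label, converted to probabilities with softmax, with the highest-probability label returned. The label list and its order are also assumptions: the README names only guilt, joy and sadness and says there are seven labels, so this sketch uses the seven ISEAR emotion classes as a stand-in.

```python
import math
from typing import Dict, List, Sequence, Tuple

# ASSUMPTION: label set and order (the seven ISEAR emotion classes).
LABELS = ("joy", "fear", "anger", "sadness", "disgust", "shame", "guilt")


def softmax(logits: Sequence[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_label(logits: Sequence[float],
                  labels: Sequence[str] = LABELS) -> Tuple[str, Dict[str, float]]:
    """Map one logit per label to (best label, probability per label)."""
    if len(logits) != len(labels):
        raise ValueError("expected one logit per label")
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return labels[best], dict(zip(labels, probs))
```

Returning the full probability map alongside the label would also let the UI show how confident the model was before the user approves or revises the result.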

- The user clicks the Approve button to accept the prediction result. The result is recorded in DynamoDB

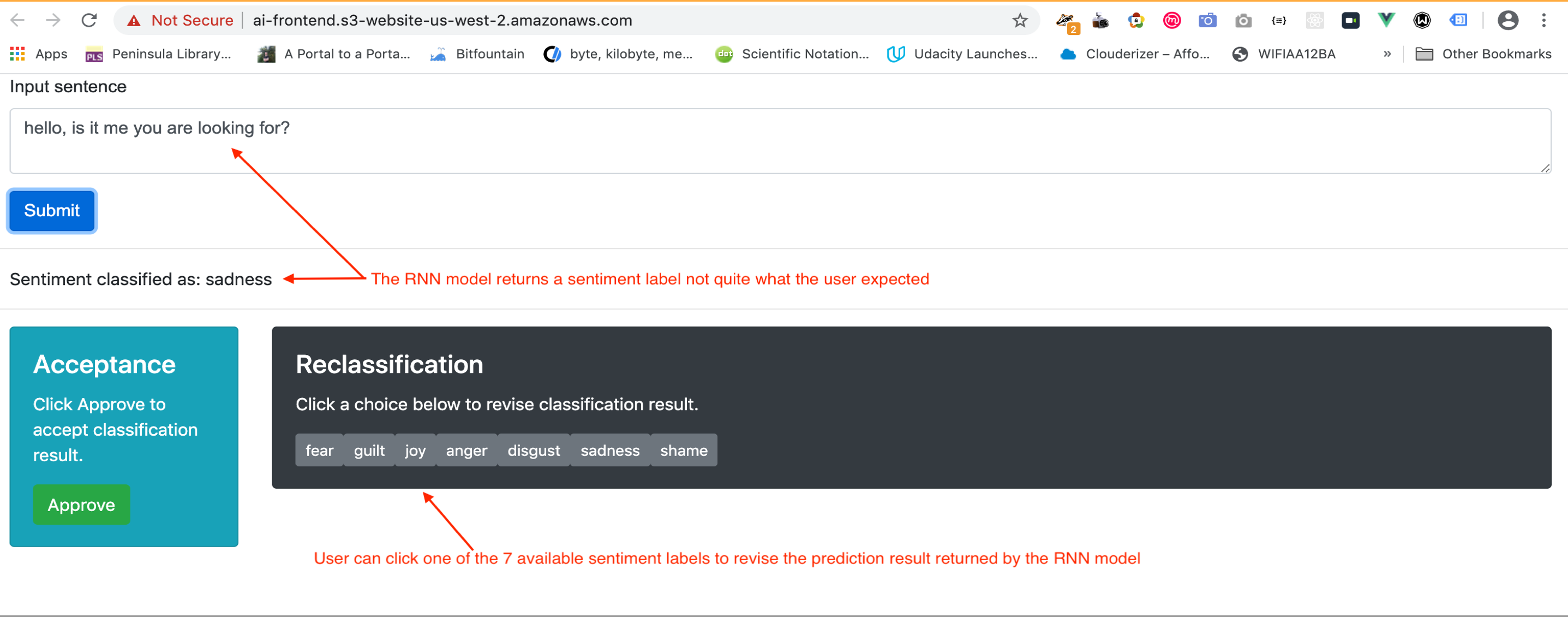

- If the RNN model's prediction does not match the user's expectation, the user can click one of the seven available labels to override it

- Here the user clicks the 'joy' label to override the returned result 'sadness'. The revised result is recorded in DynamoDB

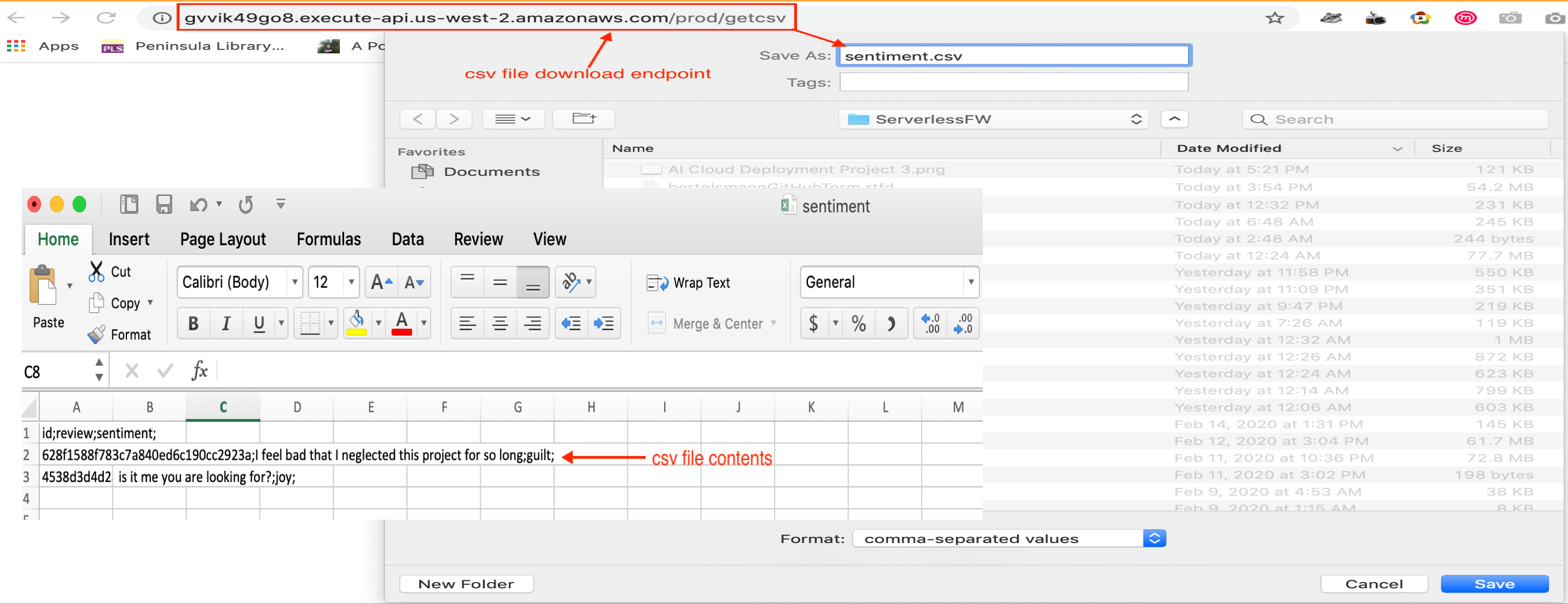
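A minimal sketch of a Lambda function behind API Gateway that records an approved or revised result in DynamoDB. Only the approve/revise behaviour and the use of DynamoDB come from this README; the request shape (`text`, `predicted`, optional `label`), the item attribute names and the seven-label set (assumed to be the ISEAR emotion classes) are hypothetical. The table is injected so the handler can be tested without AWS; in Lambda it would be `boto3.resource("dynamodb").Table(<table name>)`.

```python
import json
import uuid
from datetime import datetime, timezone
from typing import Any, Callable, Dict

# ASSUMPTION: the seven labels; the README names only guilt, joy and sadness.
LABELS = frozenset({"joy", "fear", "anger", "sadness", "disgust", "shame", "guilt"})


def _response(status: int, body: Dict[str, Any]) -> Dict[str, Any]:
    # Response shape expected by an API Gateway Lambda proxy integration.
    return {"statusCode": status,
            "headers": {"Content-Type": "application/json"},
            "body": json.dumps(body)}


def make_handler(table) -> Callable[[Dict[str, Any], Any], Dict[str, Any]]:
    """`table` is anything with put_item(Item=...), e.g. a boto3 DynamoDB Table."""
    def handler(event: Dict[str, Any], context: Any) -> Dict[str, Any]:
        try:
            payload = json.loads(event.get("body") or "{}")
        except json.JSONDecodeError:
            return _response(400, {"error": "body must be JSON"})
        if not isinstance(payload, dict):
            return _response(400, {"error": "body must be a JSON object"})
        text = payload.get("text")
        predicted = payload.get("predicted")
        final = payload.get("label", predicted)  # no override means "approved"
        if not text or predicted not in LABELS or final not in LABELS:
            return _response(400, {"error": "text, predicted and a valid label are required"})
        item = {
            "id": str(uuid.uuid4()),
            "text": text,
            "predicted": predicted,
            "label": final,
            "status": "approved" if final == predicted else "revised",
            "createdAt": datetime.now(timezone.utc).isoformat(),
        }
        table.put_item(Item=item)
        return _response(201, item)
    return handler
```

Storing both the model's prediction and the final label keeps the original output alongside the user's correction, which is what makes the exported data useful for retraining.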

- All approved and revised prediction results are stored in DynamoDB. The data can be exported from the web UI to a CSV file, which serves as a new dataset for retraining the RNN sentiment prediction model

- The user accesses the CSV download endpoint to download the prediction results stored in DynamoDB

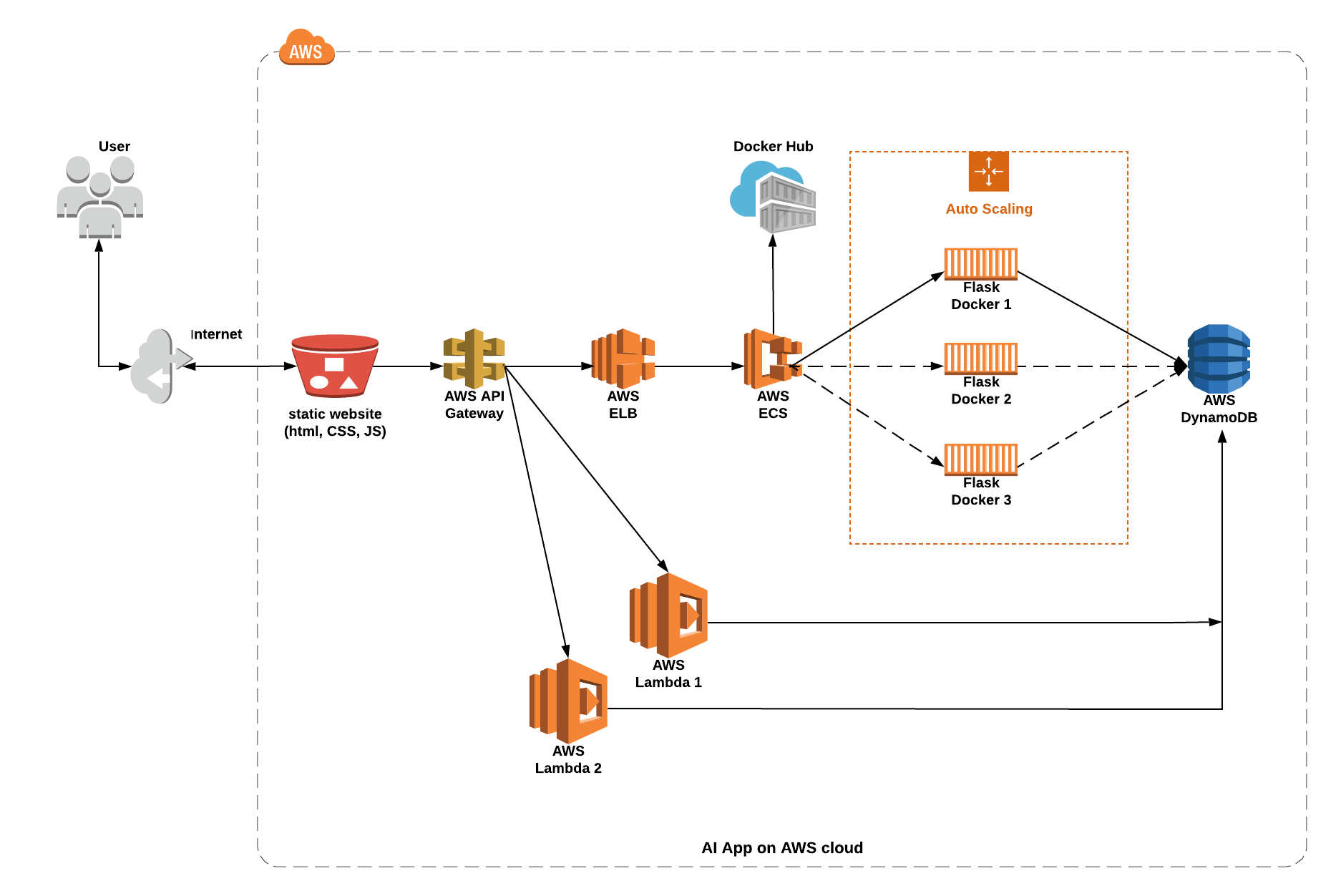
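A sketch of the CSV download endpoint as a Lambda handler: it scans the whole table, following DynamoDB's `LastEvaluatedKey` pagination (a single `Scan` call returns at most 1 MB of data), and writes the items with Python's `csv` module. The column names and the file name `predictions.csv` are hypothetical, and the table is injected so the handler can be tested without AWS.

```python
import csv
import io
from typing import Any, Dict, Iterable, List

# Hypothetical attribute names of the stored prediction items.
FIELDS = ["id", "text", "predicted", "label", "status", "createdAt"]


def scan_all(table) -> List[Dict[str, Any]]:
    """Read every item, following Scan pagination via ExclusiveStartKey."""
    items: List[Dict[str, Any]] = []
    kwargs: Dict[str, Any] = {}
    while True:
        page = table.scan(**kwargs)
        items.extend(page.get("Items", []))
        last_key = page.get("LastEvaluatedKey")
        if not last_key:
            return items
        kwargs["ExclusiveStartKey"] = last_key


def items_to_csv(items: Iterable[Dict[str, Any]]) -> str:
    buf = io.StringIO()
    # Missing attributes become empty cells; unknown attributes are ignored.
    writer = csv.DictWriter(buf, fieldnames=FIELDS, extrasaction="ignore")
    writer.writeheader()
    for item in items:
        writer.writerow(item)
    return buf.getvalue()


def make_export_handler(table):
    def handler(event, context):
        return {
            "statusCode": 200,
            "headers": {
                "Content-Type": "text/csv",
                # Prompts the browser to download instead of displaying the file.
                "Content-Disposition": 'attachment; filename="predictions.csv"',
            },
            "body": items_to_csv(scan_all(table)),
        }
    return handler
```

A full scan is fine for a demo-sized table; for large tables, an export job writing to S3 would avoid Lambda's time and payload limits.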

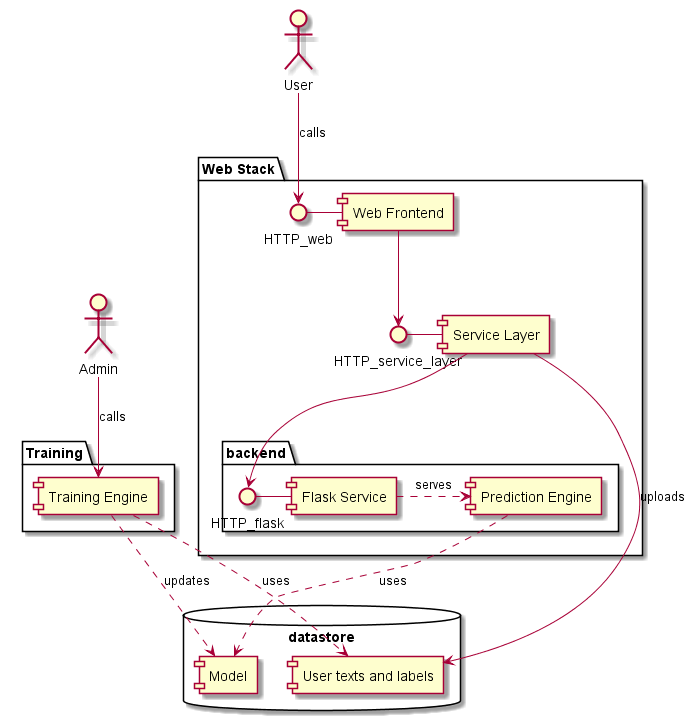

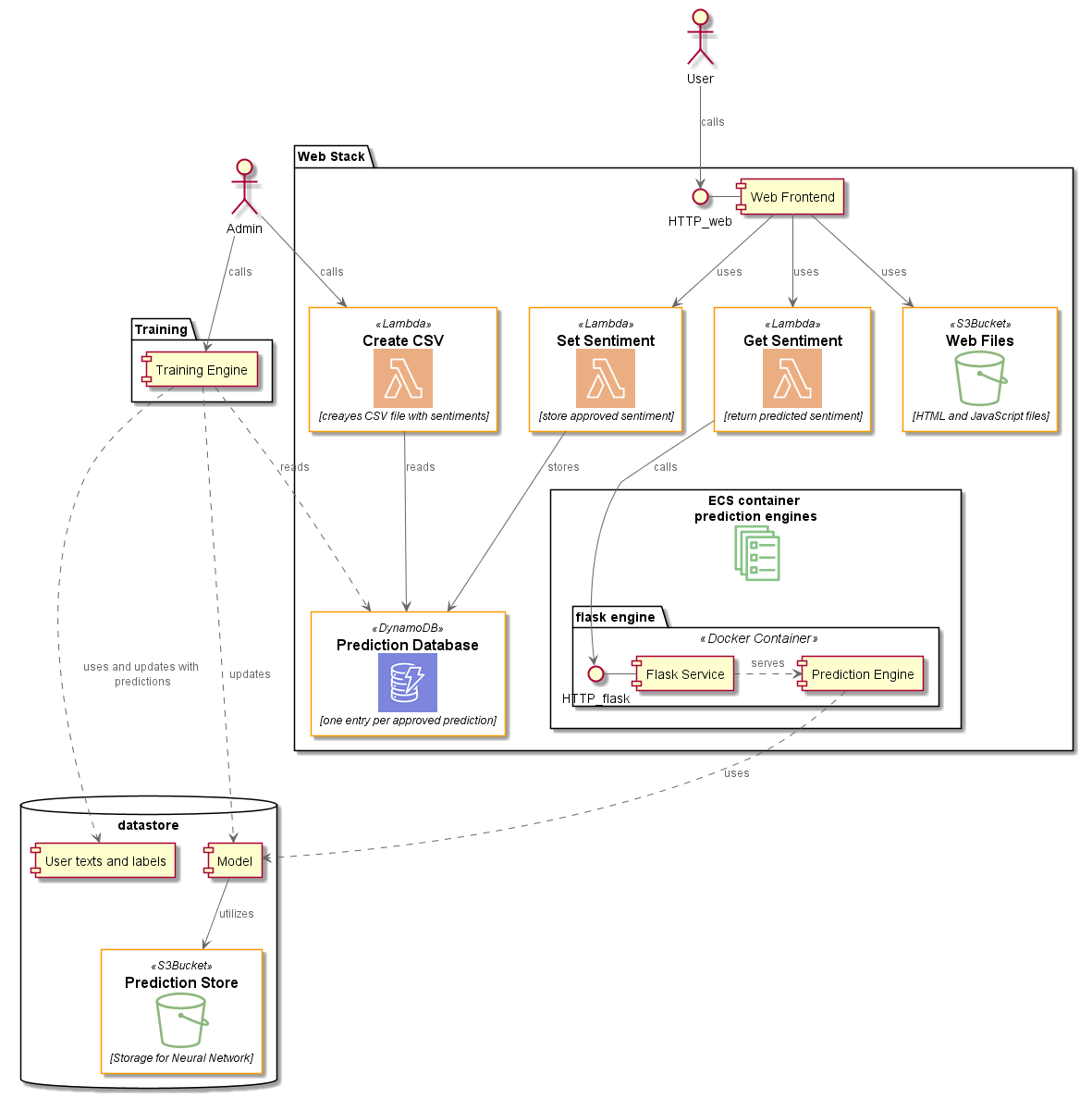

The project transforms the original infrastructure of an AI sentiment prediction app (powered by an RNN model) into an AWS cloud deployable infrastructure.

Project Goals: implement various AWS cloud stack concepts covered in Phase I of the Udacity | Bertelsmann Technical Scholarship Cloud Track Challenge (S3, Lambda, Elastic Load Balancer, Auto Scaling Group, CloudFormation and IAM), as well as advanced concepts such as the Serverless Framework, CI/CD, Docker, API Gateway, ECS, DockerHub, DynamoDB and microservices.

Project Team: an international team with 3 members from Phase I of the Cloud Track Challenge:

- Adrik S (France)

- Audrey ST (USA)

- Christopher R (Germany)

Website: 🌟 AI Sentiment Prediction App on AWS 🌟

The original app consists of:

a Flask app backend hosting an RNN sentiment prediction model

a website with a Vue web UI powered by the Spring Boot framework

a datastore built on a MySQL database

It follows a microservices architecture, which makes the infrastructure and its components easy to transform into an AWS cloud deployable infrastructure. In the AWS version:

the Flask backend runs in a Docker container on AWS ECS

the website is hosted in an S3 bucket, backed by AWS Lambda functions

the MySQL datastore is replaced by lightweight NoSQL DynamoDB

The new AWS cloud infrastructure comes with these benefits:

no costly specialist support needed for Spring Boot, MySQL, or infrastructure resource deployment and provisioning

built-in automatic failover and demand-driven infrastructure scaling

predictable operational performance with minimal effort, and an improved overall user experience

Phase I concepts implemented, with the corresponding Phase I lessons:

GitHub: Lessons 1-12

AWS S3 Static Website: Lessons 14, 23

AWS Lambda function: Lesson 13

AWS Elastic Load Balancer: Lessons 16, 20

AWS Auto Scaling Group: Lesson 20

AWS CloudFormation: Lesson 19

AWS IAM: Lesson 15

Advanced concepts implemented:

GitHub CI/CD workflow pipelines

AWS API Gateway

AWS ECS (Elastic Container Service)

DockerHub (container image registry)

Flask Docker containers (scaling between 1 and 3 instances)

AWS DynamoDB

Serverless Framework

Microservices

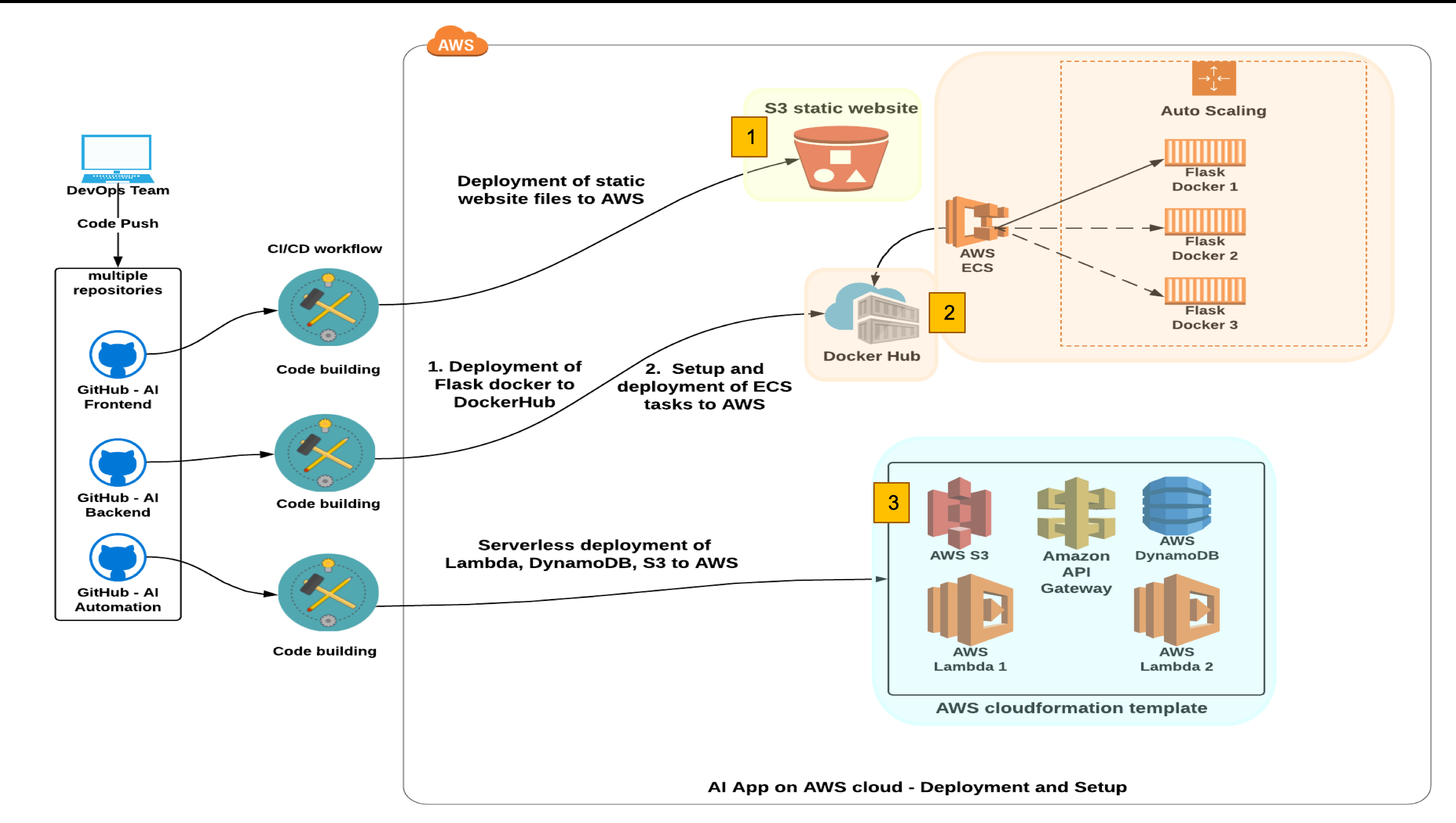

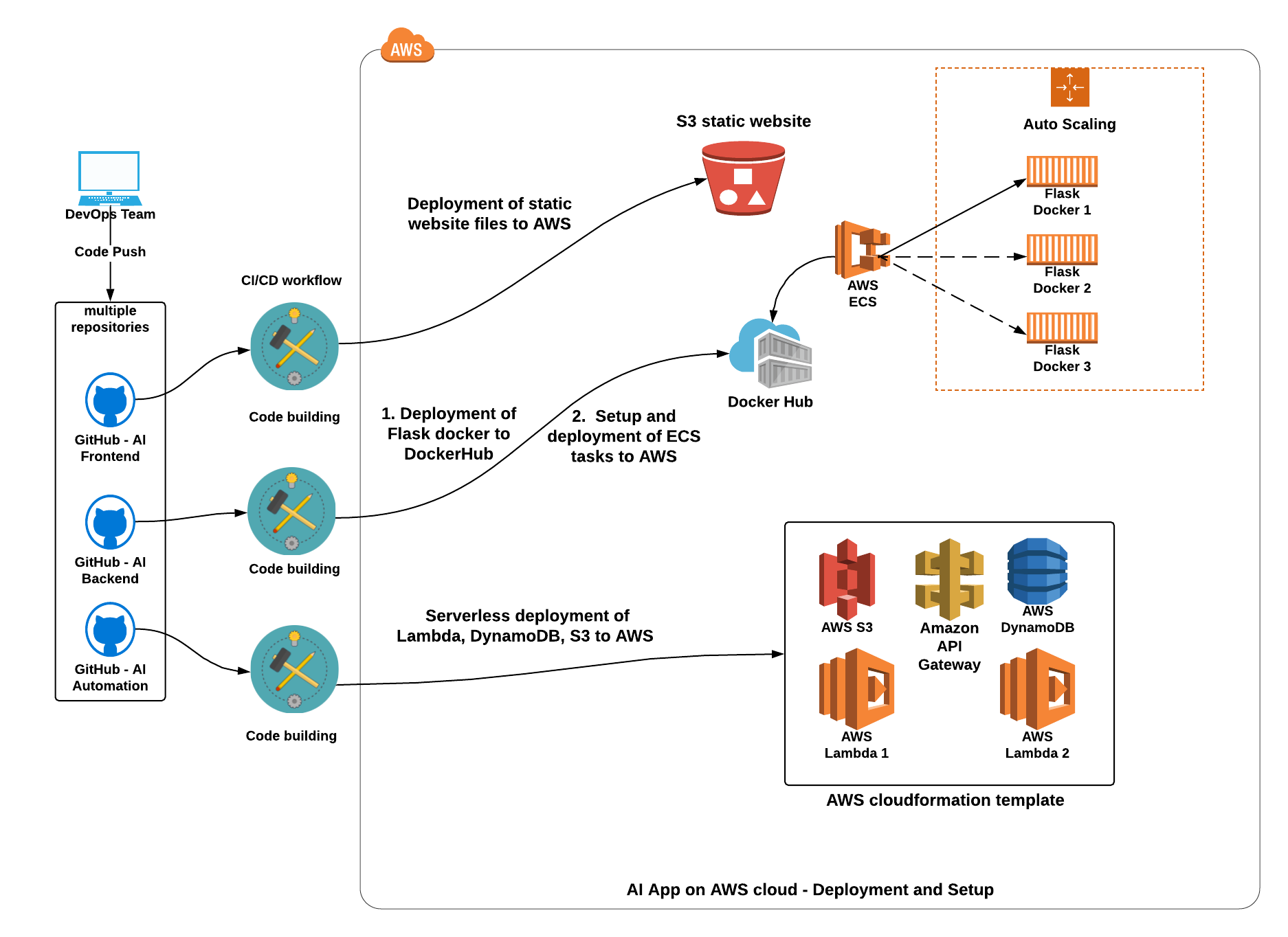

The DevOps team merges feature branches into the master branch and pushes to one of the three remote repositories

Code build - three build paths:

If the push is to the ai-frontend repo, the CI/CD Action Upload Website automatically uploads the updated static files (index.html, app.js) to the AWS S3 website bucket udacity-ai-frontend

If the push is to the ai-backend repo, the CI/CD Action Deploy to Amazon ECS automatically builds a new Flask container image, pushes it to DockerHub, then deploys a new ECS task definition to start the containers on AWS

If the push is to the ai-automation repo, the CI/CD Action automatically runs a Serverless deployment from the serverless.yml configuration file, deploying the Lambda functions, their triggering events and the required infrastructure resources (DynamoDB, API Gateway and S3) to AWS and rebuilding the website
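The Upload Website step could look roughly like this Python sketch: walk the static folder, derive an S3 key and a Content-Type for each file (so browsers render index.html and app.js correctly), and upload each one. The uploader is passed in as a function so the logic can be tested offline; the real GitHub Action may instead use the AWS CLI's `aws s3 sync`. Unlike `sync --delete`, this sketch does not remove files that were deleted from the repo.

```python
import mimetypes
from pathlib import Path
from typing import Callable, List, Tuple

# Bucket name as given in the ai-frontend build path.
BUCKET = "udacity-ai-frontend"

# Injected uploader signature: (local_path, bucket, key, content_type).
Uploader = Callable[[str, str, str, str], None]


def build_upload_plan(static_dir: str) -> List[Tuple[str, str, str]]:
    """Return (local_path, s3_key, content_type) for every file under static_dir."""
    root = Path(static_dir)
    plan = []
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue
        # Keys are relative to the static folder: static/index.html -> "index.html".
        key = path.relative_to(root).as_posix()
        # A correct Content-Type lets browsers render HTML/JS served from S3.
        content_type, _ = mimetypes.guess_type(path.name)
        plan.append((str(path), key, content_type or "application/octet-stream"))
    return plan


def sync_static_folder(static_dir: str, upload: Uploader, bucket: str = BUCKET) -> int:
    """Upload every planned file and return how many were uploaded."""
    plan = build_upload_plan(static_dir)
    for local_path, key, content_type in plan:
        upload(local_path, bucket, key, content_type)
    return len(plan)
```

With boto3, the uploader could wrap `upload_file`: given `s3 = boto3.client("s3")`, call `sync_static_folder("static", lambda p, b, k, ct: s3.upload_file(p, b, k, ExtraArgs={"ContentType": ct}))`.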

RNN Sentiment Prediction App Operation

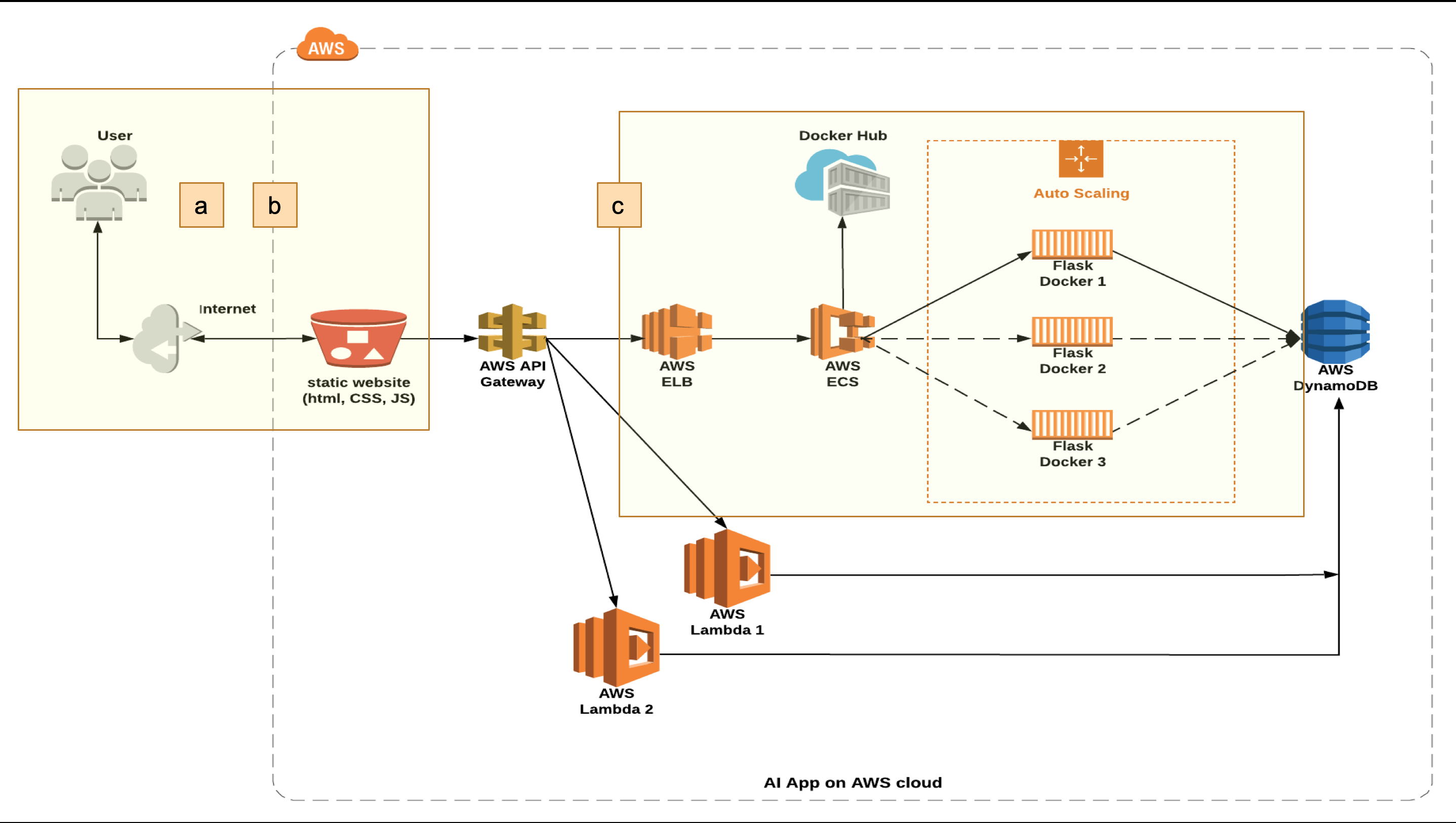

a. The user submits a sentiment prediction request through the website UI and receives a result:

- If the user approves the prediction result, the approved result is written to DynamoDB
- If the user revises the prediction result, the revised result is written to DynamoDB

b. The user downloads the prediction results stored in DynamoDB as a CSV file, for use as a new dataset for retraining the RNN model

c. Depending on website traffic, AWS ECS and the Auto Scaling group scale out to as many as 3 Flask container instances to distribute the workload and keep the app responsive
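A simplified model of the target tracking arithmetic behind scaling to at most 3 Flask containers, assuming a CPU utilization target (the README does not name the metric or its target value): the desired task count grows in proportion to how far the metric is above target, then is clamped to the 1 to 3 range used in this project. Real ECS Service Auto Scaling also applies cooldowns and scales in more cautiously, so treat this as an illustration, not AWS's exact algorithm.

```python
import math


def desired_task_count(current: int, metric: float, target: float,
                       min_tasks: int = 1, max_tasks: int = 3) -> int:
    """Proportional target tracking, clamped to [min_tasks, max_tasks]."""
    if current < 1 or target <= 0:
        raise ValueError("current must be >= 1 and target must be positive")
    # E.g. 1 task at 90% CPU with a 50% target -> ceil(1.8) = 2 tasks.
    proposed = math.ceil(current * metric / target)
    return max(min_tasks, min(max_tasks, proposed))
```

For example, `desired_task_count(2, 95, 50)` proposes 4 tasks but is capped at 3, and `desired_task_count(3, 10, 50)` scales back in to 1.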

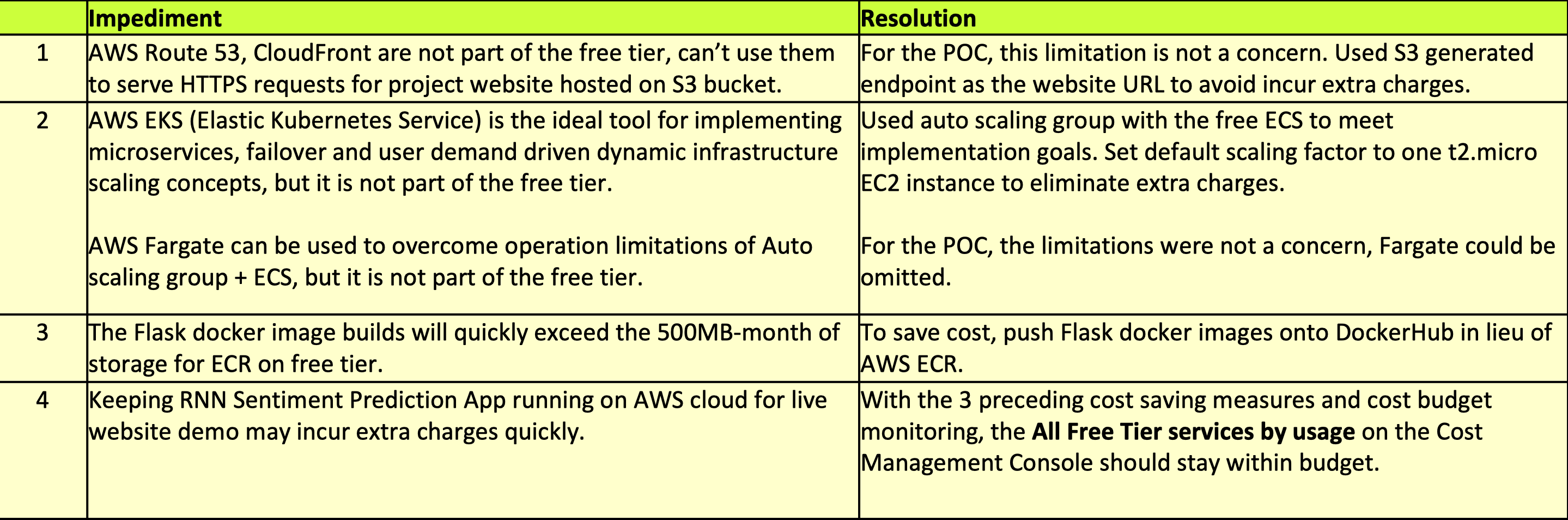

This is a POC (proof of concept) project the team put together to implement and practice basic cloud DevOps concepts from the Phase I Challenge and to experiment with advanced concepts nominated by team members.

The project had no funding and used an AWS Free Tier account for the POC. The impediments encountered during implementation and their resolutions are listed below:

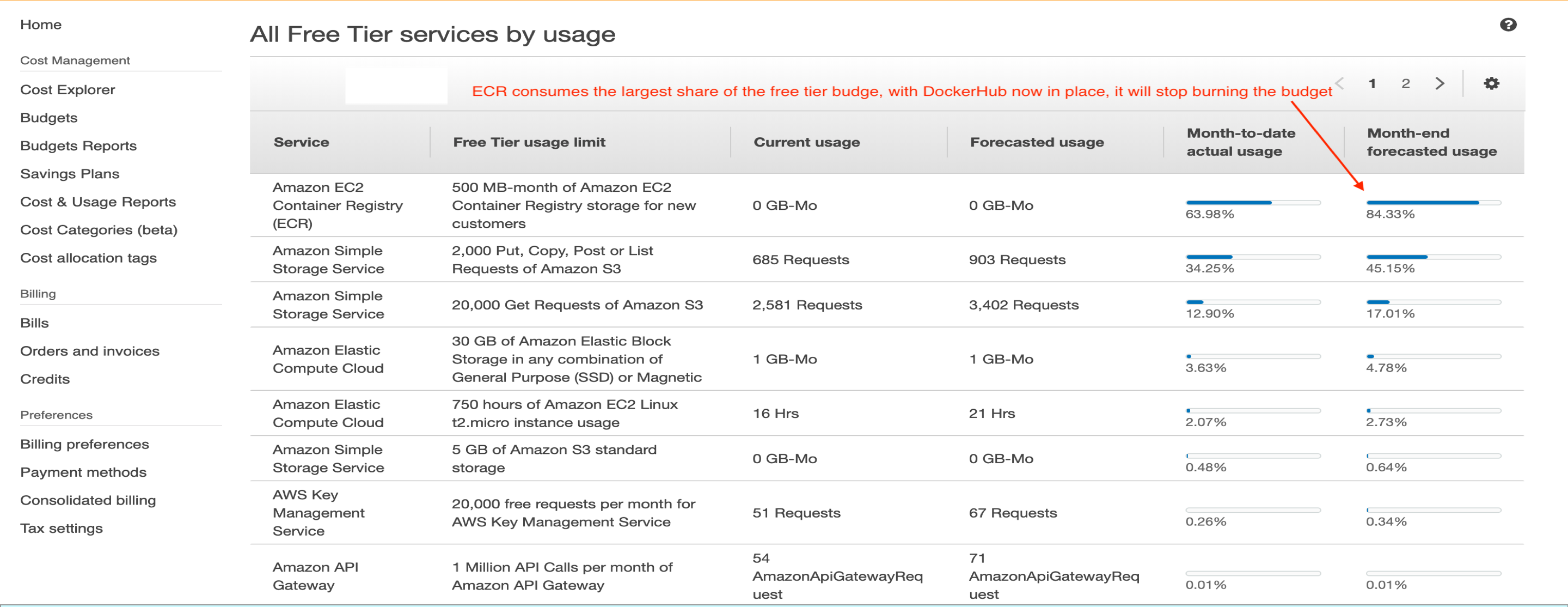

The "All Free Tier services by usage" report in the Cost Management console shows that ECR is the largest consumer of the Free Tier budget. With DockerHub in its place, ECR no longer burns through the budget, so the AI Sentiment Prediction App on AWS can stay online for live demos