
NOS is a fast and flexible PyTorch inference server that runs on any cloud or AI HW.

- 👩‍💻 Easy-to-use: Built for PyTorch and designed to optimize, serve and auto-scale PyTorch models in production without compromising on developer experience.

- 🥷 Multi-modal & Multi-model: Serve multiple foundational AI models (LLMs, Diffusion, Embeddings, Speech-to-Text and Object Detection) simultaneously, in a single server.

- ⚙️ HW-aware Runtime: Deploy PyTorch models effortlessly on modern AI accelerators (NVIDIA GPUs, AWS Inferentia2, AMD - coming soon, and even CPUs).

- ☁️ Cloud-agnostic Containers: Run on any cloud (AWS, GCP, Azure, Lambda Labs, On-Prem) with our ready-to-use inference server containers.

- [Feb 2024] ✍️ [blog] Introducing the NOS Inferentia2 (inf2) runtime.

- [Jan 2024] ✍️ [blog] Serving LLMs on a budget with SkyServe.

- [Jan 2024] 📚 [docs] NOS x SkyPilot Integration page!

- [Jan 2024] ✍️ [blog] The Getting started with NOS tutorials are now available!

- [Dec 2023] 🛝 [repo] We open-sourced the NOS playground to help you get started with more examples built on NOS!

We highly recommend starting with our quickstart guide. To install the NOS client, run the following commands:

    conda create -n nos python=3.8 -y
    conda activate nos
    pip install torch-nos

Once the client is installed, you can start the NOS server via the NOS serve CLI. This automatically detects your local environment, downloads the Docker runtime image and spins up the NOS server:

    nos serve up --http --logging-level INFO

You are now ready to run your first inference request with NOS! You can run any of the following commands to try things out. You can set the logging level to DEBUG if you want more detailed information from the server.
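Since the server may take a while to come up (the runtime image has to be downloaded first), it can help to wait for it before sending requests. The following is a minimal sketch of a readiness poll using only the Python standard library. The helper names and the `/v1/health` path are assumptions made for illustration, not something this README confirms; substitute whatever URL your server actually answers on.

```python
import time
import urllib.request
from typing import Callable


def http_probe(url: str, timeout: float = 2.0) -> bool:
    """Return True if a GET request to `url` answers with HTTP 200."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status == 200
    except OSError:
        # Covers connection refused, timeouts and HTTP error statuses
        # (urllib.error.URLError is a subclass of OSError).
        return False


def wait_until_ready(probe: Callable[[], bool], timeout: float = 60.0,
                     interval: float = 1.0) -> bool:
    """Call `probe` until it returns True or `timeout` seconds have elapsed."""
    deadline = time.monotonic() + timeout
    while True:
        if probe():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)


# Hypothetical usage (the health path is an assumption):
# ready = wait_until_ready(lambda: http_probe("http://localhost:8000/v1/health"))
```

Passing the probe in as a callable keeps the retry loop independent of how readiness is actually checked, so the same loop works with a different endpoint or a gRPC-based check.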

NOS provides an OpenAI-compatible server with streaming support so that you can connect your favorite OpenAI-compatible LLM client to talk to NOS.
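To illustrate what an OpenAI-compatible streaming client has to do with the response, here is a small sketch that extracts the generated text from a stream of server-sent-event lines. It assumes the usual OpenAI streaming conventions (`data: {...}` lines carrying `choices[].delta.content`, terminated by `data: [DONE]`); the function name `iter_stream_text` is hypothetical and not part of NOS.

```python
import json
from typing import Iterable, Iterator


def iter_stream_text(lines: Iterable[str]) -> Iterator[str]:
    """Yield text fragments from OpenAI-style `data: {...}` stream lines."""
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank separator lines and anything that is not a data event
        payload = line[len("data:"):].strip()
        if payload == "[DONE]":
            break  # end-of-stream sentinel
        chunk = json.loads(payload)
        for choice in chunk.get("choices", []):
            text = choice.get("delta", {}).get("content")
            if text:
                yield text
```

In practice the lines would come from the HTTP response body of a streaming chat-completions request, decoded as UTF-8 and read line by line.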

API / Usage

gRPC API ⚡

    from nos.client import Client

    client = Client()
    model = client.Module("TinyLlama/TinyLlama-1.1B-Chat-v1.0")
    response = model.chat(message="Tell me a story of 1000 words with emojis", _stream=True)

REST API

    curl \
      -X POST http://localhost:8000/v1/chat/completions \
      -H "Content-Type: application/json" \
      -d '{
        "model": "TinyLlama/TinyLlama-1.1B-Chat-v1.0",
        "messages": [{
          "role": "user",
          "content": "Tell me a story of 1000 words with emojis"
        }],
        "temperature": 0.7,
        "stream": true
      }'

Build MidJourney Discord bots in seconds.

API / Usage

gRPC API ⚡

from nos.client import Client

client = Client()

sdxl = client.Module("stabilityai/stable-diffusion-xl-base-1-0")

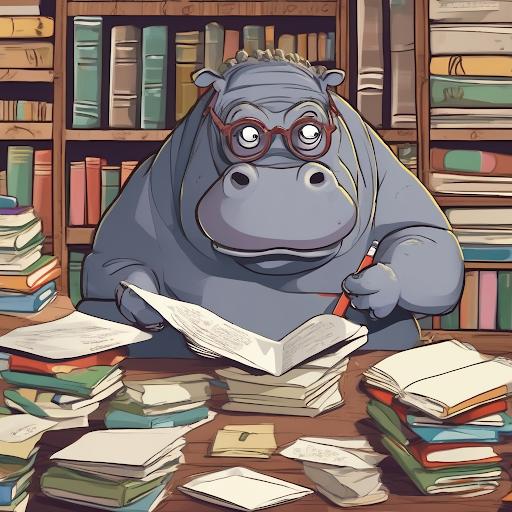

image, = sdxl(prompts=["hippo with glasses in a library, cartoon styling"],

width=1024, height=1024, num_images=1)REST API

curl \

-X POST http://localhost:8000/v1/infer \

-H 'Content-Type: application/json' \

-d '{

"model_id": "stabilityai/stable-diffusion-xl-base-1-0",

"inputs": {

"prompts": ["hippo with glasses in a library, cartoon styling"],

"width": 1024, "height": 1024,

"num_images": 1

}

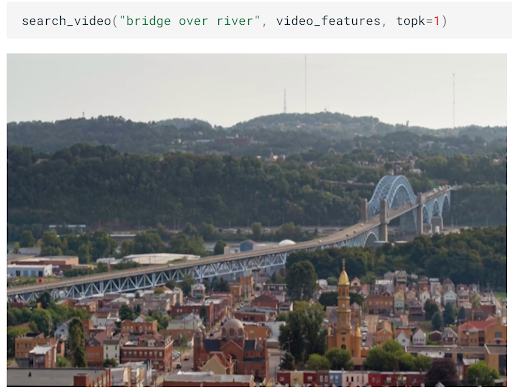

}'Build scalable semantic search of images/videos in minutes.
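Semantic search of this kind typically comes down to comparing embedding vectors. As a rough sketch of the ranking step only, the snippet below scores stored vectors against a query vector with cosine similarity. It assumes embeddings are available as plain lists of floats (the actual return type of the embedding calls is not specified here), and the helper names are hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_by_similarity(query: Sequence[float],
                       items: Dict[str, Sequence[float]]) -> List[Tuple[str, float]]:
    """Return (name, score) pairs sorted from most to least similar to `query`."""
    scored = [(name, cosine_similarity(query, vec)) for name, vec in items.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)
```

For larger collections a vector index would usually replace this linear scan; the scoring idea stays the same.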

API / Usage

gRPC API ⚡

    from nos.client import Client

    client = Client()
    clip = client.Module("openai/clip-vit-base-patch32")
    txt_vec = clip.encode_text(texts=["fox jumped over the moon"])

REST API

curl \

-X POST http://localhost:8000/v1/infer \

-H 'Content-Type: application/json' \

-d '{

"model_id": "openai/clip-vit-base-patch32",

"method": "encode_text",

"inputs": {

"texts": ["fox jumped over the moon"]

}

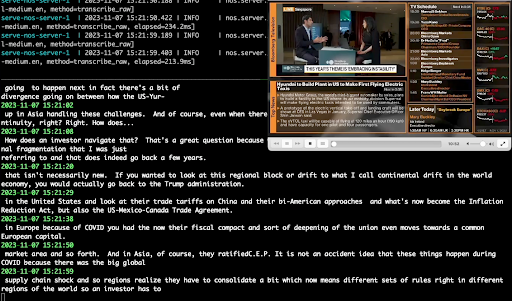

}'Perform real-time audio transcription using Whisper.

API / Usage

gRPC API ⚡

    from pathlib import Path

    from nos.client import Client

    client = Client()
    model = client.Module("openai/whisper-small.en")

    # Upload the local audio file, then transcribe the remote copy
    with client.UploadFile(Path("audio.wav")) as remote_path:
        response = model(path=remote_path)
    # {"chunks": ...}

REST API

    curl \
      -X POST http://localhost:8000/v1/infer/file \
      -H 'accept: application/json' \
      -H 'Content-Type: multipart/form-data' \
      -F 'model_id=openai/whisper-small.en' \
      -F 'file=@audio.wav'

Run classical computer-vision tasks in 2 lines of code.

API / Usage

gRPC API ⚡

    from PIL import Image

    from nos.client import Client

    client = Client()
    model = client.Module("yolox/medium")
    response = model(images=[Image.open("image.jpg")])

REST API

    curl \
      -X POST http://localhost:8000/v1/infer/file \
      -H 'accept: application/json' \
      -H 'Content-Type: multipart/form-data' \
      -F 'model_id=yolox/medium' \
      -F 'file=@image.jpg'

Want to run models not supported by NOS? You can easily add your own models following the examples in the NOS Playground.

This project is licensed under the Apache-2.0 License.

NOS collects anonymous usage data using Sentry. This is used to help us understand how the community is using NOS and to help us prioritize features. You can opt-out of telemetry by setting NOS_TELEMETRY_ENABLED=0.
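If you launch the server from a script, one way to apply the opt-out is to set the variable in the environment passed to the launching process. The sketch below assumes the variable is read from the environment of the process that starts the server, which this README does not spell out; the helper name `env_without_telemetry` is hypothetical.

```python
import os
from typing import Dict, Mapping, Optional


def env_without_telemetry(base: Optional[Mapping[str, str]] = None) -> Dict[str, str]:
    """Return a copy of `base` (default: the current environment) with NOS telemetry disabled."""
    env = dict(os.environ if base is None else base)
    env["NOS_TELEMETRY_ENABLED"] = "0"
    return env


# Hypothetical usage (requires the `nos` CLI to be installed):
# import subprocess
# subprocess.run(["nos", "serve", "up", "--http"], env=env_without_telemetry(), check=True)
```

Working on a copy leaves the calling process's own environment untouched, so the setting only affects the launched command.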

We welcome contributions! Please see our contributing guide for more information.

- 💬 Send us an email at support@autonomi.ai or join our Discord for help.

- 📣 Follow us on Twitter and LinkedIn to keep up to date on our products.
