This is a TensorFlow-based implementation of our paper, Exploration via Flow-Based Intrinsic Rewards.

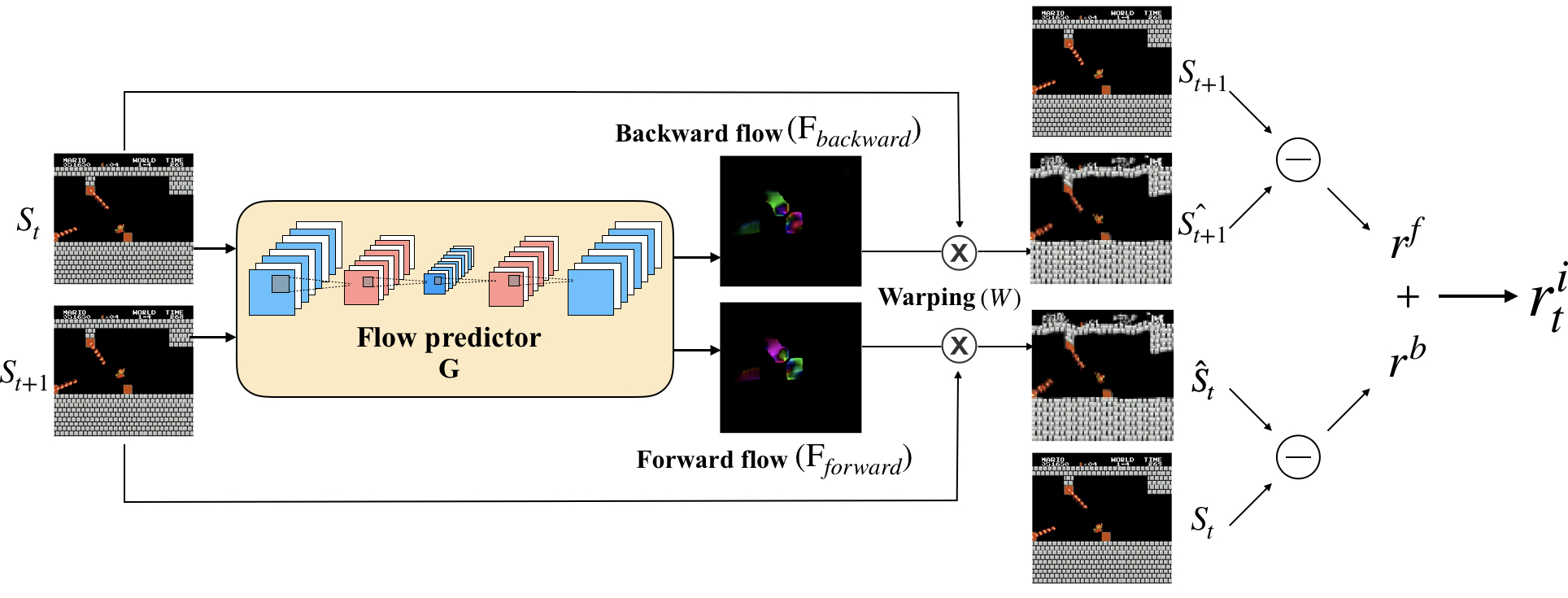

The flow-based intrinsic curiosity module (FICM) evaluates the novelty of observations. FICM generates intrinsic rewards from the prediction errors of optical flow estimation, because the rapidly changing parts of consecutive frames usually serve as important signals.
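To make the idea concrete, here is a minimal pure-Python sketch of a flow-based intrinsic reward. It is not this repo's TensorFlow code. The flow fields are assumed to come from some flow predictor that is not shown. `warp`, `mse`, and `flow_intrinsic_reward` are hypothetical names. The symmetric forward/backward error and the scale `beta` are assumptions of this sketch. Nearest-neighbour sampling stands in for the bilinear warping a real implementation would more likely use. When the predicted flow explains the motion between two frames, the reconstruction error (and so the reward) is small. Motion the predictor cannot explain yields a larger reward.

```python
from typing import List, Tuple

Frame = List[List[float]]               # grayscale frame, indexed [row][col]
Flow = List[List[Tuple[float, float]]]  # per-pixel (u, v) = (dx, dy) offsets


def warp(src: Frame, flow: Flow) -> Frame:
    """Backward-warp `src`: output pixel (y, x) is read from src at
    (y + v, x + u), rounded to the nearest pixel and clamped to the border.
    Nearest-neighbour sampling is a simplification (assumption)."""
    h, w = len(src), len(src[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            u, v = flow[y][x]
            sy = min(max(int(round(y + v)), 0), h - 1)
            sx = min(max(int(round(x + u)), 0), w - 1)
            row.append(src[sy][sx])
        out.append(row)
    return out


def mse(a: Frame, b: Frame) -> float:
    """Mean squared error between two equally sized frames."""
    n = len(a) * len(a[0])
    return sum((pa - pb) ** 2 for ra, rb in zip(a, b) for pa, pb in zip(ra, rb)) / n


def flow_intrinsic_reward(frame_t: Frame, frame_t1: Frame,
                          flow_fwd: Flow, flow_bwd: Flow,
                          beta: float = 1.0) -> float:
    """Hypothetical FICM-style reward: how badly the predicted flows
    reconstruct each frame from the other (larger error = more novel)."""
    err_fwd = mse(frame_t1, warp(frame_t, flow_fwd))  # rebuild o_{t+1} from o_t
    err_bwd = mse(frame_t, warp(frame_t1, flow_bwd))  # rebuild o_t from o_{t+1}
    return beta / 2.0 * (err_fwd + err_bwd)


# Usage: the content of a 1x4 frame moves one pixel to the right.
frame_t = [[0.0, 1.0, 2.0, 3.0]]
frame_t1 = [[0.0, 0.0, 1.0, 2.0]]
good_fwd = [[(-1.0, 0.0)] * 4]  # sample one pixel to the left
good_bwd = [[(1.0, 0.0)] * 4]   # sample one pixel to the right
no_flow = [[(0.0, 0.0)] * 4]
print(flow_intrinsic_reward(frame_t, frame_t1, good_fwd, good_bwd))  # 0.125
print(flow_intrinsic_reward(frame_t, frame_t1, no_flow, no_flow))    # 0.75
```

The remaining 0.125 with the correct flow comes from the right border, where the pixel that left the frame cannot be recovered by clamped sampling.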

Without any external reward, FICM can help an RL agent play SuperMario successfully.

This repo is built on large-scale-curiosity, and the correlation layer is borrowed from flownet2_tf.
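A correlation layer compares two feature maps by scoring, for each pixel, its similarity to nearby pixels in the other map. The result is a cost volume that flow estimation can build on. Below is a minimal pure-Python sketch of that computation, not the compiled CUDA op from flownet2_tf. It assumes 1x1 patches, a square search window of radius `max_disp`, normalization by the channel count, and zero padding outside the map. The real op's kernel-size, stride, and padding parameters are omitted. `correlation` is a hypothetical name.

```python
from typing import List

FeatureMap = List[List[List[float]]]  # indexed [row][col][channel]


def correlation(f1: FeatureMap, f2: FeatureMap, max_disp: int) -> FeatureMap:
    """FlowNet-style correlation with 1x1 patches (a simplification).

    For every pixel (y, x) of f1 and every displacement (dy, dx) with
    |dy|, |dx| <= max_disp, output dot(f1[y][x], f2[y + dy][x + dx]) divided
    by the channel count. Displacements that fall outside f2 give 0 (zero
    padding). Each output pixel has (2 * max_disp + 1) ** 2 values, ordered
    with dy as the outer loop and dx as the inner loop. f1 and f2 are assumed
    to have the same shape.
    """
    h, w, c = len(f1), len(f1[0]), len(f1[0][0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            costs = []
            for dy in range(-max_disp, max_disp + 1):
                for dx in range(-max_disp, max_disp + 1):
                    y2, x2 = y + dy, x + dx
                    if 0 <= y2 < h and 0 <= x2 < w:
                        dot = sum(a * b for a, b in zip(f1[y][x], f2[y2][x2]))
                        costs.append(dot / c)
                    else:
                        costs.append(0.0)  # zero padding outside the map
            row.append(costs)
        out.append(row)
    return out


# Usage: a 1x2 single-channel map correlated with itself.
feat = [[[1.0], [2.0]]]
print(correlation(feat, feat, max_disp=1)[0][1])
# [0.0, 0.0, 0.0, 2.0, 4.0, 0.0, 0.0, 0.0, 0.0]
```

For identical inputs, the zero-displacement entry (index 4 here) is the strongest response, which is what lets a flow network read off small motions from the cost volume.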

- Python 3.5

pip install -r requirement.txt

pip install git+https://github.com/openai/baselines.git@3301089b48c42b87b396e246ea3f56fa4bfc9678

If you want to use the FICM-C architecture, you will also need to compile the correlation layer:

cd correlation_layer

make all

Note: You might encounter errors raised from the TensorFlow source code. If so, you can fix them by modifying the header files 'cuda_kernel_helper.h', 'cuda_device_functions.h', and 'cuda_launch_config.h'.

If you want to train an agent to play SuperMario, you need to install retro and import the SuperMarioBros-Nes game.

pip install retro

Read the official retro guide for details on importing games.

To train an agent on SuperMario:

python run.py --feat_learning flowS --env_kind mario

To train an agent on the Atari game Seaquest:

python run.py --feat_learning flowS --env SeaquestNoFrameskip-v4 --seed 666

If you find this work useful, please cite:

@article{flowbasedcuriosity2019,
  Author = {Yang, Hsuan-Kung and Chiang, Po-Han and Hong, Min-Fong and Lee, Chun-Yi},
  Title = {Exploration via Flow-Based Intrinsic Rewards},
  Journal = {arXiv:1905.10071},
  Year = {2019}
}

@inproceedings{largeScaleCuriosity2018,
  Author = {Burda, Yuri and Edwards, Harri and Pathak, Deepak and Storkey, Amos and Darrell, Trevor and Efros, Alexei A.},
  Title = {Large-Scale Study of Curiosity-Driven Learning},
  Booktitle = {arXiv:1808.04355},
  Year = {2018}
}