Machine to Cloud Connectivity Framework | 🚧 Feature request | 🐛 Bug Report | ❓ General Question

Note: If you want to use the solution without building from source, go to the Solution Landing Page.

- Solution Overview

- Architecture Diagram

- AWS CDK and Solutions Constructs

- Customizing the Solution

- Collection of operational metrics

- License

- NOTES

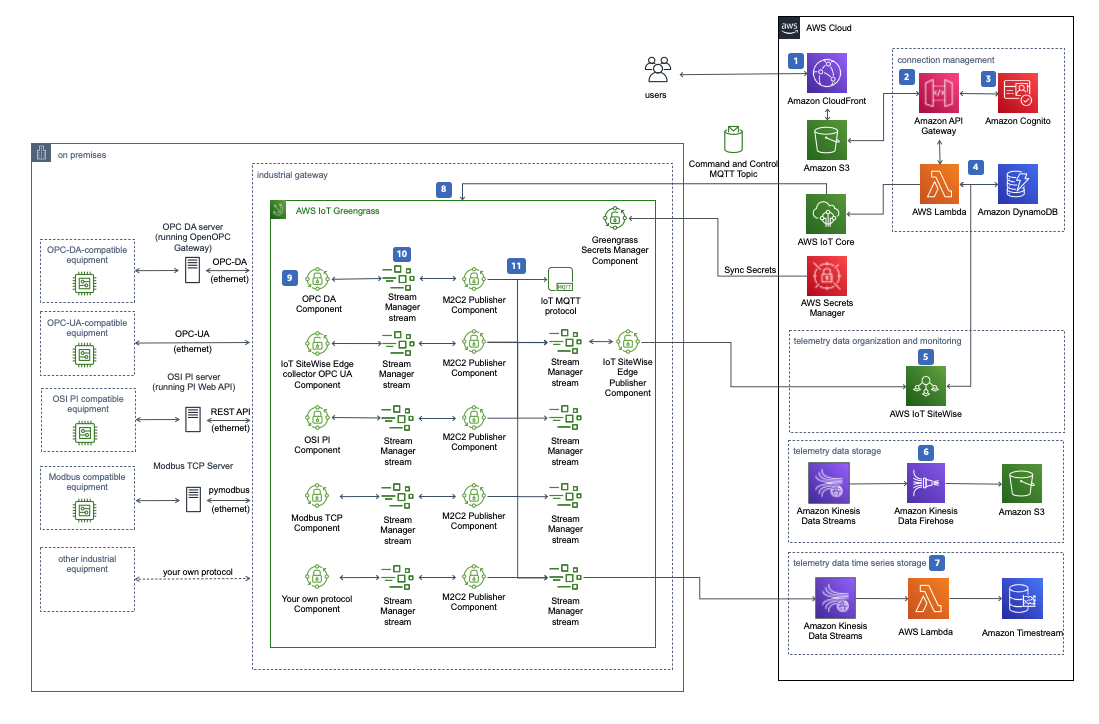

The Machine to Cloud Connectivity Framework solution helps factory production managers connect their operational technology assets to the cloud, providing robust data ingestion from on-premises machines into the AWS cloud. This solution allows for seamless connection to factory machines using either the OPC Data Access (OPC DA) protocol or the OPC Unified Architecture (OPC UA) protocol.

This solution lets you deploy AWS IoT Greengrass core devices to industrial gateways and integrates with AWS IoT SiteWise, so you can ingest OPC DA and OPC UA telemetry data into AWS IoT SiteWise. It can also send telemetry data to an Amazon Simple Storage Service (Amazon S3) bucket, an AWS IoT MQTT topic, and Amazon Timestream, so you can analyze factory machine data for insights and advanced analytics.

This solution is a framework for connecting factory equipment, allowing you to focus on extending the solution's functionality rather than managing the underlying infrastructure operations. For example, you can push the equipment data to Amazon S3 using Amazon Kinesis Data Streams and Amazon Kinesis Data Firehose and run machine learning models on the data for predictive maintenance, or create notifications and alerts.

For more information and a detailed deployment guide, visit the Machine to Cloud Connectivity Framework solution page.

AWS Cloud Development Kit (AWS CDK) and AWS Solutions Constructs make it easier to consistently create well-architected infrastructure applications. All AWS Solutions Constructs are reviewed by AWS and use best practices established by the AWS Well-Architected Framework. This solution uses the following AWS Solutions Constructs:

- aws-cloudfront-s3

- aws-iot-sqs

- aws-kinesisstreams-kinesisfirehose-s3

- aws-kinesisstreams-lambda

- aws-lambda-dynamodb

- aws-sqs-lambda

In addition to the AWS Solutions Constructs, the solution uses AWS CDK directly to create infrastructure resources.

On some operating systems, the Python virtual environment package must be installed manually:

sudo apt install python3.10-venv

If using Amazon Linux, use the following commands instead:

yum -y install krb5-devel

yum -y install gcc

yum -y install python3-devel

git clone https://github.com/aws-solutions/machine-to-cloud-connectivity-framework.git

cd machine-to-cloud-connectivity-framework

export MAIN_DIRECTORY=$PWD

export DIST_BUCKET_PREFIX=my-bucket-prefix # S3 bucket name prefix

export SOLUTION_NAME=my-solution-name

export VERSION=my-version # version number for the customized code

export REGION=aws-region-code # the AWS region to test the solution (e.g. us-east-1)

Note: When you define DIST_BUCKET_PREFIX, a randomized value is recommended. You will need to create an S3 bucket where the name is <DIST_BUCKET_PREFIX>-<REGION>. The solution's CloudFormation template will expect the source code to be located in a bucket matching that name.
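The bucket naming rule in the note can be sketched as a small shell helper. This is a minimal illustration, not part of the solution: `bucket_name` is a hypothetical function, and the commented-out lines assume the AWS CLI is installed and configured with credentials that can create buckets.

```shell
# Hypothetical helper (not part of the solution): builds the bucket name
# <DIST_BUCKET_PREFIX>-<REGION> that the note above describes.
bucket_name() {
  local prefix="$1" region="$2"
  printf '%s-%s\n' "$prefix" "$region"
}

# Example usage, left commented out because it needs the AWS CLI and
# valid credentials. The random suffix is one possible way to follow the
# "randomized value" recommendation; the "m2c2-" stem is an assumption.
# export DIST_BUCKET_PREFIX="m2c2-$(openssl rand -hex 4)"
# aws s3 mb "s3://$(bucket_name "$DIST_BUCKET_PREFIX" "$REGION")" --region "$REGION"
```

Keeping the name in one helper makes it harder for the bucket you create and the bucket the template looks for to drift apart.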

After making changes, run the unit tests to make sure your customizations pass:

cd $MAIN_DIRECTORY/deployment

chmod +x run-unit-tests.sh

./run-unit-tests.sh

cd $MAIN_DIRECTORY/deployment

chmod +x build-s3-dist.sh

./build-s3-dist.sh $DIST_BUCKET_PREFIX $SOLUTION_NAME $VERSION $SHOULD_SEND_ANONYMOUS_USAGE $SHOULD_TEARDOWN_DATA_ON_DESTROY

To consent to sending anonymous usage metrics, use "Yes" for $SHOULD_SEND_ANONYMOUS_USAGE. To have the S3 buckets and the Timestream database torn down when the stack is destroyed, use "Yes" for $SHOULD_TEARDOWN_DATA_ON_DESTROY.
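Because the build script takes five positional arguments, an unset variable silently shifts the remaining ones. The sketch below sets the two flags and checks that every argument is non-empty before building. `require_vars` is a hypothetical helper, and "No" as the opt-out value is an assumption; the text above only documents "Yes".

```shell
# "Yes" is documented above; "No" as the opt-out value is an assumption.
export SHOULD_SEND_ANONYMOUS_USAGE="No"
export SHOULD_TEARDOWN_DATA_ON_DESTROY="No"

# Hypothetical guard (not part of the solution): returns non-zero and
# names each variable that is unset or empty.
require_vars() {
  local name value missing=0
  for name in "$@"; do
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "missing: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Example usage (commented out; run it from $MAIN_DIRECTORY/deployment):
# require_vars DIST_BUCKET_PREFIX SOLUTION_NAME VERSION \
#   SHOULD_SEND_ANONYMOUS_USAGE SHOULD_TEARDOWN_DATA_ON_DESTROY &&
#   ./build-s3-dist.sh "$DIST_BUCKET_PREFIX" "$SOLUTION_NAME" "$VERSION" \
#     "$SHOULD_SEND_ANONYMOUS_USAGE" "$SHOULD_TEARDOWN_DATA_ON_DESTROY"
```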

- (Optional) Check bucket ownership for anti-sniping protection

export ACCOUNT_ID=$(aws sts get-caller-identity --query Account --output text)

aws s3api head-bucket --bucket ${DIST_BUCKET_PREFIX}-${REGION} --expected-bucket-owner $ACCOUNT_ID

- Deploy the distributable to the Amazon S3 bucket in your account. Make sure you upload all files and directories under deployment/global-s3-assets and deployment/regional-s3-assets to the <SOLUTION_NAME>/<VERSION> folder in the <DIST_BUCKET_PREFIX>-<REGION> bucket (e.g. s3://<DIST_BUCKET_PREFIX>-<REGION>/<SOLUTION_NAME>/<VERSION>/). The CLI-based S3 commands to sync the assets are:

aws s3 sync $MAIN_DIRECTORY/deployment/global-s3-assets/ s3://${DIST_BUCKET_PREFIX}-${REGION}/${SOLUTION_NAME}/${VERSION}/

aws s3 sync $MAIN_DIRECTORY/deployment/regional-s3-assets/ s3://${DIST_BUCKET_PREFIX}-${REGION}/${SOLUTION_NAME}/${VERSION}/

- Get the link of the solution template uploaded to your Amazon S3 bucket.

- Deploy the solution to your account by launching a new AWS CloudFormation stack using the link of the solution template in Amazon S3.
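The template link in the steps above follows the same pattern as the `--template-url` value used in the CLI deployment command in this README. A minimal sketch for building it from the environment variables is shown here; `template_url` is a hypothetical helper, not something the solution provides.

```shell
# Hypothetical helper (not part of the solution): prints the S3 URL of
# the uploaded template, using the URL pattern from this README.
# Arguments: bucket prefix, region, solution name, version.
template_url() {
  printf 'https://%s-%s.s3.amazonaws.com/%s/%s/machine-to-cloud-connectivity-framework.template\n' \
    "$1" "$2" "$3" "$4"
}

# Example usage (commented out; assumes the variables are exported):
# template_url "$DIST_BUCKET_PREFIX" "$REGION" "$SOLUTION_NAME" "$VERSION"
```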

CLI based CloudFormation deployment:

export INITIAL_USER=name@example.com

aws cloudformation create-stack \
  --profile ${AWS_PROFILE:-default} \
  --region ${REGION} \
  --template-url https://${DIST_BUCKET_PREFIX}-${REGION}.s3.amazonaws.com/${SOLUTION_NAME}/${VERSION}/machine-to-cloud-connectivity-framework.template \
  --stack-name m2c2 \
  --capabilities CAPABILITY_IAM CAPABILITY_NAMED_IAM CAPABILITY_AUTO_EXPAND \
  --parameters \
    ParameterKey=UserEmail,ParameterValue=$INITIAL_USER \
    ParameterKey=LoggingLevel,ParameterValue=ERROR \
    ParameterKey=ExistingKinesisStreamName,ParameterValue="" \
    ParameterKey=ExistingTimestreamDatabaseName,ParameterValue="" \
    ParameterKey=ShouldRetainBuckets,ParameterValue=True
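`create-stack` returns as soon as the request is accepted, so it can help to wait for the stack to finish before using it. The following is a sketch under the assumption that the stack is named m2c2 as above; `wait_for_stack` is a hypothetical helper, while `aws cloudformation wait stack-create-complete` and `describe-stacks` are standard AWS CLI commands.

```shell
# Hypothetical helper (not part of the solution): blocks until stack
# creation completes, then prints the final stack status.
# Arguments: stack name, region.
wait_for_stack() {
  local stack="$1" region="$2"
  aws cloudformation wait stack-create-complete \
    --stack-name "$stack" --region "$region" &&
  aws cloudformation describe-stacks \
    --stack-name "$stack" --region "$region" \
    --query 'Stacks[0].StackStatus' --output text
}

# Example usage (commented out; needs the AWS CLI and credentials):
# wait_for_stack m2c2 "$REGION"
```

If creation fails or rolls back, the `wait` command exits with a non-zero status and the status query is skipped.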

This solution collects anonymous operational metrics to help AWS improve the quality and features of the solution. For more information, including how to disable this capability, please see the implementation guide.

Copyright Amazon.com, Inc. or its affiliates. All Rights Reserved.

SPDX-License-Identifier: Apache-2.0