Update: PixelLib now supports PyTorch and uses PointRend to perform more accurate, real-time instance segmentation of objects in images and videos. Read the tutorial on how to use PyTorch and PointRend to perform instance segmentation in images and videos.

Paper: Simplifying Object Segmentation with PixelLib Library, available on paperswithcode.

PixelLib is a library for performing segmentation of objects in images and videos. It supports the two major types of image segmentation:

1. Semantic segmentation

2. Instance segmentation

PixelLib supports two deep learning libraries for image segmentation: PyTorch and TensorFlow.

The PyTorch version of PixelLib uses the PointRend object segmentation architecture by Alexander Kirillov et al. in place of Mask R-CNN for instance segmentation of objects. PointRend is a state-of-the-art neural network for object segmentation. It generates accurate segmentation masks and runs at a high inference speed, which matches the growing demand for accurate, real-time computer vision applications. PixelLib is built to support different operating systems. I integrated PixelLib with the Python implementation of PointRend by Detectron2, which supports only Linux. I modified the original Detectron2 PointRend implementation to support Windows, so the PointRend implementation used in PixelLib supports both Linux and Windows.

The sample images above show the differences between the segmentation results of PointRend and Mask R-CNN. The PointRend results are visibly better segmentation outputs than the Mask R-CNN results.

- Using a target size of 1333 * 800: it achieves 0.26 seconds for processing a single image and 4 fps for live camera feeds.

- Using a target size of 667 * 447: it achieves 0.20 seconds for processing a single image and 6 fps for live camera feeds.

- Using a target size of 333 * 200: it achieves 0.15 seconds for processing a single image and 9 fps for live camera feeds.
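The source does not say how these figures were measured. As a rough sketch only, per-call latency and throughput for any processing function could be estimated with a small timing helper like the one below. The names `measure_speed` and `dummy_workload` are hypothetical and not part of PixelLib; the dummy workload stands in for a real segmentation call.

```python
import time


def measure_speed(process_frame, num_runs=10):
    """Return (seconds_per_call, calls_per_second) averaged over num_runs calls."""
    start = time.perf_counter()
    for _ in range(num_runs):
        process_frame()
    elapsed = time.perf_counter() - start
    seconds_per_call = elapsed / num_runs
    # Guard against a zero reading on very coarse timers.
    calls_per_second = num_runs / elapsed if elapsed > 0 else float("inf")
    return seconds_per_call, calls_per_second


def dummy_workload():
    # Hypothetical stand-in for a real segmentation call on one image or frame.
    sum(i * i for i in range(10_000))


seconds, fps = measure_speed(dummy_workload, num_runs=5)
print(f"{seconds:.4f} s per call, {fps:.1f} calls per second")
```

To time a real model, pass a function that runs segmentation on one image in place of `dummy_workload`. Results depend heavily on hardware, so they will not necessarily match the figures listed above.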

Download Python

The PyTorch version of PixelLib supports Python 3.7 and above. Download a compatible Python version.

Install Pytorch

The PyTorch version of PixelLib supports these versions of PyTorch: 1.6.0, 1.7.1, 1.8.0 and 1.9.0.

Note: PyTorch 1.7.0 is not supported, and no PyTorch version lower than 1.6.0 should be used. Install a compatible PyTorch version.
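As a minimal sketch, the compatibility list above can be turned into a quick check before installing PixelLib. The helper name `is_supported_torch_version` is hypothetical and not part of PixelLib; the version set is copied from the list above.

```python
# Versions listed as supported by the PyTorch version of PixelLib.
SUPPORTED_TORCH_VERSIONS = {"1.6.0", "1.7.1", "1.8.0", "1.9.0"}


def is_supported_torch_version(version):
    """Return True if a PyTorch version string is in the supported list."""
    # Drop a local build suffix such as "+cu111" or "+cpu".
    base_version = version.split("+")[0]
    return base_version in SUPPORTED_TORCH_VERSIONS


for candidate in ("1.9.0+cu111", "1.7.0", "1.5.1"):
    print(candidate, is_supported_torch_version(candidate))
```

With PyTorch installed, the same check can be run on the installed build by passing `torch.__version__` to the helper.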

Install Pycocotools

pip3 install pycocotools

Install PixelLib

pip3 install pixellib

If PixelLib is already installed, upgrade to the latest version using:

pip3 install pixellib --upgrade

import pixellib

from pixellib.torchbackend.instance import instanceSegmentation

ins = instanceSegmentation()

ins.load_model("pointrend_resnet50.pkl")

ins.segmentImage("image.jpg", show_bboxes=True, output_image_name="output_image.jpg")

import pixellib

from pixellib.torchbackend.instance import instanceSegmentation

ins = instanceSegmentation()

ins.load_model("pointrend_resnet50.pkl")

ins.process_video("sample_video.mp4", show_bboxes=True, frames_per_second=3, output_video_name="output_video.mp4")

The current PyTorch version of PixelLib supports only instance segmentation of objects. Custom training will be released soon!

PixelLib supports TensorFlow versions 2.0 to 2.4.1. Install TensorFlow using:

pip3 install tensorflow

If your PC has a GPU, install the tensorflow-gpu version that is compatible with the CUDA version installed on your PC:

pip3 install tensorflow-gpu

Install PixelLib with:

pip3 install pixellib --upgrade

Visit PixelLib's official documentation on readthedocs

PixelLib uses object segmentation to perform excellent foreground and background separation. It makes it possible to alter the background of any image or video using just five lines of code.

1. Create a virtual background for an image and a video

2. Assign a distinct color to the background of an image and a video

3. Blur the background of an image and a video

4. Grayscale the background of an image and a video
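To illustrate the idea behind these options, here is a minimal NumPy sketch of mask-based background editing. It is not PixelLib's implementation: the function names are hypothetical, and it assumes a boolean foreground mask is already available (in practice it would come from a segmentation model). Blurring follows the same pattern, with a blurred copy of the image used as the background.

```python
import numpy as np


def virtual_background(image, mask, new_background):
    """Keep foreground pixels (mask is True) and take the rest from new_background."""
    return np.where(mask[..., None], image, new_background)


def color_background(image, mask, color):
    """Replace the background with one solid color given as an (R, G, B) tuple."""
    background = np.empty_like(image)
    background[:] = color
    return np.where(mask[..., None], image, background)


def gray_background(image, mask):
    """Convert the background to grayscale by averaging its color channels."""
    gray = image.mean(axis=2, keepdims=True).astype(np.uint8)
    gray_rgb = np.repeat(gray, 3, axis=2)
    return np.where(mask[..., None], image, gray_rgb)


# Tiny 2x2 example: every pixel is (90, 0, 0); only the top-left pixel is foreground.
image = np.zeros((2, 2, 3), dtype=np.uint8)
image[..., 0] = 90
mask = np.array([[True, False], [False, False]])

colored = color_background(image, mask, (0, 0, 255))
grayed = gray_background(image, mask)
print(colored[0, 0], colored[1, 1])
print(grayed[0, 0], grayed[1, 1])
```

In each function the mask is expanded with a trailing axis so that it broadcasts across the three color channels.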

import pixellib

from pixellib.tune_bg import alter_bg

change_bg = alter_bg(model_type = "pb")

change_bg.load_pascalvoc_model("xception_pascalvoc.pb")

change_bg.blur_bg("sample.jpg", extreme = True, detect = "person", output_image_name="blur_img.jpg")

import pixellib

from pixellib.tune_bg import alter_bg

change_bg = alter_bg(model_type="pb")

change_bg.load_pascalvoc_model("xception_pascalvoc.pb")

change_bg.change_video_bg("sample_video.mp4", "bg.jpg", frames_per_second = 10, output_video_name="output_video.mp4", detect = "person")

There are two types of Deeplabv3+ models available for performing semantic segmentation with PixelLib:

- Deeplabv3+ model with Xception as network backbone, trained on the Ade20k dataset, which has 150 classes of objects.

- Deeplabv3+ model with Xception as network backbone, trained on the Pascalvoc dataset, which has 20 classes of objects.

Instance segmentation in PixelLib is implemented with a Mask R-CNN model trained on the COCO dataset.

The latest version of PixelLib supports custom training of object segmentation models using a pretrained COCO model.

Note: PixelLib supports annotation with Labelme. Annotations made with another annotation tool will not be compatible with the library. Read this tutorial on image annotation with Labelme.
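Labelme stores each image's annotation as a JSON file whose `shapes` list holds one entry per labelled polygon. As a small sketch, a dataset can be checked before training by counting the labels in each annotation. The helper name `count_labelme_labels` and the sample file name are hypothetical, and the sample annotation is made up for illustration.

```python
import json
from collections import Counter


def count_labelme_labels(annotation_text):
    """Count how many shapes carry each label in one Labelme annotation."""
    annotation = json.loads(annotation_text)
    return Counter(shape["label"] for shape in annotation.get("shapes", []))


# Made-up annotation that follows the layout of a Labelme JSON file.
sample_annotation = json.dumps({
    "version": "4.5.6",
    "flags": {},
    "shapes": [
        {"label": "butterfly", "points": [[10, 10], [40, 12], [30, 35]],
         "group_id": None, "shape_type": "polygon", "flags": {}},
        {"label": "butterfly", "points": [[50, 50], [60, 52], [55, 62]],
         "group_id": None, "shape_type": "polygon", "flags": {}},
        {"label": "squirrel", "points": [[5, 40], [20, 45], [12, 60]],
         "group_id": None, "shape_type": "polygon", "flags": {}},
    ],
    "imagePath": "sample.jpg",
    "imageData": None,
    "imageHeight": 64,
    "imageWidth": 64,
})

label_counts = count_labelme_labels(sample_annotation)
print(dict(label_counts))
```

For real data, read each `.json` file produced by Labelme and pass its text to the helper. A label that appears with an unexpected spelling usually points to an annotation mistake.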

- Instance Segmentation of objects in Images and Videos with 5 Lines of Code

- Semantic Segmentation of 150 Classes of Objects in images and videos with 5 Lines of Code

- Semantic Segmentation of 20 Common Objects with 5 Lines of Code

Note: Deeplab and Mask R-CNN models are available in the release of this repository.

PixelLib supports instance segmentation of objects in images and videos with Mask R-CNN using 5 lines of code.

import pixellib

from pixellib.instance import instance_segmentation

segment_image = instance_segmentation()

segment_image.load_model("mask_rcnn_coco.h5")

segment_image.segmentImage("sample.jpg", show_bboxes = True, output_image_name = "image_new.jpg")

import pixellib

from pixellib.instance import instance_segmentation

segment_video = instance_segmentation()

segment_video.load_model("mask_rcnn_coco.h5")

segment_video.process_video("sample_video2.mp4", show_bboxes = True, frames_per_second= 15, output_video_name="output_video.mp4")

Tutorial on Instance Segmentation of Videos

PixelLib supports the ability to train a custom segmentation model using just seven lines of code.

import pixellib

from pixellib.custom_train import instance_custom_training

train_maskrcnn = instance_custom_training()

train_maskrcnn.modelConfig(network_backbone = "resnet101", num_classes= 2, batch_size = 4)

train_maskrcnn.load_pretrained_model("mask_rcnn_coco.h5")

train_maskrcnn.load_dataset("Nature")

train_maskrcnn.train_model(num_epochs = 300, augmentation=True, path_trained_models = "mask_rcnn_models")

This is a result from a model trained with PixelLib.

Perform inference on objects in images and videos with your custom model.

import pixellib

from pixellib.instance import custom_segmentation

test_video = custom_segmentation()

test_video.inferConfig(num_classes= 2, class_names=["BG", "butterfly", "squirrel"])

test_video.load_model("Nature_model_resnet101")

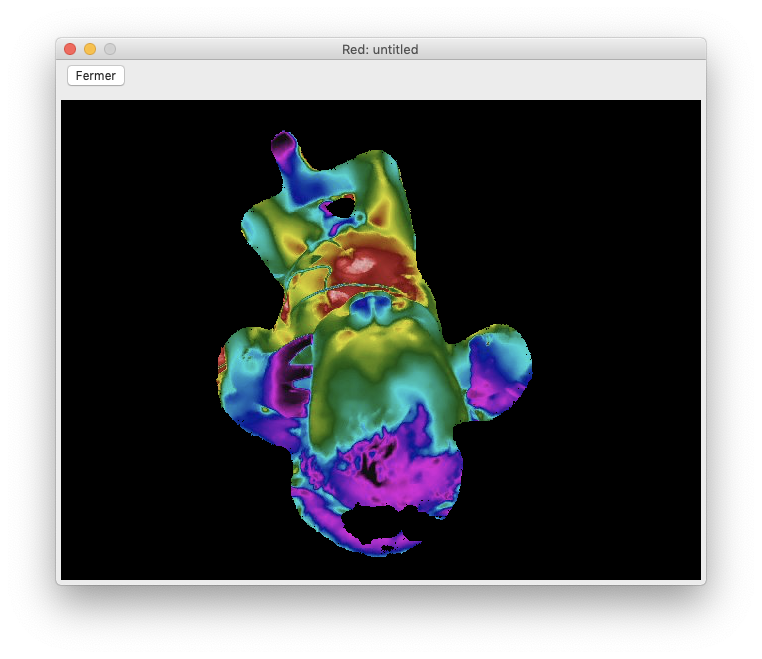

test_video.process_video("sample_video1.mp4", show_bboxes = True, output_video_name="video_out.mp4", frames_per_second=15)

PixelLib makes it possible to perform state-of-the-art semantic segmentation of 150 classes of objects with the Ade20k model using 5 lines of code. Perform indoor and outdoor segmentation of scenes with PixelLib by using the Ade20k model.

import pixellib

from pixellib.semantic import semantic_segmentation

segment_image = semantic_segmentation()

segment_image.load_ade20k_model("deeplabv3_xception65_ade20k.h5")

segment_image.segmentAsAde20k("sample.jpg", overlay = True, output_image_name="image_new.jpg")

import pixellib

from pixellib.semantic import semantic_segmentation

segment_video = semantic_segmentation()

segment_video.load_ade20k_model("deeplabv3_xception65_ade20k.h5")

segment_video.process_video_ade20k("sample_video.mp4", overlay = True, frames_per_second= 15, output_video_name="output_video.mp4")

PixelLib supports the semantic segmentation of 20 unique objects.

import pixellib

from pixellib.semantic import semantic_segmentation

segment_image = semantic_segmentation()

segment_image.load_pascalvoc_model("deeplabv3_xception_tf_dim_ordering_tf_kernels.h5")

segment_image.segmentAsPascalvoc("sample.jpg", output_image_name = "image_new.jpg")

import pixellib

from pixellib.semantic import semantic_segmentation

segment_video = semantic_segmentation()

segment_video.load_pascalvoc_model("deeplabv3_xception_tf_dim_ordering_tf_kernels.h5")

segment_video.process_video_pascalvoc("sample_video1.mp4", overlay = True, frames_per_second= 15, output_video_name="output_video.mp4")

- R2P2 medical Lab uses PixelLib to analyse medical images in the Neonatal (newborn) Intensive Care Unit. https://r2p2.tech/#equipe

- PixelLib is integrated into drone cameras to perform instance segmentation of live video feeds. https://elbruno.com/2020/05/21/coding4fun-how-to-control-your-drone-with-20-lines-of-code-20-n/?utm_source=twitter&utm_medium=social&utm_campaign=tweepsmap-Default

-

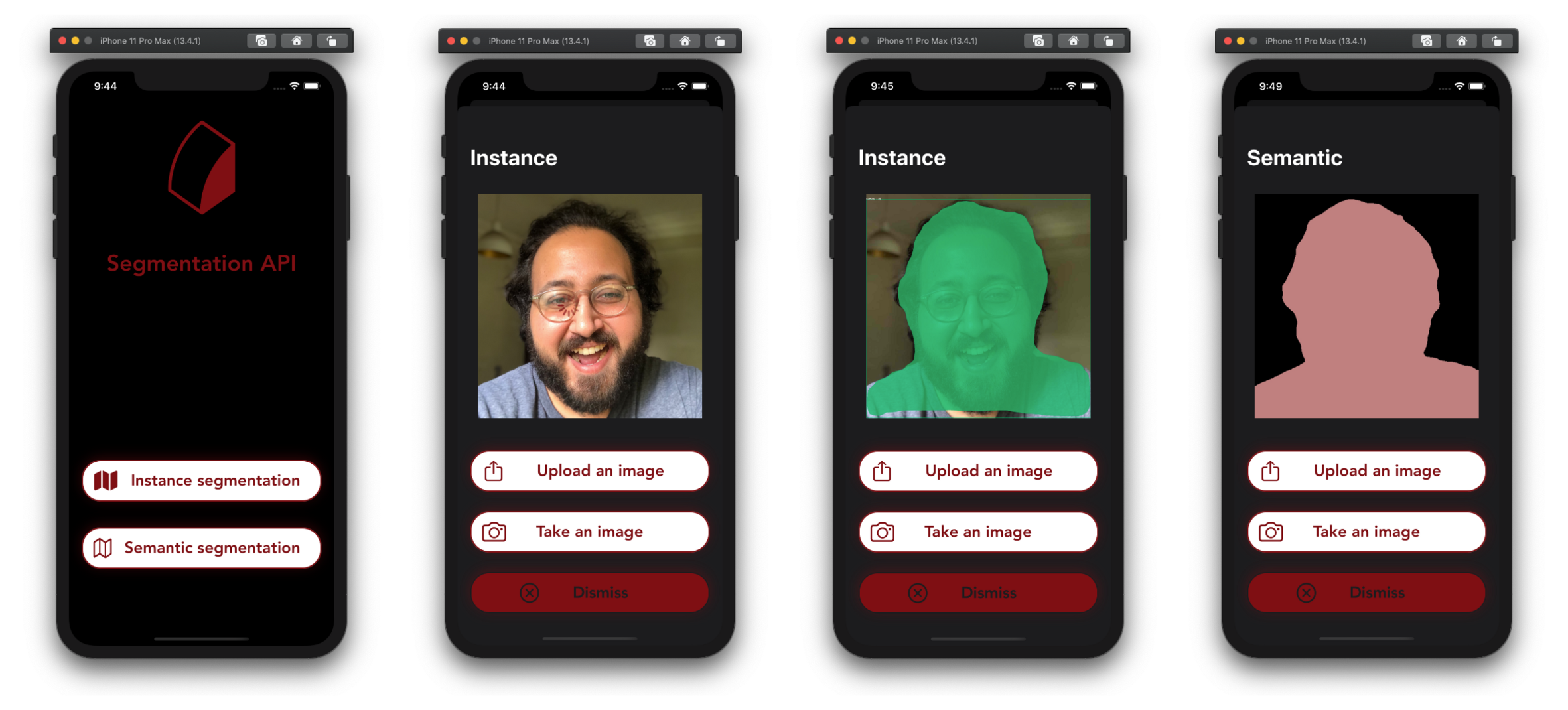

A segmentation api integrated with PixelLib to perform Semantic and Instance Segmentation of images on ios https://github.com/omarmhaimdat/segmentation_api

-

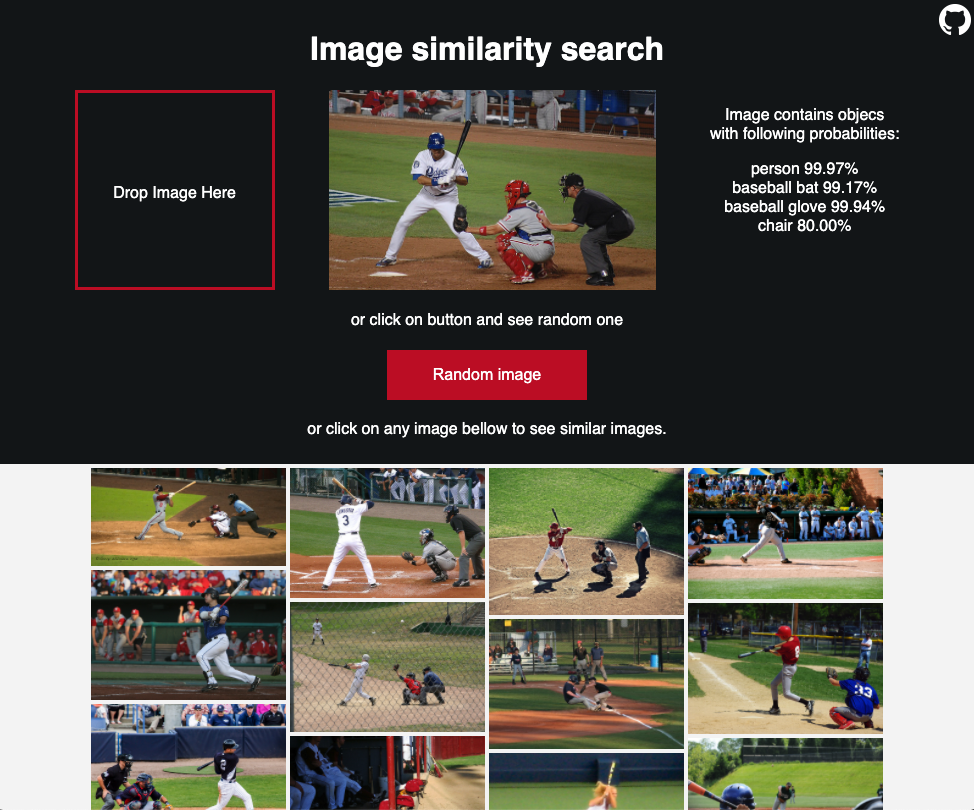

PixelLib is used to perform image segmentation to find similar contents in images for image recommendation https://github.com/lukoucky/image_recommendation

- PixelLib can also be easily integrated with Streamlit, an open-source Python library for creating and sharing custom web apps for machine learning and data science. Link to the repo: https://github.com/prateekralhan/Instance-Segmentation-using-PixelLib

- PointRend Detectron2 Implementation https://github.com/facebookresearch/detectron2/tree/main/projects/PointRend

- Bonlime, Keras implementation of Deeplab v3+ with pretrained weights https://github.com/bonlime/keras-deeplab-v3-plus

- Liang-Chieh Chen et al., Encoder-Decoder with Atrous Separable Convolution for Semantic Image Segmentation https://arxiv.org/abs/1802.02611

- Matterport, Mask R-CNN for object detection and instance segmentation on Keras and TensorFlow https://github.com/matterport/Mask_RCNN

- Mask R-CNN code made compatible with TensorFlow 2.0 https://github.com/tomgross/Mask_RCNN/tree/tensorflow-2.0

- Kaiming He et al., Mask R-CNN https://arxiv.org/abs/1703.06870

- TensorFlow DeepLab Model Zoo https://github.com/tensorflow/models/blob/master/research/deeplab/g3doc/model_zoo.md

- Pascalvoc and Ade20k datasets' colormaps https://github.com/tensorflow/models/blob/master/research/deeplab/utils/get_dataset_colormap.py

- Object-Detection-Python https://github.com/Yunus0or1/Object-Detection-Python