The perfect emulation setup to study and modify the Linux kernel, kernel modules, QEMU and gem5. Highly automated. Thoroughly documented. GDB step debug and KGDB just work. Automated tests. Powered by Buildroot. "Tested" in Ubuntu 18.04 host, x86_64, ARMv7 and ARMv8 guests with kernel v5.0.

- 1. Getting started

- 2. GDB step debug

- 2.1. GDB step debug kernel boot

- 2.2. GDB step debug kernel post-boot

- 2.3. tmux

- 2.4. GDB step debug kernel module

- 2.5. GDB step debug early boot

- 2.6. GDB step debug userland processes

- 2.7. GDB call

- 2.8. GDB view ARM system registers

- 2.9. GDB step debug multicore userland

- 2.10. Linux kernel GDB scripts

- 2.11. Debug the GDB remote protocol

- 3. KGDB

- 4. gdbserver

- 5. CPU architecture

- 6. init

- 7. initrd

- 8. Device tree

- 9. KVM

- 10. User mode simulation

- 11. Kernel module utilities

- 12. Filesystems

- 13. Graphics

- 14. Networking

- 15. Linux kernel

- 15.1. Linux kernel configuration

- 15.2. Kernel version

- 15.3. Kernel command line parameters

- 15.4. printk

- 15.5. Linux kernel entry point

- 15.6. Kernel module APIs

- 15.7. Kernel panic and oops

- 15.8. Pseudo filesystems

- 15.9. Pseudo files

- 15.10. kthread

- 15.11. Timers

- 15.12. IRQ

- 15.13. Kernel utility functions

- 15.14. Linux kernel tracing

- 15.15. Linux kernel hardening

- 15.16. User mode Linux

- 15.17. UIO

- 15.18. Linux kernel interactive stuff

- 15.19. DRM

- 15.20. Linux kernel testing

- 16. Linux kernel build system

- 17. QEMU

- 18. gem5

- 18.1. gem5 vs QEMU

- 18.2. gem5 run benchmark

- 18.3. gem5 kernel command line parameters

- 18.4. gem5 GDB step debug

- 18.5. gem5 checkpoint

- 18.6. Pass extra options to gem5

- 18.7. gem5 exit after a number of instructions

- 18.8. m5ops

- 18.9. gem5 arm Linux kernel patches

- 18.10. m5out directory

- 18.11. m5term

- 18.12. gem5 Python scripts without rebuild

- 18.13. gem5 fs_bigLITTLE

- 18.14. gem5 unit tests

- 18.15. gem5 clang build

- 19. Buildroot

- 19.1. Introduction to Buildroot

- 19.2. Custom Buildroot configs

- 19.3. Find Buildroot options with make menuconfig

- 19.4. Change user

- 19.5. Add new Buildroot packages

- 19.6. Remove Buildroot packages

- 19.7. BR2_TARGET_ROOTFS_EXT2_SIZE

- 19.8. Buildroot rebuild is slow when the root filesystem is large

- 19.9. Report upstream bugs

- 19.10. libc choice

- 20. Baremetal

- 20.1. Baremetal GDB step debug

- 20.2. Baremetal bootloaders

- 20.3. Semihosting

- 20.4. gem5 baremetal carriage return

- 20.5. Baremetal host packaged toolchain

- 20.6. C++ baremetal

- 20.7. GDB builtin CPU simulator

- 20.8. ARM baremetal

- 20.9. How we got some baremetal stuff to work

- 20.10. Baremetal tests

- 20.11. Baremetal bibliography

- 21. Benchmark this repo

- 22. WIP

- 23. About this repo

- 23.1. Supported hosts

- 23.2. Common build issues

- 23.3. Run command after boot

- 23.4. Default command line arguments

- 23.5. Build the documentation

- 23.6. Clean the build

- 23.7. ccache

- 23.8. Rebuild buildroot while running

- 23.9. Simultaneous runs

- 23.10. Build variants

- 23.11. Directory structure

- 23.12. Test this repo

- 23.13. Bisection

- 23.14. Update a forked submodule

- 23.15. Sanity checks

- 23.16. Release

- 23.17. Design rationale

- 23.18. Fairy tale

- 23.19. Bibliography

Each child section describes a possible different setup for this repo.

If you don’t know which one to go for, start with QEMU Buildroot setup getting started.

Design goals of this project are documented at: Design goals.

This setup has been mostly tested on Ubuntu. For other host operating systems see: Supported hosts.

Reserve 12 GB of disk space and run:

git clone https://github.com/************/linux-kernel-module-cheat
cd linux-kernel-module-cheat
./build --download-dependencies qemu-buildroot
./run

You don’t need to clone recursively even though we have .git submodules: --download-dependencies fetches just the submodules that you need for this build to save time.

If something goes wrong, see: Common build issues and use our issue tracker: https://github.com/************/linux-kernel-module-cheat/issues

The initial build will take a while (30 minutes to 2 hours) to clone and build, see Benchmark builds for more details.

If you don’t want to wait, you could also try the following faster but much more limited methods:

but you will soon find that they are simply not enough if you are anywhere near serious about systems programming.

After ./run, QEMU opens up and you can start playing with the kernel modules inside the simulated system:

insmod /hello.ko
insmod /hello2.ko
rmmod hello
rmmod hello2

This should print to the screen:

hello init
hello2 init
hello cleanup
hello2 cleanup

which are printk messages from init and cleanup methods of those modules.

Sources:

Quit QEMU with:

Ctrl-A X

See also: Quit QEMU from text mode.

All available modules can be found in the kernel_modules directory.

It is super easy to build for different CPU architectures, just use the --arch option:

./build --arch aarch64 --download-dependencies qemu-buildroot
./run --arch aarch64

To avoid typing --arch aarch64 many times, you can set the default arch as explained at: Default command line arguments

I now urge you to read the following sections which contain widely applicable information:

- Linux kernel

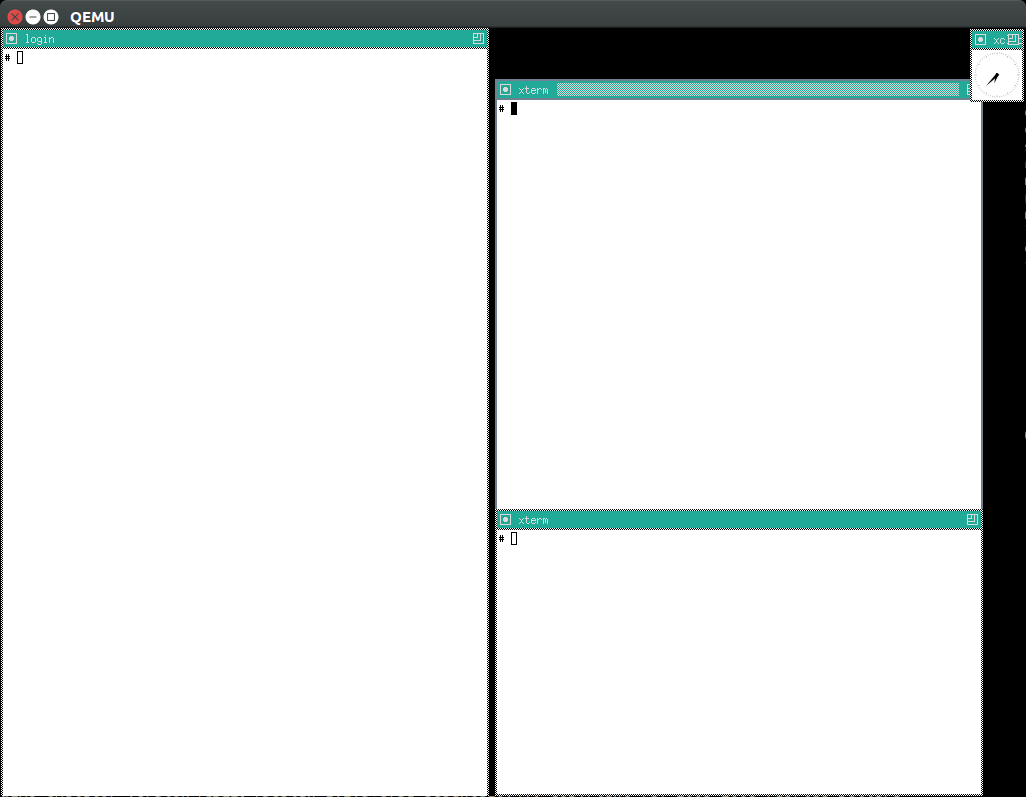

Once you use GDB step debug and tmux, your terminal will look a bit like this:

[ 1.451857] input: AT Translated Set 2 keyboard as /devices/platform/i8042/s1│loading @0xffffffffc0000000: ../kernel_modules-1.0//timer.ko

[ 1.454310] ledtrig-cpu: registered to indicate activity on CPUs │(gdb) b lkmc_timer_callback

[ 1.455621] usbcore: registered new interface driver usbhid │Breakpoint 1 at 0xffffffffc0000000: file /home/ciro/bak/git/linux-kernel-module

[ 1.455811] usbhid: USB HID core driver │-cheat/out/x86_64/buildroot/build/kernel_modules-1.0/./timer.c, line 28.

[ 1.462044] NET: Registered protocol family 10 │(gdb) c

[ 1.467911] Segment Routing with IPv6 │Continuing.

[ 1.468407] sit: IPv6, IPv4 and MPLS over IPv4 tunneling driver │

[ 1.470859] NET: Registered protocol family 17 │Breakpoint 1, lkmc_timer_callback (data=0xffffffffc0002000 <mytimer>)

[ 1.472017] 9pnet: Installing 9P2000 support │ at /linux-kernel-module-cheat//out/x86_64/buildroot/build/

[ 1.475461] sched_clock: Marking stable (1473574872, 0)->(1554017593, -80442)│kernel_modules-1.0/./timer.c:28

[ 1.479419] ALSA device list: │28 {

[ 1.479567] No soundcards found. │(gdb) c

[ 1.619187] ata2.00: ATAPI: QEMU DVD-ROM, 2.5+, max UDMA/100 │Continuing.

[ 1.622954] ata2.00: configured for MWDMA2 │

[ 1.644048] scsi 1:0:0:0: CD-ROM QEMU QEMU DVD-ROM 2.5+ P5│Breakpoint 1, lkmc_timer_callback (data=0xffffffffc0002000 <mytimer>)

[ 1.741966] tsc: Refined TSC clocksource calibration: 2904.010 MHz │ at /linux-kernel-module-cheat//out/x86_64/buildroot/build/

[ 1.742796] clocksource: tsc: mask: 0xffffffffffffffff max_cycles: 0x29dc0f4s│kernel_modules-1.0/./timer.c:28

[ 1.743648] clocksource: Switched to clocksource tsc │28 {

[ 2.072945] input: ImExPS/2 Generic Explorer Mouse as /devices/platform/i8043│(gdb) bt

[ 2.078641] EXT4-fs (vda): couldn't mount as ext3 due to feature incompatibis│#0 lkmc_timer_callback (data=0xffffffffc0002000 <mytimer>)

[ 2.080350] EXT4-fs (vda): mounting ext2 file system using the ext4 subsystem│ at /linux-kernel-module-cheat//out/x86_64/buildroot/build/

[ 2.088978] EXT4-fs (vda): mounted filesystem without journal. Opts: (null) │kernel_modules-1.0/./timer.c:28

[ 2.089872] VFS: Mounted root (ext2 filesystem) readonly on device 254:0. │#1 0xffffffff810ab494 in call_timer_fn (timer=0xffffffffc0002000 <mytimer>,

[ 2.097168] devtmpfs: mounted │ fn=0xffffffffc0000000 <lkmc_timer_callback>) at kernel/time/timer.c:1326

[ 2.126472] Freeing unused kernel memory: 1264K │#2 0xffffffff810ab71f in expire_timers (head=<optimized out>,

[ 2.126706] Write protecting the kernel read-only data: 16384k │ base=<optimized out>) at kernel/time/timer.c:1363

[ 2.129388] Freeing unused kernel memory: 2024K │#3 __run_timers (base=<optimized out>) at kernel/time/timer.c:1666

[ 2.139370] Freeing unused kernel memory: 1284K │#4 run_timer_softirq (h=<optimized out>) at kernel/time/timer.c:1692

[ 2.246231] EXT4-fs (vda): warning: mounting unchecked fs, running e2fsck isd│#5 0xffffffff81a000cc in __do_softirq () at kernel/softirq.c:285

[ 2.259574] EXT4-fs (vda): re-mounted. Opts: block_validity,barrier,user_xatr│#6 0xffffffff810577cc in invoke_softirq () at kernel/softirq.c:365

hello S98 │#7 irq_exit () at kernel/softirq.c:405

│#8 0xffffffff818021ba in exiting_irq () at ./arch/x86/include/asm/apic.h:541

Apr 15 23:59:23 login[49]: root login on 'console' │#9 smp_apic_timer_interrupt (regs=<optimized out>)

hello /root/.profile │ at arch/x86/kernel/apic/apic.c:1052

# insmod /timer.ko │#10 0xffffffff8180190f in apic_timer_interrupt ()

[ 6.791945] timer: loading out-of-tree module taints kernel. │ at arch/x86/entry/entry_64.S:857

# [ 7.821621] 4294894248 │#11 0xffffffff82003df8 in init_thread_union ()

[ 8.851385] 4294894504 │#12 0x0000000000000000 in ?? ()

│(gdb)

Besides a seamless initial build, this project also aims to make it effortless to modify and rebuild several major components of the system, to serve as an awesome development setup.

Let’s hack up the Linux kernel entry point, which is an easy place to start.

Open the file:

vim submodules/linux/init/main.c

and find the start_kernel function, then add there a:

pr_info("I'VE HACKED THE LINUX KERNEL!!!");

Then rebuild the Linux kernel, quit QEMU and reboot the modified kernel:

./build-linux
./run

and, sure enough, your message has appeared at the beginning of the boot:

<6>[ 0.000000] I'VE HACKED THE LINUX KERNEL!!!

So you are now officially a Linux kernel hacker, way to go!

We could have used just build to rebuild the kernel as in the initial build instead of build-linux, but building just the required individual components is preferred during development:

- saves a few seconds from parsing Make scripts and reading timestamps

- makes it easier to understand what is being done in more detail

- allows passing more specific options to customize the build

The build script is just a lightweight wrapper that calls the smaller build scripts, and you can see what ./build does with:

./build --dry-run

When you reach difficulties, QEMU makes it possible to easily GDB step debug the Linux kernel source code, see: GDB step debug.

Edit kernel_modules/hello.c to contain:

pr_info("hello init hacked\n");

and rebuild with:

./build-modules

Now there are two ways to test it out: the fast way, and the safe way.

The fast way is, without quitting or rebooting QEMU, just directly re-insert the module with:

insmod /mnt/9p/out_rootfs_overlay/hello.ko

and the new pr_info message should now show on the terminal at the end of the boot.

This works because we have a 9P mount set up there by default, which mounts the host directory that contains the build outputs on the guest:

ls "$(./getvar out_rootfs_overlay_dir)"

The fast method is slightly risky because your previously insmodded buggy kernel module attempt might have corrupted the kernel memory, which could affect future runs.

Such failures are however unlikely, and you should be fine if you don’t see anything weird happening.

The safe way is to first quit QEMU, rebuild the modules, put them in the root filesystem, and then reboot:

./build-modules
./build-buildroot
./run --eval-after 'insmod /hello.ko'

./build-buildroot is required after ./build-modules because it re-generates the root filesystem with the modules that we compiled at ./build-modules.

You can see that ./build does that as well, by running:

./build --dry-run

--eval-after is optional: you could just type insmod /hello.ko in the terminal, but this makes it run automatically at the end of boot, and then drops you into a shell.

If the guest and host are the same arch, typically x86_64, you can speed up boot further with KVM:

./run --kvm

All of this put together makes the safe procedure acceptably fast for regular development as well.

It is also easy to GDB step debug kernel modules with our setup, see: GDB step debug kernel module.

Not satisfied with mere software? OK then, let’s hack up the QEMU x86 CPU identification:

vim submodules/qemu/target/i386/cpu.c

and modify:

.model_id = "QEMU Virtual CPU version " QEMU_HW_VERSION,

to contain:

.model_id = "QEMU Virtual CPU version HACKED " QEMU_HW_VERSION,

then as usual rebuild and re-run:

./build-qemu
./run --eval-after 'grep "model name" /proc/cpuinfo'

and once again, there is your message: QEMU communicated it to the Linux kernel, which printed it out.

You have now gone from newb to hardware hacker in a mere 15 minutes, your rate of progress is truly astounding!!!

Seriously though, if you want to be a real hardware hacker, it just can’t be done with open source tools as of 2018. The root obstacle is that:

- Silicon fabs don’t publish their design rules

- which implies that there are no decent standard cell libraries. See also: https://www.quora.com/Are-there-good-open-source-standard-cell-libraries-to-learn-IC-synthesis-with-EDA-tools/answer/Ciro-Santilli

- which implies that people can’t develop open source EDA tools

- which implies that you can’t get decent power, performance and area estimates

The only thing you can do with open source is purely functional designs with Verilator, but you will never know if it can be actually produced and how efficient it can be.

If you really want to develop semiconductors, your only choice is to join a university or a semiconductor company that has the EDA licenses.

While hacking QEMU, you will likely want to GDB step its source. That is trivial since QEMU is just another userland program like any other, but our setup has a shortcut to make it even more convenient, see: Debug the emulator.

We use glibc as our default libc now, and it is tracked as an unmodified submodule at submodules/glibc, at the exact same version that Buildroot has it, which can be found at: package/glibc/glibc.mk. Buildroot 2018.05 applies no patches.

Let’s hack up the puts function:

./build-buildroot -- glibc-reconfigure

with the patch:

diff --git a/libio/ioputs.c b/libio/ioputs.c

index 706b20b492..23185948f3 100644

--- a/libio/ioputs.c

+++ b/libio/ioputs.c

@@ -38,8 +38,9 @@ _IO_puts (const char *str)

if ((_IO_vtable_offset (_IO_stdout) != 0

|| _IO_fwide (_IO_stdout, -1) == -1)

&& _IO_sputn (_IO_stdout, str, len) == len

+ && _IO_sputn (_IO_stdout, " hacked", 7) == 7

&& _IO_putc_unlocked ('\n', _IO_stdout) != EOF)

- result = MIN (INT_MAX, len + 1);

+ result = MIN (INT_MAX, len + 1 + 7);

_IO_release_lock (_IO_stdout);

return result;

And then:

./run --eval-after '/hello.out'

outputs:

hello hacked

Lol!

We can also test our hacked glibc on User mode simulation with:

./run --userland hello

I just noticed that this is actually a good way to develop glibc for other archs.

In this example, we got away without recompiling the userland program because we made a change that did not affect the glibc ABI, see this answer for an introduction to ABI stability: https://stackoverflow.com/questions/2171177/what-is-an-application-binary-interface-abi/54967743#54967743

Note that for arch agnostic features that don’t rely on bleeding-edge kernel changes that your host doesn’t yet have, you can develop glibc natively as explained at:

- https://stackoverflow.com/questions/2856438/how-can-i-link-to-a-specific-glibc-version/52550158#52550158 more focus on symbol versioning, but no one knows how to do it, so I answered

Tested on a30ed0f047523ff2368d421ee2cce0800682c44e + 1.

Have you ever felt that a single inc instruction was not enough? Really? Me too!

So let’s hack the GNU GAS assembler, which is part of GNU Binutils, to add a new shiny version of inc called… myinc!

GCC uses GNU GAS as its backend, so we will test out our new mnemonic with an inline assembly test program: userland/arch/x86_64/binutils_hack.c, which is just a copy of userland/arch/x86_64/asm_hello.c but with myinc instead of inc.

The inline assembly is disabled with an #ifdef, so first modify the source to enable that.

Then, try to build userland:

./build-userland

and watch it fail with:

binutils_hack.c:8: Error: no such instruction: `myinc %rax'

Now, edit the file

vim submodules/binutils-gdb/opcodes/i386-tbl.h

and add a copy of the "inc" instruction just next to it, but with the new name "myinc":

diff --git a/opcodes/i386-tbl.h b/opcodes/i386-tbl.h

index af583ce578..3cc341f303 100644

--- a/opcodes/i386-tbl.h

+++ b/opcodes/i386-tbl.h

@@ -1502,6 +1502,19 @@ const insn_template i386_optab[] =

{ { { 1, 1, 1, 1, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 1, 1, 1, 1, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 1, 1, 0,

1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0 } } } },

+ { "myinc", 1, 0xfe, 0x0, 1,

+ { { 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

+ 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

+ 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

+ 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

+ 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0 } },

+ { 0, 1, 0, 1, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

+ 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 0,

+ 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

+ 0, 0, 0, 0, 0, 0 },

+ { { { 1, 1, 1, 1, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

+ 0, 0, 1, 1, 1, 1, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 1, 1, 0,

+ 1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0 } } } },

{ "sub", 2, 0x28, None, 1,

{ { 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

Finally, rebuild Binutils, userland and test our program with User mode setup:

./build-buildroot -- host-binutils-rebuild
./build-userland --static
./run --static --userland arch/x86_64/binutils_hack

and we see that myinc worked since the assert did not fail!

Tested on b60784d59bee993bf0de5cde6c6380dd69420dda + 1.

OK, now time to hack GCC.

For convenience, let’s use the User mode setup.

If we run the program userland/gcc_hack.c:

./build-userland --static
./run --static --userland gcc_hack

it produces the normal boring output:

i = 2
j = 0

So how about we swap ++ and -- to make things more fun?

Open the file:

vim submodules/gcc/gcc/c/c-parser.c

and find the function c_parser_postfix_expression_after_primary.

In that function, swap case CPP_PLUS_PLUS and case CPP_MINUS_MINUS:

diff --git a/gcc/c/c-parser.c b/gcc/c/c-parser.c
index 101afb8e35f..89535d1759a 100644
--- a/gcc/c/c-parser.c
+++ b/gcc/c/c-parser.c
@@ -8529,7 +8529,7 @@ c_parser_postfix_expression_after_primary (c_parser *parser,
 	    expr.original_type = DECL_BIT_FIELD_TYPE (field);
 	  }
 	  break;
-	case CPP_PLUS_PLUS:
+	case CPP_MINUS_MINUS:
 	  /* Postincrement. */
 	  start = expr.get_start ();
 	  finish = c_parser_peek_token (parser)->get_finish ();
@@ -8548,7 +8548,7 @@ c_parser_postfix_expression_after_primary (c_parser *parser,
 	  expr.original_code = ERROR_MARK;
 	  expr.original_type = NULL;
 	  break;
-	case CPP_MINUS_MINUS:
+	case CPP_PLUS_PLUS:
 	  /* Postdecrement. */
 	  start = expr.get_start ();
 	  finish = c_parser_peek_token (parser)->get_finish ();

Now rebuild GCC, the program and re-run it:

./build-buildroot -- host-gcc-final-rebuild
./build-userland --static
./run --static --userland gcc_hack

and the new output is now:

i = 0
j = 2

We need to use the ugly -final thing because GCC has two packages in Buildroot, -initial and -final: https://stackoverflow.com/questions/54992977/how-to-select-an-override-srcdir-source-for-gcc-when-building-buildroot No one has been able to explain precisely, with a minimal example, why this is required.

This is our reference setup, and the best supported one, use it unless you have good reason not to.

It was historically the first one we did, and all sections have been tested with this setup unless explicitly noted.

Read the following sections for further introductory material:

This setup is like the QEMU Buildroot setup, but it uses gem5 instead of QEMU as a system simulator.

QEMU tries to run as fast as possible and give correct results at the end, but it does not tell us how many CPU cycles it takes to do something, just the number of instructions it ran. This kind of simulation is known as functional simulation.

The number of instructions executed is a very poor estimator of performance because in modern computers, a lot of time is spent waiting for memory requests rather than the instructions themselves.

gem5 on the other hand, can simulate the system in more detail than QEMU, including:

- simplified CPU pipeline

- caches

- DRAM timing

and can therefore be used to estimate system performance, see: gem5 run benchmark for an example.

The downside is that gem5 is much slower than QEMU because of the greater simulation detail.

See gem5 vs QEMU for a more thorough comparison.

For the most part, just add the --emulator gem5 option or *-gem5 suffix to all commands and everything should magically work.

If you haven’t built Buildroot yet for QEMU Buildroot setup, you can build from the beginning with:

./build --download-dependencies gem5-buildroot
./run --emulator gem5

If you have already built previously, don’t be afraid: gem5 and QEMU use almost the same root filesystem and kernel, so ./build will be fast.

Remember that the gem5 boot is considerably slower than QEMU since the simulation is more detailed.

To get a terminal, open a new shell and run:

./gem5-shell

You can quit the shell without killing gem5 by typing tilde followed by a period:

~.

If you are inside tmux, which I highly recommend, you can both run gem5 stdout and open the guest terminal on a split window with:

./run --emulator gem5 --tmux

See also: tmux gem5.

At the end of boot, it might not be very clear that you have the shell since some printk messages may appear in front of the prompt like this:

# <6>[ 1.215329] clocksource: tsc: mask: 0xffffffffffffffff max_cycles: 0x1cd486fa865, max_idle_ns: 440795259574 ns
<6>[ 1.215351] clocksource: Switched to clocksource tsc

but if you look closely, the PS1 prompt marker # is there already, just hit enter and a clear prompt line will appear.

If you forgot to open the shell and gem5 exited, you can inspect the terminal output post-mortem at:

less "$(./getvar --emulator gem5 m5out_dir)/system.pc.com_1.device"

More gem5 information is present at: gem5

Good next steps are:

This repository has been tested inside clean Docker containers.

This is a good option if you are on a Linux host, but the native setup failed due to your weird host distribution, and you have better things to do with your life than to debug it. See also: Supported hosts.

For example, to do a QEMU Buildroot setup inside Docker, run:

sudo apt-get install docker
./run-docker create && \
./run-docker sh -- ./build --download-dependencies qemu-buildroot
./run-docker sh

You are now left inside a shell in the Docker! From there, just run as usual:

./run

The host git top level directory is mounted inside the guest with a Docker volume, which means for example that you can use your host’s GUI text editor directly on the files. Just don’t forget that if you nuke that directory on the guest, then it gets nuked on the host as well!

Command breakdown:

- ./run-docker create: create the image and container.

  Needed only the very first time you use Docker, or if you run ./run-docker DESTROY to restart from scratch, or save some disk space.

  The image and container name is lkmc. The container shows under:

  docker ps -a

  and the image shows under:

  docker images

- ./run-docker sh: open a shell on the container.

  If it has not been started previously, start it. This can also be done explicitly with:

  ./run-docker start

  Quit the shell as usual with Ctrl-D.

  This can be called multiple times from different host terminals to open multiple shells.

- ./run-docker stop: stop the container.

  This might save a bit of CPU and RAM once you stop working on this project, but it should not be a lot.

- ./run-docker DESTROY: delete the container and image.

  This doesn’t really clean the build, since we mount the guest’s working directory on the host git top-level, so you basically just got rid of the apt-get installs.

  To actually delete the Docker build, run on host:

  # sudo rm -rf out.docker

To use GDB step debug from inside Docker, you need a second shell inside the container. You can either do that from another shell with:

./run-docker sh

or even better, by starting a tmux session inside the container. We install tmux by default in the container.

You can also start a second shell and run a command in it at the same time with:

./run-docker sh -- ./run-gdb start_kernel

To use QEMU graphic mode from Docker, run:

./run --graphic --vnc

and then on host:

sudo apt-get install vinagre
./vnc

TODO make files created inside Docker be owned by the current user in host instead of root:

This setup uses prebuilt binaries that we upload to GitHub from time to time.

We don’t currently provide a full prebuilt because it would be too big to host freely, notably because of the cross toolchain.

Our prebuilts currently include:

- QEMU Buildroot setup binaries

  - Linux kernel

  - root filesystem

- Baremetal setup binaries for QEMU

For more details, see our release procedure.

Advantage of this setup: saves time and disk space on the initial install, which is expensive largely due to building the toolchain.

The limitations are severe however:

- can’t GDB step debug the kernel, since the source and cross toolchain with GDB are not available. Buildroot cannot easily use a host toolchain: Buildroot use prebuilt host toolchain.

  Maybe we could work around this by just downloading the kernel source somehow, and using a host prebuilt GDB, but we felt that it would be too messy and unreliable.

- you won’t get the latest version of this repository. Our Travis attempt to automate builds failed, and storing a release for every commit would likely make GitHub mad at us anyways.

- gem5 is not currently supported. The major blocking point is how to avoid distributing the kernel images twice: once for gem5 which uses vmlinux, and once for QEMU which uses arch/* images, see also: vmlinux vs bzImage vs zImage vs Image.

This setup might be good enough for those developing simulators, as that requires less image modification. But once again, if you are serious about this, why not just let your computer build the full featured setup while you take a coffee or a nap? :-)

Checkout to the latest tag and use the Ubuntu packaged QEMU to boot Linux:

sudo apt-get install qemu-system-x86
git clone https://github.com/************/linux-kernel-module-cheat
cd linux-kernel-module-cheat
git checkout "$(git rev-list --tags --max-count=1)"
./release-download-latest
unzip lkmc-*.zip
./run --qemu-which host

Or to run a baremetal example instead:

./run \
  --arch aarch64 \
  --baremetal baremetal/hello.c \
  --qemu-which host \
;

You have to checkout to the latest tag to ensure that the scripts match the release format: https://stackoverflow.com/questions/1404796/how-to-get-the-latest-tag-name-in-current-branch-in-git

Be saner and use our custom built QEMU instead:

./build --download-dependencies qemu
./run

This also allows you to modify QEMU if you’re into that sort of thing.

To build the kernel modules as in Your first kernel module hack do:

git submodule update --depth 1 --init --recursive "$(./getvar linux_source_dir)"
./build-linux --no-modules-install -- modules_prepare
./build-modules --gcc-which host
./run

TODO: for now the only way to test those modules out without building Buildroot is with 9p, since we currently rely on Buildroot to manipulate the root filesystem.

Command explanation:

- modules_prepare does the minimal build procedure required on the kernel for us to be able to compile the kernel modules, and is way faster than doing a full kernel build. A full kernel build would also work however.

- --gcc-which host selects your host Ubuntu packaged GCC, since you don’t have the Buildroot toolchain

- --no-modules-install is required otherwise the make modules_install target we run by default fails, since the kernel wasn’t built

To modify the Linux kernel, build and use it as usual:

git submodule update --depth 1 --init --recursive "$(./getvar linux_source_dir)"
./build-linux
./run

THIS IS DANGEROUS (AND FUN), YOU HAVE BEEN WARNED

This method runs the kernel modules directly on your host computer without a VM, and saves you the compilation time and disk usage of the virtual machine method.

It has however severe limitations:

- can’t control which kernel version and build options to use. So some of the modules will likely not compile because of kernel API changes, since the Linux kernel does not have a stable kernel module API.

- bugs can easily break your system. E.g.:

  - segfaults can trivially lead to a kernel crash, and require a reboot

  - your disk could get erased. Yes, this can also happen with sudo from userland. But you should not use sudo when developing newbie programs. And for the kernel you don’t have the choice not to use sudo.

  - even more subtle system corruption such as not being able to rmmod

- can’t control which hardware is used, notably the CPU architecture

- can’t step debug it with GDB easily. The alternatives are JTAG or KGDB, but those are less reliable, and require extra hardware.

Still interested?

./build-modules --gcc-which host --host

Compilation will likely fail for some modules because of kernel or toolchain differences that we can’t control on the host.

The best workaround is to compile just your modules with:

./build-modules --gcc-which host --host -- hello hello2

which is equivalent to:

./build-modules \
  --gcc-which host \
  --host \
  -- \
  kernel_modules/hello.c \
  kernel_modules/hello2.c \
;

Or just remove the .c extension from the failing files and try again:

cd "$(./getvar kernel_modules_source_dir)"
mv broken.c broken.c~

Once you manage to compile, and have come to terms with the fact that this may blow up your host, try it out with:

cd "$(./getvar kernel_modules_build_host_subdir)"
sudo insmod hello.ko

# Our module is there.
sudo lsmod | grep hello

# Last message should be: hello init
dmesg -T

sudo rmmod hello

# Last message should be: hello exit
dmesg -T

# Not present anymore
sudo lsmod | grep hello

Minimal host build system example:

cd hello_host_kernel_module
make
sudo insmod hello.ko
dmesg
sudo rmmod hello.ko
dmesg

This setup does not use the Linux kernel nor Buildroot at all: it just runs your very own minimal OS.

x86_64 is not currently supported, only arm and aarch64: I had made some x86 bare metal examples at: https://github.com/************/x86-bare-metal-examples but I’m too lazy to port them here now. Pull requests are welcome.

The main reason this setup is included in this project, despite the word "Linux" being on the project name, is that a lot of the emulator boilerplate can be reused for both use cases.

This setup allows you to make a tiny OS that runs just a few instructions, to fully control the CPU in order to better understand the simulators for example, or to develop your own OS if you are into that.

You can also use C and a subset of the C standard library because we enable Newlib by default. See also: https://electronics.stackexchange.com/questions/223929/c-standard-libraries-on-bare-metal/400077#400077

Our C bare-metal compiler is built with crosstool-NG. If you have already built Buildroot previously, you will end up with two GCCs installed. Unfortunately I don’t see a solution for this, since we need separate toolchains for Newlib on baremetal and glibc on Linux: https://stackoverflow.com/questions/38956680/difference-between-arm-none-eabi-and-arm-linux-gnueabi/38989869#38989869

Every .c file inside baremetal/ and .S file inside baremetal/arch/<arch>/ generates a separate baremetal image.

For example, to run baremetal/hello.c in QEMU do:

./build --arch aarch64 --download-dependencies qemu-baremetal
./run --arch aarch64 --baremetal hello

The terminal prints:

hello

Now let’s run baremetal/arch/aarch64/add.S:

./run --arch aarch64 --baremetal arch/aarch64/add

This time, the terminal does not print anything, which indicates success.

If you look into the source, you will see that we just have an assertion there.

You can see a sample assertion fail in baremetal/interactive/assert_fail.c:

./run --arch aarch64 --baremetal interactive/assert_fail

and the terminal contains:

lkmc_test_fail error: simulation error detected by parsing logs

and the exit status of our script is 1:

echo $?

To modify a baremetal program, simply edit the file, e.g.:

vim baremetal/hello.c

and rebuild:

./build --arch aarch64 --download-dependencies qemu-baremetal
./run --arch aarch64 --baremetal hello

./build qemu-baremetal had called build-baremetal for us previously, in addition to its requirements.

./build-baremetal uses crosstool-NG, and so it must be preceded by build-crosstool-ng, which ./build qemu-baremetal also calls.

Alternatively, for the sake of tab completion, we also accept relative paths inside baremetal/, for example the following also work:

./run --arch aarch64 --baremetal baremetal/hello.c
./run --arch aarch64 --baremetal baremetal/arch/aarch64/add.S

Absolute paths however are used as is and must point to the actual executable:

./run --arch aarch64 --baremetal "$(./getvar --arch aarch64 baremetal_build_dir)/exit.elf"
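The path rules above can be sketched in Python. This is only an illustration under stated assumptions: resolve_baremetal and its parameters are hypothetical names, not the actual helpers used by ./run, and the .elf output extension is inferred from the exit.elf example above.

```python
import posixpath

def resolve_baremetal(arg, source_dir, build_dir):
    """Map a --baremetal argument to an image path (hypothetical helper)."""
    if posixpath.isabs(arg):
        # Absolute paths are used as is.
        return arg
    # Accept an optional leading source directory, e.g. "baremetal/hello.c".
    prefix = posixpath.basename(source_dir) + '/'
    if arg.startswith(prefix):
        arg = arg[len(prefix):]
    # Drop a source extension such as .c or .S, if present.
    root, ext = posixpath.splitext(arg)
    if ext in ('.c', '.S'):
        arg = root
    # Assumption: images live under build_dir with an .elf extension.
    return posixpath.join(build_dir, arg + '.elf')

print(resolve_baremetal('baremetal/arch/aarch64/add.S', 'baremetal', '/out/baremetal'))
```

With these assumptions, hello, baremetal/hello.c and hello.c all resolve to the same image, which is what makes tab completion convenient.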

To use gem5 instead of QEMU do:

./build --download-dependencies gem5-baremetal
./run --arch aarch64 --baremetal interactive/prompt --emulator gem5

and then as usual open a shell with:

./gem5-shell

Or as usual, tmux users can do both in one go with:

./run --arch aarch64 --baremetal interactive/prompt --emulator gem5 --tmux

TODO: the carriage returns are a bit different than in QEMU, see: gem5 baremetal carriage return.

Note that ./build-baremetal requires the --emulator gem5 option, and generates separate executable images for both, as can be seen from:

echo "$(./getvar --arch aarch64 --baremetal interactive/prompt --emulator qemu image)"
echo "$(./getvar --arch aarch64 --baremetal interactive/prompt --emulator gem5 image)"

This is unlike the Linux kernel that has a single image for both QEMU and gem5:

echo "$(./getvar --arch aarch64 --emulator qemu image)"
echo "$(./getvar --arch aarch64 --emulator gem5 image)"

The reason for that is that on baremetal we don’t parse the device tree from memory like the Linux kernel does, which tells the kernel for example the UART address, and many other system parameters.

gem5 also supports the RealViewPBX machine, which represents older hardware compared to the default VExpress_GEM5_V1:

./build-baremetal --arch aarch64 --emulator gem5 --machine RealViewPBX
./run --arch aarch64 --baremetal interactive/prompt --emulator gem5 --machine RealViewPBX

This generates yet new separate images with new magic constants:

echo "$(./getvar --arch aarch64 --baremetal interactive/prompt --emulator gem5 --machine VExpress_GEM5_V1 image)"
echo "$(./getvar --arch aarch64 --baremetal interactive/prompt --emulator gem5 --machine RealViewPBX image)"

But just stick to the newer and better VExpress_GEM5_V1 unless you have a good reason to use RealViewPBX.

When doing bare metal programming, it is likely that you will want to learn assembly language basics. Have a look at these tutorials for the userland part:

For more information on baremetal, see the section: Baremetal.

The following subjects are particularly important:

Much like Baremetal setup, this is another fun setup that does not require Buildroot or the Linux kernel.

Getting started at: QEMU user mode getting started.

Introduction at: User mode simulation.

--wait-gdb makes QEMU and gem5 wait for a GDB connection, otherwise we could accidentally go past the point we want to break at:

./run --wait-gdb

Say you want to break at start_kernel. So on another shell:

./run-gdb start_kernel

or at a given line:

./run-gdb init/main.c:1088

Now QEMU will stop there, and you can use the normal GDB commands:

list
next
continue

See also:

Just don’t forget to pass --arch to ./run-gdb, e.g.:

./run --arch aarch64 --wait-gdb

and:

./run-gdb --arch aarch64 start_kernel

O=0 is an impossible dream, O=2 being the default.

So get ready for some weird jumps, and <value optimized out> fun. Why, Linux, why.

Let’s observe the kernel write system call as it reacts to some userland actions.

Start QEMU with just:

./run

and after boot inside a shell run:

/count.sh

which counts to infinity to stdout. Source: rootfs_overlay/count.sh.

Then in another shell, run:

./run-gdb

and then hit:

Ctrl-C
break __x64_sys_write
continue
continue
continue

And you now control the counting on the first shell from GDB!

Before v4.17, the symbol name was just sys_write; the change happened at d5a00528b58cdb2c71206e18bd021e34c4eab878. As of Linux v4.19, the function is called sys_write in arm, and __arm64_sys_write in aarch64. One good way to find it if the name changes again is to try:

rbreak .*sys_write

or just have a quick look at the sources!
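As a small sketch of why the rbreak pattern above is robust to these renames, the following Python snippet checks it against the three symbol names quoted in this section. The dictionary and the helper name are only for illustration; GDB's rbreak searches for the regular expression anywhere in the symbol name, which re.search mimics here.

```python
import re

# Symbol names taken from the text above.
SYS_WRITE_SYMBOL = {
    'x86_64': '__x64_sys_write',
    'arm': 'sys_write',
    'aarch64': '__arm64_sys_write',
}

def rbreak_matches(pattern, symbol):
    """Return True if an rbreak-style regex would select the symbol."""
    # Unanchored search, like the matching that rbreak does.
    return re.search(pattern, symbol) is not None

for arch, symbol in SYS_WRITE_SYMBOL.items():
    print(arch, symbol, rbreak_matches(r'.*sys_write', symbol))
```

Keep in mind that such a pattern can also select unrelated functions whose names merely contain sys_write, so expect more than one breakpoint.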

When you hit Ctrl-C, if we happen to be inside kernel code at that point, which is very likely if there are no heavy background tasks waiting, and we are just waiting on a sleep type system call of the command prompt, we can already see the source for the random place inside the kernel where we stopped.

tmux just makes things even more fun by allowing us to see both the terminal for:

- emulator stdout
- GDB

at once without dragging windows around!

First start tmux with:

tmux

Now that you are inside a shell inside tmux, run:

./run --tmux --wait-gdb

This splits the terminal into two panes:

- left: usual QEMU
- right: gdb

and focuses on the GDB pane.

Now you can navigate with the usual tmux shortcuts:

- switch between the two panes with: Ctrl-B O
- close either pane by killing its terminal with Ctrl-D as usual

To start again, switch back to the QEMU pane, kill the emulator, and re-run:

./run --tmux --wait-gdb

This automatically clears the GDB pane, and starts a new one.

Pass extra arguments to the run-gdb pane with:

./run --tmux-args start_kernel --wait-gdb

This is equivalent to:

./run --wait-gdb
./run-gdb start_kernel

Due to Python’s CLI parsing quirks, if the run-gdb arguments start with a dash -, you have to use the = sign, e.g. to GDB step debug early boot:

./run --tmux-args=--no-continue --wait-gdb
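The quirk can be reproduced with a few lines of Python. This is a minimal sketch that assumes the scripts parse their options with the standard argparse module; the parser below is a stand-in and not the real ./run parser.

```python
import argparse
import contextlib
import io

def parse_tmux_args(argv):
    """Return the --tmux-args value, or None if argparse rejects argv."""
    parser = argparse.ArgumentParser()
    parser.add_argument('--tmux-args')
    try:
        # argparse prints its error to stderr and raises SystemExit on failure.
        with contextlib.redirect_stderr(io.StringIO()):
            return parser.parse_args(argv).tmux_args
    except SystemExit:
        return None

print(parse_tmux_args(['--tmux-args', 'start_kernel']))    # start_kernel
print(parse_tmux_args(['--tmux-args', '--no-continue']))   # None: taken for a new option
print(parse_tmux_args(['--tmux-args=--no-continue']))      # --no-continue
```

In the second call argparse treats --no-continue as another option rather than as the value of --tmux-args, so the = form is the only one that gets the value through.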

See the tmux manual for further details:

man tmux

If you are using gem5 instead of QEMU, --tmux has a different effect: it opens the gem5 terminal instead of the debugger:

./run --emulator gem5 --tmux

If you also want to use the debugger with gem5, you will need to create new terminals as usual.

From inside tmux, you can do that with Ctrl-B C or Ctrl-B %.

To see the debugger by default instead of the terminal, run:

./tmu ./run-gdb
./run --wait-gdb --emulator gem5

Loadable kernel modules are a bit trickier since the kernel can place them at different memory locations depending on load order.

So we cannot set the breakpoints before insmod.

However, the Linux kernel GDB scripts offer the lx-symbols command, which takes care of that beautifully for us.

Shell 1:

./run

Wait for the boot to end and run:

insmod /timer.ko

Source: kernel_modules/timer.c.

This prints a message to dmesg every second.

Shell 2:

./run-gdb

In GDB, hit Ctrl-C, and note how it says:

scanning for modules in /root/linux-kernel-module-cheat/out/kernel_modules/x86_64/kernel_modules
loading @0xffffffffc0000000: /root/linux-kernel-module-cheat/out/kernel_modules/x86_64/kernel_modules/timer.ko

That’s lx-symbols working! Now simply:

break lkmc_timer_callback
continue
continue
continue

and we now control the callback from GDB!

Just don’t forget to remove your breakpoints after rmmod, or they will point to stale memory locations.

TODO: why does break work_func for insmod kthread.ko not work very well? Sometimes it breaks but not others.

TODO on arm 51e31cdc2933a774c2a0dc62664ad8acec1d2dbe it does not always work, and lx-symbols fails with the message:

loading vmlinux
Traceback (most recent call last):
  File "/linux-kernel-module-cheat//out/arm/buildroot/build/linux-custom/scripts/gdb/linux/symbols.py", line 163, in invoke
    self.load_all_symbols()
  File "/linux-kernel-module-cheat//out/arm/buildroot/build/linux-custom/scripts/gdb/linux/symbols.py", line 150, in load_all_symbols
    [self.load_module_symbols(module) for module in module_list]
  File "/linux-kernel-module-cheat//out/arm/buildroot/build/linux-custom/scripts/gdb/linux/symbols.py", line 110, in load_module_symbols
    module_name = module['name'].string()
gdb.MemoryError: Cannot access memory at address 0xbf0000cc
Error occurred in Python command: Cannot access memory at address 0xbf0000cc

Cannot reproduce on x86_64 or aarch64: both work fine.

It is kind of random: if you just insmod manually and then immediately ./run-gdb --arch arm, then it usually works.

But this fails most of the time: shell 1:

./run --arch arm --eval-after 'insmod /hello.ko'

shell 2:

./run-gdb --arch arm

then hit Ctrl-C on shell 2, and voila.

Then:

cat /proc/modules

says that the load address is:

0xbf000000

so it is close to the failing 0xbf0000cc.

readelf:

./run-toolchain readelf -- -s "$(./getvar kernel_modules_build_subdir)/hello.ko"

does not give any interesting hits at cc, no symbol was placed that far.

TODO find a more convenient method. We have working methods, but they are not ideal.

This is not very easy, since by the time the module finishes loading, and lx-symbols can work properly, module_init has already finished running!

Possibly asked at:

This is the best method we’ve found so far.

The kernel calls module_init synchronously, therefore it is not hard to step into that call.

As of 4.16, the call happens in do_one_initcall, so we can do in shell 1:

./run

shell 2 after boot finishes (because there are other calls to do_one_initcall at boot, presumably for the built-in modules):

./run-gdb do_one_initcall

then step until the line:

833 ret = fn();

which does the actual call, and then step into it.

For the next time, you can also put a breakpoint there directly:

./run-gdb init/main.c:833

How we found this out: first we got GDB module_init calculate entry address working, and then we did a bt. AKA cheating :-)

This works, but is a bit annoying.

The key observation is that the load address of kernel modules is deterministic: there is a pre allocated memory region https://www.kernel.org/doc/Documentation/x86/x86_64/mm.txt "module mapping space" filled from bottom up.

So once we find the address the first time, we can just reuse it afterwards, as long as we don’t modify the module.

Do a fresh boot and get the module:

./run --eval-after '/pr_debug.sh;insmod /fops.ko;/poweroff.out'

The boot must be fresh, because the load address changes every time we insert, even after removing previous modules.

The base address shows on terminal:

0xffffffffc0000000 .text

Now let’s find the offset of myinit:

./run-toolchain readelf -- \
  -s "$(./getvar kernel_modules_build_subdir)/fops.ko" | \
  grep myinit

which gives:

30: 0000000000000240 43 FUNC LOCAL DEFAULT 2 myinit

so the offset address is 0x240 and we deduce that the function will be placed at:

0xffffffffc0000000 + 0x240 = 0xffffffffc0000240
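The same calculation can be scripted. The sketch below parses the readelf line shown above and adds the symbol value to the base address; the helper name is made up for this example, and the column layout is the one visible in the sample line.

```python
def readelf_symbol_value(line):
    """Return the Value column of one `readelf -s` symbol line as an int."""
    # Columns: Num: Value Size Type Bind Vis Ndx Name
    fields = line.split()
    return int(fields[1], 16)

base = 0xffffffffc0000000  # .text load address printed on the terminal above
line = '30: 0000000000000240 43 FUNC LOCAL DEFAULT 2 myinit'
address = base + readelf_symbol_value(line)
print(hex(address))  # 0xffffffffc0000240
```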

Now we can just do a fresh boot on shell 1:

./run --eval 'insmod /fops.ko;/poweroff.out' --wait-gdb

and on shell 2:

./run-gdb '*0xffffffffc0000240'

GDB then breaks, and lx-symbols works.

TODO not working. This could be potentially very convenient.

The idea here is to break at a point late enough inside sys_init_module, at which point lx-symbols can be called and do its magic.

Beware that there are both sys_init_module and sys_finit_module syscalls, and insmod uses finit_module by default.

Both call do_init_module however, which is what lx-symbols hooks to.

If we try:

b sys_finit_module

then hitting:

n

does not break, and insertion happens, likely because of optimizations? See: Disable kernel compiler optimizations.

Then we try:

b do_init_module

A naive:

fin

also fails to break!

Finally, in despair we notice that pr_debug prints the module load address as explained at Bypass lx-symbols.

So, if we set a breakpoint just after that message is printed by searching where that happens on the Linux source code, we must be able to get the correct load address before init_module happens.

This is another possibility: we could modify the module source by adding a trap instruction of some kind.

This appears to be described at: https://www.linuxjournal.com/article/4525

But it refers to a gdbstart script which is not in the tree anymore and beyond my git log capabilities.

And just adding:

asm( " int $3");

directly gives an oops as I’d expect.

Useless, but a good way to show how hardcore you are. Disable lx-symbols with:

./run-gdb --no-lxsymbols

From inside guest:

insmod /timer.ko
cat /proc/modules

as mentioned at:

This will give a line of the form:

fops 2327 0 - Live 0xfffffffa00000000

And then tell GDB where the module was loaded with:

Ctrl-C
add-symbol-file ../../../rootfs_overlay/x86_64/timer.ko 0xffffffffc0000000 0xffffffffc0000000
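To avoid copying the address by hand, a /proc/modules line can be turned into the GDB command with a short script. This is only a sketch: both function names are hypothetical, and the field order (name, size, reference count, dependencies, state, address) is the one visible in the sample line above.

```python
def parse_proc_modules_line(line):
    """Split one /proc/modules line into its module name and load address."""
    # Fields: name size refcount dependencies state address
    fields = line.split()
    return fields[0], int(fields[5], 16)

def add_symbol_file_command(ko_path, address):
    """Build the GDB command; ko_path must be a path to the .ko valid on the host."""
    return 'add-symbol-file {} {:#x}'.format(ko_path, address)

name, address = parse_proc_modules_line('fops 2327 0 - Live 0xfffffffa00000000')
print(add_symbol_file_command(name + '.ko', address))
```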

Alternatively, if the module panics before you can read /proc/modules, there is a pr_debug which shows the load address:

echo 8 > /proc/sys/kernel/printk
echo 'file kernel/module.c +p' > /sys/kernel/debug/dynamic_debug/control
/myinsmod.out /hello.ko

And then search for a line of type:

[ 84.877482] 0xfffffffa00000000 .text

Tested on 4f4749148273c282e80b58c59db1b47049e190bf + 1.

TODO successfully debug the very first instruction that the Linux kernel runs, before start_kernel!

Break at the very first instruction executed by QEMU:

./run-gdb --no-continue

TODO why can’t we break at early startup stuff such as:

./run-gdb extract_kernel
./run-gdb main

Maybe it is because they are being copied around at specific locations instead of being run directly from inside the main image, which is where the debug information points to?

gem5 tracing with --debug-flags=Exec does show the right symbols however! So in the worst case, we can just read their source. Amazing.

v4.19 also added a CONFIG_HAVE_KERNEL_UNCOMPRESSED=y option for having the kernel uncompressed which could make following the startup easier, but it is only available on s390. aarch64 however is already uncompressed by default, so might be the easiest one. See also: vmlinux vs bzImage vs zImage vs Image.

One possibility is to run:

./trace-boot --arch arm

and then find the second address (the first one does not work, already too late maybe):

less "$(./getvar --arch arm trace_txt_file)"

and break there:

./run --arch arm --wait-gdb
./run-gdb --arch arm '*0x1000'

but TODO: it does not show the source assembly under arch/arm: https://stackoverflow.com/questions/11423784/qemu-arm-linux-kernel-boot-debug-no-source-code

I also tried to hack run-gdb with:

@@ -81,7 +81,7 @@ else
 ${gdb} \
 -q \\
 -ex 'add-auto-load-safe-path $(pwd)' \\
--ex 'file vmlinux' \\
+-ex 'file arch/arm/boot/compressed/vmlinux' \\
 -ex 'target remote localhost:${port}' \\
 ${brk} \
 -ex 'continue' \\

and now I do have the symbols from arch/arm/boot/compressed/vmlinux, but the breaks still don’t work.

QEMU’s -gdb GDB breakpoints are set on virtual addresses, so you can in theory debug userland processes as well.

You will generally want to use gdbserver for this as it is more reliable, but this method can overcome the following limitations of gdbserver:

- the emulator does not support host to guest networking. This seems to be the case for gem5: gem5 host to guest networking
- cannot see the start of the init process easily
- gdbserver alters the working of the kernel, and makes your run less representative

Known limitations of direct userland debugging:

-

the kernel might switch context to another process or to the kernel itself e.g. on a system call, and then TODO confirm the PC would go to weird places and source code would be missing.

-

TODO step into shared libraries. If I attempt to load them explicitly:

(gdb) sharedlibrary ../../staging/lib/libc.so.0
No loaded shared libraries match the pattern `../../staging/lib/libc.so.0'.

since GDB does not know that libc is loaded.

This is the userland debug setup most likely to work, since at init time there is only one userland executable running.

For executables from the userland directory such as userland/count.c:

-

Shell 1:

./run --wait-gdb --kernel-cli 'init=/count.out'

-

Shell 2:

./run-gdb-user count main

Alternatively, we could also pass the full path to the executable:

./run-gdb-user "$(./getvar userland_build_dir)/sleep_forever.out" main

Path resolution is analogous to that of ./run --baremetal.

Then, as soon as boot ends, we are left inside a debug session that looks just like what gdbserver would produce.

BusyBox custom init process:

-

Shell 1:

./run --wait-gdb --kernel-cli 'init=/bin/ls'

-

Shell 2:

./run-gdb-user "$(./getvar buildroot_build_build_dir)"/busybox-*/busybox ls_main

This follows BusyBox' convention of calling the main for each executable as <exec>_main since the busybox executable has many "mains".

BusyBox default init process:

-

Shell 1:

./run --wait-gdb

-

Shell 2:

./run-gdb-user "$(./getvar buildroot_build_build_dir)"/busybox-*/busybox init_main

init cannot be debugged with gdbserver without modifying the source, or else /sbin/init exits early with:

"must be run as PID 1"

Non-init process:

-

Shell 1:

./run --wait-gdb

-

Shell 2:

./run-gdb-user myinsmod main

-

Shell 1 after the boot finishes:

/myinsmod.out /hello.ko

This is the least reliable setup as there might be other processes that use the given virtual address.

TODO: without --wait-gdb, the break main that we do inside ./run-gdb-user says:

Cannot access memory at address 0x10604

and then GDB never breaks. Tested at ac8663a44a450c3eadafe14031186813f90c21e4 + 1.

The exact behaviour seems to depend on the architecture:

- arm: happens always
- x86_64: appears to happen only if you try to connect GDB as fast as possible, before init has been reached.
- aarch64: could not observe the problem

We have also double checked the address with:

./run-toolchain --arch arm readelf -- \
  -s "$(./getvar --arch arm kernel_modules_build_subdir)/fops.ko" | \
  grep main

and from GDB:

info line main

and both give:

000105fc

which is just 8 bytes before 0x10604.

gdbserver also says 0x10604.

However, if we do a Ctrl-C in GDB, and then a direct:

b *0x000105fc

it works. Why?!

On gem5, x86 can also give the Cannot access memory at address error, so maybe it is also unreliable on QEMU, and works just by coincidence.

GDB can call functions as explained at: https://stackoverflow.com/questions/1354731/how-to-evaluate-functions-in-gdb

However this is failing for us:

- some symbols are not visible to call even though b sees them
- for those that are, call fails with an E14 error

E.g.: if we break on __x64_sys_write on /count.sh:

>>> call printk(0, "asdf") Could not fetch register "orig_rax"; remote failure reply 'E14' >>> b printk Breakpoint 2 at 0xffffffff81091bca: file kernel/printk/printk.c, line 1824. >>> call fdget_pos(fd) No symbol "fdget_pos" in current context. >>> b fdget_pos Breakpoint 3 at 0xffffffff811615e3: fdget_pos. (9 locations) >>>

even though fdget_pos is the first thing __x64_sys_write does:

SYSCALL_DEFINE3(write, unsigned int, fd, const char __user *, buf,
		size_t, count)
{
	struct fd f = fdget_pos(fd);

I also noticed that I get the same error:

Could not fetch register "orig_rax"; remote failure reply 'E14'

when trying to use:

fin

on many (all?) functions.

See also: ************#19

info all-registers shows some of them.

The implementation is described at: https://stackoverflow.com/questions/46415059/how-to-observe-aarch64-system-registers-in-qemu/53043044#53043044

For a more minimal baremetal multicore setup, see: ARM multicore.

We can set and get which cores the Linux kernel allows a program to run on with sched_getaffinity and sched_setaffinity:

./run --cpus 2 --eval-after '/sched_getaffinity.out'

Source: userland/sched_getaffinity.c

Sample output:

sched_getaffinity = 1 1
sched_getcpu = 1
sched_getaffinity = 1 0
sched_getcpu = 0

Which shows us that:

- initially:
  - all 2 cores were enabled as shown by sched_getaffinity = 1 1
  - the process was randomly assigned to run on core 1 (the second one) as shown by sched_getcpu = 1. If we run this several times, it will also run on core 0 sometimes.
- then we restrict the affinity to just core 0, and we see that the program was actually moved to core 0

The number of cores is modified as explained at: Number of cores

taskset from the util-linux package sets the initial core affinity of a program:

./build-buildroot \
  --config 'BR2_PACKAGE_UTIL_LINUX=y' \
  --config 'BR2_PACKAGE_UTIL_LINUX_SCHEDUTILS=y' \
;
./run --eval-after 'taskset -c 1,1 /sched_getaffinity.out'

output:

sched_getaffinity = 0 1
sched_getcpu = 1
sched_getaffinity = 1 0
sched_getcpu = 0

so we see that the affinity was restricted to the second core from the start.
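To make the sched_getaffinity lines above easier to read, here is a small Python sketch of the encoding as it appears in the sample outputs: one 0/1 flag per core, with core 0 first. The function names are hypothetical and the ordering is inferred from the outputs above, not taken from userland/sched_getaffinity.c.

```python
def format_affinity(cores, ncores):
    """Render a set of allowed cores as one 0/1 flag per core, core 0 first."""
    return ' '.join('1' if core in cores else '0' for core in range(ncores))

def parse_affinity(flags):
    """Inverse of format_affinity: '0 1' -> {1}."""
    return {core for core, flag in enumerate(flags.split()) if flag == '1'}

print(format_affinity({0, 1}, 2))  # 1 1: both cores allowed
print(format_affinity({1}, 2))     # 0 1: only the second core, as with taskset -c 1,1
print(parse_affinity('1 0'))       # {0}: only core 0
```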

Let’s do a QEMU observation to justify this example being in the repository with userland breakpoints.

We will run our /sched_getaffinity.out infinitely many times, alternating between core 0 and core 1:

./run \
  --cpus 2 \
  --wait-gdb \
  --eval-after 'i=0; while true; do taskset -c $i,$i /sched_getaffinity.out; i=$((! $i)); done' \
;

on another shell:

./run-gdb-user "$(./getvar userland_build_dir)/sched_getaffinity.out" main

Then, inside GDB:

(gdb) info threads
  Id   Target Id                    Frame
* 1    Thread 1 (CPU#0 [running]) main () at sched_getaffinity.c:30
  2    Thread 2 (CPU#1 [halted ]) native_safe_halt () at ./arch/x86/include/asm/irqflags.h:55
(gdb) c
(gdb) info threads
  Id   Target Id                    Frame
  1    Thread 1 (CPU#0 [halted ]) native_safe_halt () at ./arch/x86/include/asm/irqflags.h:55
* 2    Thread 2 (CPU#1 [running]) main () at sched_getaffinity.c:30
(gdb) c

and we observe that info threads shows the actual correct core on which the process was restricted to run by taskset!

We should also try it out with kernel modules: https://stackoverflow.com/questions/28347876/set-cpu-affinity-on-a-loadable-linux-kernel-module

TODO we then tried:

./run --cpus 2 --eval-after '/sched_getaffinity_threads.out'

and:

./run-gdb-user "$(./getvar userland_build_dir)/sched_getaffinity_threads.out"

to switch between two simultaneous live threads with different affinities, but it just didn’t break on our threads:

b main_thread_0

Bibliography:

We source the Linux kernel GDB scripts by default for lx-symbols, but they also contain some other goodies worth looking into.

Those scripts basically parse some in-kernel data structures to offer greater visibility with GDB.

All defined commands are prefixed by lx-, so to get a full list just try to tab complete that.

There aren’t as many as I’d like, and the ones that do exist are pretty self explanatory, but let’s give a few examples.

Show dmesg:

lx-dmesg

Show the Kernel command line parameters:

lx-cmdline

Dump the device tree to a fdtdump.dtb file in the current directory:

lx-fdtdump
pwd

List inserted kernel modules:

lx-lsmod

Sample output:

Address            Module   Size   Used by
0xffffff80006d0000 hello    16384  0

Bibliography:

List all processes:

lx-ps

Sample output:

0xffff88000ed08000 1 init
0xffff88000ed08ac0 2 kthreadd

The second and third fields are obviously PID and process name.

The first one is more interesting, and contains the address of the task_struct in memory.

This can be confirmed with:

p *((struct task_struct *)0xffff88000ed08000)

which contains the correct PID for all threads I’ve tried:

pid = 1,
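When checking many processes this way, the lx-ps output can be converted into GDB expressions mechanically. The following Python sketch does that for the sample lines above; the helper names are made up for this example, and the three column layout (address, PID, name) is the one described in the text.

```python
def parse_lx_ps_line(line):
    """Split one lx-ps output line into (task_struct address, PID, name)."""
    address, pid, name = line.split(None, 2)
    return int(address, 16), int(pid), name

def task_struct_expression(address):
    """Build a GDB command that prints the task_struct at the given address."""
    return 'p *(struct task_struct *){:#x}'.format(address)

for line in ['0xffff88000ed08000 1 init', '0xffff88000ed08ac0 2 kthreadd']:
    address, pid, name = parse_lx_ps_line(line)
    print(pid, name, task_struct_expression(address))
```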

TODO get the PC of the kthreads: https://stackoverflow.com/questions/26030910/find-program-counter-of-process-in-kernel Then we would be able to see where the threads are stopped in the code!

On ARM, I tried:

task_pt_regs((struct thread_info *)((struct task_struct)*0xffffffc00e8f8000))->uregs[ARM_pc]

but task_pt_regs is a #define and GDB cannot see defines without -ggdb3: https://stackoverflow.com/questions/2934006/how-do-i-print-a-defined-constant-in-gdb which are apparently not set?

Bibliography:

For when it breaks again, or you want to add a new feature!

./run --debug
./run-gdb --before '-ex "set remotetimeout 99999" -ex "set debug remote 1"' start_kernel

The GDB error Remote 'g' packet reply is too long means that the GDB server, e.g. in QEMU, sent more registers than the GDB client expected.

This can happen for the following reasons:

-

you set the architecture of the client wrong, often 32 vs 64 bit as mentioned at: https://stackoverflow.com/questions/4896316/gdb-remote-cross-debugging-fails-with-remote-g-packet-reply-is-too-long

-

there is a bug in the GDB server and the XML description does not match the number of registers actually sent

-

the GDB server does not send XML target descriptions and your GDB expects a different number of registers by default. E.g., gem5 d4b3e064adeeace3c3e7d106801f95c14637c12f does not send the XML files

The XML target description format is described a bit further at: https://stackoverflow.com/questions/46415059/how-to-observe-aarch64-system-registers-in-qemu/53043044#53043044
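The size mismatch behind this error can be illustrated with a toy check. In the remote protocol, the reply to the g packet carries all registers concatenated, encoded as two hex digits per byte. The Python sketch below compares such a payload with the layout a client expects; the function name and the register layouts are hypothetical and do not correspond to any real target description, and accepting shorter replies is an assumption of this sketch.

```python
def check_g_reply(reply_hex, register_sizes):
    """Compare a 'g' reply payload with the layout the client expects.

    reply_hex: reply payload, two hex digits per byte of register data.
    register_sizes: size in bytes of each register the client expects.
    """
    expected_digits = 2 * sum(register_sizes)
    if len(reply_hex) > expected_digits:
        return 'too long: got {} hex digits, expected at most {}'.format(
            len(reply_hex), expected_digits)
    return 'ok'

# Hypothetical layouts: the client expects 4 registers of 8 bytes each,
# while the server sends 5 of them.
client_layout = [8, 8, 8, 8]
server_reply = '00' * 8 * 5
print(check_g_reply(server_reply, client_layout))
```

Any of the three causes listed above ends up in this situation: the client computes its expected size from one register list, and the server fills the reply from another.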

KGDB is kernel dark magic that allows you to GDB the kernel on real hardware without any extra hardware support.

It is useless with QEMU since we already have full system visibility with -gdb. So the goal of this setup is just to prepare you for what to expect when you are in the trenches of real hardware.

KGDB is cheaper than JTAG (free) and easier to set up (all you need is serial), but with less visibility as it depends on the kernel working, so e.g.: dies on panic, does not see boot sequence.

First run the kernel with:

./run --kgdb

this passes the following options on the kernel CLI:

kgdbwait kgdboc=ttyS1,115200

kgdbwait tells the kernel to wait for KGDB to connect.
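As a sketch of how these two options fit together on the command line, the Python function below extracts them from a kernel command line string. The function name is hypothetical; it only splits the kgdboc value on commas and does not validate it.

```python
def parse_kgdb_options(cmdline):
    """Extract the KGDB-related options from a kernel command line string."""
    result = {'kgdbwait': False, 'kgdboc': None}
    for token in cmdline.split():
        if token == 'kgdbwait':
            result['kgdbwait'] = True
        elif token.startswith('kgdboc='):
            # Comma separated, here a serial device followed by a baud rate.
            result['kgdboc'] = token[len('kgdboc='):].split(',')
    return result

print(parse_kgdb_options('kgdbwait kgdboc=ttyS1,115200'))
```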

So the kernel sets things up enough for KGDB to start working, and then boot pauses waiting for connection:

<6>[ 4.866050] Serial: 8250/16550 driver, 4 ports, IRQ sharing disabled <6>[ 4.893205] 00:05: ttyS0 at I/O 0x3f8 (irq = 4, base_baud = 115200) is a 16550A <6>[ 4.916271] 00:06: ttyS1 at I/O 0x2f8 (irq = 3, base_baud = 115200) is a 16550A <6>[ 4.987771] KGDB: Registered I/O driver kgdboc <2>[ 4.996053] KGDB: Waiting for connection from remote gdb... Entering kdb (current=0x(____ptrval____), pid 1) on processor 0 due to Keyboard Entry [0]kdb>

KGDB expects the connection at ttyS1, our second serial port after ttyS0 which contains the terminal.

The last line is the KDB prompt, and is covered at: KDB. Typing now shows nothing because that prompt is expecting input from ttyS1.

Instead, we connect to the serial port ttyS1 with GDB:

./run-gdb --kgdb --no-continue

Once GDB connects, it is left inside the function kgdb_breakpoint.

So now we can set breakpoints and continue as usual.

For example, in GDB:

continue

Then in QEMU:

/count.sh &
/kgdb.sh

rootfs_overlay/kgdb.sh pauses the kernel for KGDB, and gives control back to GDB.

And now in GDB we do the usual:

break __x64_sys_write
continue
continue
continue
continue

And now you can count from KGDB!

If you do: break __x64_sys_write immediately after ./run-gdb --kgdb, it fails with KGDB: BP remove failed: <address>. I think this is because it would break too early on the boot sequence, and KGDB is not yet ready.

See also:

TODO: we would need a second serial for KGDB to work, but it is not currently supported on arm and aarch64 with -M virt that we use: https://unix.stackexchange.com/questions/479085/can-qemu-m-virt-on-arm-aarch64-have-multiple-serial-ttys-like-such-as-pl011-t/479340#479340

One possible workaround for this would be to use KDB ARM.

Main more generic question: https://stackoverflow.com/questions/14155577/how-to-use-kgdb-on-arm

Just works as you would expect:

insmod /timer.ko
/kgdb.sh

In GDB:

break lkmc_timer_callback
continue
continue
continue

and you now control the count.

KDB is a way to use KGDB directly in your main console, without GDB.

Advantage over KGDB: you can do everything in one serial. This can actually be important if you only have one serial for both the shell and the debugger.

Disadvantage: not as much functionality as GDB, especially when you use Python scripts. Notably, TODO confirm: you can’t see the kernel source code and line step as from GDB, since the kernel source is not available on guest (ah, if only debugging information supported full source, or if the kernel had a crazy mechanism to embed it).

Run QEMU as:

./run --kdb

This passes kgdboc=ttyS0 to the Linux CLI, therefore using our main console. Then in QEMU:

[0]kdb> go

And now the kdb> prompt is responsive because it is listening to the main console.

After boot finishes, run the usual:

/count.sh &
/kgdb.sh

And you are back in KDB. Now you can count with:

[0]kdb> bp __x64_sys_write
[0]kdb> go
[0]kdb> go
[0]kdb> go
[0]kdb> go

And you will break whenever __x64_sys_write is hit.

You can see further commands with:

[0]kdb> help

The other KDB commands allow you to step instructions, view memory, registers and some higher level kernel runtime data similar to the superior GDB Python scripts.

You can also use KDB directly from the graphic window with:

./run --graphic --kdb

This setup could be used to debug the kernel on machines without serial, such as modern desktops.

This works because --graphic adds kbd (which stands for KeyBoarD!) to kgdboc.

TODO neither arm nor aarch64 is working as of 1cd1e58b023791606498ca509256cc48e95e4f5b + 1.

arm seems to place and hit the breakpoint correctly, but no matter how many go commands I do, the count.sh stdout simply does not show.

aarch64 seems to place the breakpoint correctly, but after the first go the kernel oopses with warning:

WARNING: CPU: 0 PID: 46 at /root/linux-kernel-module-cheat/submodules/linux/kernel/smp.c:416 smp_call_function_many+0xdc/0x358

and stack trace:

smp_call_function_many+0xdc/0x358
kick_all_cpus_sync+0x30/0x38
kgdb_flush_swbreak_addr+0x3c/0x48
dbg_deactivate_sw_breakpoints+0x7c/0xb8
kgdb_cpu_enter+0x284/0x6a8
kgdb_handle_exception+0x138/0x240
kgdb_brk_fn+0x2c/0x40
brk_handler+0x7c/0xc8
do_debug_exception+0xa4/0x1c0
el1_dbg+0x18/0x78
__arm64_sys_write+0x0/0x30
el0_svc_handler+0x74/0x90
el0_svc+0x8/0xc

My theory is that every serious ARM developer has JTAG, and no one ever tests this, and the kernel code is just broken.

Step debug userland processes to understand how they are talking to the kernel.

First build gdbserver into the root filesystem:

./build-buildroot --config 'BR2_PACKAGE_GDB=y'

Then on guest, to debug userland/myinsmod.c:

/gdbserver.sh /myinsmod.out /hello.ko

Source: rootfs_overlay/gdbserver.sh.

And on host:

./run-gdbserver myinsmod

or alternatively with the full path:

./run-gdbserver "$(./getvar userland_build_dir)/myinsmod.out"

Analogous to GDB step debug userland processes:

/gdbserver.sh ls

on host you need:

./run-gdbserver "$(./getvar buildroot_build_build_dir)"/busybox-*/busybox ls_main

Our setup gives you the rare opportunity to step debug libc and other system libraries.

For example in the guest:

/gdbserver.sh /count.out

Then on host:

./run-gdbserver count

and inside GDB:

break sleep
continue

And you are now left inside the sleep function of our default libc implementation uclibc libc/unistd/sleep.c!

You can also step into the sleep call:

step

This is made possible by the GDB command that we use by default:

set sysroot ${common_buildroot_build_dir}/staging

which automatically finds unstripped shared libraries on the host for us.

TODO: try to step debug the dynamic loader. Would be even easier if starti is available: https://stackoverflow.com/questions/10483544/stopping-at-the-first-machine-code-instruction-in-gdb

The portability of the kernel and toolchains is amazing: change an option and most things magically work on completely different hardware.

To use arm instead of x86 for example:

./build-buildroot --arch arm
./run --arch arm

Debug:

./run --arch arm --wait-gdb
# On another terminal.
./run-gdb --arch arm

We also have one letter shorthand names for the architectures and --arch option:

# aarch64
./run -a A
# arm
./run -a a
# x86_64
./run -a x

Known quirks of the supported architectures are documented in this section.

This example illustrates how reading from the x86 control registers with mov crX, rax can only be done from kernel land on ring0.

From kernel land:

insmod ring0.ko

works and outputs the registers, for example:

cr0 = 0xFFFF880080050033
cr2 = 0xFFFFFFFF006A0008
cr3 = 0xFFFFF0DCDC000

However if we try to do it from userland:

/ring0.out

stdout gives:

Segmentation fault

and dmesg outputs:

traps: ring0.out[55] general protection ip:40054c sp:7fffffffec20 error:0 in ring0.out[400000+1000]

Sources:

In both cases, we attempt to run the exact same code which is shared on the ring0.h header file.

Bibliography:

TODO Can you run arm executables in the aarch64 guest? https://stackoverflow.com/questions/22460589/armv8-running-legacy-32-bit-applications-on-64-bit-os/51466709#51466709

I’ve tried:

./run-toolchain --arch aarch64 gcc -- -static ~/test/hello_world.c -o "$(./getvar p9_dir)/a.out"
./run --arch aarch64 --eval-after '/mnt/9p/data/a.out'

but it fails with:

a.out: line 1: syntax error: unexpected word (expecting ")")

We used to "support" it until f8c0502bb2680f2dbe7c1f3d7958f60265347005 (it booted) but dropped it since no one was testing it often.

If you want to revive and maintain it, send a pull request.

It should not be too hard to port this repository to any architecture that Buildroot supports. Pull requests are welcome.

When the Linux kernel finishes booting, it runs an executable as the first and only userland process. This executable is called the init program.

The init process is then responsible for setting up the entire userland (or destroying everything when you want to have fun).

This typically means reading some configuration files (e.g. /etc/inittab) and forking a bunch of userland executables based on those files, including the very interactive shell that we end up on.

systemd provides a "popular" init implementation for desktop distros as of 2017.

BusyBox provides its own minimalistic init implementation which Buildroot, and therefore this repo, uses by default.

The init program can be either an executable shell text file, or a compiled ELF file. It becomes easy to accept this once you see that the exec system call handles both cases equally: https://unix.stackexchange.com/questions/174062/can-the-init-process-be-a-shell-script-in-linux/395375#395375
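To get a feel for the two cases, here is a minimal sketch that looks at the first bytes of a file: a script starts with the characters #!, while an ELF file starts with the byte 0x7f followed by ELF. The helper name init_kind is hypothetical and not part of this repository, and the real decision is of course made by the kernel at exec time, not by a shell function.

```sh
# Hypothetical helper: report which of the two cases a candidate init file falls into.
init_kind() {
    # Hex dump of the first four bytes, with all whitespace removed.
    magic=$(head -c 4 "$1" | od -An -tx1 | tr -d ' \n')
    case "$magic" in
        7f454c46) echo elf ;;     # 0x7f 'E' 'L' 'F'
        2321*)    echo script ;;  # '#' '!'
        *)        echo unknown ;;
    esac
}
```

For example, init_kind rootfs_overlay/gdbserver.sh should report script if that file starts with a shebang line.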

The init executable is searched for in a list of paths in the root filesystem, including /init, /sbin/init and a few others. For more details see: Path to init

To have more control over the system, you can replace BusyBox’s init with your own.

The most direct way to replace init with our own is to just use the init= command line parameter directly:

./run --kernel-cli 'init=/count.sh'

This just counts every second forever and does not give you a shell.

This method is not very flexible however, as it is hard to reliably pass multiple commands and command line arguments to the init with it, as explained at: Init environment.

For this reason, we have created a more robust helper method with the --eval option:

./run --eval 'echo "asdf qwer";insmod /hello.ko;/poweroff.out'

The --eval option replaces init with a shell script that just evals the given command.

It is basically a shortcut for:

./run --kernel-cli 'init=/eval_base64.sh - lkmc_eval="insmod /hello.ko;/poweroff.out"'

Source: rootfs_overlay/eval_base64.sh.

This allows quoting and newlines by base64 encoding on host, and decoding on guest, see: Kernel command line parameters escaping.
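The idea behind the encoding can be sketched as a round trip on a single machine. This is only an illustration that assumes a base64 utility with a -d decode option is available. It is not the actual content of eval_base64.sh, and the variable names are made up.

```sh
# The command that we would like the guest to eval: note the quotes, the
# semicolon and the newline, all of which are awkward on a kernel command line.
cmd='echo "asdf qwer"
insmod /hello.ko;/poweroff.out'

# Host side: encode, and strip the line wraps that base64 may insert.
encoded=$(printf '%s' "$cmd" | base64 | tr -d '\n')

# Guest side: decode. A real init would then eval the result.
decoded=$(printf '%s' "$encoded" | base64 -d)

echo "$encoded"
```

The encoded form only contains letters, digits, +, / and =, so it survives the trip through the kernel command line without any quoting.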

--eval also automatically chooses between init= and rdinit= for you, see: Path to init

--eval replaces BusyBox' init completely, which makes things more minimal, but also has the following consequences:

- /etc/fstab mounts are not done, notably /proc and /sys, test it out with:

./run --eval 'echo asdf;ls /proc;ls /sys;echo qwer'

- no shell is launched at the end of boot for you to interact with the system. You could explicitly add a sh at the end of your commands however:

./run --eval 'echo hello;sh'

The best way to overcome those limitations is to use: Run command at the end of BusyBox init

If the script is large, you can add it to a gitignored file and pass that to --eval as in:

echo '
insmod /hello.ko
/poweroff.out
' > gitignore.sh
./run --eval "$(cat gitignore.sh)"

or add it as a file to the guest root filesystem and rebuild:

echo '#!/bin/sh
insmod /hello.ko
/poweroff.out
' > rootfs_overlay/gitignore.sh
chmod +x rootfs_overlay/gitignore.sh
./build-buildroot
./run --kernel-cli 'init=/gitignore.sh'

Remember that if your init returns, the kernel will panic. There are just two non-panic possibilities:

- run forever in a loop or long sleep

- poweroff the machine

Just using BusyBox' poweroff at the end of the init does not work and the kernel panics:

./run --eval poweroff

because BusyBox' poweroff tries to do some fancy stuff like killing init, likely to allow userland to shut down nicely.

But this fails when we are init itself!

poweroff works more brutally and effectively if you add -f:

./run --eval 'poweroff -f'

but why not just use our minimal /poweroff.out and be done with it?

./run --eval '/poweroff.out'

Source: userland/poweroff.c

This also illustrates how to shutdown the computer from C: https://stackoverflow.com/questions/28812514/how-to-shutdown-linux-using-c-or-qt-without-call-to-system

I dare you to guess what this does:

./run --eval '/sleep_forever.out'

Source: userland/sleep_forever.c

This executable is a convenient simple init that does not panic and sleeps instead.

Get a reasonable answer to "how long does boot take?":

./run --eval-after '/time_boot.out'

Dmesg contains a message of type:

[ 2.188242] time_boot.c

which tells us that boot took 2.188242 seconds.
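If you want to extract that number from a script, a filter along the following lines should do. This is a sketch that assumes the message has exactly the format shown above, and boot_seconds is a hypothetical name, not something that this repository provides.

```sh
# Hypothetical helper: read dmesg output on stdin and print the timestamp,
# in seconds, of the line logged by time_boot.c.
boot_seconds() {
    sed -n 's/^\[ *\([0-9.]*\)] time_boot\.c.*/\1/p'
}

# Usage on the guest, assuming dmesg is available there:
#   dmesg | boot_seconds
```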

Use the --eval-after option if you rely on something that BusyBox' init sets up for you, like the /etc/fstab mounts:

./run --eval-after 'echo asdf;ls /proc;ls /sys;echo qwer'

After the commands run, you are left on an interactive shell.

The above command is basically equivalent to:

./run --kernel-cli-after-dash 'lkmc_eval="insmod /hello.ko;poweroff.out;"'

where the lkmc_eval option gets evaled by our default S98 startup script.

Except that --eval-after is smarter and uses base64 encoding.

Alternatively, you can also add the commands to a new init.d entry so that they run at the end of the BusyBox init:

cp rootfs_overlay/etc/init.d/S98 rootfs_overlay/etc/init.d/S99.gitignore
vim rootfs_overlay/etc/init.d/S99.gitignore
./build-buildroot
./run

and they will be run automatically before the login prompt.

Scripts under /etc/init.d are run by /etc/init.d/rcS, which gets called by the line ::sysinit:/etc/init.d/rcS in /etc/inittab.
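The ordering that this gives can be illustrated with a small stand-in for such an rcS loop. This is a sketch and not the rcS shipped in the guest: the function name run_initd is hypothetical, passing start as the first argument is an assumption, and the directory is a parameter so that the loop can be tried on the host.

```sh
# Hypothetical stand-in for an rcS-style loop over init.d entries.
run_initd() {
    # The glob expands in sorted order, so S98 runs before S99.gitignore.
    for script in "$1"/S??*; do
        # Skip the unexpanded pattern when nothing matches.
        [ -f "$script" ] || continue
        # Assumption: each entry is a shell script that takes "start".
        sh "$script" start
    done
}
```

Files in the directory that do not match the S??* pattern, such as rcS itself, are not run by the loop.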

The init is selected at:

- initrd or initramfs system: /init, a custom one can be set with the rdinit= kernel command line parameter

- otherwise: default is /sbin/init, followed by some other paths, a custom one can be set with init=

The kernel parses parameters from the kernel command line up to "-"; if it doesn’t recognize a parameter and it doesn’t contain a '.', the parameter gets passed to init: parameters with '=' go into init’s environment, others are passed as command line arguments to init. Everything after "-" is passed as an argument to init.

And you can try it out with:

./run --kernel-cli 'init=/init_env_poweroff.out - asdf=qwer zxcv'

Output:

args:
/init_env_poweroff.out
-
zxcv
env:
HOME=/
TERM=linux
asdf=qwer

Source: userland/init_env_poweroff.c.
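The rule for a parameter that the kernel does not recognize can be sketched as a small shell function. This is an illustration of the description above, not kernel code: classify_param is a made-up name, and we assume that only a dot in the part before the first = counts.

```sh
# Hypothetical helper: where does an unrecognized kernel command line
# parameter end up?
classify_param() {
    name=${1%%=*}   # text before the first '=', or the whole word if there is none
    case "$name" in
        *.*) echo not-passed; return ;;  # looks like subsystem.somevalue
    esac
    case "$1" in
        *=*) echo env ;;  # goes into the environment of init
        *)   echo arg ;;  # becomes a command line argument of init
    esac
}
```

With this, classify_param asdf=qwer reports env and classify_param zxcv reports arg, which matches the output of init_env_poweroff.out shown above.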

The annoying dash - gets passed as a parameter to init, which makes it impossible to use this method for most non custom executables.

Arguments with dots that come after - are still treated specially (of the form subsystem.somevalue) and disappear from args, e.g.:

./run --kernel-cli 'init=/init_env_poweroff.out - a.b ab'

outputs:

args
/init_env_poweroff.out
-
ab

so see how a.b is gone.

The simple workaround is to just create a shell script that does it, e.g. as we’ve done at: rootfs_overlay/gem5_exit.sh.

Wait, where do HOME and TERM come from? (greps the kernel). Ah, OK, the kernel sets those by default: https://github.com/torvalds/linux/blob/94710cac0ef4ee177a63b5227664b38c95bbf703/init/main.c#L173

const char *envp_init[MAX_INIT_ENVS+2] = {
    "HOME=/",
    "TERM=linux",
    NULL,
};

On top of the Linux kernel, the BusyBox /bin/sh shell will also define other variables.

We can explore the shenanigans that the shell adds on top of the Linux kernel with:

./run --kernel-cli 'init=/bin/sh'

From there we observe that:

env

gives:

SHLVL=1
HOME=/
TERM=linux
PWD=/

therefore adding SHLVL and PWD to the default kernel exported variables.

Furthermore, to increase confusion, if you list all non-exported shell variables https://askubuntu.com/questions/275965/how-to-list-all-variables-names-and-their-current-values with:

set

then it shows more variables, notably:

PATH='/sbin:/usr/sbin:/bin:/usr/bin'
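The difference between what env and set show comes down to whether a variable is exported or not. Here is a minimal sketch that can be run in any POSIX shell, with made-up variable names:

```sh
lkmc_demo_plain=1             # shell variable only: listed by set, not by env
export lkmc_demo_exported=2   # also in the environment: listed by both

# A child process only inherits the exported variable.
child_view=$(sh -c 'echo "${lkmc_demo_plain-unset} ${lkmc_demo_exported-unset}"')
echo "$child_view"
```

The child prints unset for the plain variable and 2 for the exported one.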

Finally, login shells will source some default files, notably:

/etc/profile
/root/.profile

We currently control /root/.profile at rootfs_overlay/root/.profile, and use the default BusyBox /etc/profile.

The shell knows that it is a login shell if the first character of argv[0] is -, see also: https://stackoverflow.com/questions/2050961/is-argv0-name-of-executable-an-accepted-standard-or-just-a-common-conventi/42291142#42291142

When we use just init=/bin/sh, the Linux kernel sets argv[0] to /bin/sh, which does not start with -.

However, if you use ::respawn:-/bin/sh in the inittab described at TTY, BusyBox' init sets the first character of argv[0] to -, and so does getty. This can be observed with:

cat /proc/$$/cmdline
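The check itself is easy to sketch in shell. The function name is_login_argv0 is hypothetical, and the snippet assumes a mounted /proc, which as noted earlier is not the case under a plain --eval.

```sh
# Hypothetical helper: does this argv[0] mark a login shell?
is_login_argv0() {
    case "$1" in
        -*) return 0 ;;
        *)  return 1 ;;
    esac
}

# argv[0] of the current shell is the first NUL terminated field of cmdline.
if [ -r "/proc/$$/cmdline" ]; then
    argv0=$(tr '\0' '\n' < "/proc/$$/cmdline" | head -n 1)
    if is_login_argv0 "$argv0"; then
        echo "login shell: $argv0"
    else
        echo "non-login shell: $argv0"
    fi
fi
```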