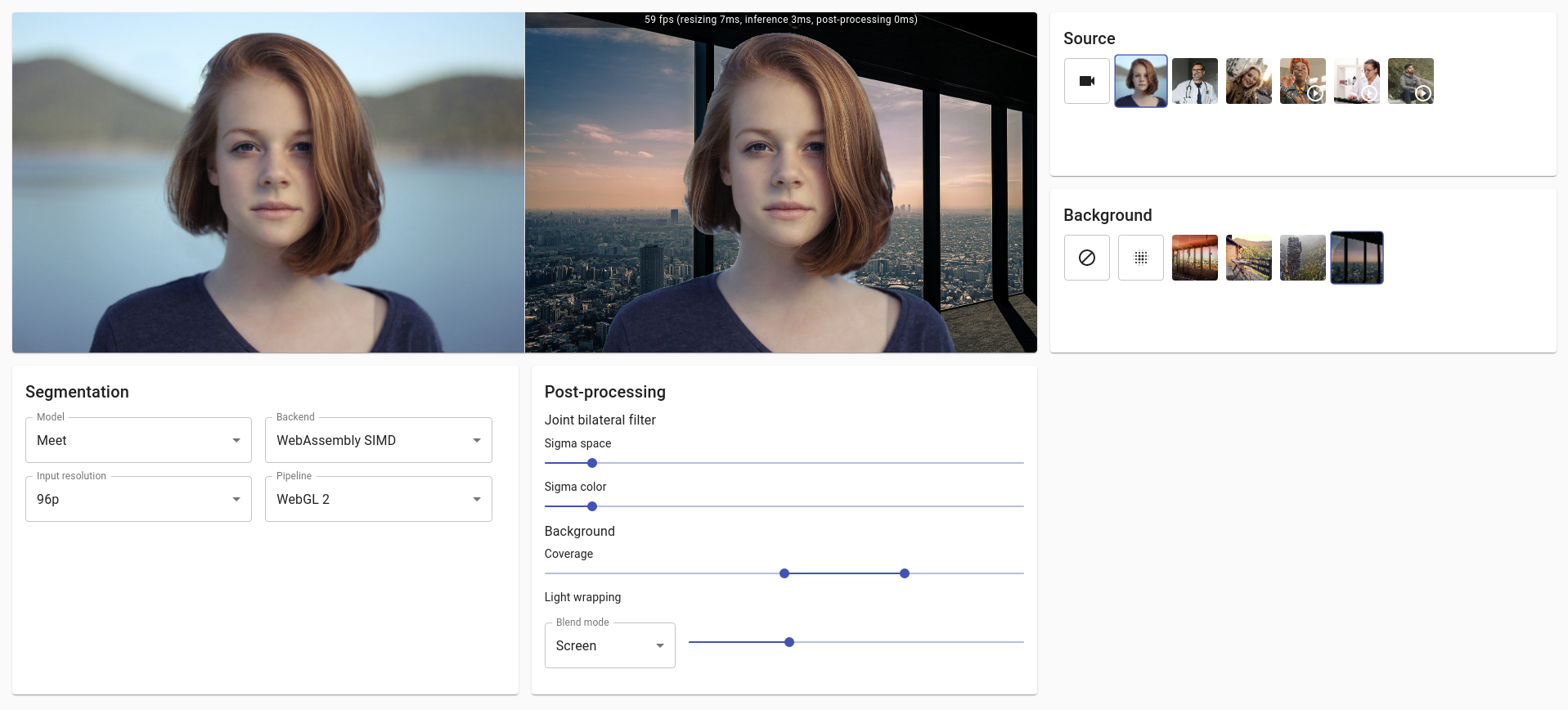

Demo on adding virtual background to a live video stream in the browser.

- Implementation details

- Performance

- Possible improvements

- Related work

- Running locally

- Building TensorFlow Lite tool

In this demo you can switch between three pre-trained ML segmentation models: BodyPix, Meet segmentation and ML Kit Selfie segmentation.

The drawing utils provided in BodyPix are not optimized for the simple background image use case of this demo. To get a higher framerate, I have used neither the toMask nor the drawMask method from the API.

The drawBokehEffect method from the BodyPix API is not used. Instead, the CanvasRenderingContext2D.filter property is configured with blur, and CanvasRenderingContext2D.globalCompositeOperation is set up to blend the different layers according to the segmentation mask.
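The layering described above can be sketched as follows. This is a minimal illustration, not the demo's actual code: the `Ctx2D` interface, the `drawBlurredBackground` name and the `blurPx` parameter are hypothetical, and the sketch assumes the mask is an image whose alpha channel is opaque where a person was detected.

```typescript
// Minimal subset of CanvasRenderingContext2D used by this sketch, declared
// locally so the example type-checks without DOM typings.
interface Ctx2D {
  filter: string;
  globalCompositeOperation: string;
  drawImage(image: unknown, dx: number, dy: number): void;
}

// Hypothetical helper: keeps the person sharp and blurs everything else.
function drawBlurredBackground(
  ctx: Ctx2D,
  frame: unknown,
  personMask: unknown,
  blurPx = 8
): void {
  // 1. Replace the canvas content with the mask (alpha = person).
  ctx.globalCompositeOperation = "copy";
  ctx.filter = "none";
  ctx.drawImage(personMask, 0, 0);

  // 2. Keep the frame only where the mask is opaque.
  ctx.globalCompositeOperation = "source-in";
  ctx.drawImage(frame, 0, 0);

  // 3. Draw the blurred frame behind the person layer.
  ctx.globalCompositeOperation = "destination-over";
  ctx.filter = `blur(${blurPx}px)`;
  ctx.drawImage(frame, 0, 0);

  // Restore defaults for whatever draws next.
  ctx.filter = "none";
  ctx.globalCompositeOperation = "source-over";
}
```

In a browser, `ctx` would be the 2D context of the output canvas, `frame` the video element and `personMask` a canvas holding the mask.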

The result reaches a reasonable framerate on a laptop but is not really usable on mobile (see Performance for more details). On both devices, the segmentation lacks precision compared to the Meet segmentation model.

Note: BodyPix relies on the default TensorFlow.js backend for your device (usually webgl). The WASM backend seems to be slower for this model, at least on a MacBook Pro.

The Meet segmentation model is only available as a TensorFlow Lite model file. A few approaches to convert it and use it with TensorFlow.js are discussed in this issue, but I decided to try implementing something closer to Google's original approach described in this post. Hence the demo relies on a small WebAssembly tool built on top of TFLite along with the XNNPACK delegate and SIMD support.

Note: The Meet segmentation model card was initially released under the Apache 2.0 license (read more here and here) but seems to have been switched to Google Terms of Service since Jan 21, 2021. Not sure what it means for this demo. Here is a copy of the model card matching the model files used in this demo.

You can find the source of the TFLite inference tool in the tflite directory of this repository. Instructions to build TFLite using Docker are described in a dedicated section: Building TensorFlow Lite tool.

- The Bazel WORKSPACE configuration is inspired by the MediaPipe repository.

- The Emscripten toolchain for Bazel was set up following the Emsdk repository instructions and changed to match the XNNPACK build config.

- TensorFlow source is patched to match Emscripten toolchain and WASM CPU.

- C++ functions are called directly from JavaScript to achieve the best performance.

- Memory is accessed directly from JavaScript through pointer offsets to exchange image data with WASM.
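Exchanging data through pointer offsets can be sketched as below. This is only an illustration under stated assumptions: a plain `ArrayBuffer` stands in for the WASM linear memory (with Emscripten this would be the buffer behind a view such as `HEAPF32`), and `inputPtr`, `writeFloats` and `viewFloats` are hypothetical names, not functions exported by the demo's tool.

```typescript
// Stand-in for the module's linear memory (assumption for illustration).
const wasmMemory = new ArrayBuffer(64 * 1024);
const heapF32 = new Float32Array(wasmMemory);

// Hypothetical byte offset, as an exported C++ function might return it.
const inputPtr = 1024;

// Copies values into the heap at a byte pointer. A Float32 element is
// 4 bytes wide, so the element index is the byte offset divided by 4.
function writeFloats(
  heap: Float32Array,
  bytePtr: number,
  values: ArrayLike<number>
): void {
  heap.set(values, bytePtr >> 2);
}

// Returns a view (not a copy) on `length` floats starting at a byte pointer.
function viewFloats(
  heap: Float32Array,
  bytePtr: number,
  length: number
): Float32Array {
  const start = bytePtr >> 2;
  return heap.subarray(start, start + length);
}

// Usage: write three input values, then read them back without copying.
writeFloats(heapF32, inputPtr, [0.1, 0.5, 0.9]);
const inputView = viewFloats(heapF32, inputPtr, 3);
```

Because `subarray` shares the underlying buffer, reading model output this way avoids an extra copy per frame.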

The Canvas 2D + CPU rendering pipeline is pretty much the same as for BodyPix. It relies on Canvas compositing properties to blend rendering layers according to the segmentation mask.

Interactions with the TFLite inference tool are executed on the CPU: the model input is converted from UInt8 to Float32, and softmax is applied to the model output.
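These two CPU steps can be sketched as below. This is not the demo's code: the function names are hypothetical, scaling the input to the 0..1 range is an assumption about what the model expects, and the output layout (two interleaved values per pixel, background first and person second) is also an assumption.

```typescript
// Converts RGBA bytes into RGB floats, dropping the alpha channel.
// Assumption: the model expects values scaled to the 0..1 range.
function rgbaToRgbFloat(rgba: Uint8ClampedArray, out: Float32Array): void {
  const pixelCount = rgba.length / 4;
  for (let i = 0; i < pixelCount; i++) {
    out[i * 3] = rgba[i * 4] / 255;
    out[i * 3 + 1] = rgba[i * 4 + 1] / 255;
    out[i * 3 + 2] = rgba[i * 4 + 2] / 255;
  }
}

// Two-class softmax per pixel, keeping only the person probability.
// Assumption: interleaved [background, person] values for each pixel.
function personProbability(logits: Float32Array, out: Float32Array): void {
  const pixelCount = logits.length / 2;
  for (let i = 0; i < pixelCount; i++) {
    const background = logits[i * 2];
    const person = logits[i * 2 + 1];
    // Subtracting the max keeps exp() from overflowing.
    const shift = Math.max(background, person);
    const expBackground = Math.exp(background - shift);
    const expPerson = Math.exp(person - shift);
    out[i] = expPerson / (expBackground + expPerson);
  }
}
```

Writing into preallocated `out` arrays avoids allocating new buffers on every frame.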

The WebGL 2 rendering pipeline relies entirely on webgl2 canvas context and GLSL shaders for:

- Resizing inputs to fit the segmentation model (there are still CPU operations to copy from RGBA Uint8Array to RGB Float32Array in TFLite WASM memory).

- Softmax on segmentation model output to get the probability of each pixel to be a person.

- Joint bilateral filter to smooth the segmentation mask and to preserve edges from the original input frame (implementation based on MediaPipe repository).

- Blending background image with light wrapping.

- Original input frame background blur. Great articles here and here.
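To illustrate the joint bilateral filter step, here is a CPU reference sketch. It is not the demo's GLSL shader or MediaPipe's implementation: the function and parameter names are hypothetical, and using a single-channel (grayscale) guide instead of the RGB frame is a simplification. Each mask value becomes a weighted average of its neighbours, where a neighbour counts less if it is far away or if the guide image differs there, which is what preserves edges.

```typescript
// Smooths `mask` while preserving the edges found in `guide`.
// Both arrays hold width * height values in row-major order.
function jointBilateralFilter(
  mask: Float32Array,
  guide: Float32Array,
  width: number,
  height: number,
  radius: number,
  sigmaSpace: number,
  sigmaColor: number
): Float32Array {
  const out = new Float32Array(mask.length);
  const spaceDenominator = 2 * sigmaSpace * sigmaSpace;
  const colorDenominator = 2 * sigmaColor * sigmaColor;
  for (let y = 0; y < height; y++) {
    for (let x = 0; x < width; x++) {
      const center = guide[y * width + x];
      let weightedSum = 0;
      let weightTotal = 0;
      for (let dy = -radius; dy <= radius; dy++) {
        const ny = y + dy;
        if (ny < 0 || ny >= height) continue;
        for (let dx = -radius; dx <= radius; dx++) {
          const nx = x + dx;
          if (nx < 0 || nx >= width) continue;
          const index = ny * width + nx;
          // Spatial weight: closer neighbours count more.
          const spatial = Math.exp(-(dx * dx + dy * dy) / spaceDenominator);
          // Range weight: neighbours that look alike in the guide count more.
          const difference = guide[index] - center;
          const range = Math.exp(-(difference * difference) / colorDenominator);
          const weight = spatial * range;
          weightedSum += weight * mask[index];
          weightTotal += weight;
        }
      }
      // The center pixel always contributes weight 1, so weightTotal > 0.
      out[y * width + x] = weightedSum / weightTotal;
    }
  }
  return out;
}
```

The cost grows with the square of `radius` and calls `exp` twice per neighbour, which is why the shader version of this filter is a natural optimization target.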

Thanks to @RemarkableGuy for pointing out this model.

The Selfie segmentation model's architecture is very close to that of Meet segmentation, and they both seem to be generated from the same Keras model (see this issue for more details). It is released under Apache 2.0 and you can find in this repo a copy of the model card matching the model used in this demo (here is the original current model card). The model was extracted from its official artifact.

Unlike what is described in the model card, the output of the model is a single channel that gives a float value for the segmentation mask. Besides that, inference runs through the exact same pipeline as Meet segmentation. However, the model does not run as fast as Meet segmentation because of its higher input resolution (see Performance for more details), even though it still offers better quality segmentation than BodyPix.

Here is the performance observed for the whole rendering pipelines, including inference and post-processing, when using the device camera on a Pixel 3 smartphone (Chrome).

| Model | Input resolution | Backend | Pipeline | FPS |
|---|---|---|---|---|
| BodyPix | 640x360 | WebGL | Canvas 2D + CPU | 11 |
| ML Kit | 256x256 | WebAssembly | Canvas 2D + CPU | 9 |
| ML Kit | 256x256 | WebAssembly | WebGL 2 | 9 |
| ML Kit | 256x256 | WebAssembly SIMD | Canvas 2D + CPU | 17 |
| ML Kit | 256x256 | WebAssembly SIMD | WebGL 2 | 19 |
| Meet | 256x144 | WebAssembly | Canvas 2D + CPU | 14 |
| Meet | 256x144 | WebAssembly | WebGL 2 | 16 |
| Meet | 256x144 | WebAssembly SIMD | Canvas 2D + CPU | 26 |
| Meet | 256x144 | WebAssembly SIMD | WebGL 2 | 31 |
| Meet | 160x96 | WebAssembly | Canvas 2D + CPU | 29 |
| Meet | 160x96 | WebAssembly | WebGL 2 | 35 |
| Meet | 160x96 | WebAssembly SIMD | Canvas 2D + CPU | 48 |
| Meet | 160x96 | WebAssembly SIMD | WebGL 2 | 60 |

- Rely on alpha channel to save texture fetches from the segmentation mask.

- Blur the background image outside of the rendering loop and use it for light wrapping instead of the original background image. This should produce better rendering results for large light wrapping masks.

- Optimize joint bilateral filter shader to prevent unnecessary variables, calculations and costly functions like exp.

- Try separable approximation for joint bilateral filter.

- Compute everything on lower source resolution (scaling down at the beginning of the pipeline).

- Build TFLite and XNNPACK with multithreading support. A few configuration examples are in the TensorFlow.js WASM backend.

- Detect WASM features to automatically load the right TFLite WASM runtime. Inspiration could be taken from the TensorFlow.js WASM backend, which is based on GoogleChromeLabs/wasm-feature-detect.

- Experiment with DeepLabv3+ and maybe retrain MobileNetv3-small model directly.

You can learn more about a pre-trained TensorFlow.js model in the BodyPix repository.

Here is a technical overview of background features in Google Meet which relies on:

- MediaPipe

- WebAssembly

- WebAssembly SIMD

- WebGL

- XNNPACK

- TFLite

- Custom segmentation ML models from Google

- Custom rendering effects through OpenGL shaders from Google

In the project directory, you can run:

Runs the app in the development mode.

Open http://localhost:3000 to view it in the browser.

The page will reload if you make edits.

You will also see any lint errors in the console.

Launches the test runner in the interactive watch mode.

See the section about running tests for more information.

Builds the app for production to the build folder.

It correctly bundles React in production mode and optimizes the build for the best performance.

The build is minified and the filenames include the hashes.

Your app is ready to be deployed!

See the section about deployment for more information.

Docker is required to build the TensorFlow Lite inference tool locally.

Builds WASM functions that can infer Meet and ML Kit segmentation models. The TFLite tool is built both with and without SIMD support.