This repo contains the tools for training, running, and evaluating detectors and classifiers for images collected from motion-triggered wildlife cameras. The core functionality provided is:

- Training and running models, particularly MegaDetector, an object detection model that does a pretty good job finding animals, people, and vehicles (and therefore is pretty good at finding empty images) in a variety of terrestrial ecosystems

- Data parsing from frequently-used camera trap metadata formats into a common format

- A batch processing API that runs MegaDetector on large image collections, to accelerate population surveys

- A real-time API that runs MegaDetector (and some species classifiers) synchronously, primarily to support anti-poaching scenarios (e.g. see this blog post describing how this API supports Wildlife Protection Solutions)

This repo is maintained by folks at Ecologize who like looking at pictures of animals. We want to support conservation, of course, but we also really like looking at pictures of animals.

The main model that we train and run using tools in this repo is MegaDetector, an object detection model that identifies animals, people, and vehicles in camera trap images. This model is trained on several hundred thousand bounding boxes from a variety of ecosystems. Lots more information – including download links and instructions for running the model – is available on the MegaDetector page.

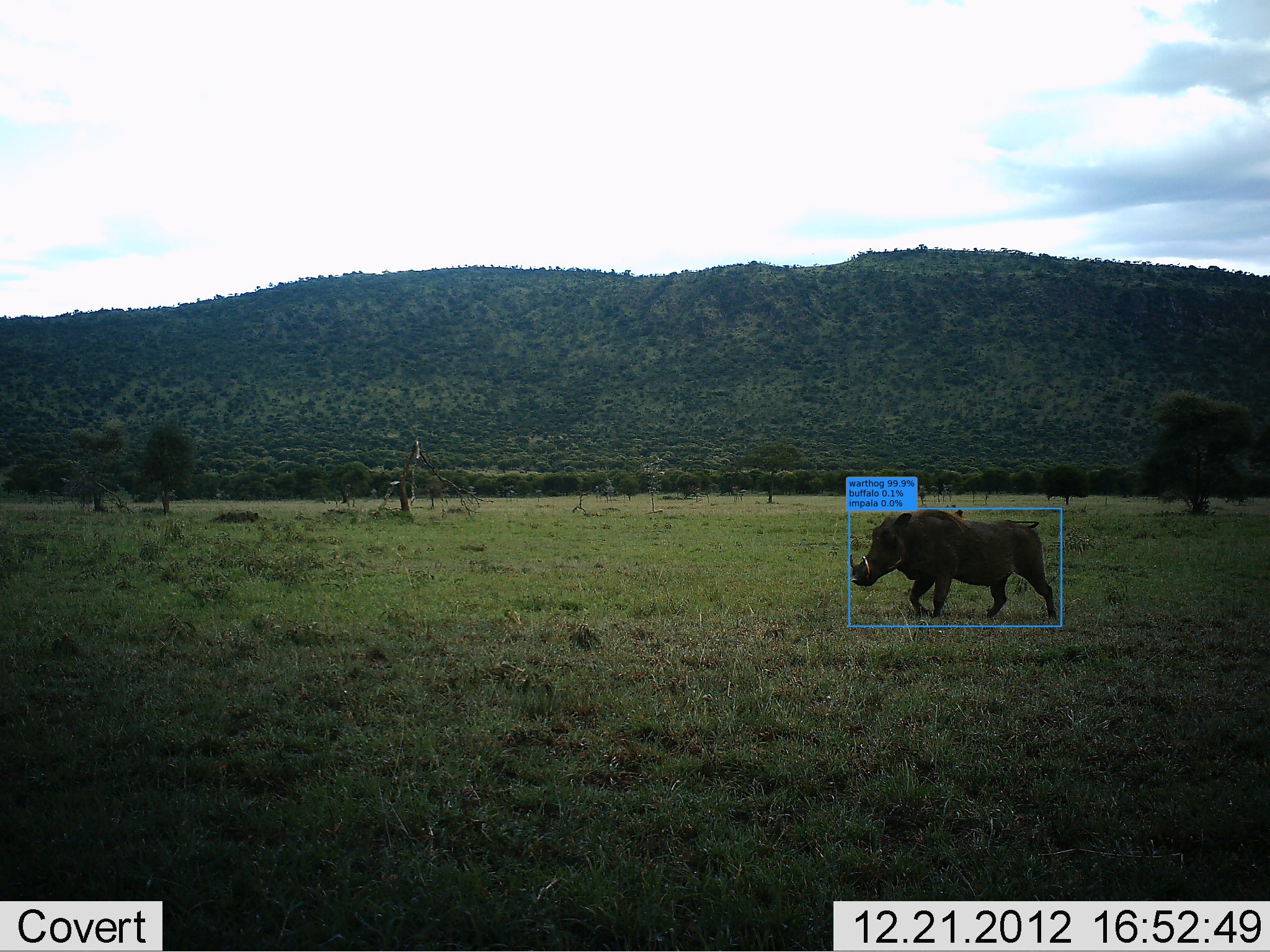

Here's a "teaser" image of what detector output looks like:

Image credit University of Washington.

If you're just considering the use of AI in your workflow, and you aren't even sure yet whether MegaDetector would be useful to you, we recommend reading the "getting started with MegaDetector" page.

If you're already familiar with MegaDetector and you're ready to run it on your data (and you have some familiarity with running Python code), see the MegaDetector README for instructions on downloading and running MegaDetector.

We work with ecologists all over the world to help them spend less time annotating images and more time thinking about conservation. You can read a little more about how this works on our getting started with MegaDetector page.

Here are a few of the organizations that have used MegaDetector. We only list organizations that (a) we know about and (b) have given us permission to refer to them here (or have posted publicly about their use of MegaDetector). If you're using MegaDetector or other tools from this repo and would like to be added to this list, email us!

- Canadian Parks and Wilderness Society (CPAWS) Northern Alberta Chapter

- Applied Conservation Macro Ecology Lab, University of Victoria

- Banff National Park Resource Conservation, Parks Canada

- Blumstein Lab, UCLA

- Borderlands Research Institute, Sul Ross State University

- Capitol Reef National Park / Utah Valley University

- Center for Biodiversity and Conservation, American Museum of Natural History

- Centre for Ecosystem Science, UNSW Sydney

- Cross-Cultural Ecology Lab, Macquarie University

- DC Cat Count, led by the Humane Rescue Alliance

- Department of Fish and Wildlife Sciences, University of Idaho

- Ecology and Conservation of Amazonian Vertebrates Research Group, Federal University of Amapá

- Gola Forest Programme, Royal Society for the Protection of Birds (RSPB)

- Graeme Shannon's Research Group, Bangor University

- Hamaarag, The Steinhardt Museum of Natural History, Tel Aviv University

- Institut des Sciences de la Forêt Tempérée (ISFORT), Université du Québec en Outaouais

- Lab of Dr. Bilal Habib, the Wildlife Institute of India

- Mammal Spatial Ecology and Conservation Lab, Washington State University

- McLoughlin Lab in Population Ecology, University of Saskatchewan

- National Wildlife Refuge System, Southwest Region, U.S. Fish & Wildlife Service

- Northern Great Plains Program, Smithsonian

- Quantitative Ecology Lab, University of Washington

- Santa Monica Mountains Recreation Area, National Park Service

- Seattle Urban Carnivore Project, Woodland Park Zoo

- Serra dos Órgãos National Park, ICMBio

- Snapshot USA, Smithsonian

- Wildlife Coexistence Lab, University of British Columbia

- Wildlife Research, Oregon Department of Fish and Wildlife

- Wildlife Division, Michigan Department of Natural Resources

- Department of Ecology, TU Berlin

- Ghost Cat Analytics

- Protected Areas Unit, Canadian Wildlife Service

- School of Natural Sciences, University of Tasmania (story)

- Kenai National Wildlife Refuge, U.S. Fish & Wildlife Service (story)

- Alberta Biodiversity Monitoring Institute (ABMI) (WildTrax platform) (blog post)

- Shan Shui Conservation Center (blog post) (translated blog post)

- Road Ecology Center, University of California, Davis (Wildlife Observer Network platform)

This repo does not directly host camera trap data, but we work with our collaborators to make data and annotations available whenever possible on lila.science.

For questions about this repo, contact cameratraps@lila.science.

This repo is organized into the following folders...

Code for hosting our models as an API, either for synchronous operation (i.e., for real-time inference) or as a batch process (for large biodiversity surveys). Common operations one might do after running MegaDetector – e.g. generating preview pages to summarize your results, separating images into different folders based on AI results, or converting results to a different format – also live in this folder, within the api/batch_processing/postprocessing folder.
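To give a rough idea of what "separating images into different folders based on AI results" means, here's a minimal sketch. It is not the code in this repo: the results layout (`images`, `detections`, `category`, `conf`, `detection_categories`), the `assign_folders` helper, and the 0.2 confidence threshold are all assumptions made for illustration. It only decides which folder each image would go to; it doesn't move any files.

```python
from collections import defaultdict

# Assumed results layout (hypothetical, for this sketch only): each image entry
# has a "file" path and a list of "detections", each with a "category" ID and
# a "conf" score between 0 and 1.
example_results = {
    "detection_categories": {"1": "animal", "2": "person", "3": "vehicle"},
    "images": [
        {"file": "a/img001.jpg", "detections": [{"category": "1", "conf": 0.92}]},
        {"file": "a/img002.jpg", "detections": [{"category": "1", "conf": 0.05}]},
        {"file": "b/img003.jpg", "detections": [
            {"category": "2", "conf": 0.81},
            {"category": "1", "conf": 0.40},
        ]},
        {"file": "b/img004.jpg", "detections": []},
    ],
}


def assign_folders(results, threshold=0.2):
    """Map each target folder name to the image files that belong in it.

    An image goes to the folder named after its highest-confidence detection
    at or above `threshold`, or to "empty" if no detection clears it.
    The default threshold is an arbitrary choice for this sketch.
    """
    category_names = results["detection_categories"]
    folders = defaultdict(list)
    for image in results["images"]:
        confident = [d for d in image.get("detections", []) if d["conf"] >= threshold]
        if confident:
            best = max(confident, key=lambda d: d["conf"])
            folder = category_names[best["category"]]
        else:
            folder = "empty"
        folders[folder].append(image["file"])
    return dict(folders)


folder_assignments = assign_folders(example_results)
for folder, files in sorted(folder_assignments.items()):
    print(folder, files)
```

In this sketch, an image with both a person and an animal lands in the folder of whichever detection is more confident; a real workflow might prefer to copy such images to both folders, or to a separate "mixed" folder.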

Experimental code for training species classifiers on new data sets, generally trained on MegaDetector crops. Currently the main pipeline described in this folder relies on a large database of labeled images that is not publicly available; therefore, this folder is not yet set up to facilitate training of your own classifiers. However, it is useful for users of the classifiers that we train, and contains some useful starting points if you are going to take a "DIY" approach to training classifiers on cropped images.

All that said, here's another "teaser image" of what you get at the end of training and running a classifier:

Code for:

- Converting frequently-used metadata formats to COCO Camera Traps format

- Converting the output of AI models (especially YOLOv5) to the format used for AI results throughout this repo

- Creating, visualizing, and editing COCO Camera Traps .json databases
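As an illustration of the kind of conversion listed above, here's a small sketch that turns one line of YOLOv5 text output into a detection record. YOLOv5 writes `class x_center y_center width height [conf]` with coordinates normalized to the image size; the output keys (`category`, `conf`, `bbox`), the `[x_min, y_min, width, height]` box layout, and the `yolo_line_to_detection` helper are assumptions for this sketch, not a description of the converters in this folder.

```python
def yolo_line_to_detection(line):
    """Convert one YOLOv5 text-output line to a detection dict.

    Input:  "class x_center y_center width height [conf]", normalized to [0, 1].
    Output: a dict with a string category ID, an optional confidence, and a
            normalized [x_min, y_min, width, height] box (assumed layout).
    """
    fields = line.split()
    if len(fields) not in (5, 6):
        raise ValueError(f"Expected 5 or 6 fields, got {len(fields)}: {line!r}")

    class_id = int(fields[0])
    x_center, y_center, width, height = (float(v) for v in fields[1:5])

    # Label files without confidences (e.g. ground truth) have only 5 fields
    conf = float(fields[5]) if len(fields) == 6 else None

    return {
        "category": str(class_id),
        "conf": conf,
        # Shift from box center to top-left corner; size is unchanged
        "bbox": [x_center - width / 2, y_center - height / 2, width, height],
    }


detection = yolo_line_to_detection("0 0.5 0.5 0.2 0.4 0.9")
print(detection)
```

Note that YOLOv5 class indices start at zero, so a real converter would also need a mapping from those indices to whatever category IDs the target format uses.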

Code for training, running, and evaluating MegaDetector.

Ongoing research projects that use this repository in one way or another; as of the time I'm editing this README, there are projects in this folder around active learning and the use of simulated environments for training data augmentation.

Random things that don't fit in any other directory. For example:

- A not-super-useful but super-duper-satisfying and mostly-successful attempt to use OCR to pull metadata out of image pixels in a fairly generic way, to handle those pesky cases when image metadata is lost.

- Experimental postprocessing scripts that were built for a single use case

Code to facilitate mapping data-set-specific categories (e.g. "lion", which means very different things in Idaho vs. South Africa) to a standard taxonomy.
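The "lion" problem can be sketched in a few lines: the same label has to be looked up together with the data set it came from. The table, the data set names, and the `to_standard_taxon` helper below are hypothetical and exist only to illustrate the idea; they are not the mapping files or functions in this folder.

```python
# Hypothetical mapping table for this sketch: keys are (data set, label) pairs,
# values are scientific names in a shared taxonomy.
TAXONOMY_MAP = {
    ("idaho", "lion"): "Puma concolor",        # mountain lion
    ("south_africa", "lion"): "Panthera leo",  # African lion
    ("idaho", "elk"): "Cervus canadensis",
}


def to_standard_taxon(dataset, label):
    """Return the standard taxon for a data-set-specific label.

    Returns None when the (data set, label) pair is unmapped, so callers can
    flag it for manual review rather than guessing.
    """
    key = (dataset.strip().lower(), label.strip().lower())
    return TAXONOMY_MAP.get(key)


print(to_standard_taxon("idaho", "lion"))
print(to_standard_taxon("south_africa", "lion"))
```

Keying on the pair rather than on the label alone is the whole point: a label-only lookup would force every "lion" into a single species.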

A handful of images from LILA that facilitate testing and debugging.

Shared tools for visualizing images with ground truth and/or predicted annotations.

Image credit USDA, from the NACTI data set.

This project welcomes contributions and suggestions. Most contributions require you to agree to a Contributor License Agreement (CLA) declaring that you have the right to, and actually do, grant us the rights to use your contribution. For details, visit cla.microsoft.com.

When you submit a pull request, a CLA-bot will automatically determine whether you need to provide a CLA and decorate the PR appropriately (e.g., label, comment). Simply follow the instructions provided by the bot. You will only need to do this once across all repos using our CLA.

This project has adopted the Microsoft Open Source Code of Conduct. For more information see the Code of Conduct FAQ or contact opencode@microsoft.com with any additional questions or comments.

This repository is licensed under the MIT license.