Git Repo for CML Document Chatbot

- Load PDF Documents directly from the App UI

- Chunking and Cleaning of Documents

- Model of Choice from HuggingFace

- Gradio Chatbot Interface

- Standalone Chroma VectorDB

- Create a Standalone Chroma VectorDB instance in Azure

- Create a Standalone Chroma VectorDB instance in AWS

- Conversational Memory (In Progress)

- Support for other VectorDBs (Milvus)

The AMP Application has been configured to use the following resources:

- 4 CPU

- 32 GB RAM

- 1 GPU

- Navigate to CML Workspace -> Site Administration -> AMPs Tab

- In the AMP Catalog Sources section, go to "Add a new source By" and select "Catalog File URL"

- Provide the following URL and click "Add Source":

  https://raw.githubusercontent.com/nkityd09/cml_speech_to_text/main/catalog.yaml

- Once added, the LLM PDF Document Chatbot appears in the AMP section and can be deployed from there.

- During AMP deployment, three environment variables can be set:

  - VectorDB_IP: Public IP address of the host where ChromaDB is running

  - HF_MODEL: HuggingFace model name (defaults to mistralai/Mistral-7B-v0.1; please include the complete name of the model)

  - HF_TOKEN: HuggingFace access token, needed for gated models like Falcon180B and Llama-2

- Click on the AMP and select "Configure Project". Disable Spark, as it is not required.

- Once the AMP steps have completed, the Gradio UI can be accessed from the Applications page.
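For reference, the three deployment variables (VectorDB_IP, HF_MODEL, HF_TOKEN) could be read inside the application roughly as sketched below. This is a minimal illustration, not the AMP's actual code: the `AppSettings` class and `load_settings` function are hypothetical names, and treating VectorDB_IP as required is an assumption. Only the variable names and the default model come from this README.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional

# Default model name taken from this README.
DEFAULT_HF_MODEL = "mistralai/Mistral-7B-v0.1"


@dataclass(frozen=True)
class AppSettings:
    """Hypothetical container for the three deployment variables."""
    vectordb_ip: str
    hf_model: str
    hf_token: Optional[str]


def load_settings(env: Mapping[str, str] = os.environ) -> AppSettings:
    """Read the deployment variables from a mapping (os.environ by default)."""
    vectordb_ip = env.get("VectorDB_IP", "").strip()
    if not vectordb_ip:
        # Assumption: the app cannot run without a ChromaDB host.
        raise ValueError("VectorDB_IP must be set to the ChromaDB host's public IP")

    # Fall back to the documented default when HF_MODEL is unset or blank.
    hf_model = env.get("HF_MODEL", "").strip() or DEFAULT_HF_MODEL

    # The token is optional; it only matters for gated models.
    hf_token = env.get("HF_TOKEN", "").strip() or None

    return AppSettings(vectordb_ip=vectordb_ip, hf_model=hf_model, hf_token=hf_token)
```

Passing the environment in as a mapping keeps the function easy to check without touching the real process environment, for example `load_settings({"VectorDB_IP": "10.0.0.5"})`.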

Note: The application creates a "default" collection in the VectorDB when the AMP is launched for the first time.
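The creation of the "default" collection could look something like the sketch below, assuming the `chromadb` Python client is installed and the standalone Chroma server listens on port 8000 (the port is an assumption; this README only specifies the IP). The helper names `connect_to_chroma` and `ensure_collection` are hypothetical and are not taken from the AMP's code.

```python
from typing import Any

# Collection name stated in this README.
DEFAULT_COLLECTION = "default"


def connect_to_chroma(host: str, port: int = 8000) -> Any:
    """Open an HTTP client to a standalone Chroma server.

    The import is done lazily so this module loads even where chromadb
    is not installed (requires `pip install chromadb` to actually connect).
    """
    import chromadb

    return chromadb.HttpClient(host=host, port=port)


def ensure_collection(client: Any, name: str = DEFAULT_COLLECTION) -> Any:
    """Return the named collection, creating it if it does not exist yet.

    get_or_create_collection is idempotent, so calling this on every
    launch only creates the collection the first time.
    """
    return client.get_or_create_collection(name=name)
```

A call such as `ensure_collection(connect_to_chroma(os.environ["VectorDB_IP"]))` would then be safe to run on every application start.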

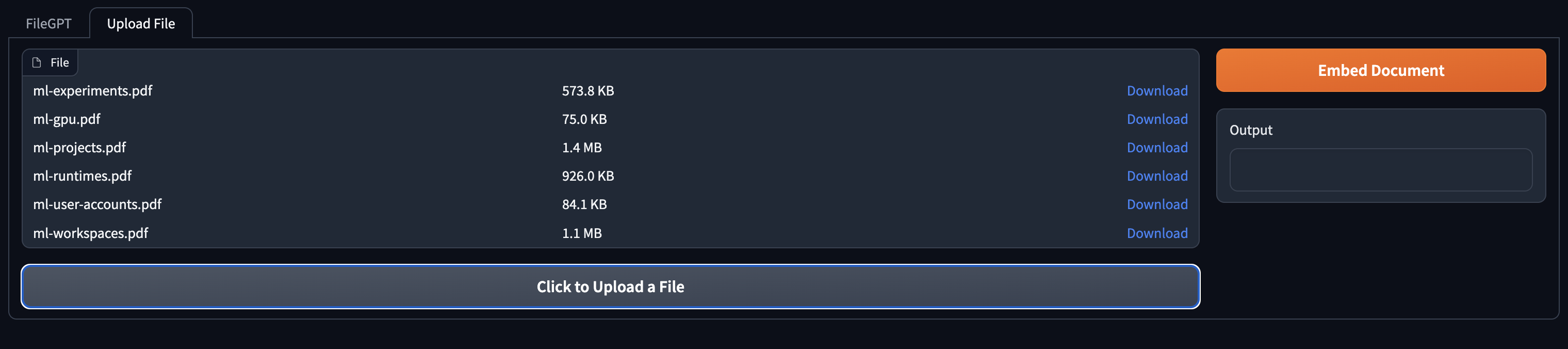

- Navigate to the "Upload File" tab and use the "Click to Upload" button to upload a file.

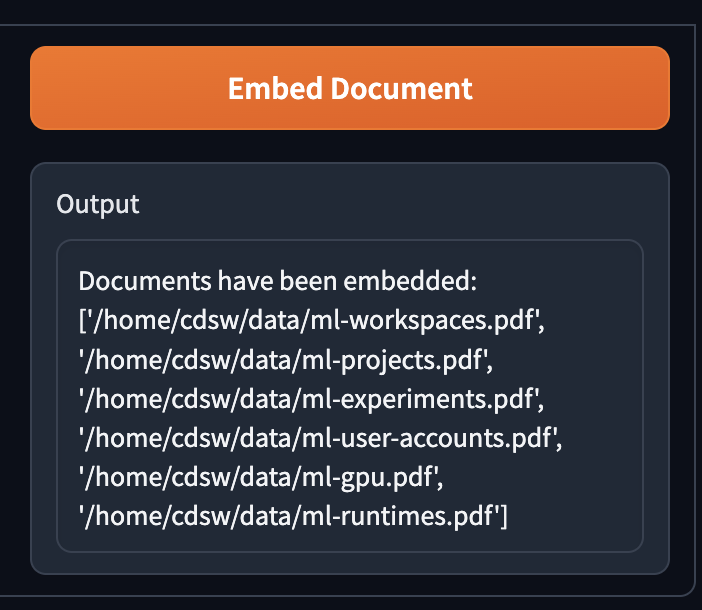

- Once the files have been uploaded, use the "Embed Document" button to store the documents in the VectorDB.

Note: Embedding documents is a lengthy process and can take some time to complete.
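Before a document can be embedded, its text is presumably cleaned and split into chunks, as listed among the features above. The application's actual chunking logic is not shown in this README, so the following is only a sketch under stated assumptions: fixed-size character windows with overlap, and hypothetical function names and default sizes.

```python
import re
from typing import List


def clean_text(text: str) -> str:
    """Normalize text extracted from a PDF (illustrative rules only)."""
    # Re-join words that were hyphenated across a line break.
    text = re.sub(r"-\n(?=\w)", "", text)
    # Collapse runs of whitespace, including newlines, into single spaces.
    text = re.sub(r"\s+", " ", text)
    return text.strip()


def chunk_text(text: str, chunk_size: int = 500, overlap: int = 50) -> List[str]:
    """Split text into character windows that overlap by `overlap` characters."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")

    step = chunk_size - overlap
    chunks: List[str] = []
    start = 0
    while start < len(text):
        chunks.append(text[start:start + chunk_size])
        # Stop once the current window reaches the end of the text,
        # so no redundant trailing chunk is produced.
        if start + chunk_size >= len(text):
            break
        start += step
    return chunks
```

The overlap keeps sentences that straddle a chunk boundary retrievable from at least one chunk, at the cost of embedding slightly more text, which is one reason the embedding step takes longer on large documents.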

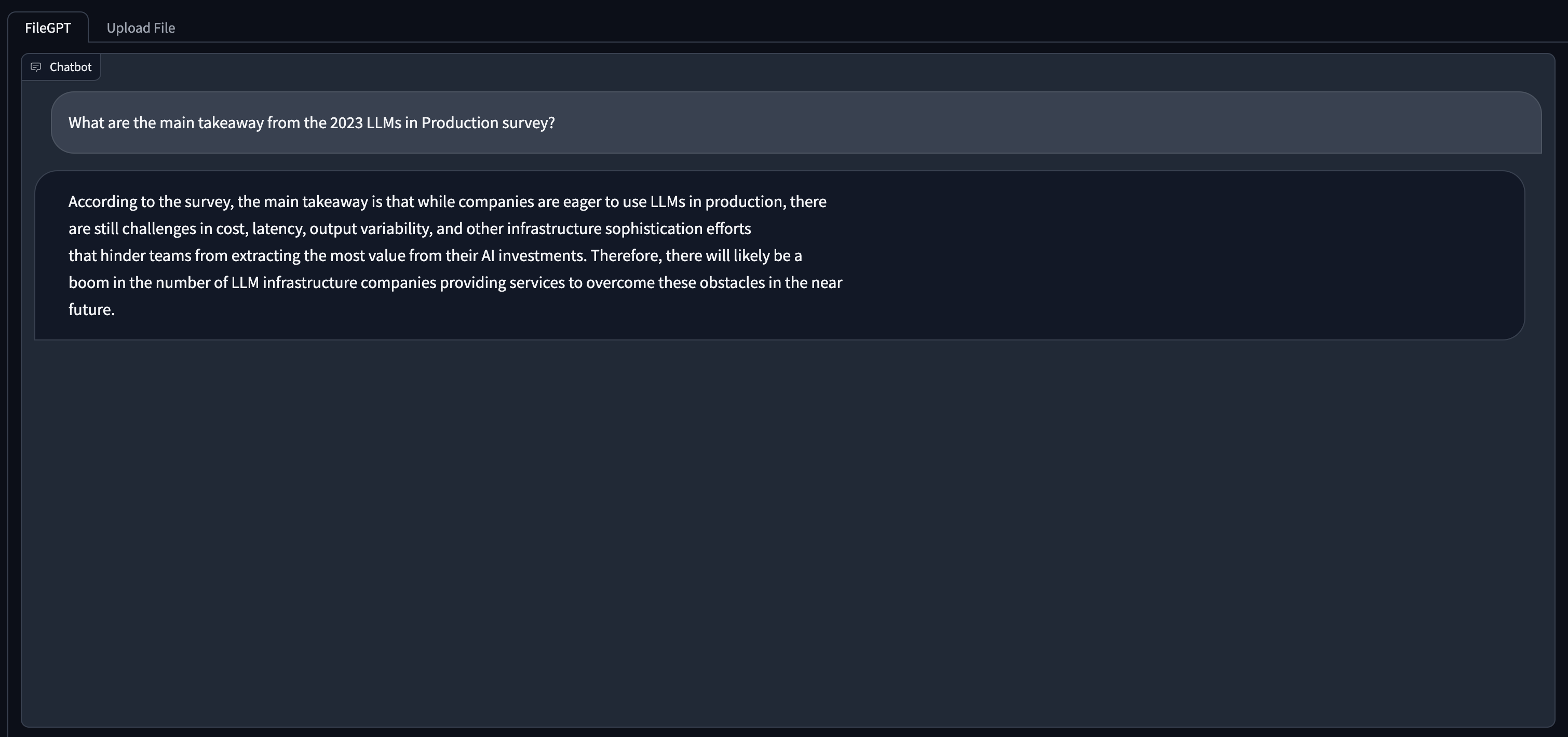

- Once embedding has completed, switch to the FileGPT tab, enter your question in the textbox, and click the Submit button below it.