This repository provides code for friendly adversarial training (FAT).

ICML 2020 Paper: [Attacks Which Do Not Kill Training Make Adversarial Learning Stronger](https://arxiv.org/abs/2002.11242)

Jingfeng Zhang*, Xilie Xu*, Bo Han, Gang Niu, Lizhen Cui, Masashi Sugiyama and Mohan Kankanhalli

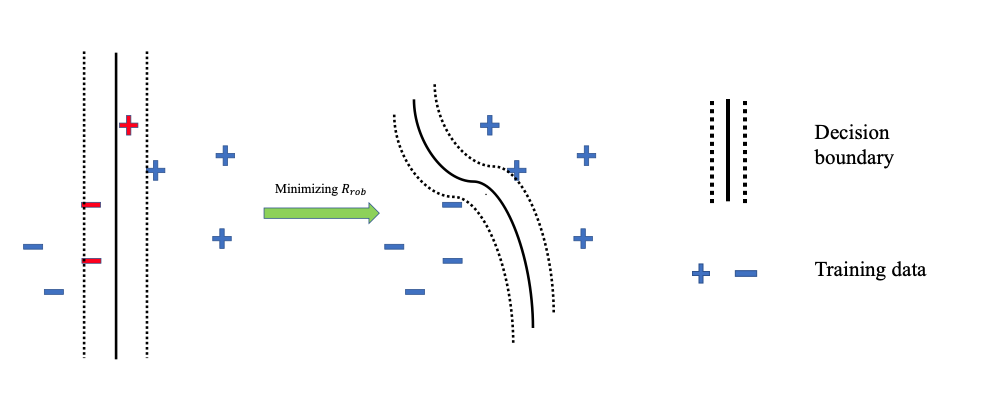

Adversarial data can easily fool a standard-trained classifier. Adversarial training incorporates adversarial data into the training process and aims to achieve two purposes: (a) correctly classify the data, and (b) make the decision boundary thick so that no data fall inside it.

The purposes of adversarial training

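Conventional adversarial training is based on the minimax formulation:

$$\min_{f\in\mathcal{F}} \frac{1}{n}\sum_{i=1}^{n} \ell\big(f(\tilde{x}_i), y_i\big),$$

where

$$\tilde{x}_i = \arg\max_{\tilde{x}\in\mathcal{B}_\epsilon[x_i]} \ell\big(f(\tilde{x}), y_i\big).$$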

The inner maximization finds the most adversarial data; the outer minimization finds a classifier that fits the generated adversarial data.
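To make the inner maximization concrete, here is a minimal sketch of PGD-K on a toy linear classifier in pure Python. The model, the logistic loss, and the helper names (`logit`, `logistic_loss`, `loss_grad_x`, `pgd`) are illustrative assumptions, not the repository's PyTorch implementation; signed-gradient ascent with projection onto the L∞ ball and clipping to [0, 1] follows the usual PGD recipe for image data.

```python
import math

# Toy stand-in for the network (assumption for illustration): a linear binary
# classifier f(x) = w.x + b with labels y in {-1, +1} and logistic loss.


def logit(w, b, x):
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def logistic_loss(w, b, x, y):
    """l(f(x), y) = log(1 + exp(-y * f(x)))."""
    return math.log1p(math.exp(-y * logit(w, b, x)))


def loss_grad_x(w, b, x, y):
    """Gradient of logistic_loss with respect to the input x."""
    s = 1.0 / (1.0 + math.exp(y * logit(w, b, x)))  # sigmoid(-y * f(x))
    return [-y * s * wi for wi in w]


def sign(v):
    return (v > 0) - (v < 0)


def pgd(w, b, x, y, epsilon, step_size, num_steps):
    """PGD-K for the inner maximization: signed-gradient ascent on the loss,
    projected onto the L-inf ball B_eps[x] and the pixel range [0, 1]."""
    x_adv = list(x)
    for _ in range(num_steps):
        g = loss_grad_x(w, b, x_adv, y)
        x_adv = [a + step_size * sign(gi) for a, gi in zip(x_adv, g)]
        # Project back onto B_eps[x], then clip to the valid range [0, 1].
        x_adv = [min(max(a, xi - epsilon), xi + epsilon) for a, xi in zip(x_adv, x)]
        x_adv = [min(max(a, 0.0), 1.0) for a in x_adv]
    return x_adv


w, b = [1.0, -1.0], 0.0
x, y = [0.6, 0.4], 1  # correctly classified: f(x) = 0.2 > 0
x_adv = pgd(w, b, x, y, epsilon=0.1, step_size=0.025, num_steps=10)
print(logistic_loss(w, b, x, y), logistic_loss(w, b, x_adv, y))
```

The printed losses show the inner maximization pushing the loss up while the perturbation stays within ε of the natural example.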

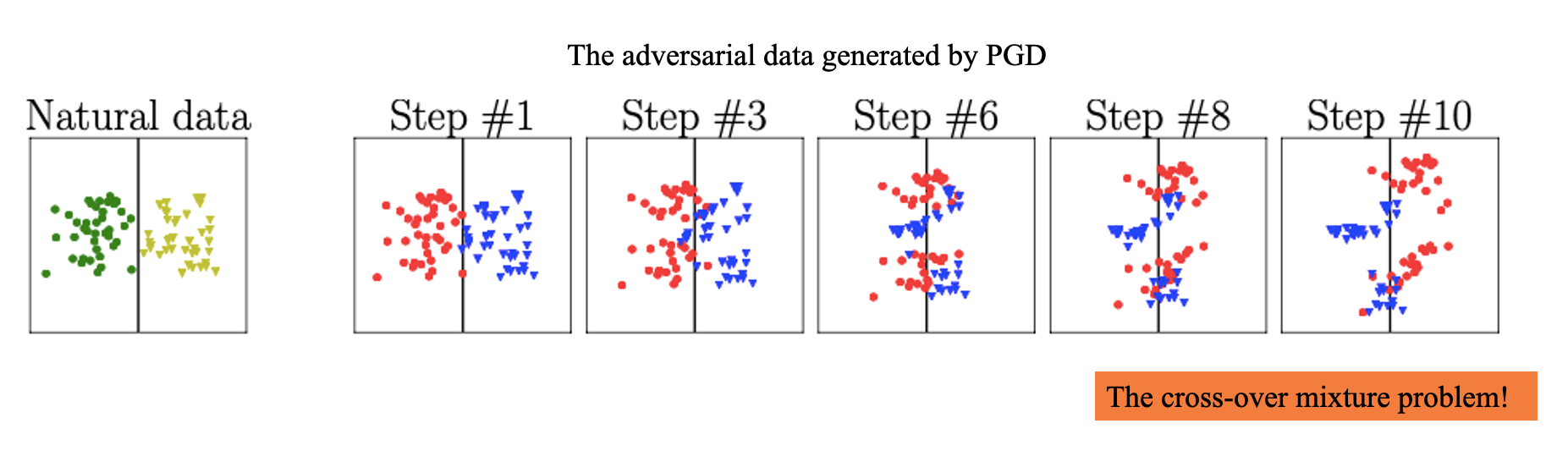

Minimax-based adversarial training severely degrades natural generalization. Why? It suffers from a severe cross-over mixture problem: adversarial data of different classes overshoot into each other's regions, and learning from such adversarial data is very difficult.

Cross-over mixture problem of minimax-based adversarial training

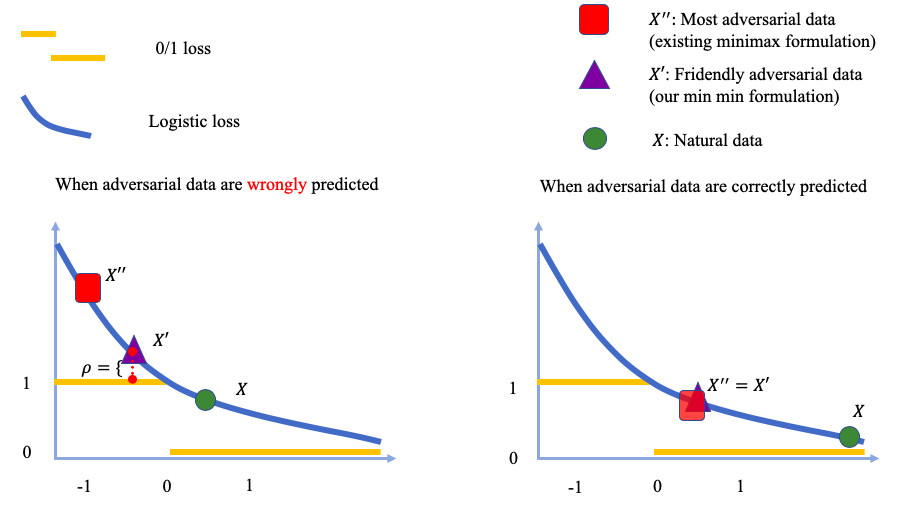

The outer minimization stays the same. Instead of generating adversarial data via the inner maximization, we generate friendly adversarial data that minimize the loss value, subject to two constraints: (a) the adversarial data is misclassified, and (b) the wrong prediction on the adversarial data is better than the desired prediction by at least a margin.
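Written out, the min-min formulation can be sketched as below. This is our transcription, assuming $f\in\mathcal{F}$ is the classifier, $\ell$ the loss, $\mathcal{B}_\epsilon[x_i]$ the $\epsilon$-ball around the natural example $x_i$, $\mathcal{Y}$ the label set, and $\rho \ge 0$ the margin; please check it against the paper before relying on it.

$$
\min_{f\in\mathcal{F}} \frac{1}{n}\sum_{i=1}^{n} \ell\big(f(\tilde{x}_i), y_i\big),
\qquad
\tilde{x}_i = \arg\min_{\tilde{x}\in\mathcal{B}_\epsilon[x_i]} \ell\big(f(\tilde{x}), y_i\big)
\quad \text{s.t.} \quad
\ell\big(f(\tilde{x}), y_i\big) - \min_{y\in\mathcal{Y}} \ell\big(f(\tilde{x}), y\big) \ge \rho .
$$

With $\rho > 0$, the constraint can only hold if some label $y \ne y_i$ has a loss at least $\rho$ lower than that of $y_i$, which expresses constraints (a) and (b) together.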

Let us compare the minimax formulation with the min-min formulation.

Comparison between the minimax and min-min formulations

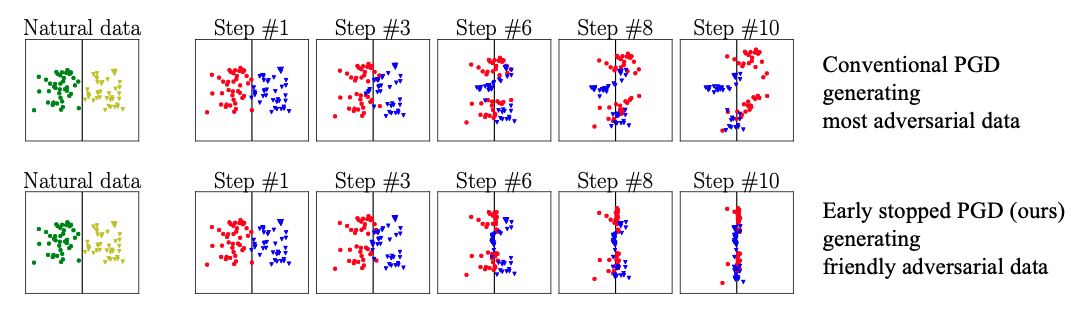

Friendly adversarial training (FAT) updates the model with friendly adversarial data generated by early-stopped PGD.

Early-stopped PGD stops the PGD iterations once the adversarial data is misclassified; this is controlled by the hyperparameter `tau` in the code. Note that when `tau` equals the maximum number of perturbation steps `num_steps`, conventional adversarial training methods such as AT, TRADES, and MART become special cases of FAT.

Conventional adversarial training uses PGD to search for the most adversarial data, whereas friendly adversarial training uses early-stopped PGD to search for friendly adversarial data.
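The early-stopping rule can be sketched on a toy problem. This is a minimal pure-Python illustration, not the code in FAT.py: the linear classifier and the helper names are hypothetical, and reading `tau` as the number of extra PGD steps allowed after the iterate is first misclassified is our assumption (it is consistent with `tau = num_steps` recovering plain PGD).

```python
import math

# Toy linear classifier f(x) = w.x + b, labels y in {-1, +1}, logistic loss
# (illustrative assumption, not the repository's PyTorch models).


def logit(w, b, x):
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def logistic_loss(w, b, x, y):
    return math.log1p(math.exp(-y * logit(w, b, x)))


def sign(v):
    return (v > 0) - (v < 0)


def early_stopped_pgd(w, b, x, y, epsilon, step_size, num_steps, tau):
    """PGD that stops tau steps after the iterate is first misclassified.

    Returns the friendly adversarial point and the number of PGD steps taken.
    With tau >= num_steps the early stop never fires, so this is plain PGD-K.
    """
    x_adv = list(x)
    budget = tau  # extra steps still allowed once x_adv is misclassified
    steps = 0
    for _ in range(num_steps):
        if y * logit(w, b, x_adv) <= 0:  # misclassified
            if budget == 0:
                break
            budget -= 1
        # For this linear model the input gradient of the loss is
        # -y * sigmoid(-y f(x)) * w, so its sign is -sign(y * w_j).
        x_adv = [a - step_size * sign(y * wi) for a, wi in zip(x_adv, w)]
        x_adv = [min(max(a, xi - epsilon), xi + epsilon) for a, xi in zip(x_adv, x)]
        x_adv = [min(max(a, 0.0), 1.0) for a in x_adv]
        steps += 1
    return x_adv, steps


w, b = [1.0, -1.0], 0.0
x, y = [0.56, 0.44], 1  # f(x) = 0.12 > 0: correctly classified
for tau in (0, 1, 10):
    x_adv, steps = early_stopped_pgd(w, b, x, y, epsilon=0.2, step_size=0.05,
                                     num_steps=10, tau=tau)
    print(tau, steps, round(logistic_loss(w, b, x_adv, y), 3))
```

A smaller `tau` returns a point just past the decision boundary with a lower loss (friendlier), while `tau = num_steps` reproduces the full PGD search.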

Prerequisites:

- Python (3.6)

- PyTorch (1.2.0)

- CUDA

- NumPy

Here are examples:

`CUDA_VISIBLE_DEVICES='0' python FAT.py --epsilon 0.031`

`CUDA_VISIBLE_DEVICES='0' python FAT.py --epsilon 0.062`

| Defense | Natural Acc. | FGSM Acc. | PGD-20 Acc. | C&W Acc. |
|---|---|---|---|---|
| AT (Madry) | 87.30% | 56.10% | 45.80% | 46.80% |
| CAT | 77.43% | 57.17% | 46.06% | 42.28% |
| DAT | 85.03% | 63.53% | 48.70% | 47.27% |
| FAT (…) | 89.34% | 65.52% | 46.13% | 46.82% |
| FAT (…) | 87.00% | 65.94% | 49.86% | 48.65% |

Results of AT (Madry), CAT, and DAT are as reported in the DAT paper; FAT is evaluated under the same settings.

`CUDA_VISIBLE_DEVICES='0' python FAT_for_TRADES.py --epsilon 0.031`

`CUDA_VISIBLE_DEVICES='0' python FAT_for_TRADES.py --epsilon 0.062`

`CUDA_VISIBLE_DEVICES='0' python FAT_for_MART.py --epsilon 0.031`

`CUDA_VISIBLE_DEVICES='0' python FAT_for_MART.py --epsilon 0.062`

| Defense | Natural Acc. | FGSM Acc. | PGD-20 Acc. | C&W Acc. |
|---|---|---|---|---|
| TRADES (…) | 88.64% | 56.38% | 49.14% | - |
| FAT for TRADES (…) | 89.94% | 61.00% | 49.70% | 49.35% |
| TRADES (…) | 84.92% | 61.06% | 56.61% | 54.47% |
| FAT for TRADES (…) | 86.60% | 61.79% | 55.98% | 54.29% |
| FAT for TRADES (…) | 84.39% | 61.73% | 57.12% | 54.36% |

Results for both TRADES settings are as reported in the TRADES paper; FAT for TRADES is evaluated under the same settings. Note that the evaluations above follow the description in the TRADES paper, i.e., adversarial data are generated without a random start (`rand_init=False`).

However, the TRADES GitHub code uses a random start (`rand_init=True`) before the PGD perturbation, which deviates from the description in their paper. For a fair evaluation of FAT with random start, please refer to Table 3 in our paper.
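The two evaluation protocols differ only in where the PGD search starts. A minimal pure-Python sketch: `init_adv` is a hypothetical helper, and uniform noise in [-ε, ε] is assumed for the random start (a common choice; the exact noise used in the TRADES code may differ).

```python
import random


def init_adv(x, epsilon, rand_init, rng=random):
    """Starting point of the PGD search around a natural example x (pixels in [0, 1]).

    rand_init=False: start exactly at x (the protocol described in the TRADES paper).
    rand_init=True:  start at x plus uniform noise in [-epsilon, epsilon], clipped
                     to [0, 1] (assumed noise distribution).
    """
    if not rand_init:
        return list(x)
    return [min(max(xi + rng.uniform(-epsilon, epsilon), 0.0), 1.0) for xi in x]


x = [0.0, 0.5, 1.0]
print(init_adv(x, 0.031, rand_init=False))
print(init_adv(x, 0.031, rand_init=True, rng=random.Random(0)))
```

Because a random start changes the attack's trajectory, robust accuracies measured with and without it are not directly comparable.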

We welcome attacks of any kind against our defense models.

On both the CIFAR-10 and SVHN datasets, we normalize all images to [0, 1].
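Since pixels lie in [0, 1], the `--epsilon` values in the commands are measured on that scale. A quick conversion to 8-bit intensity levels (our own illustration; `epsilon_in_pixel_levels` is a hypothetical helper) shows that 0.031 and 0.062 are roughly 8/255 and 16/255.

```python
def epsilon_in_pixel_levels(epsilon):
    """Convert an L-inf budget on [0, 1]-scaled images to 8-bit intensity levels."""
    return epsilon * 255


for eps in (0.031, 0.062):
    # 0.031 -> about 7.9 levels (~8/255); 0.062 -> about 15.8 levels (~16/255)
    print(f"--epsilon {eps}: about {epsilon_in_pixel_levels(eps):.1f}/255")
```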

We will upload the trained models later.

@article{zhang2020fat,

title={Attacks Which Do Not Kill Training Make Adversarial Learning Stronger},

author={Zhang, Jingfeng and Xu, Xilie and Han, Bo and Niu, Gang and Cui, Lizhen and Sugiyama, Masashi and Kankanhalli, Mohan},

journal={arXiv preprint arXiv:2002.11242},

year={2020}

}

Please contact j-zhang@comp.nus.edu.sg and xuxilie@mail.sdu.edu.cn if you have any questions about the code.