Segment Anything Meets Point Tracking

Frano Rajič, Lei Ke, Yu-Wing Tai, Chi-Keung Tang, Martin Danelljan, Fisher Yu

ETH Zürich, HKUST, EPFL

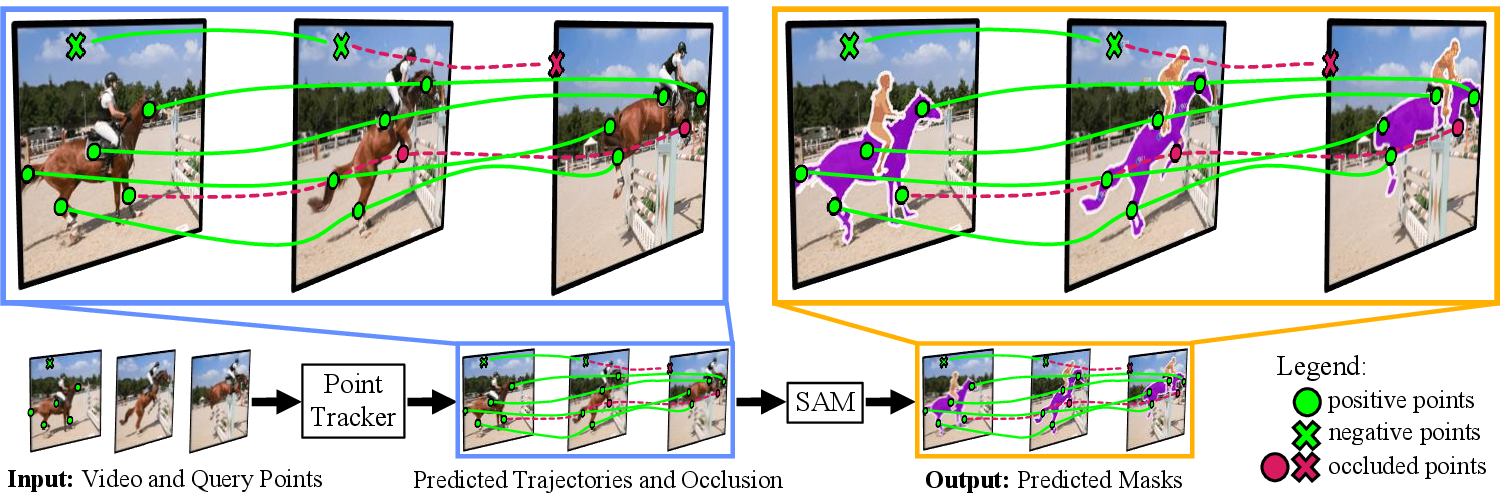

We propose SAM-PT, an extension of the Segment Anything Model (SAM) for zero-shot video segmentation. Our work offers a simple yet effective point-based perspective in video object segmentation research. For more details, refer to our paper. Our code, models, and evaluation tools will be released soon. Stay tuned!

Annotators provide only a few points on the first video frame to denote the target object, and SAM-PT produces segmentation results for the video from them. Please visit our project page for more visualizations, including qualitative results on DAVIS 2017 videos and more Avatar clips.
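As a rough illustration of this point-based workflow, the sketch below propagates first-frame query points through a video and prompts a segmenter with the tracked points on every frame. It is not the SAM-PT API: `segment_video`, `static_tracker`, and `disk_segmenter` are hypothetical names, and the two stubs are toy stand-ins for a real point tracker (such as PIPS) and a real promptable segmenter (such as SAM). It also leaves out any further components of the actual method.

```python
from typing import Callable, Tuple

import numpy as np

# Hypothetical interfaces for illustration only (not the SAM-PT code base).
Tracker = Callable[[np.ndarray, np.ndarray], Tuple[np.ndarray, np.ndarray]]
Segmenter = Callable[[np.ndarray, np.ndarray], np.ndarray]


def segment_video(frames: np.ndarray, query_points: np.ndarray,
                  track_points: Tracker,
                  segment_from_points: Segmenter) -> np.ndarray:
    """Return one boolean mask per frame.

    frames: (T, H, W, 3) video; query_points: (N, 2) as (x, y) on frame 0.
    """
    # Propagate the first-frame points: trajectories (T, N, 2), visible (T, N).
    trajectories, visible = track_points(frames, query_points)
    masks = []
    for t, frame in enumerate(frames):
        points = trajectories[t][visible[t]]  # keep only visible points
        if len(points) == 0:
            # No visible point to prompt with: emit an empty mask.
            masks.append(np.zeros(frame.shape[:2], dtype=bool))
        else:
            masks.append(segment_from_points(frame, points))
    return np.stack(masks)


def static_tracker(frames, query_points):
    """Toy tracker: assumes the points never move and are always visible."""
    num_frames = len(frames)
    trajectories = np.repeat(query_points[None].astype(float), num_frames, axis=0)
    visible = np.ones((num_frames, len(query_points)), dtype=bool)
    return trajectories, visible


def disk_segmenter(frame, points, radius=2.0):
    """Toy segmenter: marks a small disk around every prompt point."""
    height, width = frame.shape[:2]
    ys, xs = np.mgrid[0:height, 0:width]
    mask = np.zeros((height, width), dtype=bool)
    for x, y in points:
        mask |= (xs - x) ** 2 + (ys - y) ** 2 <= radius ** 2
    return mask


frames = np.zeros((3, 16, 16, 3), dtype=np.uint8)  # dummy 3-frame video
query_points = np.array([[4, 4], [10, 8]])         # (x, y) clicks on frame 0
masks = segment_video(frames, query_points, static_tracker, disk_segmenter)
print(masks.shape)
```

Passing the tracker and segmenter as callables keeps the loop independent of any particular model, so the stubs could be replaced by wrappers around real models without changing `segment_video`.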

Explore our step-by-step guides to get up and running:

- Getting Started: Learn how to set up your environment and run the demo.

- Prepare Datasets: Instructions on acquiring and prepping necessary datasets.

- Prepare Checkpoints: Steps to fetch model checkpoints.

- Running Experiments: Details on how to execute experiments.

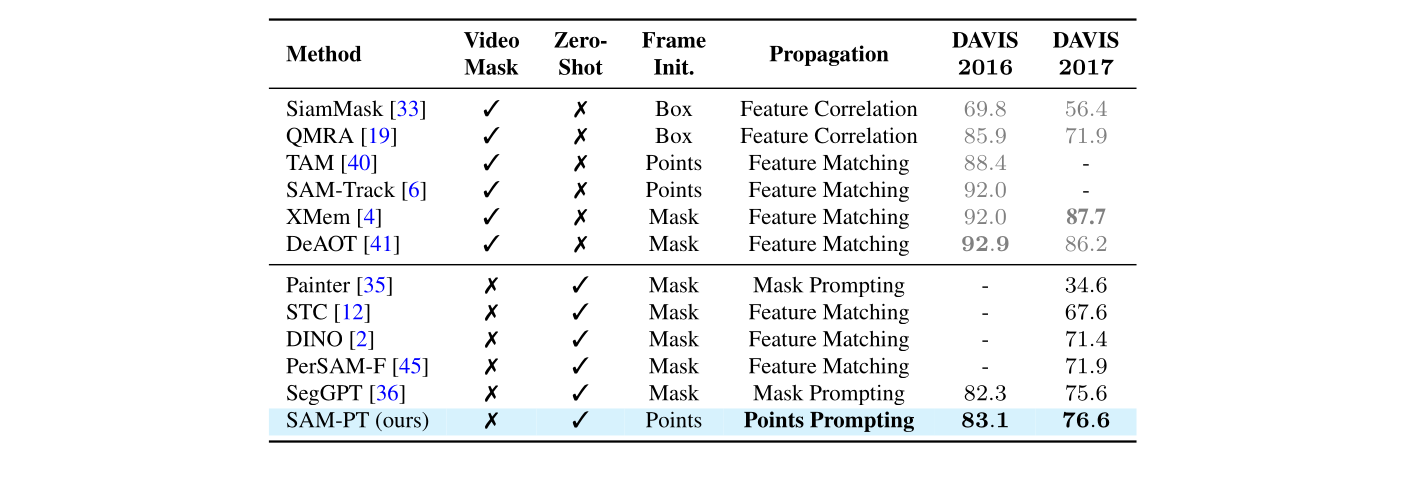

SAM-PT is the first method to utilize sparse point tracking combined with SAM for video segmentation. With such a compact mask representation, we achieve the highest

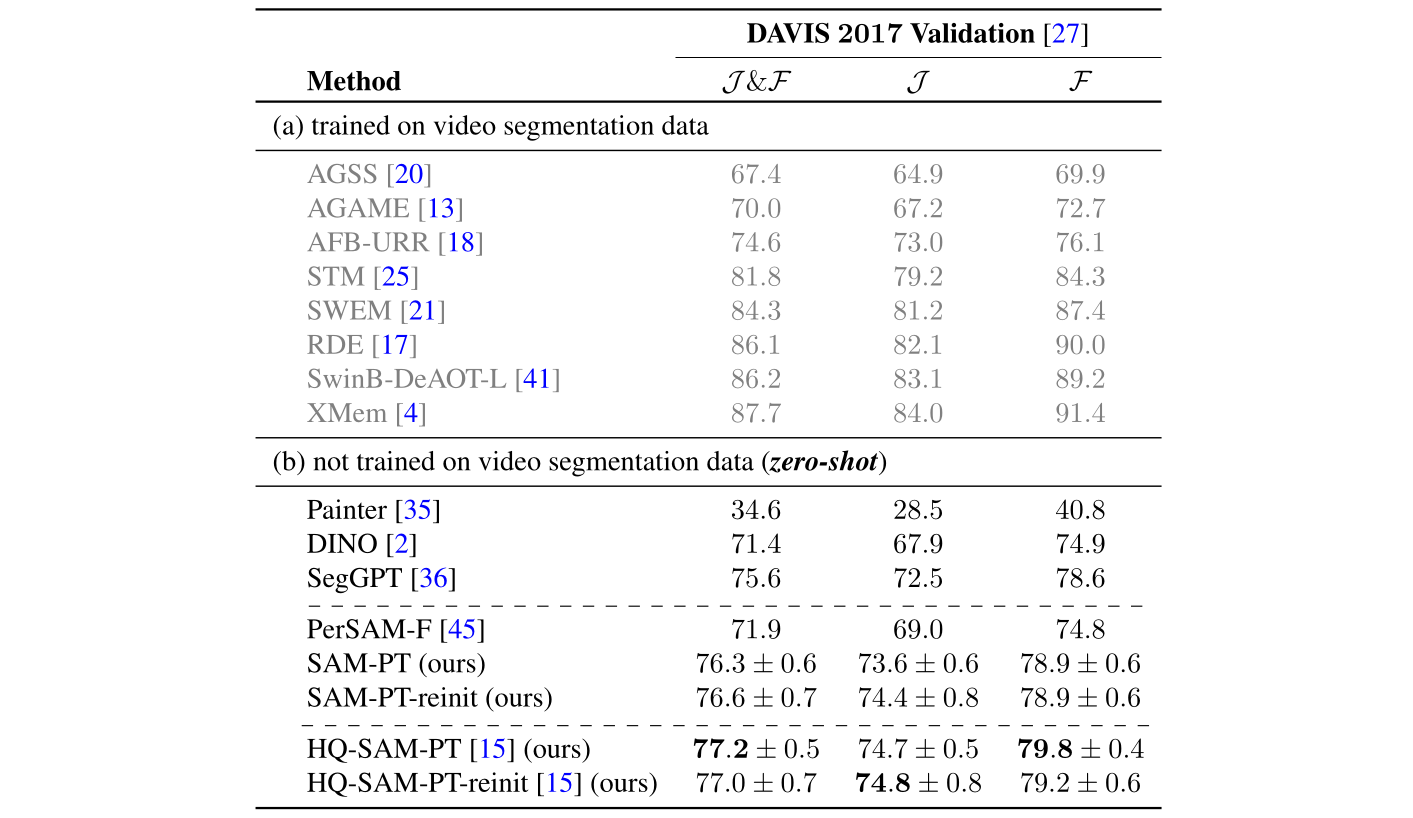

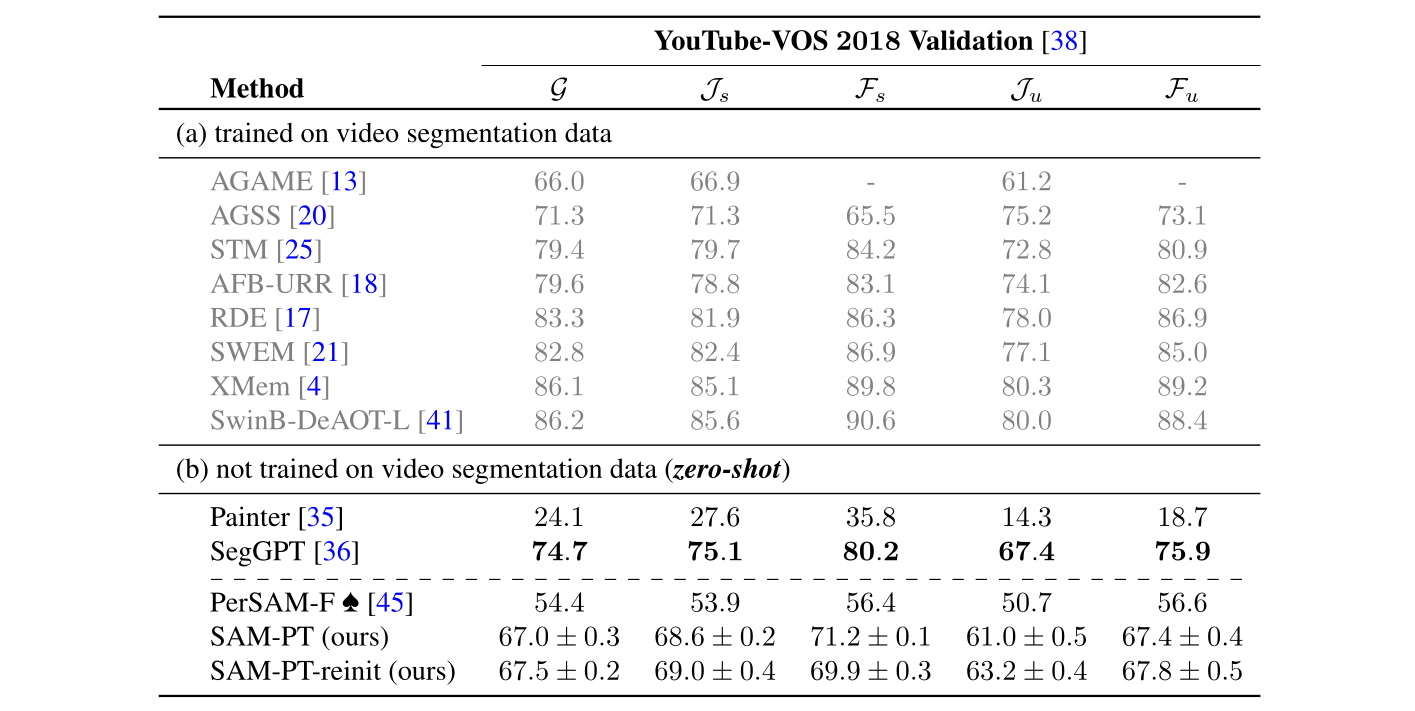

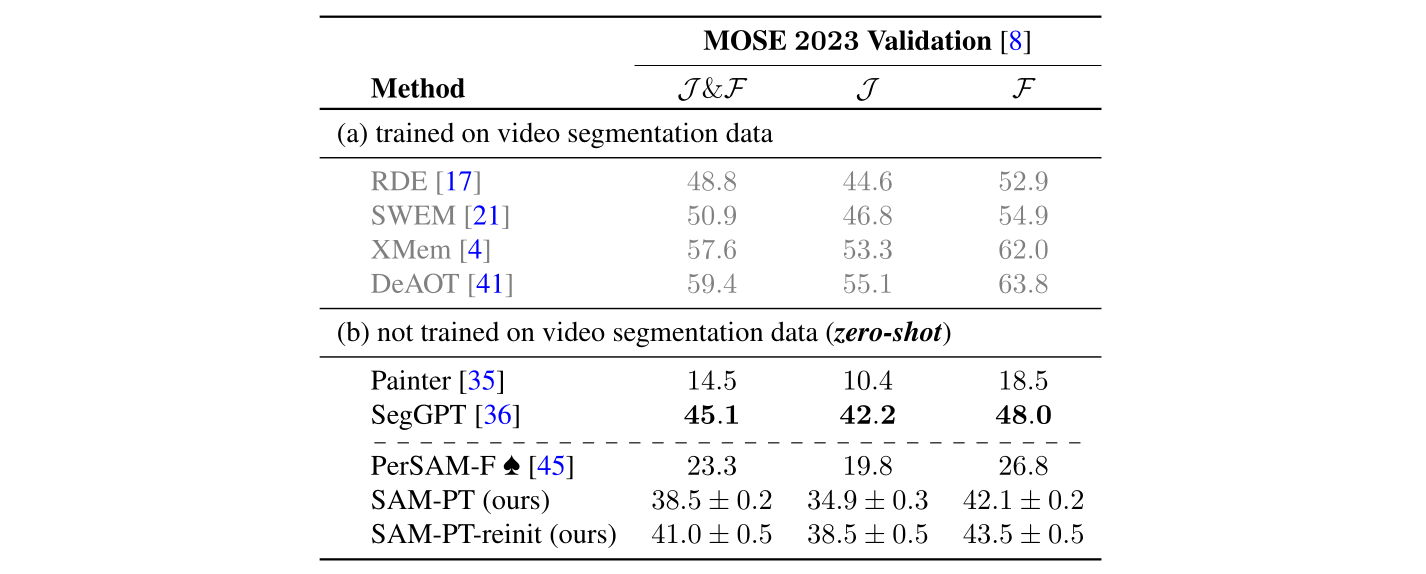

Quantitative results in semi-supervised VOS on the validation subsets of DAVIS 2017, YouTube-VOS 2018, and MOSE 2023:

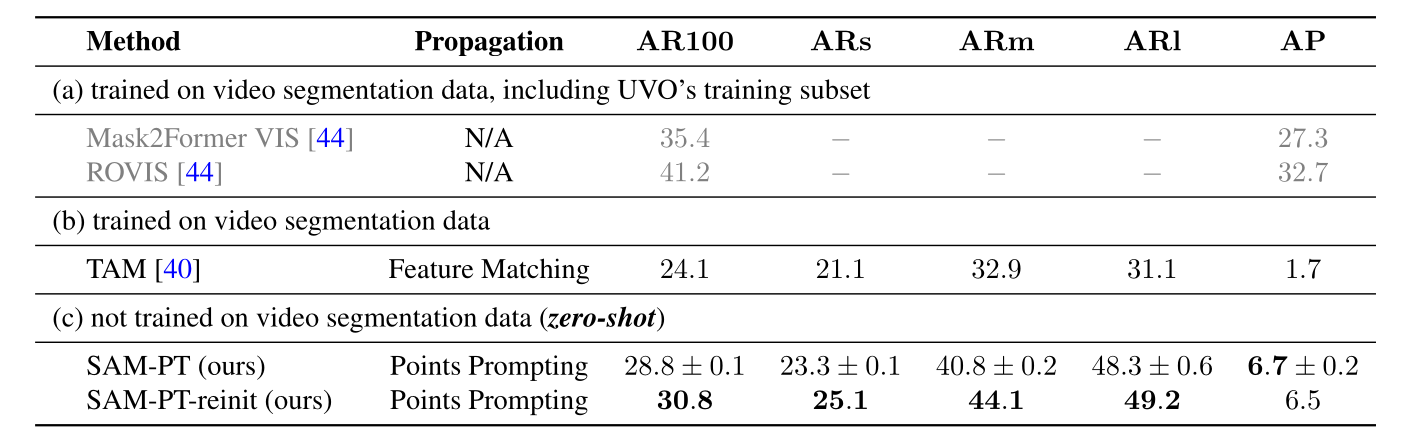

On the validation split of UVO VideoDenseSet v1.0, SAM-PT outperforms TAM even though SAM-PT was not trained on any video segmentation data. TAM is a concurrent approach combining SAM and XMem, where XMem was pre-trained on BL30K and trained on DAVIS and YouTube-VOS, but not on UVO. SAM-PT, in contrast, combines SAM with the PIPS point tracking method, neither of which was trained on any video segmentation task.

We want to thank SAM, PIPS, HQ-SAM, MobileSAM, XMem, and Mask2Former for publicly releasing their code and pretrained models.

If you find SAM-PT useful in your research or if you refer to the results mentioned in our work, please star ⭐ this repository and consider citing 📝:

@article{sam-pt,
  title   = {Segment Anything Meets Point Tracking},
  author  = {Rajič, Frano and Ke, Lei and Tai, Yu-Wing and Tang, Chi-Keung and Danelljan, Martin and Yu, Fisher},
  journal = {arXiv:2307.01197},
  year    = {2023}
}