- 1. Building Blocks

- 2. Setup External Services

- 3. Install & Usage

- 4. Lectures

- 5. License

- 6. Contributors & Teachers

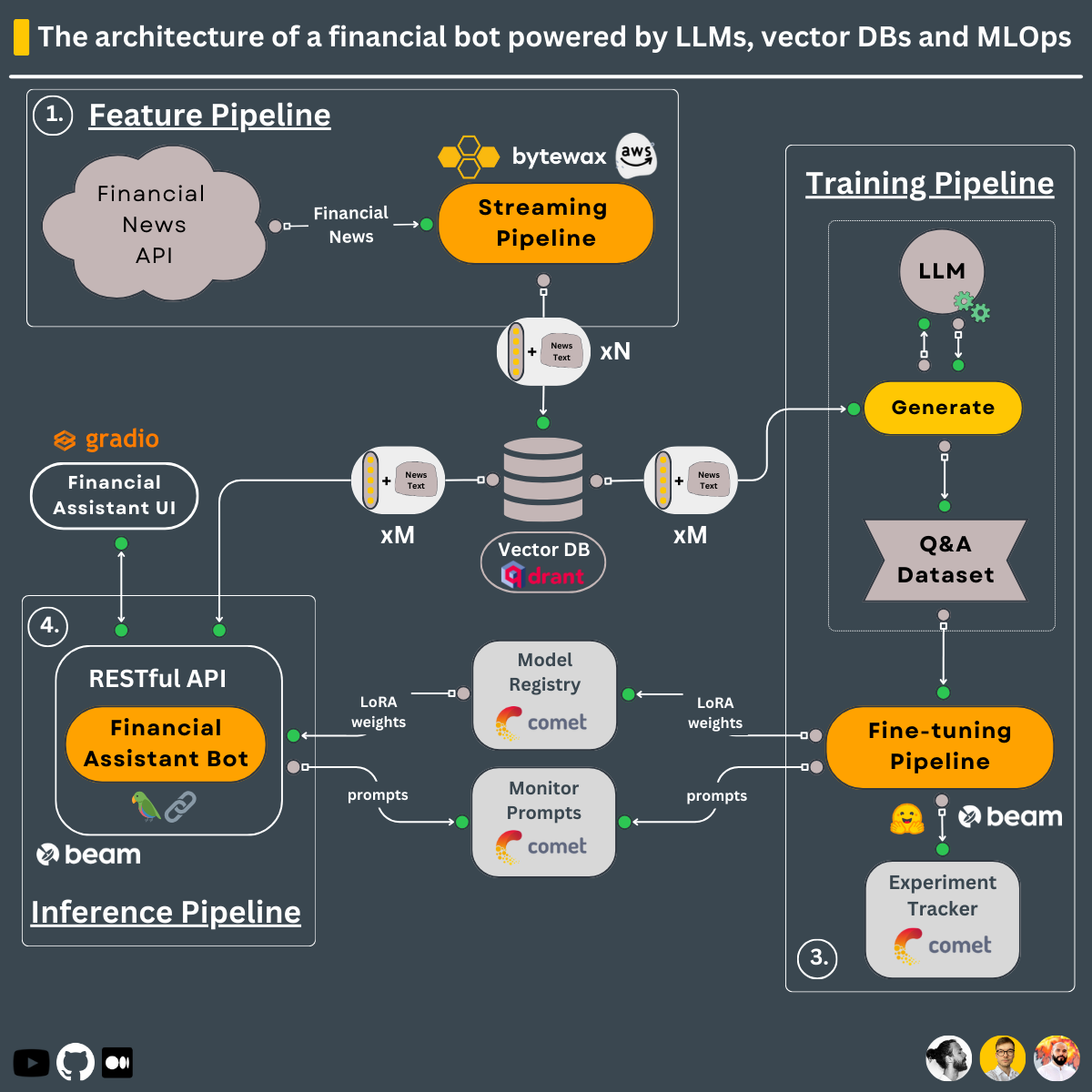

Using the 3-pipeline design, this is what you will learn to build within this course ↓

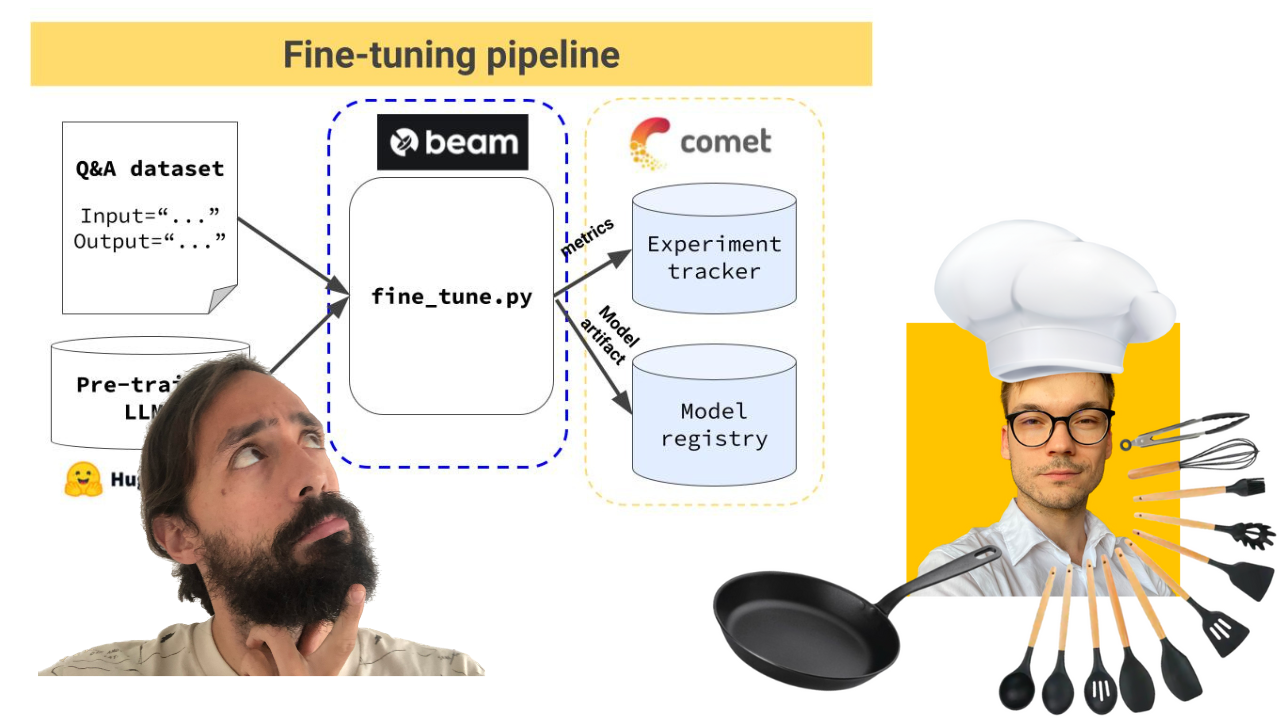

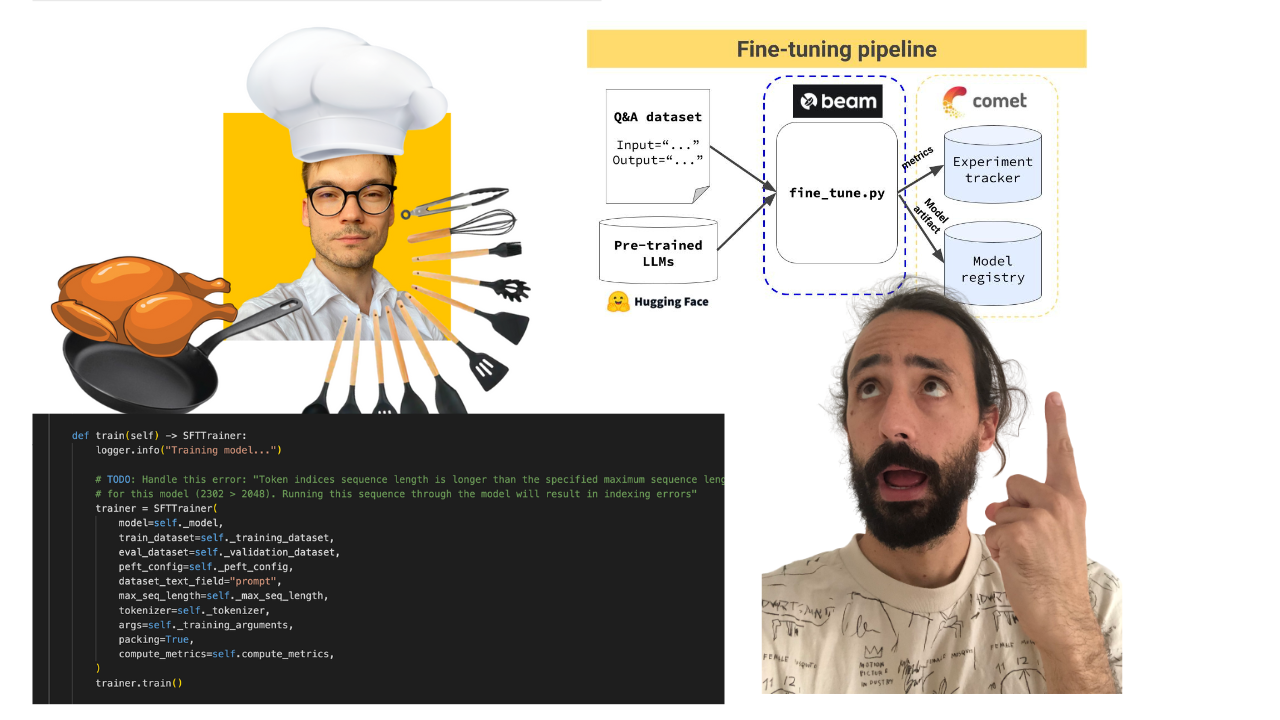

Training pipeline that:

- loads a proprietary Q&A dataset

- fine-tunes an open-source LLM using QLoRA

- logs the training experiments on Comet ML's experiment tracker & the inference results on Comet ML's LLMOps dashboard

- stores the best model on Comet ML's model registry

The training pipeline is deployed using Beam as a serverless GPU infrastructure.

-> Found under the modules/training_pipeline directory.

Minimum hardware requirements:

- CPU: 4 Cores

- RAM: 14 GiB

- VRAM: 10 GiB (mandatory CUDA-enabled Nvidia GPU)
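As a rough back-of-the-envelope check of why ~10 GiB of VRAM can be enough, assume a base model of about 7 billion parameters (e.g., Falcon 7B) whose frozen weights QLoRA loads in 4-bit precision. Counting only those base weights, and comparing against the same weights in 16-bit precision:

$$
\begin{aligned}
\text{4-bit (QLoRA)}: &\quad 7 \times 10^{9} \times 0.5\ \text{B} = 3.5 \times 10^{9}\ \text{B} \approx 3.26\ \text{GiB} \\
\text{16-bit}: &\quad 7 \times 10^{9} \times 2\ \text{B} = 1.4 \times 10^{10}\ \text{B} \approx 13.04\ \text{GiB}
\end{aligned}
$$

The estimate ignores the small LoRA adapters, their optimizer state, and activations, which consume part of the remaining budget. Without 4-bit quantization, the 16-bit weights alone would already exceed 10 GiB.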

Note: Do not worry if you don't have the minimum hardware requirements. We will show you how to deploy the training pipeline to Beam's serverless infrastructure and train the LLM there.
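If you are unsure whether your GPU meets these requirements, a quick check can help you decide between running locally and deploying to Beam. The bash sketch below is one possible approach, assuming the Nvidia driver's nvidia-smi tool is installed; the helper names detect_vram_mib and meets_min_vram are hypothetical, not part of the course code. It compares the first GPU's total memory (reported in MiB) against the minimums listed for the training (10 GiB) and inference (8 GiB) pipelines.

```shell
#!/usr/bin/env bash
# Sketch: decide whether a pipeline can run on the local GPU or should go to Beam.
# detect_vram_mib and meets_min_vram are hypothetical helpers, not course code.

# Print the total VRAM of the first GPU in MiB, or 0 if nvidia-smi is unavailable.
detect_vram_mib() {
  if command -v nvidia-smi >/dev/null 2>&1; then
    nvidia-smi --query-gpu=memory.total --format=csv,noheader,nounits | head -n 1
  else
    echo 0
  fi
}

# Succeed if the available VRAM (in MiB) covers the required VRAM (in GiB).
meets_min_vram() {
  local available_mib=$1 required_gib=$2
  (( available_mib >= required_gib * 1024 ))
}

# Minimum VRAM from the requirements: 10 GiB for training, 8 GiB for inference.
if meets_min_vram "$(detect_vram_mib)" 10; then
  echo "Local GPU meets the training pipeline's VRAM requirement."
else
  echo "Not enough local VRAM: deploy the training pipeline to Beam instead."
fi
```

Treat this as a rough heuristic: total VRAM says nothing about memory already used by other processes.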

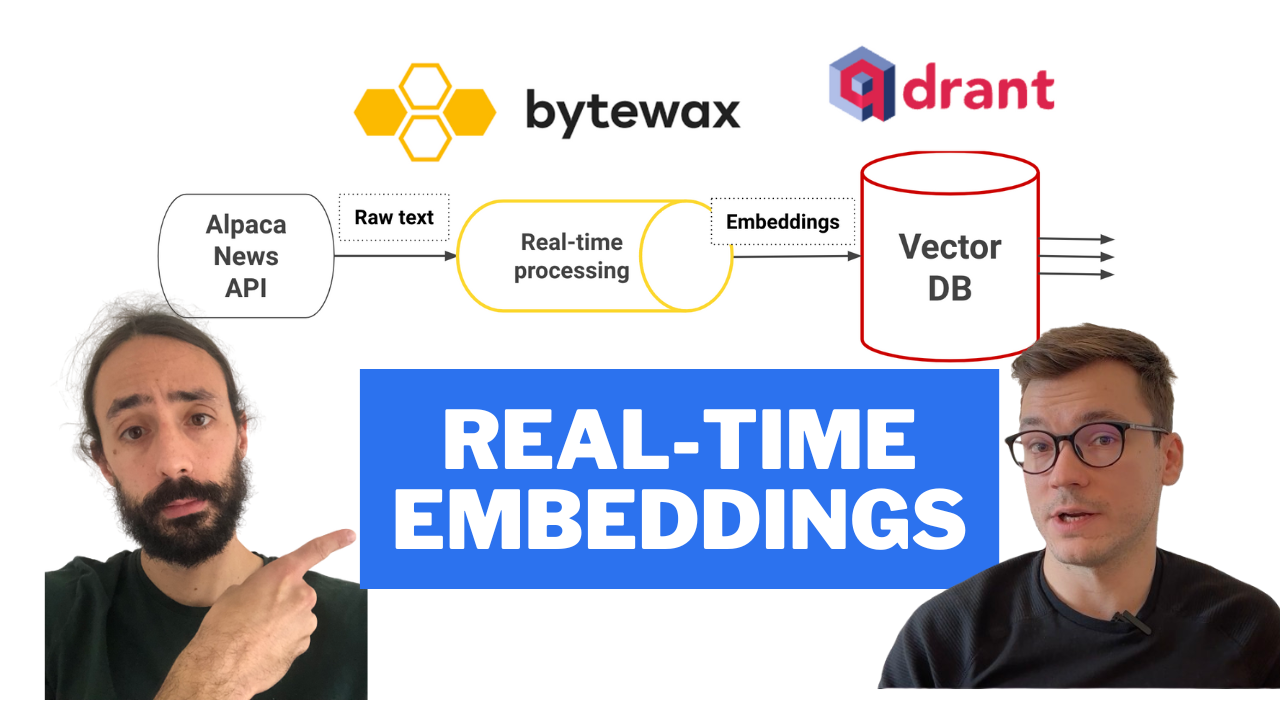

Real-time feature pipeline that:

- ingests financial news from Alpaca

- cleans & transforms the news documents into embeddings in real-time using Bytewax

- stores the embeddings into the Qdrant Vector DB

The streaming pipeline is automatically deployed on an AWS EC2 machine using a CI/CD pipeline built in GitHub Actions.

-> Found under the modules/streaming_pipeline directory.

Minimum hardware requirements:

- CPU: 1 Core

- RAM: 2 GiB

- VRAM: - (no GPU required)

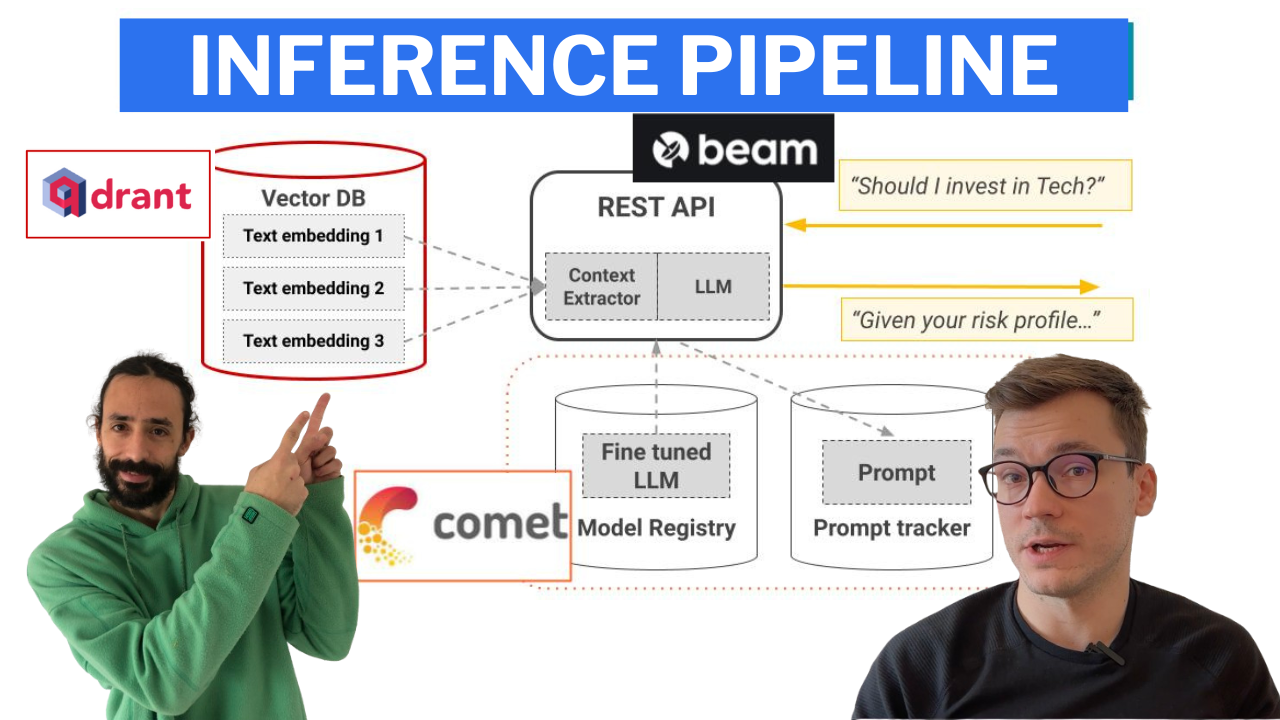

Inference pipeline that uses LangChain to create a chain that:

- downloads the fine-tuned model from Comet's model registry

- takes user questions as input

- queries the Qdrant Vector DB and enhances the prompt with related financial news

- calls the fine-tuned LLM for financial advice using the initial query, the context from the vector DB, and the chat history

- persists the chat history into memory

- logs the prompt & answer into Comet ML's LLMOps monitoring feature

The inference pipeline is deployed as a RESTful API on Beam's serverless GPU infrastructure. For demo purposes, it is also wrapped in a UI implemented in Gradio.

-> Found under the modules/financial_bot directory.

Minimum hardware requirements:

- CPU: 4 Cores

- RAM: 14 GiB

- VRAM: 8 GiB (mandatory CUDA-enabled Nvidia GPU)

Note: Do not worry if you don't have the minimum hardware requirements. We will show you how to deploy the inference pipeline to Beam's serverless infrastructure and call the LLM from there.

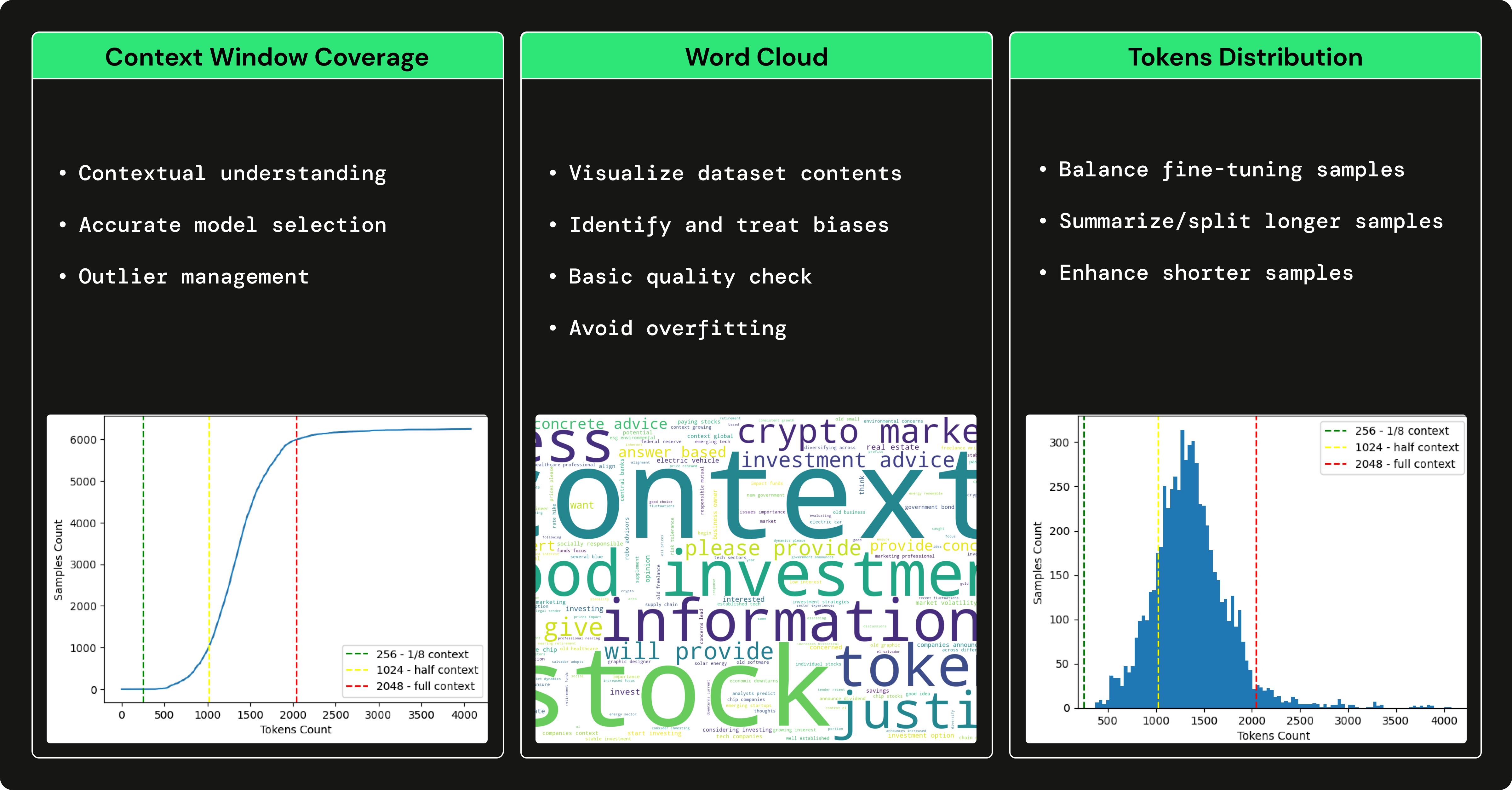

We used GPT-3.5 to generate a financial Q&A dataset, which we then used to fine-tune our open-source LLM so that it specializes in financial terminology and in answering financial questions. Using a large LLM, such as GPT-3.5, to generate a dataset that trains a smaller LLM (e.g., Falcon 7B) is known as fine-tuning with distillation.

→ To understand how we generated the financial Q&A dataset, check out this article written by Pau Labarta.

→ To see a complete analysis of the financial Q&A dataset, check out the dataset_analysis subsection of the course written by Alexandru Razvant.

Before diving into the modules, you have to set up a couple of additional external tools for the course.

NOTE: You can also set them up as you go, as each module tells you which tools it needs.

Alpaca: financial news data source

Follow this document, which shows you how to create a FREE account and generate the API keys you will need in this course.

Note: One Alpaca data connection is FREE.

Qdrant: serverless vector DB

Go to Qdrant and create a FREE account.

Afterward, follow this document to generate the API keys you will need in this course.

Note: We will use only Qdrant's freemium plan.

Comet ML: serverless ML platform

Go to Comet ML and create a FREE account.

Afterward, follow this guide to generate an API key and a new project, both of which you will need in this course.

Note: We will use only Comet ML's freemium plan.
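Assuming the Alpaca, Qdrant, and Comet ML credentials are passed to the modules as environment variables (check each module's README for how it actually loads them), a small pre-flight check can catch a missing key before a pipeline fails halfway. This is a minimal bash sketch; the function check_required_env and the variable names (ALPACA_API_KEY, QDRANT_URL, COMET_API_KEY, and so on) are hypothetical, so substitute the names each module asks for.

```shell
#!/usr/bin/env bash
# Sketch: report which credentials are still missing before running a module.
# check_required_env and the variable names below are hypothetical examples.

# Print each variable name (from the arguments) that is unset or empty;
# return non-zero if at least one is missing.
check_required_env() {
  local name missing=0
  for name in "$@"; do
    # ${!name} is bash indirect expansion: the value of the variable named $name.
    if [[ -z "${!name:-}" ]]; then
      echo "missing: ${name}"
      missing=1
    fi
  done
  return "$missing"
}

# Hypothetical requirements for a module that uses all three services.
check_required_env ALPACA_API_KEY ALPACA_API_SECRET QDRANT_URL QDRANT_API_KEY COMET_API_KEY \
  || echo "Set the missing variables before running the pipeline."
```

Because you can set up the services as you go, each module can call the check with only the variables it needs.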

Beam: serverless GPU compute | training & inference pipelines

Go to Beam and create a FREE account.

Afterward, follow their installation guide to install their CLI and configure it with your Beam credentials.

To read more about Beam, here is an introduction guide.

Note: You get ~10 free compute hours. Afterward, you pay only for what you use. If you have a CUDA-enabled Nvidia GPU with enough VRAM (10 GiB for training, 8 GiB for inference) and don't want to deploy the training and inference pipelines, using Beam is optional.

When using Poetry, we had trouble locating the Beam CLI inside a Poetry virtual environment. To fix this, after installing Beam, we create a symlink in the Poetry environment's bin directory that points to the Beam CLI, as follows:

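```shell
export COURSE_MODULE_PATH=<your-course-module-path> # e.g., modules/training_pipeline
cd "$COURSE_MODULE_PATH"

# Root of the module's Poetry virtual environment (two levels above its python binary).
export POETRY_ENV_PATH=$(dirname "$(dirname "$(poetry run which python)")")

# Make the Beam CLI (installed at /usr/local/bin/beam) visible inside the Poetry environment.
ln -s /usr/local/bin/beam "${POETRY_ENV_PATH}/bin/beam"
```

AWS: cloud compute | feature pipeline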

Go to AWS, create an account, and generate a pair of credentials.

Afterward, download and install the AWS CLI v2.11.22 and configure it with your credentials.
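Before configuring the CLI, it can help to confirm that the installed version is a v2 release. The bash sketch below parses the first token of the aws --version output (a line such as aws-cli/2.11.22 Python/3.11.3 Linux/...); the helper name aws_cli_version is hypothetical, not part of the course code.

```shell
#!/usr/bin/env bash
# Sketch: check that the AWS CLI on PATH is a v2 release.
# aws_cli_version is a hypothetical helper, not part of the course code.

# Print the version number from `aws --version` output (or from $1, if given).
aws_cli_version() {
  local raw
  if [[ $# -gt 0 ]]; then
    raw=$1
  else
    raw=$(aws --version 2>&1) || return 1
  fi
  raw="${raw%% *}"                  # keep the first token, e.g. "aws-cli/2.11.22"
  [[ $raw == aws-cli/* ]] || return 1
  echo "${raw#aws-cli/}"            # drop the "aws-cli/" prefix
}

if version=$(aws_cli_version) && [[ $version == 2.* ]]; then
  echo "AWS CLI ${version} found; run 'aws configure' to add your credentials."
else
  echo "AWS CLI v2 not found; install it first."
fi
```

If the check passes, aws configure prompts for your access key ID, secret access key, default region, and output format; afterward, aws sts get-caller-identity is a quick way to confirm that the credentials work.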

Note: You pay only for what you use. You will deploy only a t2.small EC2 VM, which costs ~$0.023/hour. If you don't want to deploy the feature pipeline, using AWS is optional.
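To put the ~$0.023/hour figure in perspective, the awk-based sketch below multiplies the hourly rate by the number of running hours. The helper name estimate_cost is hypothetical, and the rate is the one quoted above; actual EC2 prices depend on the region and change over time.

```shell
#!/usr/bin/env bash
# Sketch: estimate the cost of keeping the feature pipeline's t2.small VM running.
# estimate_cost is a hypothetical helper; the rate comes from the note above.

# Print hourly_rate * hours, rounded to cents.
estimate_cost() {
  awk -v rate="$1" -v hours="$2" 'BEGIN { printf "%.2f\n", rate * hours }'
}

estimate_cost 0.023 24    # one day of uptime
estimate_cost 0.023 720   # a 30-day month, running 24/7
```

For a VM left running 24/7, this comes to about $0.55 per day and about $16.56 per 30-day month.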

Every module has its own dependencies and scripts. In a production setup, every module would live in its own repository, but for learning purposes, we keep everything in one place.

Thus, check out each module's README to see how to install and use it.

We strongly encourage you to clone this repository and replicate everything we've done to get the most out of this course.

In each module's video lectures, articles, and README documentation, you will find step-by-step instructions.

Happy learning! 🙏

The GitHub code (released under the MIT license) and video lectures (released on YouTube) are entirely free of charge. Always will be.

The Medium lessons are released behind Medium's paywall. If you already have a Medium membership, they are free; otherwise, reading them requires a $5 monthly membership fee.

If you have any questions or issues during the course, we encourage you to open an issue in this repository, where you can describe your problem in depth.

Otherwise, you can also contact the teachers on LinkedIn:

To understand the entire code step-by-step, check out our articles ↓

- Lesson 2: Why you must choose streaming over batch pipelines when doing RAG in LLM applications

- Lesson 3: This is how you can build & deploy a streaming pipeline to populate a vector DB for real-time RAG

- Lesson 4: 5 concepts that must be in your LLM fine-tuning kit

- Lesson 5: The secret of writing generic code to fine-tune any LLM using QLoRA

- Lesson 6: From LLM development to continuous training pipelines using LLMOps

- Lesson 7: Design a RAG LangChain application leveraging the 3-pipeline architecture

- Lesson 8: Prepare your RAG LangChain application for production

This course is an open-source project released under the MIT license. Thus, as long as you distribute our LICENSE and acknowledge our work, you can safely clone or fork this project and use it as a source of inspiration for whatever you want (e.g., university projects, college degree projects, etc.).

| Name | Role | Description | Links |
| --- | --- | --- | --- |
| Pau Labarta Bajo | Senior ML & MLOps Engineer | Main teacher. The guy from the video lessons. | Twitter/X, YouTube, Real-World ML Newsletter, Real-World ML Site |
| Alexandru Razvant | Senior ML Engineer | Second chef. The engineer behind the scenes. | Neura Leaps |
| Paul Iusztin | Senior ML & MLOps Engineer | Main chef. The guy who randomly pops into the video lessons. | Twitter/X, Decoding ML Newsletter, Personal Site, ML & MLOps Hub |