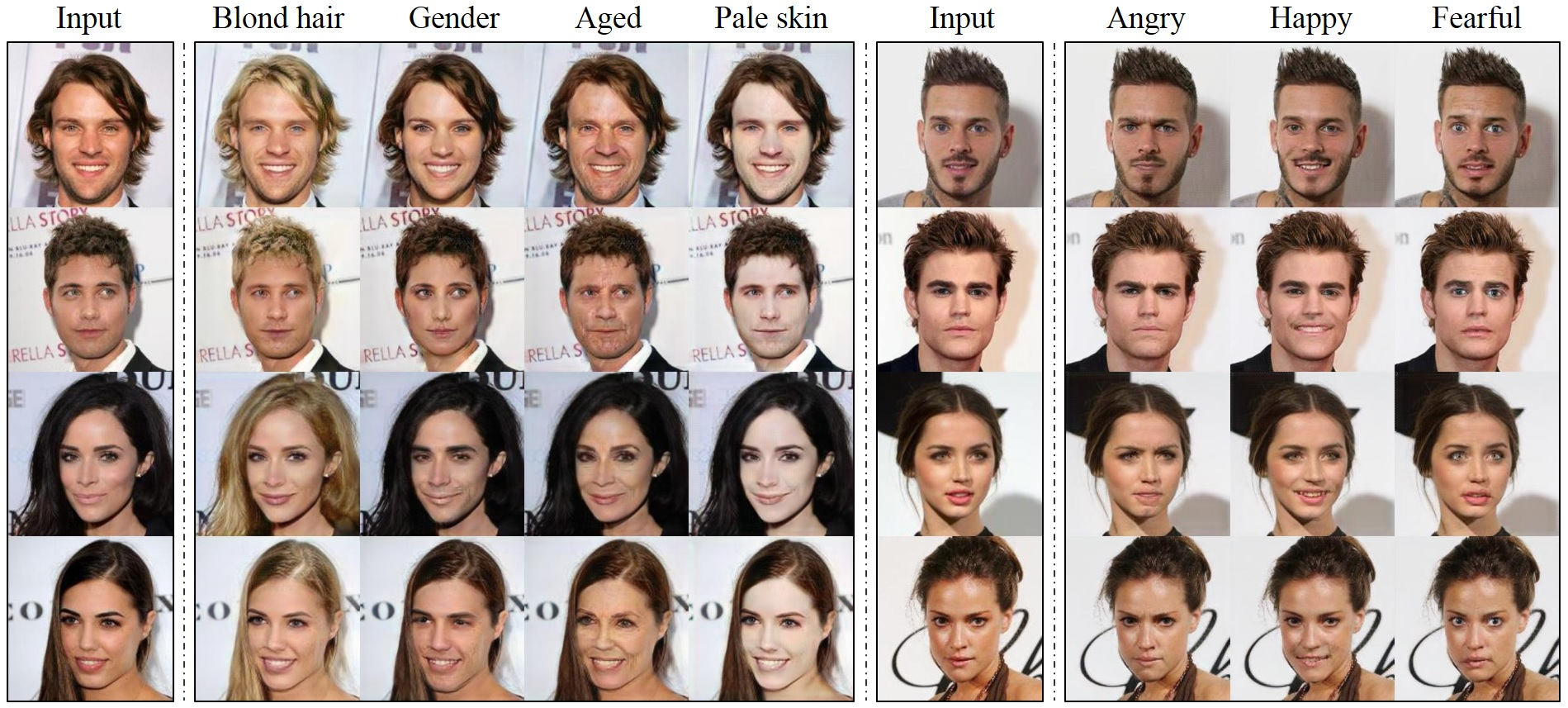

PyTorch implementation of StarGAN: Unified Generative Adversarial Networks for Multi-Domain Image-to-Image Translation. StarGAN can flexibly translate an input image to any desired target domain using only a single generator and a discriminator. The demo video for StarGAN can be found here.
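To make the "single generator" idea concrete, here is a minimal, framework-free sketch of how a target-domain label can be fed to one network together with the image: the label is replicated over the spatial grid and stacked onto the image channels, as described in the StarGAN paper. It is an illustration only, not this repository's code; the helper names `tile_label` and `generator_input` and the toy values are assumptions made for the example.

```python
# Illustrative sketch (not the repository's code): conditioning one generator
# on a target-domain label by tiling the label over the image grid and
# stacking the resulting planes onto the image channels.

def tile_label(label, height, width):
    """Turn a label vector of length c_dim into c_dim constant H x W planes."""
    return [[[float(v)] * width for _ in range(height)] for v in label]

def generator_input(image, label):
    """Concatenate image channels (C x H x W nested lists) with label planes."""
    height, width = len(image[0]), len(image[0][0])
    return image + tile_label(label, height, width)

# Toy 3-channel 2x2 "image" and a 5-dimensional target label (c_dim=5).
image = [[[0.0, 0.1], [0.2, 0.3]] for _ in range(3)]
target = [0, 1, 0, 0, 1]  # hypothetical attribute vector

x = generator_input(image, target)
print(len(x), len(x[0]), len(x[0][0]))  # channels, height, width -> 8 2 2
```

Because the label is just extra input channels, switching the target domain only means changing `target`; the same generator weights are reused. A real implementation would perform the same operation on tensors.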

Yunjey Choi, Minje Choi, Munyoung Kim, Jung-Woo Ha, Sung Kim, and Jaegul Choo

Korea University, Clova AI Research (NAVER), The College of New Jersey, HKUST

The images are generated by StarGAN trained on the CelebA dataset.

See original repo.

- Python 3.5+

- PyTorch 0.2.0

- TensorFlow 1.3+ (optional for tensorboard)
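Before cloning, it may help to confirm that the prerequisites listed above are present. The following is a small sketch using only the standard library; the function name `check_environment` is hypothetical, and it checks only the Python version and whether the `torch` and `tensorflow` modules can be found, not their exact versions.

```python
import sys
from importlib import util

def check_environment(min_python=(3, 5), modules=("torch", "tensorflow")):
    """Return a dict describing whether the interpreter and modules are usable."""
    report = {"python_ok": sys.version_info[:2] >= min_python}
    for name in modules:
        # find_spec returns None when a top-level module is not installed.
        report[name] = util.find_spec(name) is not None
    return report

if __name__ == "__main__":
    for key, ok in check_environment().items():
        print("{}: {}".format(key, "ok" if ok else "missing"))
```

A missing `tensorflow` entry is not a problem unless you want tensorboard logging, since that dependency is optional.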

$ git clone https://github.com/ericpts/StarGAN.git

$ cd StarGAN/

$ python3 init.py

$ python main.py --mode='train' --dataset='CelebA' --c_dim=5 --image_size=128 \
  --sample_path='stargan_celebA/samples' --log_path='stargan_celebA/logs' \
  --model_save_path='stargan_celebA/models' --result_path='stargan_celebA/results'

$ python main.py --mode='test' --dataset='CelebA' --c_dim=5 --image_size=128 --test_model='20_1000' \
  --sample_path='stargan_celebA/samples' --log_path='stargan_celebA/logs' \
  --model_save_path='stargan_celebA/models' --result_path='stargan_celebA/results'

$ python main.py --mode='test' --dataset='RaFD' --c_dim=8 --image_size=128 \
  --test_model='200_200' --rafd_image_path='data/RaFD/test' \
  --sample_path='stargan_rafd/samples' --log_path='stargan_rafd/logs' \
  --model_save_path='stargan_rafd/models' --result_path='stargan_rafd/results'

$ python main.py --mode='test' --dataset='Both' --image_size=256 --test_model='200000' \
  --sample_path='stargan_both/samples' --log_path='stargan_both/logs' \
  --model_save_path='stargan_both/models' --result_path='stargan_both/results'
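For readers who want to see how flags like the ones in the commands above are typically consumed, here is a hedged `argparse` sketch. It is not the repository's `main.py`: the function `build_parser`, the defaults, and the `choices` lists are assumptions inferred only from the flag names and values shown in the commands.

```python
import argparse

def build_parser():
    """Hypothetical parser covering only the flags used in the commands above."""
    p = argparse.ArgumentParser(description="StarGAN-style command line (sketch)")
    p.add_argument("--mode", choices=["train", "test"], default="train")
    p.add_argument("--dataset", choices=["CelebA", "RaFD", "Both"], default="CelebA")
    p.add_argument("--c_dim", type=int, default=5)         # number of domain labels
    p.add_argument("--image_size", type=int, default=128)
    p.add_argument("--test_model", type=str, default=None)  # checkpoint tag, e.g. '20_1000'
    p.add_argument("--rafd_image_path", type=str, default=None)
    for name in ("sample_path", "log_path", "model_save_path", "result_path"):
        p.add_argument("--" + name, type=str, default=None)
    return p

# The shell removes the single quotes, so '--mode=test' is what the program sees.
args = build_parser().parse_args([
    "--mode=test", "--dataset=RaFD", "--c_dim=8", "--image_size=128",
    "--test_model=200_200", "--rafd_image_path=data/RaFD/test",
    "--result_path=stargan_rafd/results",
])
print(args.mode, args.dataset, args.c_dim, args.test_model)
```

Note that `--c_dim` differs between datasets in the commands (5 for CelebA, 8 for RaFD), so it must match the checkpoint being loaded.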

If this work is useful for your research, please cite our arXiv paper.

@article{choi2017stargan,
  title = {StarGAN: Unified Generative Adversarial Networks for Multi-Domain Image-to-Image Translation},
  author = {Choi, Yunjey and Choi, Minje and Kim, Munyoung and Ha, Jung-Woo and Kim, Sunghun and Choo, Jaegul},
  journal = {arXiv preprint arXiv:1711.09020},
  year = {2017}
}

This work was mainly done while the first author did a research internship at Clova AI Research, NAVER (CLAIR). We also thank all the researchers at CLAIR, especially Donghyun Kwak, for insightful discussions.