The tl;dr on a few notable transformer/language model papers, plus other papers (alignment, memorization, etc.).

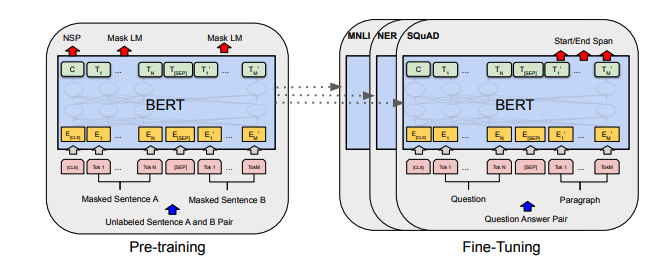

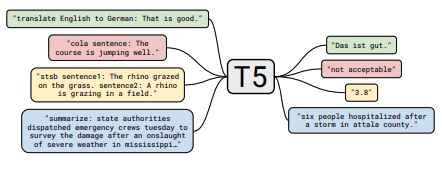

Models: GPT-\*, \*BERT\*, Adapter-\*, \*T5, etc.

Each set of notes includes links to the paper, the original code implementation (if available), and the Hugging Face 🤗 implementation.

Here is an example: t5.

The transformer papers are presented in roughly chronological order below. Go to the "👉 Notes 👈" column to find the notes for each paper.

This repo also includes a single table quantifying the differences across transformer papers.

- Quick Note

- Motivation

- Papers::Transformer Papers

- Papers::1 Table To Rule Them All

- Papers::Alignment Papers

- Papers::Scaling Law Papers

- Papers::LM Memorization Papers

- Papers::Limited Label Learning Papers

- How To Contribute

- How To Point Out Errors

- Citation

- License

This is not an intro to deep learning in NLP. If you are looking for that, I recommend one of the following: Fast AI's course, one of the Coursera courses, or maybe this old thing. Come here after that.

With the explosion of papers on all things Transformers over the past few years, it seems useful to catalog the salient features, results, and insights of each paper in a digestible format. Hence this repo.

All of the table summaries above are collapsed into one really big table here.

| Paper | Year | Institute | 👉 Notes 👈 | Codes |
|---|---|---|---|---|
| Fine-Tuning Language Models from Human Preferences | 2019 | OpenAI | To-Do | None |

| Paper | Year | Institute | 👉 Notes 👈 | Codes |
|---|---|---|---|---|
| Scaling Laws for Neural Language Models | 2020 | OpenAI | To-Do | None |

| Paper | Year | Institute | 👉 Notes 👈 | Codes |
|---|---|---|---|---|
| Extracting Training Data from Large Language Models | 2021 | Google et al. | To-Do | None |
| Deduplicating Training Data Makes Language Models Better | 2021 | Google et al. | To-Do | None |

| Paper | Year | Institute | 👉 Notes 👈 | Codes |
|---|---|---|---|---|
| An Empirical Survey of Data Augmentation for Limited Data Learning in NLP | 2021 | GIT/UNC | To-Do | None |
| Learning with fewer labeled examples | 2021 | Kevin Murphy & Colin Raffel (Preprint: "Probabilistic Machine Learning", Chapter 19) | Worth a read, won't summarize here. | None |

If you are interested in contributing to this repo, feel free to do the following:

- Fork the repo.

- Create a Draft PR with the paper of interest (to prevent "in-flight" issues).

- Use the suggested template to write your "tl;dr". If it's an architecture paper, you may also want to add to the larger table here.

- Submit your PR.

Undoubtedly there is information that is incorrect here. Please open an Issue and point it out.

@misc{cliff-notes-transformers,
  author = {Thompson, Will},
  url = {https://github.com/will-thompson-k/cliff-notes-transformers},
  year = {2021}
}

For the notes above, I've linked the original papers.

MIT