This file contains notes about people active in resilience engineering, organized alphabetically. I'm using these notes to help me get my head around the different players and associated concepts.

For each person, I list concepts that they reference in their writings, along with some of their publications. The publications listed aren't comprehensive: they're ones I've read or have added to my to-read list.

- John Allspaw

- Lisanne Bainbridge

- Johan Bergström

- Todd Conklin

- Richard I. Cook

- Sidney Dekker

- John C. Doyle

- Anders Ericsson

- Meir Finkel

- Erik Hollnagel

- Gary Klein

- Nancy Leveson

- Elinor Ostrom

- Jean Pariès

- Emily Patterson

- Charles Perrow

- Shawna J. Perry

- Jens Rasmussen

- James Reason

- Nadine Sarter

- Diane Vaughan

- Robert L. Wears

- David Woods

- John Wreathall

Allspaw is the former CTO of Etsy. He applies concepts from resilience engineering to the tech industry. He is one of the founders of Adaptive Capacity Labs, a resilience engineering consultancy.

- Trade-Offs Under Pressure: Heuristics and Observations Of Teams Resolving Internet Service Outages

- Incidents as we Imagine Them Versus How They Actually Are (video)

- Debrief Facilitation Guide

- Fault Injection in Production: Making the case for resiliency testing

Bainbridge is (was?) a psychology researcher. (I have not been able to find any recent information about her).

Bainbridge is famous for her Ironies of automation paper, which continues to be cited.

- automation

- design errors

- human factors / ergonomics

- cognitive modelling

- cognitive architecture

- mental workload

- situation awareness

- cognitive error

- skill and training

- interface design

Bergström is a safety researcher and consultant. He runs the Master Program of Human Factors and Systems Safety at Lund University.

- Resilience engineering: Current status of the research and future challenges

- Rule- and role retreat: An empirical study of procedures and resilience

Conklin's books are on my reading list, but I haven't read anything by him yet. I have listened to his great Preaccident investigation podcast.

- Pre-accident investigations: an introduction to organizational safety

- Pre-accident investigations: better questions - an applied approach to operational learning

Cook is a medical doctor who studies failures in complex systems. He is one of the founders of Adaptive Capacity Labs, a resilience engineering consultancy.

- complex systems

- degraded mode

- sharp end / blunt end

- Going solid

- Cycle of error

- How complex systems fail

- Distancing through differencing: An obstacle to organizational learning following accidents

- Being bumpable

- Behind Human Error

- Incidents - markers of resilience or brittleness?

- “Going solid”: a model of system dynamics and consequences for patient safety

- Operating at the Sharp End: The Complexity of Human Error

- Patient boarding in the emergency department as a symptom of complexity-induced risks

Dekker developed the theory of drift, characterized by five concepts:

- Scarcity and competition

- Decrementalism, or small steps

- Sensitive dependence on initial conditions

- Unruly technology

- Contribution of the protective structure

- Drift into failure

- New view vs old view of human performance

- Just culture

- complexity

- broken part

- Newton-Descartes

- diversity

- systems theory

- unruly technology

- decrementalism

- Drift into failure

- Reconstructing human contributions to accidents: the new view on error and performance

- The field guide to 'human error' investigations

- Behind Human Error

- Rule- and role retreat: An empirical study of procedures and resilience

Doyle is a control systems researcher.

- Robust yet fragile

- Highly optimized tolerance

- The “robust yet fragile” nature of the Internet

- Highly Optimized Tolerance: Robustness and Design in Complex Systems

Ericsson introduced the idea of deliberate practice as a mechanism for achieving a high level of expertise.

Ericsson isn't directly associated with the field of resilience engineering. However, Gary Klein's work is informed by his, and I have a particular interest in how people improve in expertise, so I'm including him here.

- Expertise

- Deliberate practice

- Protocol analysis

Finkel is a Colonel in the Israel Defense Forces (IDF) and the Director of the IDF's Ground Forces Concept Development and Doctrine Department.

Hollnagel proposed that there is always a fundamental tradeoff between efficiency and thoroughness, which he called the ETTO principle.

Safety-I: avoiding things that go wrong

- looking at what goes wrong

- bimodal view of work and activities (acceptable vs unacceptable)

- find-and-fix approach

- prevent transition from 'normal' to 'abnormal'

- causality credo: the belief that adverse outcomes happen because something goes wrong (they have causes that can be found and treated)

- it either works or it doesn't

- systems are decomposable

- functioning is bimodal

Safety-II: performance variability rather than bimodality

- the system’s ability to succeed under varying conditions, so that the number of intended and acceptable outcomes (in other words, everyday activities) is as high as possible

- performance is always variable

- performance variation is ubiquitous

- things that go right

- focus on frequent events

- remain sensitive to possibility of failure

- be thorough as well as efficient

Hollnagel proposed the Functional Resonance Analysis Method (FRAM) for modeling complex socio-technical systems.

- ETTO (efficiency thoroughness tradeoff) principle

- FRAM (functional resonance analysis method)

- Safety-I and Safety-II

- things that go wrong vs things that go right

- causality credo

- performance variability

- bimodality

- emergence

- work-as-imagined vs. work-as-done

- The ETTO Principle: Efficiency-Thoroughness Trade-Off: Why Things That Go Right Sometimes Go Wrong

- From Safety-I to Safety-II: A White Paper

- Safety-I and Safety-II: The past and future of safety management

- FRAM: The Functional Resonance Analysis Method: Modelling Complex Socio-technical Systems

- Joint Cognitive Systems: Patterns in Cognitive Systems Engineering

- Resilience Engineering: Concepts and Precepts

- I want to believe: some myths about the management of industrial safety

Klein studies how experts are able to quickly make effective decisions in high-tempo situations.

- naturalistic decision making (NDM)

- intuitive expertise

- cognitive task analysis

- Sources of power: how people make decisions

- Working minds: a practitioner's guide to cognitive task analysis

- Patterns in Cooperative Cognition

- Can We Trust Best Practices? Six Cognitive Challenges of Evidence-Based Approaches

- Conditions for intuitive expertise: a failure to disagree

Nancy Leveson is a computer science researcher with a focus on software safety.

Leveson developed the accident causality model known as STAMP: the Systems-Theoretic Accident Model and Processes.

See STAMP for some more detailed notes of mine.

- Software safety

- STAMP (systems-theoretic accident model and processes)

- STPA (system-theoretic process analysis) hazard analysis technique

- CAST (causal analysis based on STAMP) accident analysis technique

- Systems thinking

- hazard

- interactive complexity

- system accident

- dysfunctional interactions

- safety constraints

- control structure

- dead time

- time constants

- feedback delays

- A New Accident Model for Engineering Safer Systems

- Engineering a safer world

- STPA Handbook

- Safeware

- Resilience Engineering: Concepts and Precepts

- High-pressure steam engines and computer software

Ostrom was a Nobel Prize-winning economics and political science researcher.

- Coping with tragedies of the commons

- Governing the Commons: The Evolution of Institutions for Collective Action

- tragedy of the commons

- polycentric governance

- social-ecological system framework

Pariès is the president of Dédale, a safety and human factors consultancy.

Patterson is a researcher who applies human factors engineering to improve patient safety in healthcare.

Perrow is a sociologist who studied the Three Mile Island disaster.

- Normal accidents

- Common-mode

Perry is a medical researcher who studies emergency medicine.

- Underground adaptations

- Articulated functions vs. important functions

- Unintended effects

- Apparent success vs real success

- Exceptions

- Dynamic environments

- Underground adaptations: case studies from health care

- Can We Trust Best Practices? Six Cognitive Challenges of Evidence-Based Approaches

Jens Rasmussen was a very influential researcher in human factors and safety systems.

TBD

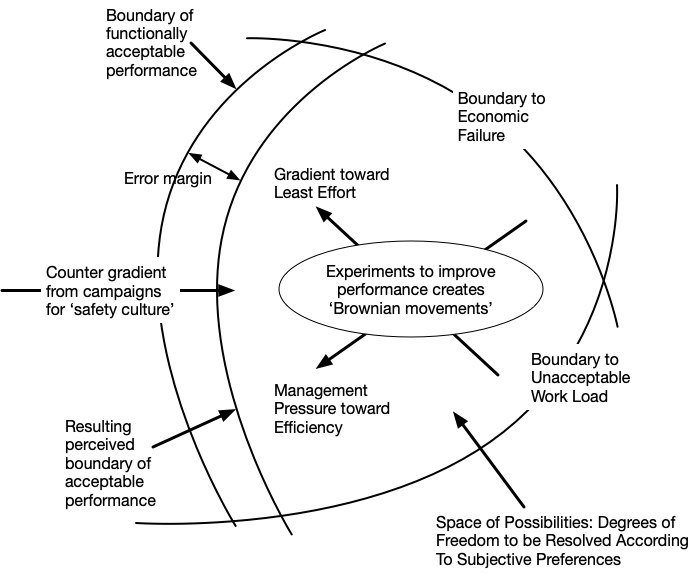

Rasmussen proposed a state-based model in which a socio-technical system moves within a region of a state space. The region is surrounded by different boundaries:

- economic failure

- unacceptable work load

- functionally acceptable performance

Incentives push the system towards the boundary of acceptable performance: accidents happen when the boundary is exceeded.

Source: Risk management in a dynamic society: a modelling problem

TBD

- Boundaries:

- boundary of functionally acceptable performance

- boundary to economic failure

- boundary to unacceptable work load

- Cognitive systems engineering

- Skill-rule-knowledge (SRK) model

- AcciMaps

- Systems approach

- Control-theoretic

- decisions, acts, and errors

- hazard source

- anatomy of accidents

- energy

- systems thinking

- trial and error experiments

- defence in depth (fallacy)

- Role of managers

- Information

- Competency

- Awareness

- Commitment

- Going solid

- Reflecting on Jens Rasmussen’s legacy. A strong program for a hard problem

- Risk management in a dynamic society: a modelling problem

- Coping with complexity

- “Going solid”: a model of system dynamics and consequences for patient safety

Reason is a psychology researcher who did work on understanding and categorizing human error.

Reason developed the Swiss cheese model of accidents.

Reason developed a model of the types of errors that humans make:

- slips

- lapses

- mistakes

- Human error

- Slips, lapses and mistakes

- Swiss cheese model

Sarter is a researcher in industrial and operations engineering.

- cognitive ergonomics

- organization safety

- human-automation/robot interaction

- human error / error management

- attention / interruption management

- design of decision support systems

- Learning from Automation Surprises and "Going Sour" Accidents: Progress on Human-Centered Automation

- Behind Human Error

Wears was a medical researcher who studied emergency medicine.

- Underground adaptations

- Articulated functions vs. important functions

- Unintended effects

- Apparent success vs real success

- Exceptions

- Dynamic environments

- Systems of care are intrinsically hazardous

- The error of counting "errors"

- Underground adaptations: case studies from health care

- Fundamental Or Situational Surprise: A Case Study With Implications For Resilience

Vaughan is a sociology researcher who did a famous study of the NASA Challenger accident.

- normalization of deviance

Woods has a research background in cognitive systems engineering and did work researching NASA accidents. He is one of the founders of Adaptive Capacity Labs, a resilience engineering consultancy.

Woods seems to have contributed an enormous number of concepts.

TBD

From The theory of graceful extensibility: basic rules that govern adaptive systems

- Boundaries are universal

- Surprise occurs, continuously

- Risk of saturation is monitored and regulated

- Synchronization across multiple units of adaptive behavior in a network is necessary

- Risk of saturation can be shared

- Pressure changes what is sacrificed when

- Pressure for optimality undermines graceful extensibility

- All adaptive units are local

- Perspective contrast overcomes bounds

- Reflective systems continually risk mis-calibration

Many of these are mentioned in Woods's short course.

- the adaptive universe

- unit of adaptive behavior (UAB), adaptive unit

- adaptive capacity

- continuous adaptation

- graceful extensibility

- sustained adaptability

- Tangled, layered networks (TLN)

- competence envelope

- adaptive cycles/histories

- precarious present

- resilient future

- florescence

- borderlands

- anticipate

- synchronize

- proactive learning

- initiative

- reciprocity

- SNAFUs

- robustness

- surprise

- dynamic fault management

- software systems as "team players"

- multi-scale

- brittleness

- decompensation

- working at cross-purposes

- proactive learning vs getting stuck

- oversimplification

- fixation

- fluency law, veil of fluency

- capacity for maneuver (CfM)

- crunches

- sharp end, blunt end

- Resilience Engineering: Concepts and Precepts

- Resilience is a verb

- Four concepts for resilience and the implications for the future of resilience engineering

- How adaptive systems fail

- Resilience and the ability to anticipate

- Distancing through differencing: An obstacle to organizational learning following accidents

- Essential characteristics of resilience

- Learning from Automation Surprises and "Going Sour" Accidents: Progress on Human-Centered Automation

- Behind Human Error

- Joint Cognitive Systems: Patterns in Cognitive Systems Engineering

- Patterns in Cooperative Cognition

- Origins of cognitive systems engineering

- Incidents - markers of resilience or brittleness?

- The alarm problem and directed attention in dynamic fault management

- Can We Trust Best Practices? Six Cognitive Challenges of Evidence-Based Approaches

- Operating at the Sharp End: The Complexity of Human Error

- The theory of graceful extensibility: basic rules that govern adaptive systems

Wreathall is an expert in human performance in safety. He works at the WreathWood Group, a risk and safety studies consultancy.