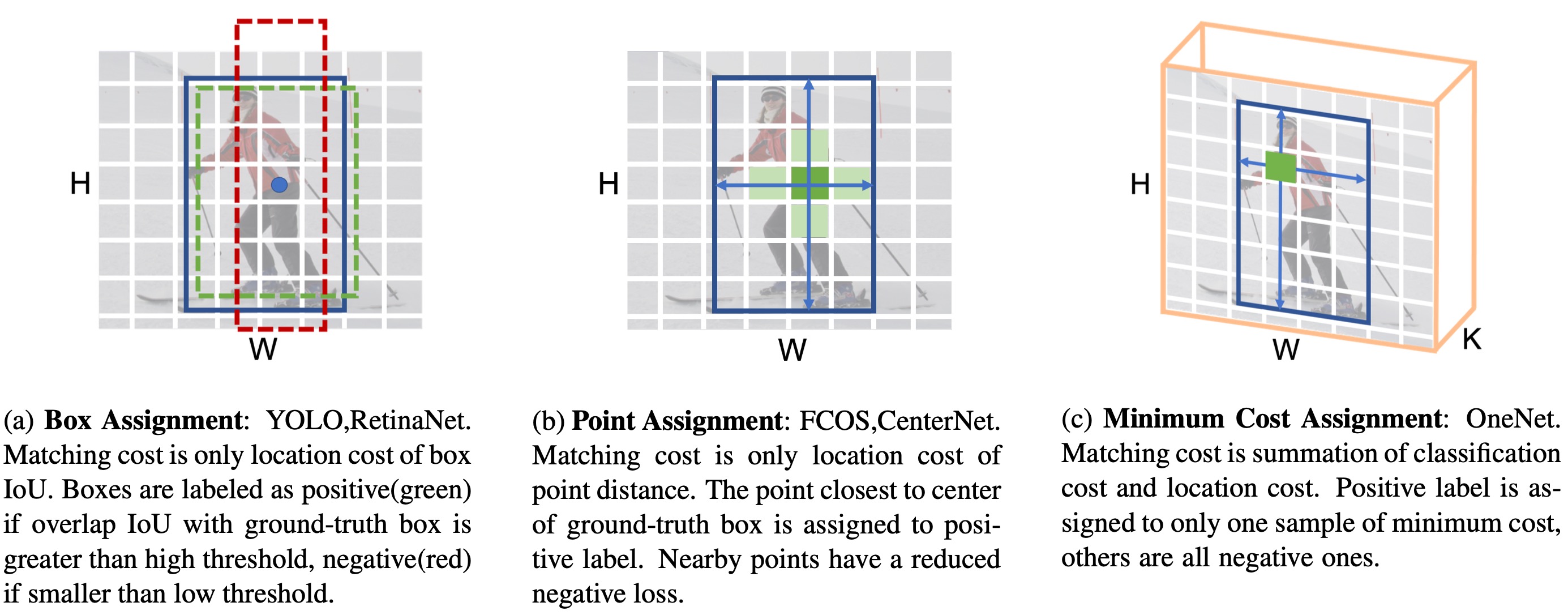

Comparison of label assignment methods. H and W are the height and width of the feature map, and K is the number of object categories. Previous one-stage detectors assign labels using only a position cost, such as (a) box IoU or (b) point distance between a sample and the ground truth. Our method additionally introduces (c) a classification cost. We find that the classification cost is the key to successful end-to-end detection: with location cost alone, inference produces redundant boxes with high confidence scores, which makes NMS post-processing necessary.
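To make the idea in the caption concrete, here is a minimal sketch of minimum-cost label assignment in plain Python. It is an illustration under stated assumptions, not the OneNet implementation: the cost terms (`1 - probability` and `1 - IoU`), the weights, and the function names `iou` and `assign_min_cost` are all hypothetical placeholders chosen for readability.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def assign_min_cost(
    pred_boxes: Sequence[Box],
    pred_probs: Sequence[Sequence[float]],
    gt_boxes: Sequence[Box],
    gt_labels: Sequence[int],
    cls_weight: float = 2.0,  # assumed weight, for illustration only
    loc_weight: float = 1.0,  # assumed weight, for illustration only
) -> List[int]:
    """For each ground truth, return the index of its single lowest-cost sample.

    That sample is the positive; every other sample is a negative.
    """
    matches = []
    for gt_box, gt_label in zip(gt_boxes, gt_labels):
        costs = []
        for box, probs in zip(pred_boxes, pred_probs):
            cls_cost = 1.0 - probs[gt_label]  # simplified classification cost
            loc_cost = 1.0 - iou(box, gt_box)  # simplified location cost
            costs.append(cls_weight * cls_cost + loc_weight * loc_cost)
        matches.append(min(range(len(costs)), key=costs.__getitem__))
    return matches


if __name__ == "__main__":
    gt = [(0.0, 0.0, 10.0, 10.0)]
    preds = [(0.0, 0.0, 10.0, 10.0), (1.0, 0.0, 11.0, 10.0)]
    probs = [[0.2, 0.8], [0.9, 0.1]]  # per-sample class probabilities
    print(assign_min_cost(preds, probs, gt, [0]))                  # with classification cost
    print(assign_min_cost(preds, probs, gt, [0], cls_weight=0.0))  # location cost only
```

In this toy case the two costs disagree: location cost alone selects the perfectly aligned but low-confidence sample, while the combined cost selects the confident one. The combined choice keeps the positive sample consistent with the score used to rank boxes at inference.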

arxiv: OneNet: Towards End-to-End One-Stage Object Detection

paper: What Makes for End-to-End Object Detection?

- (28/06/2021) OneNet.RetinaNet and OneNet.FCOS on CrowdHuman are available.

- (27/06/2021) OneNet.RetinaNet and OneNet.FCOS are available.

- (11/12/2020) Higher performance for OneNet is reported by disabling gradient clipping.

- Provide models and logs

- Support Caffe, ONNX, and TensorRT

- Support MobileNet

We provide two models:

- dcn (with deformable convolution) is for high accuracy.

- nodcn (without deformable convolution) is for easy deployment.

| Method | Inference speed | Training time | box AP | Download |
|---|---|---|---|---|
| R18_dcn | 109 FPS | 20h | 29.9 | model \| log |
| R18_nodcn | 138 FPS | 13h | 27.7 | model \| log |
| R50_dcn | 67 FPS | 36h | 35.7 | model \| log |
| R50_nodcn | 73 FPS | 29h | 32.7 | model \| log |
| R50_RetinaNet | 26 FPS | 31h | 37.5 | model \| log |
| R50_FCOS | 27 FPS | 21h | 38.9 | model \| log |

Models are available on Baidu Drive (extraction code: nhr8).

- We observe about 0.3 AP noise.

- Training and inference times are measured on 8 NVIDIA V100 GPUs. We observe that timings can differ between clusters even with the same GPU type.

- We use ImageNet pre-trained models from torchvision, and we provide torchvision's ResNet-18.pkl model. More details can be found in the conversion script.
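As a rough illustration of what converting a torchvision ResNet checkpoint involves, the sketch below renames parameter keys from torchvision's naming to Detectron2-style naming. The renaming rules follow the pattern used by Detectron2's torchvision conversion tool as best recalled here; the conversion script in this repository may differ, and the function names `torchvision_key_to_d2` and `rename_state_dict` are hypothetical. A real conversion would also load the weights with PyTorch and pickle the result, which is omitted.

```python
from typing import Any, Dict


def torchvision_key_to_d2(key: str) -> str:
    """Map one torchvision ResNet parameter name to a Detectron2-style name."""
    if "layer" not in key:
        # Parameters outside the residual stages are placed under the stem.
        key = "stem." + key
    for t in (1, 2, 3, 4):
        # torchvision layer1..layer4 correspond to res2..res5.
        key = key.replace(f"layer{t}", f"res{t + 1}")
    for t in (1, 2, 3):
        # Each batch norm is attached to the convolution it follows.
        key = key.replace(f"bn{t}", f"conv{t}.norm")
    key = key.replace("downsample.0", "shortcut")
    key = key.replace("downsample.1", "shortcut.norm")
    return key


def rename_state_dict(state: Dict[str, Any]) -> Dict[str, Any]:
    """Return a new dict with every key renamed; values are left untouched."""
    return {torchvision_key_to_d2(k): v for k, v in state.items()}


if __name__ == "__main__":
    for name in ("conv1.weight", "layer1.0.bn2.bias", "layer2.0.downsample.0.weight"):
        print(name, "->", torchvision_key_to_d2(name))
```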

| Method | Inference speed | Training time | AP50 | mMR | Recall | Download |
|---|---|---|---|---|---|---|
| R50_RetinaNet | 26 FPS | 11.5h | 90.9 | 48.8 | 98.0 | model \| log |
| R50_FCOS | 27 FPS | 4.5h | 90.6 | 48.6 | 97.7 | model \| log |

Models are available on Baidu Drive (extraction code: nhr8).

- The evaluation code is built on top of cvpods.

- The default evaluation code run during training should be ignored: it considers at most 100 objects per image, while CrowdHuman images can contain more than 100 objects.

- Training and inference times are measured on 8 NVIDIA V100 GPUs. We observe that timings can differ between clusters even with the same GPU type.

- More training steps are described in crowdhumantools.
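The note about the 100-object limit can be made concrete with a small calculation. The sketch below computes the best recall any detector could reach when an evaluator keeps at most `max_dets` detections per image, assuming each detection matches at most one ground-truth object. The function name `recall_ceiling` and the per-image object counts are hypothetical and only illustrate the arithmetic.

```python
from typing import Sequence


def recall_ceiling(gt_counts: Sequence[int], max_dets: int = 100) -> float:
    """Upper bound on recall when only `max_dets` detections per image are kept.

    gt_counts holds the number of ground-truth objects in each image.
    """
    total = sum(gt_counts)
    if total == 0:
        return 1.0
    # An image with n objects can have at most min(n, max_dets) of them matched.
    reachable = sum(min(n, max_dets) for n in gt_counts)
    return reachable / total


if __name__ == "__main__":
    # Hypothetical crowded images with 50, 150 and 300 annotated objects.
    print(recall_ceiling([50, 150, 300]))
    # Lifting the cap removes the ceiling.
    print(recall_ceiling([50, 150, 300], max_dets=1000))
```

With a cap of 100, every object beyond the hundredth in an image counts as missed regardless of detector quality, which is why metrics from the default evaluator are not meaningful on crowded scenes.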

The codebases are built on top of Detectron2 and DETR.

- Linux or macOS with Python ≥ 3.6

- PyTorch ≥ 1.5 and a torchvision version that matches the PyTorch installation. Installing both together from pytorch.org ensures that they match.

- OpenCV is optional and needed by demo and visualization

- Install and build libs

```
git clone https://github.com/PeizeSun/OneNet.git
cd OneNet
python setup.py build develop
```

- Link coco dataset path to OneNet/datasets/coco

```
mkdir -p datasets/coco
ln -s /path_to_coco_dataset/annotations datasets/coco/annotations
ln -s /path_to_coco_dataset/train2017 datasets/coco/train2017
ln -s /path_to_coco_dataset/val2017 datasets/coco/val2017
```
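Before starting a long training run, it can help to confirm that the links above resolve. The following is a minimal sketch using only the standard library; the helper name `missing_coco_entries` is hypothetical and not part of this repository.

```python
from pathlib import Path
from typing import List

# Entries the link commands are expected to create under datasets/coco.
EXPECTED = ("annotations", "train2017", "val2017")


def missing_coco_entries(root: str = "datasets/coco") -> List[str]:
    """Return the expected entries that do not exist under `root`.

    Path.exists() follows symlinks, so a link whose target is missing
    is reported as missing too.
    """
    base = Path(root)
    return [name for name in EXPECTED if not (base / name).exists()]


if __name__ == "__main__":
    missing = missing_coco_entries()
    if missing:
        print("Missing or broken entries:", ", ".join(missing))
    else:
        print("COCO dataset layout looks complete.")
```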

- Train OneNet

```
python projects/OneNet/train_net.py --num-gpus 8 \
    --config-file projects/OneNet/configs/onenet.res50.dcn.yaml
```

- Evaluate OneNet

```
python projects/OneNet/train_net.py --num-gpus 8 \
    --config-file projects/OneNet/configs/onenet.res50.dcn.yaml \
    --eval-only MODEL.WEIGHTS path/to/model.pth
```

- Visualize OneNet

```
python demo/demo.py \
    --config-file projects/OneNet/configs/onenet.res50.dcn.yaml \
    --input path/to/images --output path/to/save_images --confidence-threshold 0.4 \
    --opts MODEL.WEIGHTS path/to/model.pth
```

OneNet is released under MIT License.

If you use OneNet in your research or wish to refer to the baseline results published here, please use the following BibTeX entries:

```
@InProceedings{peize2020onenet,
  title = {What Makes for End-to-End Object Detection?},
  author = {Sun, Peize and Jiang, Yi and Xie, Enze and Shao, Wenqi and Yuan, Zehuan and Wang, Changhu and Luo, Ping},
  booktitle = {Proceedings of the 38th International Conference on Machine Learning},
  pages = {9934--9944},
  year = {2021},
  volume = {139},
  series = {Proceedings of Machine Learning Research},
  publisher = {PMLR},
}
```