This directory contains the code for the MESS evaluation of CAT-Seg. Please see the commits for our changes to the model.

Create a conda environment catseg and install the required packages. See mess/README.md for details.

bash mess/setup_env.sh

Prepare the datasets by following the instructions in mess/DATASETS.md. The catseg environment can also be used for dataset preparation. If you evaluate multiple models with MESS, you can point the dataset_dir argument and the DETECTRON2_DATASETS environment variable to a common directory, e.g., ../mess_datasets (see mess/DATASETS.md and mess/eval.sh).
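As a minimal sketch of the shared-directory setup, the snippet below exports the variable and checks that the directory exists. It assumes the example path `../mess_datasets` from above; `MESS_DATASETS` is a hypothetical helper variable. The `dataset_dir` argument is described in mess/DATASETS.md and is not shown here, and mess/eval.sh may set `DETECTRON2_DATASETS` itself, so adjust it there as well.

```shell
# Assumption: ../mess_datasets is the shared location (the example path from the text).
MESS_DATASETS="../mess_datasets"   # hypothetical helper variable

# Detectron2 reads this variable to locate its datasets root.
export DETECTRON2_DATASETS="$MESS_DATASETS"

# Warn early if the directory has not been prepared yet.
if [ -d "$DETECTRON2_DATASETS" ]; then
    echo "Using datasets in $DETECTRON2_DATASETS"
else
    echo "Missing $DETECTRON2_DATASETS: prepare the datasets first" >&2
fi
```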

Download the CAT-Seg weights with

mkdir weights

wget https://huggingface.co/hamacojr/CAT-Seg/resolve/main/model_final_base.pth -O weights/model_final_base.pth

wget https://huggingface.co/hamacojr/CAT-Seg/resolve/main/model_final_large.pth -O weights/model_final_large.pth

wget https://huggingface.co/hamacojr/CAT-Seg/resolve/main/model_final_huge.pth -O weights/model_final_huge.pth

To evaluate the CAT-Seg models on the MESS datasets, run

bash mess/eval.sh

# for evaluation in the background:

nohup bash mess/eval.sh > eval.log &

tail -f eval.log

To evaluate a single dataset, select the DATASET from mess/DATASETS.md, set the DETECTRON2_DATASETS path, and run

conda activate catseg

export DETECTRON2_DATASETS="datasets"

DATASET=<dataset_name>

# Base model:

python train_net.py --num-gpus 1 --eval-only --config-file configs/vitb_r101_384.yaml DATASETS.TEST \(\"$DATASET\",\) MODEL.WEIGHTS weights/model_final_base.pth OUTPUT_DIR output/CAT-Seg_base/$DATASET TEST.SLIDING_WINDOW True MODEL.SEM_SEG_HEAD.POOLING_SIZES "[1,1]"

# Large model:

python train_net.py --num-gpus 1 --eval-only --config-file configs/vitl_swinb_384.yaml DATASETS.TEST \(\"$DATASET\",\) MODEL.WEIGHTS weights/model_final_large.pth OUTPUT_DIR output/CAT-Seg_large/$DATASET TEST.SLIDING_WINDOW True MODEL.SEM_SEG_HEAD.POOLING_SIZES "[1,1]"

# Huge model:

python train_net.py --num-gpus 1 --eval-only --config-file configs/vitl_swinb_384.yaml DATASETS.TEST \(\"$DATASET\",\) MODEL.WEIGHTS weights/model_final_huge.pth OUTPUT_DIR output/CAT-Seg_huge/$DATASET TEST.SLIDING_WINDOW True MODEL.SEM_SEG_HEAD.POOLING_SIZES "[1,1]" MODEL.SEM_SEG_HEAD.CLIP_PRETRAINED "ViT-H" MODEL.SEM_SEG_HEAD.TEXT_GUIDANCE_DIM 1024

Note the config overrides in the commands above (TEST.SLIDING_WINDOW and MODEL.SEM_SEG_HEAD.POOLING_SIZES): the sliding-window approach from the CAT-Seg paper is not active in the default config. The results might differ from those reported in our paper because of a change in the CAT-Seg code (see commit).
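The three commands above differ only in the config file, the weights file, the output directory, and (for the huge model) two CLIP overrides. The sketch below makes that mapping explicit by printing the command for each model size as a dry run. `mess_eval_cmd` is a hypothetical helper and not part of the repository; the flags and paths are copied from the commands above.

```shell
# Hypothetical helper (not part of the repository): prints the evaluation
# command for one model size. The function body runs in a subshell, so its
# variables do not leak into the calling shell.
mess_eval_cmd() (
    size="$1"; dataset="$2"
    extra=""
    case "$size" in
        base)  config="configs/vitb_r101_384.yaml" ;;
        large) config="configs/vitl_swinb_384.yaml" ;;
        huge)  config="configs/vitl_swinb_384.yaml"
               # The huge model reuses the large config with the ViT-H CLIP overrides.
               extra=' MODEL.SEM_SEG_HEAD.CLIP_PRETRAINED "ViT-H" MODEL.SEM_SEG_HEAD.TEXT_GUIDANCE_DIM 1024' ;;
        *)     echo "unknown model size: $size" >&2; exit 1 ;;
    esac
    printf '%s\n' "python train_net.py --num-gpus 1 --eval-only --config-file $config DATASETS.TEST \\(\\\"$dataset\\\",\\) MODEL.WEIGHTS weights/model_final_$size.pth OUTPUT_DIR output/CAT-Seg_$size/$dataset TEST.SLIDING_WINDOW True MODEL.SEM_SEG_HEAD.POOLING_SIZES \"[1,1]\"$extra"
)

# Dry run: print one command per model size for the selected dataset.
DATASET="${DATASET:-<dataset_name>}"
for size in base large huge; do
    mess_eval_cmd "$size" "$DATASET"
done
```

Once the printed commands look right, a single one could be executed with `eval "$(mess_eval_cmd base "$DATASET")"` inside the activated catseg environment.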

This is our official implementation of CAT-Seg!

[arXiv] [Project] [HuggingFace Demo] [Segment Anything with CAT-Seg]

by Seokju Cho*, Heeseong Shin*, Sunghwan Hong, Seungjun An, Seungjun Lee, Anurag Arnab, Paul Hongsuck Seo, Seungryong Kim

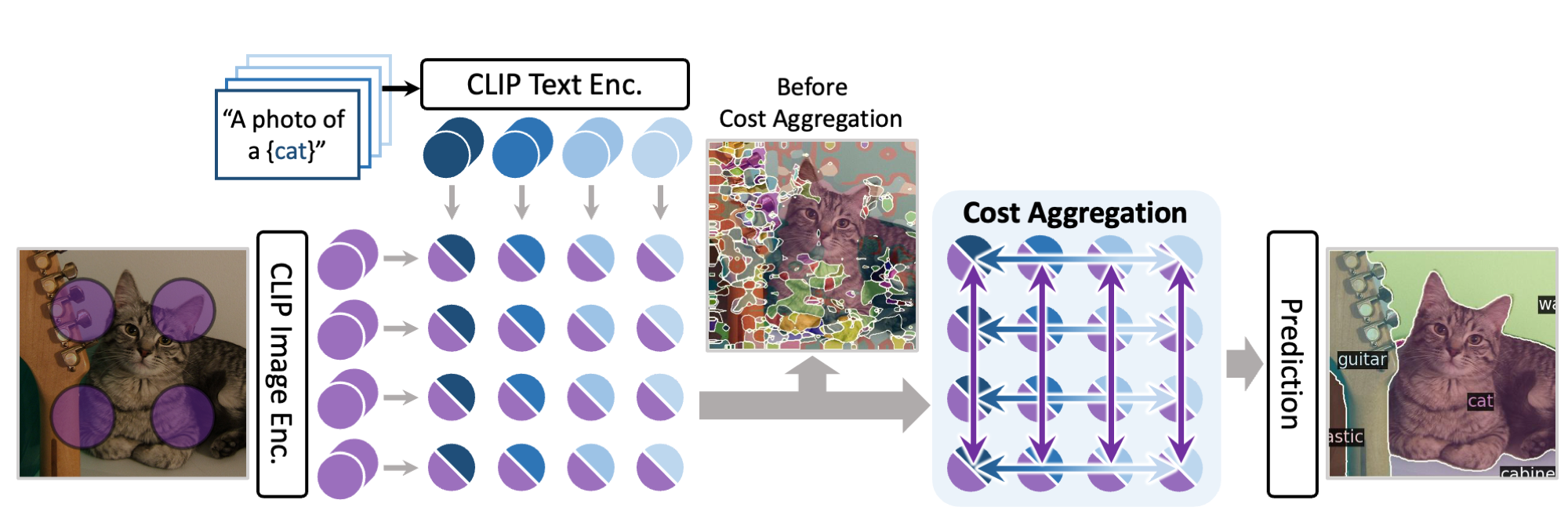

We introduce cost aggregation to open-vocabulary semantic segmentation, which jointly aggregates both image and text modalities within the matching cost.

For further details and visualization results, please check out our paper and our project page.

❗️Update: We released a demo for combining CAT-Seg and Segment Anything for open-vocabulary semantic segmentation!

- Train/Evaluation Code (Mar 21, 2023)

- Pre-trained weights (Mar 30, 2023)

- Code of interactive demo

Please follow installation.

Please follow dataset preparation.

We provide shell scripts for training and evaluation. run.sh trains the model in default configuration and evaluates the model after training.

To train or evaluate the model in different environments, modify the given shell script and config files accordingly.

sh run.sh [CONFIG] [NUM_GPUS] [OUTPUT_DIR] [OPTS]

# For ViT-B variant

sh run.sh configs/vitb_r101_384.yaml 4 output/

# For ViT-L variant

sh run.sh configs/vitl_swinb_384.yaml 4 output/

# For ViT-H variant

sh run.sh configs/vitl_swinb_384.yaml 4 output/ MODEL.SEM_SEG_HEAD.CLIP_PRETRAINED "ViT-H" MODEL.SEM_SEG_HEAD.TEXT_GUIDANCE_DIM 1024

# For ViT-G variant

sh run.sh configs/vitl_swinb_384.yaml 4 output/ MODEL.SEM_SEG_HEAD.CLIP_PRETRAINED "ViT-G" MODEL.SEM_SEG_HEAD.TEXT_GUIDANCE_DIM 1280

eval.sh automatically evaluates the model following our evaluation protocol, with weights in the output directory if not specified.

To individually run the model in different datasets, please refer to the commands in eval.sh.
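The weight-selection behaviour described above can be sketched as follows. `resolve_weights` is a hypothetical helper, and the default file name `model_final.pth` is an assumption (it is the name Detectron2 gives its final checkpoint by default); check eval.sh for the path it actually uses.

```shell
# Hypothetical helper: choose the checkpoint to pass as MODEL.WEIGHTS.
# Assumption: training leaves its final checkpoint at <OUTPUT_DIR>/model_final.pth.
resolve_weights() (
    output_dir="${1%/}"    # strip one trailing slash, so "output/" becomes "output"
    explicit="${2:-}"      # optional user-supplied checkpoint path
    if [ -n "$explicit" ]; then
        printf '%s\n' "$explicit"
    else
        printf '%s\n' "$output_dir/model_final.pth"
    fi
)

resolve_weights output/                        # prints output/model_final.pth
resolve_weights output/ path/to/weights.pth    # prints path/to/weights.pth
```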

sh eval.sh [CONFIG] [NUM_GPUS] [OUTPUT_DIR] [OPTS]

sh eval.sh configs/vitl_swinb_384.yaml 4 output/ MODEL.WEIGHTS path/to/weights.pth

We provide pretrained weights for our models reported in the paper. All of the models were evaluated with 4 NVIDIA RTX 3090 GPUs, and can be reproduced with the evaluation script above.

| Name | Backbone | CLIP | A-847 | PC-459 | A-150 | PC-59 | PAS-20 | PAS-20b | Download |

|---|---|---|---|---|---|---|---|---|---|

| CAT-Seg (B) | R101 | ViT-B/16 | 8.9 | 16.6 | 27.2 | 57.5 | 93.7 | 78.3 | ckpt |

| CAT-Seg (L) | Swin-B | ViT-L/14 | 11.4 | 20.4 | 31.5 | 62.0 | 96.6 | 81.8 | ckpt |

| CAT-Seg (H) | Swin-B | ViT-H/14 | 13.1 | 20.1 | 34.4 | 61.2 | 96.7 | 80.2 | ckpt |

| CAT-Seg (G) | Swin-B | ViT-G/14 | 14.1 | 21.4 | 36.2 | 61.5 | 97.1 | 81.4 | ckpt |

We would like to acknowledge the contributions of public projects, such as Zegformer, whose code has been utilized in this repository. We also thank Benedikt for finding an error in our inference code and evaluating CAT-Seg over various datasets!

@misc{cho2023catseg,

title={CAT-Seg: Cost Aggregation for Open-Vocabulary Semantic Segmentation},

author={Seokju Cho and Heeseong Shin and Sunghwan Hong and Seungjun An and Seungjun Lee and Anurag Arnab and Paul Hongsuck Seo and Seungryong Kim},

year={2023},

eprint={2303.11797},

archivePrefix={arXiv},

primaryClass={cs.CV}

}