This directory contains the code for the MESS evaluation of ZegFormer. See the commit history for our changes to the model.

Create a conda environment zegformer and install the required packages. See mess/README.md for details.

bash mess/setup_env.sh

Prepare the datasets by following the instructions in mess/DATASETS.md. The zegformer env can be used for the dataset preparation. If you evaluate multiple models with MESS, you can point the dataset_dir argument and the DETECTRON2_DATASETS environment variable to a common directory, e.g., ../mess_datasets (see mess/DATASETS.md and mess/eval.sh).
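As a rough illustration of the shared-directory setup described above, the following sketch resolves the dataset root from DETECTRON2_DATASETS and reports which dataset folders are missing. The helper names `dataset_root` and `missing_datasets` are hypothetical and not part of this repository, and the fallback value `datasets` is an assumption taken from the single-dataset instructions in this README.

```python
import os
from pathlib import Path
from typing import List


def dataset_root(default: str = "datasets") -> Path:
    """Return the dataset root, preferring DETECTRON2_DATASETS when it is set."""
    # Assumption: "datasets" is used as the fallback, as exported later in this README.
    return Path(os.environ.get("DETECTRON2_DATASETS", default)).expanduser()


def missing_datasets(root: Path, names: List[str]) -> List[str]:
    """List the expected sub-directories that do not exist under root."""
    return [name for name in names if not (root / name).is_dir()]


if __name__ == "__main__":
    root = dataset_root()
    print(f"Datasets are expected under: {root}")
```

Running this with `DETECTRON2_DATASETS=../mess_datasets` exported should print the shared directory; the folder names to pass to `missing_datasets` depend on mess/DATASETS.md and are not assumed here.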

Download the ZegFormer weights (see https://github.com/dingjiansw101/ZegFormer)

mkdir weights

conda activate zegformer

# Python code for downloading the weights from GDrive. Link: https://drive.google.com/file/d/1bA6DXr9VOMsRkU0vyY2EpGRkyQnhnze3/view?usp=drive_link

python -c "import gdown; gdown.download(f'https://drive.google.com/uc?export=download&confirm=pbef&id=1bA6DXr9VOMsRkU0vyY2EpGRkyQnhnze3', output='weights/zegformer_R101_bs32_60k_vit16_coco-stuff.pth')"

To evaluate the ZegFormer on the MESS datasets, run

bash mess/eval.sh

# for evaluation in the background:

nohup bash mess/eval.sh > eval.log &

tail -f eval.log

To evaluate a single dataset, select the DATASET from mess/DATASETS.md, set the DETECTRON2_DATASETS path, and run

conda activate zegformer

export DETECTRON2_DATASETS="datasets"

DATASET=<dataset_name>

python train_net.py --num-gpus 1 --eval-only --config-file configs/coco-stuff/zegformer_R101_bs32_60k_vit16_coco-stuff_gzss_eval_847_classes.yaml MODEL.WEIGHTS weights/zegformer_R101_bs32_60k_vit16_coco-stuff.pth OUTPUT_DIR output/ZegFormer/$DATASET DATASETS.TEST \(\"$DATASET\",\)
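To evaluate several datasets one after another, the single-dataset command above could be wrapped in a small driver. This is only a sketch: `build_eval_command` and `evaluate_all` are hypothetical helpers, and the dataset names in the example are placeholders that must be replaced with names from mess/DATASETS.md.

```python
import subprocess
from typing import List

CONFIG = "configs/coco-stuff/zegformer_R101_bs32_60k_vit16_coco-stuff_gzss_eval_847_classes.yaml"
WEIGHTS = "weights/zegformer_R101_bs32_60k_vit16_coco-stuff.pth"


def build_eval_command(dataset: str) -> List[str]:
    """Build the argv list for evaluating one dataset with train_net.py."""
    return [
        "python", "train_net.py",
        "--num-gpus", "1",
        "--eval-only",
        "--config-file", CONFIG,
        "MODEL.WEIGHTS", WEIGHTS,
        "OUTPUT_DIR", f"output/ZegFormer/{dataset}",
        # Passed as a single argv element, so the tuple needs no shell escaping.
        "DATASETS.TEST", f'("{dataset}",)',
    ]


def evaluate_all(datasets: List[str], dry_run: bool = True) -> None:
    """Print (dry run) or execute the evaluation command for each dataset."""
    for name in datasets:
        cmd = build_eval_command(name)
        if dry_run:
            print(" ".join(cmd))
        else:
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # Placeholder names; take the real ones from mess/DATASETS.md.
    evaluate_all(["<dataset_a>", "<dataset_b>"], dry_run=True)
```

With `dry_run=False` the commands are executed, which assumes the zegformer environment is active and DETECTRON2_DATASETS is already exported.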

This is the official code for the ZegFormer (CVPR 2022).

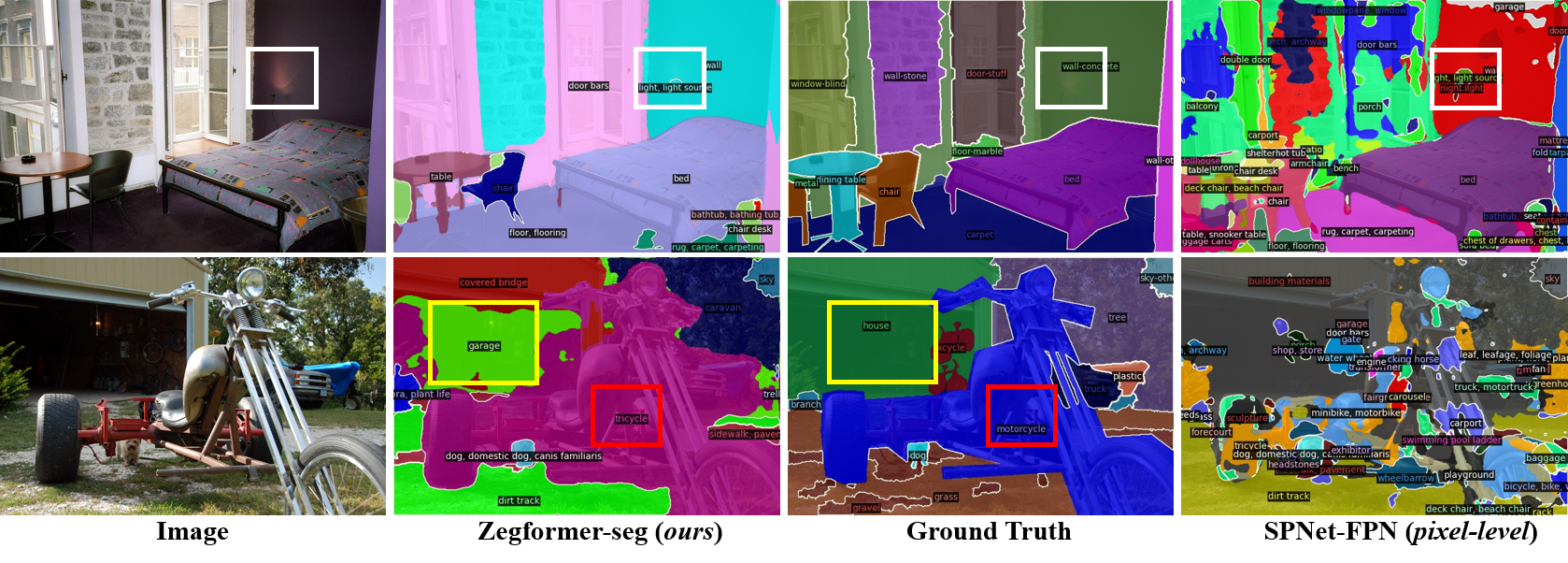

ZegFormer is the first framework that decouples zero-shot semantic segmentation into 1) class-agnostic segmentation and 2) segment-level zero-shot classification.

ZegFormer is able to segment stuff and things with open vocabularies. The predicted classes can be more fine-grained than the COCO-Stuff annotations (see colored boxes below).

See datasets/README.md for data preparation.

For each model, there are two kinds of config files. The file without suffix "_gzss_eval" is used for training. The file with suffix "_gzss_eval" is used for generalized zero-shot semantic segmentation evaluation.
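The naming convention can be expressed as a small helper that maps a training config to its evaluation counterpart. This is a sketch under the assumption that both files sit in the same directory and share a base name, as is the case for the COCO-Stuff configs named in this README; `is_eval_config` and `eval_config_for` are hypothetical names.

```python
from pathlib import PurePosixPath

EVAL_SUFFIX = "_gzss_eval"


def is_eval_config(path: str) -> bool:
    """True if the file name carries the evaluation suffix (also matches *_gzss_eval_847_classes)."""
    return EVAL_SUFFIX in PurePosixPath(path).stem


def eval_config_for(train_config: str) -> str:
    """Derive the evaluation config path from a training config path."""
    if is_eval_config(train_config):
        return train_config
    p = PurePosixPath(train_config)
    return str(p.with_name(p.stem + EVAL_SUFFIX + p.suffix))


if __name__ == "__main__":
    print(eval_config_for("configs/coco-stuff/zegformer_R101_bs32_60k_vit16_coco-stuff.yaml"))
```

The helper only manipulates file names; it does not check that the derived file exists.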

Download the checkpoints of ZegFormer from https://drive.google.com/drive/u/0/folders/1qcIe2mE1VRU1apihsao4XvANJgU5lYgm

python demo/demo.py --config-file configs/coco-stuff/zegformer_R101_bs32_60k_vit16_coco-stuff_gzss_eval.yaml \
  --input input1.jpg input2.jpg \
  [--other-options] \
  --opts MODEL.WEIGHTS /path/to/zegformer_R101_bs32_60k_vit16_coco-stuff.pth

The configs are made for training, so MODEL.WEIGHTS must point to a model from the model zoo for evaluation.

This command will run the inference and show visualizations in an OpenCV window.

For details of the command line arguments, see demo.py -h or look at its source code to understand its behavior. Some common arguments are:

- To run on your webcam, replace --input files with --webcam.
- To run on a video, replace --input files with --video-input video.mp4.
- To run on cpu, add MODEL.DEVICE cpu after --opts.
- To save outputs to a directory (for images) or a file (for webcam or video), use --output.

In the demo command above, the model is trained on 156 classes and run at inference on 171 classes.

To run inference with more classes, try the config zegformer_R101_bs32_60k_vit16_coco-stuff_gzss_eval_847_classes.yaml.
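The demo options listed earlier (image, webcam, or video input, optional output, optional CPU execution) can be combined programmatically. The following is a minimal sketch that only assembles the argument list from the flags named in this README; `build_demo_command` is a hypothetical helper, not part of demo.py.

```python
from typing import List, Optional


def build_demo_command(
    config: str,
    weights: str,
    images: Optional[List[str]] = None,
    webcam: bool = False,
    video: Optional[str] = None,
    output: Optional[str] = None,
    device: Optional[str] = None,
) -> List[str]:
    """Assemble a demo/demo.py invocation with exactly one input source."""
    if sum([bool(images), webcam, video is not None]) != 1:
        raise ValueError("choose exactly one of images, webcam, or video")
    cmd = ["python", "demo/demo.py", "--config-file", config]
    if images:
        cmd += ["--input", *images]
    elif webcam:
        cmd.append("--webcam")
    else:
        cmd += ["--video-input", video]
    if output is not None:
        cmd += ["--output", output]
    # Config overrides such as MODEL.DEVICE must come after --opts.
    cmd += ["--opts", "MODEL.WEIGHTS", weights]
    if device is not None:
        cmd += ["MODEL.DEVICE", device]
    return cmd


if __name__ == "__main__":
    print(" ".join(build_demo_command("cfg.yaml", "model.pth", images=["input1.jpg"], device="cpu")))
```

The returned list can be passed to `subprocess.run`; the file names in the example are placeholders.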

To train models with R-101 backbone, download the pre-trained model R-101.pkl, which is a converted copy of MSRA's original ResNet-101 model.

We provide a script, train_net.py, that is made to train all the provided configs.

To train a model with "train_net.py", first set up the corresponding datasets following datasets/README.md, then run:

./train_net.py --num-gpus 8 \
  --config-file configs/coco-stuff/zegformer_R101_bs32_60k_vit16_coco-stuff.yaml

The configs are made for 8-GPU training. Since we use the AdamW optimizer, it is not clear how to scale the learning rate with the batch size. To train on 1 GPU, you need to choose the learning rate and batch size yourself:

./train_net.py \
  --config-file configs/coco-stuff/zegformer_R101_bs32_60k_vit16_coco-stuff.yaml \
  --num-gpus 1 SOLVER.IMS_PER_BATCH SET_TO_SOME_REASONABLE_VALUE SOLVER.BASE_LR SET_TO_SOME_REASONABLE_VALUE
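As a starting point for choosing these two values, the sketch below computes candidate learning rates with two common heuristics, linear and square-root scaling. Neither rule has been validated for ZegFormer with AdamW, so treat the outputs as initial guesses to tune. The reference batch size of 32 is inferred from "bs32" in the config name, and the reference learning rate in the example is a placeholder, not the value from the config; read the real one from SOLVER.BASE_LR.

```python
import math


def scale_lr(base_lr: float, base_batch: int, new_batch: int, rule: str = "linear") -> float:
    """Scale a reference learning rate to a new batch size using a heuristic rule."""
    if base_batch <= 0 or new_batch <= 0:
        raise ValueError("batch sizes must be positive")
    ratio = new_batch / base_batch
    if rule == "linear":
        return base_lr * ratio
    if rule == "sqrt":
        return base_lr * math.sqrt(ratio)
    raise ValueError(f"unknown rule: {rule}")


if __name__ == "__main__":
    reference_lr = 1e-4  # Placeholder only; use the SOLVER.BASE_LR from the config.
    for rule in ("linear", "sqrt"):
        print(rule, scale_lr(reference_lr, base_batch=32, new_batch=4, rule=rule))
```

The chosen values would then replace the two SET_TO_SOME_REASONABLE_VALUE placeholders in the command above.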

To evaluate a model's performance, use

./train_net.py \
  --config-file configs/coco-stuff/zegformer_R101_bs32_60k_vit16_coco-stuff_gzss_eval.yaml \
  --eval-only MODEL.WEIGHTS /path/to/checkpoint_file

For more options, see ./train_net.py -h.

The pre-trained checkpoints of ZegFormer can be downloaded from https://drive.google.com/drive/folders/1qcIe2mE1VRU1apihsao4XvANJgU5lYgm?usp=sharing

Although the reported PASCAL VOC results were obtained with 10k training iterations, the results at 10k are not stable. We recommend training for more iterations.

This repo benefits from CLIP and MaskFormer. Thanks for their wonderful work.

@inproceedings{ding2021decoupling,
  title={Decoupling Zero-Shot Semantic Segmentation},
  author={Ding, Jian and Xue, Nan and Xia, Gui-Song and Dai, Dengxin},
  booktitle={Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},
  year={2022}
}

If you have any problems in using this code, please contact me (jian.ding@whu.edu.cn)