- 7 x Raspberry Pi 3b+

- 7 x 32 GB Samsung Class 10 Micro SD Cards

- 7 x 1 ft. microUSB right angled cords (for Power)

- 7 x 6" Flat CAT6 LAN patch cords

- Dlink 8 Port Eco-Friendly Router

- 2 x Anker 6 Port 60W USB hub

- C4Labs Cloudlet Case

- Development on macOS with Bonjour (for *.local hostnames)

- Raspberry Pi 3B+ (ARM32v7) hardware similar to my cluster specs

- Mac or Linux system to provision the cluster

- Static IP addresses for Raspberry Pi LANs

- Working knowledge of Linux

- Patience

- Flash Raspbian Lite to the micro SD card (on macOS, use Etcher).

- Create a wpa_supplicant.conf file (see example in boot folder).

- Create an ssh file (this is an empty file, see example in boot folder).

- Edit the cmdline.txt file to disable automatic image resizing and enable cgroups (see boot/cmdline.txt in this repo for a working example).

A. Enable the cpuset and memory cgroups in /boot/cmdline.txt by adding:

cgroup_enable=cpuset cgroup_memory=1 cgroup_enable=memory

B. Remove the automatic filesystem expansion in /boot/cmdline.txt by deleting:

init=/usr/lib/raspi-config/init_resize.sh

C. cmdline.txt should resemble the following (all on one line):

dwc_otg.lpm_enable=0 console=serial0,115200 console=tty1 root=PARTUUID=7ee80803-02 rootfstype=ext4 elevator=deadline fsck.repair=yes rootwait quiet cgroup_enable=cpuset cgroup_memory=1 cgroup_enable=memory
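Steps A and B can also be scripted. The following is a minimal sketch, not the method used in this repo: `patch_cmdline` is a hypothetical helper name, and the path you pass to it depends on where the boot partition is mounted on your machine (for example `/Volumes/boot/cmdline.txt` on macOS, which is an assumption).

```shell
#!/bin/sh
# Patch a Raspbian cmdline.txt in place: drop the auto-resize hook (step B)
# and append the cgroup flags (step A), keeping everything on one line.
# Usage: patch_cmdline <path-to-cmdline.txt>
patch_cmdline() {
    file=$1
    flags="cgroup_enable=cpuset cgroup_memory=1 cgroup_enable=memory"
    # Only the first line is read; cmdline.txt is expected to be a single line.
    line=$(head -n 1 "$file")
    out=""
    for word in $line; do
        case $word in
            # Step B: remove the resize hook.
            init=/usr/lib/raspi-config/init_resize.sh) ;;
            # Skip flags that are already present; they are re-added once below.
            cgroup_enable=cpuset|cgroup_memory=1|cgroup_enable=memory) ;;
            *) out="$out $word" ;;
        esac
    done
    # Step A: write the remaining parameters plus the cgroup flags back.
    printf '%s %s\n' "${out# }" "$flags" > "$file"
}
```

Running it twice on the same file should leave the flags present only once, so it is safe to re-run after re-flashing a card.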

- Eject the micro SD card and start the Raspberry Pi.

Log in with ssh pi@raspberrypi.local and update/upgrade on the master and workers.

- Clear the current key (required when flashing a new Raspberry Pi):

ssh-keygen -R raspberrypi.local

- SSH into the Raspberry Pi:

ssh pi@raspberrypi.local

- Update the Raspbian install:

sudo apt update

sudo apt upgrade -y

- Optional: copy your SSH RSA key to the Raspberry Pi:

ssh-copy-id pi@k3s-xxx-xxx.local

Then, in /etc/ssh/sshd_config on the Pi, set PasswordAuthentication to no.
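The sshd_config change can be made without opening an editor. This is a sketch under assumptions: `disable_password_auth` is a hypothetical helper, and it assumes the setting appears at most as a plain or commented-out `PasswordAuthentication` line, as it does in the stock Raspbian file.

```shell
#!/bin/sh
# Set "PasswordAuthentication no" in an sshd_config-style file.
# Usage: disable_password_auth /etc/ssh/sshd_config   (needs root on the Pi)
disable_password_auth() {
    cfg_file=$1
    cfg_tmp=$(mktemp)
    # Rewrite an existing setting, whether active or commented out.
    sed -E 's/^[[:space:]]*#?[[:space:]]*PasswordAuthentication[[:space:]].*$/PasswordAuthentication no/' \
        "$cfg_file" > "$cfg_tmp"
    # If the file had no such line at all, append one.
    grep -q '^PasswordAuthentication no$' "$cfg_tmp" \
        || printf 'PasswordAuthentication no\n' >> "$cfg_tmp"
    # Copy the contents back so the original file keeps its owner and mode.
    cat "$cfg_tmp" > "$cfg_file"
    rm -f "$cfg_tmp"
}
```

The SSH service has to be restarted (or the Pi rebooted) before the change takes effect. Confirm that key-based login works first, otherwise you can lock yourself out.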

Turn off swap file

sudo dphys-swapfile swapoff && \
sudo dphys-swapfile uninstall && \
sudo update-rc.d dphys-swapfile remove

sudo apt update

sudo apt install tmux -y

wget https://github.com/rancher/k3s/releases/download/v0.4.0/k3s-armhf && \
chmod +x k3s-armhf && \
sudo mv k3s-armhf /usr/local/bin/k3s

curl -fsSL https://get.docker.com | sh - && \
sudo usermod -aG docker pi

sudo raspi-config

Recommended: DO NOT RESTART WHEN EXITING raspi-config. Make an image copy of the micro SD card at this stage. See below about stamping images.

Shut down the Raspberry Pi by typing:

sudo shutdown now

There are many ways to create images for the master and worker nodes. To learn by repetition and to keep things simple, I recommend setting up each node by hand. To speed things up and avoid repeating the update/upgrade each time, you can copy your micro SD image after the previous step. The image created from the above process will be accessible by Wi-Fi and LAN, and the image will be expanded to the full size of each card. You simply need to log in, run sudo raspi-config to change the hostname, and restart the device.

The following headings note which node each service must run on. For now, k3s is only capable of running with a single master node. For my cluster, the first Raspberry Pi is my master node and the other four devices act as worker nodes.

I chose to give my Raspberry Pis the following hostnames: k3s-master for the master, and k3s-worker-XX for each worker node, where XX identifies the worker.
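The naming scheme above can also be applied from a script rather than through the raspi-config menu. This is a sketch only: `set_hostname` is a hypothetical helper, and it assumes the stock Raspbian layout where the current name is the first line of /etc/hostname and also appears as a field in /etc/hosts. The two file arguments exist so the function can be tried on copies first.

```shell
#!/bin/sh
# Rename a node by rewriting its hostname file and the matching hosts entry.
# Usage: set_hostname k3s-worker-01 [hostname-file] [hosts-file]
set_hostname() {
    new_name=$1
    hostname_file=${2:-/etc/hostname}
    hosts_file=${3:-/etc/hosts}
    old_name=$(head -n 1 "$hostname_file")
    hosts_tmp=$(mktemp)
    # Swap the old name for the new one wherever it is a whole field
    # after the address column; other lines are printed unchanged.
    awk -v old="$old_name" -v new="$new_name" '{
        for (i = 2; i <= NF; i++) if ($i == old) $i = new
        print
    }' "$hosts_file" > "$hosts_tmp"
    cat "$hosts_tmp" > "$hosts_file"
    rm -f "$hosts_tmp"
    printf '%s\n' "$new_name" > "$hostname_file"
}
```

On a real node this needs root, and the new name only takes effect after a reboot.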

https://medium.com/@mabrams_46032/kubernetes-on-raspberry-pi-c246c72f362f

On Linux:

sudo fdisk -l /dev/sda

Same command on Mac:

sudo fdisk /dev/disk2

Get the block size on Mac:

diskutil info /dev/disk2 | grep "Device Block Size"

Dump the image from the memory card (replace END+1 with the end sector of the last partition, as reported by fdisk, plus one):

sudo dd if=/dev/diskX of=k3s-base.img bs=512 count=END+1

- Modify the hosts file

sudo nano /etc/hosts

- Change the hostname

sudo nano /etc/hostname

- Commit the changes to the system

sudo /etc/init.d/hostname.sh

- Finally, reboot

sudo reboot

The k3s server does not need to be run with superuser privileges.

k3s server --disable-agent --no-deploy=servicelb --no-deploy=traefik

The k3s agent must be run with superuser privileges.

sudo k3s agent \
  --server https://<__MASTER-IP__>:6443 \
  --token <__NODE-TOKEN__>

Remove --docker from the following command to use the default containerd setup. Note: containerd will report an error that it cannot check the health of containers. This error can be disregarded.

sudo k3s agent \
  --docker \
  --server https://<__MASTER-IP__>:6443 \
  --token <__NODE-TOKEN__> \
  > ~/logs.txt 2>&1 &

k3s Agent w/ Docker

curl -sfL https://get.k3s.io | K3S_URL=https://<master_ip>:6443 K3S_TOKEN=<token> INSTALL_K3S_EXEC="--docker" sudo sh -

To uninstall what the above script installed:

/usr/local/bin/k3s-uninstall.sh

More scripts can be found at the official Rancher k3s GitHub.

If you wish to run your k3s agents in Docker containers (not recommended, but it was experimented with):

docker run -d --tmpfs /run --tmpfs /var/run -e K3S_URL=${SERVER_URL} -e K3S_TOKEN=${NODE_TOKEN} --privileged rancher/k3s:v0.3.0

cat /home/pi/.kube/k3s.yaml

Get this file with sftp from a new terminal window:

sftp pi@k3s-master.local

>> get /home/pi/.kube/k3s.yaml .

>> lpwd # shows where k3s.yaml was downloaded to your local machine

cat /etc/rancher/k3s/k3s.yaml

Get this file with sftp from a new terminal window:

sftp pi@k3s-master.local

>> get /etc/rancher/k3s/k3s.yaml .

>> lpwd # shows where k3s.yaml was downloaded to your local machine

Two options:

- Local (on Raspberry Pi Master)

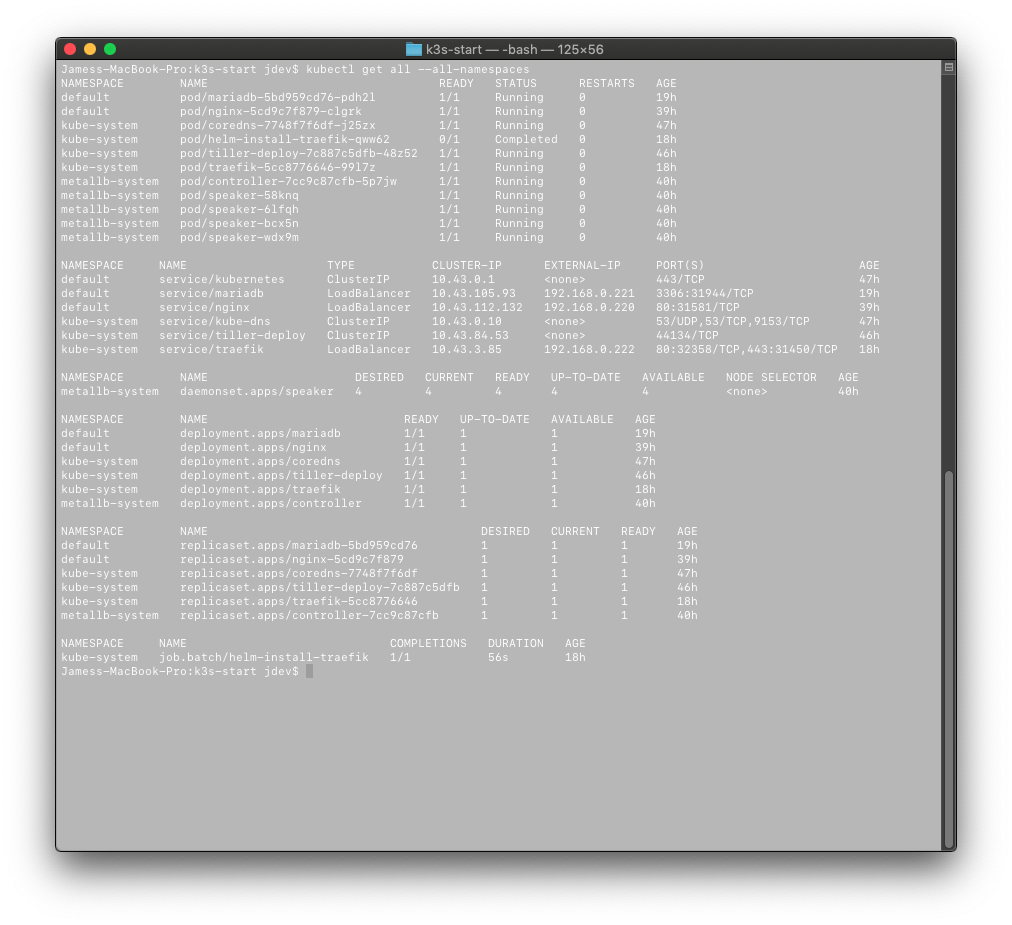

ssh pi@k3s-master.local gives access to sudo k3s kubectl ...

- Remote (another computer attached to the local network)

export KUBECONFIG="file_path/k3s.yaml" and access your cluster using kubectl ...

Note: all commands will be given as kubectl beyond this point. If you are connected to pi@k3s-master.local use sudo k3s kubectl.
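For the remote option, the copied k3s.yaml may still name the master's loopback address (`localhost` or `127.0.0.1`) as the API server. In that case kubectl on another machine would try to connect to itself. The sketch below rewrites that address. `retarget_kubeconfig` is a hypothetical helper, and the assumption that the server line uses one of those two loopback forms should be checked against your own file.

```shell
#!/bin/sh
# Print a copy of a k3s kubeconfig with the loopback API server address
# replaced by the master's hostname or IP. The port is kept as-is.
# Usage: retarget_kubeconfig <k3s.yaml> <master-host> > <new-file>
retarget_kubeconfig() {
    kube_in=$1
    master_host=$2
    # Only "localhost" and "127.0.0.1" are replaced; any other server
    # value is passed through untouched.
    sed -E "s#(server:[[:space:]]*https://)(localhost|127\.0\.0\.1)(:[0-9]+)#\1${master_host}\3#" "$kube_in"
}
```

For example, `retarget_kubeconfig k3s.yaml k3s-master.local > k3s-remote.yaml` followed by `export KUBECONFIG="$PWD/k3s-remote.yaml"`.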

- Label the master and taint the node so that it does not schedule work for itself

A. Label master node

kubectl label node k3s-master kubernetes.io/role=master

B. Apply master role

kubectl label node k3s-master node-role.kubernetes.io/master=""

C. Taint master node

kubectl taint nodes k3s-master node-role.kubernetes.io/master=effect:NoSchedule

- Label worker node roles (must be done for each worker node)

A. Label worker node as role node

kubectl label node k3s-worker-xx kubernetes.io/role=node

B. Apply Node role to node

kubectl label node k3s-worker-xx node-role.kubernetes.io/node=""
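Since both labels have to be applied to every worker, a small loop saves retyping. This is a sketch: `label_workers` and the `KUBECTL` override are illustrative names, not part of k3s or kubectl.

```shell
#!/bin/sh
# Command used to reach the cluster. Override it with
# KUBECTL="sudo k3s kubectl" when working on the master itself, or
# KUBECTL="echo kubectl" to preview the commands without running them.
KUBECTL=${KUBECTL:-kubectl}

# Apply both worker labels (steps A and B) to each node name given.
# Usage: label_workers k3s-worker-01 k3s-worker-02 ...
label_workers() {
    for node in "$@"; do
        $KUBECTL label node "$node" kubernetes.io/role=node
        $KUBECTL label node "$node" node-role.kubernetes.io/node=""
    done
}
```

Running `kubectl get nodes` afterwards should show the role on each worker.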

- Create tiller system account

kubectl -n kube-system create sa tiller \
  && kubectl create clusterrolebinding tiller \
  --clusterrole cluster-admin \
  --serviceaccount=kube-system:tiller

- More role binding

kubectl patch deploy --namespace kube-system tiller-deploy -p '{"spec":{"template":{"spec":{"serviceAccount":"tiller"}}}}'

- Install tiller on k3s for armhf

helm init --tiller-image=jessestuart/tiller --service-account tiller

https://metallb.universe.tf/tutorial/layer2/

- Apply the MetalLB service

kubectl apply -f https://raw.githubusercontent.com/google/metallb/v0.7.3/manifests/metallb.yaml

- Apply your metallb-config.yaml

apiVersion: v1
kind: ConfigMap
metadata:
  namespace: metallb-system
  name: config
data:
  config: |
    address-pools:
    - name: default
      protocol: layer2
      addresses:
      - 192.168.0.220-192.168.0.245

Modified the deployment from https://hub.docker.com/r/linuxserver/mariadb into mariadb-deployment.yaml to use MetalLB and expose a load-balanced IP. More could be done to deploy a replica set.

kubectl apply -f ./mariadb-deployment.yaml

Create secret for OpenFaaS (the openfaas namespace must exist first; create it with the namespaces step that follows)

kubectl -n openfaas create secret generic basic-auth \
  --from-literal=basic-auth-user=admin \
  --from-literal=basic-auth-password=abc1

Create namespaces for OpenFaaS and OpenFaaS functions

kubectl apply -f https://raw.githubusercontent.com/openfaas/faas-netes/master/namespaces.yml

OR create your own namespaces using the YAML below:

apiVersion: v1
kind: Namespace
metadata:
  name: openfaas
  labels:
    role: openfaas-system
    access: openfaas-system
    istio-injection: enabled
---
apiVersion: v1
kind: Namespace
metadata:
  name: openfaas-fn
  labels:
    istio-injection: enabled
    role: openfaas-fn

Apply the Helm chart from this repo

helm repo update \
  && helm upgrade openfaas --install ./faas-netes/chart/openfaas \
  --namespace openfaas \
  --set basic_auth=false \
  --set functionNamespace=openfaas-fn \
  --set serviceType=LoadBalancer

In case you mess up and need to fully remove the openfaas Helm chart:

helm del --purge openfaas

Additional resource: https://www.hanselman.com/blog/HowToBuildAKubernetesClusterWithARMRaspberryPiThenRunNETCoreOnOpenFaas.aspx

Check if you can access OpenFaaS from your local terminal

faas-cli list -g <LOADBALANCER_EXT_IP>:8080

helm repo update \
  && helm upgrade grafana --install ./grafana \
  --namespace openfaas \
  --set basic_auth=false

Get the admin account password

kubectl get secret --namespace openfaas grafana -o jsonpath="{.data.admin-password}" | base64 --decode ; echo

Still working on display panels for OpenFaaS Prometheus metrics.

Using a USB 2.0 gigabit LAN adapter

- Tutorial: https://drjohnstechtalk.com/blog/2014/03/bridging-with-the-raspberry-pi/

- Docs: https://wiki.linuxfoundation.org/networking/bridge

sudo apt install bridge-utils

Check USB devices

lsusb

Create bridge and add eth0 and eth1

sudo brctl addbr br0

sudo brctl addif br0 eth0

sudo brctl addif br0 eth1

Define br0 in /etc/network/interfaces

auto br0
iface br0 inet dhcp
    bridge_ports eth0 eth1
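The brctl commands above do not persist across reboots on their own; the stanza in the interfaces file is what brings the bridge back up. A sketch for adding it without duplicating it on repeated runs follows. `add_bridge_stanza` is a hypothetical helper, and the interface names are the ones used in this section.

```shell
#!/bin/sh
# Append the br0 stanza to an interfaces file unless one is already there.
# Usage: add_bridge_stanza /etc/network/interfaces   (needs root)
add_bridge_stanza() {
    ifaces_file=$1
    if grep -q '^iface br0 ' "$ifaces_file" 2>/dev/null; then
        return 0   # already configured; leave the file untouched
    fi
    cat >> "$ifaces_file" <<'EOF'

auto br0
iface br0 inet dhcp
    bridge_ports eth0 eth1
EOF
}
```

Trying it on a copy of the file first is a cheap way to check the result before touching the live network configuration.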

Not working: having problems with certs.

helm repo update \
  && helm upgrade docker-registry --install ./docker-registry \
  --namespace docker-registry \
  --set basic_auth=false

Modified the below YAML to k8s-dashboard-head.yaml

kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/master/aio/deploy/recommended/kubernetes-dashboard-head.yaml

ERROR: The UI will load to the certificate stage, then not allow you to actually log in.

- Install cert manager

a. The following code does not work on ARM32v7; a different cert manager is needed.

helm install stable/cert-manager \
  --name cert-manager \
  --namespace kube-system \
  --version v0.5.2

Raspberry Pi (ARM32v7) not supported????

sudo docker run -d --restart=unless-stopped -p 80:80 -p 443:443 -v /opt/rancher:/var/lib/rancher rancher/rancher:latest

Build code on Raspberry Pi/Odroid XU4

- Install Dapper

sudo curl -sL https://releases.rancher.com/dapper/latest/dapper-`uname -s`-`uname -m` -o /usr/local/bin/dapper;

sudo chmod +x /usr/local/bin/dapper

- Download rancher and build with dapper

git clone https://github.com/rancher/rancher

cd rancher

git checkout v2.1.9

export ARCH=arm64

export DAPPER_HOST_ARCH=arm

dapper

minio requirement - https://github.com/dvstate/minio/blob/master/Dockerfile.release.armhf

Sources are noted as bulleted hyperlinks preceding the cited information. For questions, comments, or missing citations, please contact the owner of this repository directly. Do not hesitate to submit a pull request and add on to this tutorial to better the open source Raspberry Pi cluster community.

See https://github.com/boyroywax/ansible-k3s-rpi

Error: having problems getting the NFS server to mount - Logs

helm repo update && \
helm install --name nfs-client-provisioner-arm \
  --namespace nfs-client-provisioner \
  --set nfs.server=odroid-01.local \
  --set nfs.path=/media/ssd/nfs \
  ./helm-nfs-client-provisioner-arm

Attempted solutions:

- Install nfs-common on all the k3s nodes

- Check permissions

- Set mount options: _netdev, auto, souid, nouid, vers=4.1

- Disable RBAC

- Disable security