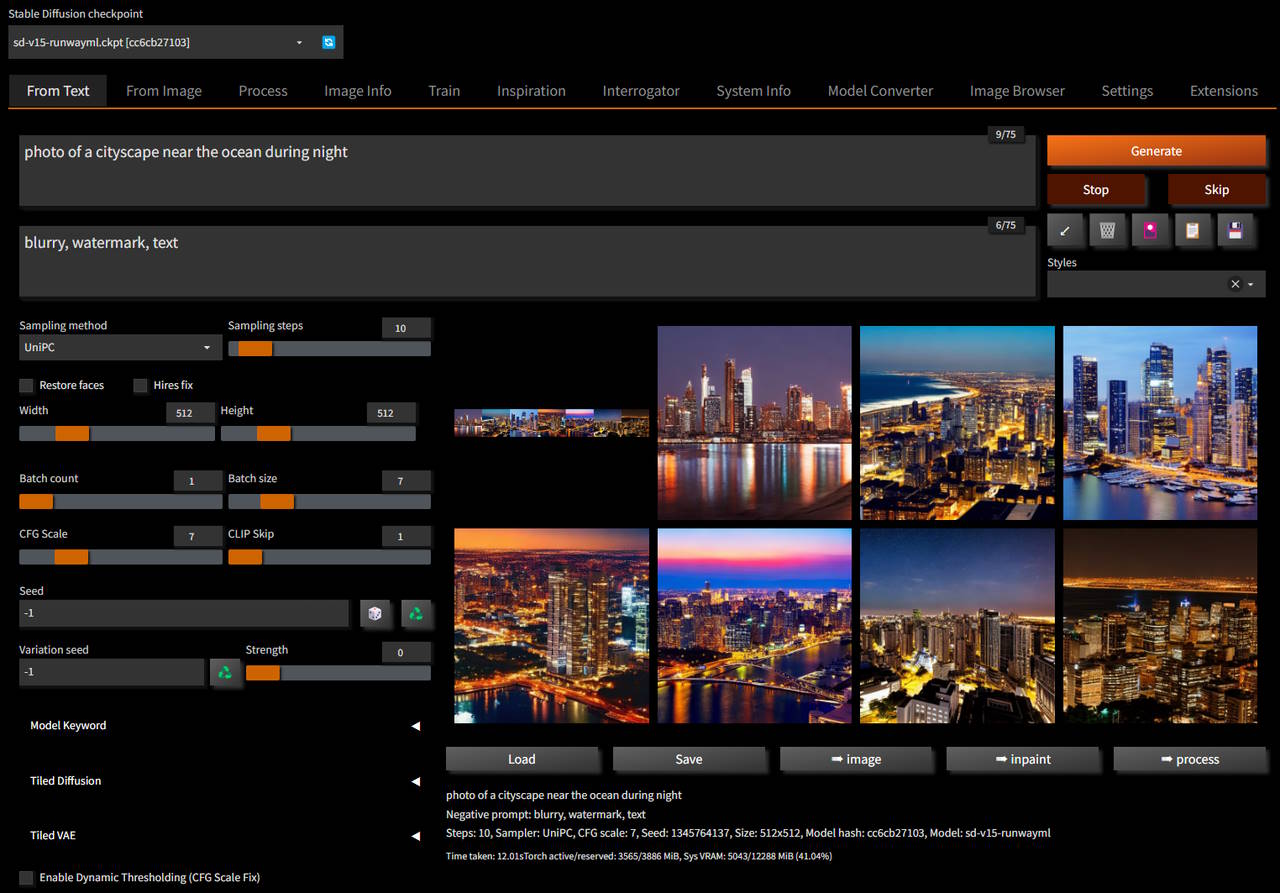

Heavily opinionated custom fork of https://github.com/AUTOMATIC1111/stable-diffusion-webui

The fork is kept as up to date with origin as time allows

All code changes are merged upstream whenever possible

The idea behind the fork is to enable the latest technologies and advances in text-to-image generation

Sometimes this is not the same as "as simple as possible to use"

If you are looking for an amazing simple-to-use Stable Diffusion tool, I'd suggest InvokeAI, specifically for its automated installer and ease of use

- New logger

- New error and exception handlers

- Built-in performance profiler

- Enhanced environment tuning

- Updated libraries to latest known compatible versions

- Includes opinionated System and Options configuration

- Does not rely on `Accelerate` as it only affects distributed systems

- Optimized startup

  Gradio web server is initialized much earlier while model load is done in the background

  Faster model loading plus ability to fall back when a model is corrupt

- Uses simplified folder structure

  e.g. `/train`, `/outputs/*`, `/models/*`, etc.

- Enhanced training templates

- Built-in `LoRA`, `LyCORIS`, `Custom Diffusion`, `Dreambooth` training

- Majority of settings configurable via UI without the need for command line flags

  e.g. cross-optimization methods, system folders, etc.

- Includes updated UI: reskinned and reorganized

  Black and orange dark theme with fixed width options panels and larger previews

- Optimized for `Torch` 2.0

- Runs with `SDP` memory attention enabled by default if supported by system

- Auto-adjust parameters when running on CPU or CUDA

  Note: AMD and M1 platforms are supported, but without out-of-the-box optimizations

- Drops compatibility with older versions of `python` and requires 3.9 or 3.10

- Drops localizations

- Drops automated tests
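The Python version requirement from the list above (3.9 or 3.10) can be checked before launching anything. This is a minimal sketch, not the installer's own check: the names `SUPPORTED_VERSIONS` and `is_supported` are made up for this example.

```python
import sys

# Minor versions named in this README as supported (3.9 and 3.10).
SUPPORTED_VERSIONS = {(3, 9), (3, 10)}


def is_supported(version=None) -> bool:
    """Return True if the (major, minor) part of `version` is supported.

    `version` is any sequence starting with (major, minor); when omitted,
    the version of the running interpreter is used.
    """
    if version is None:
        version = sys.version_info
    return tuple(version[:2]) in SUPPORTED_VERSIONS


if __name__ == "__main__":
    current = ".".join(str(part) for part in sys.version_info[:3])
    verdict = "supported" if is_supported() else "not supported"
    print(f"Python {current} is {verdict} by this fork")
```

Running the file with the interpreter you plan to use for the server prints whether that interpreter falls in the supported range.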

Fork adds extra functionality:

- New skin and UI layout

- Ships with a set of CLI tools that rely on SD API for execution:

  e.g. `generate`, `train`, `bench`, etc.

  Full list

- System Info

- ControlNet

- Image Browser

- LoRA (both training and inference)

- LyCORIS (both training and inference)

- Model Converter

- CLIP Interrogator

- Dynamic Thresholding

- Steps Animation

- Seed Travel

- Model Keyword

- Install first:

  Python & Git

- If you have an NVIDIA GPU, install the NVIDIA CUDA toolkit:

  https://developer.nvidia.com/cuda-downloads

- Clone repository

  `git clone https://github.com/vladmandic/automatic`

Run desired startup script to install dependencies and extensions and start server:

- `webui.bat` and `webui.sh`:

  Platform specific wrapper scripts for Windows, Linux and OSX

  Starts `launch.py` in a Python virtual environment (`venv`)

  Note: Server can run without virtual environment, but it is recommended to use it to avoid library version conflicts with other applications

  If you're unsure which launcher to use, this is the one you want

- `launch.py`:

  Main startup script

  Can be used directly to start server in a manually activated `venv` or to run server without `venv`

- `setup.py`:

  Main installer, used by `launch.py`

  Can also be used directly to update repository or extensions

  If running manually, make sure to activate `venv` first (if used)

- `webui.py`:

  Main server script
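When running `launch.py` or `setup.py` by hand, it can help to confirm that a virtual environment is actually active first. The sketch below uses only the standard library and is not part of the repository; the function name `in_virtualenv` is made up for this example.

```python
import sys


def in_virtualenv() -> bool:
    """Return True when the interpreter runs inside a venv-style environment."""
    # Inside a venv, sys.prefix points at the environment directory while
    # sys.base_prefix still points at the base Python installation.
    return sys.prefix != sys.base_prefix


if __name__ == "__main__":
    state = "inside" if in_virtualenv() else "outside"
    print(f"Running {state} a virtual environment (prefix: {sys.prefix})")
```

If this reports "outside" when you expected a `venv`, activate the environment before starting the scripts manually.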

Any of the above scripts can be used with --help to display detailed usage information and available parameters

For example:

`webui.bat --help`

Full startup sequence is logged in `setup.log`, so if you encounter any issues, please check it first
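The most recent entries are usually the most relevant when something fails at startup. The following sketch prints the last lines of the log. It assumes `setup.log` is a plain text file in the current working directory, which is an assumption rather than something this README states; the helper name `tail` is made up for this example.

```python
from collections import deque
from pathlib import Path


def tail(path, count=20):
    """Return the last `count` lines of a text file, without trailing newlines."""
    with Path(path).open("r", encoding="utf-8", errors="replace") as handle:
        # A bounded deque keeps only the newest `count` lines while reading.
        return [line.rstrip("\n") for line in deque(handle, maxlen=count)]


if __name__ == "__main__":
    log_file = Path("setup.log")  # assumption: log is in the current directory
    if log_file.exists():
        print("\n".join(tail(log_file)))
    else:
        print(f"{log_file} not found")
```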

The launcher can perform automatic update of main repository, requirements, extensions and submodules:

- Main repository:

  Update is not performed by default, enable with `--upgrade` flag

- Requirements:

  Check is performed on each startup and missing requirements are auto-installed, can be skipped with `--skip-requirements` flag

- Extensions and submodules:

  Update is performed on each startup and installer for each extension is started, can be skipped with `--skip-extensions` flag

- All checks can be skipped using `--quick` flag
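The way these flags combine can be modeled in a few lines. This is an illustrative sketch of the behavior described above, not the launcher's actual code: the function names and step labels are made up, and it assumes that `--quick` takes precedence over every other flag, including `--upgrade`, which the text above does not state explicitly.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    """Parser with only the four update-related flags named in this README."""
    parser = argparse.ArgumentParser(description="Model of the launcher update flags")
    parser.add_argument("--upgrade", action="store_true", help="update main repository")
    parser.add_argument("--skip-requirements", action="store_true", help="skip requirements check")
    parser.add_argument("--skip-extensions", action="store_true", help="skip extensions update")
    parser.add_argument("--quick", action="store_true", help="skip all checks")
    return parser


def planned_steps(argv):
    """Return the update steps that would run for the given argument list."""
    args = build_parser().parse_args(argv)
    if args.quick:
        # Assumption: --quick overrides everything else, including --upgrade.
        return []
    steps = []
    if args.upgrade:  # repository update is off by default
        steps.append("repository")
    if not args.skip_requirements:  # requirements check is on by default
        steps.append("requirements")
    if not args.skip_extensions:  # extensions update is on by default
        steps.append("extensions")
    return steps


if __name__ == "__main__":
    print(planned_steps(["--upgrade", "--skip-extensions"]))
```

Calling `planned_steps([])` returns the default behavior (requirements check and extensions update), while `planned_steps(["--quick"])` returns an empty list.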

This repository comes with a large collection of scripts that can be used to process inputs, train, generate, and benchmark models

It also includes a number of auxiliary scripts that do not rely on WebUI but can be used for end-to-end solutions, such as extracting frames from videos

For full details see Docs