Scalable data ingestion is a key aspect of a large-scale distributed search and analytics engine like OpenSearch. Sometimes your data pipeline has specific requirements that call for writing your own integration layer.

This blog post covers how to create a data pipeline in which data written to Apache Kafka is ingested into OpenSearch. We will use a custom Go application that relies on the Go clients for Kafka and OpenSearch to ingest the data.

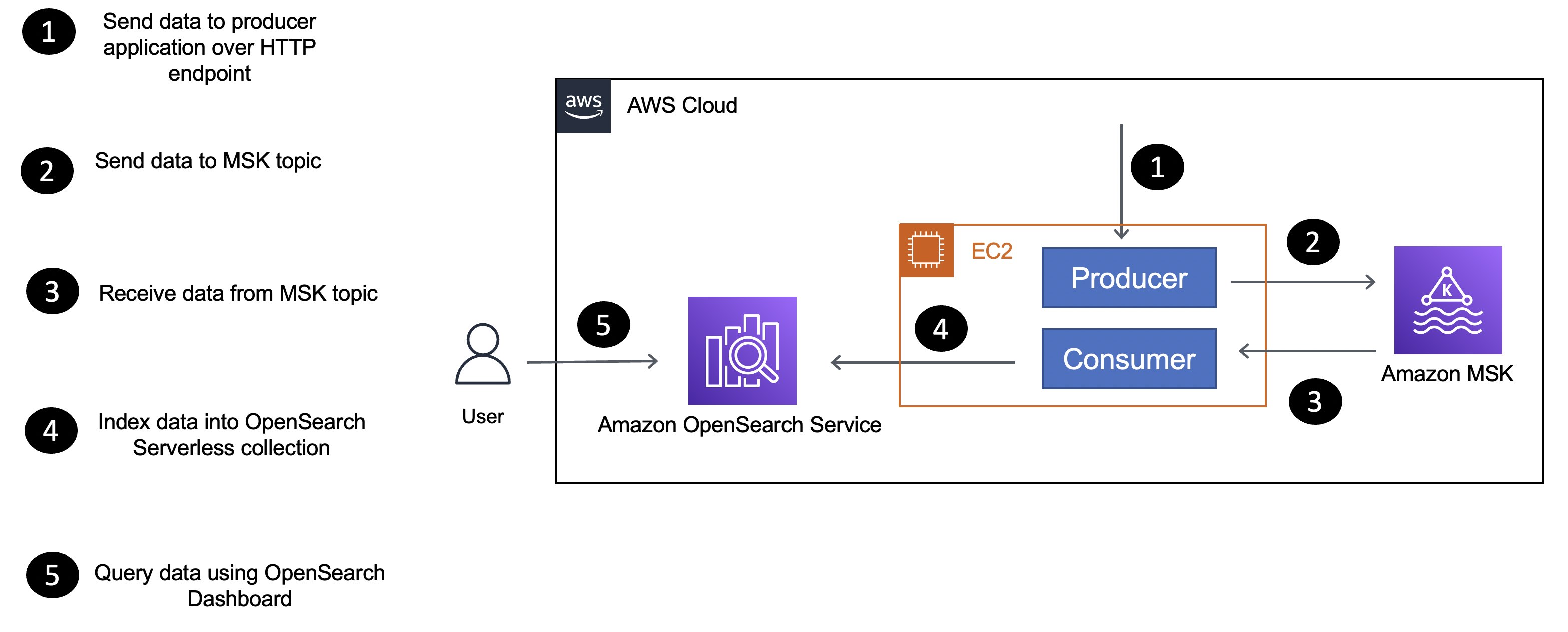

Here is a simplified version of the application architecture that outlines the components and how they interact with each other.

The application consists of producer and consumer components, which are Go applications deployed to an Amazon EC2 instance:

- The producer sends data to the Amazon MSK (Managed Streaming for Apache Kafka) Serverless cluster.

- The consumer application receives data (movie information) from the MSK Serverless topic and uses the OpenSearch Go client to index the data in the `movies` collection.

See CONTRIBUTING for more information.

This library is licensed under the MIT-0 License. See the LICENSE file.