α,β-CROWN (alpha-beta-CROWN): A Fast and Scalable Neural Network Verifier with Efficient Bound Propagation

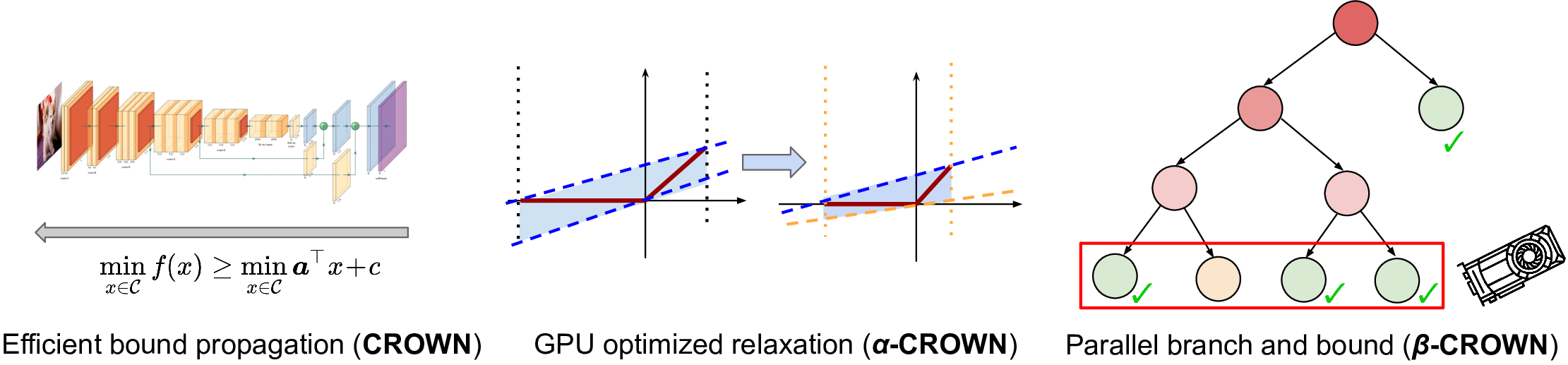

α,β-CROWN (alpha-beta-CROWN) is a neural network verifier based on an efficient bound propagation algorithm (CROWN) and branch and bound. It can be accelerated efficiently on GPUs and can scale to relatively large convolutional networks. It also supports a wide range of neural network architectures (e.g., CNN, ResNet, and various activation functions), thanks to the versatile auto_LiRPA library developed by us. α,β-CROWN can provide provable robustness guarantees against adversarial attacks and can also verify other general properties of neural networks.

α,β-CROWN is the winning verifier in VNN-COMP 2021 (International Verification of Neural Networks Competition) with the highest total score, outperforming 11 other neural network verifiers on a wide range of benchmarks. Details of competition results can be found in the slides here and the report here.

We support these verification algorithms:

- β-CROWN (Wang et al. 2021): complete verification with CROWN (Zhang et al. 2018) and branch and bound

- α-CROWN (Xu et al., 2021): incomplete verification with optimized CROWN bound

- MIP (Tjeng et al., 2017): mixed integer programming (slow but can be useful on small models).

We support these neural network architectures:

- Layers: fully connected (FC), convolutional (CNN), pooling (average pool and max pool)

- Activation functions: ReLU (incomplete/complete verification); sigmoid and tanh (incomplete verification)

- Residual connections

We support the following verification specifications:

- Lp norm perturbation (p=1,2,infinity, as often used in robustness verification)

- VNNLIB format input (at most two layers of AND/OR clause, as used in VNN-COMP 2021)

- Any linear specifications on neural network output (which can be added as a linear layer)

We also provide a few example configurations in

complete_verifier/exp_configs directory to

start with:

- MNIST: MLP and CNN models

- CIFAR: CNN and ResNet models

- ACASXu

α,β-CROWN is based on Python 3.7+ and PyTorch 1.8.x LTS. It can be installed easily into a conda environment. If you don't have conda, you can install miniconda.

# Remove the old environment, if necessary.

conda deactivate; conda env remove --name alpha-beta-crown

conda env create -f complete_verifier/environment.yml # install all dependents into the alpha-beta-crown environment

conda activate alpha-beta-crown # activate the environmentIf you use the α-CROWN and/or β-CROWN verifiers (which covers the most use

cases), a Gurobi license is not needed. If you want to use MIP based

verification algorithms (feasible only for small MLP models), you need to

install a Gurobi license with the grbgetkey command. If you don't have

access to a license, by default the above installation procedure includes a

free and restricted license, which is actually sufficient for many relatively

small NNs.

If you prefer to install packages manually, you can refer to this installation script.

If you want to run α,β-CROWN verifier on the VNN-COMP 2021 benchmarks (e.g., to make a comparison to a new verifier), you can follow this guide.

We provide three frontends for the verifier, depending on different use cases:

robustness_verifier.py: for Lp norm robustness verification, often used to certify the robustness of a neural networkbab_verification_general.py: for verifying VNNLIB format specifications, as in the MNIST/CIFAR models in VNN-COMP 2021bab_verification_input_split.py: for branch and bound on input space, such as the ACASXu model.

All parameters for the verifier are defined in a yaml config file. For example, to run robustness verification on a

CIFAR-10 ResNet network, you just run:

conda activate alpha-beta-crown # activate the conda environment

cd complete_verifier

python robustness_verifier.py --config exp_configs/cifar_resnet_2b.yamlYou can find explanations for most useful parameters in this example config

file. For detailed usage please see the

Usage Documentation. We also provide a large range of examples in

the complete_verifier/exp_configs folder.

We have also provided a tutorial example on colab for running α,β-CROWN.

It is easy to load and run your customized model using our verifier.

- Put your PyTorch model definition in

complete_verifier/model_defs.py, and set themodel/namefield in your configuration file. You can copy this configuration file as a template, or start with a config file incomplete_verifier/exp_configsthat is very close to your customized model. (This step is not needed if you usebab_verification_general.pywith onnx input) - Add your dataloader into the

load_verification_dataset()function incomplete_verifier/utils.py. If you just need the standard MNIST or CIFAR datasets, you can just set thedatasetfield in configuration toCIFARorMNISTand use the existing dataloader. Be careful with dataset normalization. (This step is not needed if you use avnnlibformat input withbab_verification_general.py, which already contains the data) - Set verification specifications and hypereparameters in the config file. All parameters are documented here. The default hyperparameters should work reasonably well.

- Run the verifier with your configuration file, e.g.

python robustness_verifier.py --config your_model.yaml.

For all supported options in config files, please see the Usage Documentation.

We have also provided tutorial examples on colab for α,β-CROWN customization.

If you use our verifier in your work, please kindly cite our CROWN(Zhang et al., 2018), α-CROWN (Xu et al., 2021) and β-CROWN(Wang et al., 2021) papers. If your work involves the convex relaxation of the NN verification please kindly cite Salman et al., 2019. If your work deals with ResNet/DenseNet, LSTM (recurrent networks), Transformer or other complex architectures, or model weight perturbations please kindly cite Xu et al., 2020.

α,β-CROWN combines our existing efforts on neural network verification:

-

CROWN (Zhang et al. NeurIPS 2018) is a very efficient bound propagation based verification algorithm. CROWN propagates a linear inequality backwards through the network and utilizes linear bounds to relax activation functions.

-

LiRPA (Xu et al., NeurIPS 2020) is a generalization of CROWN on general computational graphs and we also provide an efficient GPU implementation, the auto_LiRPA library.

-

The "convex relaxation barrier" (Salman et al., NeurIPS 2019) paper concludes that optimizing the ReLU relaxation allows CROWN (referred to as a "greedy" primal space solver) to achieve the same solution as linear programming (LP) based verifiers.

-

α-CROWN (sometimes referred to as optimized CROWN or optimized LiRPA) is used in the Fast-and-Complete verifier (Xu et al., ICLR 2021), which jointly optimizes intermediate layer bounds and final layer bounds in CROWN via variable α. α-CROWN typically has greater power than LP since LP cannot cheaply tighten intermediate layer bounds.

-

β-CROWN (Wang et al. 2021) incorporates split constraints in branch and bound (BaB) into the CROWN bound propagation procedure via an additional optimizable parameter β. The combination of efficient and GPU accelerated bound propagation with branch and bound produces a powerful and scalable neural network verifier.

We provide bibtex entries below:

@article{zhang2018efficient,

title={Efficient Neural Network Robustness Certification with General Activation Functions},

author={Zhang, Huan and Weng, Tsui-Wei and Chen, Pin-Yu and Hsieh, Cho-Jui and Daniel, Luca},

journal={Advances in Neural Information Processing Systems},

volume={31},

pages={4939--4948},

year={2018},

url={https://arxiv.org/pdf/1811.00866.pdf}

}

@article{xu2020automatic,

title={Automatic perturbation analysis for scalable certified robustness and beyond},

author={Xu, Kaidi and Shi, Zhouxing and Zhang, Huan and Wang, Yihan and Chang, Kai-Wei and Huang, Minlie and Kailkhura, Bhavya and Lin, Xue and Hsieh, Cho-Jui},

journal={Advances in Neural Information Processing Systems},

volume={33},

year={2020}

}

@article{salman2019convex,

title={A Convex Relaxation Barrier to Tight Robustness Verification of Neural Networks},

author={Salman, Hadi and Yang, Greg and Zhang, Huan and Hsieh, Cho-Jui and Zhang, Pengchuan},

journal={Advances in Neural Information Processing Systems},

volume={32},

pages={9835--9846},

year={2019}

}

@inproceedings{xu2021fast,

title={{Fast and Complete}: Enabling Complete Neural Network Verification with Rapid and Massively Parallel Incomplete Verifiers},

author={Kaidi Xu and Huan Zhang and Shiqi Wang and Yihan Wang and Suman Jana and Xue Lin and Cho-Jui Hsieh},

booktitle={International Conference on Learning Representations},

year={2021},

url={https://openreview.net/forum?id=nVZtXBI6LNn}

}

@article{wang2021beta,

title={{Beta-CROWN}: Efficient bound propagation with per-neuron split constraints for complete and incomplete neural network verification},

author={Wang, Shiqi and Zhang, Huan and Xu, Kaidi and Lin, Xue and Jana, Suman and Hsieh, Cho-Jui and Kolter, J Zico},

journal={Advances in Neural Information Processing Systems},

volume={34},

year={2021}

}

The α,β-CROWN verifier is developed by a team from CMU, Northeastern University, Columbia University and UCLA: (* indicates equal contribution)

- Huan Zhang* (CMU), huan@huan-zhang.com (Team lead)

- Kaidi Xu* (Northeastern University), xu.kaid@northeastern.edu

- Shiqi Wang* (Columbia University), sw3215@columbia.edu

- Zhouxing Shi (UCLA) zshi@cs.ucla.edu

- Yihan Wang (UCLA) yihanwang@ucla.edu

- Xue Lin (Northeastern University), xue.lin@northeastern.edu (advisor)

- Suman Jana (Columbia University), suman@cs.columbia.edu (advisor)

- Cho-Jui Hsieh (UCLA), chohsieh@cs.ucla.edu (advisor)

- Zico Kolter (CMU), zkolter@cs.cmu.edu (advisor)

Our library is released under the BSD 3-Clause license.