A simple web spider framework written in Python. Requires Python 3.5+.

- Supports multi-threaded crawling (using threading and requests)

- Automatically uses multiple processes for parsing (using multiprocessing)

- Supports crawling through proxies (using threading and queue)

- Provides utility functions and classes, for example UrlFilter, get_string_num, etc.

- Small codebase that is easy to read, understand, and extend
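
To illustrate what a URL filter does in a crawler, here is a minimal sketch. It is not the library's UrlFilter: the class name `SimpleUrlFilter`, its constructor argument, and the `check_and_add` method are all hypothetical, chosen only to show the idea of combining a pattern whitelist with a duplicate check.

```python
import re


class SimpleUrlFilter:
    """Hypothetical URL filter: pattern whitelist plus duplicate check.

    This is an illustration only, not the UrlFilter shipped with the spider.
    """

    def __init__(self, allow_patterns=(r"^https?://",)):
        # Compile the whitelist once so each check is cheap.
        self._allow = [re.compile(pattern) for pattern in allow_patterns]
        # URLs that have already been accepted.
        self._seen = set()

    def check_and_add(self, url):
        """Return True only the first time an allowed URL is seen."""
        if not any(pattern.search(url) for pattern in self._allow):
            return False
        if url in self._seen:
            return False
        self._seen.add(url)
        return True


url_filter = SimpleUrlFilter()
first = url_filter.check_and_add("http://example.com/")    # new and allowed
second = url_filter.check_and_add("http://example.com/")   # duplicate
third = url_filter.check_and_add("ftp://example.com/")     # not whitelisted
```

A plain set is enough for small crawls; a larger crawl might swap it for a more memory-efficient structure.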

- utilities module: defines utility functions and classes for the multi-threaded spider

- instances module: defines the fetcher, parser, and saver classes for the multi-threaded spider

- concurrent module: defines WebSpiderFrame for the multi-threaded spider

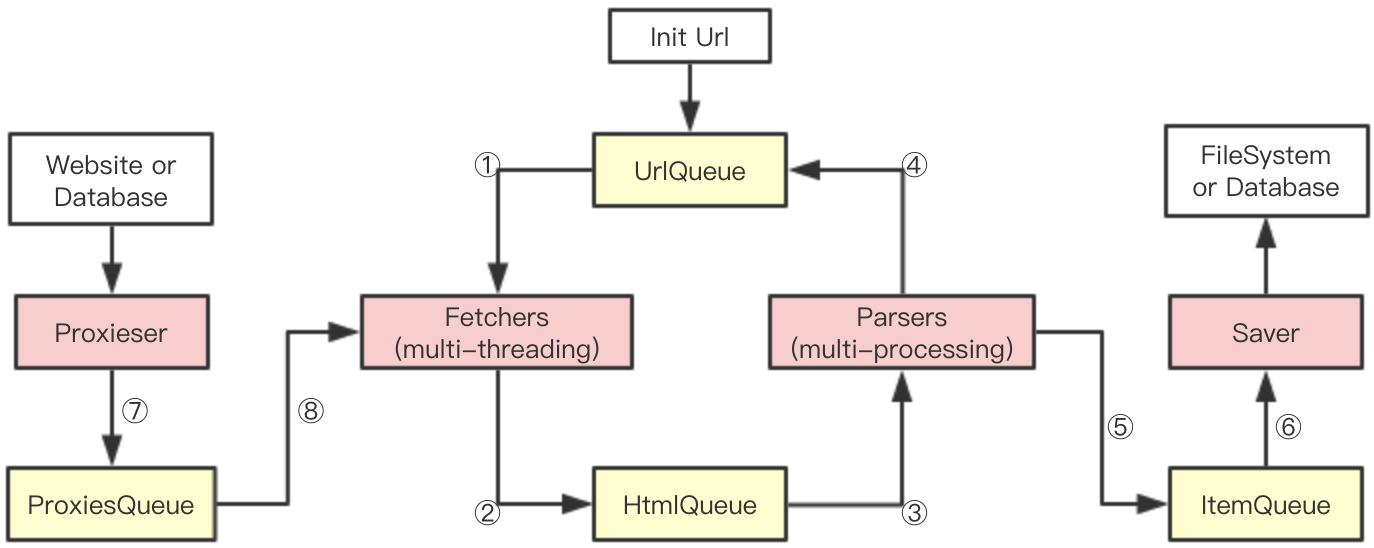

①: A Fetcher gets a URL from UrlQueue and makes a request for that URL

②: The result of ① is put into HtmlQueue so that the Parser can get it

③: The Parser gets an item from HtmlQueue and parses it to extract new URLs and the items that need to be saved

④: The new URLs are put into UrlQueue so that a Fetcher can get them

⑤: The items are put into ItemQueue so that the Saver can get them

⑥: The Saver gets an item from ItemQueue and saves it to the filesystem or a database

⑦: The Proxieser gets proxies from the web or a database and puts them into ProxiesQueue

⑧: A Fetcher gets a proxy from ProxiesQueue if needed and makes its request through that proxy
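
Steps ① to ⑥ above can be sketched with the standard library alone. The following is a simplified illustration, not the framework's actual implementation: the function `run_pipeline`, its parameters, and the in-memory `pages` mapping (which stands in for real HTTP requests) are assumptions made for this example, and the proxy steps ⑦ and ⑧ are left out.

```python
import queue
import threading


def run_pipeline(start_url, pages, fetcher_count=3):
    """Crawl `pages` (a dict mapping url -> list of linked urls).

    Hypothetical sketch: `pages` replaces real network access so the
    example runs offline.
    """
    url_queue = queue.Queue()
    html_queue = queue.Queue()
    item_queue = queue.Queue()
    seen = set()
    seen_lock = threading.Lock()
    saved = []

    def add_url(url):
        # Enqueue each URL at most once.
        with seen_lock:
            if url in seen:
                return
            seen.add(url)
        url_queue.put(url)

    def fetcher():
        while True:
            url = url_queue.get()                  # step 1
            links = pages.get(url, [])             # stands in for a request
            html_queue.put((url, links))           # step 2

    def parser():
        while True:
            url, links = html_queue.get()          # step 3
            for link in links:
                add_url(link)                      # step 4
            item_queue.put({"url": url, "links": len(links)})  # step 5
            # A URL counts as finished only after it has been parsed, so
            # url_queue.join() cannot return while work is still pending.
            url_queue.task_done()

    def saver():
        while True:
            saved.append(item_queue.get())         # step 6
            item_queue.task_done()

    for target in [fetcher] * fetcher_count + [parser, saver]:
        threading.Thread(target=target, daemon=True).start()

    add_url(start_url)
    url_queue.join()    # every URL fetched and parsed
    item_queue.join()   # every item saved
    return saved


demo_pages = {"a": ["b", "c"], "b": ["c"], "c": ["a"]}
demo_items = run_pipeline("a", demo_pages)
```

Marking a URL as done in the parser rather than in the fetcher is a design choice of this sketch: new URLs are always enqueued before their parent is marked finished, which gives a simple and reliable termination condition.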

Installation (the first method is recommended):

(1) Copy the "spider" directory into your project directory, then import spider

(2) Install spider into your Python environment using python3 setup.py install

See test.py

- Distributed spider

- Execute JavaScript

- More Demos