To access the archived version of the tool, navigate to the Archive branch.

- Azure Cosmos DB Desktop Data Migration Tool

The Azure Cosmos DB Desktop Data Migration Tool is an open-source project containing a command-line application that provides import and export functionality for Azure Cosmos DB.

To use the tool, download the latest zip file for your platform (win-x64, mac-x64, or linux-x64) from Releases and extract all files to your desired install location. To begin a data transfer operation, first populate the migrationsettings.json file with appropriate settings for your data source and sink (see detailed instructions below or review examples), and then run the application from a command line: dmt.exe on Windows or dmt on other platforms.

Multiple extensions are provided in this repository. Find the documentation for the usage and configuration of each using the links provided:

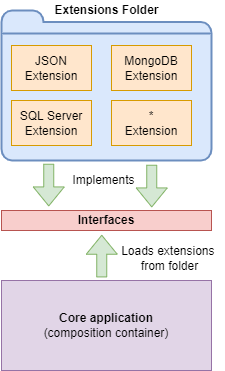

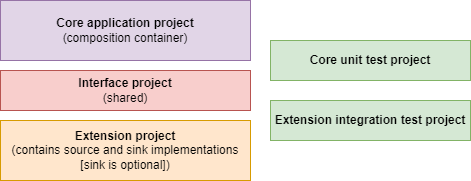

The Azure Cosmos DB Desktop Data Migration Tool is a lightweight executable that leverages the Managed Extensibility Framework (MEF). MEF enables decoupled implementation of the core project and its extensions. The core application is a command-line executable responsible for composing the required extensions at runtime by automatically loading them from the Extensions folder of the application. An Extension is a class library that includes the implementation of a System as a Source and (optionally) Sink for data transfer. The core application project does not contain direct references to any extension implementation. Instead, these projects share a common interface.

The Cosmos DB Data Migration Tool core project is a C# command-line executable. The core application serves as the composition container for the required Source and Sink extensions. Therefore, the application user needs to put only the desired Extension class library assembly into the Extensions folder before running the application. In addition, the core project has a unit test project to exercise the application's behavior, whereas extension projects contain concrete integration tests that rely on external systems.
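The composition idea described above can be illustrated with a small sketch. It is written in Python rather than C#, it does not use MEF, and every name in it (`export_source`, `export_sink`, `run_transfer`, and the `Demo-List` and `Demo-Memory` extensions) is hypothetical. It only shows the general pattern: extensions register themselves under a name, and the core picks a source and a sink by name without referencing either implementation directly. Exact-match name lookup is an assumption of this sketch.

```python
from typing import Callable, Dict, Iterable, Iterator

Item = Dict[str, object]
SourceFn = Callable[[dict], Iterable[Item]]
SinkFn = Callable[[Iterable[Item], dict], int]

# Registries stand in for the composition container (hypothetical, not MEF).
SOURCES: Dict[str, SourceFn] = {}
SINKS: Dict[str, SinkFn] = {}


def export_source(display_name: str) -> Callable[[SourceFn], SourceFn]:
    """Register a reader under a display name."""
    def register(fn: SourceFn) -> SourceFn:
        SOURCES[display_name] = fn
        return fn
    return register


def export_sink(display_name: str) -> Callable[[SinkFn], SinkFn]:
    """Register a writer under a display name."""
    def register(fn: SinkFn) -> SinkFn:
        SINKS[display_name] = fn
        return fn
    return register


@export_source("Demo-List")
def read_list(settings: dict) -> Iterator[Item]:
    # Yields items supplied directly in the settings (illustration only).
    yield from settings["Items"]


@export_sink("Demo-Memory")
def write_memory(items: Iterable[Item], settings: dict) -> int:
    # Appends every item to a caller-supplied list and returns the count.
    written = 0
    for item in items:
        settings["Target"].append(item)
        written += 1
    return written


def run_transfer(settings: dict) -> int:
    """Core logic: look up both extensions by name, then stream items across."""
    source_name, sink_name = settings["Source"], settings["Sink"]
    if source_name not in SOURCES:
        raise LookupError(f"No source extension named {source_name!r}")
    if sink_name not in SINKS:
        raise LookupError(f"No sink extension named {sink_name!r}")
    items = SOURCES[source_name](settings.get("SourceSettings", {}))
    return SINKS[sink_name](items, settings.get("SinkSettings", {}))


if __name__ == "__main__":
    stored: list = []
    count = run_transfer({
        "Source": "Demo-List",
        "Sink": "Demo-Memory",
        "SourceSettings": {"Items": [{"id": "1"}, {"id": "2"}]},
        "SinkSettings": {"Target": stored},
    })
    print(count)  # prints 2
```

Because `run_transfer` only knows the two registries, adding or removing an extension does not require any change to the core function, which mirrors the decoupling the paragraphs above describe.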

This project has adopted the Microsoft Open Source Code of Conduct. For more information see the Code of Conduct FAQ or contact opencode@microsoft.com with any additional questions or comments.

- From a command prompt, execute the following command in an empty working folder that will house the source code.

  ```shell
  git clone https://github.com/AzureCosmosDB/data-migration-desktop-tool.git
  ```

- Using Visual Studio 2022, open `CosmosDbDataMigrationTool.sln`.

- Build the project using the keyboard shortcut Ctrl+Shift+B (Cmd+Shift+B on a Mac). This builds all current extension projects as well as the command-line Core application. The assemblies built by the extension projects are written to the Extensions folder of the Core application build, so all extension options are available when the application runs.

This tutorial outlines how to use the Azure Cosmos DB Desktop Data Migration Tool to move JSON data to Azure Cosmos DB. This tutorial uses the Azure Cosmos DB Emulator.

- Visual Studio 2022

- .NET 6.0 SDK

- Azure Cosmos DB Emulator or Azure Cosmos DB resource.

Task 1: Provision a sample database and container using the Azure Cosmos DB Emulator as the destination (sink)

-

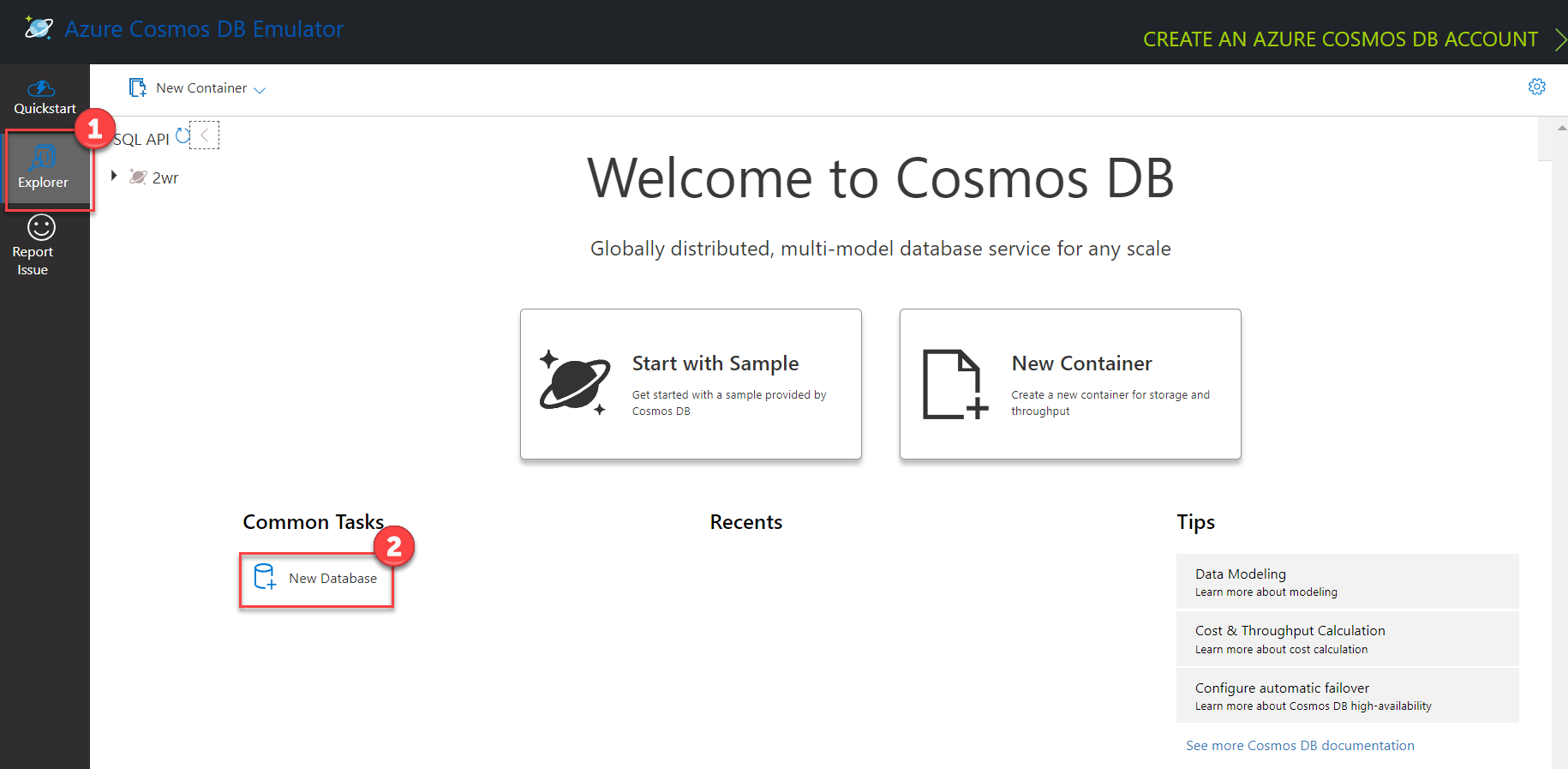

Launch the Azure Cosmos DB emulator application and open https://localhost:8081/_explorer/index.html in a browser.

-

Select the Explorer option from the left menu. Then choose the New Database link found beneath the Common Tasks heading.

-

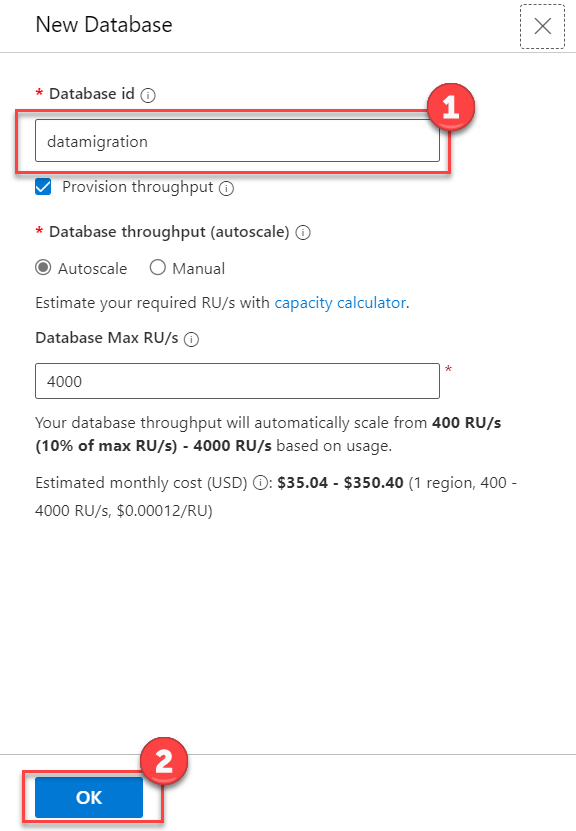

On the New Database blade, enter

datamigrationin the Database id field, then select OK. -

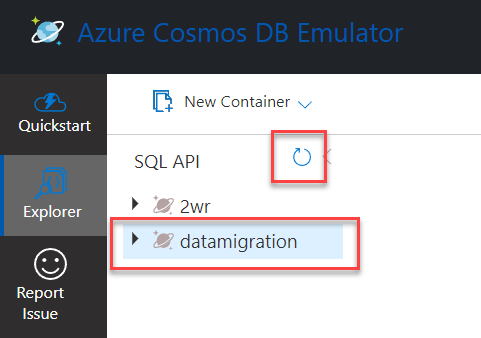

If the datamigration database doesn't appear in the list of databases, select the Refresh icon.

-

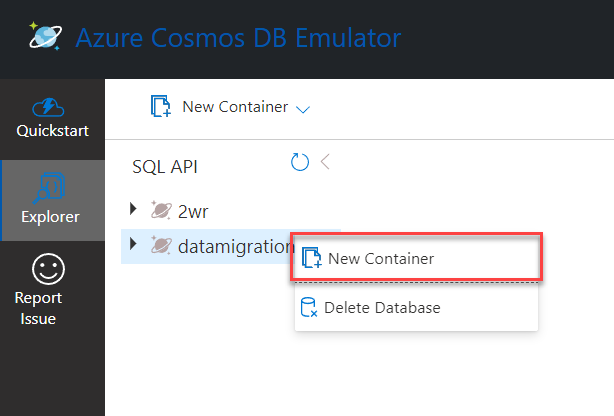

Expand the ellipsis menu next to the datamigration database and select New Container.

-

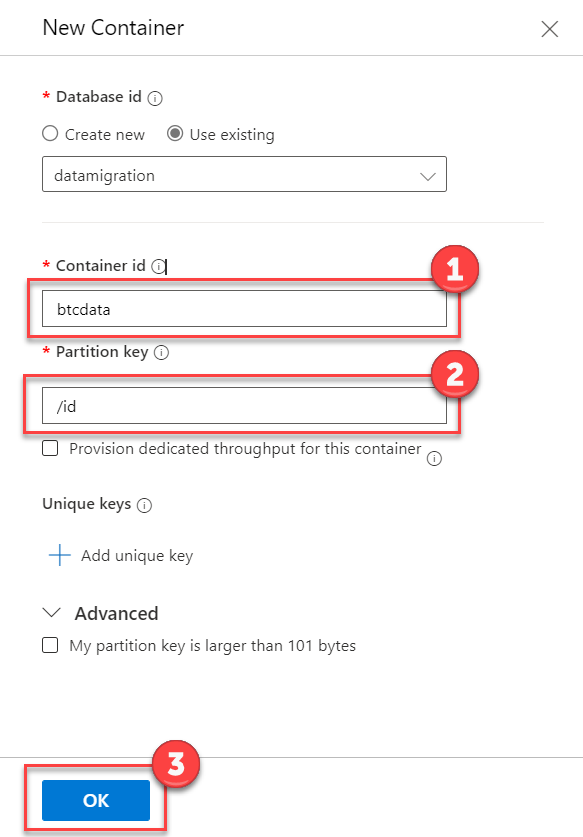

On the New Container blade, enter

btcdatain the Container id field, and/idin the Partition key field. Select the OK button.Note: When using the Cosmos DB Data Migration tool, the container doesn't have to previously exist, it will be created automatically using the partition key specified in the sink configuration.

- Locate the docs/resources/sample-data.zip file. Extract the files to any desired folder. These files serve as the JSON data that is to be migrated to Cosmos DB.

- Each extension contains a README document that outlines configuration for the data migration. In this case, locate the configuration for JSON (Source) and Cosmos DB (Sink).

-

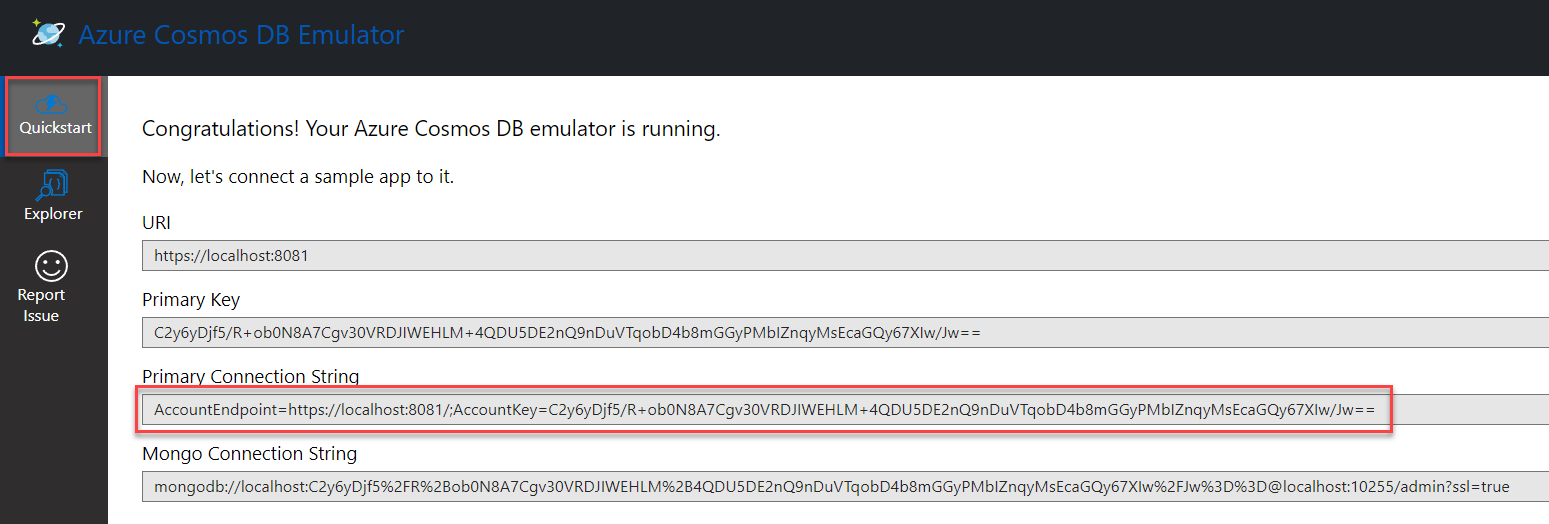

In the Visual Studio Solution Explorer, expand the Microsoft.Data.Transfer.Core project, and open migrationsettings.json. This file provides an example outline of the settings file structure. Using the documentation linked above, configure the SourceSettings and SinkSettings sections. Ensure the FilePath setting is the location where the sample data is extracted. The ConnectionString setting can be found on the Cosmos DB Emulator Quickstart screen as the Primary Connection String. Save the file.

  Note: The alternate terms Target and Destination can be used in place of Sink in configuration files and command line parameters. For example, `"Target"` and `"TargetSettings"` would also be valid in the example below.

  ```json
  {
    "Source": "JSON",
    "Sink": "Cosmos-nosql",
    "SourceSettings": {
      "FilePath": "C:\\btcdata\\simple_json.json"
    },
    "SinkSettings": {
      "ConnectionString": "AccountEndpoint=https://localhost:8081/;AccountKey=C2y6yDj...",
      "Database": "datamigration",
      "Container": "btcdata",
      "PartitionKeyPath": "/id",
      "RecreateContainer": false,
      "IncludeMetadataFields": false
    }
  }
  ```

- Ensure the Cosmos.DataTransfer.Core project is set as the startup project, then press F5 to run the application.

- The application then performs the data migration. After a few moments the process will indicate "Data transfer complete." or "Data transfer failed."

  Note: The `Source` and `Sink` properties should match the DisplayName set in the code for the extensions.
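For readers who prefer to generate the settings file rather than edit it by hand, the tutorial configuration can be sketched as a small Python script. This is an illustrative helper, not part of the tool: `build_settings`, `write_settings`, and the `sink_term` parameter are hypothetical names, and the setting values simply repeat the example above. The `DestinationSettings` key is inferred from the note about alternate terms, which only spells out `TargetSettings`.

```python
import json
from pathlib import Path

# Alternate terms for the sink, as listed in the note above.
SINK_TERMS = ("Sink", "Target", "Destination")


def build_settings(file_path: str, connection_string: str,
                   sink_term: str = "Sink") -> dict:
    """Build the tutorial's JSON-to-Cosmos settings as a dictionary."""
    if sink_term not in SINK_TERMS:
        raise ValueError(f"sink_term must be one of {SINK_TERMS}")
    return {
        "Source": "JSON",
        sink_term: "Cosmos-nosql",
        "SourceSettings": {"FilePath": file_path},
        f"{sink_term}Settings": {
            "ConnectionString": connection_string,
            "Database": "datamigration",
            "Container": "btcdata",
            "PartitionKeyPath": "/id",
            "RecreateContainer": False,
            "IncludeMetadataFields": False,
        },
    }


def write_settings(settings: dict, path: str) -> Path:
    """Serialize the settings to a JSON file and return its path."""
    target = Path(path)
    target.write_text(json.dumps(settings, indent=2), encoding="utf-8")
    return target


if __name__ == "__main__":
    # Replace the placeholder with the emulator's Primary Connection String.
    settings = build_settings(r"C:\btcdata\simple_json.json",
                              "<Primary Connection String>")
    print(json.dumps(settings, indent=2))
```

One practical benefit of generating the file is that `json.dumps` escapes the backslashes in Windows paths, which is easy to get wrong when typing `C:\\btcdata\\...` manually.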

- Ensure the project is built.

- The Extensions folder contains the plug-ins available for use in the migration. Each extension is located in a folder with the name of the data source. For example, the Cosmos DB extension is located in the folder Cosmos. Before running the application, you can open the Extensions folder and remove any folders for the extensions that are not required for the migration.

- In the root of the build folder, locate the migrationsettings.json and update settings as documented in the Extension documentation. Example file (similar to tutorial above):

  ```json
  {
    "Source": "JSON",
    "Sink": "Cosmos-nosql",
    "SourceSettings": {
      "FilePath": "C:\\btcdata\\simple_json.json"
    },
    "SinkSettings": {
      "ConnectionString": "AccountEndpoint=https://localhost:8081/;AccountKey=C2y6yDj...",
      "Database": "datamigration",
      "Container": "btcdata",
      "PartitionKeyPath": "/id",
      "RecreateContainer": false,
      "IncludeMetadataFields": false
    }
  }
  ```

  Note: migrationsettings.json can also be configured to execute multiple data transfer operations with a single run command. To do this, include an `Operations` property consisting of an array of objects that include `SourceSettings` and `SinkSettings` properties using the same format as those shown above for single operations. Additional details and examples can be found in this blog post.

- Execute the program using the following command:

  ```shell
  dmt.exe
  ```

  Note: Use the `--settings` option with a file path to specify a different settings file (overriding the default migrationsettings.json file). This makes it easier to run different migration jobs from a programmatic loop.
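The two notes above (multiple operations in one file, and `--settings` for alternate files) can be combined in a short automation sketch. The helper names `build_operations_settings`, `dmt_command`, and `run_jobs` are hypothetical. Keeping `Source` and `Sink` at the top level next to `Operations` is an assumption, since the note only describes the contents of the array. The sketch also assumes the executable is on the PATH or in the current folder, and it records exit codes without interpreting them, because the meaning of the tool's exit codes is not documented here.

```python
import json
import subprocess
import sys
from typing import Dict, Iterable, List

# Executable name per platform, as described at the top of this document.
DEFAULT_EXECUTABLE = "dmt.exe" if sys.platform == "win32" else "dmt"


def build_operations_settings(source: str, sink: str,
                              operations: Iterable[dict]) -> dict:
    """Build one settings document that describes several transfers.

    Each operation is a dict with SourceSettings and SinkSettings, in the
    same format used for a single transfer.
    """
    return {"Source": source, "Sink": sink, "Operations": list(operations)}


def dmt_command(settings_file: str,
                executable: str = DEFAULT_EXECUTABLE) -> List[str]:
    """Return the argument list that runs the tool with a given settings file."""
    return [executable, "--settings", str(settings_file)]


def run_jobs(settings_files: Iterable[str],
             executable: str = DEFAULT_EXECUTABLE) -> Dict[str, int]:
    """Run one migration per settings file and collect the exit codes."""
    exit_codes: Dict[str, int] = {}
    for settings_file in settings_files:
        completed = subprocess.run(dmt_command(settings_file, executable))
        exit_codes[str(settings_file)] = completed.returncode
    return exit_codes


if __name__ == "__main__":
    settings = build_operations_settings("JSON", "Cosmos-nosql", [
        {
            "SourceSettings": {"FilePath": "C:\\btcdata\\simple_json.json"},
            "SinkSettings": {"ConnectionString": "<connection string>",
                             "Database": "datamigration",
                             "Container": "btcdata",
                             "PartitionKeyPath": "/id"},
        },
        {
            "SourceSettings": {"FilePath": "C:\\btcdata\\more_data.json"},
            "SinkSettings": {"ConnectionString": "<connection string>",
                             "Database": "datamigration",
                             "Container": "btcdata2",
                             "PartitionKeyPath": "/id"},
        },
    ])
    print(json.dumps(settings, indent=2))
    print(dmt_command("jobs/nightly.json"))
```

The file name `more_data.json`, the container `btcdata2`, and the path `jobs/nightly.json` are placeholders. Calling `run_jobs(["jobs/a.json", "jobs/b.json"])` would launch the tool once per file.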

- Decide what type of extension you want to create. There are three different types of extensions, and each of those can be implemented to read data, write data, or both.

  - DataSource/DataSink extension: Appropriate for data sources which include both a native data format and storage. Most databases fall under this category and generally your extension will be written using an SDK specific to that type of database. For example, SQL Server uses data structured as tables and is accessed through drivers that handle underlying communication with the database.

  - Binary File Storage extension: Only concerned with the storage of binary files and is agnostic to the specific file format. Examples include files on local disk or cloud blob storage providers. This type of extension can be used by any File Format extension.

  - File Format extension: Handles translating data for a specific binary file format but is agnostic to storage. Examples include JSON or Parquet. This type of extension can be combined with any Binary File Storage extension to create multiple DataSource/DataSink extensions.

- Add a new folder in the Extensions folder with the name of your extension.

- Create the extension project and an accompanying test project.

  - The naming convention for extension projects is `Cosmos.DataTransfer.<Name>Extension`.

  - Extension projects should use the .NET 6 framework and the Console Application type. A Program.cs file must be included in order to build the console project. A Console Application project is required so that the build includes NuGet referenced packages.

    Binary File Storage extensions are only used in combination with other extensions, so they should be placed in a .NET 6 Class Library without the additional debugging configuration needed below.

- Add the new projects to the `CosmosDbDataMigrationTool` solution.

- In order to facilitate local debugging, the extension build output along with any dependencies needs to be copied into the `Core\Cosmos.DataTransfer.Core\bin\Debug\net6.0\Extensions` folder. To set up the project to copy automatically, add the following changes.

  - Add a Publish Profile to Folder named `LocalDebugFolder` with a Target Location of `..\..\..\Core\Cosmos.DataTransfer.Core\bin\Debug\net6.0\Extensions`

  - To publish every time the project builds, edit the .csproj file to add a new post-build step:

    ```xml
    <Target Name="PublishDebug" AfterTargets="Build" Condition=" '$(Configuration)' == 'Debug' ">
      <Exec Command="dotnet publish --no-build -p:PublishProfile=LocalDebugFolder" />
    </Target>
    ```

- Add references to the `System.ComponentModel.Composition` NuGet package and the `Cosmos.DataTransfer.Interfaces` project.

- Extensions can implement either `IDataSourceExtension` to read data or `IDataSinkExtension` to write data. Classes implementing these interfaces should include a class level `System.ComponentModel.Composition.ExportAttribute` with the implemented interface type as a parameter. This will allow the plugin to get picked up by the main application.

  - Binary File Storage extensions implement the `IComposableDataSource` or `IComposableDataSink` interfaces. To be used with different file formats, the projects containing the formatters should reference the extension's project and add new `CompositeSourceExtension` or `CompositeSinkExtension` referencing the storage and formatter extensions.

  - File Format extensions implement the `IFormattedDataReader` or `IFormattedDataWriter` interfaces. In order to be usable each should also declare one or more `CompositeSourceExtension` or `CompositeSinkExtension` to define available storage locations for the format. This will require adding references to Storage extension projects and adding a declaration for each file format/storage combination. Example:

    ```csharp
    [Export(typeof(IDataSinkExtension))]
    public class JsonAzureBlobSink : CompositeSinkExtension<AzureBlobDataSink, JsonFormatWriter>
    {
        public override string DisplayName => "JSON-AzureBlob";
    }
    ```

  - Settings needed by the extension can be retrieved from any standard .NET configuration source in the main application by using the `IConfiguration` instance passed into the `ReadAsync` and `WriteAsync` methods. Settings under `SourceSettings`/`SinkSettings` will be included as well as any settings included in JSON files specified by the `SourceSettingsPath`/`SinkSettingsPath`.

- Implement your extension to read and/or write using the generic `IDataItem` interface, which exposes object properties as a list of key-value pairs. Depending on the specific structure of the data storage type being implemented, you can choose to support nested objects and arrays or only flat top-level properties.

  Binary File Storage extensions are only concerned with generic storage, so they only work with `Stream` instances representing whole files rather than individual `IDataItem` instances.
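The split between File Format and Binary File Storage extensions can be illustrated with a short sketch. It is a Python analogy rather than the C# interfaces named above, and all names in it (`json_format_writer`, `MemoryStorageSink`, `CompositeSink`, `JSON-Memory`) are hypothetical. The point it tries to show is the division of responsibility: the formatter turns items into bytes without knowing where they go, the storage component persists a whole stream without knowing its format, and a composite ties one of each together under a display name.

```python
import io
import json
from typing import Callable, Dict, Iterable

Item = Dict[str, object]
FormatWriter = Callable[[Iterable[Item], io.BytesIO], None]


def json_format_writer(items: Iterable[Item], stream: io.BytesIO) -> None:
    """File Format role: serialize items to bytes, unaware of the storage."""
    stream.write(json.dumps(list(items)).encode("utf-8"))


class MemoryStorageSink:
    """Binary File Storage role: keeps whole streams, unaware of the format."""

    def __init__(self) -> None:
        self.files: Dict[str, bytes] = {}

    def store(self, name: str, stream: io.BytesIO) -> None:
        self.files[name] = stream.getvalue()


class CompositeSink:
    """Combines one storage component and one format writer into a sink."""

    def __init__(self, storage: MemoryStorageSink, writer: FormatWriter,
                 display_name: str) -> None:
        self.storage = storage
        self.writer = writer
        self.display_name = display_name

    def write(self, items: Iterable[Item], settings: dict) -> None:
        buffer = io.BytesIO()
        self.writer(items, buffer)          # format step
        self.storage.store(settings["FilePath"], buffer)  # storage step


if __name__ == "__main__":
    storage = MemoryStorageSink()
    sink = CompositeSink(storage, json_format_writer, "JSON-Memory")
    # Nested values are passed through here; a flat-only format would reject them.
    sink.write([{"id": "1", "tags": ["a", "b"]}], {"FilePath": "out.json"})
    print(storage.files["out.json"].decode("utf-8"))
```

Swapping in a different writer function or a different storage class changes only one argument of `CompositeSink`, which is the same reason a single declaration per format/storage pair is enough in the C# example above.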