Swalpa Kumar Roy, Ankur Deria, Danfeng Hong, Behnood Rasti, Antonio Plaza, and Jocelyn Chanussot

Get the disjoint dataset (Trento11x11 folder) from Google Drive.

Get the disjoint dataset (Houston11x11 folder) from Google Drive.

Get the disjoint dataset (MUUFL11x11 folder) from Google Drive.
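The folder names above (Trento11x11, Houston11x11, MUUFL11x11) suggest that samples are stored as 11 × 11 patches. As a rough illustration of what such a patch looks like, the sketch below cuts a patch around one pixel from an HSI cube and a co-registered LiDAR raster. The function name `extract_patch`, the reflect padding, and the synthetic array sizes are assumptions made for this example, not the repository's actual preprocessing.

```python
import numpy as np

PATCH = 11  # patch size suggested by the folder names (an assumption)


def extract_patch(cube: np.ndarray, row: int, col: int, size: int = PATCH) -> np.ndarray:
    """Return a size x size x bands patch centred on pixel (row, col).

    Hypothetical helper: borders are handled with reflect padding, which is
    one common choice and not necessarily what the authors used.
    """
    half = size // 2
    # Pad only the two spatial axes; the band axis is left untouched.
    padded = np.pad(cube, ((half, half), (half, half), (0, 0)), mode="reflect")
    # After padding, pixel (row, col) sits at (row + half, col + half),
    # so the window starting at (row, col) is centred on it.
    return padded[row:row + size, col:col + size, :]


# Synthetic stand-ins for a co-registered HSI cube and LiDAR raster.
rng = np.random.default_rng(0)
hsi = rng.random((40, 30, 63)).astype(np.float32)    # height x width x bands
lidar = rng.random((40, 30, 1)).astype(np.float32)   # single elevation raster

hsi_patch = extract_patch(hsi, 0, 0)
lidar_patch = extract_patch(lidar, 0, 0)
print(hsi_patch.shape, lidar_patch.shape)
```

Padding the whole cube on every call keeps the sketch short; for many pixels it would be cheaper to pad once and slice repeatedly.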

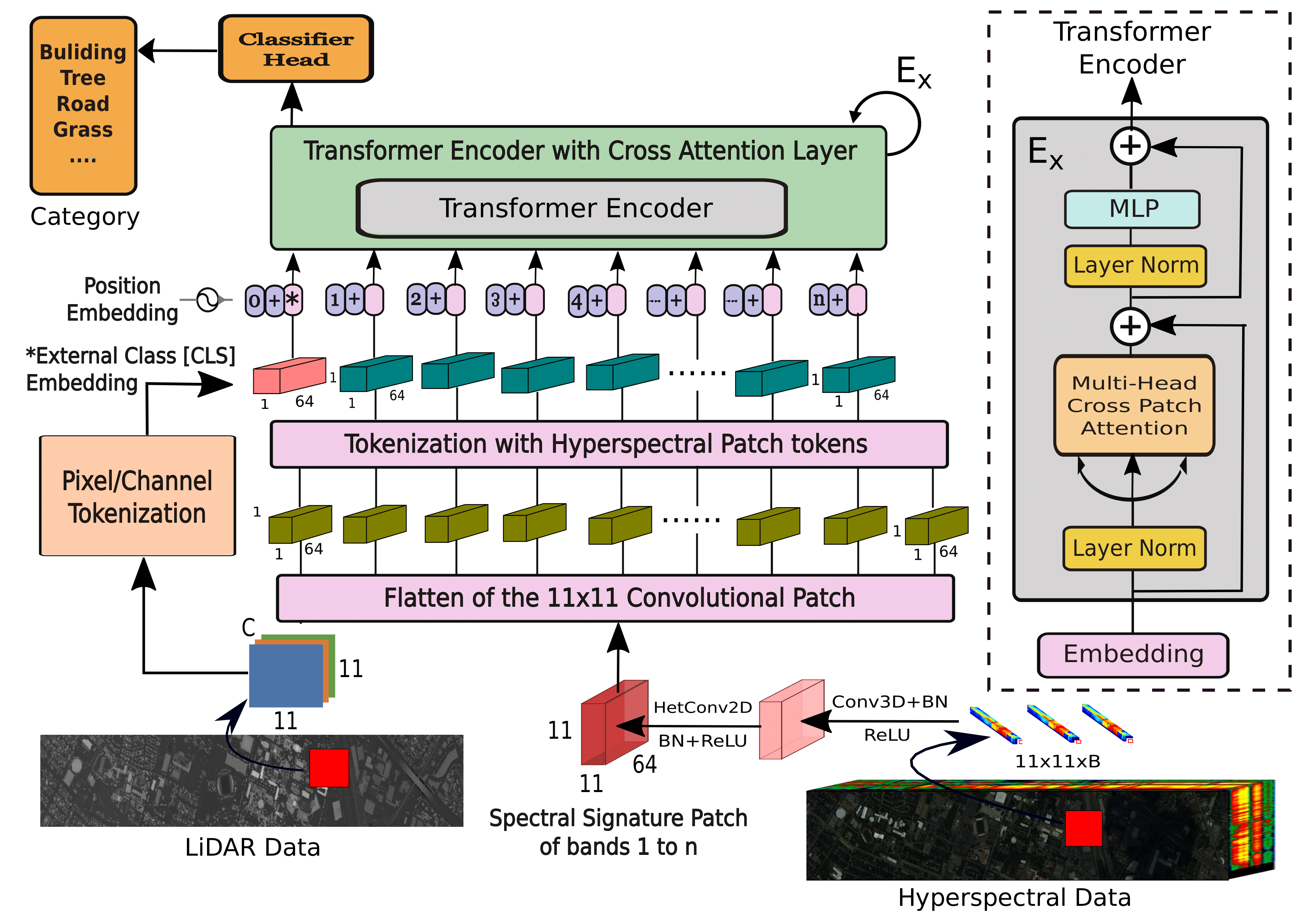

This repository contains the implementation of the Multimodal Fusion Transformer (MFT) for remote sensing image classification.

- Trento: AISA Eagle sensors collected the HSI data over rural regions south of Trento, Italy, and Optech ALTM 3100EA sensors collected the LiDAR data. The HSI has 63 bands with wavelengths ranging from 0.42 to 0.99 μm, and the LiDAR data has 1 raster that provides elevation information. The spectral resolution is 9.2 nm, and the spatial resolution is 1 meter per pixel. The scene is 600 × 166 pixels and comprises 6 mutually exclusive vegetation land-cover classes.

- MUUFL: The MUUFL Gulfport scene was collected over the campus of the University of Southern Mississippi in November 2010 using the Reflective Optics System Imaging Spectrometer (ROSIS) sensor. The HSI has 325 × 220 pixels with 72 spectral bands, and the LiDAR image contains 2 rasters of elevation data. Eight noisy bands at the beginning and end of the spectrum were removed, leaving 64 bands. The data depict 11 urban land-cover classes with 53687 ground-truth pixels.

- Houston: This scene was acquired by the ITRES CASI-1500 sensor over the University of Houston campus, TX, USA, in June 2012. The dataset was originally released for the 2013 IEEE GRSS Data Fusion Contest and has been widely used to evaluate land-cover classification performance. The original image is 349 × 1905 pixels recorded in 144 bands ranging from 0.364 to 1.046 μm.

- Augsburg: The scene contains three types of data: an HSI, a dual-Pol SAR image, and a DSM image. The SAR data were collected from the Sentinel-1 platform, while the HS and DSM data were captured by DAS-EOC, DLR over the city of Augsburg, Germany. The HSI, SAR, and DSM data were acquired by the HySpex sensor, the Sentinel-1 sensor, and the DLR-3K system, respectively. All images were down-sampled to a unified spatial resolution of 30 m ground sampling distance (GSD) to make multimodal fusion manageable. The HSI has 332 × 485 pixels and 180 spectral bands between 0.4 and 2.5 μm. The DSM image has a single band, whereas the SAR image has 4 bands: VV intensity, VH intensity, and the real and imaginary components of the off-diagonal element of the PolSAR covariance matrix.
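To make the four SAR bands of the Augsburg scene concrete, here is a minimal sketch of how such features could be derived from complex VV and VH channels using the textbook definition of the dual-pol covariance matrix. The function name `dual_pol_features` and the inputs are hypothetical, the sketch skips the spatial averaging (multilooking) that real products usually apply, and the sign of the imaginary band depends on which off-diagonal element is taken. The source does not state how the released bands were actually computed.

```python
import numpy as np


def dual_pol_features(s_vv: np.ndarray, s_vh: np.ndarray) -> np.ndarray:
    """Stack four real-valued bands from complex VV and VH channels.

    Hypothetical helper following the textbook covariance matrix
    C = [[|S_vv|^2, S_vv * conj(S_vh)], [S_vh * conj(S_vv), |S_vh|^2]],
    computed per pixel without any spatial averaging.
    """
    cross = s_vv * np.conj(s_vh)  # off-diagonal element C12 (a convention choice)
    return np.stack(
        [
            np.abs(s_vv) ** 2,  # VV intensity
            np.abs(s_vh) ** 2,  # VH intensity
            cross.real,         # real part of the off-diagonal element
            cross.imag,         # imaginary part of the off-diagonal element
        ],
        axis=-1,
    )


# Tiny synthetic example: a 1 x 1 "image" with known complex values.
s_vv = np.array([[1 + 2j]])
s_vh = np.array([[3 - 1j]])
features = dual_pol_features(s_vv, s_vh)
print(features.shape)   # (1, 1, 4)
print(features[0, 0])   # VV intensity, VH intensity, Re(C12), Im(C12)
```

For an image of height H and width W the result has shape (H, W, 4), which matches the 4-band SAR layout described above.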

The following traditional machine learning methods will be available:

The following deep learning methods will be available:

The following transformer-based deep learning methods will be available:

Please cite the paper if this code is useful for your research.

@article{roy2022multimodal,

title={Multimodal Fusion Transformer for Remote Sensing Image Classification},

author={Roy, Swalpa Kumar and Deria, Ankur and Hong, Danfeng and Rasti, Behnood and Plaza, Antonio and Chanussot, Jocelyn},

journal={IEEE Transactions on Geoscience and Remote Sensing},

volume = {61},

year={2023},

doi = {10.1109/TGRS.2023.3286826}

}

Thanks to Srinadh Reddy for the re-implementation of the MFT paper (https://github.com/srinadh99/Transformer-Models-for-Multimodal-Remote-Sensing-Data).