Chao-Yuan Wu,

Manzil Zaheer,

Hexiang Hu,

R. Manmatha,

Alexander J. Smola,

Philipp Krähenbühl.

In CVPR, 2018.

[Project Page]

This is a PyTorch reimplementation of CoViAR (the original paper uses MXNet). The code currently supports UCF-101 and HMDB-51; Charades support is coming soon. (This is a work in progress. Any suggestions are appreciated.)

This code produces comparable or better results than the original paper:

HMDB-51: 52% (I-frame), 40% (motion vector), 43% (residuals), 59.2% (CoViAR).

UCF-101: 87% (I-frame), 70% (motion vector), 80% (residuals), 90.5% (CoViAR).

(Average of 3 splits; without optical flow.)

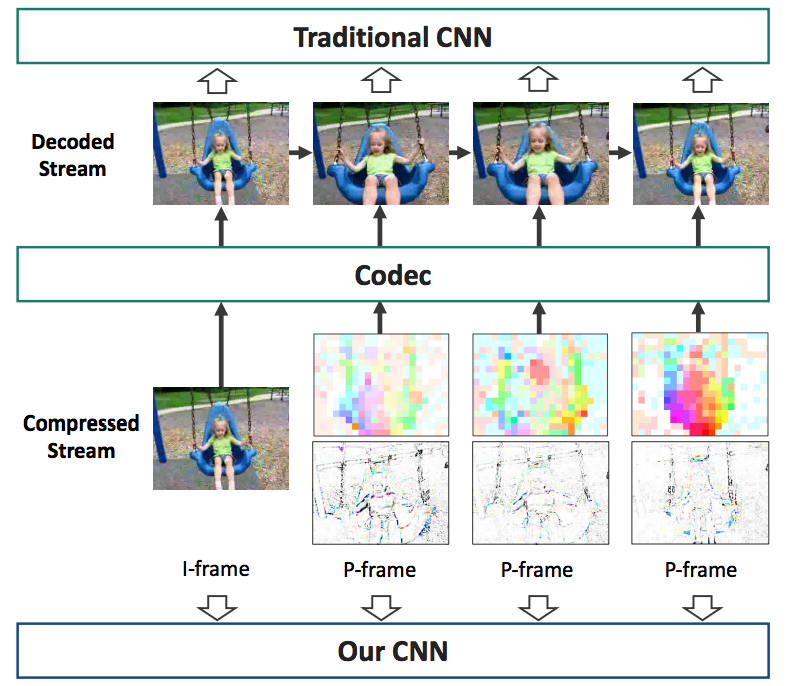

We provide a Python data loader that takes a compressed video directly and returns the compressed representation (I-frames, motion vectors, and residuals) as a numpy array. We can thus train the model without extracting and storing all representations as image files.

In our experiments, the loader is fast enough that it does not delay GPU training.
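To illustrate the idea of decoding on demand rather than storing extracted images, here is a minimal sketch of a dataset wrapper. It is not this repository's actual loader: the class name `OnTheFlyVideoDataset`, the representation keys, and the `decode` callable (standing in for a function that reads a compressed video and returns one representation as an array) are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Any, Callable, List, Tuple

# The three representations named above; these string keys are hypothetical.
REPRESENTATIONS = ("iframe", "motion_vector", "residual")


@dataclass
class OnTheFlyVideoDataset:
    """Decodes one representation from the compressed file on every access."""

    samples: List[Tuple[str, int]]        # (video_path, label) pairs
    representation: str                   # one of REPRESENTATIONS
    decode: Callable[[str, str], Any]     # hypothetical: (path, representation) -> array

    def __post_init__(self) -> None:
        if self.representation not in REPRESENTATIONS:
            raise ValueError(f"unknown representation: {self.representation!r}")

    def __len__(self) -> int:
        return len(self.samples)

    def __getitem__(self, index: int) -> Tuple[Any, int]:
        path, label = self.samples[index]
        # Nothing is cached or written to disk: the array is produced
        # from the compressed video only when the sample is requested.
        return self.decode(path, self.representation), label
```

Because the decoder is passed in as a callable, the same wrapper can be exercised with a stub in tests and with a real decoder during training. Whether decoding keeps pace with the GPU depends on the decoder and the number of loader workers.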

Please see GETTING_STARTED.md for details on the data loader and for training and inference instructions.

If you find this model useful for your research, please use the following BibTeX entry.

@inproceedings{wu2018coviar,

title={Compressed Video Action Recognition},

author={Wu, Chao-Yuan and Zaheer, Manzil and Hu, Hexiang and Manmatha, R and Smola, Alexander J and Kr{\"a}henb{\"u}hl, Philipp},

booktitle={CVPR},

year={2018}

}

This implementation largely borrows from tsn-pytorch by yjxiong.

Part of the data loader implementation is modified from this tutorial and the FFmpeg extract_mv example.