A C++ framework for training/testing the Support Vector Machine with Gaussian Sample Uncertainty (SVM-GSU).

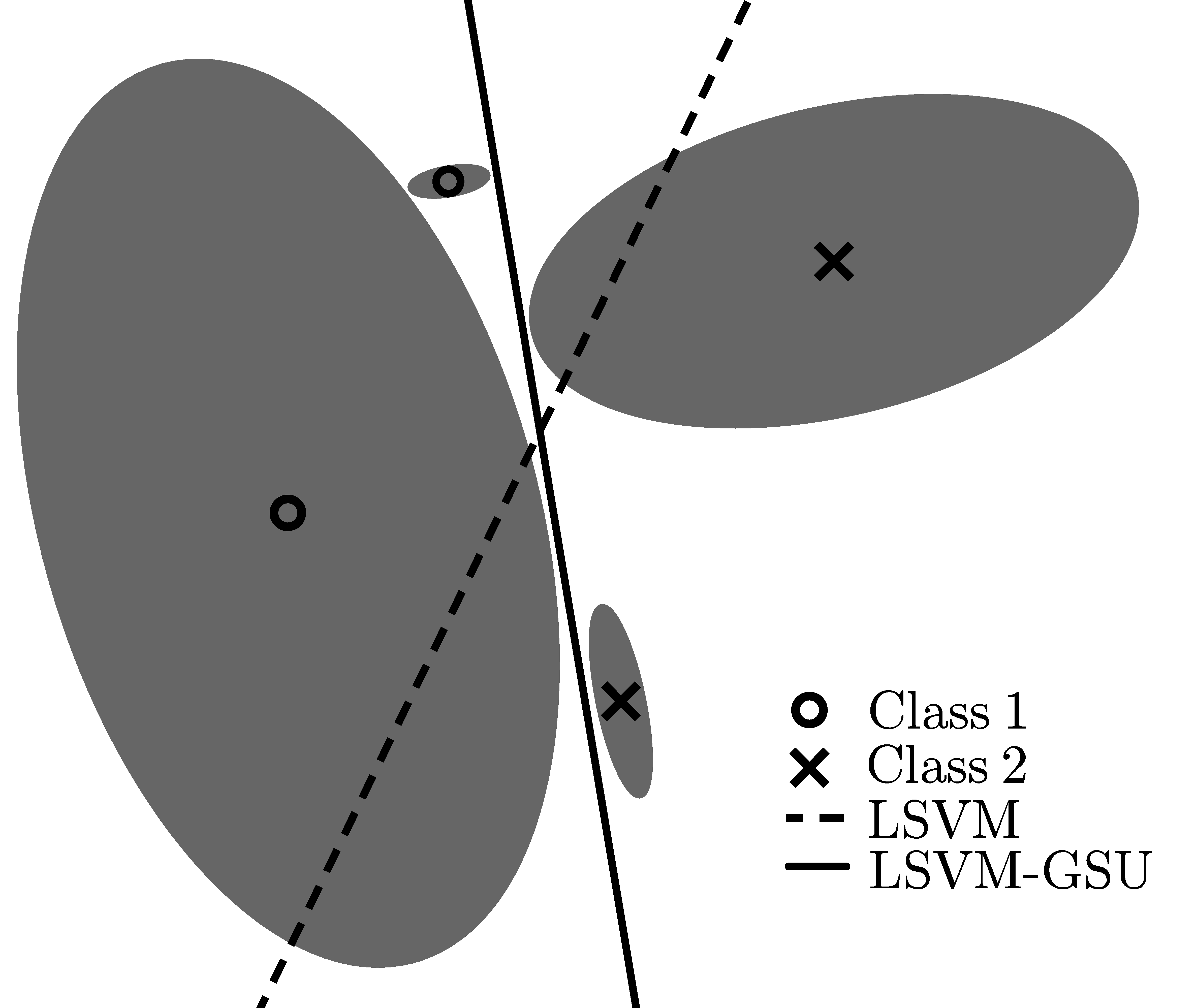

Motivation of the linear SVM-GSU: The proposed classifier takes input data uncertainty (modeled as multivariate anisotropic Gaussians) into consideration, leading to a (possibly drastically) different decision boundary (solid line) compared to the standard linear SVM (dashed line), which considers only the means of the Gaussians and ignores their uncertainties.

This is the implementation code for the Support Vector Machine with Gaussian Sample Uncertainty (SVM-GSU):

- The linear variant (LSVM-GSU) was first proposed in [1].

- The kernel version, i.e., Kernel SVM with Isotropic Gaussian Sample Uncertainty (KSVM-iGSU), was first proposed in [2].

If you use one of the above classifiers, please consider citing the appropriate papers. Detailed guidelines are given below on how to build the code, prepare the input data files in the appropriate format (example files are given accordingly), and use the built binaries for training and/or testing SVM-GSU. A toy example is also given to illustrate the basic usage of the algorithms.

This framework is built in C++11 using the Eigen library. The code was originally developed in GNU/Linux (Arch Linux) and has been tested on Arch Linux, Debian, and Debian-based (e.g., *Ubuntu) distributions. In order to build the code, you first need to install (or make sure that you have already installed in your system) the following:

- gcc >= 4.8

  - Arch Linux: sudo pacman -S gcc

  - Debian/Ubuntu: sudo apt-get install gcc

- Eigen >= 3

  - Arch Linux: sudo pacman -S eigen

  - Debian/Ubuntu: sudo apt-get install libeigen3-dev

After cloning the repo, build gsvm-train (the tool for training an SVM-GSU) by going to build/gsvm-train and running make. Provided that gcc and Eigen have been correctly installed on your system, this generates a binary file named gsvm-train. Similarly, to build gsvm-predict, go to build/gsvm-predict and run make; a binary named gsvm-predict will be generated. You can check that both binaries have been built correctly by running them with no arguments, which should print a help message about their usage.

The training set of SVM-GSU consists of the following three parts:

- A set of vectors that correspond to the mean vectors of the input data (input Gaussian distributions),

- A set of matrices that correspond to the covariance matrices of the input data (input Gaussian distributions), and

- A set of binary ground truth labels that correspond to input data class labels.

We adopt a libsvm-like, plain-text file format for the input data files. The format of each of the above data files is described below.

Each line of the mean vectors file consists of an n-dimensional feature vector, identified by a unique document id (doc_id), given in the following format:

<doc_id> 1:<value> 2:<value> ... j:<value> ... n:<value>\n

The framework supports sparse representation for the feature vectors, i.e., a zero-valued feature can be omitted. For instance, the following line

feat_i 1:0.1 4:0.25 16:0.6

corresponds to a 16-dimensional feature vector that is associated with the document id feat_i. An example mean vectors file can be found here.
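To make the sparse format concrete, here is a minimal C++11 sketch of how one line of a mean vectors file could be parsed. It is not the framework's own reader: the names SparseVector, parse_mean_line, and max_index are hypothetical, and the sketch assumes whitespace-separated tokens with 1-based integer indices.

```cpp
#include <cstddef>
#include <map>
#include <sstream>
#include <stdexcept>
#include <string>

// Hypothetical container for one parsed line (not part of the framework's API).
struct SparseVector {
    std::string doc_id;
    std::map<int, double> entries;  // 1-based feature index -> value
};

// Parse "<doc_id> j:<value> ..." into a SparseVector.
// Omitted indices are simply absent from the map, i.e. treated as zero.
SparseVector parse_mean_line(const std::string& line) {
    std::istringstream in(line);
    SparseVector v;
    if (!(in >> v.doc_id)) throw std::invalid_argument("missing doc_id");
    std::string token;
    while (in >> token) {
        const std::size_t colon = token.find(':');
        if (colon == std::string::npos)
            throw std::invalid_argument("expected index:value, got " + token);
        const int index = std::stoi(token.substr(0, colon));
        v.entries[index] = std::stod(token.substr(colon + 1));
    }
    return v;
}

// Largest feature index present on the line (0 for an empty vector).
int max_index(const SparseVector& v) {
    return v.entries.empty() ? 0 : v.entries.rbegin()->first;
}
```

For the example line above, parse_mean_line returns the id feat_i with three stored entries, and max_index reports 16.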

Each line of the ground truth file consists of a binary label (in {+1,-1}) associated with a document id (doc_id):

<doc_id> <label>\n

An example ground truth file can be found here.
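A line of the ground truth file could be read along the following lines. This is only a sketch: parse_label_line is a hypothetical helper, and accepting a bare 1 as a synonym for +1 is an assumption made here, not documented behavior of the framework.

```cpp
#include <sstream>
#include <stdexcept>
#include <string>
#include <utility>

// Parse "<doc_id> <label>" and reject labels outside {+1, -1}.
// Assumption: a bare "1" is accepted as a synonym for "+1".
std::pair<std::string, int> parse_label_line(const std::string& line) {
    std::istringstream in(line);
    std::string doc_id, label_text;
    if (!(in >> doc_id >> label_text))
        throw std::invalid_argument("expected: <doc_id> <label>");
    if (label_text == "+1" || label_text == "1") return {doc_id, +1};
    if (label_text == "-1") return {doc_id, -1};
    throw std::invalid_argument("label must be +1 or -1, got " + label_text);
}
```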

Each line of the covariance matrices file consists of a matrix, which is identified by a unique document id (doc_id), and whose entries are given in the following format:

<doc_id> 1,1:<value> ... 1,j:<value> ... 1,n:<value> ... i,1:<value> ... i,j:<value> ... i,n:<value> ... n,1:<value> ... n,j:<value> ... n,n:<value>

Similarly to the mean vectors file, the framework adopts a sparse representation format for the covariance matrices. For instance, the following line

feat_i 1,1:0.125 2,2:0.5 3,3:2.25

corresponds to a 3x3 diagonal matrix that is associated with the document id feat_i. An example covariance matrices file can be found here.
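The covariance format can be parsed in much the same way as the mean vectors. The following C++11 sketch uses hypothetical names (SparseMatrix, parse_cov_line, is_diagonal) and assumes 1-based row and column indices; it illustrates the file format and is not the framework's actual loader.

```cpp
#include <cstddef>
#include <map>
#include <sstream>
#include <stdexcept>
#include <string>
#include <utility>

// Hypothetical container for one parsed covariance line.
struct SparseMatrix {
    std::string doc_id;
    std::map<std::pair<int, int>, double> entries;  // (row, col), 1-based
};

// Parse "<doc_id> i,j:<value> ..." into a SparseMatrix.
SparseMatrix parse_cov_line(const std::string& line) {
    std::istringstream in(line);
    SparseMatrix m;
    if (!(in >> m.doc_id)) throw std::invalid_argument("missing doc_id");
    std::string token;
    while (in >> token) {
        const std::size_t comma = token.find(',');
        const std::size_t colon = token.find(':');
        if (comma == std::string::npos || colon == std::string::npos || comma > colon)
            throw std::invalid_argument("expected i,j:value, got " + token);
        const int row = std::stoi(token.substr(0, comma));
        const int col = std::stoi(token.substr(comma + 1, colon - comma - 1));
        m.entries[std::make_pair(row, col)] = std::stod(token.substr(colon + 1));
    }
    return m;
}

// True if every stored non-zero entry lies on the main diagonal.
bool is_diagonal(const SparseMatrix& m) {
    for (const auto& e : m.entries)
        if (e.first.first != e.first.second && e.second != 0.0) return false;
    return true;
}
```

Applied to the example line above, the parser stores three entries and is_diagonal returns true.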

Note: The document id (doc_id) that accompanies each line of the above data files is used to uniquely identify each input datum (i.e., a triplet of a mean vector, a covariance matrix, and a truth label that describes an annotated multivariate Gaussian distribution). Consequently, the lines of the mean vectors, covariance matrices, and truth labels files do not need to appear in the same order. Furthermore, the framework will find the intersection of the given doc_id's (i.e., of the given mean vectors, covariance matrices, and truth labels) and will construct the training set from it. As an example, if the given data files are as follows:

Mean vectors file:

doc_1 1:0.13 3:0.12

doc_3 1:-0.21 2:0.1 3:-0.43

doc_5 1:0.11 2:-0.21

Ground truth labels file:

doc_5 -1

doc_3 +1

doc_6 -1

Covariance matrices file:

doc_3 1,1:0.25 2,2:0.01 3,3:0.1

doc_5 1,1:0.25 2,2:0.01 3,3:0.1

then the framework will consider only the input data with ids doc_3 and doc_5. Clearly, for a binary classification problem, the training set should include at least two training examples with different truth labels.
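The id matching described above amounts to a three-way set intersection. A minimal sketch using the standard library is shown below; the function name common_ids is hypothetical, and how the framework implements this step internally is not specified here.

```cpp
#include <algorithm>
#include <iterator>
#include <set>
#include <string>
#include <vector>

// Return the doc ids present in all three input files, in sorted order.
// std::set keeps its elements sorted, which std::set_intersection requires.
std::vector<std::string> common_ids(const std::set<std::string>& means,
                                    const std::set<std::string>& labels,
                                    const std::set<std::string>& covs) {
    std::vector<std::string> means_and_labels, all_three;
    std::set_intersection(means.begin(), means.end(),
                          labels.begin(), labels.end(),
                          std::back_inserter(means_and_labels));
    std::set_intersection(means_and_labels.begin(), means_and_labels.end(),
                          covs.begin(), covs.end(),
                          std::back_inserter(all_three));
    return all_three;
}
```

With the ids from the example files, {doc_1, doc_3, doc_5}, {doc_5, doc_3, doc_6}, and {doc_3, doc_5}, the result is doc_3 and doc_5.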

After the training process of an SVM-GSU (linear or with the RBF kernel) is complete, a model file is created (with a filename defined as a command line argument -- see usage) so as to be subsequently used by gsvm-predict -- see usage. The file formats for the linear and the kernel variants of SVM-GSU are respectively described below:

As an example, the following linear model file describes an LSVM-GSU that has been trained using diagonal covariance matrices and a regularization parameter lambda=0.001. The parameters sigmA and sigmB refer to the Platt scaling method, which transforms the outputs of the classifier (i.e., the decision values) into a probability distribution over classes, so that they can be interpreted as a-posteriori probabilities that the testing samples belong to the classes to which they have been classified (see Sect. 3.1 of [1]). Finally, the optimal parameters of the separating hyperplane, w and b, are given in the last two lines of the file:

SVM-GSU Model_File

kernel_type linear

lambda 0.001

sigmA -2.95902

sigmB -0.39186

cov_mat_type diagonal

w -0.524532 -0.417788 -0.326734 -0.195085 0.0383208 0.615655 0.3713 0.626657 0.047698 -0.291463 0.676643 0.573381 0.356931 0.631038 0.807489 -0.781862

b -0.507318
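To illustrate how the fields of such a model file could be used, the sketch below computes a linear decision value w·x + b and maps it to a probability with the standard Platt sigmoid, P(y=+1 | f) = 1 / (1 + exp(A·f + B)). Whether the framework uses exactly this parameterization for sigmA and sigmB is an assumption; consult Sect. 3.1 of [1] for the authoritative definition. The function names are hypothetical.

```cpp
#include <cmath>
#include <cstddef>
#include <stdexcept>
#include <vector>

// Linear decision value f(x) = w . x + b for a dense test vector x.
double decision_value(const std::vector<double>& w, double b,
                      const std::vector<double>& x) {
    if (w.size() != x.size()) throw std::invalid_argument("dimension mismatch");
    double f = b;
    for (std::size_t j = 0; j < w.size(); ++j) f += w[j] * x[j];
    return f;
}

// Standard Platt sigmoid (assumed form): 1 / (1 + exp(A * f + B)).
double platt_probability(double f, double sigmA, double sigmB) {
    return 1.0 / (1.0 + std::exp(sigmA * f + sigmB));
}
```

Under this form, a negative sigmA (as in the model file above) means that larger decision values map to higher probabilities of the positive class.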

The model file format for the kernel variant (KSVM-iGSU) is not available yet.

The framework consists of two basic components, one for training an SVM-GSU model (gsvm-train), and one for evaluating a trained SVM-GSU model on a given dataset (gsvm-predict). Their basic usage is described below. The same information can also be obtained by running gsvm-train or gsvm-predict with no command line arguments.

Usage:

gsvm-train [options] <mean_vectors> <ground_truth> <covariance_matrices> <model_file>

Options:

-v <verbose_mode>: Verbose mode (default: 0)

-t <kernel_type>: Set type of kernel function (default: 0)

  0 -- Linear kernel

  2 -- Radial Basis Function (RBF) kernel

-d <cov_mat>: Select covariance matrices type (default: 0)

  0 -- Full covariance matrices

  1 -- Diagonal covariance matrices

  3 -- Isotropic covariance matrices

-l <lambda>: Set the parameter lambda of SVM-GSU (default: 1.0)

-g <gamma>: Set the parameter gamma (default: 1.0/dim)

-T <iter>: Set number of SGD iterations

-k <k>: Set SGD sampling size

Usage:

gsvm-predict [options] <mean_vectors> <model_file> <output_file>

Options:

-v <verbose_mode>: Verbose mode (default: 0)

-t <ground_truth>: Select ground truth file

-m <evaluation_metrics>: Evaluation metrics output file

The evaluation metrics file (set by -m <evaluation_metrics>) provides the following results:

- Accuracy

- Precision, Precision @ {5, 10, 15, 20, 30, 100, 200, 500, 1000}

- Recall, Recall @ {5, 10, 15, 20, 30, 100, 200, 500, 1000}

- Average Precision, Average Precision @ {5, 10, 15, 20, 30, 100, 200, 500, 1000}

- F-score

In toy_example/ you will find a minimal toy example scenario in which you train an LSVM-GSU model and evaluate it on a testing set. The data of this toy example are under toy_example/data/. To run the toy example, execute the Bash shell script run_toy_example.sh (after making it executable with chmod +x run_toy_example.sh).

[1] C. Tzelepis, V. Mezaris, I. Patras, "Linear Maximum Margin Classifier for Learning from Uncertain Data", IEEE Transactions on Pattern Analysis and Machine Intelligence, accepted for publication. DOI:10.1109/TPAMI.2017.2772235. Alternate preprint version available here.

[2] C. Tzelepis, V. Mezaris, I. Patras, "Video Event Detection using Kernel Support Vector Machine with Isotropic Gaussian Sample Uncertainty (KSVM-iGSU)", Proc. 22nd Int. Conf. on MultiMedia Modeling (MMM'16), Miami, FL, USA, Springer LNCS vol. 9516, pp. 3-15, Jan. 2016. DOI:10.1007/978-3-319-27671-7_1. Alternate preprint version available here.

This work was supported by the EU's Horizon 2020 programme H2020-693092 MOVING.