| Paper | Project Page |

- Clone this repo with `--recursive` so that the submodules are fetched as well;
- Create and activate a conda or Python environment, e.g. `conda create -n gaussian-head python=3.8`, then `source activate gaussian-head` (or `conda activate gaussian-head`);
- Install PyTorch >= 2.0.0, which geoopt requires, e.g. `pip install torch==2.0.0 torchvision==0.15.1 torchaudio==2.0.1 --index-url https://download.pytorch.org/whl/cu118`;
- Install all requirements in `requirements.txt`;
- Install geoopt, which is needed for Riemannian ADAM, from PyPI with `pip install geoopt`.

Please refer to the geoopt project to download it, and please consider citing 'Riemannian Adaptive Optimization Methods' (ICLR 2019) if you use it.
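As a quick sanity check after installation, the requirements above can be verified with a short script. This is only a sketch: the helper names `version_tuple` and `check_environment` are made up for illustration and are not part of this repo. It reads the installed package versions with the standard-library `importlib.metadata` and does not import PyTorch itself.

```python
import re
from importlib import metadata


def version_tuple(version):
    """Turn a version string such as '2.0.0+cu118' into (2, 0, 0)."""
    core = version.split("+")[0]  # drop local build suffix, e.g. '+cu118'
    parts = []
    for piece in core.split(".")[:3]:
        match = re.match(r"\d+", piece)  # keep only the leading digits
        parts.append(int(match.group()) if match else 0)
    while len(parts) < 3:  # pad '2.1' to (2, 1, 0)
        parts.append(0)
    return tuple(parts)


def check_environment(min_torch=(2, 0, 0)):
    """Return a list of human-readable problems (empty if none were found)."""
    problems = []
    try:
        torch_version = metadata.version("torch")
    except metadata.PackageNotFoundError:
        problems.append("torch is not installed")
    else:
        if version_tuple(torch_version) < min_torch:
            problems.append(f"torch {torch_version} is older than 2.0.0")
    try:
        metadata.version("geoopt")
    except metadata.PackageNotFoundError:
        problems.append("geoopt is not installed")
    return problems


if __name__ == "__main__":
    issues = check_environment()
    print("environment looks OK" if not issues else "\n".join(issues))
```

Run it inside the activated environment; it only reports missing or outdated packages and does not install anything.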

All our data is sourced from publicly available datasets. To create your custom datasets, try using AD-NeRF; it works well.

Download our datasets for training and rendering, and store them in the following directory layout.

    gaussian-head
    ├── data
    │   ├── id1
    │   │   ├── ori_imgs          # rgb frames
    │   │   ├── mask              # binary masks
    │   │   └── transforms.json   # camera params and expressions
    │   ├── id2
    │   ......
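Before training, it can help to confirm that each subject folder follows this layout. The sketch below is an illustration rather than part of the repo: `missing_entries` is a hypothetical helper, and the `id*` glob pattern simply assumes that subject folders are named as in the tree above.

```python
from pathlib import Path

# Entries every subject folder is expected to contain, per the layout above.
REQUIRED_DIRS = ("ori_imgs", "mask")
REQUIRED_FILES = ("transforms.json",)


def missing_entries(subject_dir):
    """Return the names of required folders/files absent from subject_dir."""
    root = Path(subject_dir)
    missing = [name for name in REQUIRED_DIRS if not (root / name).is_dir()]
    missing += [name for name in REQUIRED_FILES if not (root / name).is_file()]
    return missing


if __name__ == "__main__":
    # Run from ./gaussian-head; assumes subject folders match 'id*'.
    for subject in sorted(Path("data").glob("id*")):
        gaps = missing_entries(subject)
        status = "OK" if not gaps else "missing: " + ", ".join(gaps)
        print(f"{subject.name}: {status}")
```

The check only looks at names, not at the contents of `transforms.json` or the image files.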

Download the id1 pre-trained model (trained on an RTX 2080 Ti) to quickly view the results, and store it in `./gaussian-head/output/id1`.

Store the training data in the format above, cd to `./gaussian-head`, and run:

python ./train.py -s ./data/${id} -m ./output/${id} --eval

To render with your own trained model or the pre-trained model we provide, cd to `./gaussian-head` and run the following command; the results are saved in `./gaussian-head/output/${id}`:

python render.py -m ./output/${id}
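When several identities need to be processed, the two commands above can be generated from a short script. This is a minimal sketch, assuming it is run from `./gaussian-head`; the helper names `train_command` and `render_command` and the example ids are illustrative and not part of the repo. It uses only the flags shown above (`-s`, `-m`, `--eval`) and prints the commands instead of running them.

```python
import shlex
import sys


def train_command(subject_id, evaluate=True):
    """Build the argv list for train.py, mirroring the training command above."""
    cmd = [sys.executable, "./train.py",
           "-s", f"./data/{subject_id}",
           "-m", f"./output/{subject_id}"]
    if evaluate:
        cmd.append("--eval")
    return cmd


def render_command(subject_id):
    """Build the argv list for render.py, mirroring the render command above."""
    return [sys.executable, "render.py", "-m", f"./output/{subject_id}"]


if __name__ == "__main__":
    # Example ids only; replace with the folders present under ./data.
    for subject_id in ["id1", "id2"]:
        for cmd in (train_command(subject_id), render_command(subject_id)):
            # Dry run: print the command. To execute it, import subprocess
            # and call subprocess.run(cmd, check=True) instead.
            print(shlex.join(cmd))
```

Keeping the commands as argv lists avoids shell-quoting problems if an id ever contains spaces.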

- Set `--is_debug` to quickly load a small amount of training data for debugging;
- After training, set `--novel_view` and run `render.py` to get novel-view results rotated about the y-axis;
- Set `--only_head` to train and render the head only. Before this, face_parsing needs to be performed to obtain the segmentation, which can easily be obtained here.

If you find this work useful, a star is appreciated, and please cite it as:

@misc{wang2024gaussianhead,

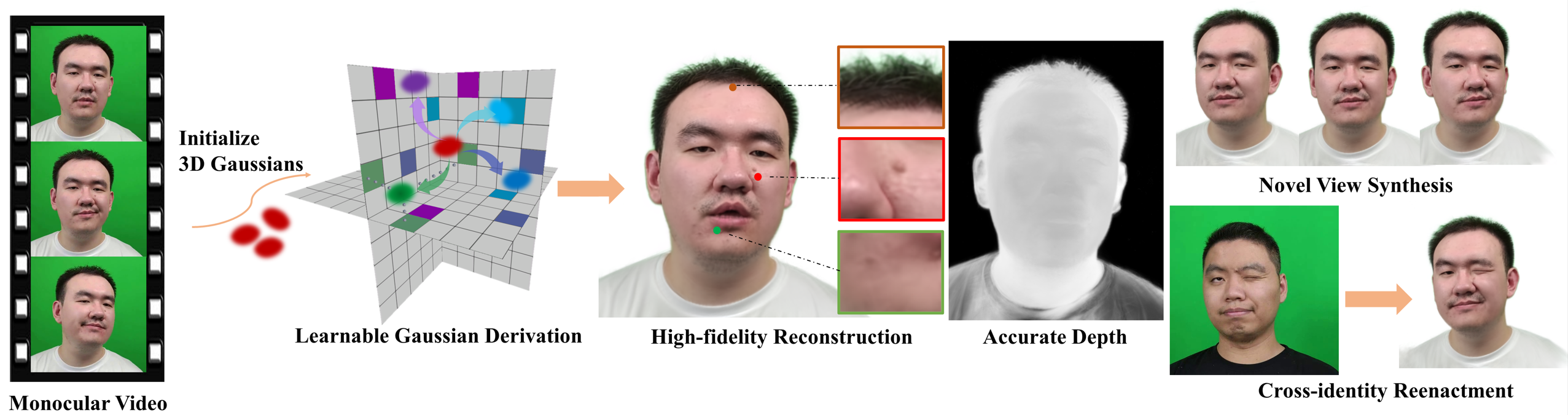

title={GaussianHead: High-fidelity Head Avatars with Learnable Gaussian Derivation},

author={Jie Wang and Jiu-Cheng Xie and Xianyan Li and Feng Xu and Chi-Man Pun and Hao Gao},

year={2024},

eprint={2312.01632},

archivePrefix={arXiv},

primaryClass={cs.CV}

}