- [2023.8.30] Released three new models: LISA-7B-v1, LISA-7B-v1-explanatory, and LISA-13B-llama2-v1-explanatory. Welcome to check them out!

- [2023.8.23] Refactored the code and released a new model, LISA-13B-llama2-v1. Welcome to check it out!

- [2023.8.9] Training code is released!

- [2023.8.4] Online Demo is released!

- [2023.8.4] ReasonSeg Dataset and the LISA-13B-llama2-v0-explanatory model are released!

- [2023.8.3] Inference code and the LISA-13B-llama2-v0 model are released. Welcome to check them out!

- [2023.8.2] Paper is released and GitHub repo is created.

LISA: Reasoning Segmentation via Large Language Model [Paper]

Xin Lai, Zhuotao Tian, Yukang Chen, Yanwei Li, Yuhui Yuan, Shu Liu, Jiaya Jia

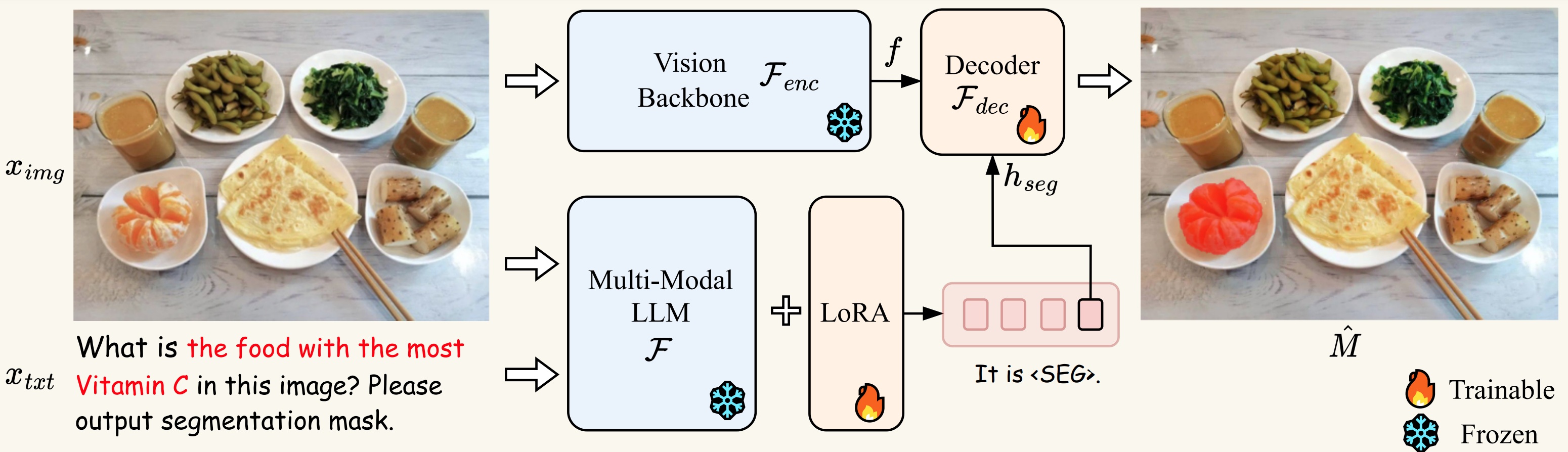

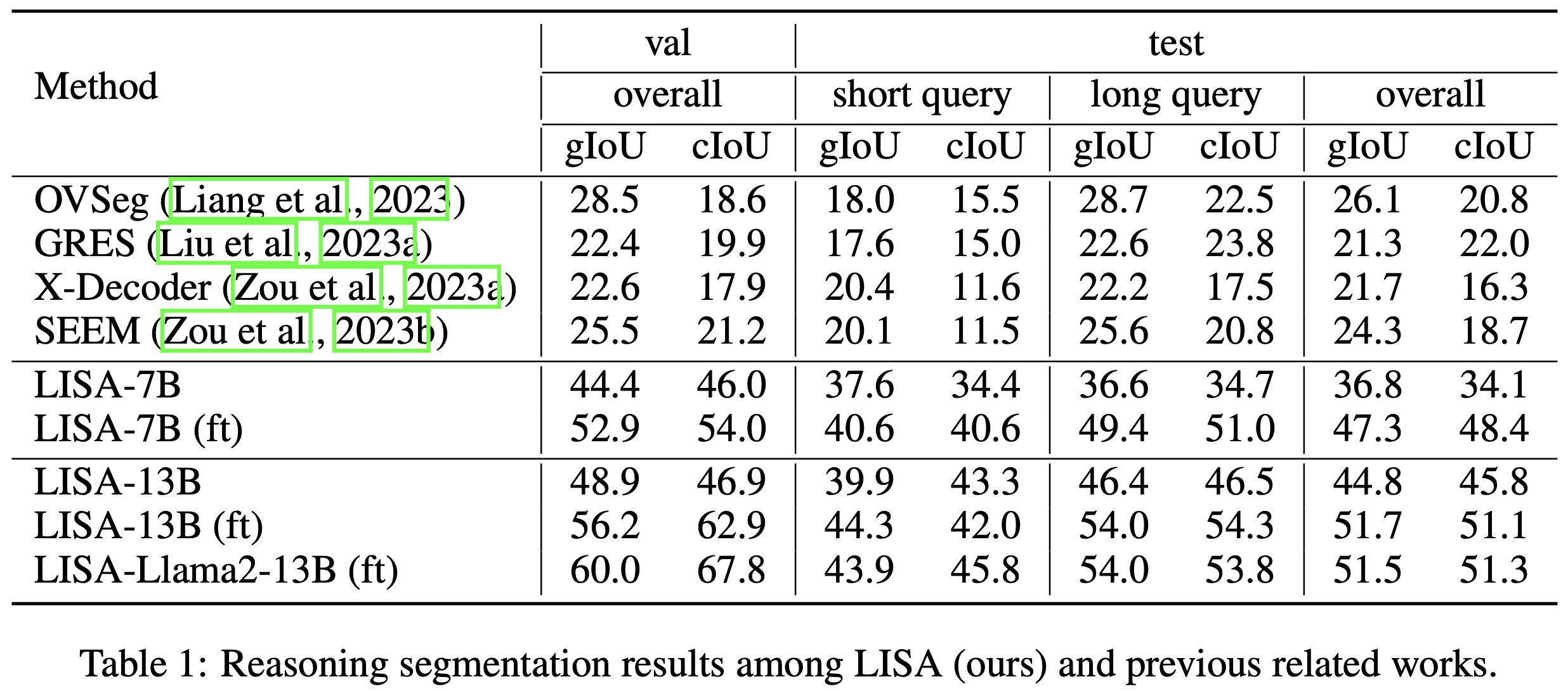

In this work, we propose a new segmentation task: reasoning segmentation. The task is designed to output a segmentation mask given a complex and implicit query text. We establish a benchmark comprising over one thousand image-instruction pairs that incorporate intricate reasoning and world knowledge for evaluation purposes. Finally, we present LISA: Large-language Instructed Segmentation Assistant, which inherits the language generation capabilities of multi-modal Large Language Models (LLMs) while also being able to produce segmentation masks. For more details, please refer to the paper.

LISA unlocks new segmentation capabilities in multi-modal LLMs and can handle cases involving:

- complex reasoning;

- world knowledge;

- explanatory answers;

- multi-turn conversation.

LISA also demonstrates robust zero-shot capability when trained exclusively on reasoning-free datasets. In addition, fine-tuning the model with merely 239 reasoning segmentation image-instruction pairs results in further performance enhancement.

```
pip install -r requirements.txt
pip install flash-attn --no-build-isolation
```

The training data consists of 4 types of data:

- Semantic segmentation datasets: ADE20K, COCO-Stuff, Mapillary, PACO-LVIS, PASCAL-Part, COCO Images

  Note: For COCO-Stuff, we use the annotation file stuffthingmaps_trainval2017.zip. We only use the PACO-LVIS part of PACO. COCO images should be put into the `dataset/coco/` directory.

- Referring segmentation datasets: refCOCO, refCOCO+, refCOCOg, refCLEF (saiapr_tc-12)

  Note: the original links to the refCOCO series data are down, and we have updated them with new ones. If the download speed is very slow or unstable, we also provide a OneDrive link. You must also follow the rules required by the original datasets.

- Visual Question Answering dataset: LLaVA-Instruct-150k

- Reasoning segmentation dataset: ReasonSeg

Download them from the links above and organize them as follows:

```
├── dataset
│   ├── ade20k
│   │   ├── annotations
│   │   └── images
│   ├── coco
│   │   └── train2017
│   │       ├── 000000000009.jpg
│   │       └── ...
│   ├── cocostuff
│   │   └── train2017
│   │       ├── 000000000009.png
│   │       └── ...
│   ├── llava_dataset
│   │   └── llava_instruct_150k.json
│   ├── mapillary
│   │   ├── config_v2.0.json
│   │   ├── testing
│   │   ├── training
│   │   └── validation
│   ├── reason_seg
│   │   └── ReasonSeg
│   │       ├── train
│   │       ├── val
│   │       └── explanatory
│   ├── refer_seg
│   │   ├── images
│   │   │   ├── saiapr_tc-12
│   │   │   └── mscoco
│   │   │       └── images
│   │   │           └── train2014
│   │   ├── refclef
│   │   ├── refcoco
│   │   ├── refcoco+
│   │   └── refcocog
│   └── vlpart
│       ├── paco
│       │   └── annotations
│       └── pascal_part
│           ├── train.json
│           └── VOCdevkit
```
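Before launching training, it can help to confirm that the folders match this layout. The sketch below is a hypothetical helper (not part of the repo) that reports which sub-paths from the tree above are missing; its checklist is copied from the tree and is only as complete as that tree.

```python
from pathlib import Path
from typing import List, Sequence

# Sub-paths copied from the directory tree above (a hypothetical checklist, not from the repo).
EXPECTED_SUBPATHS = (
    "ade20k/annotations",
    "ade20k/images",
    "coco/train2017",
    "cocostuff/train2017",
    "llava_dataset/llava_instruct_150k.json",
    "mapillary/config_v2.0.json",
    "mapillary/testing",
    "mapillary/training",
    "mapillary/validation",
    "reason_seg/ReasonSeg/train",
    "reason_seg/ReasonSeg/val",
    "reason_seg/ReasonSeg/explanatory",
    "refer_seg/images/saiapr_tc-12",
    "refer_seg/images/mscoco/images/train2014",
    "refer_seg/refclef",
    "refer_seg/refcoco",
    "refer_seg/refcoco+",
    "refer_seg/refcocog",
    "vlpart/paco/annotations",
    "vlpart/pascal_part/train.json",
    "vlpart/pascal_part/VOCdevkit",
)


def missing_paths(dataset_dir: str, expected: Sequence[str] = EXPECTED_SUBPATHS) -> List[str]:
    """Return every expected sub-path that does not exist under dataset_dir."""
    root = Path(dataset_dir)
    return [p for p in expected if not (root / p).exists()]


if __name__ == "__main__":
    missing = missing_paths("./dataset")
    print("All expected paths found." if not missing else "Missing:\n" + "\n".join(missing))
```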

To train LISA-7B or LISA-13B, first follow the LLaVA instructions to merge the LLaVA delta weights. Typically, we use the final weights LLaVA-Lightning-7B-v1-1 and LLaVA-13B-v1-1, merged from liuhaotian/LLaVA-Lightning-7B-delta-v1-1 and liuhaotian/LLaVA-13b-delta-v1-1, respectively. For Llama2, we can directly use the full LLaVA weights liuhaotian/llava-llama-2-13b-chat-lightning-preview.

Download the SAM ViT-H pre-trained weights from the link.

```
deepspeed --master_port=24999 train_ds.py \
  --version="PATH_TO_LLaVA" \
  --dataset_dir='./dataset' \
  --vision_pretrained="PATH_TO_SAM" \
  --dataset="sem_seg||refer_seg||vqa||reason_seg" \
  --sample_rates="9,3,3,1" \
  --exp_name="lisa-7b"
```
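The `--dataset` and `--sample_rates` flags pair each data type with a relative weight. Assuming the weights are normalized into per-sample probabilities (an interpretation, not something this README states), `9,3,3,1` means each training sample is drawn from semantic segmentation with probability 9/16, from referring segmentation or VQA with 3/16 each, and from ReasonSeg with 1/16. A minimal sketch of that interpretation, with hypothetical helper names:

```python
import random
from collections import Counter
from typing import Dict, List


def parse_mixture(dataset: str, sample_rates: str) -> Dict[str, float]:
    """Map each '||'-separated dataset name to its normalized sampling probability."""
    names = dataset.split("||")
    weights = [float(w) for w in sample_rates.split(",")]
    if len(names) != len(weights):
        raise ValueError("expected one sample rate per dataset")
    total = sum(weights)
    return {name: w / total for name, w in zip(names, weights)}


def draw_sources(probs: Dict[str, float], n: int, seed: int = 0) -> List[str]:
    """Choose the source dataset for each of n training samples."""
    rng = random.Random(seed)
    names = list(probs)
    return rng.choices(names, weights=[probs[k] for k in names], k=n)


probs = parse_mixture("sem_seg||refer_seg||vqa||reason_seg", "9,3,3,1")
print(probs)  # {'sem_seg': 0.5625, 'refer_seg': 0.1875, 'vqa': 0.1875, 'reason_seg': 0.0625}
print(Counter(draw_sources(probs, 16_000)))  # roughly 9000 / 3000 / 3000 / 1000
```

Under this reading, the small ReasonSeg split is still seen regularly without dominating the mixture.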

When training is finished, consolidate the DeepSpeed checkpoint to get the full model weights:

```
cd ./runs/lisa-7b/ckpt_model && python zero_to_fp32.py . ../pytorch_model.bin
```

Merge the LoRA weights in pytorch_model.bin and save the resulting model to your desired path in the Hugging Face format:

```
CUDA_VISIBLE_DEVICES="" python merge_lora_weights_and_save_hf_model.py \
  --version="PATH_TO_LLaVA" \
  --weight="PATH_TO_pytorch_model.bin" \
  --save_path="PATH_TO_SAVED_MODEL"
```

For example:

```
CUDA_VISIBLE_DEVICES="" python3 merge_lora_weights_and_save_hf_model.py \
  --version="./LLaVA/LLaVA-Lightning-7B-v1-1" \
  --weight="lisa-7b/pytorch_model.bin" \
  --save_path="./LISA-7B"
```
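For intuition about the merge step: under the standard LoRA formulation, an adapted weight W of shape (d_out, d_in) is paired with low-rank factors B (d_out × r) and A (r × d_in), and merging folds them back as W' = W + (alpha / r) · B A. The pure-Python sketch below shows only that arithmetic; the function names and the `alpha`/`r` values are illustrative, and it is not the code inside merge_lora_weights_and_save_hf_model.py.

```python
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain matrix product of a (m x k) and b (k x n)."""
    return [
        [sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def merge_lora(w: Matrix, lora_a: Matrix, lora_b: Matrix, alpha: float, r: int) -> Matrix:
    """Return W + (alpha / r) * (B @ A).

    Shapes: w is (d_out, d_in), lora_b is (d_out, r), lora_a is (r, d_in).
    """
    scale = alpha / r
    delta = matmul(lora_b, lora_a)
    return [[w[i][j] + scale * delta[i][j] for j in range(len(w[0]))] for i in range(len(w))]


# Toy example: 2x2 identity weight, rank-1 adapter, alpha = 2 (all values illustrative).
merged = merge_lora([[1.0, 0.0], [0.0, 1.0]], lora_a=[[3.0, 4.0]], lora_b=[[1.0], [2.0]], alpha=2.0, r=1)
print(merged)  # [[7.0, 8.0], [12.0, 17.0]]
```

Because the low-rank update is added into W itself, a merged model can be loaded without separate LoRA modules.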

To evaluate a trained model on the validation set:

```
deepspeed --master_port=24999 train_ds.py \
  --version="PATH_TO_LLaVA" \
  --dataset_dir='./dataset' \
  --vision_pretrained="PATH_TO_SAM" \
  --exp_name="lisa-7b" \
  --weight='PATH_TO_pytorch_model.bin' \
  --eval_only
```

Note: the v1 model is trained on both the train and val sets, so please use the v0 model to reproduce the validation results. (To use the v0 models, first check out the legacy version of the repo with `git checkout 0e26916`.)

To chat with LISA-13B-llama2-v1 or LISA-13B-llama2-v1-explanatory:

(Note that chat.py currently does not support the v0 models, i.e., LISA-13B-llama2-v0 and LISA-13B-llama2-v0-explanatory. To use the v0 models, first check out the legacy version of the repo with `git checkout 0e26916`.)

```
CUDA_VISIBLE_DEVICES=0 python chat.py --version='xinlai/LISA-13B-llama2-v1'
CUDA_VISIBLE_DEVICES=0 python chat.py --version='xinlai/LISA-13B-llama2-v1-explanatory'
```

To use the bf16 or fp16 data type for inference:

```
CUDA_VISIBLE_DEVICES=0 python chat.py --version='xinlai/LISA-13B-llama2-v1' --precision='bf16'
```

To use 8-bit or 4-bit quantization for inference (this makes it possible to run the 13B model on a single 24 GB or 12 GB GPU, at some cost in generation quality):

```
CUDA_VISIBLE_DEVICES=0 python chat.py --version='xinlai/LISA-13B-llama2-v1' --precision='fp16' --load_in_8bit
CUDA_VISIBLE_DEVICES=0 python chat.py --version='xinlai/LISA-13B-llama2-v1' --precision='fp16' --load_in_4bit
```

Hint: for the 13B model on a single GPU, 16-bit inference consumes 30 GB of VRAM, 8-bit inference consumes 16 GB, and 4-bit inference consumes 9 GB.
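These figures are driven mostly by the size of the language model weights. The back-of-the-envelope sketch below covers the weights only, assuming a nominal 13e9 parameters and 1 GB = 1e9 bytes; the gap to the reported numbers would come from the vision encoders, activations, and runtime overhead, which the estimate deliberately leaves out.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights: {weight_memory_gb(13e9, bits):.1f} GB")
# 16-bit weights: 26.0 GB
#  8-bit weights: 13.0 GB
#  4-bit weights: 6.5 GB
```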

After that, enter the text prompt and then the image path. For example:

- Please input your prompt: Where can the driver see the car speed in this image? Please output segmentation mask.

- Please input the image path: imgs/example1.jpg

- Please input your prompt: Can you segment the food that tastes spicy and hot?

- Please input the image path: imgs/example2.jpg

The results should look like this:

To deploy the demo locally:

```
CUDA_VISIBLE_DEVICES=0 python app.py --version='xinlai/LISA-13B-llama2-v1' --load_in_4bit
CUDA_VISIBLE_DEVICES=0 python app.py --version='xinlai/LISA-13B-llama2-v1-explanatory' --load_in_4bit
```

By default, we use 4-bit quantization. Remove the --load_in_4bit argument for 16-bit inference, or replace it with --load_in_8bit for 8-bit inference.

In ReasonSeg, we have collected 1218 images (239 train, 200 val, and 779 test). The training and validation sets can be downloaded from this link.

Each image is provided with an annotation JSON file:

```
image_1.jpg, image_1.json
image_2.jpg, image_2.json
...
image_n.jpg, image_n.json
```
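Because each image and its annotation share a file stem, the pairs can be collected by matching names. A minimal sketch with a hypothetical helper, assuming images use the `.jpg` extension as in the listing above:

```python
from pathlib import Path
from typing import List, Tuple


def list_pairs(split_dir: str) -> List[Tuple[Path, Path]]:
    """Pair every .jpg in split_dir with the .json annotation that shares its stem."""
    pairs = []
    for image_path in sorted(Path(split_dir).glob("*.jpg")):
        json_path = image_path.with_suffix(".json")
        if not json_path.exists():
            raise FileNotFoundError(f"no annotation for {image_path.name}")
        pairs.append((image_path, json_path))
    return pairs


# Hypothetical usage: pairs = list_pairs("dataset/reason_seg/ReasonSeg/train")
```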

Important keys contained in JSON files:

- "text": text instructions.

- "is_sentence": whether the text instructions are long sentences.

- "shapes": target polygons.
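A minimal sketch for reading these keys from one annotation file. The class and function names are hypothetical, and the sketch assumes "text" holds either a single string or a list of strings (training images may carry several rephrased instructions); "shapes" is kept as raw dictionaries.

```python
import json
from dataclasses import dataclass
from typing import Any, Dict, List


@dataclass
class ReasonSegAnnotation:
    texts: List[str]               # one or more instructions
    is_sentence: bool              # True if the instructions are long sentences
    shapes: List[Dict[str, Any]]   # target/ignore polygons, kept as raw dicts


def parse_annotation(raw: str) -> ReasonSegAnnotation:
    """Parse the JSON content of one ReasonSeg annotation file."""
    anno = json.loads(raw)
    text = anno["text"]
    # Normalize "text" to a list, since it may contain several rephrased instructions.
    texts = [text] if isinstance(text, str) else list(text)
    return ReasonSegAnnotation(texts=texts, is_sentence=bool(anno["is_sentence"]), shapes=list(anno["shapes"]))


# Hypothetical usage:
# with open("image_1.json") as f:
#     sample = parse_annotation(f.read())
```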

Each element of "shapes" belongs to one of two categories: "target" or "ignore". Target regions are required for evaluation, while ignore regions denote ambiguous areas and are therefore disregarded during evaluation.
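To make the target/ignore distinction concrete, the sketch below rasterizes polygons into a mask and computes a per-image IoU that skips ignored pixels. It assumes each element of "shapes" has a "label" of "target" or "ignore" and a "points" list of [x, y] vertices (a LabelMe-style layout this README does not spell out), uses hypothetical pixel codes 1 and 255, and tests pixel centers with an even-odd ray-casting rule. It is an illustration, not the repo's processing or evaluation code.

```python
from typing import Any, Dict, List, Sequence, Tuple

Point = Tuple[float, float]
TARGET, IGNORE = 1, 255  # hypothetical pixel codes; background is 0


def point_in_polygon(x: float, y: float, poly: Sequence[Point]) -> bool:
    """Even-odd rule: count polygon edges crossed by a ray from (x, y) toward +x."""
    inside = False
    n = len(poly)
    for i in range(n):
        x1, y1 = poly[i]
        x2, y2 = poly[(i + 1) % n]
        if (y1 > y) != (y2 > y):  # edge straddles the horizontal line through y
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def rasterize(shapes: List[Dict[str, Any]], height: int, width: int) -> List[List[int]]:
    """Build a mask where target pixels are TARGET and ambiguous pixels are IGNORE."""
    mask = [[0] * width for _ in range(height)]
    # Draw targets first and ignore regions last, so "ignore" wins where they overlap.
    for shape in sorted(shapes, key=lambda s: s["label"] == "ignore"):
        value = IGNORE if shape["label"] == "ignore" else TARGET
        poly = [(float(px), float(py)) for px, py in shape["points"]]
        for row in range(height):
            for col in range(width):
                if point_in_polygon(col + 0.5, row + 0.5, poly):  # test the pixel center
                    mask[row][col] = value
    return mask


def iou_ignoring(pred: List[List[int]], gt: List[List[int]]) -> float:
    """IoU between a binary prediction (1 = foreground) and gt, skipping IGNORE pixels."""
    inter = union = 0
    for pred_row, gt_row in zip(pred, gt):
        for p, g in zip(pred_row, gt_row):
            if g == IGNORE:
                continue  # ambiguous region: excluded from evaluation
            inter += int(p == 1 and g == TARGET)
            union += int(p == 1 or g == TARGET)
    return inter / union if union else 1.0  # both empty: treated as a match (a convention)
```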

We provide a script that demonstrates how to process the annotations:

python3 utils/data_processing.py

In addition, we leveraged GPT-3.5 to rephrase the instructions, so images in the training set may have more than one instruction (but fewer than six) in the "text" field. During training, users may randomly select one of them as the text query to obtain a better model.
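A minimal sketch of that random selection, using a hypothetical helper that also accepts a single string. Calling it each time a sample is loaded means the model can see different phrasings of the same query across epochs.

```python
import random
from typing import Sequence, Union


def pick_query(text_field: Union[str, Sequence[str]], rng: random.Random) -> str:
    """Return the instruction, or one uniformly chosen rephrasing if there are several."""
    if isinstance(text_field, str):
        return text_field
    options = list(text_field)
    if not options:
        raise ValueError("the 'text' field is empty")
    return rng.choice(options)


rng = random.Random(0)
# Hypothetical instructions; a real sample would read them from its JSON "text" field.
query = pick_query(["segment the object used to cut bread", "what would you slice a loaf with?"], rng)
```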

If you find this project useful in your research, please consider citing:

```
@article{lai2023lisa,
  title={LISA: Reasoning Segmentation via Large Language Model},
  author={Lai, Xin and Tian, Zhuotao and Chen, Yukang and Li, Yanwei and Yuan, Yuhui and Liu, Shu and Jia, Jiaya},
  journal={arXiv preprint arXiv:2308.00692},
  year={2023}
}
```