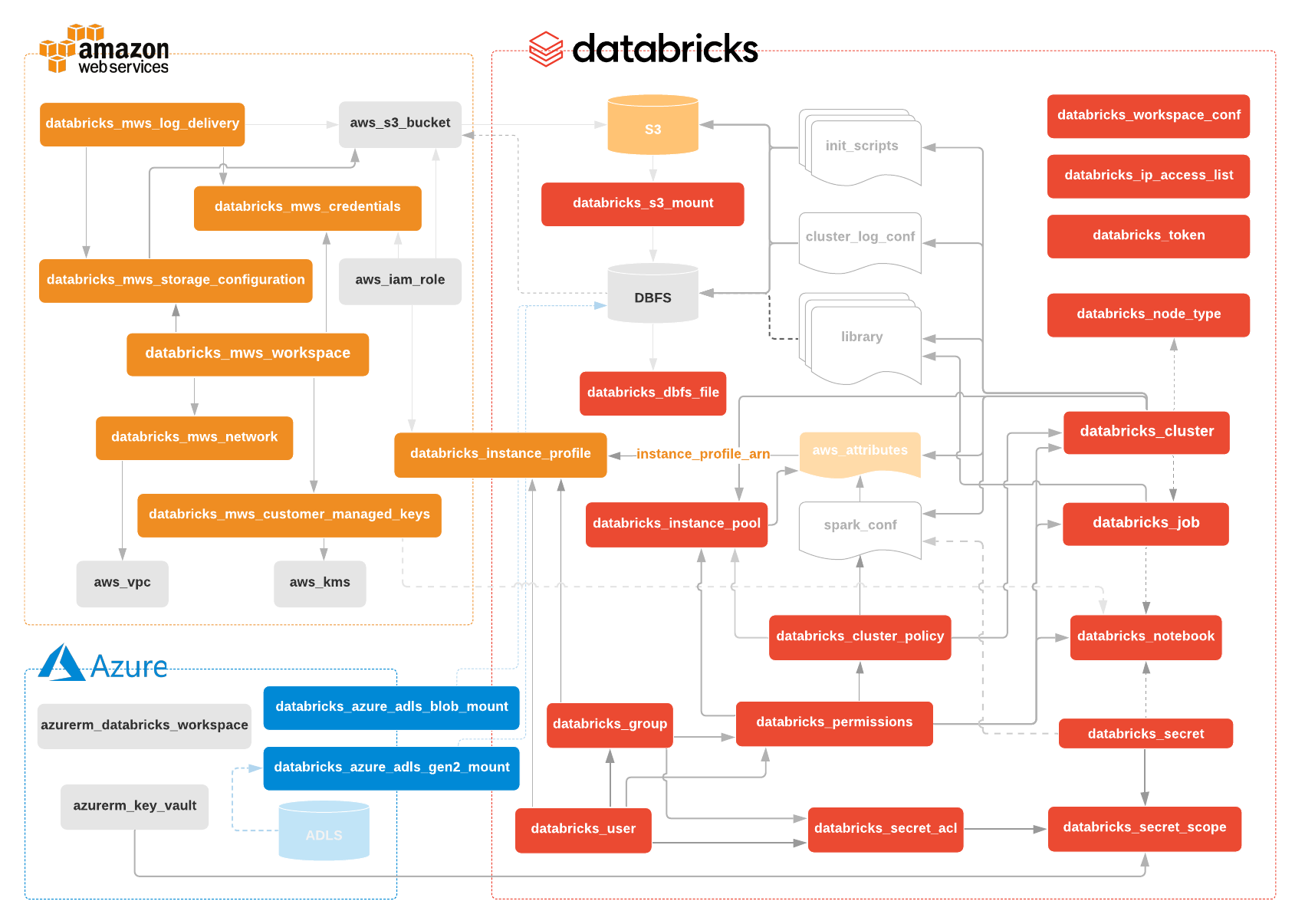

End-to-end workspace creation on AWS or Azure | Authentication | databricks_aws_s3_mount | databricks_aws_assume_role_policy data | databricks_aws_bucket_policy data | databricks_aws_crossaccount_policy data | databricks_azure_adls_gen1_mount | databricks_azure_adls_gen2_mount | databricks_azure_blob_mount | databricks_cluster | databricks_cluster_policy | databricks_dbfs_file | databricks_dbfs_file_paths data | databricks_dbfs_file data | databricks_group | databricks_group data | databricks_group_instance_profile | databricks_group_member | databricks_instance_pool | databricks_instance_profile | databricks_ip_access_list | databricks_job | databricks_mws_credentials | databricks_mws_customer_managed_keys | databricks_mws_log_delivery | databricks_mws_networks | databricks_mws_storage_configurations | databricks_mws_workspaces | databricks_node_type data | databricks_notebook | databricks_notebook data | databricks_notebook_paths data | databricks_permissions | databricks_secret | databricks_secret_acl | databricks_secret_scope | databricks_token | databricks_user | databricks_user_instance_profile | databricks_workspace_conf | Contributing and Development Guidelines | Changelog

If you use Terraform 0.13, follow the instructions on the Terraform Registry page and declare the provider as follows:

    terraform {
      required_providers {
        databricks = {
          source  = "databrickslabs/databricks"
          version = ">= 0.2.7"
        }
      }
    }

If you use Terraform 0.12, run the following curl command in your shell:

    curl https://raw.githubusercontent.com/databrickslabs/databricks-terraform/master/godownloader-databricks-provider.sh | bash -s -- -b $HOME/.terraform.d/plugins

Then create a small sample file named `main.tf` with approximately the following contents, replacing `<your PAT token>` with a newly created personal access token (PAT). It creates a simple autoscaling cluster.

    provider "databricks" {
      host  = "https://abc-defg-024.cloud.databricks.com/"
      token = "<your PAT token>"
    }

    # Look up the smallest node type that has a local disk.
    data "databricks_node_type" "smallest" {
      local_disk = true
    }

    resource "databricks_cluster" "shared_autoscaling" {
      cluster_name            = "Shared Autoscaling"
      spark_version           = "6.6.x-scala2.11"
      # Data sources must be referenced with the "data." prefix.
      node_type_id            = data.databricks_node_type.smallest.id
      autotermination_minutes = 20

      autoscale {
        min_workers = 1
        max_workers = 50
      }
    }

Then run `terraform init` followed by `terraform apply` to apply the HCL configuration to your Databricks workspace.
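Hard-coding a token in `main.tf` makes it easy to commit a secret by accident. As a minimal sketch, the `provider` block from the sample could instead read its credentials from Terraform input variables. The variable names `databricks_host` and `databricks_token` are illustrative assumptions, not names defined by the provider.

```hcl
# Hypothetical variable names, chosen for this example only.
variable "databricks_host" {
  type        = string
  description = "Workspace URL, e.g. https://abc-defg-024.cloud.databricks.com/"
}

variable "databricks_token" {
  type        = string
  description = "Personal access token (PAT) for the workspace"
}

# Replaces the provider block with literal values in main.tf.
provider "databricks" {
  host  = var.databricks_host
  token = var.databricks_token
}
```

With this in place, the values can be supplied through Terraform's standard `TF_VAR_databricks_host` and `TF_VAR_databricks_token` environment variables, or through a `.tfvars` file that is kept out of version control.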

Important: Projects in the databrickslabs GitHub account, including the Databricks Terraform Provider, are not formally supported by Databricks. They are maintained by Databricks Field teams and provided as-is. There is no service level agreement (SLA). Databricks makes no guarantees of any kind. If you discover an issue with the provider, please file a GitHub Issue on the repo, and it will be reviewed by project maintainers as time permits.