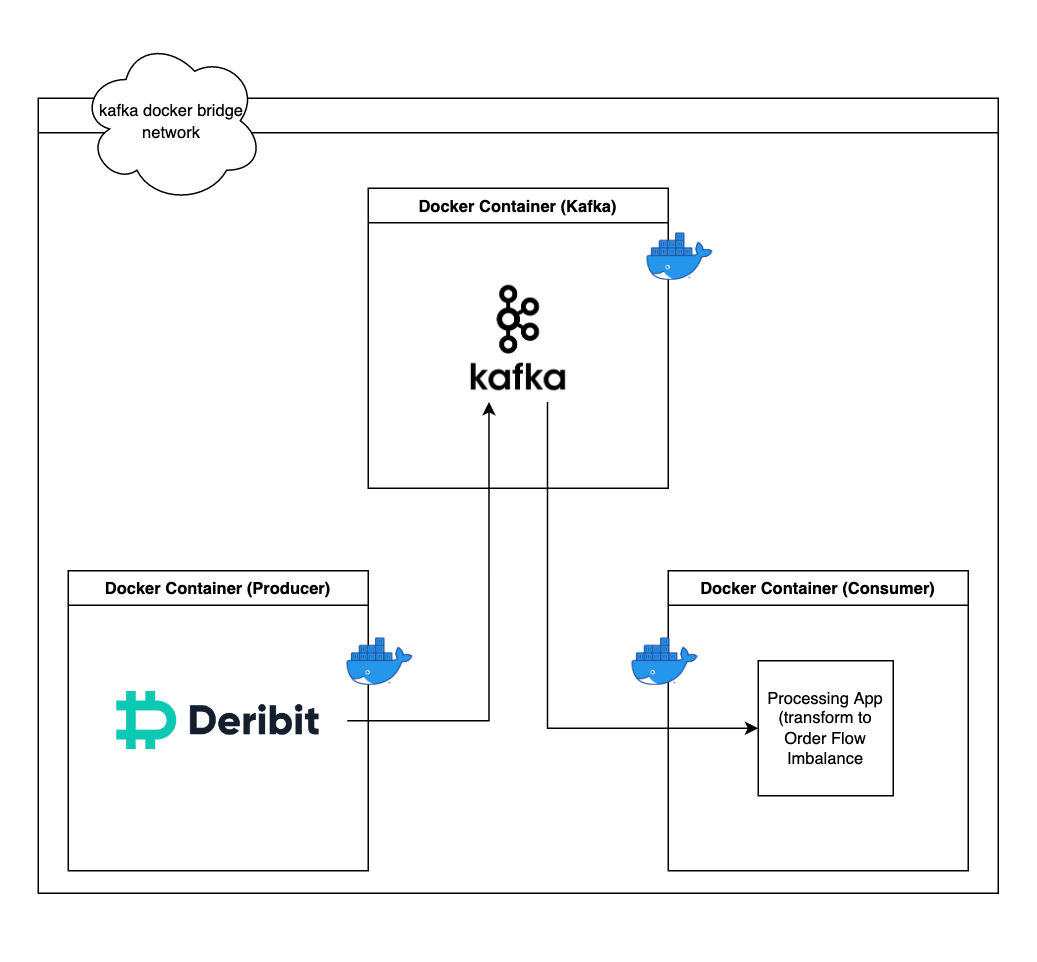

A real-time streaming data pipeline built using Kafka and Docker. It consumes Bitcoin data from Deribit's API v2.1.1 and transforms limit order book market data into the net order flow imbalance and the mid-price.
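As a rough illustration of the data-consumption side, the sketch below builds a JSON-RPC subscription request and pulls the best bid and ask out of each notification. It assumes the producer listens to Deribit's `quote.{instrument_name}` channel for `BTC-PERPETUAL`. The channel choice, the function names and the output field names (`bid_price`, `bid_size`, `ask_price`, `ask_size`) are hypothetical and not necessarily what this repo's producer does. The WebSocket connection itself is left out so the example stays self-contained.

```python
import json
from typing import Optional

# Hypothetical choice: Deribit's "quote" channel for the BTC perpetual,
# which streams the best bid/ask price and amount.
INSTRUMENT = "BTC-PERPETUAL"
CHANNEL = f"quote.{INSTRUMENT}"


def subscribe_message(request_id: int = 1) -> str:
    """Build a JSON-RPC 2.0 request subscribing to the quote channel."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "public/subscribe",
        "params": {"channels": [CHANNEL]},
    })


def extract_top_of_book(raw: str) -> Optional[dict]:
    """Return a best bid/ask snapshot from a subscription notification,
    or None for any other message (e.g. the subscribe acknowledgement)."""
    msg = json.loads(raw)
    if msg.get("method") != "subscription":
        return None
    params = msg["params"]
    if params.get("channel") != CHANNEL:
        return None
    data = params["data"]
    # Rename Deribit's fields to the (hypothetical) names used downstream.
    return {
        "timestamp": data["timestamp"],
        "bid_price": data["best_bid_price"],
        "bid_size": data["best_bid_amount"],
        "ask_price": data["best_ask_price"],
        "ask_size": data["best_ask_amount"],
    }
```

Each snapshot could then be serialized with `json.dumps(snapshot).encode()` and passed as the `value` argument of kafka-python's `KafkaProducer.send(topic, value=...)`.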


Initially, this project was intended as a starting point for building an algorithmic trading system. I decided to explore HFT (high-frequency trading) and wanted to use market microstructure variables to inform my trading strategy. Specifically, I wanted to trade perpetual cryptocurrency instruments using order flow imbalance.

However, I instead took the chance to turn this into a fun project to practise and learn Docker and Kafka. What ultimately came of it was a simple real-time streaming data pipeline.

Please check out my website for more info!

This app was built using Docker, so this solution assumes you have Docker (including `docker-compose`) installed on your machine.

- Make sure you have API keys from Deribit and store them in the `CLIENT_ID_DERIBIT` and `CLIENT_SECRET_DERIBIT` environment variables on your machine.
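A minimal sketch of how these variables might be consumed, assuming authentication through Deribit's `public/auth` method with the `client_credentials` grant type. The function name `auth_message` is hypothetical; the resulting string would be sent as a message over the API connection.

```python
import json
import os


def auth_message(request_id: int = 0) -> str:
    """Build a Deribit public/auth JSON-RPC request (client-credentials grant),
    reading the keys from the environment variables named above."""
    try:
        client_id = os.environ["CLIENT_ID_DERIBIT"]
        client_secret = os.environ["CLIENT_SECRET_DERIBIT"]
    except KeyError as missing:
        # Fail early with a clear message instead of sending empty credentials.
        raise RuntimeError(f"environment variable {missing} is not set") from None
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "public/auth",
        "params": {
            "grant_type": "client_credentials",
            "client_id": client_id,
            "client_secret": client_secret,
        },
    })
```

Reading the keys from the environment keeps secrets out of the source tree and out of the Docker images.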

- Clone the repo: `git clone git@github.com:kostyafarber/crypto-lob-data-pipeline.git`
- From the repository root, run the Kafka and ZooKeeper containers in the background: `cd src/kafka && docker-compose -f 'docker-compose.yml' up -d`

- From the repository root, in a separate terminal, run the producer container: `cd src/producer && docker-compose -f 'docker-compose.yml' up`

- Finally, from the repository root in another terminal, run the consumer container: `cd src/consumer && docker-compose -f 'docker-compose.yml' up`

What you should see is output that looks something like this:

On the left is the raw JSON being published to the Kafka broker; on the right, that JSON is transformed and the order flow imbalance and mid-price are printed to the console.
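To make the transformation concrete, here is a sketch of a consumer-side step that turns top-of-book JSON messages into a net order flow imbalance (OFI) and a mid-price. It assumes the per-event OFI definition of Cont, Kukanov and Stoikov ("The Price Impact of Order Book Events"), treats "net" OFI as the sum over the last `window` updates, and expects hypothetical field names `bid_price`, `bid_size`, `ask_price` and `ask_size`. None of this is taken from the repo's consumer code. The mid-price is simply the average of the best bid and best ask.

```python
import json
from collections import deque
from typing import Optional, Tuple


class OrderFlowTransformer:
    """Turn a stream of top-of-book JSON messages into (net OFI, mid-price)."""

    def __init__(self, window: int = 100) -> None:
        self.prev: Optional[dict] = None
        # Per-event OFI values for the most recent `window` updates.
        self.events: deque = deque(maxlen=window)

    @staticmethod
    def event_ofi(prev: dict, curr: dict) -> float:
        e = 0.0
        # Bid side: if the bid rose or held, the new bid size adds buying pressure;
        # if it fell or held, the previous bid size counts as withdrawn.
        if curr["bid_price"] >= prev["bid_price"]:
            e += curr["bid_size"]
        if curr["bid_price"] <= prev["bid_price"]:
            e -= prev["bid_size"]
        # Ask side: the mirror image with opposite signs.
        if curr["ask_price"] <= prev["ask_price"]:
            e -= curr["ask_size"]
        if curr["ask_price"] >= prev["ask_price"]:
            e += prev["ask_size"]
        return e

    def update(self, raw: bytes) -> Optional[Tuple[float, float]]:
        """Consume one message; return (net OFI, mid-price), or None for the
        first message because OFI needs two consecutive snapshots."""
        curr = json.loads(raw)
        mid = (curr["bid_price"] + curr["ask_price"]) / 2
        if self.prev is None:
            self.prev = curr
            return None
        self.events.append(self.event_ofi(self.prev, curr))
        self.prev = curr
        return sum(self.events), mid
```

A positive net OFI indicates that buying pressure at the top of the book has outweighed selling pressure over the window; the window length is a tuning choice.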

The pipeline was built with microservice principles in mind. Kafka, the producer and the consumer each run in their own separate Docker container and have no knowledge of each other, apart from sharing the bridge network I defined in the docker-compose.yml files.
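On a user-defined bridge network, Docker's embedded DNS lets containers reach each other by service name, so a client only needs a broker address, which can be injected through the environment rather than hard-coded. A minimal sketch, assuming a hypothetical `KAFKA_BOOTSTRAP_SERVERS` variable and a broker service named `kafka` listening on port 9092 (neither is taken from this repo's compose files):

```python
import os
from typing import List

# Hypothetical default: "kafka" is assumed to be the broker's service name on the
# shared bridge network, and 9092 its listener port.
DEFAULT_BOOTSTRAP = "kafka:9092"


def bootstrap_servers(env_var: str = "KAFKA_BOOTSTRAP_SERVERS") -> List[str]:
    """Read a comma-separated list of host:port entries from the environment,
    falling back to the default broker address."""
    raw = os.environ.get(env_var, DEFAULT_BOOTSTRAP)
    return [entry.strip() for entry in raw.split(",") if entry.strip()]
```

The result can be passed straight to kafka-python, e.g. `KafkaConsumer(topic, bootstrap_servers=bootstrap_servers())`, so moving the broker only requires changing an environment variable.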

- [ ] Add another script to make the consumer portion into a producer.
- [ ] Add the Docker YouTube video to the acknowledgments.

See the open issues for a full list of proposed features (and known issues).

Contributions are what make the open source community such an amazing place to learn, inspire, and create. Any contributions you make are greatly appreciated.

If you have a suggestion that would make this better, please fork the repo and create a pull request. You can also simply open an issue with the tag "enhancement". Don't forget to give the project a star! Thanks again!

- Fork the Project
- Create your Feature Branch (`git checkout -b feature/AmazingFeature`)
- Commit your Changes (`git commit -m 'Add some AmazingFeature'`)
- Push to the Branch (`git push origin feature/AmazingFeature`)
- Open a Pull Request

Distributed under the MIT License. See LICENSE.txt for more information.

Kostya Farber - kostya.farber@gmail.com

Project Link: https://kostyafarber.github.io/projects/crpyto-perpetual-futures-kafka-streaming
