
- [2024/09/29] 🌟 AI at Meta PyTorch + TensorRT v2.4 🌟 ⚡TensorRT 10.1 ⚡PyTorch 2.4 ⚡CUDA 12.4 ⚡Python 3.12 ➡️ link

- [2024/09/17] ✨ NVIDIA TensorRT-LLM Meetup ➡️ link

- [2024/09/17] ✨ Accelerating LLM Inference at Databricks with TensorRT-LLM ➡️ link

- [2024/09/17] ✨ TensorRT-LLM @ Baseten ➡️ link

- [2024/09/04] 🏎️🏎️🏎️ Best Practices for Tuning TensorRT-LLM for Optimal Serving with BentoML ➡️ link

- [2024/08/20] 🏎️SDXL with #TensorRT Model Optimizer ⏱️⚡ 🏁 cache diffusion 🏁 quantization aware training 🏁 QLoRA 🏁 #Python 3.12 ➡️ link

- [2024/08/13] 🐍 DIY Code Completion with #Mamba ⚡ #TensorRT #LLM for speed 🤖 NIM for ease ☁️ deploy anywhere ➡️ link

- [2024/08/06] 🗫 Multilingual Challenge Accepted 🗫 🤖 #TensorRT #LLM boosts low-resource languages like Hebrew, Indonesian and Vietnamese ⚡➡️ link

- [2024/07/30] Introducing🍊 @SliceXAI ELM Turbo 🤖 train ELM once ⚡ #TensorRT #LLM optimize ☁️ deploy anywhere ➡️ link

- [2024/07/23] 👀 @AIatMeta Llama 3.1 405B trained on 16K NVIDIA H100s - inference is #TensorRT #LLM optimized ⚡ 🦙 400 tok/s - per node 🦙 37 tok/s - per user 🦙 1 node inference ➡️ link

- [2024/07/09] Checklist to maximize multi-language performance of @meta #Llama3 with #TensorRT #LLM inference: ✅ MultiLingual ✅ NIM ✅ LoRA tuned adaptors ➡️ Tech blog

- [2024/07/02] Let the @MistralAI MoE tokens fly 📈 🚀 #Mixtral 8x7B with NVIDIA #TensorRT #LLM on #H100. ➡️ Tech blog

Previous News

- [2024/06/24] Enhanced with NVIDIA #TensorRT #LLM, @upstage.ai’s solar-10.7B-instruct is ready to power your developer projects through our API catalog 🏎️. ✨➡️ link

- [2024/06/18] ICYMI: 🤩 Stable Diffusion 3 dropped last week 🎊 🏎️ Speed up your SD3 with #TensorRT INT8 Quantization ➡️ link

- [2024/06/18] 🧰Deploying ComfyUI with TensorRT? Here’s your setup guide ➡️ link

- [2024/06/11] ✨#TensorRT Weight-Stripped Engines ✨ Technical Deep Dive for serious coders ✅+99% compression ✅1 set of weights → ** GPUs ✅0 performance loss ✅** models…LLM, CNN, etc.➡️ link

- [2024/06/04] ✨ #TensorRT and GeForce #RTX unlock ComfyUI SD superhero powers 🦸⚡ 🎥 Demo: ➡️ link 📗 DIY notebook: ➡️ link

- [2024/05/28] ✨#TensorRT weight stripping for ResNet-50 ✨ ✅+99% compression ✅1 set of weights → ** GPUs ✅0 performance loss ✅** models…LLM, CNN, etc 👀 📚 DIY ➡️ link

- [2024/05/21] ✨@modal_labs has the codes for serverless @AIatMeta Llama 3 on #TensorRT #LLM ✨👀 📚 Marvelous Modal Manual: Serverless TensorRT-LLM (LLaMA 3 8B) | Modal Docs ➡️ link

- [2024/05/08] NVIDIA TensorRT Model Optimizer -- the newest member of the #TensorRT ecosystem is a library of post-training and training-in-the-loop model optimization techniques ✅quantization ✅sparsity ✅QAT ➡️ blog

- [2024/05/07] 🦙🦙🦙 24,000 tokens per second 🛫Meta Llama 3 takes off with #TensorRT #LLM 📚➡️ link

- [2024/02/06] 🚀 Speed up inference with SOTA quantization techniques in TRT-LLM

- [2024/01/30] New XQA-kernel provides 2.4x more Llama-70B throughput within the same latency budget

- [2023/12/04] Falcon-180B on a single H200 GPU with INT4 AWQ, and 6.7x faster Llama-70B over A100

- [2023/11/27] SageMaker LMI now supports TensorRT-LLM - improves throughput by 60%, compared to previous version

- [2023/11/13] H200 achieves nearly 12,000 tok/sec on Llama2-13B

- [2023/10/22] 🚀 RAG on Windows using TensorRT-LLM and LlamaIndex 🦙

- [2023/10/19] Getting Started Guide - Optimizing Inference on Large Language Models with NVIDIA TensorRT-LLM, Now Publicly Available

- [2023/10/17] Large Language Models up to 4x Faster on RTX With TensorRT-LLM for Windows

TensorRT-LLM is a library for optimizing Large Language Model (LLM) inference. It provides state-of-the-art optimizations, including custom attention kernels, inflight batching, paged KV caching, quantization (FP8, INT4 AWQ, INT8 SmoothQuant, and others), and more, to perform inference efficiently on NVIDIA GPUs.
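To give a feel for one of the optimizations listed above, here is a minimal, library-free sketch of the idea behind paged KV caching: the cache is split into fixed-size blocks, and each sequence holds a table of block ids instead of one contiguous buffer. The class `PagedKVCache` and its methods are hypothetical names invented for this illustration; they are not TensorRT-LLM's API and say nothing about how its kernels are implemented.

```python
from dataclasses import dataclass, field


@dataclass
class PagedKVCache:
    """Toy block allocator (hypothetical, for illustration only)."""
    num_blocks: int   # total number of physical blocks in the pool
    block_size: int   # tokens stored per block
    free_blocks: list = field(default_factory=list)
    block_tables: dict = field(default_factory=dict)  # seq_id -> [block ids]
    seq_lens: dict = field(default_factory=dict)      # seq_id -> token count

    def __post_init__(self):
        self.free_blocks = list(range(self.num_blocks))

    def append_token(self, seq_id):
        """Reserve a slot for one more token; allocate a block only when needed."""
        length = self.seq_lens.get(seq_id, 0)
        table = self.block_tables.setdefault(seq_id, [])
        if length % self.block_size == 0:  # no block yet, or the last one is full
            if not self.free_blocks:
                raise MemoryError("KV cache is out of free blocks")
            table.append(self.free_blocks.pop())
        self.seq_lens[seq_id] = length + 1
        # Physical slot of the new token: (block id, offset inside the block)
        return table[-1], length % self.block_size

    def release(self, seq_id):
        """Return all blocks of a finished sequence to the free pool."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.seq_lens.pop(seq_id, None)


if __name__ == "__main__":
    cache = PagedKVCache(num_blocks=4, block_size=2)
    for _ in range(3):
        cache.append_token("request-a")  # three tokens span two blocks
    print(len(cache.block_tables["request-a"]), "blocks in use")
    cache.release("request-a")
    print(len(cache.free_blocks), "blocks free after release")
```

Because memory is claimed one block at a time and handed back as soon as a request finishes, sequences of very different lengths can share the same pool without reserving worst-case space up front.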

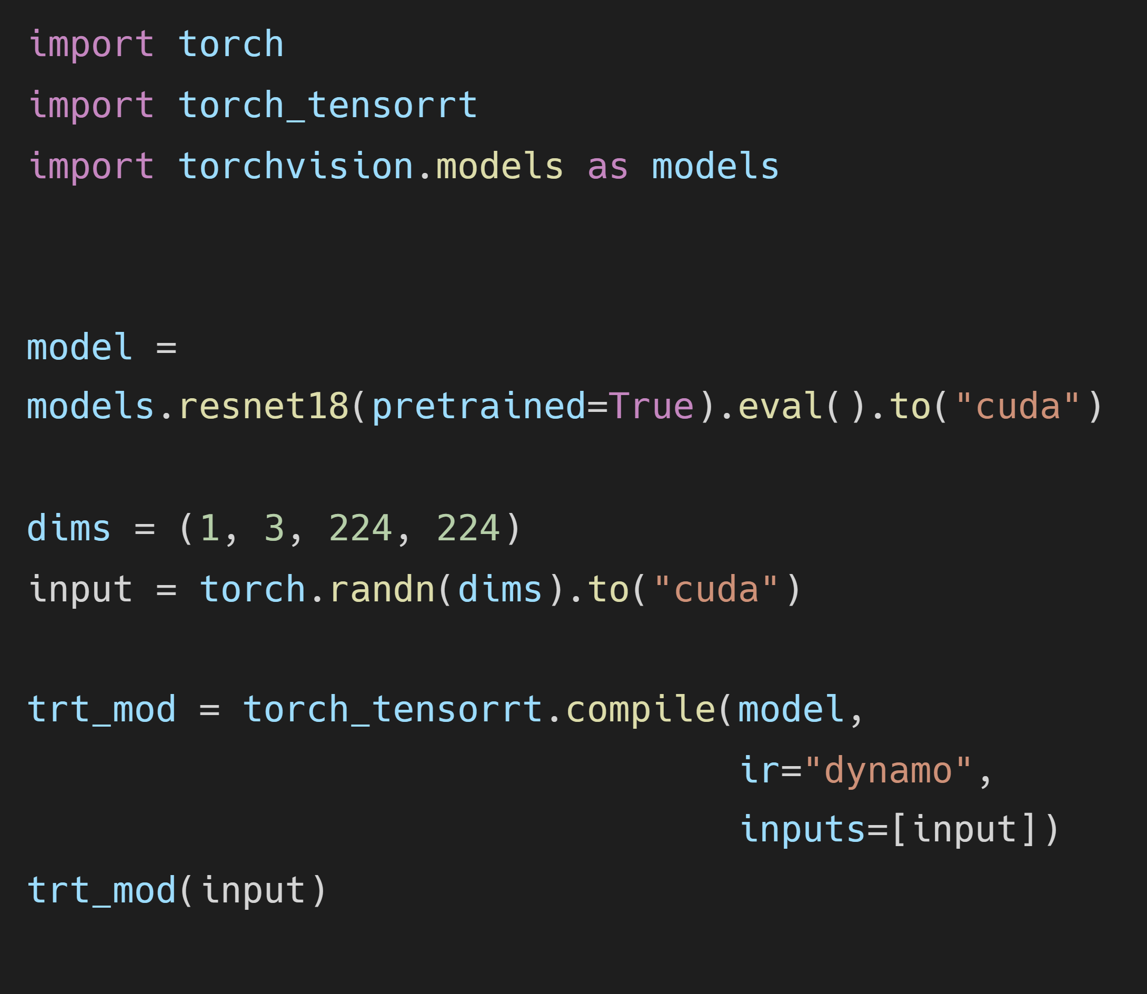

TensorRT-LLM provides a Python API to build LLMs into optimized TensorRT engines. It contains runtimes in Python (bindings) and C++ to execute those TensorRT engines. It also includes a backend for integration with the NVIDIA Triton Inference Server. Models built with TensorRT-LLM can be executed on a wide range of configurations from a single GPU to multiple nodes with multiple GPUs (using Tensor Parallelism and/or Pipeline Parallelism).
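As a rough illustration of how Tensor Parallelism and Pipeline Parallelism combine, the sketch below maps a global rank to a (pipeline stage, tensor-parallel rank) pair, assuming the world size is `tp_size * pp_size` and that the ranks of one tensor-parallel group are contiguous. Both the layout and the helper names (`rank_to_coords`, `tp_group`) are assumptions made for this example, not a description of TensorRT-LLM's internal mapping.

```python
def rank_to_coords(rank, tp_size, pp_size):
    """Map a global rank to its pipeline stage and tensor-parallel rank.

    Assumed layout: ranks [0, tp_size) form pipeline stage 0,
    ranks [tp_size, 2 * tp_size) form stage 1, and so on.
    """
    world_size = tp_size * pp_size
    if not 0 <= rank < world_size:
        raise ValueError(f"rank {rank} is outside world size {world_size}")
    return {"pp_rank": rank // tp_size, "tp_rank": rank % tp_size}


def tp_group(rank, tp_size, pp_size):
    """List the global ranks that share a pipeline stage with `rank`."""
    pp_rank = rank_to_coords(rank, tp_size, pp_size)["pp_rank"]
    return list(range(pp_rank * tp_size, (pp_rank + 1) * tp_size))


if __name__ == "__main__":
    # Example: 2 nodes x 4 GPUs, tensor parallel inside a node,
    # pipeline parallel across nodes.
    for rank in range(8):
        print(rank, rank_to_coords(rank, tp_size=4, pp_size=2))
```

With `tp_size=4` and `pp_size=2`, rank 5 lands on pipeline stage 1 as tensor-parallel rank 1, and its tensor-parallel group is ranks 4 through 7.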

TensorRT-LLM comes with several popular models pre-defined. They can easily be modified and extended to fit custom needs via a PyTorch-like Python API. Refer to the Support Matrix for a list of supported models.

TensorRT-LLM is built on top of the TensorRT Deep Learning Inference library. It leverages much of TensorRT's deep learning optimizations and adds LLM-specific optimizations on top, as described above. TensorRT is an ahead-of-time compiler; it builds "Engines" which are optimized representations of the compiled model containing the entire execution graph. These engines are optimized for a specific GPU architecture, and can be validated, benchmarked, and serialized for later deployment in a production environment.
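Since engines are tied to a specific GPU architecture, a deployment typically needs some way to pick the engine that matches the target device. The sketch below shows one possible bookkeeping scheme: a JSON manifest that records the compute capability each serialized engine was built for. `EngineRecord`, `save_manifest`, `select_engine`, and the file paths are all hypothetical and exist only for this example; TensorRT and TensorRT-LLM do not ship a manifest like this.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class EngineRecord:
    """Hypothetical metadata stored next to a serialized engine file."""
    model_name: str
    compute_capability: str  # GPU architecture the engine was built for
    engine_path: str


def save_manifest(records):
    """Serialize the engine records to a JSON string."""
    return json.dumps([asdict(r) for r in records], indent=2)


def select_engine(manifest_json, model_name, device_cc):
    """Return the record matching the model and the device's architecture."""
    for entry in json.loads(manifest_json):
        record = EngineRecord(**entry)
        if record.model_name == model_name and record.compute_capability == device_cc:
            return record
    raise LookupError(
        f"no engine for {model_name!r} built for compute capability {device_cc}"
    )


if __name__ == "__main__":
    manifest = save_manifest([
        EngineRecord("llama", "8.0", "engines/llama_sm80.engine"),  # A100 class
        EngineRecord("llama", "9.0", "engines/llama_sm90.engine"),  # H100 class
    ])
    print(select_engine(manifest, "llama", "9.0").engine_path)
```

Failing loudly when no matching engine exists is a deliberate choice here: an engine built for one architecture should be rebuilt for a new target, not loaded on it.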

To get started with TensorRT-LLM, visit our documentation:

- Quick Start Guide

- Release Notes

- Installation Guide for Linux

- Installation Guide for Windows

- Supported Hardware, Models, and other Software

- Model zoo (generated by TRT-LLM rel 0.9 a9356d4b7610330e89c1010f342a9ac644215c52)