This repository contains code, scripts, and data for running experiments in the following paper:

Xinran Zhao*, Shikhar Murty*, Christopher D. Manning

[On Measuring the Intrinsic Few-Shot Hardness of Datasets]

The experiments use datasets that can be downloaded here:

- FS-GLUE (Wang et al., 2018, Wang et al., 2019): download

- FS-NLI (White et al., 2017, Poliak et al., 2018, Richardson et al., 2019): download

- Decomposed NER Dataset (for Table 3 in the original paper): download

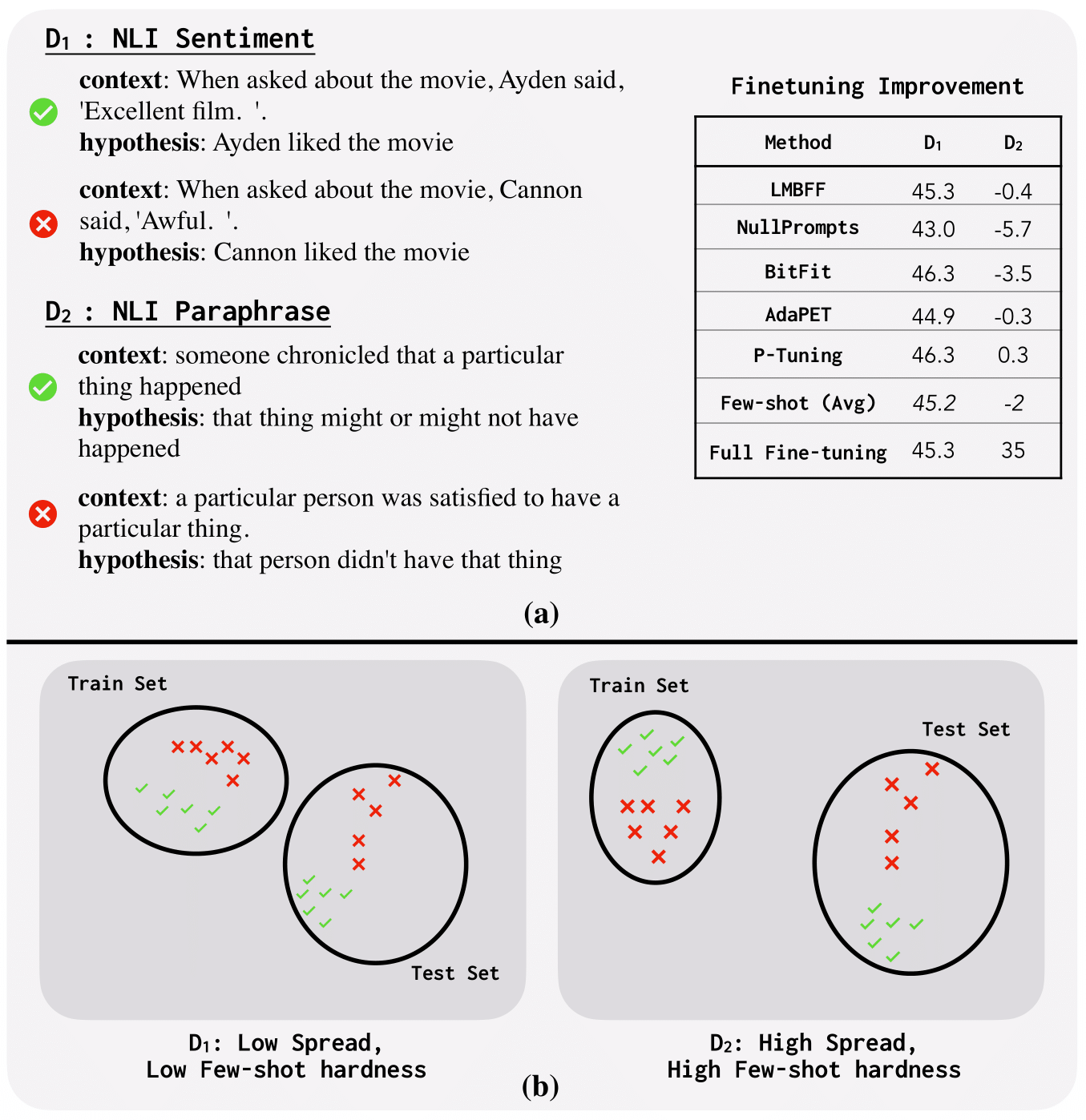

While advances in pre-training have led to dramatic improvements in few-shot learning of NLP tasks, there is limited understanding of what drives successful few-shot adaptation in datasets. In particular, given a new dataset and a pre-trained model, what properties of the dataset make it few-shot learnable and are these properties independent of the specific adaptation techniques used? We consider an extensive set of recent few-shot learning methods, and show that their performance across a large number of datasets is highly correlated, showing that few-shot hardness may be intrinsic to datasets, for a given pre-trained model. To estimate intrinsic few-shot hardness, we then propose a simple and lightweight metric called Spread that captures the intuition that few-shot learning is made possible by exploiting feature-space invariances between training and test samples. Our metric better accounts for few-shot hardness compared to existing notions of hardness, and is ~8-100x faster to compute.

Python 3.7, pandas, sklearn, scipy, matplotlib, seaborn

This repository contains the code to reproduce the paper's results from the collected performance of various few-shot adaptation methods and the collected hardness metrics. Detailed instructions can be found in `Heatmap_Generation.ipynb`.
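
The abstract's claim that method performance is highly correlated across datasets can be illustrated with a small rank-correlation sketch. This is not the notebook's code: it assumes Spearman rank correlation as the measure, and the method names and scores in `toy_scores` are made up purely for illustration. In practice `scipy.stats.spearmanr` (scipy is among the listed dependencies) would be the usual choice; the version below uses only the standard library so it runs anywhere.

```python
from itertools import combinations


def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / (var_x * var_y) ** 0.5


def spearman(x, y):
    """Spearman correlation: Pearson correlation computed on the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


def pairwise_spearman(scores):
    """scores maps method name -> list of per-task scores (same task order)."""
    return {
        (a, b): spearman(scores[a], scores[b])
        for a, b in combinations(sorted(scores), 2)
    }


# Hypothetical per-task scores for three hypothetical methods.
toy_scores = {
    "method_a": [0.62, 0.71, 0.55, 0.90],
    "method_b": [0.60, 0.75, 0.50, 0.88],
    "method_c": [0.90, 0.55, 0.71, 0.62],
}

if __name__ == "__main__":
    for pair, rho in pairwise_spearman(toy_scores).items():
        print(pair, round(rho, 3))
```

A pair of methods that orders the tasks identically gets a correlation of 1, regardless of the absolute scores. That is the sense in which hardness can be shared across adaptation methods.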

`heatmap_raw_data.json`: bundled model performance data and raw metric scores. The keys and values are:

| Key | Value |
|---|---|
| output_collection | the output of each method on each task with RoBERTa as the backbone |
| majority_collection | the majority baseline of each task |
| testsize_collection | the test data size of each task |
| all_dist_collection | the distances from each test example to the support set of each task |
| output_collection_electra | the output of each method on each task with ELECTRA as the backbone |
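
As a quick sanity check before opening the notebook, the file can be loaded and its top-level keys inspected. The sketch below assumes only what the table states, namely that the JSON's top level contains these five keys. The nested layout of each collection is not documented here, so the code reports just the type and entry count of each value. The helper names `load_raw_data` and `summarize_collections` are hypothetical and not part of the repository.

```python
import json
from pathlib import Path

# Top-level keys listed in the table above.
EXPECTED_KEYS = [
    "output_collection",
    "majority_collection",
    "testsize_collection",
    "all_dist_collection",
    "output_collection_electra",
]


def load_raw_data(path="heatmap_raw_data.json"):
    """Parse the bundled JSON file into Python objects."""
    with open(path) as f:
        return json.load(f)


def summarize_collections(data):
    """Return {key: (type name, entry count or None)} for each documented key."""
    summary = {}
    for key in EXPECTED_KEYS:
        if key not in data:
            summary[key] = ("missing", None)
            continue
        value = data[key]
        # Only containers have a length; scalars are reported with None.
        size = len(value) if hasattr(value, "__len__") else None
        summary[key] = (type(value).__name__, size)
    return summary


if __name__ == "__main__":
    path = Path("heatmap_raw_data.json")
    if path.exists():
        for key, (kind, size) in summarize_collections(load_raw_data(path)).items():
            print(f"{key}: {kind}, {size} entries")
    else:
        print(f"{path} not found; run this from the repository root.")
```

Any key reported as `missing` would indicate a mismatch between the file and the table.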

Link to the blog post.

to appear

If you have any other questions about this repo, or ideas about few-shot learning to discuss, feel free to open an issue or send me an email. I will respond as soon as possible.