Revealing systematic gender biases in pre-trained BERT, and more importantly in debiased BERT models.

"Lipstick on a Pig: Debiasing Methods Cover up Systematic Gender Biases in Word Embeddings But do not Remove Them" (Gonen and Goldberg, 2019) shows that existing methods for removing gender bias from word embeddings are superficial and should not be trusted. Meanwhile, contextualised models have advanced rapidly, and many researchers have demonstrated biases in those models and proposed different mitigation methods. For this reason, we extend Gonen and Goldberg (2019) to examine gender biases in contextualised language models. As a result, we have identified systematic gender biases in pre-trained BERT and also in debiased BERT models. We hope that our work will inspire future research on analyzing the gender biases left behind by diverse debiasing methods.

For the entire piece, please refer to Lipstick on a Pig “Again”: Analysis on Contextualised Embeddings.

This project extends the original paper:

"Lipstick on a Pig: Debiasing Methods Cover up Systematic Gender Biases in Word Embeddings But do not Remove Them", Hila Gonen and Yoav Goldberg, NAACL 2019

Please refer to here for a brief description of the original paper.

Our approach tackles the following two research questions:

- RQ1. How much gender bias do the contextualised BERT embeddings contain?

- RQ2. Did the proposed debiased BERT models really resolve gender biases?

RQ1 verifies the existence of gender bias in pre-trained BERT (bert-base-uncased), and with RQ2, we claim the state-of-the-art debiasing methods are not effective in mitigating gender biases.

To design a solid experiment in the contextualised setting, we retain the word pools used in the original paper as a control variable (originally drawn from Word2Vec (Bolukbasi et al., 2016) and GloVe (Zhao et al., 2018)). We then adopt templates from May et al. (2019) to build the words into sentences. Because the templates only apply properly to nouns, all other words are filtered out, yielding 18,445 and 39,385 words, respectively.

Bolukbasi et al. (2016). Man is to Computer Programmer as Woman is to Homemaker? Debiasing Word Embeddings (NeurIPS 2016)

Zhao et al. (2018). Learning Gender-Neutral Word Embeddings (EMNLP 2018)

May et al. (2019). On Measuring Social Biases in Sentence Encoders (NAACL 2019)
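The template step above can be sketched as follows. This is a minimal illustration, not the project's code: the template strings are assumptions written in the semantically bleached style of May et al. (2019), and `TEMPLATES`, `indefinite_article`, and `build_sentences` are hypothetical names.

```python
# Illustrative templates only; the exact template set used in the
# notebooks is not listed in this README, so these are assumptions.
TEMPLATES = [
    "This is {article} {word}.",
    "That is {article} {word}.",
    "There is {article} {word}.",
    "Here is {article} {word}.",
]


def indefinite_article(word: str) -> str:
    """Pick 'a' or 'an' from the first letter (a rough spelling heuristic)."""
    return "an" if word[0].lower() in "aeiou" else "a"


def build_sentences(word: str) -> list[str]:
    """Place one noun into every template."""
    article = indefinite_article(word)
    return [t.format(article=article, word=word) for t in TEMPLATES]


for sentence in build_sentences("babysitter"):
    print(sentence)
```

Restricting the vocabulary to nouns keeps every filled template grammatical, which is why the filtering step comes before sentence construction.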

Next, the first and last subword tokens of each word (e.g. babysitter => baby + ##sit + ##ter) were used for pooling (chosen among the options of Ács et al. (2021)), and the last four hidden layers of BERT were concatenated to best preserve contextual information (Devlin et al., 2018).

Ács et al. (2021). Subword Pooling Makes a Difference (EACL 2021)

Devlin et al. (2018). BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding (NAACL 2019)
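The pooling step described above might look like the sketch below. It uses NumPy with random numbers standing in for BERT's hidden states (for example, what a Hugging Face BERT model returns with `output_hidden_states=True`, stacked for one sentence). The function names are hypothetical, and combining the first and last subword vectors by a plain mean is an assumption: the text only states that the first and last tokens were used.

```python
import numpy as np


def concat_last_four(hidden_states: np.ndarray) -> np.ndarray:
    """(num_layers, seq_len, hidden) -> (seq_len, 4 * hidden)."""
    last_four = hidden_states[-4:]
    # Join the four layer outputs along the feature axis, token by token.
    return np.concatenate(list(last_four), axis=-1)


def first_last_pool(token_vectors: np.ndarray, start: int, end: int) -> np.ndarray:
    """Pool a word's subword span [start, end] (inclusive) from its first
    and last subword vectors. Averaging the two is an assumption."""
    return (token_vectors[start] + token_vectors[end]) / 2.0


# Stand-in for bert-base hidden states: embedding layer + 12 layers,
# 9 tokens ([CLS] this is a baby ##sit ##ter . [SEP]), hidden size 768.
rng = np.random.default_rng(0)
hidden_states = rng.normal(size=(13, 9, 768))

token_vectors = concat_last_four(hidden_states)
# "babysitter" covers token positions 4..6 (baby, ##sit, ##ter).
word_vector = first_last_pool(token_vectors, start=4, end=6)
print(word_vector.shape)
```

With a hidden size of 768, each word ends up as a 3,072-dimensional vector; the middle subword (`##sit`) does not contribute under first-last pooling.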

Experiments 1, 3, and 5 from the original paper were used for our analysis.

The experiments were applied to three debiased models: Sent-Debiased, Context-Debiased, and BERT-CDS, from the three papers below, respectively.

Liang et al. (2020). Towards Debiasing Sentence Representations (ACL 2020)

Kaneko and Bollegala (2021). Debiasing Pre-trained Contextualised Embeddings (EACL 2021)

Bartl et al. (2020). Unmasking Contextual Stereotypes: Measuring and Mitigating BERT’s Gender Bias (GeBNLP 2020)

- RQ1. How much gender bias do the contextualised BERT embeddings contain?

  : Contextualised BERT embeddings also demonstrated clear gender biases.

- RQ2. Did the proposed debiased BERT models really resolve gender biases?

  : No, gender biases still remained after debiasing.

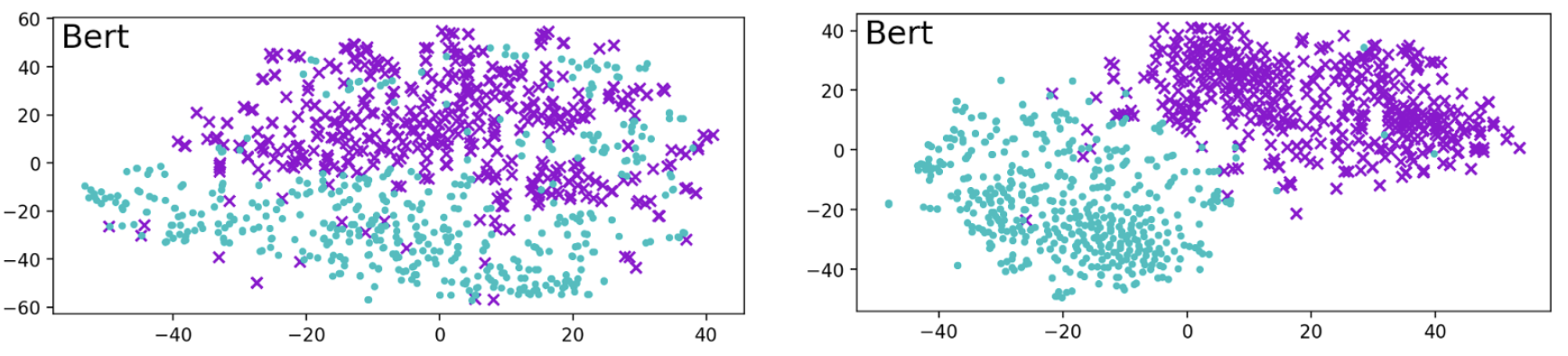

Figure 1. Clustering for contextualised BERT embeddings, using vocabulary from Word2Vec (left-hand side) and GloVe (right-hand side)

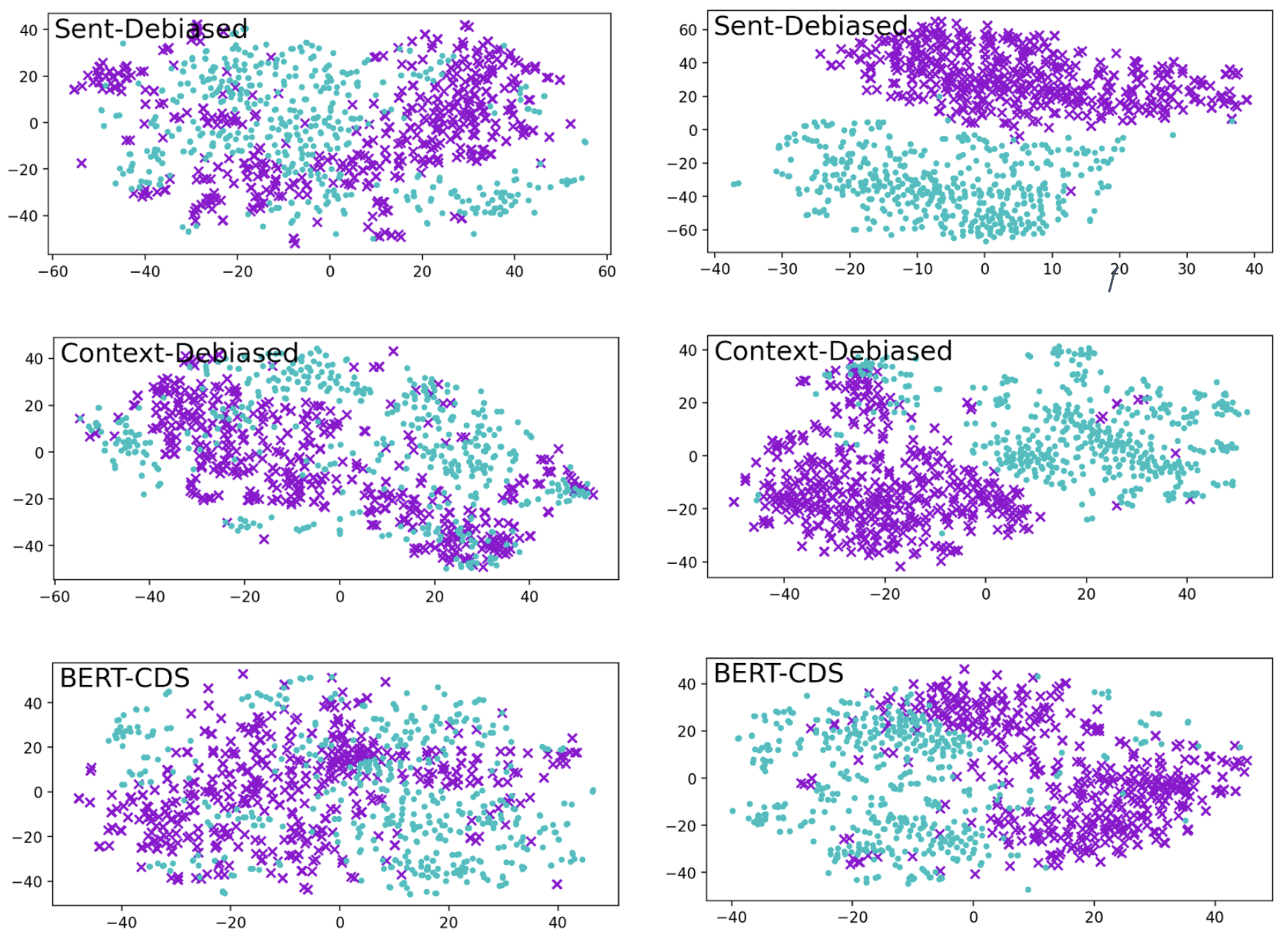

Figure 2. Clustering for debiased models (Sent-Debiased, Context-Debiased, BERT-CDS from the top). The same vocabularies are used as in BERT.

The embeddings from the debiased models still cluster.
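One way to put a number on this kind of clustering, following the idea of the original paper's clustering experiment, is to run k-means with two clusters over male- and female-biased word vectors and check how well the clusters align with the gender labels. The sketch below assumes scikit-learn is available and uses synthetic vectors in place of the extracted embeddings; `cluster_alignment` is a hypothetical helper, not a function from this repository.

```python
import numpy as np
from sklearn.cluster import KMeans


def cluster_alignment(male_vecs: np.ndarray, female_vecs: np.ndarray, seed: int = 0) -> float:
    """Cluster all vectors into two groups and return the fraction of words
    whose cluster matches their gender label (under the better of the two
    possible cluster-to-label assignments)."""
    X = np.vstack([male_vecs, female_vecs])
    y = np.array([0] * len(male_vecs) + [1] * len(female_vecs))
    pred = KMeans(n_clusters=2, n_init=10, random_state=seed).fit_predict(X)
    acc = float(np.mean(pred == y))
    # Cluster ids are arbitrary, so also consider the flipped assignment.
    return max(acc, 1.0 - acc)


# Synthetic stand-ins for the most male- and female-biased embeddings.
rng = np.random.default_rng(0)
male = rng.normal(loc=1.0, scale=0.3, size=(100, 16))
female = rng.normal(loc=-1.0, scale=0.3, size=(100, 16))

alignment = cluster_alignment(male, female)
print(f"cluster alignment: {alignment:.3f}")
```

A value near 0.5 would mean the clusters carry no gender signal; a value near 1.0 means the bias is still recoverable from the geometry alone.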

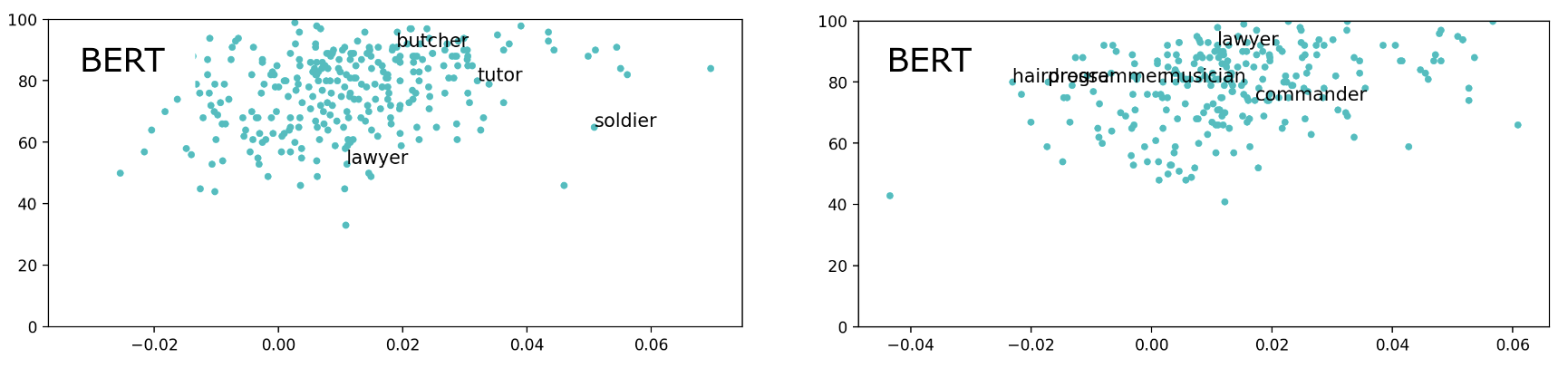

Figure 3. The plot for contextualised BERT embeddings, using vocabulary from Word2Vec (left-hand side) and GloVe (right-hand side)

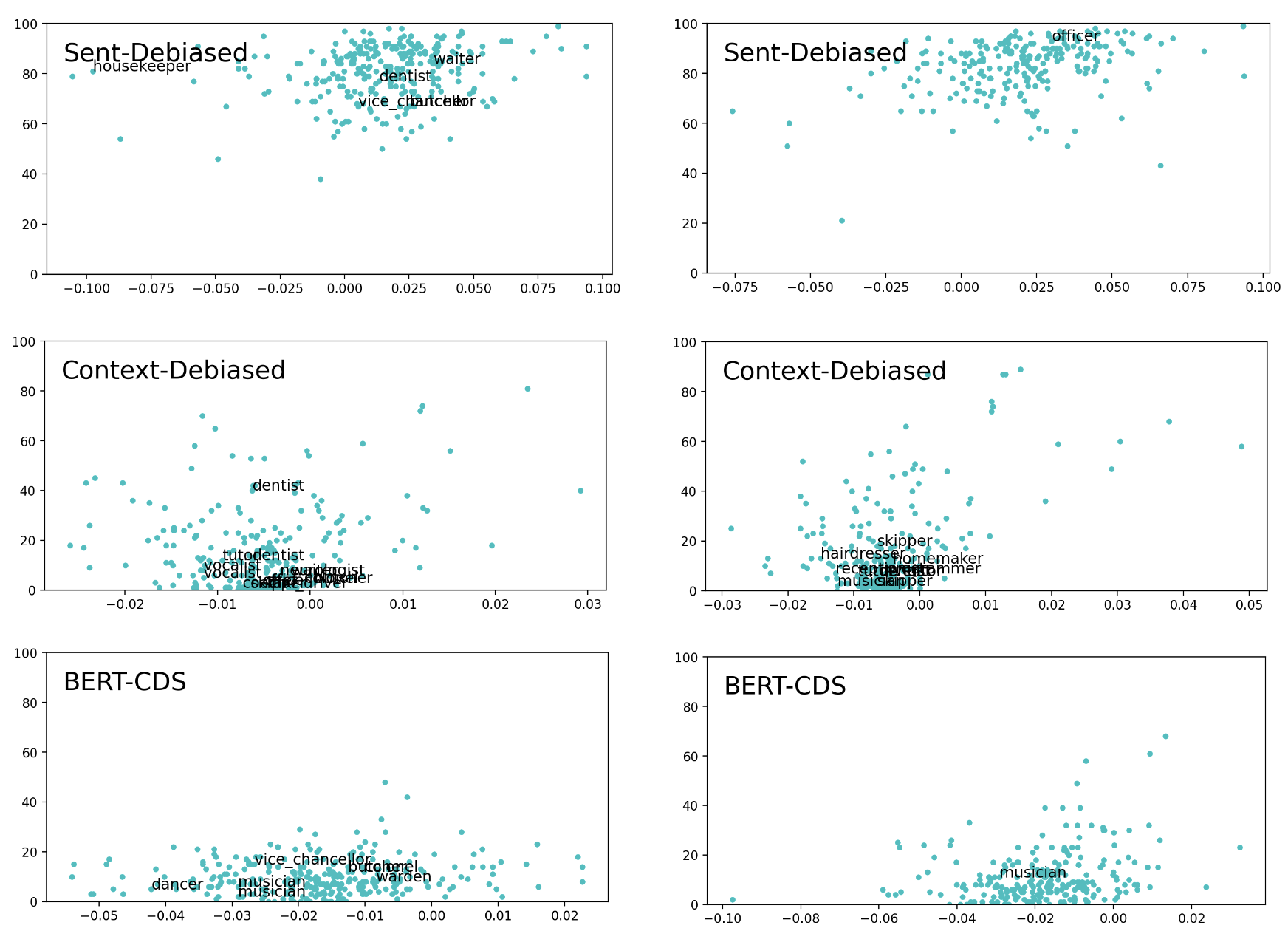

Figure 4. The plot for debiased models (Sent-Debiased, Context-Debiased, BERT-CDS from the top). The same vocabularies are used as in BERT.

Either male or female bias is observed. See Section 4.3.2 of the paper for further discussion.

| | Word2Vec | GloVe |
|---|---|---|
| BERT | 99.4% | 99.9% |
| Sent-Debiased | 98.7% | 100% |
| Context-Debiased | 94.2% | 98.5% |
| BERT-CDS | 96.8% | 98.8% |

A classifier can still learn to distinguish male- and female-biased embeddings.
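The classification test can be sketched as below: train a classifier on a small labelled subset of biased words and measure accuracy on the held-out rest. This is a minimal sketch under stated assumptions, not the code in `experiments.ipynb`: the RBF-kernel SVM, the 20% training fraction, the synthetic data, and the helper name `bias_classification_accuracy` are all illustrative choices.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC


def bias_classification_accuracy(
    male_vecs: np.ndarray,
    female_vecs: np.ndarray,
    train_fraction: float = 0.2,
    seed: int = 0,
) -> float:
    """Train on a small stratified subset and return held-out accuracy."""
    X = np.vstack([male_vecs, female_vecs])
    y = np.array([0] * len(male_vecs) + [1] * len(female_vecs))
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, train_size=train_fraction, stratify=y, random_state=seed
    )
    clf = SVC(kernel="rbf").fit(X_train, y_train)
    return float(clf.score(X_test, y_test))


# Synthetic stand-ins for male- and female-biased embeddings.
rng = np.random.default_rng(0)
male = rng.normal(loc=1.0, scale=0.3, size=(250, 16))
female = rng.normal(loc=-1.0, scale=0.3, size=(250, 16))

accuracy = bias_classification_accuracy(male, female)
print(f"held-out accuracy: {accuracy:.3f}")
```

If debiasing had removed the gender signal, held-out accuracy would be expected to fall towards chance (50%); the accuracies in the table above stay well above that.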

Please refer to https://github.com/gonenhila/gender_bias_lipstick for the code and data of the original project.

lipstick-on-a-pig

├── README.md

├── LICENSE

├── .gitignore

├── archive

├── data - original, extracted embeddings

│ ├── embeddings

│ ├── lists

│ └── extracted

├── results - images

├── source

│ ├── remaining_bias_2016.ipynb

│ ├── remaining_bias_2018.ipynb

│ ├── compute_bert_sentence.ipynb

│ ├── compute_debiased_sentence.ipynb

│ └── experiments.ipynb

├── assets

└── docs

The archive stores different experiments: retaining 2500 words from the original paper (should only retain whole restricted vocabulary), sentences consisting of only one word (compute_bert_word.ipynb, compute_debiased_word.ipynb), extracting the average of the entire sentence embedding without positional information, and more.

- Process restricted vocabulary from remaining_bias_2016.ipynb, remaining_bias_2018.ipynb

- Compute BERT vocabulary and embeddings with compute_bert_sentence.ipynb

- Compute debiased embeddings with compute_debiased_sentence.ipynb

- Run experiments.ipynb

Debiased models are acquired from the following GitHub repositories:

- Sent-Debiased - https://github.com/pliang279/sent_debias

- Context-Debiased - https://github.com/kanekomasahiro/context-debias

- BERT-CDS - https://github.com/marionbartl/gender-bias-BERT

Sorted in Hangeul, Korean alphabetical order.

This project is licensed under the Apache License - see the LICENSE file for details.