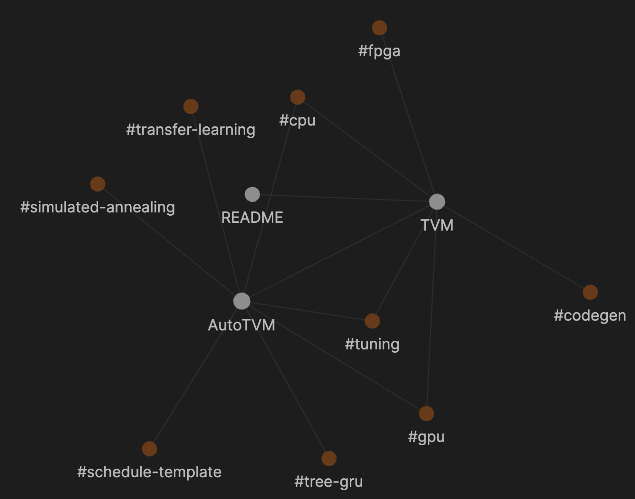

This repository is inspired by Obsidian, which stores each entry in a Markdown (.md) file and maintains a knowledge graph between entries. For example, if an entry A mentions another entry B with the syntax [[B]], then Obsidian's knowledge graph builds an edge between A and B. The accompanying figure shows an example of the knowledge graph with tags included.
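As a rough illustration of the linking rule described above, the following minimal sketch extracts `[[B]]`-style mentions from entry text and turns them into edges. The names `extract_links` and `build_edges` are hypothetical and not part of Obsidian or this repository; the sketch also treats everything between the brackets as the entry name, which is a simplifying assumption.

```python
import re

# Assumption: everything between [[ and ]] is taken as the target entry name.
WIKILINK = re.compile(r"\[\[([^\[\]]+)\]\]")


def extract_links(text):
    """Return entry names mentioned as [[name]] in `text`, in order, without duplicates."""
    seen = []
    for match in WIKILINK.finditer(text):
        name = match.group(1).strip()
        if name and name not in seen:
            seen.append(name)
    return seen


def build_edges(entries):
    """Map {entry name: markdown text} to a sorted list of (source, target) edges."""
    edges = set()
    for source, text in entries.items():
        for target in extract_links(text):
            if target != source:  # skip self-references
                edges.add((source, target))
    return sorted(edges)


entries = {
    "A": "Entry A builds on [[B]] and mentions [[B]] again.",
    "B": "Entry B stands alone.",
}
print(build_edges(entries))
```

Repeated mentions of the same entry collapse into a single edge here, which seems like the natural choice for a graph view, though the real tool may count them differently.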

I attempt to use this feature to connect deep learning compiler research papers. I will start with TVM and gradually add more papers. Everyone is welcome to contribute new entries or comment on existing entries. However, I do not plan to build a forum or a discussion panel. Instead, I hope to keep the summaries and comments concise so that everyone can easily catch up on the latest developments in deep learning compilers.

I wrote a simple script to parse all Markdown files in this repo and generate a JSON file that can be used for web deployment. A GitHub Action runs the script and deploys the generated graph to the gh-pages branch, so an interactive graph is available on the website of this repo: https://comaniac.github.io/dlc-literature/.
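The actual script lives in this repo; the sketch below only illustrates the general idea of turning a folder of Markdown entries into JSON. The function name `build_graph` and the output fields (`id`, `file`, `links`) are assumptions made for this example and may not match what the real script or the frontend expects. The demo writes two throwaway entries into a temporary directory so it can run anywhere.

```python
import json
import re
import tempfile
from pathlib import Path

LINK = re.compile(r"\[\[([^\[\]]+)\]\]")


def build_graph(root):
    """Scan every .md file under `root` and return one node dict per file."""
    nodes = []
    for path in sorted(Path(root).rglob("*.md")):
        text = path.read_text(encoding="utf-8")
        # Unique link targets, excluding links from an entry to itself.
        targets = sorted({name.strip() for name in LINK.findall(text)} - {path.stem})
        nodes.append({"id": path.stem, "file": path.name, "links": targets})
    return nodes


# Demo on a temporary directory with two hypothetical entries.
with tempfile.TemporaryDirectory() as tmp:
    Path(tmp, "A.md").write_text("Entry A mentions [[B]].", encoding="utf-8")
    Path(tmp, "B.md").write_text("Entry B has no links.", encoding="utf-8")
    demo = build_graph(tmp)

print(json.dumps(demo, indent=2))
```

To try it on a real checkout, one could call `build_graph(".")` from the repository root and write the result to a file with `json.dump`.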

Please note that I am very bad at frontend development, so the website is basically copied from https://philogb.github.io/jit/index.html. You are VERY welcome to send pull requests that improve the website in any way.

Specifically, here are the TODOs I currently have in mind:

- Embed the Markdown page of the currently focused node at the bottom.

- Link the node name in the right container to its Markdown file.

- A better CSS style.

To contribute a new entry:

- Create a new file named "paper-tag.md". Note that "paper-tag" is the keyword other entries will use to mention this one, so it should be just one or two words. For a paper that presents a system such as TVM, the system name itself is a proper tag; otherwise, the representative algorithm, feature, or methodology is also a good candidate. If you really have no clue, then "LastNameOfFirstAuthor-Year.md" also works.

- Copy the template to the created file and fill out the contents.

- If the paper is publicly available, please provide the PDF URL.

- Triton: An Intermediate Language and Compiler for Tiled Neural Network Computations

- SWIRL: High-performance many-core CPU code generation for deep neural networks

- TASO: Optimizing Deep Learning Computation with Automatic Generation of Graph Substitutions

- Diesel: DSL for linear algebra and neural net computations on GPUs

- Tensor Comprehensions: Framework-Agnostic High-Performance Machine Learning Abstractions

- Learning to Fuse

- Learned TPU Cost Model for XLA Tensor Programs

- Automatic generation of high-performance quantized machine learning kernels

- Automatic Kernel Generation for Volta Tensor Cores

- PolyDL: Polyhedral Optimizations for Creation of High Performance DL primitives

- A Learned Performance Model for the Tensor Processing Unit

- Evolutionary Algorithm with Non-parametric Surrogate Model for Tensor Program Optimization

- Transferable Graph Optimizers for ML Compilers

- A Deep Learning Based Cost Model for Automatic Code Optimization in Tiramisu

- GAMMA: Automating the HW Mapping of DNN Models on Accelerators via Genetic Algorithm

- Looks related to BYOC.

- SODA: a New Synthesis Infrastructure for Agile Hardware Design of Machine Learning Accelerators

- From David Brooks's group. Software Defined Architecture.

- A Case for Generalizable DNN Cost Models for Mobile Devices

- Related to cost models for edge devices.

- Rammer: Enabling Holistic Deep Learning Compiler Optimizations with rTasks

- From MS. Finer-grained fusion strategy.

- Jittor: a novel deep learning framework with meta-operators and unified graph execution

- JIT DLC for CPU and GPU.

- DLFusion: An Auto-Tuning Compiler for Layer Fusion on Deep Neural Network Accelerator

- Yet another fusion strategy.

- Estimating GPU memory consumption of deep learning models

- GPU memory cost model.

- The Deep Learning Compiler: A Comprehensive Survey

- Deep Learning Systems: Algorithms, Compilers, and Processors for Large-Scale Production

- Book. 10/2020.

- ApproxTuner: A Compiler and Runtime System for Adaptive Approximations

- A three-phase approach to approximation-tuning at dev-/deploy-/runtime-stage.

- Mistify: Automating DNN Model Porting for On-Device Inference at the Edge

- Deploy models on edge devices with model architecture adaptation and parameter tuning.