- Authors: Jaemin Cho, David Seunghyun Yoon, Ajinkya Kale, Franck Dernoncourt, Trung Bui, Mohit Bansal

- Findings of NAACL 2022 Paper

- Inference using a pretrained model on a custom image

- Integrated into Hugging Face Spaces 🤗 using Gradio. Try out the web demo.

- Try the Replicate web demo and Docker image.

# Configurations

./configs/

# MLE

phase1/

# RL

phase2/

# COCO caption evaluation

./cider

./coco-caption

# Preprocessing

./clip # CLIP feature extractor

./scripts # COCO preprocessing

./scripts_FineCapEval # FineCapEval preprocessing

./data # Storing preprocessed features

# Core model / Rewards / Data loading

./captioning

# Training / Evaluation

./tools

# Fine-tuning CLIP Text encoder

./retrieval

# Pretrained checkpoints

./save

# Storing original dataset files

./datasets

# Create python environment (optional)

conda create -n clip4caption python=3.7

source activate clip4caption

# Python dependencies

pip install -r requirements.txt

## Install this repo as a package

pip install -e .

# Install Detectron2 (optional for training utilities)

pip install detectron2 -f https://dl.fbaipublicfiles.com/detectron2/wheels/cu113/torch1.10/index.html

# Setup coco-caption (optional for text metric evaluation)

git clone https://github.com/clip-vil/cider

git clone https://github.com/clip-vil/coco-caption

cd coco-caption

bash get_stanford_models.sh

bash get_google_word2vec_model.sh

# Install Java (optional; needed for METEOR evaluation as part of the text metrics)

sudo apt install default-jre

We host model checkpoints via Google Drive.

Download the checkpoints and place them as shown below.

The .ckpt file sizes for the captioning and CLIP models are 669.65M and 1.12G, respectively.

# Captioning model

./save/

clipRN50_cider/

clipRN50_cider-last.ckpt

clipRN50_cider_clips/

clipRN50_cider_clips-last.ckpt

clipRN50_clips/

clipRN50_clips-last.ckpt

clipRN50_clips_grammar/

clipRN50_clips_grammar-last.ckpt

clipRN50_mle/

clipRN50_mle-last.ckpt

# Finetuned CLIP Text encoder

./retrieval/

save/

clip_negative_text-last.ckpt

# Original dataset files - to be downloaded

./datasets/

# Download from http://mscoco.org/dataset/#download

COCO/

images/

train2014/

val2014/

annotations/

captions_train2014.json

captions_val2014.json

# Download from https://drive.google.com/drive/folders/1jlwInAsVo-PdBdJlmHKPp34dLnxIIMLx

FineCapEval/

images/

XXX.jpg
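Before running the preprocessing scripts, it can help to confirm that the downloaded files sit where the `./datasets/` tree above expects them. The following is a minimal sketch; `check_dataset_layout` is a hypothetical helper (not part of this repo), and its path list is copied from that tree.

```bash
#!/usr/bin/env bash
# Hypothetical helper (not part of this repo): report expected dataset paths that are missing.
# The paths below mirror the ./datasets/ tree listed in this README.
check_dataset_layout() {
  local root="${1:-./datasets}"
  local missing=0 p
  for p in \
    COCO/images/train2014 \
    COCO/images/val2014 \
    COCO/annotations/captions_train2014.json \
    COCO/annotations/captions_val2014.json \
    FineCapEval/images; do
    if [ ! -e "$root/$p" ]; then
      echo "missing: $root/$p" >&2   # list every gap, not just the first one
      missing=1
    fi
  done
  return "$missing"
}

# Example: check_dataset_layout ./datasets && echo "dataset layout looks complete"
```

The helper only checks that the paths exist; it does not verify that the image folders are complete.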

- Download files

./datasets/

# Download from http://mscoco.org/dataset/#download

COCO/

images/

train2014/

val2014/

annotations/

captions_train2014.json

captions_val2014.json

./data/

# Download from http://cs.stanford.edu/people/karpathy/deepimagesent/caption_datasets.zip

dataset_coco.json

# Download from https://drive.google.com/drive/folders/1eCdz62FAVCGogOuNhy87Nmlo5_I0sH2J

coco-train-words.p

- Text processing

python scripts/prepro_labels.py --input_json data/dataset_coco.json --output_json data/cocotalk.json --output_h5 data/cocotalk

- Visual feature extraction

python scripts/clip_prepro_feats.py --input_json data/dataset_coco.json --output_dir data/cocotalk --images_root datasets/COCO/images --model_type RN50
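The feature-extraction scripts in this section accept optional `--n_jobs` and `--job_id` flags. The following is a minimal sketch for launching every shard at once. It assumes, without checking the scripts, that `--n_jobs N --job_id K` makes a run process only the K-th of N disjoint slices of the images. `run_sharded` is a hypothetical helper.

```bash
#!/usr/bin/env bash
# Hypothetical helper (not part of this repo): run one copy of a command per shard, in parallel.
# ASSUMPTION: "--n_jobs N --job_id K" restricts the command to the K-th of N disjoint slices.
run_sharded() {
  local n_jobs="$1"; shift
  local pids="" pid k=0 status=0
  while [ "$k" -lt "$n_jobs" ]; do
    "$@" --n_jobs "$n_jobs" --job_id "$k" &   # launch shard k in the background
    pids="$pids $!"
    k=$((k + 1))
  done
  for pid in $pids; do
    wait "$pid" || status=1                   # any failed shard makes the helper fail
  done
  return "$status"
}

# Example (4 shards of the COCO RN50 feature extraction):
# run_sharded 4 python scripts/clip_prepro_feats.py --input_json data/dataset_coco.json \
#   --output_dir data/cocotalk --images_root datasets/COCO/images --model_type RN50
```

Each shard is a separate process and presumably loads its own copy of the CLIP model. Available GPU memory may therefore cap the useful number of shards.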

# optional (n_jobs)

--n_jobs 4 --job_id 0

- Visual feature extraction for CLIP-S reward

python scripts/clipscore_prepro_feats.py --input_json data/dataset_coco.json --output_dir data/cocotalk --images_root datasets/COCO/images

# optional (n_jobs)

--n_jobs 4 --job_id 0

- Download files from https://drive.google.com/drive/folders/1jlwInAsVo-PdBdJlmHKPp34dLnxIIMLx?usp=sharing

./datasets/

FineCapEval/

images/

XXX.jpg

./data/

FineCapEval.json

FineCapEval.csv

- Visual feature extraction

python scripts_FineCapEval/clip_prepro_feats.py --input_json data/FineCapEval.json --output_dir data/FineCapEval --images_root datasets/FineCapEval/images --model_type RN50

# optional (n_jobs)

--n_jobs 4 --job_id 0

- MLE training (phase 1)

export MLE_ID='clipRN50_mle'

# Training

python tools/train_pl.py --cfg configs/phase1/$MLE_ID.yml --id $MLE_ID

# Evaluation

EVALUATE=1 python tools/train_pl.py --cfg configs/phase1/$MLE_ID.yml --id $MLE_ID

# Text-to-Image Retrieval with CLIP ViT-B/32

python tools/eval_clip_retrieval.py --gen_caption_path "./eval_results/$MLE_ID.json"

# Evaluation on FineCapEval

python tools/finecapeval_inference.py --reward mle

python tools/eval_finecapeval.py --generated_id2caption ./FineCapEval_results/clipRN50_mle.json

- RL fine-tuning with the CIDEr reward (phase 2)

export REWARD='cider'

export MLE_ID='clipRN50_mle'

export RL_ID='clipRN50_'$REWARD

# Copy MLE checkpoint as starting point of RL finetuning

mkdir save/$RL_ID

cp save/$MLE_ID/$MLE_ID-last.ckpt save/$RL_ID/$RL_ID-last.ckpt
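The `mkdir`/`cp` pair above prints an error if `save/$RL_ID` already exists. On a re-run, `cp` would also replace whatever is at the destination with the MLE checkpoint. The following is a re-runnable variant. It assumes that `tools/train_pl.py` resumes from and saves to `save/$RL_ID/$RL_ID-last.ckpt`, which is presumably why the MLE checkpoint is copied there. `init_rl_from_mle` is a hypothetical helper.

```bash
#!/usr/bin/env bash
# Hypothetical helper (not part of this repo): seed an RL run from the MLE checkpoint.
# Uses the save/<ID>/<ID>-last.ckpt naming from this README; safe to run more than once.
init_rl_from_mle() {
  local mle_id="$1" rl_id="$2" save_dir="${3:-save}"
  local src="$save_dir/$mle_id/$mle_id-last.ckpt"
  local dst="$save_dir/$rl_id/$rl_id-last.ckpt"
  if [ ! -f "$src" ]; then
    echo "MLE checkpoint not found: $src" >&2
    return 1
  fi
  mkdir -p "$save_dir/$rl_id"            # no error if the directory already exists
  if [ -e "$dst" ]; then
    echo "keeping existing $dst" >&2     # ASSUMPTION: an existing file may hold RL progress
  else
    cp "$src" "$dst"
  fi
}

# Example: init_rl_from_mle "$MLE_ID" "$RL_ID"
```

To deliberately restart RL from the MLE weights, delete the existing RL checkpoint first.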

# Training

python tools/train_pl.py --cfg configs/phase2/$RL_ID.yml --id $RL_ID

# Evaluation

EVALUATE=1 python tools/train_pl.py --cfg configs/phase2/$RL_ID.yml --id $RL_ID

# Text-to-Image Retrieval with CLIP ViT-B/32

python tools/eval_clip_retrieval.py --gen_caption_path "./eval_results/$RL_ID.json"

# Evaluation on FineCapEval

python tools/finecapeval_inference.py --reward $REWARD

python tools/eval_finecapeval.py --generated_id2caption ./FineCapEval_results/$RL_ID.json

- RL fine-tuning with the CLIP-S reward (phase 2)

export REWARD='clips'

export MLE_ID='clipRN50_mle'

export RL_ID='clipRN50_'$REWARD

# Copy MLE checkpoint as starting point of RL finetuning

mkdir save/$RL_ID

cp save/$MLE_ID/$MLE_ID-last.ckpt save/$RL_ID/$RL_ID-last.ckpt

# Training

python tools/train_pl.py --cfg configs/phase2/$RL_ID.yml --id $RL_ID

# Evaluation

EVALUATE=1 python tools/train_pl.py --cfg configs/phase2/$RL_ID.yml --id $RL_ID

# Text-to-Image Retrieval with CLIP ViT-B/32

python tools/eval_clip_retrieval.py --gen_caption_path "./eval_results/$RL_ID.json"

# Evaluation on FineCapEval

python tools/finecapeval_inference.py --reward $REWARD

python tools/eval_finecapeval.py --generated_id2caption ./FineCapEval_results/$RL_ID.json

- RL fine-tuning with the CLIP-S + CIDEr reward (phase 2)

export REWARD='clips_cider'

export MLE_ID='clipRN50_mle'

export RL_ID='clipRN50_'$REWARD

# Copy MLE checkpoint as starting point of RL finetuning

mkdir save/$RL_ID

cp save/$MLE_ID/$MLE_ID-last.ckpt save/$RL_ID/$RL_ID-last.ckpt

# Training

python tools/train_pl.py --cfg configs/phase2/$RL_ID.yml --id $RL_ID

# Evaluation

EVALUATE=1 python tools/train_pl.py --cfg configs/phase2/$RL_ID.yml --id $RL_ID

# Text-to-Image Retrieval with CLIP ViT-B/32

python tools/eval_clip_retrieval.py --gen_caption_path "./eval_results/$RL_ID.json"

# Evaluation on FineCapEval

python tools/finecapeval_inference.py --reward $REWARD

python tools/eval_finecapeval.py --generated_id2caption ./FineCapEval_results/$RL_ID.json-

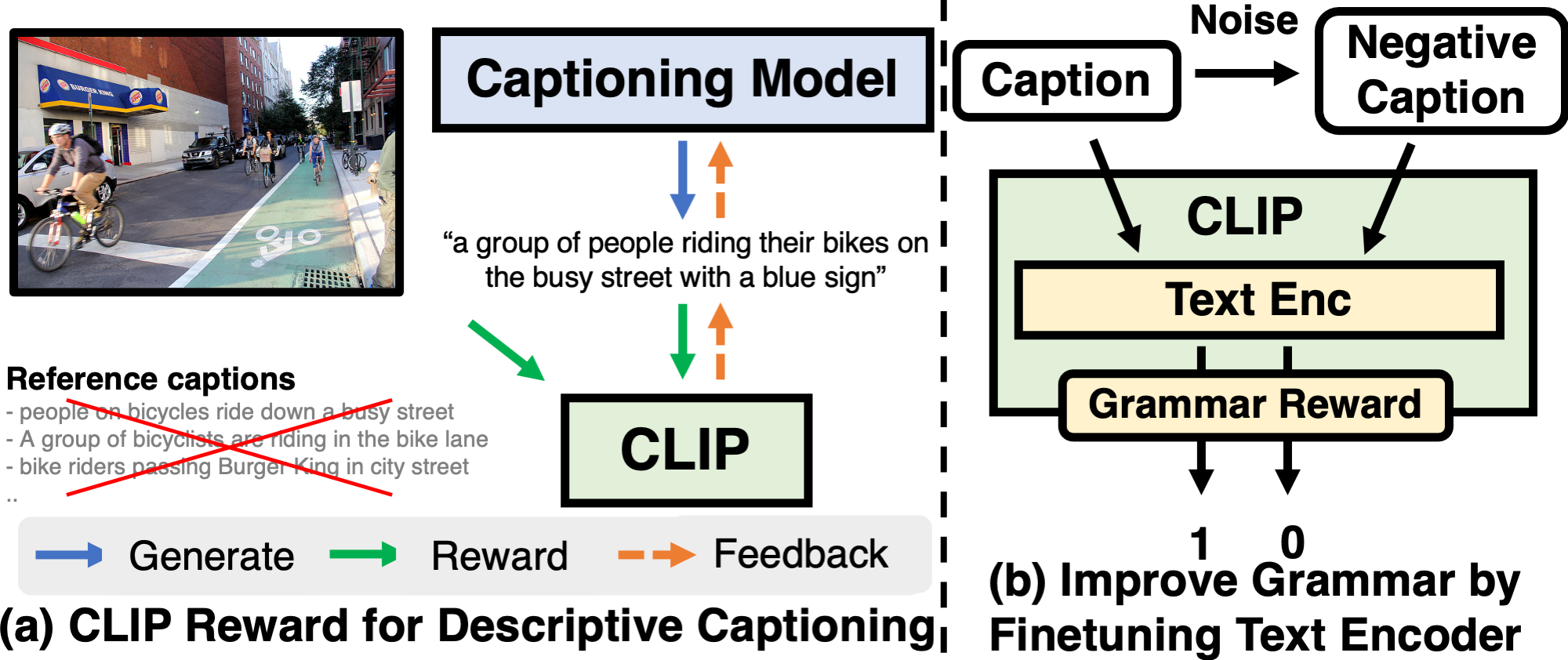

Run CLIP Finetuning (for grammar) following ./retrieval/README.md

-

Run RL training using the updated CLIP

export REWARD='clips_grammar'

export MLE_ID='clipRN50_mle'

export RL_ID='clipRN50_'$REWARD

# Copy MLE checkpoint as starting point of RL finetuning

mkdir save/$RL_ID

cp save/$MLE_ID/$MLE_ID-last.ckpt save/$RL_ID/$RL_ID-last.ckpt

# Training

python tools/train_pl.py --cfg configs/phase2/$RL_ID.yml --id $RL_ID

# Evaluation

EVALUATE=1 python tools/train_pl.py --cfg configs/phase2/$RL_ID.yml --id $RL_ID

# Text-to-Image Retrieval with CLIP ViT-B/32

python tools/eval_clip_retrieval.py --gen_caption_path "./eval_results/$RL_ID.json"

# Evaluation on FineCapEval

python tools/finecapeval_inference.py --reward $REWARD

python tools/eval_finecapeval.py --generated_id2caption ./FineCapEval_results/$RL_ID.json

We thank the developers of CLIP-ViL, ImageCaptioning.pytorch, CLIP, coco-caption, and cider for their public code releases.

Please cite our paper if you use our models in your work:

@inproceedings{Cho2022CLIPReward,

title = {Fine-grained Image Captioning with CLIP Reward},

author = {Jaemin Cho and Seunghyun Yoon and Ajinkya Kale and Franck Dernoncourt and Trung Bui and Mohit Bansal},

booktitle = {Findings of NAACL},

year = {2022}

}