autograde is a toolbox for testing Jupyter notebooks. Its features include execution of notebooks (optionally isolated via docker/podman) with subsequent unit testing of the final notebook state. An audit mode allows for refining results (e.g. grading plots by hand). Finally, autograde can summarize these results in human- and machine-readable formats.

Install autograde from PyPI using pip:

    pip install jupyter-autograde

Alternatively, autograde can be set up from source by cloning this repository and installing it with poetry:

    git clone https://github.com/cssh-rwth/autograde.git && cd autograde
    poetry install

If you intend to use autograde in a sandboxed environment, ensure rootless docker or podman is available on your system. So far, only rootless mode is supported!
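Before running sandboxed tests, it can help to check which container engine is reachable on the `PATH`. The following is a minimal sketch and not part of autograde: the helper name `find_container_engine` is hypothetical, and it only checks that a binary exists. It does not verify that the engine runs in rootless mode.

```python
import shutil
from typing import Callable, Iterable, Optional


def find_container_engine(
    candidates: Iterable[str] = ("podman", "docker"),
    which: Callable[[str], Optional[str]] = shutil.which,
) -> Optional[str]:
    """Return the name of the first candidate binary found on PATH, else None.

    Hypothetical helper for illustration; it does NOT check for rootless mode.
    """
    for name in candidates:
        if which(name) is not None:
            return name
    return None
```

Calling `find_container_engine()` returns `'podman'`, `'docker'` or `None`, depending on what is installed. The `which` parameter exists only so the lookup can be replaced in tests.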

Once installed, autograde can be invoked via the `autograde` command. If you are using a virtual environment (which poetry does implicitly), you may have to activate it first. Alternative methods:

- `path/to/python -m autograde` runs autograde with a specific python binary, e.g. the one of your virtual environment.
- `poetry run autograde` if you've installed autograde from source

To get an overview of all available options, run

    autograde [sub command] --help

autograde comes with some example files located in the `demo/` subdirectory that we will use for now to illustrate the workflow. Run

    autograde test demo/test.py demo/notebook.ipynb --target /tmp --context demo/context

What happened? Let's first have a look at the arguments of autograde:

- `demo/test.py` is a script with test cases we want to apply
- `demo/notebook.ipynb` is the notebook to be tested (here you may also specify a directory to be recursively searched for notebooks)
- The optional flag `--target` tells autograde where to store results, `/tmp` in our case, and the current working directory by default.
- The optional flag `--context` specifies a directory that is mounted into the sandbox and may contain arbitrary files or subdirectories. This is useful when the notebook expects some external files to be present such as data sets.
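When the same call has to be issued from a script, for instance once per assignment, the command line can be assembled programmatically. This is a minimal sketch that uses only the arguments described above and the `python -m autograde` invocation. The helper names `build_test_command` and `run_test` are hypothetical and not part of autograde.

```python
import subprocess
import sys
from typing import List, Optional


def build_test_command(
    test: str,
    notebook: str,
    target: Optional[str] = None,
    context: Optional[str] = None,
) -> List[str]:
    """Assemble an `autograde test` call for the current Python interpreter."""
    cmd = [sys.executable, "-m", "autograde", "test", test, notebook]
    if target is not None:
        cmd += ["--target", target]    # where results are stored
    if context is not None:
        cmd += ["--context", context]  # directory mounted into the sandbox
    return cmd


def run_test(*args, **kwargs) -> subprocess.CompletedProcess:
    """Run the assembled command; raises CalledProcessError on a non-zero exit."""
    return subprocess.run(build_test_command(*args, **kwargs), check=True)


cmd = build_test_command(
    "demo/test.py", "demo/notebook.ipynb", target="/tmp", context="demo/context"
)
```

Building the argument list separately from running it keeps the command easy to log or inspect before anything is executed.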

The output is a compressed archive that is named something like `results-Member1Member2Member3-XXXXXXXXXX.zip` and which has the following contents:

- `artifacts/`: directory with all files that were created or modified by the tested notebook as well as rendered matplotlib plots.
- `code.py`: code extracted from the notebook including `stdout`/`stderr` as comments
- `notebook.ipynb`: an identical copy of the tested notebook
- `results.json`: test results
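Since the result is an ordinary zip archive, its contents can be inspected with the Python standard library alone. The sketch below makes two assumptions: the results file is valid JSON, and it is stored at the archive root. Its name is passed in explicitly, and `inspect_archive` is a hypothetical helper, not an autograde function.

```python
import json
import zipfile
from pathlib import Path
from typing import Any, List, Tuple


def inspect_archive(path: Path, results_name: str) -> Tuple[List[str], Any]:
    """Return the archive's member names and the parsed results file.

    Assumes `results_name` refers to a JSON file at the archive root.
    """
    with zipfile.ZipFile(path) as archive:
        names = archive.namelist()
        with archive.open(results_name) as handle:
            results = json.load(handle)
    return names, results
```

This only reads the archive. For modifying results, the audit and patch sub commands remain the safer route.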

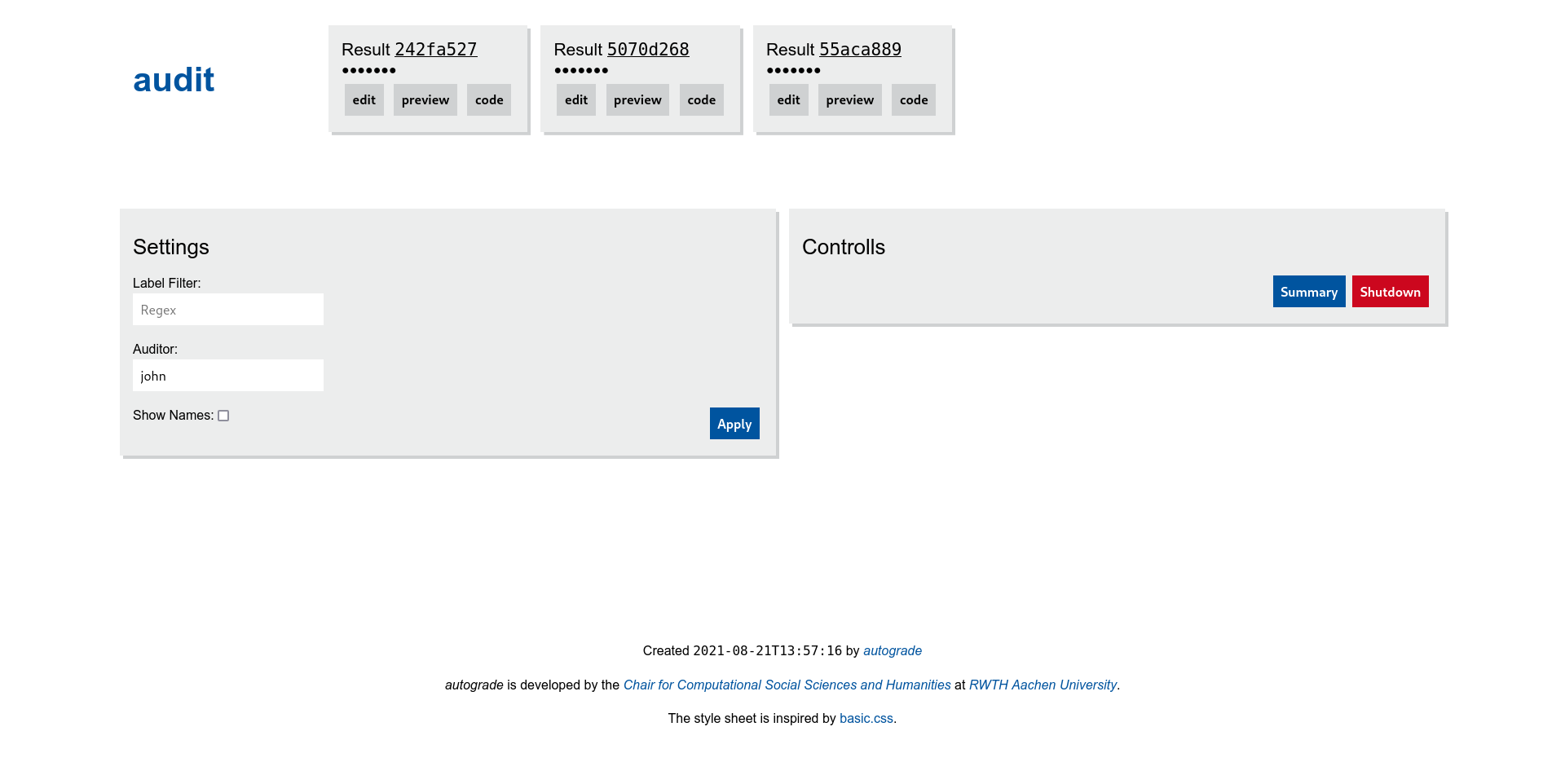

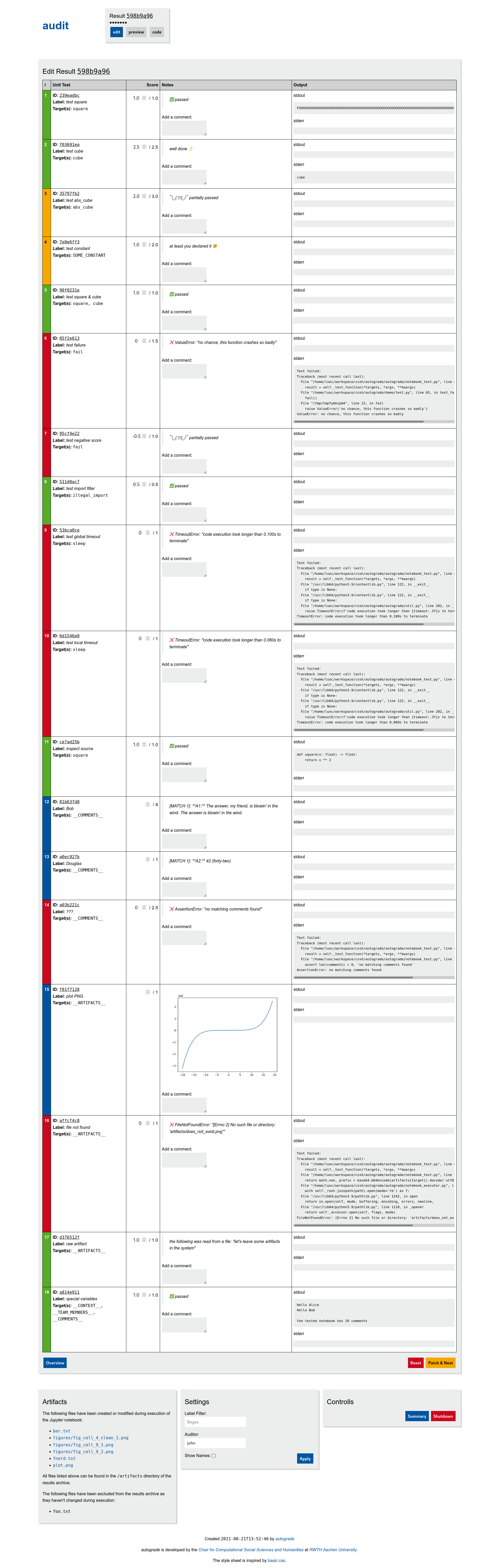

The interactive audit mode allows for manually refining the result files. This is useful for grading parts that cannot be tested automatically, such as plots or text comments.

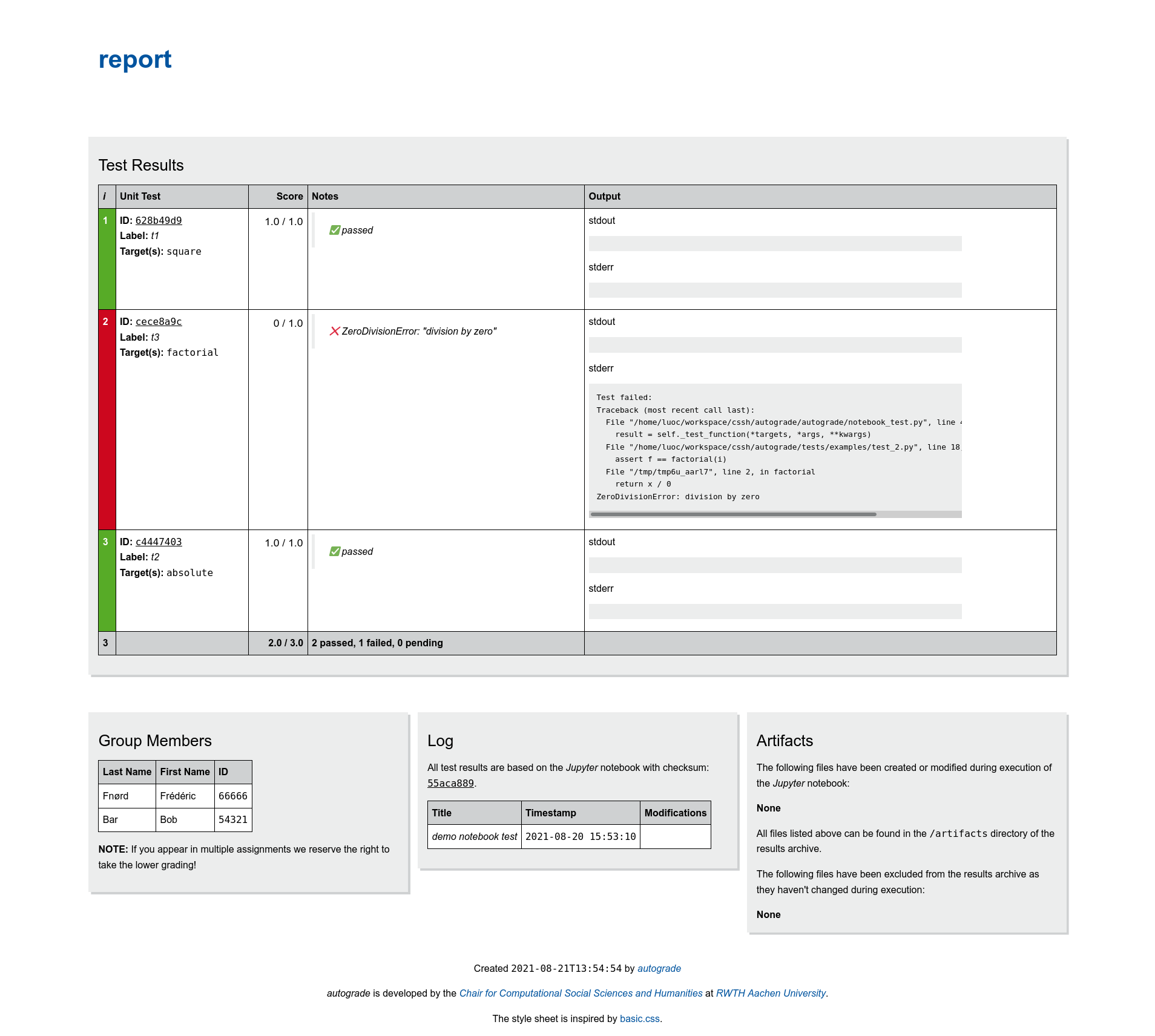

    autograde audit path/to/results

The `report` sub command creates human-readable HTML reports from test results:

    autograde report path/to/result(s)

The report is added to the results archive in place.

Results from multiple test runs can be merged via the patch sub command:

    autograde patch path/to/result(s) /path/to/patch/result(s)

In a typical scenario, test cases are applied not just to one notebook but to many at a time. Therefore, autograde comes with a summary feature that aggregates results, shows you a score distribution and has some very basic fraud detection. To create a summary, simply run:

    autograde summary path/to/results

Two new files will appear in the result directory:

- `summary.csv`: aggregated results
- `summary.html`: human-readable summary report
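The CSV summary can be loaded for further processing with the standard library. The sketch below deliberately assumes nothing about the column names, which are not documented here. It reads whatever header row the file has. `load_summary` is a hypothetical helper name.

```python
import csv
from pathlib import Path
from typing import Dict, List


def load_summary(path: Path) -> List[Dict[str, str]]:
    """Read a CSV file into a list of row dictionaries keyed by its header row."""
    with open(path, newline="", encoding="utf-8") as handle:
        return list(csv.DictReader(handle))
```

For example, `rows = load_summary(Path('path/to/results/summary.csv'))` followed by `rows[0].keys()` shows which columns are present. All values are returned as strings.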

Work with result archives programmatically

Fix score for a test case in all result archives:

    from pathlib import Path

    from autograde.backend.local.util import find_archives, traverse_archives


    def fix_test(path: Path, faulty_test_id: str, new_score: float):
        for archive in traverse_archives(find_archives(path), mode='a'):
            results = archive.results.copy()
            for faulty_test in filter(lambda t: t.id == faulty_test_id, results.unit_test_results):
                faulty_test.score_max = new_score
                archive.inject_patch(results)


    fix_test(Path('...'), '...', 13.37)

Special Test Cases

Ensure a student id occurs at most once:

    from collections import Counter

    from autograde import NotebookTest

    nbt = NotebookTest('demo notebook test')


    @nbt.register(target='__TEAM_MEMBERS__', label='check for duplicate student id')
    def test_special_variables(team_members):
        id_counts = Counter(member.student_id for member in team_members)
        duplicates = {student_id for student_id, count in id_counts.items() if count > 1}
        assert not duplicates, f'multiple members share same id ({duplicates})'
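The duplicate check itself can be tried without autograde. In the sketch below, `Member` is a hypothetical stand-in for the objects behind `__TEAM_MEMBERS__`. Only the `student_id` attribute used in the test above is assumed, and the other field is made up for illustration.

```python
from collections import Counter, namedtuple

# Hypothetical stand-in for a team member; only `student_id` matters here.
Member = namedtuple("Member", ["first_name", "student_id"])


def find_duplicate_ids(team_members):
    """Return the set of student ids that occur more than once."""
    id_counts = Counter(member.student_id for member in team_members)
    return {student_id for student_id, count in id_counts.items() if count > 1}


team = [Member("Ada", "100"), Member("Bob", "200"), Member("Cy", "100")]
print(find_duplicate_ids(team))  # → {'100'}
```

An empty set means every id is unique, which is the condition the registered test asserts.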