Official implementation of the paper "What You See is Not What the Network Infers: Detecting Adversarial Examples Based on Semantic Contradiction", NDSS 2022. [ArXiv] [PDF]

Abstract: Adversarial examples (AEs) pose severe threats to the applications of deep neural networks (DNNs) to safety-critical domains, e.g., autonomous driving. While there has been a vast body of AE defense solutions, to the best of our knowledge, they all suffer from some weaknesses, e.g., defending against only a subset of AEs or causing a relatively high accuracy loss for legitimate inputs. Moreover, most existing solutions cannot defend against adaptive attacks, wherein attackers are knowledgeable about the defense mechanisms and craft AEs accordingly.

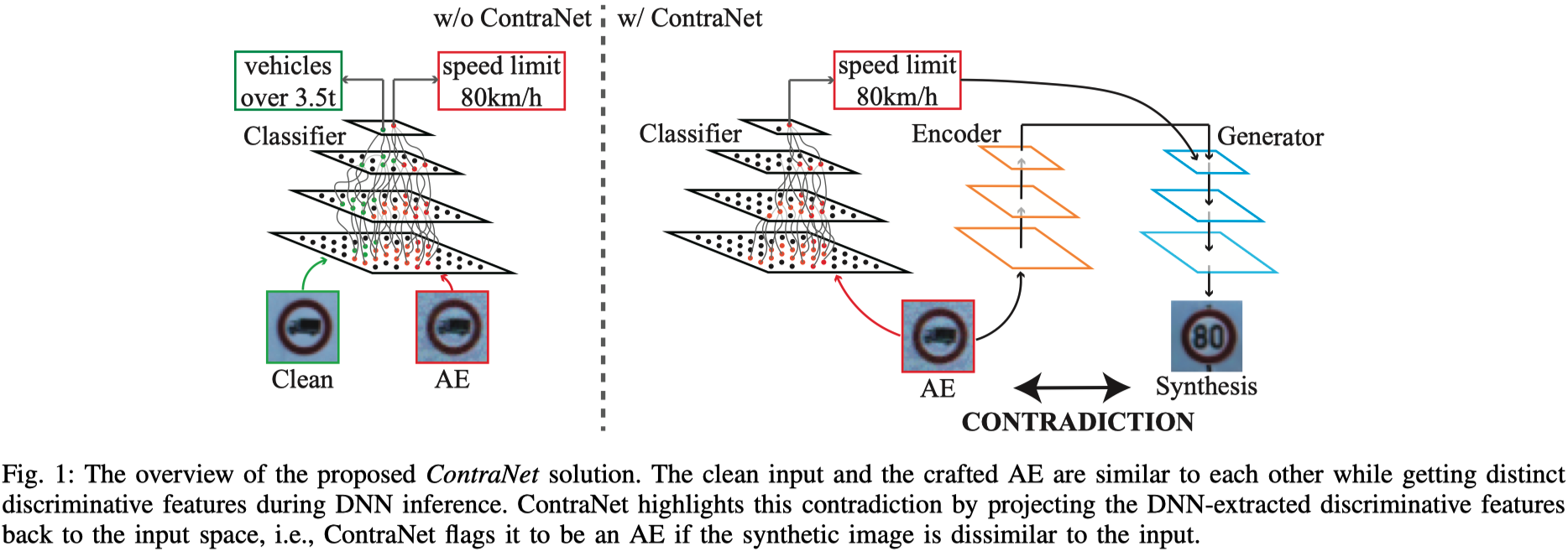

In this paper, we propose a novel AE detection framework based on the very nature of AEs, i.e., their semantic information is inconsistent with the discriminative features extracted by the target DNN model. To be specific, the proposed solution, namely ContraNet, models such contradiction by first feeding both the input and the inference result to a generator to obtain a synthetic output and then comparing it against the original input. For legitimate inputs that are correctly inferred, the synthetic output tries to reconstruct the input. On the contrary, for AEs, instead of reconstructing the input, the synthetic output would be created to conform to the wrong label whenever possible. Consequently, by measuring the distance between the input and the synthetic output with metric learning, we can differentiate AEs from legitimate inputs. We perform comprehensive evaluations under various AE attack scenarios, and experimental results show that ContraNet outperforms existing solutions by a large margin, especially under adaptive attacks. Moreover, our analysis shows that successful AEs that can bypass ContraNet tend to have much-weakened adversarial semantics. We have also shown that ContraNet can be easily combined with adversarial training techniques to achieve further improved AE defense capabilities.
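The detection idea described in the abstract can be sketched in a few lines. This is a toy illustration only, not the repository's implementation: `detect_adversarial`, `toy_generator`, `l2_distance`, the class prototypes, and the threshold value are all hypothetical stand-ins, and a plain L2 distance replaces the learned similarity measure that ContraNet actually uses.

```python
import numpy as np

def detect_adversarial(x, classifier, generator, distance, threshold):
    """Flag x as adversarial when its label-conditioned synthesis is far from x."""
    label = classifier(x)             # inference result of the target model
    synthesis = generator(x, label)   # synthetic output from input and label
    score = distance(x, synthesis)    # dissimilarity between input and synthesis
    return score > threshold, score

# Hypothetical toy setup: two class prototypes in a 4-D "image" space.
PROTOTYPES = np.array([[0.0, 0.0, 0.0, 0.0],
                       [1.0, 1.0, 1.0, 1.0]])

def toy_generator(x, label):
    # Stand-in for the cGAN: pull the input halfway toward the
    # prototype of the predicted label.
    return 0.5 * x + 0.5 * PROTOTYPES[label]

def l2_distance(a, b):
    # Stand-in for the learned similarity measure (assumption: plain L2).
    return float(np.linalg.norm(a - b))

x = np.array([0.1, 0.0, 0.1, 0.0])    # semantically close to class 0
correct = lambda _: 0                 # classifier that infers the right label
fooled = lambda _: 1                  # classifier pushed to the wrong label

flag_ok, score_ok = detect_adversarial(x, correct, toy_generator, l2_distance, 0.5)
flag_ae, score_ae = detect_adversarial(x, fooled, toy_generator, l2_distance, 0.5)
print(flag_ok, flag_ae)
```

When the predicted label matches the input's semantics, the synthesis stays close to the input and the score is small; when the label is wrong, the synthesis drifts toward the wrong class and the score grows past the threshold.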

- The testing code of ContraNet against white-box attacks, see `./whitebox_attacks`.
- The testing code of ContraNet against adaptive attacks, see `./adaptive_attacks`.
- The training code of ContraNet's deep metric model, see `./whitebox_attacks`.
- The training code of ContraNet's generative model on CIFAR-10, see `./cifar10_ContraNet`.
- The training code of ContraNet's generative model on GTSRB, see `./GTSRB_ContraNet`.
- The training code of ContraNet's generative model on MNIST

All Python dependencies are listed in `environment.yaml`.

Pre-trained models on Google Drive

| Dataset | Classifier | cGAN | Similarity Measure Model |
|---|---|---|---|
| cifar10 | Densenet169 | Encoder(E), Encoder(V), Generator | dis, DMM |
| GTSRB | Resnet18 | Encoder(E), Encoder(V), Generator | dis, DMM |

Please check whitebox_attacks for more details.

`cd adaptive_attacks`

- Download pretrained models to `./pretrain`.
- Download classifier `densenet169.pt` to `./`.
- For PGD adaptive attacks, run: `python adaptive_targeted_PGD_linf.py [--adaptive_PGD_loss all|ssim_dis_dml|dis_dml|ssim_dis|ssim_dml|dis|dml|ssim]`
- For ATC+ContraNet against PGD adaptive attacks, run: `python ATC_ContraNet/robust_classifier_adaptive_targeted_PGD_linf.py`
- For OrthogonalPGD attack, run: `python OrthogonalPGD/contraNetattack.py [--fpr 5|50] [--attack_iteration 200|40] [--adaptive_PGD_loss all|ssim_dis_dml|dis_dml|ssim_dis|ssim_dml|dis|dml|ssim]`
- For ContraNet+ATC against OrthogonalPGD, run: `python OrthogonalPGD/robust_classifier_adaptive_targeted_PGD_linf.py [--fpr 5|50] [--attack_iteration 200|40] [--adaptive_PGD_loss all|ssim_dis_dml|dis_dml|ssim_dis|ssim_dml|dis|dml|ssim]`
- For C&W adaptive attacks, run: `python targeted_cw_adaptive_attack.py`
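The OrthogonalPGD scripts accept `--fpr 5|50`. Assuming this flag denotes the detector's target false positive rate in percent on benign inputs (the README does not spell this out), the following minimal sketch shows how a detection threshold could be calibrated for such a rate. The helper names `threshold_at_fpr` and `false_positive_rate` and the synthetic benign scores are hypothetical and do not come from the repository.

```python
import numpy as np

def threshold_at_fpr(benign_scores, fpr_percent):
    """Return a threshold that about fpr_percent% of benign scores exceed."""
    scores = np.asarray(benign_scores, dtype=float)
    return float(np.percentile(scores, 100.0 - fpr_percent))

def false_positive_rate(benign_scores, threshold):
    """Fraction of benign inputs that would be flagged as adversarial."""
    scores = np.asarray(benign_scores, dtype=float)
    return float(np.mean(scores > threshold))

# Synthetic dissimilarity scores for benign inputs (illustrative data only).
rng = np.random.default_rng(0)
benign = rng.normal(loc=0.2, scale=0.05, size=10_000)

thr5 = threshold_at_fpr(benign, 5)     # strict detector: few benign inputs flagged
thr50 = threshold_at_fpr(benign, 50)   # aggressive detector: half of benign flagged
print(thr5, thr50)
```

A lower target rate yields a higher threshold, so fewer benign inputs are rejected but an attacker has more room to stay below it.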

`cd cifar10_ContraNet`

1. Train the cGAN component of ContraNet: `python adding_noise_main.py`

   Note that you may turn off the `--resume` option if you want to train the model from scratch. After step 1, the basic version of ContraNet's generator is done. Step 2 aims to further improve the quality of the synthesis; you may skip it. Our cGAN implementation is based on StudioGAN; refer to that repo for more instructions.

2. (optional) Train the second discriminator to help the cGAN generate a synthesis that is more faithful to the input image: `python mydiscriminator_main.py`

   Once the second discriminator is trained, fine-tune the cGAN model with it as an additional objective term by changing `adding_noise_worker.py` to `worker_train_d2D.py`. Then run: `python adding_noise_main.py`

3. Train the Dis component in the similarity measurement model: `python noisecGAN_adding_bengin_noise_augmentation_using_discrimator_as_dml.py`

4. Train the DMM component in the similarity measurement model. Please check `whitebox_attacks` for more details.

For a fair comparison with other methods, we re-implemented the baseline methods and tested them under the same protocol. Please check AEdetection for more details.

If you find this repository useful for your work, please consider citing it as follows:

    @inproceedings{Yang2022WhatYS,
      title = {What You See is Not What the Network Infers: Detecting Adversarial Examples Based on Semantic Contradiction},
      author = {Yang, Yijun and Gao, Ruiyuan and Li, Yu and Lai, Qiuxia and Xu, Qiang},
      booktitle = {Network and Distributed System Security Symposium (NDSS)},
      year = {2022}
    }

Please remember to cite all the datasets and backbone estimators if you use them in your experiments.