TL;DR: We study the transferability of the vanilla ViT pre-trained on mid-sized ImageNet-1k to the more challenging COCO object detection benchmark.

👨💻 This project is under active development 👩💻 :

-

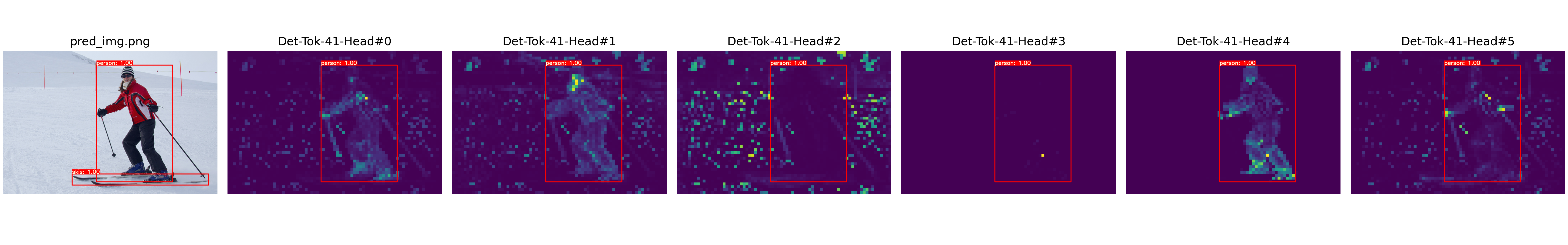

Jun 22, 2021: We update our manuscript on arXiv including discussion about position embeddings and more visualizations, check it out! -

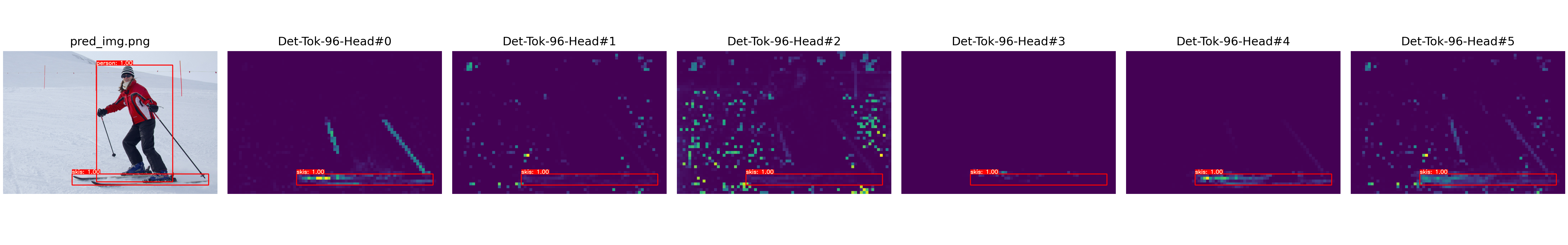

Jun 9, 2021: We add a notebook to to visualize self-attention maps of[Det]tokens on different heads of the last layer, check it out!

You Only Look at One Sequence: Rethinking Transformer in Vision through Object Detection

by Yuxin Fang1 *, Bencheng Liao1 *, Xinggang Wang1 📧, Jiemin Fang2, 1, Jiyang Qi1, Rui Wu3, Jianwei Niu3, Wenyu Liu1.

1 School of EIC, HUST, 2 Institute of AI, HUST, 3 Horizon Robotics.

(*) equal contribution, (📧) corresponding author.

arXiv technical report (arXiv 2106.00666)

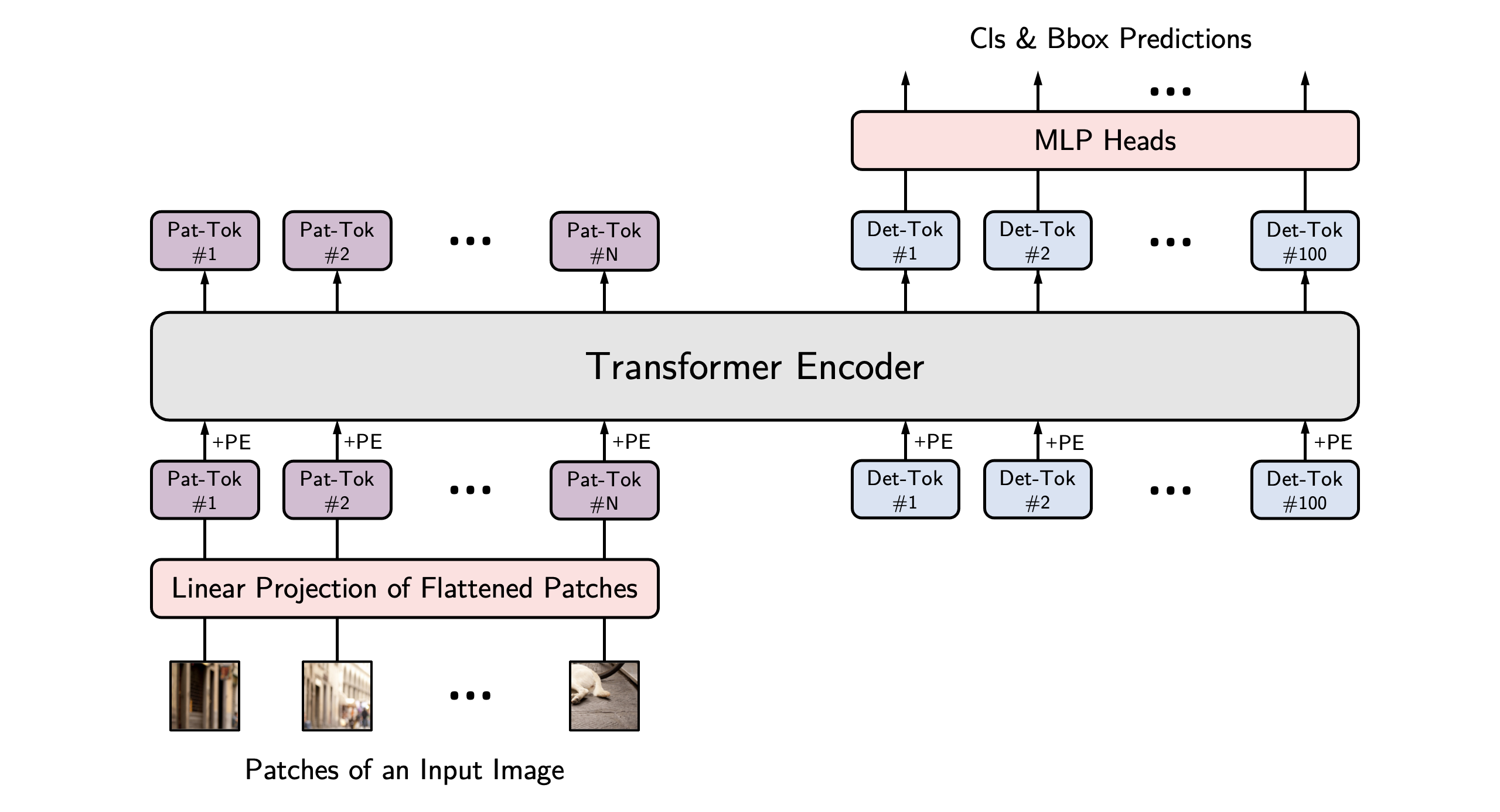

Directly inherited from ViT (DeiT), YOLOS is not designed to be yet another high-performance object detector, but to unveil the versatility and transferability of Transformer from image recognition to object detection. Concretely, our main contributions are summarized as follows:

- We use the mid-sized `ImageNet-1k` as the sole pre-training dataset, and show that a vanilla ViT (DeiT) can be successfully transferred to perform the challenging object detection task and produce competitive `COCO` results with the fewest possible modifications, i.e., by only looking at one sequence (YOLOS).

- We demonstrate that 2D object detection can be accomplished in a pure sequence-to-sequence manner by taking a sequence of fixed-sized non-overlapping image patches as input. Among existing object detectors, YOLOS utilizes minimal 2D inductive biases. Moreover, it is feasible for YOLOS to perform object detection in a space of any dimension without knowing the exact spatial structure or geometry.

- For ViT (DeiT), we find that the object detection results are quite sensitive to the pre-train scheme and that the detection performance is far from saturating. Therefore, the proposed YOLOS can be used as a challenging benchmark task to evaluate different pre-training strategies for ViT (DeiT).

- We also discuss the impacts as well as the limitations of prevalent pre-train schemes and model scaling strategies for Transformer in vision through transferring to object detection.
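To make the "one sequence" idea concrete, the sketch below estimates how many tokens the Transformer would see for a given input size. It assumes the 16x16 patch size of the standard ViT (DeiT) models and the 100 `[Det]` tokens mentioned in the visualization instructions of this README. The function name `sequence_length` is illustrative and not part of the YOLOS codebase, and sizes that are not multiples of the patch size are simply floored here.

```python
def sequence_length(height, width, patch_size=16, num_det_tokens=100):
    """Number of tokens the Transformer sees for one image.

    Assumptions (not taken from the YOLOS code): 16x16 patches as in
    standard ViT/DeiT, and partial patches at the border are dropped.
    """
    patches_h = height // patch_size
    patches_w = width // patch_size
    # Patch tokens plus the learnable [Det] tokens form a single sequence.
    return patches_h * patches_w + num_det_tokens


if __name__ == "__main__":
    # Sizes taken from the --init_pe_size values used in this README.
    for h, w in [(512, 864), (800, 1344)]:
        print(f"{h}x{w}: {sequence_length(h, w)} tokens")
```

Under these assumptions a 512x864 input gives 32 x 54 = 1728 patch tokens, so the sequence is dominated by image patches rather than by the detection tokens.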

| Model | Pre-train Epochs | ViT (DeiT) Weight / Log | Fine-tune Epochs | Eval Size | YOLOS Checkpoint / Log | AP @ COCO val |
|---|---|---|---|---|---|---|
| YOLOS-Ti | 300 | FB | 300 | 512 | Baidu Drive, Google Drive / Log | 28.7 |
| YOLOS-S | 200 | Baidu Drive, Google Drive / Log | 150 | 800 | Baidu Drive, Google Drive / Log | 36.1 |
| YOLOS-S | 300 | FB | 150 | 800 | Baidu Drive, Google Drive / Log | 36.1 |
| YOLOS-S (dWr) | 300 | Baidu Drive, Google Drive / Log | 150 | 800 | Baidu Drive, Google Drive / Log | 37.6 |
| YOLOS-B | 1000 | FB | 150 | 800 | Baidu Drive, Google Drive / Log | 42.0 |

Notes:

- The access code for `Baidu Drive` is `yolo`.
- `FB` stands for model weights provided by DeiT (paper, code). Thanks for their wonderful work.
- We will update other models in the future, please stay tuned :)

This codebase has been developed with Python 3.6, PyTorch 1.5+ and torchvision 0.6+:

    conda install -c pytorch pytorch torchvision

Install pycocotools (for evaluation on COCO) and scipy (for training):

    conda install cython scipy
    pip install -U 'git+https://github.com/cocodataset/cocoapi.git#subdirectory=PythonAPI'
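After installation, a quick sanity check can confirm that the packages named above are importable. The snippet below is a minimal sketch and not part of the YOLOS codebase; the helper name `missing_packages` is hypothetical, and it only checks that each module can be located, not which version is installed.

```python
import importlib.util


def missing_packages(names):
    """Return the subset of top-level module names that cannot be found."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    # Import names of the packages installed in the steps above.
    required = ["torch", "torchvision", "scipy", "pycocotools"]
    missing = missing_packages(required)
    if missing:
        print("Missing packages:", ", ".join(missing))
    else:
        print("All required packages are importable.")
```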

Download and extract COCO 2017 train and val images with annotations from http://cocodataset.org. We expect the directory structure to be the following:

    path/to/coco/
      annotations/  # annotation json files
      train2017/    # train images
      val2017/      # val images
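Before launching a long training run, it can help to verify that the dataset directory matches the layout above. The following is a small sketch using only the standard library; the function name `missing_coco_dirs` is hypothetical, and it checks only for the three sub-directories listed in this README, not for specific annotation files.

```python
from pathlib import Path

# Sub-directories expected by the layout described in this README.
EXPECTED_SUBDIRS = ("annotations", "train2017", "val2017")


def missing_coco_dirs(coco_root):
    """Return the expected sub-directories that are absent under coco_root."""
    root = Path(coco_root)
    return [name for name in EXPECTED_SUBDIRS if not (root / name).is_dir()]


if __name__ == "__main__":
    import sys

    root = sys.argv[1] if len(sys.argv) > 1 else "path/to/coco"
    missing = missing_coco_dirs(root)
    print("OK" if not missing else f"Missing under {root}: {missing}")
```

The path passed on the command line would be the same one later given to `--coco_path`.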

Before finetuning on COCO, you need to download the ImageNet pretrained model to the `/path/to/YOLOS/` directory.

To train the YOLOS-Ti model in the paper, run this command:

    python -m torch.distributed.launch \
        --nproc_per_node=8 \
        --use_env main.py \
        --coco_path /path/to/coco \
        --batch_size 2 \
        --lr 5e-5 \
        --epochs 300 \
        --backbone_name tiny \
        --pre_trained /path/to/deit-tiny.pth \
        --eval_size 512 \
        --init_pe_size 800 1333 \
        --output_dir /output/path/box_model

To train the YOLOS-S model with the 200-epoch pretrained DeiT-S in the paper, run this command:

    python -m torch.distributed.launch \
        --nproc_per_node=8 \
        --use_env main.py \
        --coco_path /path/to/coco \
        --batch_size 1 \
        --lr 2.5e-5 \
        --epochs 150 \
        --backbone_name small \
        --pre_trained /path/to/deit-small-200epoch.pth \
        --eval_size 800 \
        --init_pe_size 512 864 \
        --mid_pe_size 512 864 \
        --output_dir /output/path/box_model

To train the YOLOS-S model with the 300-epoch pretrained DeiT-S in the paper, run this command:

    python -m torch.distributed.launch \
        --nproc_per_node=8 \
        --use_env main.py \
        --coco_path /path/to/coco \
        --batch_size 1 \
        --lr 2.5e-5 \
        --epochs 150 \
        --backbone_name small \
        --pre_trained /path/to/deit-small-300epoch.pth \
        --eval_size 800 \
        --init_pe_size 512 864 \
        --mid_pe_size 512 864 \
        --output_dir /output/path/box_model

To train the YOLOS-S (dWr) model in the paper, run this command:

    python -m torch.distributed.launch \
        --nproc_per_node=8 \
        --use_env main.py \
        --coco_path /path/to/coco \
        --batch_size 1 \
        --lr 2.5e-5 \
        --epochs 150 \
        --backbone_name small_dWr \
        --pre_trained /path/to/deit-small-dWr-scale.pth \
        --eval_size 800 \
        --init_pe_size 512 864 \
        --mid_pe_size 512 864 \
        --output_dir /output/path/box_model

To train the YOLOS-B model in the paper, run this command:

    python -m torch.distributed.launch \
        --nproc_per_node=8 \
        --use_env main.py \
        --coco_path /path/to/coco \
        --batch_size 1 \
        --lr 2.5e-5 \
        --epochs 150 \
        --backbone_name base \
        --pre_trained /path/to/deit-base.pth \
        --eval_size 800 \
        --init_pe_size 800 1344 \
        --mid_pe_size 800 1344 \
        --output_dir /output/path/box_model
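The training commands above differ only in a handful of hyperparameters, so they can be summarized in one table and generated programmatically. The sketch below is not part of the YOLOS codebase: `TRAIN_CONFIGS`, `train_command`, and `global_batch_size` are hypothetical names, the flag values are copied from this README, and the global batch size is computed under the assumption that `--batch_size` is a per-process value. The flags are emitted in a different order than in the commands above, which should not matter for a standard argument parser.

```python
import shlex

# Hyperparameters copied from the training commands in this README.
# Both YOLOS-S checkpoints (200 and 300 pre-train epochs) share one entry;
# only the --pre_trained path differs between them.
TRAIN_CONFIGS = {
    "YOLOS-Ti": dict(batch_size=2, lr="5e-5", epochs=300, backbone_name="tiny",
                     eval_size=512, init_pe_size=(800, 1333)),
    "YOLOS-S": dict(batch_size=1, lr="2.5e-5", epochs=150, backbone_name="small",
                    eval_size=800, init_pe_size=(512, 864),
                    mid_pe_size=(512, 864)),
    "YOLOS-S (dWr)": dict(batch_size=1, lr="2.5e-5", epochs=150,
                          backbone_name="small_dWr", eval_size=800,
                          init_pe_size=(512, 864), mid_pe_size=(512, 864)),
    "YOLOS-B": dict(batch_size=1, lr="2.5e-5", epochs=150, backbone_name="base",
                    eval_size=800, init_pe_size=(800, 1344),
                    mid_pe_size=(800, 1344)),
}


def train_command(model, coco_path, pre_trained, output_dir, nproc_per_node=8):
    """Build the launch command for one model as a list of arguments."""
    cmd = ["python", "-m", "torch.distributed.launch",
           f"--nproc_per_node={nproc_per_node}", "--use_env", "main.py",
           "--coco_path", coco_path, "--pre_trained", pre_trained,
           "--output_dir", output_dir]
    for flag, value in TRAIN_CONFIGS[model].items():
        cmd.append(f"--{flag}")
        # Tuple-valued options such as --init_pe_size take two integers.
        values = value if isinstance(value, tuple) else (value,)
        cmd.extend(str(v) for v in values)
    return cmd


def global_batch_size(model, nproc_per_node=8):
    """Images per step, ASSUMING --batch_size is counted per process."""
    return TRAIN_CONFIGS[model]["batch_size"] * nproc_per_node


if __name__ == "__main__":
    cmd = train_command("YOLOS-Ti", "/path/to/coco",
                        "/path/to/deit-tiny.pth", "/output/path/box_model")
    print(" ".join(shlex.quote(arg) for arg in cmd))
    print("global batch size:", global_batch_size("YOLOS-Ti"))
```

If fewer than 8 GPUs are available, `nproc_per_node` changes the global batch size under this assumption, so the learning rate may need to be revisited.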

To evaluate the YOLOS-Ti model on COCO, run:

    python main.py --coco_path /path/to/coco --batch_size 2 --backbone_name tiny --eval --eval_size 512 --init_pe_size 800 1333 --resume /path/to/YOLOS-Ti

To evaluate the YOLOS-S model on COCO, run:

    python main.py --coco_path /path/to/coco --batch_size 1 --backbone_name small --eval --eval_size 800 --init_pe_size 512 864 --mid_pe_size 512 864 --resume /path/to/YOLOS-S

To evaluate the YOLOS-S (dWr) model on COCO, run (the checkpoint path is quoted because it contains parentheses):

    python main.py --coco_path /path/to/coco --batch_size 1 --backbone_name small_dWr --eval --eval_size 800 --init_pe_size 512 864 --mid_pe_size 512 864 --resume '/path/to/YOLOS-S(dWr)'

To evaluate the YOLOS-B model on COCO, run:

    python main.py --coco_path /path/to/coco --batch_size 1 --backbone_name base --eval --eval_size 800 --init_pe_size 800 1344 --mid_pe_size 800 1344 --resume /path/to/YOLOS-B
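Checkpoint names such as `YOLOS-S(dWr)` contain parentheses, which a POSIX shell treats as special characters when they are not quoted. When evaluation commands are assembled from a script, the standard-library `shlex.quote` function can take care of this. The snippet below is a sketch only: it builds and prints the command string and does not run the evaluation.

```python
import shlex

# Placeholder checkpoint path from this README; it contains parentheses.
checkpoint = "/path/to/YOLOS-S(dWr)"
args = ["python", "main.py", "--coco_path", "/path/to/coco",
        "--batch_size", "1", "--backbone_name", "small_dWr", "--eval",
        "--eval_size", "800", "--init_pe_size", "512", "864",
        "--mid_pe_size", "512", "864", "--resume", checkpoint]

# shlex.quote leaves safe arguments untouched and single-quotes the rest.
command = " ".join(shlex.quote(arg) for arg in args)
print(command)
```

Passing the `args` list directly to `subprocess.run` would avoid the shell entirely, in which case no quoting is needed.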

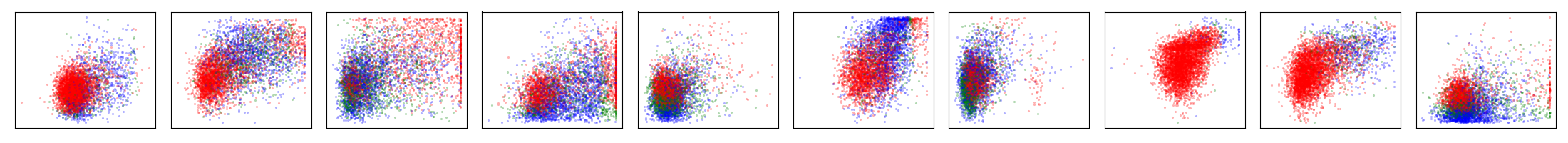

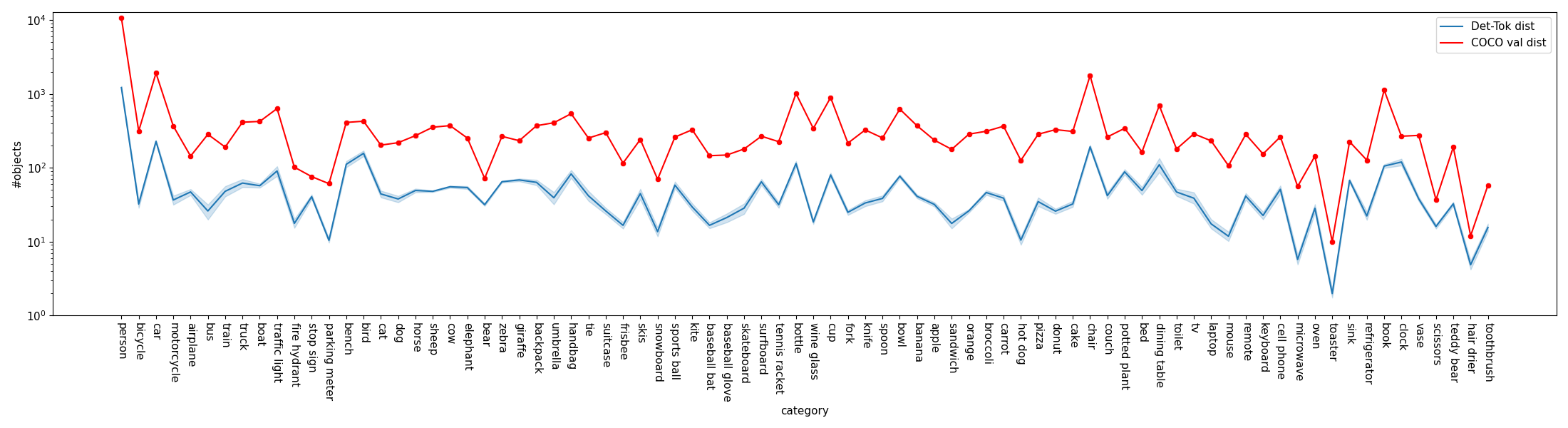

- Visualize box prediction and object categories distribution:

  - To get the visualizations in the paper, you need the YOLOS models finetuned on COCO. Run the following command to get the predictions of the 100 Det-Toks on the COCO val split; it generates `/path/to/YOLOS/visualization/modelname-eval-800-eval-pred.json`:

        python cocoval_predjson_generation.py --coco_path /path/to/coco --batch_size 1 --backbone_name small --eval --eval_size 800 --init_pe_size 512 864 --mid_pe_size 512 864 --resume /path/to/yolos-s-model.pth --output_dir ./visualization

  - To get all ground truth object categories on all images from the COCO val split, run the following command to generate `/path/to/YOLOS/visualization/coco-valsplit-cls-dist.json`:

        python cocoval_gtclsjson_generation.py --coco_path /path/to/coco --batch_size 1 --output_dir ./visualization

  - To visualize the distribution of the Det-Toks' bboxes and categories, run the following command to generate `.png` files in `/path/to/YOLOS/visualization/`:

        python visualize_dettoken_dist.py --visjson /path/to/YOLOS/visualization/modelname-eval-800-eval-pred.json --cococlsjson /path/to/YOLOS/visualization/coco-valsplit-cls-dist.json

- Use `VisualizeAttention.ipynb` to visualize the self-attention of `[Det]` tokens on different heads of the last layer.
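The format of the JSON files written by the visualization scripts is not described in this README, so the sketch below does not read them. It only illustrates the kind of per-token category histogram that a Det-Tok distribution plot summarizes, using a hypothetical in-memory list of `(det_token_index, category_id)` pairs. The function name `category_histograms` and the toy data are made up for illustration.

```python
from collections import Counter, defaultdict


def category_histograms(predictions):
    """Count predicted categories separately for each [Det] token index.

    `predictions` is a HYPOTHETICAL list of (det_token_index, category_id)
    pairs; it is not the format produced by the YOLOS scripts.
    """
    histograms = defaultdict(Counter)
    for token_index, category_id in predictions:
        histograms[token_index][category_id] += 1
    return histograms


if __name__ == "__main__":
    # Toy data: token 0 predicts category 1 twice and category 3 once.
    toy = [(0, 1), (0, 1), (0, 3), (1, 18), (1, 1)]
    for token_index, counts in sorted(category_histograms(toy).items()):
        print(token_index, counts.most_common())
```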

This project is based on DETR (paper, code), DeiT (paper, code), DINO (paper, code) and timm. Thanks for their wonderful work.

If you find our paper and code useful in your research, please consider giving a star ⭐ and citation 📝 :

    @article{YOLOS,
      title={You Only Look at One Sequence: Rethinking Transformer in Vision through Object Detection},
      author={Fang, Yuxin and Liao, Bencheng and Wang, Xinggang and Fang, Jiemin and Qi, Jiyang and Wu, Rui and Niu, Jianwei and Liu, Wenyu},
      journal={arXiv preprint arXiv:2106.00666},
      year={2021}
    }