Azure Data Platform End2End

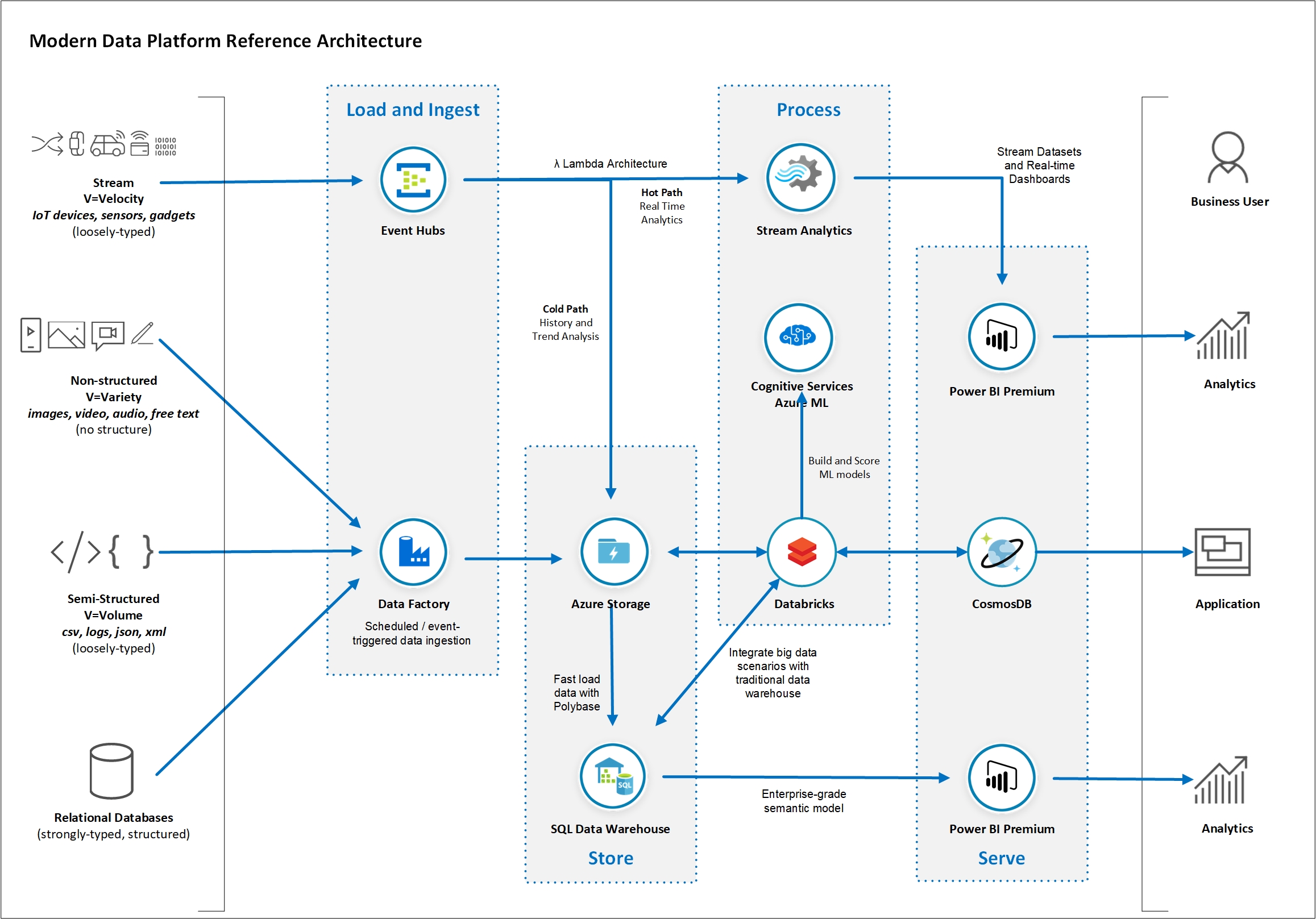

In this workshop you will learn about the main concepts related to advanced analytics and Big Data processing and how Azure Data Services can be used to implement a modern data warehouse architecture. You will understand what Azure services you can leverage to establish a solid data platform to quickly ingest, process and visualise data from a large variety of data sources. The reference architecture you will build as part of this exercise has been proven to give you the flexibility and scalability to grow and handle large volumes of data while keeping an optimal level of performance.

In the exercises in this lab you will build data pipelines using data related to New York City. The workshop was designed to progressively implement an extended modern data platform architecture, starting from a traditional relational data pipeline. We then introduce big data scenarios with large files and distributed computing, add non-structured data and AI into the mix, and finish with real-time streaming analytics. You will have done all of that by the end of the workshop.

IMPORTANT:

- The reference architecture proposed in this workshop aims to explain just enough of the role of each of the Azure Data Services included in the overall modern data platform architecture. This workshop does not replace the need for in-depth training on each Azure service covered.

- The services covered in this course are only a subset of a much larger family of Azure services. Similar outcomes can be achieved by leveraging other services and/or features not covered by this workshop.

- Some concepts presented in this course can be quite complex and you may need to seek more information from different sources.

Document Structure

This document contains detailed step-by-step instructions on how to implement a Modern Data Platform architecture using Azure Data Services. It’s recommended you carefully read the detailed description contained in this document for a successful experience with all Azure services.

You will see the label IMPORTANT whenever there is a critical step in the lab. Please pay close attention to the instructions given.

You will also see the label IMPORTANT at the beginning of each lab section. As some instructions need to be executed on your host computer while others need to be executed in a remote desktop connection (RDP), this IMPORTANT label states where you should execute the lab section. See the example below:

| IMPORTANT |
|---|
| Execute these steps on your host computer |

Data Source References

New York City data used in this lab was obtained from the New York City Open Data website: https://opendata.cityofnewyork.us/. The following datasets were used:

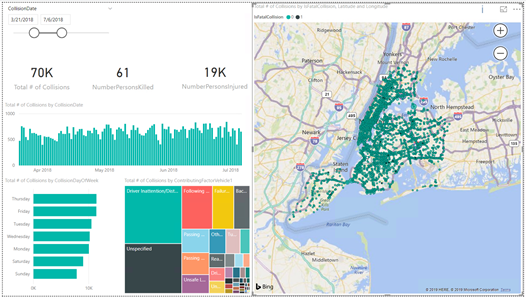

- NYPD Motor Vehicle Collisions: https://data.cityofnewyork.us/Public-Safety/NYPD-Motor-Vehicle-Collisions/h9gi-nx95

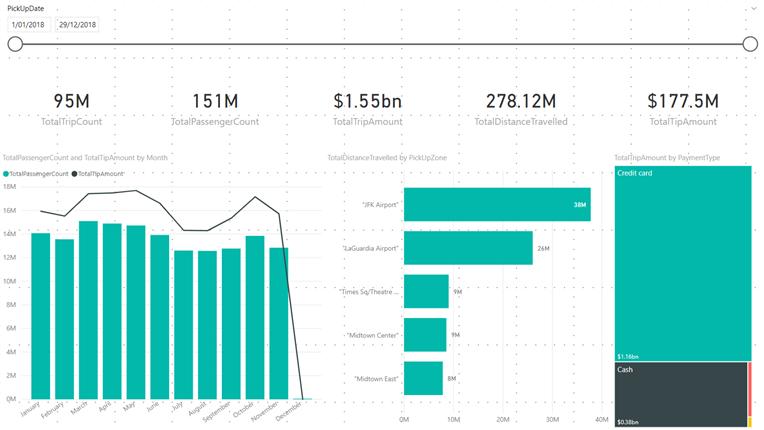

- TLC Yellow Taxi Trip Data: https://www1.nyc.gov/site/tlc/about/tlc-trip-record-data.page

Lab Prerequisites and Deployment

The following prerequisites must be completed before you start these labs:

- You must be connected to the internet;

- You must have an Azure account with administrator- or contributor-level access to your subscription. If you don’t have an account, you can sign up for free following the instructions here: https://azure.microsoft.com/en-au/free/

- Lab 5 requires you to have a Twitter account. If you don’t have an account you can sign up for free following the instructions here: https://twitter.com/signup.

- Lab 5 requires you to have a Power BI Pro account. If you don’t have an account you can sign up for a 60-day trial for free here: https://powerbi.microsoft.com/en-us/power-bi-pro/

- The approximate cost to run the resources provisioned for the estimated duration of this workshop (2 days) is around USD 150.00. You can minimise the costs by turning off MDWSQLServer and MDWDataGateway VMs and also the MDWASQLDW Azure SQL Data Warehouse after Lab 2 as they are no longer required for the remaining labs.

Lab Guide

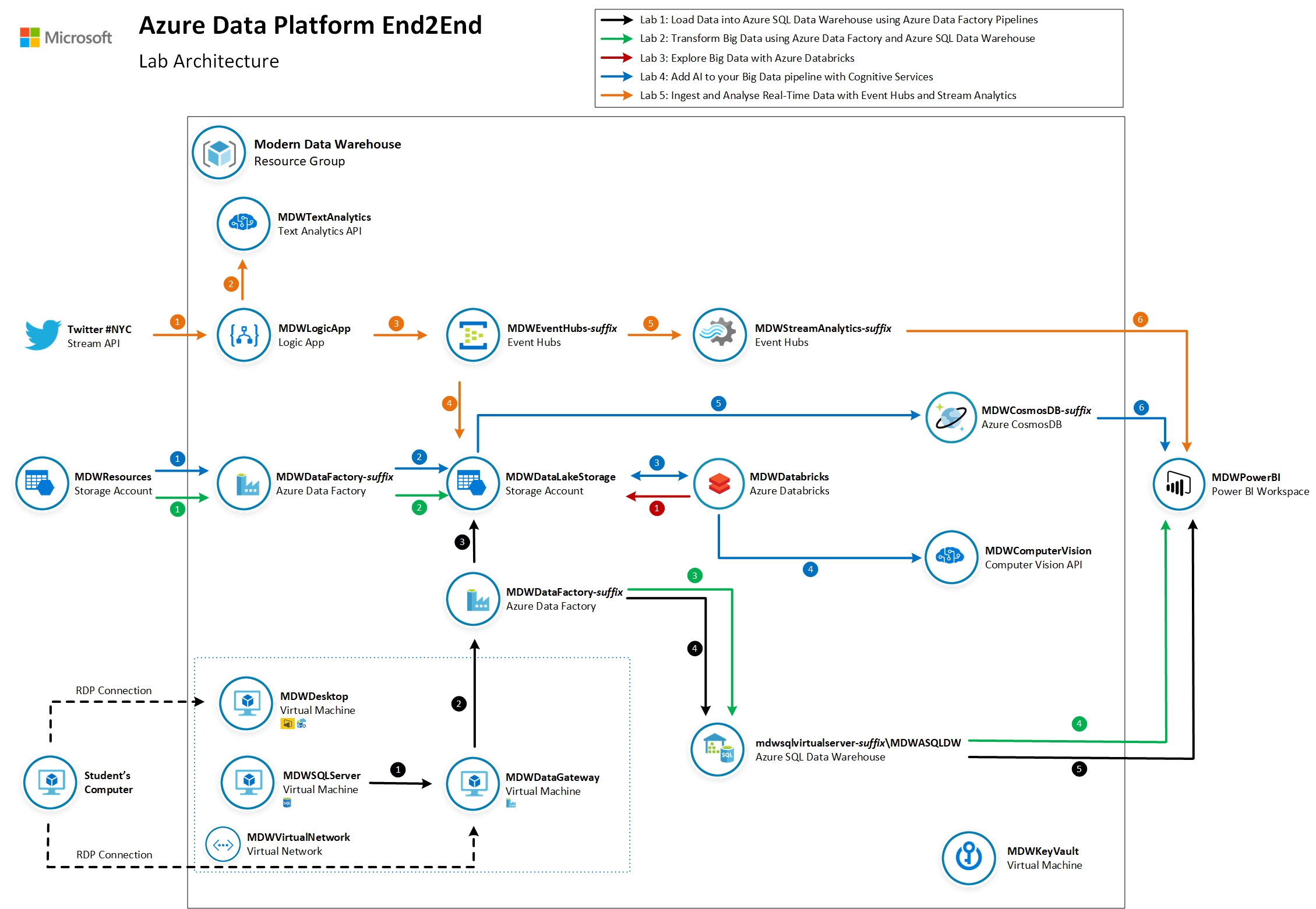

Throughout a series of 5 labs you will progressively implement the modern data platform architecture referenced below:

Lab 0: Deploy Azure Data Platform End2End to your subscription

In this section you will automatically provision all Azure resources required to complete labs 1 through to 5. We will use a pre-defined ARM template with the definition of all Azure services used to ingest, store, process and visualise data.

The estimated time to complete this lab is: 30 minutes.

| IMPORTANT |
|---|
| In order to avoid potential delays caused by issues found during the ARM template deployment it is recommended you execute Lab 0 prior to Day 1. |

Lab 1: Load Data into Azure SQL Data Warehouse using Azure Data Factory Pipelines

In this lab you will configure the Azure environment to allow relational data to be transferred from a SQL Server 2017 database to an Azure SQL Data Warehouse database using Azure Data Factory. The dataset you will use contains data about motor vehicle collisions that happened in New York City from 2012 to 2019. You will use Power BI to visualise collision data loaded from Azure SQL Data Warehouse.

The estimated time to complete this lab is: 75 minutes.

Lab 2: Transform Big Data using Azure Data Factory and Azure SQL Data Warehouse

In this lab you will use Azure Data Factory to download large data files into your data lake and use an Azure SQL Data Warehouse stored procedure to generate a summary dataset and store it in the final table. The dataset you will use contains detailed New York City Yellow Taxi rides for 2018. You will generate a daily aggregated summary of all rides and save the result in your data warehouse. You will then use Power BI to visualise summarised data.

The estimated time to complete this lab is: 60 minutes.
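The daily aggregation described above can be illustrated with a minimal Python sketch. This is only an illustration of the summarisation logic, not the stored procedure used in the lab, which runs inside Azure SQL Data Warehouse. The field names (`pickup_datetime`, `passenger_count`, `fare_amount`), the `summarise_daily` function and the sample rows are all assumptions made up for this example.

```python
from collections import defaultdict
from datetime import datetime


def summarise_daily(rides):
    """Group ride records by pickup date and total them per day.

    Each ride is a dict with hypothetical keys: pickup_datetime (ISO 8601
    string), passenger_count (int) and fare_amount (float).
    """
    totals = defaultdict(lambda: {"rides": 0, "passengers": 0, "fare": 0.0})
    for ride in rides:
        # Truncate the pickup timestamp to its calendar date.
        day = datetime.fromisoformat(ride["pickup_datetime"]).date().isoformat()
        row = totals[day]
        row["rides"] += 1
        row["passengers"] += ride["passenger_count"]
        row["fare"] += ride["fare_amount"]
    # Return the summary rows ordered by date.
    return {day: totals[day] for day in sorted(totals)}


# Made-up sample rows, not taken from the real taxi dataset.
sample_rides = [
    {"pickup_datetime": "2018-01-01T00:21:05", "passenger_count": 1, "fare_amount": 4.5},
    {"pickup_datetime": "2018-01-01T13:02:40", "passenger_count": 2, "fare_amount": 14.0},
    {"pickup_datetime": "2018-01-02T08:15:00", "passenger_count": 1, "fare_amount": 9.0},
]

if __name__ == "__main__":
    for day, row in summarise_daily(sample_rides).items():
        print(day, row)
```

In the lab itself the same kind of grouping is done in the data warehouse, so that the large source files never need to be loaded into a single machine's memory.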

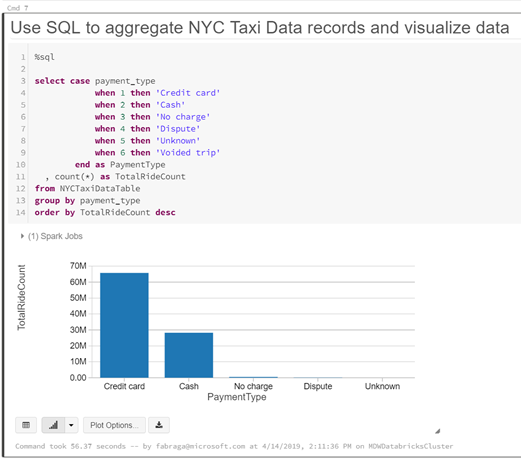

Lab 3: Explore Big Data using Azure Databricks

In this lab you will use Azure Databricks to explore the New York Taxi data files you saved in your data lake in Lab 2. Using a Databricks notebook you will connect to the data lake and query taxi ride details.

The estimated time to complete this lab is: 45 minutes.

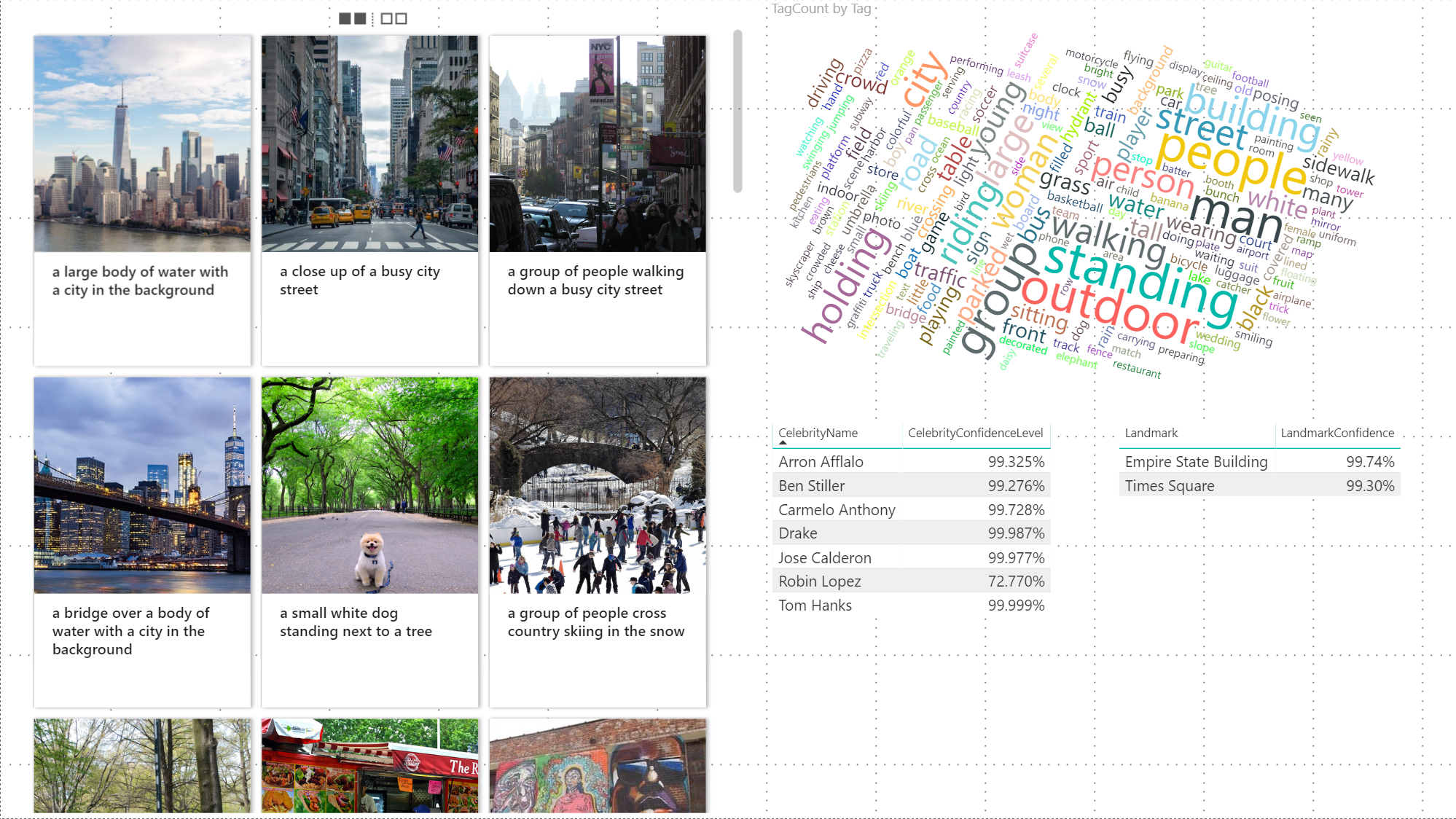

Lab 4: Add AI to your Big Data Pipeline with Cognitive Services

In this lab you will use Azure Data Factory to download New York City images to your data lake. Then, as part of the same pipeline, you are going to use an Azure Databricks notebook to invoke the Computer Vision Cognitive Service to generate metadata documents and save them back in your data lake. The Azure Data Factory pipeline then finishes by saving all metadata information in a Cosmos DB collection. You will use Power BI to visualise NYC images and their AI-generated metadata.

The estimated time to complete this lab is: 75 minutes.

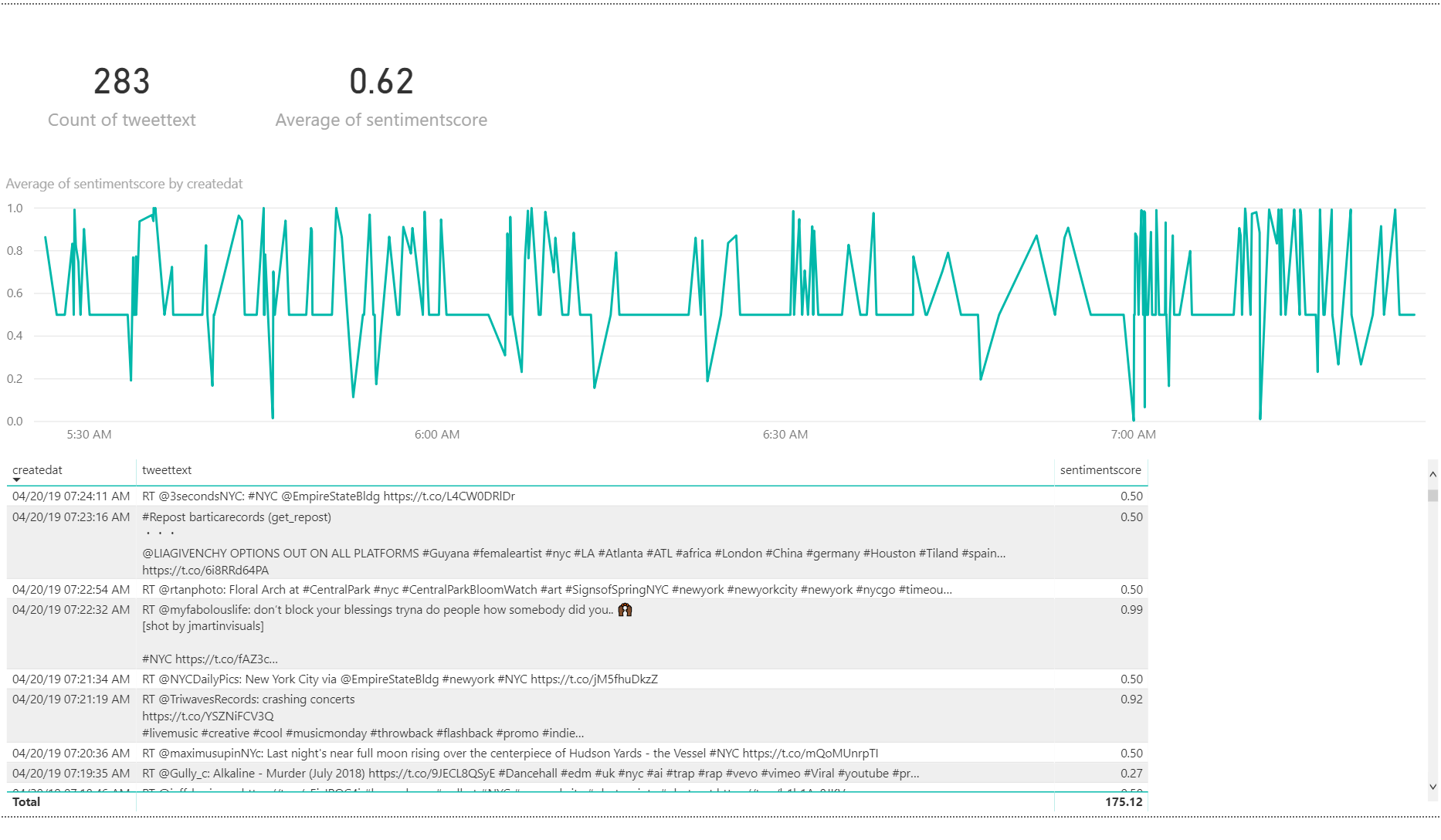

Lab 5: Ingest and Analyse real-time data with Event Hubs and Stream Analytics

In this lab you will use an Azure Logic App to connect to Twitter and generate a stream of messages using the hashtag #NYC. The Logic App will invoke the Azure Text Analytics Cognitive Service to score Tweet sentiment and send the messages to Event Hubs. You will use Stream Analytics to generate the average Tweet sentiment in the last 60 seconds and send the results to a real-time dataset in Power BI.

The estimated time to complete this lab is: 75 minutes.
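The windowed average mentioned above can be sketched in plain Python. This is a simplified illustration only: it assumes fixed, non-overlapping (tumbling) 60-second windows, which may differ from the window type configured in the lab, and it is not the Stream Analytics query itself. The event format, a `(timestamp_in_seconds, sentiment_score)` tuple, and the function name are hypothetical.

```python
from collections import defaultdict

WINDOW_SECONDS = 60  # window length taken from the lab description


def average_sentiment_per_window(events, window_seconds=WINDOW_SECONDS):
    """Average sentiment scores per fixed, non-overlapping time window.

    events is an iterable of (timestamp_in_seconds, sentiment_score) tuples.
    Returns a dict mapping each window's start time to its average score.
    """
    sums = defaultdict(lambda: [0.0, 0])  # window start -> [score total, count]
    for timestamp, score in events:
        # Assign the event to the window that contains its timestamp.
        window_start = int(timestamp // window_seconds) * window_seconds
        sums[window_start][0] += score
        sums[window_start][1] += 1
    return {start: total / count for start, (total, count) in sorted(sums.items())}


# Made-up sample events: (seconds since stream start, sentiment score).
sample_events = [(0, 0.5), (30, 1.0), (61, 0.25), (119, 0.75), (120, 0.0)]

if __name__ == "__main__":
    for start, average in average_sentiment_per_window(sample_events).items():
        print(f"window starting at {start}s: average sentiment {average}")
```

A streaming engine computes the same kind of result incrementally as events arrive, rather than over a complete list as this sketch does.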

Workshop Proposed Agenda

The workshop content will be delivered over the course of two days with the following agenda:

Day 1

| Activity | Duration |
|---|---|
| Workshop Overview | 45 minutes |
| Lab 0: Resource Deployment * | 30 minutes |
| Modern Data Platform Concepts: Part I | 90 minutes |
| Modern Data Warehousing | |
| Lab 1: Load Data into Azure SQL Data Warehouse using Azure Data Factory Pipelines | 75 minutes |
| Modern Data Platform Concepts: Part II | 90 minutes |
| Lab 2: Transform Big Data using Azure Data Factory and Azure SQL Data Warehouse | 60 minutes |

* Lab 0: Resource Deployment is preferably done before Day 1.

Day 2

| Activity | Duration |
|---|---|
| Advanced Analytics | |
| Modern Data Platform Concepts: Part III | 60 minutes |
| Lab 3: Explore Big Data using Azure Databricks | 45 minutes |
| Modern Data Platform Concepts: Part IV | 60 minutes |
| Lab 4: Add AI to your Big Data Pipeline with Cognitive Services | 75 minutes |
| Real-time Analytics | |
| Modern Data Platform Concepts: Part V | 60 minutes |
| Lab 5: Ingest and Analyse real-time data with Event Hubs and Stream Analytics | 75 minutes |