BAML is a domain-specific language for writing and testing LLM functions.

An LLM function is a prompt template with some defined input variables, and a specific output type like a class, enum, union, optional string, etc.

BAML LLM functions plug into Python, TypeScript, and other languages, which makes it easy to focus more on engineering and less on prompting.

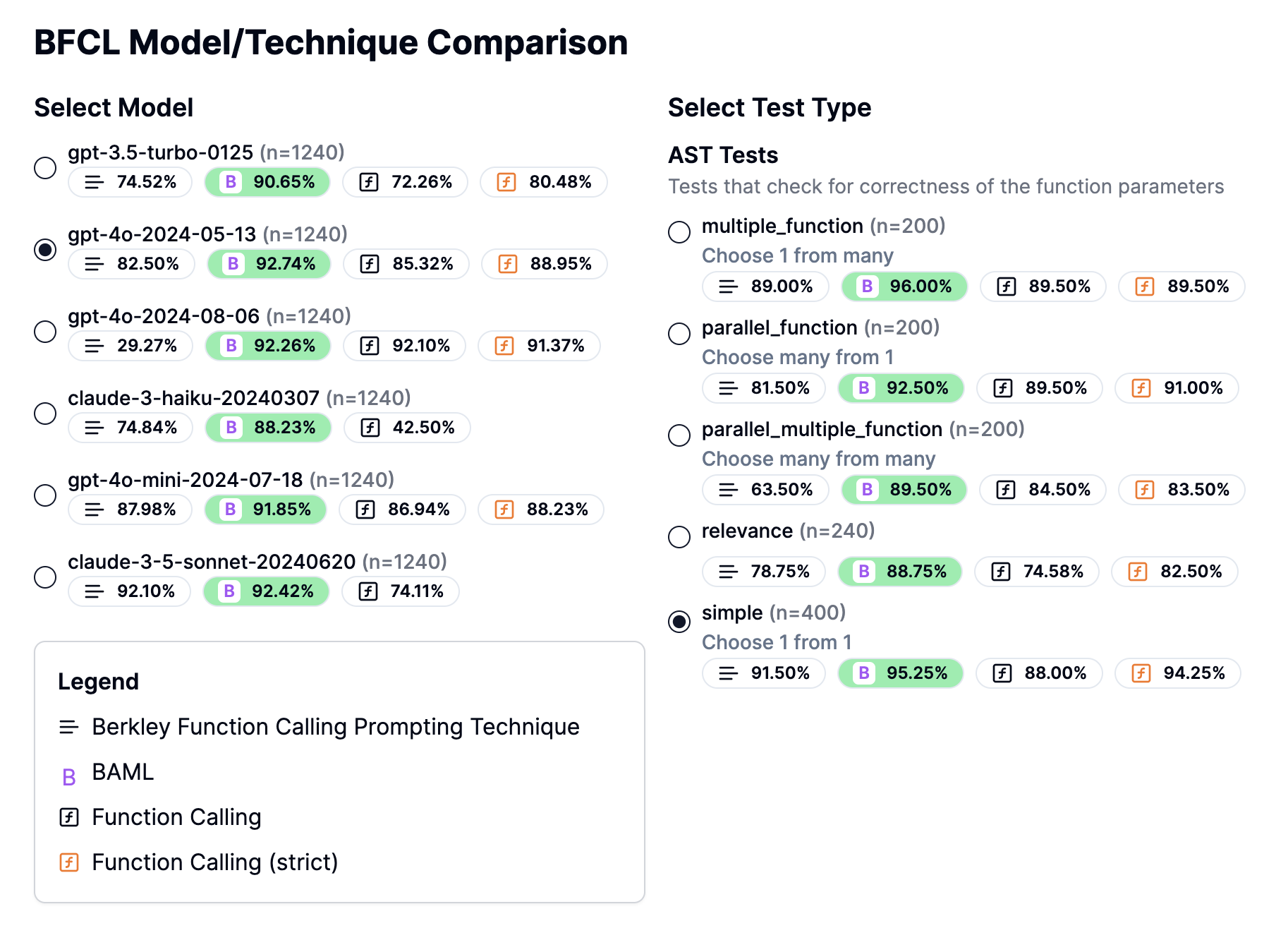

BAML outperforms all other current methods of getting structured data, even when used with GPT-3.5. It also outperforms models fine-tuned for tool use. See the Berkeley Function Calling Benchmark results, and read more about our Schema-Aligned Parser.

Try it out in the playground -- PromptFiddle.com

Share your creations and ask questions in our Discord.

- Python and Typescript support: Plug-and-play BAML with other languages

- Type validation: more resilient to common LLM mistakes than Pydantic or Zod

- Wide model support: Ollama, OpenAI, Anthropic. Tested on small models like Llama 2

- Streaming: Stream structured partial outputs.

- Realtime Prompt Previews: See the full prompt always, even if it has loops and conditionals

- Testing support: Test functions in the playground with 1 click.

- Resilience and fallback features: Add retries and redundancy to your LLM calls

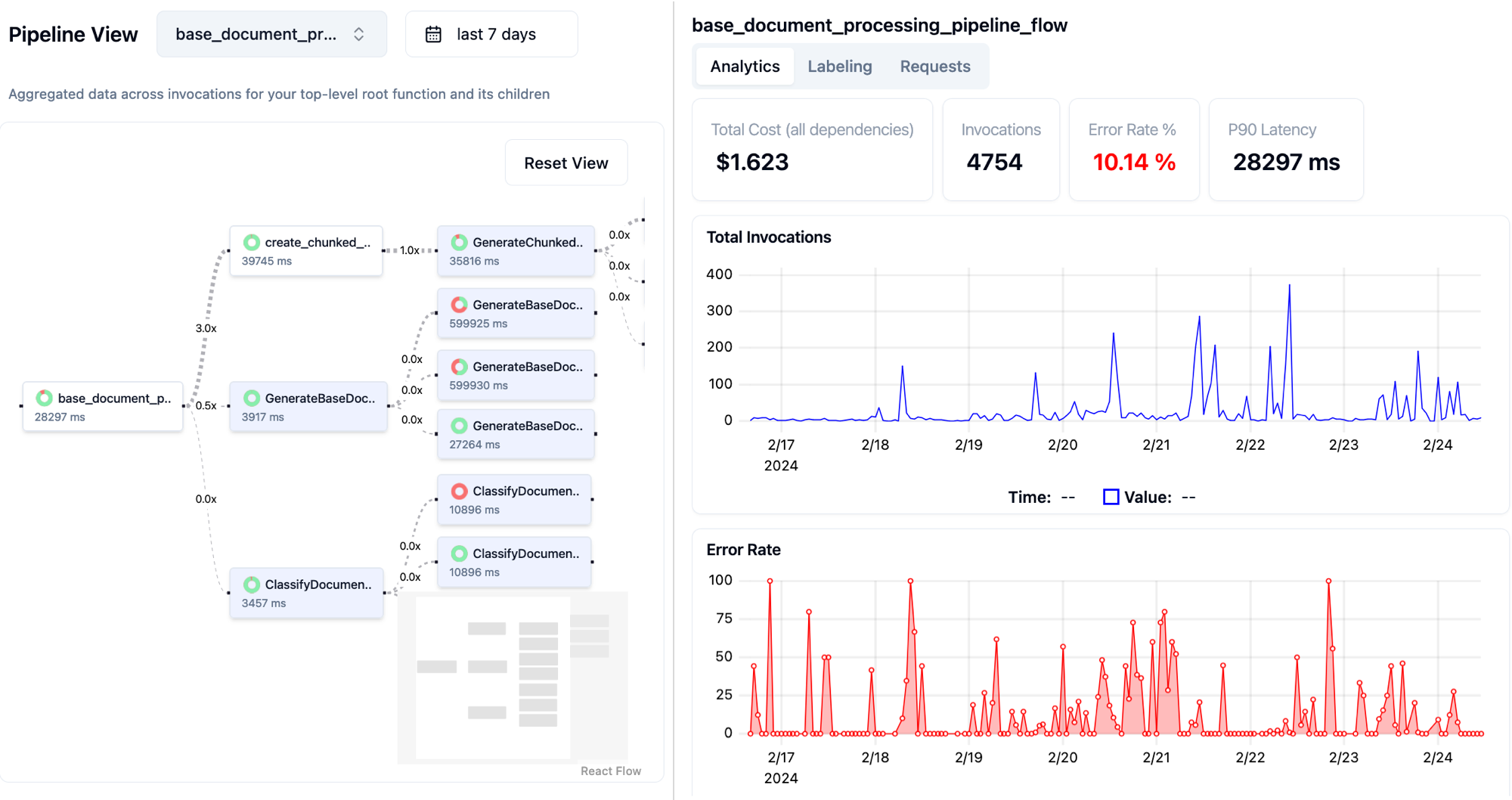

- Observability Platform: Use Boundary Studio to visualize your functions and replay production requests with 1 click.

- Discord Office Hours - Come ask us anything! We hold office hours most days (9am - 12pm PST).

- Documentation

- Boundary Studio - Observability of BAML functions

Here is how you extract a "Resume" from a chunk of free-form text. Run this prompt in PromptFiddle.

Note: GitHub does not support BAML syntax highlighting yet. We apologize, and we're working on it!

```baml
// Declare some data models for my function, with descriptions
class Resume {
  name string
  education Education[] @description("Extract in the same order listed")
  skills string[] @description("Only include programming languages")
}

class Education {
  school string
  degree string
  year int
}

function ExtractResume(resume_text: string) -> Resume {
  // LLM client with params you want (not pictured)
  client GPT4Turbo

  // BAML prompts use Jinja syntax
  prompt #"
    Parse the following resume and return a structured representation of the data in the schema below.

    Resume:
    ---
    {{ resume_text }}
    ---

    {# special Jinja macro to print the output instructions. #}
    {{ ctx.output_format }}

    JSON:
  "#
}
```

Once you're done iterating on it using the interactive BAML VSCode Playground, you can convert it to Python or TS using the BAML CLI.

```python
import asyncio

# baml_client is autogenerated
from baml_client import baml as b
# BAML types get converted to Pydantic models
from baml_client.baml_types import Resume


async def main():
    resume_text = """Jason Doe
Python, Rust
University of California, Berkeley, B.S.
in Computer Science, 2020
Also an expert in Tableau, SQL, and C++
"""

    # This function comes from the autogenerated "baml_client".
    # It calls the LLM you specified and handles the parsing.
    resume = await b.ExtractResume(resume_text)

    # Fully type-checked and validated!
    assert isinstance(resume, Resume)


if __name__ == "__main__":
    asyncio.run(main())
```

```typescript
// baml_client is autogenerated
// (assumed here to expose a named `baml` export, mirroring the Python import)
import { baml as b } from "@/baml_client";
// BAML also auto generates types for all your data models
import { Resume } from "@/baml_client/types";

// loadResume is a placeholder for your own loader; it is not part of BAML
async function getResume(resumeUrl: string): Promise<Resume> {
  const resumeText = await loadResume(resumeUrl);
  // Call the BAML function, which calls the LLM client you specified
  // and handles all the parsing.
  return b.ExtractResume({ resumeText });
}
```

With BAML you have:

- Better output parsing than Pydantic or Zod -- more on this later

- Code that looks as clean as ever

- LLM calls that feel like normal function calls, with actual type guarantees

| Capabilities | |
|---|---|
| VSCode Extension (install) | Syntax highlighting for BAML files; real-time prompt preview; testing UI |
| Boundary Studio (open; not open source) | Type-safe observability; labeling |

Python: pip install baml-py

Typescript: npm install @boundaryml/baml

To get the VSCode extension, search for "BAML" in the VSCode extension marketplace, or click here.

If you are using Python, enable type checking in VSCode’s settings.json:

"python.analysis.typeCheckingMode": "basic"

Typescript: npx baml-cli init

Python: baml-cli init

Showcase of applications using BAML

- Zenfetch - ChatGPT for your bookmarks

- Vetrec - AI-powered Clinical Notes for Veterinarians

- MagnaPlay - Production-quality machine translation for games

- Aer Compliance - AI-powered compliance tasks

- Haven - Automate Tenant communications with AI

- Muckrock - FOIA request tracking and filing

- and more! Let us know if you want to be showcased or want to work with us 1-on-1 to solve your use case.

Analyze, label, and trace each request in Boundary Studio.

Existing frameworks can work pretty well (especially for very simple prompts), but they run into limitations when working with structured data and complex logic.

Iteration speed is slow

There are two reasons:

- You can't visualize your prompt in realtime so you need to run it over and over to figure out what string the LLM is actually ingesting. This gets much worse if you build your prompt using conditionals and loops, or have structured outputs. BAML's prompt-preview feature in the playground works even with complex logic.

- Poor testing support. Testing prompts is 80% of the battle. Developers have to copy-paste prompts from a playground UI into the codebase, and if you have structured outputs you also need to generate the Pydantic JSON schema yourself and copy-paste that around too.
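
To make the first point concrete, here is a minimal Python sketch of a prompt built with a conditional and a loop. It is not BAML code; `render_prompt` and its parameters are hypothetical names chosen for illustration. Without a live preview, the only way to see the exact string the LLM receives is to run the builder and print the result.

```python
def render_prompt(question: str, examples: list[tuple[str, str]], strict: bool) -> str:
    """Build a prompt with a conditional and a loop, and return the exact string."""
    lines = ["Answer the question below."]
    if strict:  # conditional section
        lines.append("Reply with JSON only.")
    for i, (q, a) in enumerate(examples, start=1):  # loop section
        lines.append(f"Example {i}: {q} -> {a}")
    lines.append(f"Question: {question}")
    return "\n".join(lines)


if __name__ == "__main__":
    # Printing the rendered string is the manual stand-in for a live preview.
    print(render_prompt("Is the sky blue?", [("Is grass green?", "yes")], strict=True))
```

Every change to the examples or the flag changes the final string, so each edit means another run just to inspect the prompt.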

Pydantic and Zod weren't made with LLMs in mind

LLMs don't always output correct JSON.

Sometimes you get a reply like the one below, where the JSON blob is sandwiched between other text:

```
Based on my observations X Y Z...., it seems the answer is:
{
"sentiment": Happy,
}
Hope this is what you wanted!
```

This isn't valid JSON: Happy is missing its quotes, there is a trailing comma, and the surrounding text has to be stripped out yourself (for example with a regex) before you can parse the result with Pydantic or Zod.
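
As a rough illustration of that manual cleanup, the sketch below uses only the Python standard library. It is not how BAML's parser works; `extract_json_object` is a hypothetical helper, and its regexes only cover the slips shown in the reply above (surrounding text, one unquoted word, a trailing comma). They can also corrupt string values that happen to contain similar patterns.

```python
import json
import re

REPLY = """Based on my observations X Y Z...., it seems the answer is:
{
"sentiment": Happy,
}
Hope this is what you wanted!"""


def extract_json_object(text: str) -> dict:
    """Pull the outermost {...} span out of the text and repair two common slips."""
    match = re.search(r"\{.*\}", text, flags=re.DOTALL)
    if match is None:
        raise ValueError("no JSON object found")
    blob = match.group(0)
    # Quote bare words used as values, but leave true/false/null alone.
    blob = re.sub(
        r':\s*(?!(?:true|false|null)\b)([A-Za-z_]\w*)\s*(?=[,}])',
        r': "\1"',
        blob,
    )
    # Drop trailing commas before a closing brace or bracket.
    blob = re.sub(r",\s*([}\]])", r"\1", blob)
    return json.loads(blob)


if __name__ == "__main__":
    print(extract_json_object(REPLY))
```

Each new failure mode needs another hand-written rule, which is the maintenance burden the text is pointing at.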

BAML handles this and many more cases, such as identifying Enums in LLM string responses. See flexible parsing.
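
To show what identifying an enum in a free-form string involves, here is a naive Python sketch. It is an assumption-laden illustration, not BAML's parsing logic: `find_enum` is a hypothetical helper that does a case-insensitive whole-word search and refuses to guess when zero or several members match.

```python
import re
from enum import Enum


class Sentiment(Enum):
    HAPPY = "happy"
    SAD = "sad"


def find_enum(text: str, enum_cls: type[Enum]) -> Enum:
    """Return the single enum member whose value appears as a whole word in text."""
    hits = [
        member
        for member in enum_cls
        if re.search(rf"\b{re.escape(member.value)}\b", text, flags=re.IGNORECASE)
    ]
    if len(hits) != 1:
        raise ValueError(f"expected exactly one match, found {len(hits)}")
    return hits[0]


if __name__ == "__main__":
    print(find_enum("I'd say the user sounds Happy overall.", Sentiment))
```

The word-boundary match keeps "unhappy" from counting as "happy", but anything subtler (synonyms, negation) is out of reach for a sketch like this.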

Aliasing issues

Prompt engineering requires you to think carefully about the name of each key in the schema. Rather than changing your code every time you want to try a new name, you can alias fields to a different name and convert them back into the original field name during parsing.
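
The idea can be sketched in a few lines of plain Python. This is only an illustration of the concept, not BAML's mechanism; `ALIASES` and `unalias` are hypothetical names. The LLM sees and returns the aliased key, and the parsing step maps it back to the field name the rest of the code uses.

```python
# Hypothetical mapping: prompt-facing alias -> field name used in code.
ALIASES = {"firstName": "first_name"}


def unalias(payload: dict, aliases: dict[str, str]) -> dict:
    """Rename aliased keys back to their code-side names; leave other keys untouched."""
    return {aliases.get(key, key): value for key, value in payload.items()}


if __name__ == "__main__":
    llm_output = {"firstName": "Ada", "age": 36}
    print(unalias(llm_output, ALIASES))
```

Trying a different prompt-facing name then means editing one entry in the mapping rather than renaming the field across the codebase.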

Here's how BAML differs from these frameworks:

Aliasing object fields in Zod

```typescript
import { z } from "zod";

const UserSchema = z
  .object({
    first_name: z.string(),
  })
  .transform((user) => ({
    firstName: user.first_name,
  }));
```

Aliasing object fields in BAML

```baml
class User {
  first_name string @alias("firstName")
}
```

Aliasing enum values in Zod/Pydantic

Zod: not possible

Pydantic:

```python
from enum import Enum


class Sentiment(Enum):
    HAPPY = "ecstatic"
    SAD = "sad"

    def __init__(self, alias):
        # Enum passes each member's value to __init__; store it as the alias
        self._alias = alias

    @property
    def alias(self):
        return self._alias

    @classmethod
    def from_string(cls, alias: str) -> "Sentiment":
        # Look up the member whose alias matches the given string
        for c in cls:
            if c.alias == alias:
                return c
        raise ValueError(f"Invalid alias: {alias}")

...
# more code here to actually parse the aliases
```

Aliasing enum values in BAML

```baml
enum Sentiment {
  HAPPY @alias("ecstatic")
  SAD @alias("sad")
}
```

Finally, BAML is more of an ecosystem designed to bring you the best developer experience for doing any kind of LLM function-calling, which is why we've built tools like the playground and Boundary Studio -- our observability platform.

We basically wanted Jinja, but with types and function declarations, so we decided to make it happen. Earlier we tried making a YAML-based SDK, and even a Python SDK, but they were not powerful enough.

No, BAML does not use an LLM to generate code. The BAML dependency transpiles the code using Rust 🦀. It takes just a few milliseconds!

BAML stands for "Basically, A Made-up Language".

BAML files are only used to generate Python or Typescript code. Just commit the generated code as you would any other Python code, and you're good to go.

Your BAML-generated code never talks to our servers. We don’t proxy LLM APIs -- you call them directly from your machine. We only publish traces to our servers if you enable Boundary Studio explicitly.

BAML and the VSCode extension will always be 100% free and open-source.

Our paid capabilities only start if you use Boundary Studio, which focuses on Monitoring, Collecting Feedback, and Improving your AI pipelines. Contact us for pricing details at contact@boundaryml.com.

Please do not file GitHub issues or post on our public forum for security vulnerabilities, as they are public!

Boundary takes security issues very seriously. If you have any concerns about BAML or believe you have uncovered a vulnerability, please get in touch via the e-mail address contact@boundaryml.com. In the message, try to provide a description of the issue and ideally a way of reproducing it. The security team will get back to you as soon as possible.

Note that this security address should be used only for undisclosed vulnerabilities. Please report any security problems to us before disclosing them publicly.

Made with ❤️ by Boundary

HQ in Seattle, WA

P.S. We're hiring software engineers. Email us or reach out on Discord!