Official PyTorch code for extracting features and training downstream models with emotion2vec: Self-Supervised Pre-Training for Speech Emotion Representation.

- [Jun. 2024] 🔧 We fixed a bug in emotion2vec+. Please pull the latest code.

- [May 2024] 🔥 emotion2vec+, a speech emotion recognition foundation model covering 9 emotion classes, has been released on ModelScope and Hugging Face. Check out the emotion2vec+ series (seed, base, large) for high-performance SER. We recommend this release over the Jan. 2024 release.

- [Jan. 2024] A 9-class emotion recognition model, obtained by iterative fine-tuning from emotion2vec, has been released on ModelScope and FunASR.

- [Jan. 2024] emotion2vec has been integrated into ModelScope and FunASR.

- [Dec. 2023] We released the paper and created a WeChat group for emotion2vec.

- [Nov. 2023] We released the code, checkpoints, and extracted features for emotion2vec.

GitHub Repo: emotion2vec

| Model | ⭐ModelScope | 🤗Hugging Face | Fine-tuning Data (Hours) |
|---|---|---|---|
| emotion2vec | Link | Link | / |
| emotion2vec+ seed | Link | Link | 201 |
| emotion2vec+ base | Link | Link | 4788 |
| emotion2vec+ large | Link | Link | 42526 |

- emotion2vec+: speech emotion recognition foundation model

- emotion2vec: universal speech emotion representation model

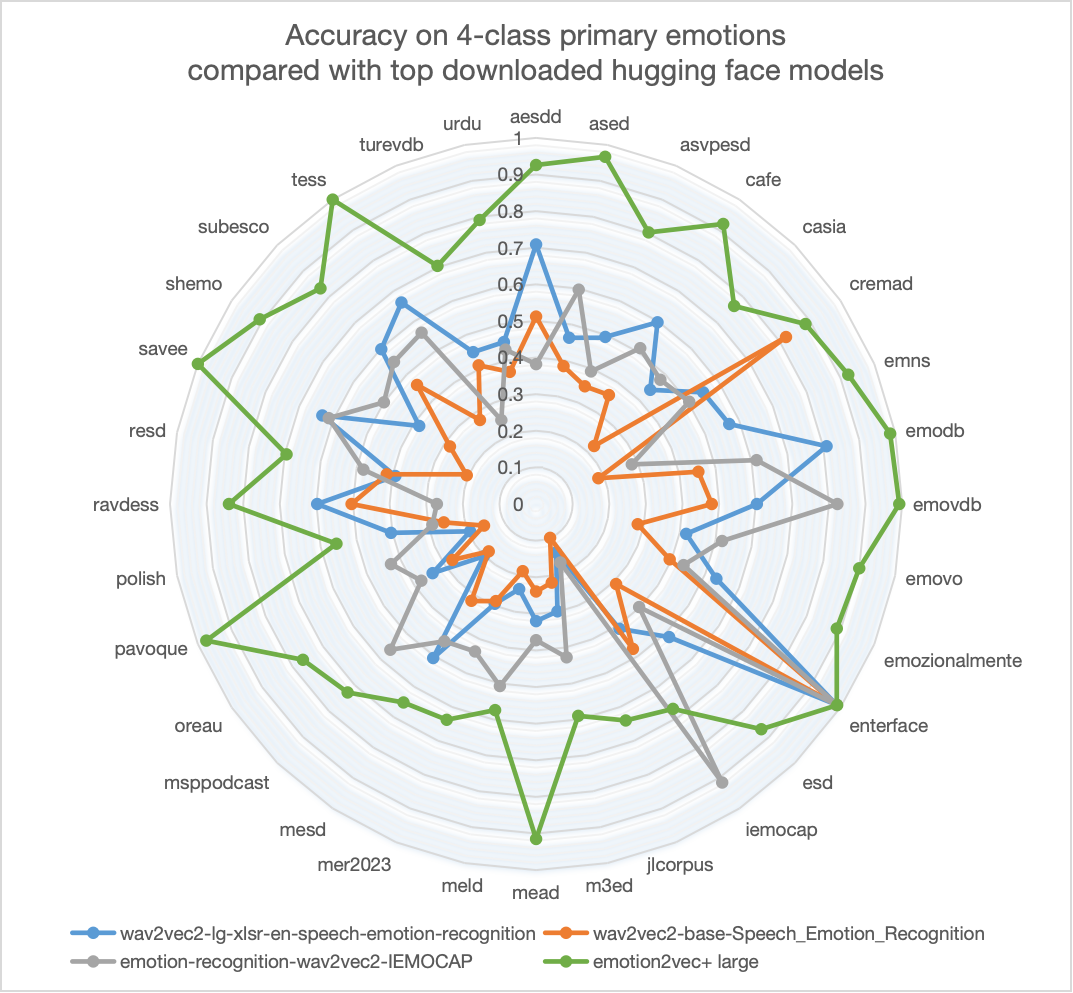

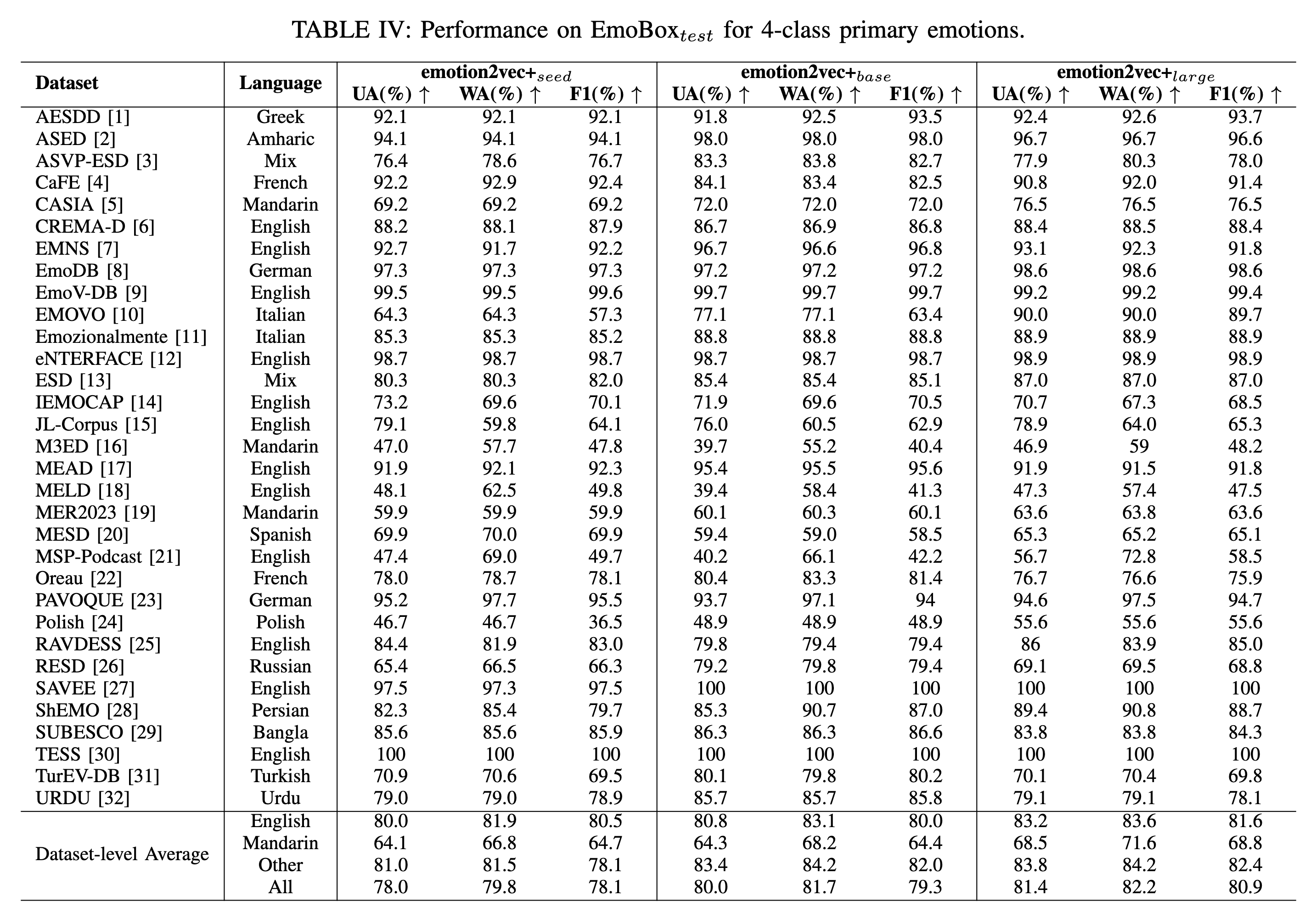

emotion2vec+ is a series of foundation models for speech emotion recognition (SER). We aim to train a "Whisper" for speech emotion recognition: using data-driven methods to overcome the effects of language and recording environment, and thereby achieve universal, robust emotion recognition. emotion2vec+ significantly outperforms other frequently downloaded open-source models on Hugging Face.

We offer 3 versions of emotion2vec+, each building on the data of its predecessor. If you need a model focused on speech emotion representation, refer to emotion2vec: universal speech emotion representation model.

- emotion2vec+ seed: Fine-tuned with academic speech emotion data from EmoBox

- emotion2vec+ base: Fine-tuned with filtered large-scale pseudo-labeled data to obtain the base-size model (~90M parameters)

- emotion2vec+ large: Fine-tuned with filtered large-scale pseudo-labeled data to obtain the large-size model (~300M parameters)

The iteration process is illustrated below, culminating in training the emotion2vec+ large model on 40k of the 160k hours of speech emotion data. Details of the data engineering will be announced later.

Performance on EmoBox for 4-class primary emotions (without fine-tuning). Details of model performance will be announced later.

- Install modelscope and funasr:

```shell
pip install -U funasr modelscope
```

- Run the code:

```python
'''
Using the fine-tuned emotion recognition model

rec_result contains {'feats', 'labels', 'scores'}
    extract_embedding=False: 9-class emotions with scores
    extract_embedding=True: 9-class emotions with scores, along with features

9-class emotions:
iic/emotion2vec_plus_seed, iic/emotion2vec_plus_base, iic/emotion2vec_plus_large (May 2024 release)
iic/emotion2vec_base_finetuned (Jan. 2024 release)
    0: angry
    1: disgusted
    2: fearful
    3: happy
    4: neutral
    5: other
    6: sad
    7: surprised
    8: unknown
'''

from modelscope.pipelines import pipeline
from modelscope.utils.constant import Tasks

inference_pipeline = pipeline(
    task=Tasks.emotion_recognition,
    model="iic/emotion2vec_plus_large",  # Alternatives: iic/emotion2vec_plus_seed, iic/emotion2vec_plus_base, iic/emotion2vec_base_finetuned
    model_revision="master")

rec_result = inference_pipeline('https://isv-data.oss-cn-hangzhou.aliyuncs.com/ics/MaaS/ASR/test_audio/asr_example_zh.wav', output_dir="./outputs", granularity="utterance", extract_embedding=False)
print(rec_result)
```

The model will be downloaded automatically.
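The docstring above only summarizes the result fields. Below is a minimal sketch for picking the top-scoring emotion from one result entry. It assumes each entry is a dict whose `labels` and `scores` are parallel lists; print `rec_result` to confirm the actual structure. The helper name `top_emotion` and the sample scores are hypothetical.

```python
from typing import Dict, List, Tuple

# Class index -> emotion name, copied from the 9-class list in the docstring above.
EMOTIONS: List[str] = ["angry", "disgusted", "fearful", "happy", "neutral",
                       "other", "sad", "surprised", "unknown"]


def top_emotion(result: Dict) -> Tuple[str, float]:
    """Return (label, score) of the highest-scoring class in one result entry.

    Assumes parallel 'labels' and 'scores' lists, as suggested by
    "rec_result contains {'feats', 'labels', 'scores'}"; verify against your output.
    """
    labels, scores = result["labels"], result["scores"]
    if not scores or len(labels) != len(scores):
        raise ValueError("expected non-empty, equal-length 'labels' and 'scores'")
    best = max(range(len(scores)), key=lambda i: scores[i])
    return labels[best], float(scores[best])


if __name__ == "__main__":
    # Hypothetical entry with made-up scores; real labels may carry extra text.
    entry = {"labels": EMOTIONS,
             "scores": [0.01, 0.0, 0.02, 0.9, 0.05, 0.0, 0.01, 0.01, 0.0]}
    print(top_emotion(entry))  # ('happy', 0.9)
```

If `rec_result` turns out to be a list of entries, something like `top_emotion(rec_result[0])` would apply the helper to the first utterance.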

- Install funasr:

```shell
pip install -U funasr
```

- Run the code:

```python
'''
Using the fine-tuned emotion recognition model

rec_result contains {'feats', 'labels', 'scores'}
    extract_embedding=False: 9-class emotions with scores
    extract_embedding=True: 9-class emotions with scores, along with features

9-class emotions:
iic/emotion2vec_plus_seed, iic/emotion2vec_plus_base, iic/emotion2vec_plus_large (May 2024 release)
iic/emotion2vec_base_finetuned (Jan. 2024 release)
    0: angry
    1: disgusted
    2: fearful
    3: happy
    4: neutral
    5: other
    6: sad
    7: surprised
    8: unknown
'''

from funasr import AutoModel

model = AutoModel(model="iic/emotion2vec_base_finetuned")  # Alternatives: iic/emotion2vec_plus_seed, iic/emotion2vec_plus_base, iic/emotion2vec_plus_large

wav_file = f"{model.model_path}/example/test.wav"
rec_result = model.generate(wav_file, output_dir="./outputs", granularity="utterance", extract_embedding=False)
print(rec_result)
```

The model will be downloaded automatically.

FunASR supports file-list input in Kaldi-style wav.scp format:

```
wav_name1 wav_path1.wav
wav_name2 wav_path2.wav
...
```

Refer to FunASR for more details.
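A minimal sketch for generating such a wav.scp file from a directory of wav files. The function name `write_wav_scp`, the `./wavs` directory, and the convention of using the file stem as the utterance ID are assumptions for illustration, not part of FunASR.

```python
from pathlib import Path
from typing import Iterable, Union

PathLike = Union[str, Path]


def write_wav_scp(wav_paths: Iterable[PathLike], scp_path: PathLike) -> int:
    """Write a Kaldi-style wav.scp with one "<utt_id> <wav_path>" pair per line.

    Hypothetical convention: the utterance ID is the file stem. IDs must be unique,
    and paths must not contain whitespace because the fields are space-separated.
    Returns the number of entries written.
    """
    entries = []
    seen = set()
    for wav in sorted(Path(p) for p in wav_paths):
        utt_id = wav.stem
        if utt_id in seen:
            raise ValueError(f"duplicate utterance id: {utt_id}")
        if any(ch.isspace() for ch in str(wav)):
            raise ValueError(f"path contains whitespace: {wav}")
        seen.add(utt_id)
        entries.append(f"{utt_id} {wav}\n")
    Path(scp_path).write_text("".join(entries), encoding="utf-8")
    return len(entries)


if __name__ == "__main__":
    wav_dir = Path("./wavs")  # hypothetical directory of wav files
    if wav_dir.is_dir():
        count = write_wav_scp(wav_dir.glob("*.wav"), "wav.scp")
        print(f"wrote {count} entries to wav.scp")
```

Per the note above, the resulting file should be usable as the input in place of a single `wav_file`; check the FunASR documentation for how list inputs are handled.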

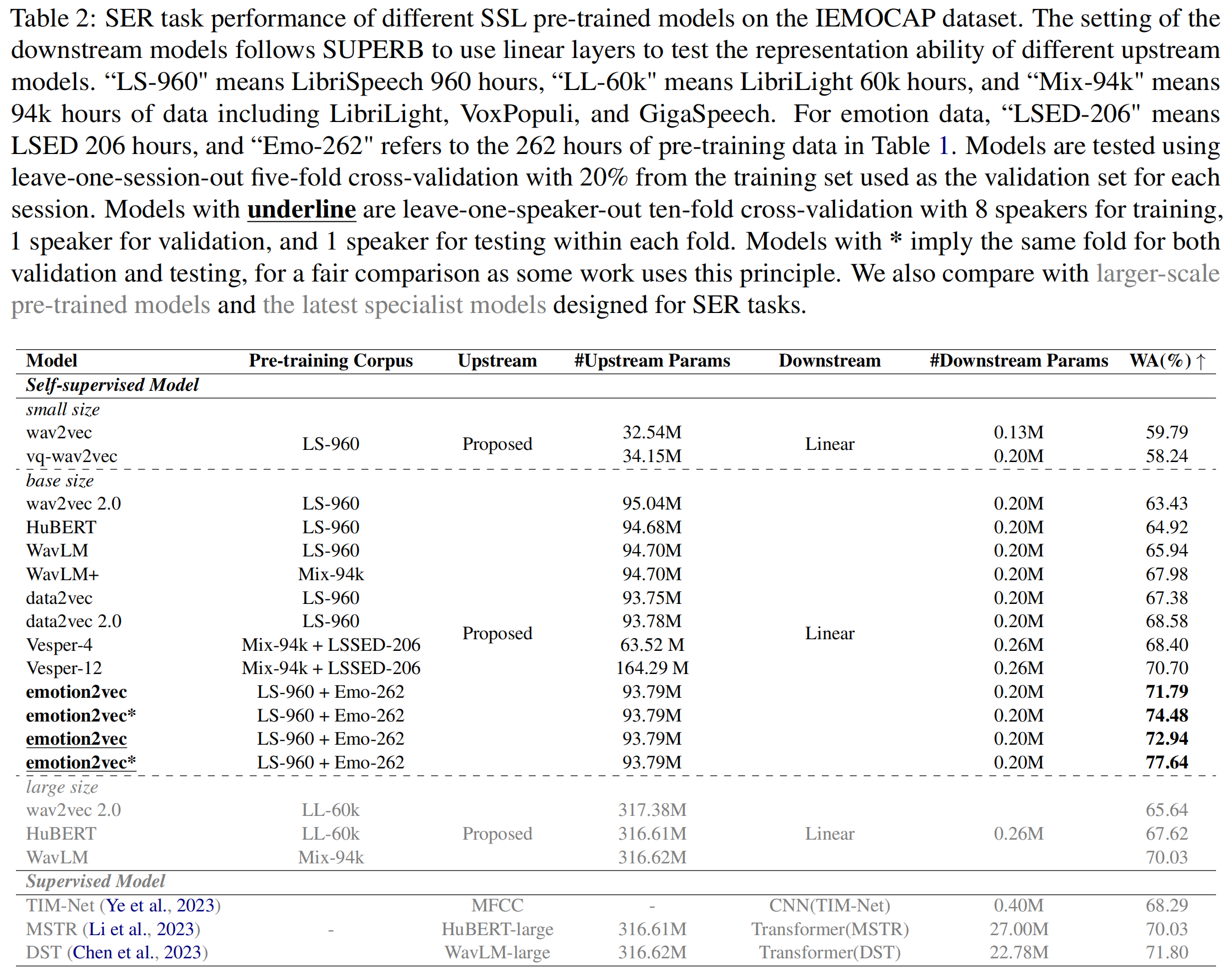

emotion2vec is the first universal speech emotion representation model. Through self-supervised pre-training, emotion2vec can extract emotion representations across different tasks, languages, and scenarios.

emotion2vec achieves state-of-the-art (SOTA) results on the mainstream IEMOCAP dataset using only linear downstream layers. Refer to the paper for more details.

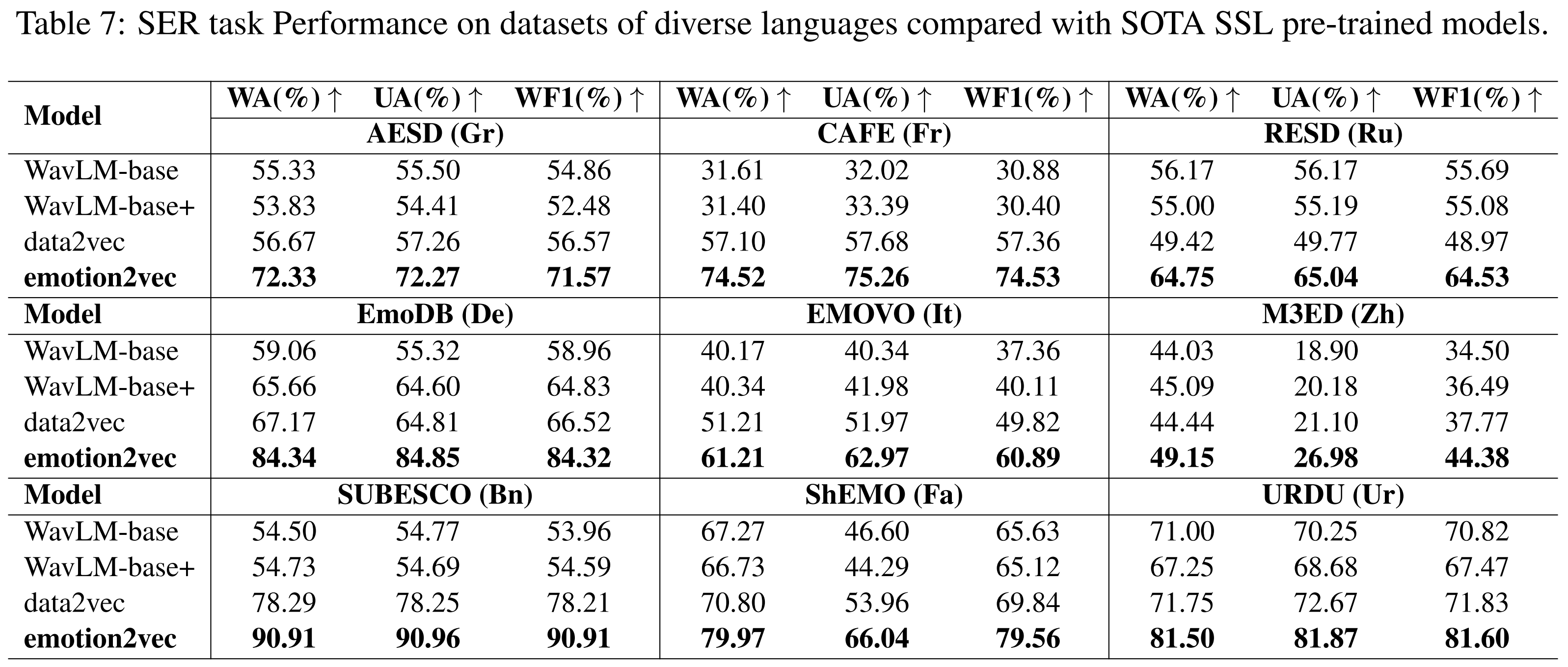

emotion2vec achieves the best results among SOTA SSL models across multiple languages (Mandarin, French, German, Italian, etc.). Refer to the paper for more details.


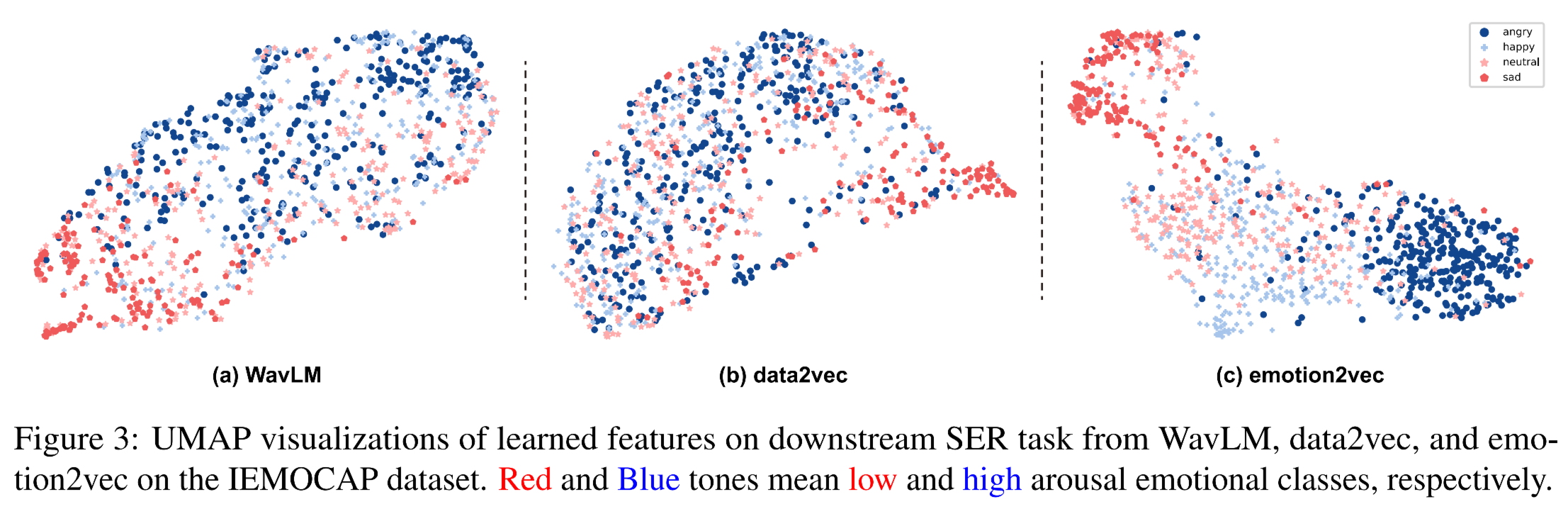

UMAP visualizations of learned features on the IEMOCAP dataset. Red and blue tones indicate low- and high-arousal emotion classes, respectively. Refer to the paper for more details.

We provide extracted features for the popular emotion dataset IEMOCAP. The features are taken from the last layer of emotion2vec and stored in .npy format, with a frame rate of 50 Hz. The utterance-level features are computed by averaging the frame-level features over time.

- frame-level: Google Drive | Baidu Netdisk (password: zb3p)

- utterance-level: Google Drive | Baidu Netdisk (password: qu3u)
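The averaging described above can be sketched as follows: load a frame-level `.npy` array of shape (T, D), mean-pool it over time, and use the 50 Hz frame rate to recover the approximate duration. This is a minimal NumPy sketch on synthetic data; the file name `example_utt.npy`, the helper names, and D = 768 (taken from the base model's documented output shape) are assumptions, and the actual layout of the downloaded archives may differ.

```python
import os
import tempfile

import numpy as np

FRAME_RATE_HZ = 50  # frame rate of the released frame-level features, per the text above


def utterance_feature(frames: np.ndarray) -> np.ndarray:
    """Mean-pool (T, D) frame-level features over time into a (D,) utterance vector."""
    if frames.ndim != 2 or frames.shape[0] == 0:
        raise ValueError("expected a non-empty (T, D) array")
    return frames.mean(axis=0)


def duration_seconds(frames: np.ndarray) -> float:
    """Approximate audio duration spanned by T frames at 50 Hz (20 ms per frame)."""
    return frames.shape[0] / FRAME_RATE_HZ


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    frames = rng.normal(size=(150, 768)).astype(np.float32)  # synthetic stand-in
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "example_utt.npy")  # hypothetical file name
        np.save(path, frames)
        loaded = np.load(path)
    print(loaded.shape, utterance_feature(loaded).shape, duration_seconds(loaded))
    # (150, 768) (768,) 3.0
```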

Features are provided for all wav files in the original dataset to support diverse downstream tasks. If you want to train with the standard 5,531 utterances for 4-class emotion classification, refer to the iemocap_downstream folder.

The minimum environment requirements are python>=3.8 and torch>=1.13. Our test environment uses python 3.8 and torch 2.0.1.
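A minimal sketch for checking these minimums before installing the rest of the toolchain. It reads the installed torch version through `importlib.metadata` instead of importing torch. The helper names and the simplified version parsing are assumptions; `packaging.version` is a more robust choice if it is available.

```python
import sys
from importlib import metadata
from typing import Tuple

MIN_PYTHON = (3, 8)
MIN_TORCH = (1, 13)


def parse_version(version: str) -> Tuple[int, ...]:
    """Leading numeric components of a version string, e.g. "2.0.1+cu118" -> (2, 0, 1)."""
    parts = []
    for piece in version.split("+")[0].split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        if not digits:
            break
        parts.append(int(digits))
    return tuple(parts)


def check_environment() -> bool:
    """Print and return whether python and torch meet the stated minimums."""
    python_ok = sys.version_info[:2] >= MIN_PYTHON
    print(f"python {sys.version.split()[0]}: {'ok' if python_ok else 'too old'}")
    try:
        torch_version = metadata.version("torch")
    except metadata.PackageNotFoundError:
        print("torch: not installed")
        return False
    torch_ok = parse_version(torch_version) >= MIN_TORCH
    print(f"torch {torch_version}: {'ok' if torch_ok else 'too old'}")
    return python_ok and torch_ok


if __name__ == "__main__":
    print("environment ok" if check_environment() else "environment check failed")
```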

- Install fairseq and clone the repo:

```shell
pip install fairseq
git clone https://github.com/ddlBoJack/emotion2vec.git
```

- Download the emotion2vec checkpoint from:
  - Google Drive
  - Baidu Netdisk (password: b9fq)
  - ModelScope: `git clone https://www.modelscope.cn/damo/emotion2vec_base.git`

- Modify and run `scripts/extract_features.sh`.

- Install modelscope and funasr:

```shell
pip install -U funasr modelscope
```

- Run the code:

```python
'''
Using the emotion representation model

rec_result only contains {'feats'}
    granularity="utterance": {'feats': [*768]}
    granularity="frame": {'feats': [T*768]}
'''

from modelscope.pipelines import pipeline
from modelscope.utils.constant import Tasks

inference_pipeline = pipeline(
    task=Tasks.emotion_recognition,
    model="iic/emotion2vec_base",
    model_revision="master")

rec_result = inference_pipeline('https://isv-data.oss-cn-hangzhou.aliyuncs.com/ics/MaaS/ASR/test_audio/asr_example_zh.wav', output_dir="./outputs", granularity="utterance")
print(rec_result)
```

The model will be downloaded automatically.

Refer to the ModelScope pages of emotion2vec_base and emotion2vec_base_finetuned for more details.

- Install funasr:

```shell
pip install -U funasr
```

- Run the code:

```python
'''
Using the emotion representation model

rec_result only contains {'feats'}
    granularity="utterance": {'feats': [*768]}
    granularity="frame": {'feats': [T*768]}
'''

from funasr import AutoModel

model = AutoModel(model="iic/emotion2vec_base")

wav_file = f"{model.model_path}/example/test.wav"
rec_result = model.generate(wav_file, output_dir="./outputs", granularity="utterance")
print(rec_result)
```

The model will be downloaded automatically.

FunASR supports file-list input in Kaldi-style wav.scp format:

```
wav_name1 wav_path1.wav
wav_name2 wav_path2.wav
...
```

Refer to FunASR for more details.
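Utterance-level `feats` are fixed-size vectors, so one common way to use them is to compare utterances by cosine similarity. This is a minimal NumPy sketch, assuming each `feats` entry can be converted to a 1-D array of length 768. The helper names are hypothetical and the data in the demo is synthetic.

```python
import numpy as np


def cosine_similarity(a, b) -> float:
    """Cosine similarity between two 1-D embeddings."""
    a = np.asarray(a, dtype=np.float64).ravel()
    b = np.asarray(b, dtype=np.float64).ravel()
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    if denom == 0:
        raise ValueError("cannot compare a zero vector")
    return float(a @ b / denom)


def most_similar(query, bank):
    """Return (index, score) of the row in bank (N, D) closest to query (D,) by cosine."""
    bank = np.asarray(bank, dtype=np.float64)
    q = np.asarray(query, dtype=np.float64).ravel()
    norms = np.linalg.norm(bank, axis=1) * np.linalg.norm(q)
    if np.any(norms == 0):
        raise ValueError("cannot compare zero vectors")
    sims = bank @ q / norms
    i = int(np.argmax(sims))
    return i, float(sims[i])


if __name__ == "__main__":
    # Synthetic stand-ins for utterance-level 'feats' of shape (768,).
    rng = np.random.default_rng(0)
    bank = rng.normal(size=(5, 768))
    query = bank[2] + 0.1 * rng.normal(size=768)
    print(most_similar(query, bank))  # index 2 is expected to win
```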

We provide training scripts for the IEMOCAP dataset in the iemocap_downstream folder. You can modify the scripts to train your downstream model on other datasets.
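The simplest downstream model is a linear classifier on frozen features. Below is an illustrative sketch of that idea: softmax regression trained by full-batch gradient descent in NumPy. It is not the training recipe in iemocap_downstream. The function names, learning rate, and epoch count are hypothetical, and the demo uses synthetic, well-separated data in place of real utterance-level features.

```python
import numpy as np


def train_linear_probe(x: np.ndarray, y: np.ndarray, num_classes: int,
                       lr: float = 0.1, epochs: int = 200, seed: int = 0):
    """Full-batch gradient descent on softmax cross-entropy over frozen features.

    x: (N, D) features, y: (N,) integer labels. Returns weights (D, C) and bias (C,).
    """
    rng = np.random.default_rng(seed)
    n, d = x.shape
    w = rng.normal(scale=0.01, size=(d, num_classes))
    b = np.zeros(num_classes)
    onehot = np.eye(num_classes)[y]
    for _ in range(epochs):
        logits = x @ w + b
        logits -= logits.max(axis=1, keepdims=True)  # numerical stability
        probs = np.exp(logits)
        probs /= probs.sum(axis=1, keepdims=True)
        grad = (probs - onehot) / n  # gradient of mean cross-entropy w.r.t. logits
        w -= lr * (x.T @ grad)
        b -= lr * grad.sum(axis=0)
    return w, b


def predict(x: np.ndarray, w: np.ndarray, b: np.ndarray) -> np.ndarray:
    """Predicted class index for each row of x."""
    return np.argmax(x @ w + b, axis=1)


if __name__ == "__main__":
    # Synthetic, well-separated 4-class data standing in for utterance-level features.
    rng = np.random.default_rng(0)
    centers = rng.normal(size=(4, 32))
    y = np.repeat(np.arange(4), 50)
    x = centers[y] + 0.3 * rng.normal(size=(len(y), 32))
    w, b = train_linear_probe(x, y, num_classes=4)
    print("train accuracy:", (predict(x, w, b) == y).mean())
```

With real 768-dimensional features, you may need to standardize the inputs or lower `lr` for stable training.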

If you find our emotion2vec code and paper useful, please kindly cite:

```bibtex
@article{ma2023emotion2vec,
  title={emotion2vec: Self-Supervised Pre-Training for Speech Emotion Representation},
  author={Ma, Ziyang and Zheng, Zhisheng and Ye, Jiaxin and Li, Jinchao and Gao, Zhifu and Zhang, Shiliang and Chen, Xie},
  journal={arXiv preprint arXiv:2312.15185},
  year={2023}
}
```