Experiment code for the ICML 2021 paper "Continuous-time Model-based Reinforcement Learning". Implemented in Python 3.7.7 and torch 1.6.0 (later versions should be OK). Also requires torchdiffeq, TorchDiffEqPack and gym.

`runner.py` should run off-the-shelf. The file can be used to reproduce our results, and it also demonstrates how to

- create a continuous-time RL environment

- initiate our model (with different variational formulations) as well as baselines (PETS & deep PILCO)

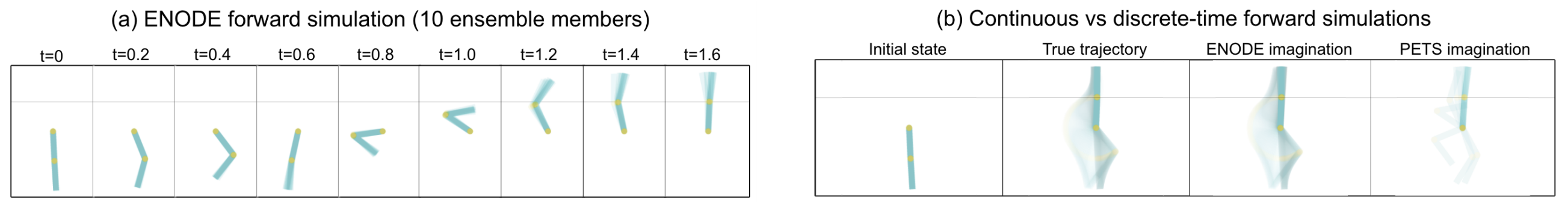

- visualize the dynamics fits

- execute the main learning loop (Algorithm-1 in the paper)

The `ctrl` folder has our model implementation as well as helper functions for training.

- `ctrl/ctrl`: creates our model and serves as an interface between the model and training/visualization functions.

- `ctrl/dataset`: contains state-action-reward trajectories and interpolation classes (for continuous-time actions).

- `ctrl/dynamics`: implements the dynamics model and is responsible for forward simulating all models.

- `ctrl/policy`: deterministic policy implementation.
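To illustrate why interpolation classes are needed for continuous-time actions, here is a minimal sketch: actions are recorded at discrete times, but an ODE solver may query the action at any time in between. The class name `LinearActionInterpolator` and its interface are hypothetical and are not taken from `ctrl/dataset`; linear interpolation is only one possible choice, and the repository may use a different scheme.

```python
from bisect import bisect_right


class LinearActionInterpolator:
    """Hypothetical helper (not the repository's class): maps a continuous
    time t to an action by linearly interpolating recorded actions."""

    def __init__(self, times, actions):
        if len(times) != len(actions) or len(times) < 2:
            raise ValueError("need at least two (time, action) pairs")
        if any(t1 <= t0 for t0, t1 in zip(times, times[1:])):
            raise ValueError("times must be strictly increasing")
        self.times = list(times)
        self.actions = [list(a) for a in actions]

    def __call__(self, t):
        # Queries outside the recorded window are clamped to the boundary action.
        if t <= self.times[0]:
            return list(self.actions[0])
        if t >= self.times[-1]:
            return list(self.actions[-1])
        # Index of the recorded interval [t0, t1) that contains t.
        i = bisect_right(self.times, t) - 1
        t0, t1 = self.times[i], self.times[i + 1]
        w = (t - t0) / (t1 - t0)
        a0, a1 = self.actions[i], self.actions[i + 1]
        return [(1 - w) * x0 + w * x1 for x0, x1 in zip(a0, a1)]


# Usage: three one-dimensional actions recorded at t = 0, 1, 2.
action_at = LinearActionInterpolator([0.0, 1.0, 2.0], [[0.0], [2.0], [0.0]])
print(action_at(0.5))  # halfway between the first two recorded actions
```

A callable of this kind can be handed to whatever routine integrates the dynamics, so the solver is free to pick its own evaluation times.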

`envs` contains our continuous-time implementation of RL environments.

`utils` includes the function approximators.
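As a rough illustration of what "continuous-time" means for an environment, the sketch below integrates a pendulum ODE over an arbitrary time interval instead of advancing by a fixed discrete step, so observations may be irregularly spaced. Everything here is an assumption made for illustration: the class `ContinuousPendulum`, its `reset`/`step` signatures, the physical constants, and the fixed-step RK4 integrator are not the API or the solver used in `envs`.

```python
import math


class ContinuousPendulum:
    """Hypothetical continuous-time pendulum (not the repository's env class).
    State is (angle from the downward vertical, angular velocity)."""

    def __init__(self, g=9.81, length=1.0, mass=1.0, substep=0.01):
        self.g, self.length, self.mass, self.substep = g, length, mass, substep
        self.t = 0.0
        self.state = (0.0, 0.0)

    def reset(self, theta=0.0, omega=0.0):
        self.t, self.state = 0.0, (theta, omega)
        return self.state

    def _f(self, t, state, action_fn):
        # Time derivative of the state under the torque action_fn(t).
        theta, omega = state
        torque = action_fn(t)
        domega = (-(self.g / self.length) * math.sin(theta)
                  + torque / (self.mass * self.length ** 2))
        return (omega, domega)

    def step(self, action_fn, dt):
        """Advance the system by dt seconds with fixed-step RK4.
        action_fn maps an absolute time to a scalar torque."""
        n = max(1, math.ceil(dt / self.substep))
        h = dt / n
        t, s = self.t, self.state
        for _ in range(n):
            k1 = self._f(t, s, action_fn)
            k2 = self._f(t + h / 2, tuple(x + h / 2 * k for x, k in zip(s, k1)), action_fn)
            k3 = self._f(t + h / 2, tuple(x + h / 2 * k for x, k in zip(s, k2)), action_fn)
            k4 = self._f(t + h, tuple(x + h * k for x, k in zip(s, k3)), action_fn)
            s = tuple(x + h / 6 * (a + 2 * b + 2 * c + d)
                      for x, a, b, c, d in zip(s, k1, k2, k3, k4))
            t += h
        self.t, self.state = self.t + dt, s
        return s


# Usage: observe the unforced pendulum at irregular time intervals.
env = ContinuousPendulum()
env.reset(theta=1.0, omega=0.0)
for dt in (0.13, 0.5, 0.07):
    theta, omega = env.step(lambda t: 0.0, dt)
    print(f"t={env.t:.2f}  theta={theta:.4f}  omega={omega:.4f}")
```

Because the action is passed as a function of time rather than a single value per step, the same interface works for piecewise-constant, interpolated, or policy-generated controls.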